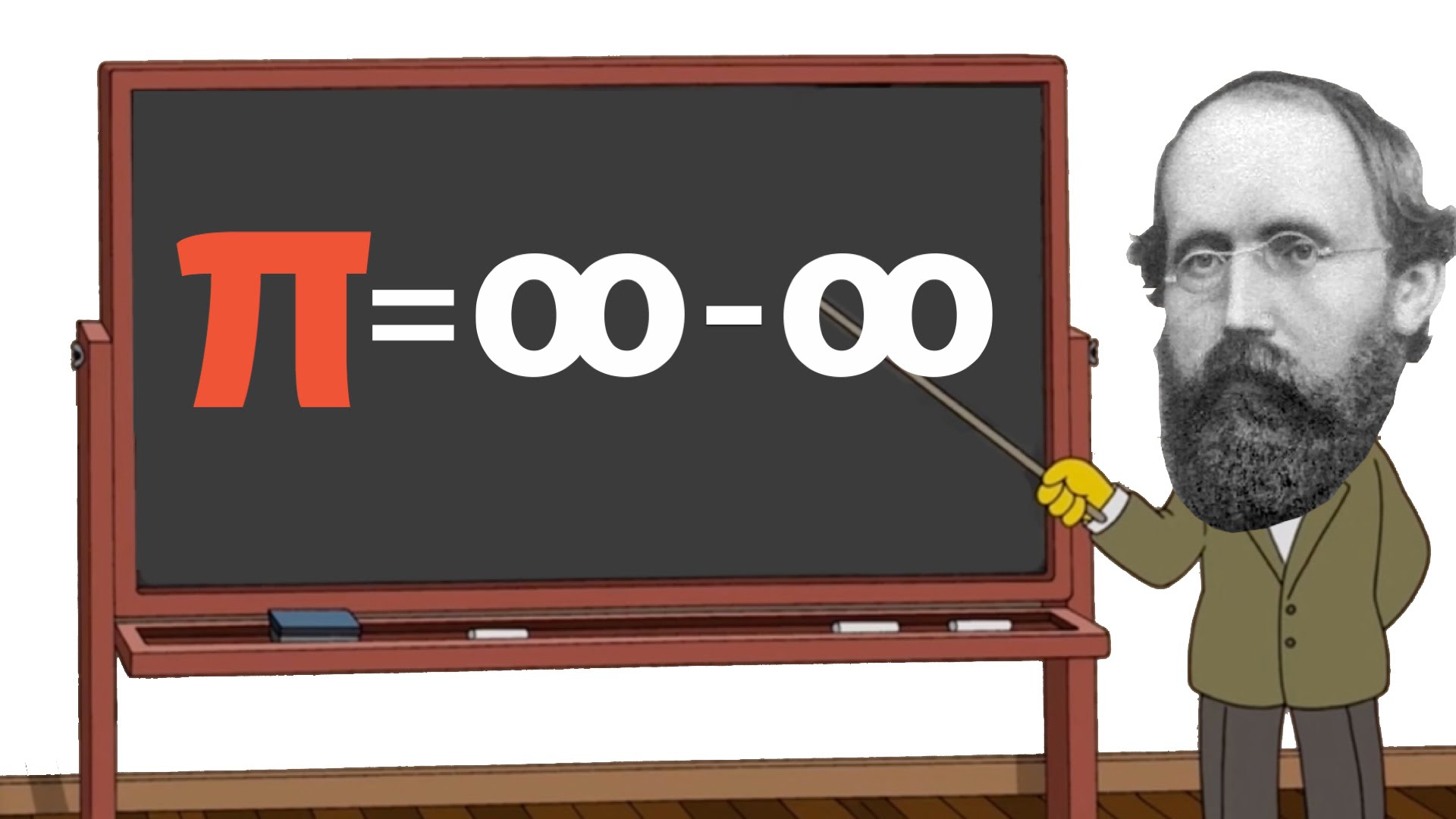

The phrase “infinity minus infinity” names a genuine mathematical and philosophical puzzle. In mathematics it is an indeterminate form: when two quantities both grow without bound, the limit of their difference can be any finite number, can itself be infinite, or can fail to exist, depending on how each quantity grows. It is not simply zero, as one might instinctively assume, because infinity is not a number in the conventional sense but a concept representing unboundedness. This abstract challenge is not confined to theoretical mathematics. It also serves as a useful analogy in AI, autonomous systems, big data, and computational modeling, where systems must produce definite outcomes from inputs that are effectively limitless, continuous, or ambiguous.
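A few standard limits illustrate why the form is called indeterminate. In each case both terms grow without bound as x increases, yet the difference behaves differently. The constant c is an arbitrary real number chosen for illustration.

```latex
\begin{align*}
\lim_{x \to \infty} \bigl[(x + c) - x\bigr] &= c \\
\lim_{x \to \infty} \bigl[x^2 - x\bigr] &= \infty \\
\lim_{x \to \infty} \bigl[x - x^2\bigr] &= -\infty \\
\lim_{x \to \infty} \bigl[\sqrt{x^2 + x} - x\bigr]
  &= \lim_{x \to \infty} \frac{x}{\sqrt{x^2 + x} + x} = \frac{1}{2} \\
\lim_{x \to \infty} \bigl[(x + \sin x) - x\bigr] &\ \text{does not exist}
\end{align*}
```

The result depends entirely on how the two unbounded quantities are defined, which is the "further context" the form requires.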

The Indeterminate Nature of Limitless Systems

At its core, “infinity minus infinity” highlights the difficulty in performing operations on quantities that are ill-defined or unbounded. In a world increasingly saturated with data and complex systems, this abstract problem finds tangible parallels. Imagine attempting to measure the difference between two ever-expanding datasets, or define the precise boundary within a continuous, dynamic environment. The challenge lies in imposing determinacy on inherently indeterminate systems, a task central to modern technological advancement.

Big Data and the Illusion of Limitless Information

The era of big data epitomizes the challenge of dealing with vast, seemingly infinite quantities of information. Some companies collect petabytes of data daily, often as streams with no defined end. When analysts try to identify trends or changes in such streams, the operation can, by analogy, resemble “infinity minus infinity.” Examples include comparing global internet traffic from one second to the next, or measuring the change in sensor data from an autonomous vehicle moving through a perpetually changing environment.

The ‘infinity’ here is not literal but practical; the sheer scale of data makes it effectively limitless for any single human, or even a typical computational system, to fully grasp. The ‘delta’ between two massive, constantly growing datasets has no well-defined value until both are restricted to a common scope, such as the same time window or the same sample. AI algorithms are tasked with finding patterns and anomalies within this ocean of data. Without sophisticated filtering, aggregation, and contextualization techniques, the algorithms would be overwhelmed by noise and would fail to distinguish meaningful signals from the background. Defining boundaries, sampling strategies, and statistical methods is what turns an ‘infinite minus infinite’ scenario into a manageable, solvable problem.
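As a minimal sketch of that idea, the Python below compares two bounded windows taken from an endless event stream instead of the stream as a whole. The event names, the window size, and the helper functions `window_counts` and `delta` are hypothetical, chosen only for illustration.

```python
from collections import Counter
from itertools import chain, cycle, islice


def window_counts(stream, size):
    """Count event types in the next `size` items of a possibly endless stream."""
    return Counter(islice(stream, size))


def delta(before, after):
    """Per-event change between two bounded windows (unchanged events are omitted)."""
    keys = before.keys() | after.keys()
    return {k: after[k] - before[k] for k in keys if after[k] != before[k]}


# Hypothetical endless stream: a short prefix followed by a repeating pattern.
stream = chain(["view"] * 4 + ["click"] * 2, cycle(["click", "view"]))

first = window_counts(stream, 6)    # 4 views, 2 clicks
second = window_counts(stream, 6)   # 3 views, 3 clicks
change = delta(first, second)
print(change)
```

The stream never ends, but each window is finite, so the difference between windows is an ordinary subtraction.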

Computational Limits and Theoretical Constructs

Computers operate on finite representations. While the human mind can conceptualize infinity, a processor can only store and manipulate a finite number of values. This fundamental limitation means that any technological system attempting to model or interact with infinite or near-infinite phenomena must employ approximations, heuristics, and bounded contexts. Autonomous flight systems, for instance, constantly process an immense stream of sensor data (visual, lidar, radar, GPS) from their environment. This continuous flow is effectively an ‘infinite’ input stream if one imagines accounting for every single photon or sound wave.
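Floating-point arithmetic makes this concrete. The IEEE 754 format used by Python's `float` has a special value for infinity, but subtracting infinity from infinity yields NaN ("not a number") instead of zero. The short example below uses only the standard library; the variable names are illustrative.

```python
import math
import sys

inf = float("inf")

diff = inf - inf                 # IEEE 754 defines this as NaN, not 0
print(math.isnan(diff))          # True
print(diff == diff)              # False: NaN compares unequal to itself

# The largest finite double; multiplying past it overflows to infinity.
overflowed = sys.float_info.max * 2
print(overflowed == inf)         # True
```

In other words, the hardware's own answer to “infinity minus infinity” is an explicit marker that the result is undefined.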

When such a system is programmed to detect changes or track differences – for example, identifying a new obstacle or measuring a shift in its trajectory – it’s performing an operation akin to “infinity minus infinity” on the data stream. The technology resolves this by defining finite sampling rates, setting thresholds for significance, and creating simplified models of the environment. The challenge is to balance the need for comprehensive data with the computational feasibility of processing it, ensuring that critical information isn’t lost in the simplification while avoiding an indeterminate overload.
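The combination of a finite sampling rate and a significance threshold can be sketched in a few lines of Python. The signal `range_to_obstacle`, the 10 Hz rate, and the 5 m threshold are all hypothetical values picked for the example, not parameters of any real flight system.

```python
def sample(signal, rate_hz, duration_s):
    """Evaluate a continuous-time signal at a finite number of instants."""
    count = int(rate_hz * duration_s)
    return [signal(i / rate_hz) for i in range(count)]


def significant_changes(readings, threshold):
    """Indices where a reading differs from the previous one by more than `threshold`."""
    return [
        i
        for i in range(1, len(readings))
        if abs(readings[i] - readings[i - 1]) > threshold
    ]


def range_to_obstacle(t):
    """Hypothetical range sensor: clear path (50 m) until an obstacle appears at 12 m."""
    return 50.0 if t < 0.5 else 12.0


readings = sample(range_to_obstacle, rate_hz=10, duration_s=1.0)
events = significant_changes(readings, threshold=5.0)
print(events)  # [5]: the jump is first seen at the sample taken at t = 0.5 s
```

A lower sampling rate would detect the obstacle later, and a higher threshold might miss it altogether, which is the trade-off between completeness and feasibility described above.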

Resolving Ambiguity in Autonomous Decision-Making

One of the most important places where this kind of indeterminacy appears in technology is autonomous decision-making. AI-powered systems, from drones with an AI follow mode to self-driving cars, constantly make choices based on a vast, continuous influx of data about their surroundings. When faced with multiple, equally plausible interpretations or an overwhelming array of potential actions, these systems encounter a practical equivalent of “infinity minus infinity”: a scenario where a clear, determinate path is not immediately obvious.

Predictive Analytics and Ambiguous Outcomes

Predictive analytics, a cornerstone of modern AI, thrives on identifying patterns and forecasting future states. However, in complex, dynamic environments, models can sometimes generate multiple outcomes with near-equal probability or encounter scenarios where the ‘difference’ between two potential futures is negligible, yet the implications are vastly different. Imagine an autonomous vehicle needing to decide between two very similar evasive maneuvers, each with an infinitesimally small, yet potentially critical, difference in projected safety.

Here, the system is in effect trying to compare the risks and rewards of two enormous sets of possible futures, and a naive comparison may be inconclusive. To resolve this, advanced AI often employs reinforcement learning, Monte Carlo simulations, and probabilistic reasoning to estimate the expected risk and reward of each option, which forces a determination. These algorithms learn from past experiences and simulations which of the near-equivalent outcomes tends to be ‘safer’ or ‘more efficient’, even when the raw statistical difference is ambiguous.
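A toy Monte Carlo comparison shows how simulation can separate two options that look nearly identical. This is a sketch under strong assumptions: each maneuver is reduced to a nominal clearance in metres, uncertainty is modelled as Gaussian noise, and a negative clearance counts as a collision. The maneuver names, the clearances, and the noise level are hypothetical.

```python
import random


def collision_rate(nominal_clearance_m, noise_sigma_m, trials, rng):
    """Fraction of simulated rollouts in which the noisy clearance drops below zero."""
    hits = 0
    for _ in range(trials):
        clearance = rng.gauss(nominal_clearance_m, noise_sigma_m)
        if clearance < 0.0:
            hits += 1
    return hits / trials


def choose_maneuver(options, noise_sigma_m=1.0, trials=50_000, seed=0):
    """Estimate the collision rate of each option and return the lowest-risk one."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    estimates = {
        name: collision_rate(clearance, noise_sigma_m, trials, rng)
        for name, clearance in options.items()
    }
    best = min(estimates, key=estimates.get)
    return best, estimates


# Two hypothetical maneuvers whose nominal clearances differ by only 0.2 m.
options = {"swerve_left": 2.0, "swerve_right": 2.2}
best, estimates = choose_maneuver(options)
print(best, estimates)
```

A real planner would replace the one-line noise model with a physics or learned simulator, but the principle is the same: many bounded samples stand in for a space of futures that cannot be enumerated.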

The Role of Context and Heuristics in AI

The genius of modern AI lies in its ability to introduce context and heuristics to resolve indeterminacy. Unlike a purely mathematical system that might halt at “infinity minus infinity,” AI is designed to proceed. For an autonomous drone navigating a complex urban environment, the sensor data at any given moment is virtually infinite in its detail. When identifying a target for “AI follow mode,” the system must filter out countless irrelevant data points, differentiate the target from similar objects, and predict its movement, all while managing its own flight path.

This involves applying contextual rules: “Is this object within the operational range?”, “Does its movement pattern match a human?”, “Is it occluded by foliage?” These rules act as bounds, transforming the infinite field of possibilities into a finite, manageable set. Heuristics, learned rules of thumb or computational shortcuts, allow the AI to make ‘good enough’ decisions in real-time, even when a perfectly optimized, globally informed choice would be computationally impossible or too time-consuming. This pragmatic approach to resolving indeterminate forms is what enables autonomous systems to function effectively in the real world.
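The three contextual questions above can be written as simple predicates that prune a list of detections. The `Detection` fields, the numeric limits, and the use of speed as a stand-in for "movement pattern" are assumptions made for this sketch; a production tracker would use far richer features.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str
    distance_m: float
    speed_mps: float
    occluded_fraction: float


# Each rule bounds the space of candidates (all thresholds are hypothetical).
RULES = [
    lambda d: d.distance_m <= 30.0,         # within the operational range?
    lambda d: 0.2 <= d.speed_mps <= 8.0,    # speed plausible for a moving person?
    lambda d: d.occluded_fraction < 0.5,    # mostly visible, not hidden by foliage?
]


def follow_candidates(detections):
    """Keep only detections that satisfy every contextual rule."""
    return [d for d in detections if all(rule(d) for rule in RULES)]


detections = [
    Detection("runner", 12.0, 3.1, 0.1),
    Detection("parked_car", 9.0, 0.0, 0.0),
    Detection("distant_walker", 80.0, 1.4, 0.0),
    Detection("hidden_walker", 15.0, 1.3, 0.8),
]
kept = follow_candidates(detections)
print([d.label for d in kept])  # ['runner']
```

Each rule is cheap to evaluate, which is what makes this kind of heuristic filtering usable in real time.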

Mapping, Remote Sensing, and Continuous Fields

The domains of mapping and remote sensing are fundamentally concerned with representing and interpreting continuous, often vast, physical spaces. From detailed topographic maps generated by drone photogrammetry to global climate models based on satellite data, these technologies grapple with the challenge of quantifying and comparing aspects of a world that, at sufficient resolution, appears to be infinitely detailed and constantly changing.

Granularity vs. Completeness in Environmental Models

When creating digital twins or highly accurate environmental models, developers face a dilemma: how much detail is enough? A true, infinitesimally granular representation of a landscape would be ‘infinite’. Every blade of grass, every ripple on a pond, every micro-variation in elevation could theoretically be mapped. To compare two such infinitely detailed models, or to track the ‘difference’ (subtraction) between the current state and a previous state, would again lead to an “infinity minus infinity” scenario.

Therefore, technology must define a practical level of granularity. Remote sensing satellites capture data at specific spatial and spectral resolutions, effectively quantizing continuous phenomena into discrete data points. Drone mapping campaigns define pixel sizes and ground sampling distances. The challenge is to choose a resolution that provides sufficient detail for the application without creating an unmanageably ‘infinite’ dataset. The “subtraction” of changes between two maps then becomes a comparison of finite, defined data sets, rather than an intractable infinite one.
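The sketch below shows one way such a comparison could work: survey points are averaged into grid cells of a chosen size, and only then are two surveys subtracted. The function names, the 1 m cell size, the 0.5 m change threshold, and the sample coordinates are hypothetical.

```python
from collections import defaultdict


def rasterize(points, cell_size):
    """Average the elevations of (x, y, z) points that fall into each grid cell."""
    sums = defaultdict(lambda: [0.0, 0])
    for x, y, z in points:
        cell = (int(x // cell_size), int(y // cell_size))
        sums[cell][0] += z
        sums[cell][1] += 1
    return {cell: total / count for cell, (total, count) in sums.items()}


def elevation_change(before, after, min_change):
    """Per-cell elevation difference, for cells present in both grids."""
    shared = before.keys() & after.keys()
    return {
        cell: after[cell] - before[cell]
        for cell in shared
        if abs(after[cell] - before[cell]) >= min_change
    }


# Two hypothetical surveys of the same area (coordinates and elevations in metres).
survey_a = [(0.2, 0.3, 10.0), (0.7, 0.6, 10.2), (1.4, 0.5, 12.0)]
survey_b = [(0.4, 0.4, 10.1), (1.5, 0.2, 11.0), (1.1, 0.9, 11.0)]

grid_a = rasterize(survey_a, cell_size=1.0)
grid_b = rasterize(survey_b, cell_size=1.0)
changes = elevation_change(grid_a, grid_b, min_change=0.5)
print(changes)  # {(1, 0): -1.0}
```

The individual points never line up between surveys, but the cells do, so the chosen resolution is what makes the subtraction well defined.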

Dynamic Environments and Perpetual Delta

Many environments are not static; they are in constant flux. Urban sprawl, forest growth, glacier melt, and atmospheric conditions are all continuous processes. Remote sensing is often employed to monitor these changes, effectively seeking the ‘delta’ or ‘difference’ between two states of a dynamic, continuous system. If the environment itself is viewed as an ‘infinite’ continuum of phenomena, then measuring the change between two points in time is a prime example of an “infinity minus infinity” problem that requires technological resolution.

AI and machine learning algorithms are crucial here. They are designed to identify significant changes amidst a backdrop of continuous, often minor, fluctuations. For instance, an algorithm monitoring deforestation needs to distinguish the felling of trees from seasonal leaf loss or natural tree fall. It establishes a baseline (a reference state built from a bounded history of observations) and then detects deviations that cross a predefined threshold of significance. This involves statistical techniques to normalize data, identify outliers, and filter out irrelevant noise, ensuring that the computed ‘difference’ is meaningful and not just an artifact of the continuous, dynamic nature of the environment.
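A simple statistical version of baseline-plus-threshold detection is shown below. It assumes a single vegetation index per observation and flags values more than `k` standard deviations from the baseline mean. The numbers and the choice of `k = 3` are illustrative, and real monitoring systems typically also model seasonality.

```python
from statistics import mean, stdev


def flag_deviations(baseline, observations, k=3.0):
    """Return (index, value) pairs lying more than k standard deviations from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    return [
        (i, value)
        for i, value in enumerate(observations)
        if abs(value - mu) > k * sigma
    ]


# Hypothetical vegetation-index readings for one forest plot.
baseline = [0.80, 0.82, 0.79, 0.81, 0.78, 0.80]   # bounded reference history
observations = [0.79, 0.83, 0.45, 0.80]            # new readings to check

flagged = flag_deviations(baseline, observations)
print(flagged)  # [(2, 0.45)]
```

Small fluctuations stay within the band defined by the baseline, while the sharp drop at index 2 crosses it and is reported as a significant change.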

The Human Element in Navigating the Infinite

Ultimately, while technology provides the tools to manage and approximate infinite concepts, the underlying drive to resolve “infinity minus infinity” in practical terms stems from human ingenuity. It’s our ability to define, constrain, and seek meaning within vastness that propels innovation forward, transforming theoretical impasses into solvable engineering challenges.

Defining Boundaries for Practical Application

The journey from “infinity minus infinity” to actionable intelligence is often about the human capacity to define boundaries. Whether it’s setting the scope of an AI model, defining the resolution for a map, or establishing the parameters for autonomous decision-making, it is the deliberate imposition of finite limits on infinite possibilities that makes technology effective. This means understanding the specific problem we are trying to solve and tailoring our technological approach to that context, rather than attempting to capture every conceivable detail of the universe.

The Pursuit of Determinacy in Design

Innovation in tech is frequently characterized by the pursuit of determinacy. Engineers and data scientists strive to build systems that produce clear, predictable, and robust outcomes, even when confronted with inputs that are inherently ambiguous, continuous, or vast. This involves designing algorithms that can prioritize, filter, and synthesize information, effectively transforming indeterminate forms into concrete results. From the safety protocols embedded in autonomous flight to the error correction mechanisms in quantum computing, the goal is to navigate the theoretical indeterminacy of infinite systems and deliver precise, reliable performance in the real world. The ongoing dialogue between theoretical limits and practical implementation remains a fertile ground for future breakthroughs in technology and innovation.