In drone technology, “testing a hypothesis” is the scientific method’s core mechanism for validating new ideas, refining existing systems, and moving beyond speculation or trial-and-error. For advances in areas like autonomous flight, advanced mapping, AI-driven operations, and remote sensing, a rigorous approach to hypothesis testing helps ensure that innovations are not only novel but also robust, reliable, and demonstrably better than what they replace.

The Foundation of Technological Advancement in Drones

At its heart, a hypothesis is a proposed explanation for a phenomenon, a predictive statement based on initial observations or theoretical considerations, which can then be tested through experimentation. In the context of drone technology, this translates into a specific, testable prediction about how a new algorithm, hardware component, or operational methodology will perform or impact a system. For instance, an engineer might hypothesize that “integrating a new deep learning model for object recognition will reduce false positive detections in urban autonomous flight by 15%.”

The importance of hypothesis testing in drone innovation cannot be overstated. Without it, development would be akin to navigating a complex terrain without a map or compass – relying on intuition, which, while sometimes useful, is often insufficient for creating complex, safety-critical systems. By systematically testing hypotheses, developers and researchers gain empirical evidence to support or refute their ideas, leading to data-driven decisions that accelerate development cycles, minimize risks, and optimize performance. It’s the structured pathway through which new concepts transition from theoretical promise to practical application, embedding a culture of evidence-based development throughout the drone industry.

Formulating Hypotheses for Drone Technologies

The journey of innovation in drone tech begins long before any hardware is assembled or code is written; it starts with careful observation and the intelligent formulation of a hypothesis. A well-crafted hypothesis is the blueprint for any successful test, guiding the experimental design and data analysis.

From Observation to Testable Statements

Innovation often springs from identifying a problem or an opportunity for improvement. Perhaps current drone navigation systems struggle in GPS-denied environments, or remote sensing data for agricultural applications lacks the necessary resolution for early disease detection. These observations spark questions: “Can we improve navigation without GPS?” or “Can we achieve higher resolution imagery with a new sensor?” These questions then evolve into specific, testable predictions.

For example, observing erratic behavior of a drone’s AI follow mode could lead to the hypothesis: “A new reinforcement learning algorithm, trained on diverse environmental datasets, will improve target tracking stability by 20% in variable terrain compared to the current PID-based system.” Similarly, if current drone mapping tools are slow, one might hypothesize: “Implementing a cloud-based parallel processing architecture will reduce the processing time for large-scale photogrammetry maps by 30% without compromising accuracy.” The key is to transform a general idea into a statement that predicts a measurable outcome under specific conditions.

Characteristics of a Strong Hypothesis

A strong hypothesis is not just a guess; it possesses several critical characteristics that make it suitable for scientific inquiry within drone tech:

- Specificity: It clearly defines the variables being tested and the predicted relationship between them. Vague statements like “New drone is better” are useless. Instead, “A drone equipped with X-brand propeller blades will exhibit Y% longer flight endurance than one with Z-brand blades under identical payload and environmental conditions” is specific.

- Falsifiability: There must be a conceivable way for the hypothesis to be proven wrong. If no possible experimental outcome could refute it, the hypothesis is not scientific. For instance, if you hypothesize that “a new obstacle avoidance algorithm works perfectly,” it’s not truly falsifiable if “perfectly” is undefined or if edge cases are simply dismissed.

- Measurability: The predicted outcome must be quantifiable. Improvements in performance, reductions in error rates, or increases in efficiency must be expressible through metrics that can be objectively collected and analyzed.

- Relevance: The hypothesis should address a meaningful problem or contribute to a significant advancement in drone technology, impacting areas like safety, efficiency, autonomy, or data quality in applications such as remote sensing, infrastructure inspection, or logistics.
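One way to keep a hypothesis specific and measurable is to write it down as structured data before any flights take place. The sketch below is only an illustration: the `Hypothesis` class, its field names, and the baseline figure are hypothetical, and the check it performs is a plain point comparison, not a statistical test.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """A testable prediction about one metric under stated conditions."""

    metric: str                 # what is measured
    conditions: str             # where the prediction is claimed to hold
    baseline: float             # value measured for the current system
    predicted_reduction: float  # minimum relative reduction claimed, e.g. 0.15

    def target(self) -> float:
        """Largest metric value that still satisfies the prediction."""
        return self.baseline * (1.0 - self.predicted_reduction)

    def is_consistent_with(self, observed: float) -> bool:
        """True if the observed value meets the predicted reduction.

        This is a point comparison only; it says nothing about whether
        the difference is statistically significant.
        """
        return observed <= self.target()


h = Hypothesis(
    metric="false positive detections per flight hour",
    conditions="urban autonomous flight",
    baseline=4.0,               # hypothetical baseline, for illustration only
    predicted_reduction=0.15,
)
print(h.target())
print(h.is_consistent_with(3.2))
print(h.is_consistent_with(3.9))
```

Because the target value is fixed in advance, there is a clear outcome that would count against the hypothesis, which is what falsifiability requires.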

Methodologies for Hypothesis Testing in Drone Innovation

Once a robust hypothesis is formulated, the next crucial step is to design and execute experiments that can rigorously test its validity. This involves careful planning of data collection, controlled environments, and sophisticated analytical techniques.

Experimental Design and Data Collection

The success of hypothesis testing hinges on the quality of the experimental design and the data collected. For drone technologies, this can take various forms:

- Controlled Experiments: These are paramount for isolating the effect of a specific change. For instance, in developing an AI follow mode, A/B testing might involve equipping two identical drones with different versions of the AI algorithm and flying them simultaneously under identical conditions to compare tracking accuracy, latency, and smoothness. Similarly, testing a new sensor’s performance (e.g., a novel LiDAR system for autonomous navigation) would involve comparing its data output against a known baseline under varying environmental factors like lighting, fog, or dust, while controlling other variables.

- Field Trials: For technologies that require real-world validation, field trials are essential. This could involve deploying drones equipped with new GPS-denied navigation systems in complex urban canyons or testing the efficacy of a novel remote sensing payload for agricultural crop analysis across different farm landscapes. Data collected often includes drone telemetry (position, velocity, altitude), sensor outputs (images, point clouds, spectral data), and ground truth measurements for comparison.

- Simulations: Before costly or risky real-world deployments, simulations offer a safe and efficient environment for initial hypothesis testing. Developers can test autonomous flight algorithms, obstacle avoidance strategies, or complex multi-drone coordination systems in virtual environments, generating vast amounts of data under various conditions without physical constraints. This iterative simulation-to-reality approach significantly accelerates the development cycle for new features like AI-driven path planning or dynamic obstacle avoidance.
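Part of experimental design is deciding how many flights or simulation runs are needed before any data are collected. The following is a minimal sketch, assuming a two-sided comparison of mean values between two equally sized groups, roughly normal measurements, and a known standard deviation. The function name and the example numbers are hypothetical.

```python
import math
from statistics import NormalDist


def flights_per_group(effect: float, sigma: float,
                      alpha: float = 0.05, power: float = 0.80) -> int:
    """Flights needed per group to detect a difference in means of `effect`.

    Normal approximation for a two-sided, two-sample comparison:
        n = 2 * ((z_(1 - alpha/2) + z_power) * sigma / effect) ** 2
    """
    if effect <= 0 or sigma <= 0:
        raise ValueError("effect and sigma must be positive")
    z_alpha = NormalDist().inv_cdf(1.0 - alpha / 2.0)
    z_power = NormalDist().inv_cdf(power)
    n = 2.0 * ((z_alpha + z_power) * sigma / effect) ** 2
    return math.ceil(n)


# Hypothetical case: tracking error has a standard deviation of 1.0 m and
# the smallest improvement worth detecting is 0.5 m.
print(flights_per_group(effect=0.5, sigma=1.0))
```

Halving the effect size roughly quadruples the required number of flights, which is one reason simulations are attractive for early screening.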

Statistical Analysis and Interpretation

Raw data from drone experiments are just numbers; their true meaning is unlocked through rigorous statistical analysis.

- Quantitative Metrics: Performance is measured using a range of quantitative metrics tailored to the hypothesis. For autonomous systems, metrics might include precision, recall, F1-score for object detection, mean absolute error (MAE) for localization, or collision rates. For power systems, it could be flight time increase or energy consumption reduction. For remote sensing, it could be the correlation coefficient with ground truth data or spatial accuracy.

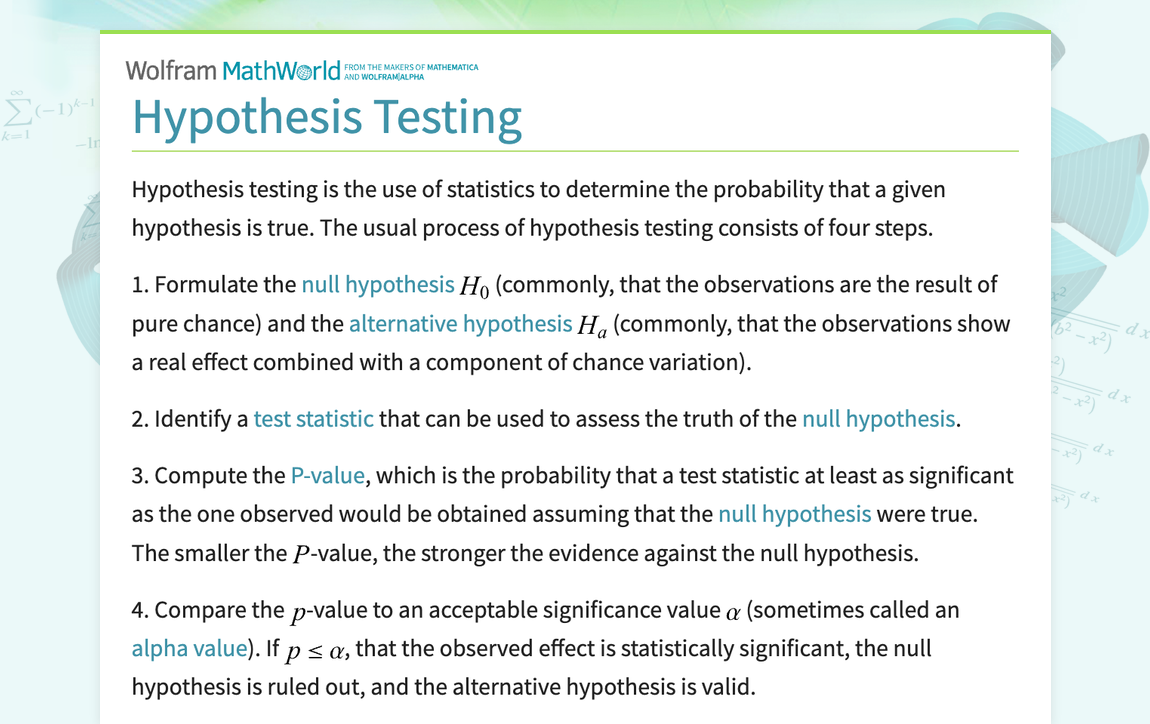

- Statistical Significance: A critical aspect of analysis is determining whether observed differences or improvements reflect a real effect or could plausibly be due to random chance. This is where concepts like p-values and confidence intervals come into play. The p-value is the probability of observing a result at least as extreme as the one measured if the null hypothesis were true. A low p-value (conventionally < 0.05) means the data would be unlikely under the null hypothesis, which is taken as evidence for the alternative hypothesis. It is not the probability that the result arose by chance, and it says nothing about how large the effect is. Confidence intervals complement the p-value by giving a range of effect sizes that are compatible with the data. Tools like R, Python with libraries such as SciPy or statsmodels, or specialized machine learning frameworks are commonly used to perform these analyses.

- The Role of Null and Alternative Hypotheses: Every hypothesis test involves two competing statements. The null hypothesis (H0) states that there is no effect or no difference (e.g., “The new autonomous navigation algorithm has no effect on collision rates”). The alternative hypothesis (H1) states that there is an effect or a difference (e.g., “The new autonomous navigation algorithm reduces collision rates”). The goal of the experiment is to gather enough evidence to either reject the null hypothesis in favor of the alternative, or to fail to reject the null hypothesis (meaning there isn’t enough evidence to support the alternative). It’s important to note that failing to reject the null hypothesis does not mean it is true, only that the data did not provide sufficient evidence to conclude otherwise.
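The collision-rate example above can be sketched as a one-sided two-proportion z-test, with a confidence interval for the size of the reduction. This is a minimal illustration using only the Python standard library. The function name and the flight counts are hypothetical, and the normal approximation it relies on is only reasonable when collision counts in both groups are not too small.

```python
import math
from statistics import NormalDist


def collision_rate_test(base_collisions: int, base_flights: int,
                        new_collisions: int, new_flights: int,
                        confidence: float = 0.95):
    """Test H0: same collision rate, against H1: the new algorithm's rate is lower.

    Returns (z, one_sided_p_value, confidence_interval_for_rate_reduction).
    """
    p_base = base_collisions / base_flights
    p_new = new_collisions / new_flights
    diff = p_base - p_new  # positive means fewer collisions with the new algorithm

    # Under H0 both groups share one rate, so the standard error is pooled.
    pooled = (base_collisions + new_collisions) / (base_flights + new_flights)
    se_null = math.sqrt(pooled * (1 - pooled) * (1 / base_flights + 1 / new_flights))
    if se_null == 0:
        raise ValueError("pooled rate is 0 or 1; the z-test is undefined")
    z = diff / se_null
    p_value = 1.0 - NormalDist().cdf(z)  # one-sided, since H1 predicts a reduction

    # The interval for the difference uses the unpooled standard error.
    se = math.sqrt(p_base * (1 - p_base) / base_flights
                   + p_new * (1 - p_new) / new_flights)
    z_crit = NormalDist().inv_cdf(0.5 + confidence / 2)
    return z, p_value, (diff - z_crit * se, diff + z_crit * se)


# Hypothetical data: 30 collisions in 200 baseline flights, 15 in 200 new flights.
z, p, ci = collision_rate_test(30, 200, 15, 200)
print(f"z = {z:.2f}, one-sided p = {p:.4f}")
print(f"95% CI for rate reduction: ({ci[0]:.3f}, {ci[1]:.3f})")
```

With these made-up counts the p-value falls below 0.05, so H0 would be rejected, while the interval shows that the size of the reduction is still quite uncertain.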

Impact and Applications in Advanced Drone Systems

Hypothesis testing is not an abstract academic exercise; it has profound, tangible impacts across the spectrum of advanced drone systems, propelling innovation in critical applications.

Autonomous Flight and AI

The development of truly autonomous drones heavily relies on hypothesis testing. When new AI models for perception, decision-making, or path planning are proposed, they are rigorously tested. For instance, researchers might hypothesize that a new convolutional neural network (CNN) architecture will improve the detection accuracy of small objects (e.g., power lines) by X% for inspection drones. Through controlled experiments comparing the CNN to existing methods on diverse datasets, coupled with statistical analysis of detection rates and false positives, this hypothesis is either supported or refuted, directly influencing the adoption and deployment of safer, more intelligent autonomous systems. Similarly, developing AI follow modes involves testing hypotheses about tracking stability, power consumption, and responsiveness, ensuring the drone can intelligently follow a subject while optimizing flight performance.
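Detection rates and false positives are usually summarized as precision, recall, and F1-score before any statistical comparison is made. The sketch below shows those definitions in code. The function name and the counts in the example are hypothetical.

```python
def detection_metrics(true_pos: int, false_pos: int, false_neg: int):
    """Return (precision, recall, f1) computed from detection counts.

    precision = TP / (TP + FP)   share of detections that were real objects
    recall    = TP / (TP + FN)   share of real objects that were detected
    f1        = harmonic mean of precision and recall
    A ratio with a zero denominator is reported as 0.0.
    """
    precision = true_pos / (true_pos + false_pos) if (true_pos + false_pos) else 0.0
    recall = true_pos / (true_pos + false_neg) if (true_pos + false_neg) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical power-line detection run: 80 correct detections,
# 20 false alarms, 10 missed lines.
precision, recall, f1 = detection_metrics(true_pos=80, false_pos=20, false_neg=10)
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
```

Computing these metrics per flight or per dataset split, for both the new and the existing model, yields the samples that a significance test then compares.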

Mapping and Remote Sensing Precision

In mapping and remote sensing, drones collect vast amounts of data used for everything from precision agriculture to environmental monitoring and construction site management. Hypothesis testing is crucial for validating the accuracy and utility of these datasets. For example, a hypothesis might state: “Multispectral imagery captured by a new drone-mounted sensor, when processed with algorithm X, can identify crop stress due to nitrogen deficiency 7-10 days earlier than traditional ground surveys.” This would be tested by conducting field experiments comparing drone data analysis with conventional methods and correlating findings with ground truth measurements. Such testing helps to establish the quantitative advantage and reliability of new drone-based sensing solutions, enabling better resource management and predictive analytics.
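When the same plots are assessed by both methods, the data are paired, and the quantity of interest is the per-plot lead time (days by which the drone analysis flagged stress before the ground survey). One simple option for small samples is an exact sign-flip permutation test, sketched below. It assumes that, under the null hypothesis, the lead times are symmetric around the tested margin. The function name and the lead-time values are hypothetical.

```python
from itertools import product


def sign_flip_p_value(differences, margin: float = 0.0) -> float:
    """One-sided exact sign-flip test for paired differences.

    H0: the mean difference is at most `margin`.
    H1: the mean difference is greater than `margin`.
    Enumerates every sign assignment, so it is only practical for small samples.
    """
    if len(differences) > 20:
        raise ValueError("too many pairs for exhaustive enumeration")
    shifted = [d - margin for d in differences]
    observed = sum(shifted)
    extreme = 0
    total = 0
    for signs in product((1, -1), repeat=len(shifted)):
        total += 1
        # Count assignments whose sum is at least as large as the observed sum.
        if sum(s * d for s, d in zip(signs, shifted)) >= observed - 1e-12:
            extreme += 1
    return extreme / total


# Hypothetical lead times in days for eight field plots.
lead_days = [7, 9, 8, 10, 6, 7, 9, 8]
print(sign_flip_p_value(lead_days))            # is there any lead at all?
print(sign_flip_p_value(lead_days, margin=7))  # is the lead more than 7 days?
```

Testing against a margin matters here because the hypothesis claims a specific lead of 7-10 days, which is a stronger statement than "earlier than ground surveys".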

Predictive Maintenance and System Reliability

The long-term operational success of drone fleets hinges on their reliability and the ability to predict component failures. Here, hypothesis testing can drive the development of smarter maintenance strategies. One might hypothesize: “Monitoring motor vibration patterns using an onboard sensor array will allow prediction of motor failure 50 flight hours in advance with 90% accuracy.” This involves collecting extensive operational data from drone motors, correlating vibration signatures with actual failure events, and using statistical models to test the predictive power of the sensor data. Successful validation of such a hypothesis leads to proactive maintenance schedules, reducing downtime and improving safety.
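A claimed accuracy such as 90% can be checked against a confidence interval for the observed proportion of correct predictions. The sketch below uses the Wilson score interval, which behaves better than the simple normal interval near 0% or 100%. The function name and the counts are hypothetical, and "correct" here simply means the prediction matched what happened to the motor.

```python
import math
from statistics import NormalDist


def wilson_interval(successes: int, trials: int, confidence: float = 0.95):
    """Wilson score confidence interval for a binomial proportion."""
    if trials <= 0 or not 0 <= successes <= trials:
        raise ValueError("need trials > 0 and 0 <= successes <= trials")
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    p_hat = successes / trials
    denom = 1 + z * z / trials
    center = (p_hat + z * z / (2 * trials)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / trials
                               + z * z / (4 * trials ** 2)) / denom
    return center - half_width, center + half_width


# Hypothetical evaluation: 46 of 50 motor-failure predictions were correct.
low, high = wilson_interval(successes=46, trials=50)
print(f"observed accuracy 0.92, 95% interval ({low:.3f}, {high:.3f})")
```

In this made-up case the observed accuracy is 92%, but the lower bound of the interval is below 90%, so 50 evaluated predictions would not be enough to establish the claimed accuracy.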

Ethical and Safety Considerations

As drones become more integrated into daily life, ethical considerations and safety compliance are paramount. Hypothesis testing plays a role in validating safety protocols and ensuring ethical operations. For instance, testing a hypothesis like “Implementing a geo-fencing algorithm that dynamically adjusts flight ceiling based on real-time airspace traffic will reduce unauthorized airspace incursions by 95% in controlled environments” ensures regulatory adherence and public safety. This involves simulating complex airspace scenarios and deploying prototype systems to evaluate their effectiveness in preventing violations, often with human-in-the-loop oversight to test human-machine interaction safety hypotheses.

The Iterative Cycle of Innovation

Hypothesis testing is not a one-time event but rather an integral part of a continuous, iterative cycle of innovation. In the fast-paced world of drone technology, this cycle is particularly critical for rapid progress and adaptation. The process begins with observation, leading to the formulation of a hypothesis. This hypothesis is then tested through carefully designed experiments, and the resulting data are analyzed.

The conclusions drawn from this analysis, whether they support or refute the initial hypothesis, feed back into the innovation process. If a hypothesis is supported, it validates a design choice or an algorithm, potentially leading to its implementation or further refinement. If a hypothesis is not supported, it’s not a failure but a valuable learning opportunity. It indicates either that the initial assumption was incorrect or that the experiment was unable to detect the effect, prompting new observations, revised hypotheses, and subsequent rounds of experimentation. This iterative loop (Observe -> Hypothesize -> Experiment -> Analyze -> Conclude -> Refine/New Hypothesis) is what drives sustained technological advancement.

This data-driven approach allows drone developers to move quickly and confidently, making informed decisions rather than relying on intuition alone. It ensures that every new feature, every performance enhancement, and every safety protocol in advanced drone systems, from AI follow modes to sophisticated remote sensing platforms, is built on a foundation of empirical evidence, leading to more robust, reliable, and intelligent aerial technologies.