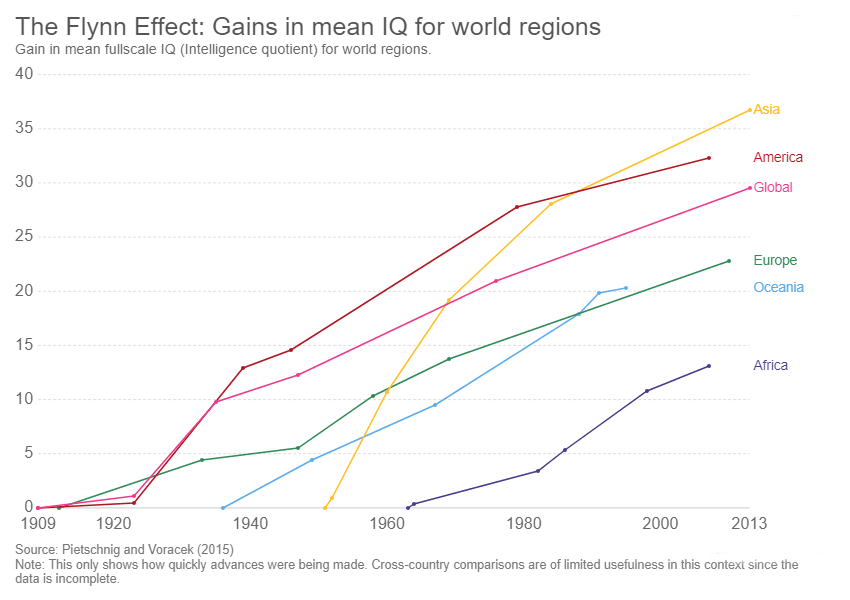

The term “Flynn Effect” comes from psychology, where it refers to the sustained, substantial rise in average intelligence test scores observed from one generation to the next across much of the 20th century. Named after the researcher James Flynn, it describes a long upward trajectory in measured cognitive performance that is generally attributed to societal, educational, and environmental changes. Transposed into the realm of drone technology and innovation, the concept offers a compelling parallel: a rapid, ongoing improvement in the ‘intelligence,’ autonomy, and capabilities of unmanned aerial vehicles (UAVs). This metaphorical ‘Flynn Effect’ in drone tech isn’t about human IQ, but about steady growth in onboard computational power, sensor sophistication, and AI integration, and the resulting expansion of the domains in which drones can operate. Each successive generation of drone technology perceives, decides, and executes better than the one before it.

The drone industry, characterized by its blistering pace of innovation, provides a fertile ground for observing such an effect. From the rudimentary remote-controlled quadcopters of yesteryear to today’s highly autonomous, AI-driven aerial platforms, the progression is undeniable. This article delves into the various facets of this technological ‘Flynn Effect,’ exploring how advances in onboard processing, sensor fusion, machine learning, and application development are collectively driving drones towards unprecedented levels of sophistication and utility. We will examine the core components contributing to this upward trend, the challenges it presents, and the exciting future it portends for industries ranging from logistics and agriculture to infrastructure inspection and environmental monitoring. The ‘Flynn Effect’ in drone tech is not just a theoretical construct; it is a lived reality shaping the future of aerial robotics.

The Metaphorical “Cognitive Leap” in Drone Systems

Just as human intelligence has seen generational gains, drone systems are experiencing a profound “cognitive leap,” driven by relentless innovation in their core processing and sensory capabilities. This isn’t just about faster flight or longer endurance; it’s about the fundamental ability of drones to perceive, process, and interact with their environments in increasingly sophisticated ways. This leap is the bedrock of the ‘Flynn Effect’ in drone technology, enabling new levels of autonomy and operational efficiency.

Evolution of Onboard Intelligence: Processors and AI Chips

At the heart of any intelligent system lies its processing power. Early drones relied on relatively simple microcontrollers for basic flight stabilization. Modern UAVs often carry powerful Systems-on-Chip (SoCs), many of which integrate dedicated AI accelerators such as Neural Processing Units (NPUs) or edge variants of Tensor Processing Units (TPUs). These accelerators let machine learning models, including deep neural networks, run directly onboard in real time. Local processing is crucial for reducing latency, enhancing data privacy, and enabling operations in environments where constant connectivity to cloud-based AI is impractical. The generational improvement is substantial: clock speeds have moved from megahertz to gigahertz, and workloads from simple control-loop arithmetic to neural-network inference on accelerators whose throughput is typically rated in trillions of operations per second (TOPS). The result is smarter, more responsive drone behavior. This continuous hardware advancement provides the foundational increase in “processing intelligence” that parallels the cognitive gains described by the original Flynn Effect. Each new drone model benefits from a richer, more efficient computational substrate, allowing for more complex tasks and sophisticated decision-making at the edge.

Enhanced Sensor Fusion and Environmental Awareness

The “eyes and ears” of a drone are its sensors, and their continuous evolution is a major contributor to the ‘Flynn Effect’. Initially, drones were equipped with basic GPS and inertial measurement units (IMUs). Now, sensor suites are incredibly diverse and sophisticated, including high-resolution visible light cameras, thermal cameras, LiDAR (Light Detection and Ranging) for 3D mapping, ultrasonic sensors for proximity detection, and advanced radar systems for all-weather operation. The true “cognitive leap,” however, comes from sensor fusion – the process of combining data from multiple disparate sensors to create a more complete and accurate understanding of the drone’s environment. Advanced algorithms synthesize input from GPS, IMU, cameras, LiDAR, and other sensors to build a real-time, high-fidelity model of the surroundings. This comprehensive environmental awareness significantly improves navigation accuracy, obstacle avoidance, and target recognition. For example, a drone fusing LiDAR data with visual imagery can differentiate between a tree and a power line more effectively than with either sensor alone, allowing for safer, more efficient autonomous flight paths. This multi-modal perception empowers drones to operate in complex, dynamic environments with greater confidence and adaptability, mirroring an increase in perceptual intelligence.
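To make the idea of sensor fusion concrete, here is a minimal sketch of one of its simplest forms: combining two independent altitude readings by inverse-variance weighting. This is a deliberately simpler case than the LiDAR-plus-camera example above. The function name `fuse_readings` and the sensor variances are illustrative assumptions, not values from any particular drone.

```python
def fuse_readings(readings):
    """Fuse independent (value, variance) readings of the same quantity.

    Each reading is weighted by the inverse of its variance, so the more
    trustworthy sensor dominates. The fused variance is never larger than
    the smallest input variance, which is the payoff of fusion.
    """
    weights = [1.0 / variance for _, variance in readings]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, readings)) / total_weight
    fused_variance = 1.0 / total_weight
    return fused_value, fused_variance


# Hypothetical example: a LiDAR rangefinder (low noise) and an
# ultrasonic sensor (higher noise) both measuring altitude in meters.
lidar = (10.0, 0.04)        # value, variance (assumed)
ultrasonic = (10.6, 0.36)   # value, variance (assumed)
altitude, variance = fuse_readings([lidar, ultrasonic])
```

The fused estimate sits much closer to the LiDAR reading because that sensor is assumed to be less noisy, and the fused variance is lower than that of either sensor alone. Production systems generally use recursive filters over full vehicle state rather than a one-shot weighted average like this.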

Accelerating Autonomous Capabilities and Learning

The ‘Flynn Effect’ in drone technology is perhaps most evident in the accelerating sophistication of autonomous capabilities. This is where the raw processing power and advanced sensor data translate into intelligent actions, enabling drones to perform tasks with minimal human intervention and continuously improve their performance through various learning mechanisms. The journey from simple waypoint navigation to complex, adaptive autonomous missions highlights a generational leap in operational “intelligence.”

Generational Improvements in Flight Controllers and Algorithms

The flight controller is the brain of the drone, responsible for interpreting sensor data, executing commands, and maintaining stable flight. Over the years, flight controller hardware has become significantly more powerful, but it’s the underlying algorithms that have truly transformed drone autonomy. Early algorithms focused on basic stability and position hold. Successive generations have introduced more advanced control loops, adaptive flight control, and robust fault-tolerance mechanisms. These improvements allow drones to handle varying wind conditions, carry different payloads, and perform complex maneuvers with greater precision. For instance, the long-established PID (Proportional-Integral-Derivative) controller remains a common building block, but pairing well-tuned PID loops with state-estimation filters (such as Kalman filters) gives the controller a cleaner estimate of the vehicle’s state to act on, which results in smoother and more accurate flight. The integration of advanced path planning and trajectory optimization algorithms allows drones to autonomously choose efficient and safe routes, considering obstacles, terrain, and mission objectives. This continuous algorithmic refinement represents a core aspect of the ‘Flynn Effect’, as each iteration adds layers of “intelligence” that make drones more capable and reliable without direct human input.
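The pairing of a PID controller with a state-estimation filter can be sketched in a few lines. The following is an illustrative, single-axis example only: the class names `ScalarKalman` and `PID`, the gains, and the noise parameters are assumptions chosen for readability, and real flight controllers run multi-dimensional filters and cascaded loops.

```python
class ScalarKalman:
    """One-dimensional Kalman filter for a slowly varying quantity."""

    def __init__(self, x0, p0, process_var, measurement_var):
        self.x = x0                  # state estimate
        self.p = p0                  # estimate variance
        self.q = process_var         # how much the true state may drift per step
        self.r = measurement_var     # sensor noise variance

    def update(self, measurement):
        self.p += self.q                         # predict: uncertainty grows
        gain = self.p / (self.p + self.r)        # Kalman gain in [0, 1]
        self.x += gain * (measurement - self.x)  # correct toward the measurement
        self.p *= 1.0 - gain                     # uncertainty shrinks after correction
        return self.x


class PID:
    """Textbook PID controller acting on a single setpoint."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, setpoint, estimate, dt):
        error = setpoint - estimate
        self.integral += error * dt
        # No derivative term on the first call, since there is no previous error.
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def control_step(kalman, pid, raw_altitude, target_altitude, dt):
    """Filter the noisy reading first, then let the PID act on the estimate."""
    estimate = kalman.update(raw_altitude)
    return pid.update(target_altitude, estimate, dt)
```

The design point is the ordering inside `control_step`: the controller never sees the raw sensor value, only the filtered estimate, so measurement noise is attenuated before it reaches the derivative term, which is the part of a PID loop most sensitive to noise.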

Machine Learning and Predictive Analytics in Drone Operations

Perhaps the most potent driver of the ‘Flynn Effect’ in drone autonomy is the integration of machine learning (ML) and predictive analytics. Drones are no longer limited to executing pre-programmed instructions; increasingly, they run models learned from data, and those models can be retrained as new data arrives. For example, autonomous inspection drones can use ML models trained on large datasets of infrastructure images to identify defects like cracks or corrosion, in favorable cases with accuracy approaching that of trained human inspectors, and even classify their severity. Predictive analytics takes this a step further, enabling drones to anticipate potential failures, optimize maintenance schedules for critical components (like batteries or motors), and even predict environmental changes (e.g., wind gusts) to adjust flight paths proactively. Reinforcement learning, a subset of ML, is increasingly being used to train drones to perform complex tasks, such as navigating through unknown environments or interacting with dynamic objects, by learning through trial and error within simulated or real-world scenarios. This continuous learning from data, experience, and environmental feedback fundamentally elevates the “intelligence” of drone systems, allowing them to improve their performance over time, much like a learning organism, embodying the very essence of a technological ‘Flynn Effect’.
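Learning by trial and error in simulation can be illustrated with tabular Q-learning on a toy problem. The sketch below is a hypothetical one-dimensional "corridor" in which an agent starts at the left end and is rewarded only on reaching the right end. The environment, the function names, and the hyperparameters are all assumptions for illustration; training a real drone policy involves far richer simulators and function approximation.

```python
import random


def train_corridor(n_states=5, episodes=500, alpha=0.5, gamma=0.9, seed=0):
    """Tabular Q-learning on a corridor; action 0 = left, action 1 = right."""
    rng = random.Random(seed)
    goal = n_states - 1
    q = [[0.0, 0.0] for _ in range(n_states)]  # q[state][action]
    for _ in range(episodes):
        state = 0
        for _ in range(200):  # step cap so every episode terminates
            # Behave randomly; Q-learning is off-policy, so it still
            # learns the values of the greedy policy.
            action = rng.randrange(2)
            nxt = max(0, state - 1) if action == 0 else state + 1
            reward = 1.0 if nxt == goal else 0.0
            best_next = 0.0 if nxt == goal else max(q[nxt])
            # Move the estimate toward reward plus discounted future value.
            q[state][action] += alpha * (reward + gamma * best_next - q[state][action])
            state = nxt
            if state == goal:
                break
    return q


def greedy_policy(q):
    """Best learned action for every non-terminal state."""
    return ["left" if left > right else "right" for left, right in q[:-1]]
```

After training, `greedy_policy(train_corridor())` selects "right" in every state: the agent was never told which direction leads to the goal, but the reward signal propagated backward through the value table until each state preferred the action that leads toward it.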

The Expanding Scope of Drone Applications and Data Mastery

The ‘Flynn Effect’ in drone innovation is not just internal to the drone’s capabilities but also manifests in the ever-widening scope and increasing sophistication of its applications. As drones become smarter and more autonomous, their ability to collect, process, and interpret vast amounts of data has transformed entire industries, demonstrating a clear generational leap in utility and impact. This mastery of data is where the abstract concept of increasing ‘intelligence’ truly delivers tangible value.

Sophistication in Mapping, Surveying, and Remote Sensing

One of the earliest and most impactful applications of drones was in mapping and surveying. Initially, drones offered a quicker alternative to traditional methods. Today, the data products are far more sophisticated. Drones equipped with LiDAR, photogrammetry cameras, and multispectral/hyperspectral sensors can create highly accurate 3D models, digital elevation models (DEMs), and orthomosaic maps, reaching centimeter-level precision when paired with RTK/PPK positioning or surveyed ground control points. This level of detail enables critical applications in construction (progress monitoring, volume calculations), agriculture (crop health analysis, yield prediction), urban planning (infrastructure development, change detection), and environmental science (land use monitoring, biodiversity assessment). The ‘Flynn Effect’ here is evident in the leap from simple aerial photographs to complex data products that require significant onboard processing and post-processing analytics, often leveraging AI to extract meaningful insights automatically. For instance, AI algorithms can identify specific plant diseases from multispectral data or detect minute shifts in land topography from successive LiDAR scans, tasks that were out of reach for earlier drone generations.
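Two standard calculations sit behind the mapping and crop-health claims above, and both are short enough to show directly. Ground sample distance (GSD) gives the ground footprint of one image pixel, and the Normalized Difference Vegetation Index (NDVI) is a widely used crop-health indicator computed from near-infrared and red reflectance. The camera parameters in the example are hypothetical, and the function names are illustrative.

```python
def ground_sample_distance_cm(sensor_width_mm, focal_length_mm, altitude_m, image_width_px):
    """Ground footprint of one pixel, in centimeters, for a nadir-pointing camera.

    GSD = (sensor width * flight altitude) / (focal length * image width)
    """
    gsd_m = (sensor_width_mm * altitude_m) / (focal_length_mm * image_width_px)
    return gsd_m * 100.0


def ndvi(nir, red):
    """Normalized Difference Vegetation Index, in the range [-1, 1].

    Healthy vegetation reflects strongly in near-infrared and absorbs red,
    so higher values suggest denser, healthier canopy.
    """
    total = nir + red
    if total == 0:
        return 0.0  # avoid division by zero on empty or masked pixels
    return (nir - red) / total


# Hypothetical camera: 13.2 mm wide sensor, 8.8 mm lens, 5472 px wide images,
# flown at 100 m above ground.
gsd = ground_sample_distance_cm(13.2, 8.8, 100.0, 5472)

# Hypothetical reflectance values for a single pixel.
pixel_index = ndvi(nir=0.5, red=0.1)
```

With these assumed parameters the GSD works out to roughly 2.7 cm per pixel, which shows why flight altitude and camera choice largely determine whether a survey can resolve centimeter-scale detail. Note that GSD describes image resolution; absolute positional accuracy additionally depends on how the imagery is georeferenced.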

AI-Driven Object Recognition and Decision-Making

The integration of artificial intelligence has profoundly amplified drones’ ability to recognize objects and make autonomous decisions. Drones are no longer merely observers; they are active participants capable of understanding and reacting to their environment. In security and surveillance, AI-powered drones can autonomously detect intruders, identify specific vehicles or individuals, and track their movements, even in challenging conditions. In industrial inspection, drones can identify anomalies such as loose bolts on a wind turbine, cracks in a pipeline, or corrosion on a bridge, flagging them for human review. Beyond mere identification, sophisticated drones are being developed with decision-making capabilities: for example, an autonomous delivery drone might dynamically recalculate its route in real time based on unexpected obstacles, weather changes, or evolving traffic conditions, prioritizing safety and efficiency. This ability to not only “see” and “understand” but also to “decide” and “act” based on complex environmental cues represents a pinnacle of the ‘Flynn Effect’ in drone technology, transforming drones from tools into intelligent, adaptive agents.
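The delivery-drone example of recalculating a route around an unexpected obstacle can be sketched with A* search on a grid. This is a simplified, two-dimensional illustration: the grid representation, the function name `plan_route`, and the unit step costs are assumptions, and real planners work in continuous 3D space with vehicle dynamics and airspace constraints.

```python
import heapq


def plan_route(grid_size, start, goal, blocked):
    """Shortest 4-connected path on a grid using A*, or None if unreachable."""
    rows, cols = grid_size

    def heuristic(cell):
        # Manhattan distance never overestimates on a 4-connected unit grid.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]
    came_from = {start: None}
    cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = []
            while cell is not None:      # walk parent links back to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > cost[cell]:
            continue                     # stale heap entry, a cheaper one was found
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            in_bounds = 0 <= nxt[0] < rows and 0 <= nxt[1] < cols
            if not in_bounds or nxt in blocked:
                continue
            new_g = g + 1
            if new_g < cost.get(nxt, float("inf")):
                cost[nxt] = new_g
                came_from[nxt] = cell
                heapq.heappush(open_heap, (new_g + heuristic(nxt), new_g, nxt))
    return None


# Initial plan across an empty 5x5 grid, then a re-plan once an
# obstacle is detected on the direct route.
obstacles = set()
first_route = plan_route((5, 5), (0, 0), (0, 4), obstacles)
obstacles.add((0, 2))
new_route = plan_route((5, 5), (0, 0), (0, 4), obstacles)
```

Re-planning here is simply a second call with an updated obstacle set, which is the simplest possible strategy. The detour costs two extra moves in this example; incremental planners avoid recomputing the whole search when only a small part of the map changes.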

Challenges and Future Trajectories of Drone “Intelligence”

While the ‘Flynn Effect’ in drone tech promises a future of increasingly intelligent and autonomous aerial systems, this rapid progression is not without its challenges. The trajectory of drone “intelligence” is a complex interplay of technological breakthroughs, ethical considerations, regulatory frameworks, and the continuous pursuit of true adaptability and resilience. Understanding these facets is crucial for guiding the future evolution of UAVs responsibly.

Ethical Considerations and Human-Machine Teaming

As drones become more autonomous and their decision-making capabilities advance, significant ethical considerations emerge. Questions surrounding privacy, data security, accountability for autonomous errors, and the potential for misuse become paramount. The ‘Flynn Effect’ in drone intelligence necessitates a parallel evolution in ethical guidelines and regulatory frameworks to ensure that these powerful technologies serve humanity’s best interests. A key area of focus is human-machine teaming (HMT). Rather than aiming for full human displacement, the future often envisions a collaborative paradigm where drones augment human capabilities. For example, in search and rescue, an autonomous drone might identify potential survivors, but a human operator makes the final decision on deployment and extraction. Designing interfaces and protocols that foster trust, transparency, and effective collaboration between human operators and increasingly intelligent drones is vital. This ensures that as drone “intelligence” grows, it remains a tool for empowerment rather than a source of unforeseen ethical dilemmas.

The Pursuit of True Self-Correction and Adaptability

Despite their impressive advancements, current drone systems are still largely reliant on pre-programmed knowledge and training data. True self-correction and adaptability, akin to how biological systems learn and evolve, remain ambitious goals. The next frontier in the ‘Flynn Effect’ for drone tech involves developing systems that can autonomously learn from novel, unpredicted situations, generalize knowledge across different environments, and exhibit robust resilience to unforeseen failures or adversarial attacks. This includes advancements in areas like federated learning (where drones learn collectively without centralizing sensitive data), explainable AI (allowing human operators to understand drone decision-making), and bio-inspired robotics that mimic natural systems’ robustness and flexibility. The pursuit of true self-correction means drones could dynamically adjust their flight control algorithms in response to damaged propellers or adapt their inspection patterns based on emerging structural weaknesses. The ability to autonomously identify errors, diagnose root causes, and implement corrective actions in real-time would represent another profound leap in drone ‘intelligence,’ pushing the ‘Flynn Effect’ to new heights and ushering in an era of truly resilient and adaptive aerial platforms.
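The federated learning idea mentioned above, where drones learn collectively without centralizing raw data, rests on a simple aggregation step. The sketch below shows federated averaging in its most basic form: each drone trains locally and shares only its model parameters and sample count, and a coordinator combines them into a weighted mean. The function name and the flat-list parameter format are illustrative assumptions.

```python
def federated_average(client_updates):
    """Combine per-drone model parameters into one global model.

    client_updates is a list of (parameters, sample_count) pairs, where
    parameters is a flat list of floats. Each drone's contribution is
    weighted by how much local data it trained on; raw data never leaves
    the drone, only the parameters do.
    """
    total_samples = sum(count for _, count in client_updates)
    n_params = len(client_updates[0][0])
    averaged = [0.0] * n_params
    for params, count in client_updates:
        weight = count / total_samples
        for i, value in enumerate(params):
            averaged[i] += weight * value
    return averaged


# Hypothetical round with two drones: the second trained on three times
# as many local samples, so it pulls the average toward its parameters.
global_model = federated_average([
    ([1.0, 2.0], 10),
    ([3.0, 4.0], 30),
])
```

In a full system this aggregation repeats over many rounds, with the averaged model sent back to each drone as the starting point for its next round of local training.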

In conclusion, the ‘Flynn Effect’ serves as a powerful metaphor to describe the relentless and accelerating growth in the capabilities and ‘intelligence’ of drone technology. From their foundational processing power and advanced sensor fusion to their expanding autonomous functions and data mastery, drones are undergoing a continuous, generational leap in sophistication. This effect is reshaping industries, pushing the boundaries of what is possible from the sky, and presenting both immense opportunities and significant challenges. As we navigate the future, fostering ethical development and robust human-machine collaboration will be paramount to harnessing the full potential of this ever-evolving aerial intelligence. The trajectory of drone innovation shows no sign of slowing, promising a future where UAVs are not just tools, but intelligent, adaptive partners in countless endeavors.