Machines now exchange data at speeds that were unimaginable a few decades ago, and autonomous systems navigate complex environments with precision. Behind every one of these interactions is a layer most users never see: the computer protocol. Far from being a mere technicality, protocols are the rulebook that lets diverse systems understand each other, exchange information, and collaborate. Without standardized rules, the internet as we know it could not function, AI systems would be cut off from their data sources, autonomous vehicles could not coordinate their sensors and controls, and remote sensing data would be unintelligible to the systems receiving it. This article explains what computer protocols are and the role they play in advanced technologies such as AI, autonomous flight, mapping, and remote sensing.

The Foundational Language of Digital Interaction

At its core, a computer protocol is a set of formal rules, conventions, and data formats that govern how computers and other network devices exchange information. Think of it as a universal etiquette guide for machines, ensuring that regardless of their make or model, they can speak the same language and interpret messages correctly. This standardization is not just convenient; it is absolutely critical for the interoperability that defines our interconnected world.

Defining Computer Protocols

More formally, a computer protocol defines the syntax, semantics, and synchronization of communication, and possible error recovery methods. Syntax refers to the structure of the data blocks, indicating how information is organized within a message. Semantics deals with the meaning of each section of the message, ensuring that what one device sends, the other interprets exactly as intended. Synchronization dictates the timing and sequencing of messages, crucial for ensuring data arrives in the correct order and at the appropriate moment. Finally, robust protocols include mechanisms for error detection and correction, ensuring data integrity even across unreliable communication channels.
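
The four ingredients above can be made concrete with a toy frame format. The following is a minimal sketch, not any real protocol: the `PX` magic bytes, the field layout, and the message type codes are all assumptions invented for illustration. The fixed header layout stands in for syntax, the type table for semantics, the sequence number for synchronization, and a CRC-32 for error detection.

```python
import struct
import zlib

# Hypothetical frame layout, invented for this example:
#   magic (2 bytes) | msg_type (1) | seq (2) | length (2) | payload | crc32 (4)
HEADER = struct.Struct("!2sBHH")  # syntax: fixed field order, network byte order
MAGIC = b"PX"
MSG_TYPES = {1: "HEARTBEAT", 2: "SENSOR_READING"}  # semantics: meaning of each code


def encode(msg_type: int, seq: int, payload: bytes) -> bytes:
    """Build a frame: header, payload, then a CRC-32 over both."""
    header = HEADER.pack(MAGIC, msg_type, seq, len(payload))
    crc = zlib.crc32(header + payload)
    return header + payload + struct.pack("!I", crc)


def decode(frame: bytes, expected_seq: int):
    """Validate a frame and return (message name, payload)."""
    if len(frame) < HEADER.size + 4:
        raise ValueError("frame too short")
    magic, msg_type, seq, length = HEADER.unpack_from(frame)
    if magic != MAGIC:
        raise ValueError("bad magic bytes")
    body_end = HEADER.size + length
    if len(frame) != body_end + 4:
        raise ValueError("length field does not match frame size")
    (crc,) = struct.unpack_from("!I", frame, body_end)
    if zlib.crc32(frame[:body_end]) != crc:  # error detection
        raise ValueError("checksum mismatch")
    if seq != expected_seq:  # synchronization: enforce ordering
        raise ValueError(f"out-of-sequence frame: got {seq}, expected {expected_seq}")
    if msg_type not in MSG_TYPES:
        raise ValueError(f"unknown message type {msg_type}")
    return MSG_TYPES[msg_type], frame[HEADER.size:body_end]
```

A receiver that calls `decode(encode(2, 7, b"21.5C"), expected_seq=7)` gets back the message name and the payload, while a frame with a flipped bit or an unexpected sequence number is rejected with a `ValueError`. This sketch only detects errors; recovery (for example, asking for a retransmission) would be a further rule the protocol has to define.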

To draw an analogy, consider human language. If two people want to communicate, they must share a common language (English, Spanish, Mandarin) and follow its grammar and vocabulary rules. Similarly, if they are conducting a formal meeting, they might adhere to a specific protocol for speaking turns, introductions, and decision-making. In the digital realm, protocols provide this common language and structure, allowing a drone’s flight controller to communicate with its ground station, a sensor to transmit data to a cloud server, or an AI model to receive input from various data streams.

The Necessity of Standardization

The necessity of standardization in computer protocols cannot be overstated. Imagine a world where every device manufacturer created their own unique communication method. A smartphone from one company wouldn’t be able to connect to a Wi-Fi router from another; a sensor array designed by one team couldn’t feed data to an analytics platform built by another. Such a fragmented ecosystem would stifle innovation, create insurmountable compatibility issues, and effectively halt the progress of interconnected technologies.

Standardized protocols, on the other hand, foster an environment of open innovation. They allow developers to create new applications and hardware without needing to reinvent the wheel for every communication layer. This principle is fundamental to the rapid expansion of fields like autonomous systems, where diverse components – from GPS modules and LIDAR sensors to flight control units and AI inference engines – must all communicate flawlessly. Without universally accepted protocols, the promise of a seamless, intelligent future would remain an elusive dream.

How Protocols Drive Modern Tech & Innovation

Protocols are not passive rulebooks; they are active enablers, propelling the advancements we see in cutting-edge technological domains. From intelligent automation to vast data networks, protocols are the invisible gears that keep the machinery of innovation turning.

Enabling Autonomous Systems and AI

Autonomous systems, whether they are self-driving cars, industrial robots, or sophisticated drones, rely heavily on a complex web of communication protocols. These systems need to gather real-time data from various sensors (cameras, radar, lidar, inertial measurement units), process it using onboard AI algorithms, and then issue commands to actuators (motors, steering mechanisms). All these steps involve rapid, reliable data exchange, which is meticulously managed by protocols.

For instance, in drone autonomy, protocols like MAVLink (Micro Air Vehicle Link) are critical. MAVLink is a lightweight messaging protocol for micro air vehicles, commonly used through a header-only message-marshaling library, and it is designed for communication with ground control stations and between onboard components. It defines messages for everything from GPS coordinates and battery status to flight mode commands and mission waypoints. Without MAVLink or a similar protocol, an autonomous drone couldn’t receive its flight plan, report its position, or execute complex maneuvers based on AI-driven obstacle avoidance decisions.
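
To illustrate what "defining messages" means in practice, here is a small sketch of a telemetry message catalog. It is not the MAVLink wire format: the message IDs, the field layouts, and the `Position` and `Battery` names are hypothetical. It does show one technique that is common in compact telemetry protocols, which is sending coordinates as scaled integers rather than floating-point text.

```python
import struct
from dataclasses import dataclass


@dataclass(frozen=True)
class Position:
    lat_deg: float
    lon_deg: float
    alt_m: float


@dataclass(frozen=True)
class Battery:
    voltage_v: float
    remaining_pct: int


# Hypothetical IDs and layouts (little-endian), chosen only for this example.
POSITION_ID, BATTERY_ID = 1, 2
_POSITION = struct.Struct("<iii")  # lat, lon in 1e-7 degrees; altitude in millimetres
_BATTERY = struct.Struct("<HB")    # millivolts; remaining charge in percent


def pack_message(msg) -> bytes:
    """Serialize a message as: id (1 byte) | length (1 byte) | body."""
    if isinstance(msg, Position):
        body = _POSITION.pack(round(msg.lat_deg * 1e7),
                              round(msg.lon_deg * 1e7),
                              round(msg.alt_m * 1000))
        msg_id = POSITION_ID
    elif isinstance(msg, Battery):
        body = _BATTERY.pack(round(msg.voltage_v * 1000), msg.remaining_pct)
        msg_id = BATTERY_ID
    else:
        raise TypeError(f"no wire format defined for {type(msg).__name__}")
    return bytes([msg_id, len(body)]) + body


def unpack_message(data: bytes):
    """Parse bytes produced by pack_message back into a message object."""
    if len(data) < 2:
        raise ValueError("message too short")
    msg_id, length = data[0], data[1]
    body = data[2:2 + length]
    if len(body) != length:
        raise ValueError("truncated message")
    if msg_id == POSITION_ID:
        lat, lon, alt = _POSITION.unpack(body)
        return Position(lat / 1e7, lon / 1e7, alt / 1000)
    if msg_id == BATTERY_ID:
        millivolts, pct = _BATTERY.unpack(body)
        return Battery(millivolts / 1000, pct)
    raise ValueError(f"unknown message id {msg_id}")
```

In this sketch a full position report occupies 14 bytes, which matters on the low-bandwidth radio links that drones often use. A real protocol would add framing, sequence numbers, and a checksum around each message.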

Similarly, the Robot Operating System (ROS), a flexible framework for writing robot software, defines its own protocols for communication between processes. ROS nodes (independent processes that perform specific tasks, such as image processing or motor control) communicate by publishing and subscribing to messages on named topics. Each topic carries messages of a declared type, so a node that builds a map from sensor data can reliably pass it to a navigation node, which in turn instructs the motor controller node. An AI follow mode, for example, depends on a vision system that processes real-time video, identifies a target, and sends precise heading and speed commands, and every step in that chain relies on robust, low-latency protocols.
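
The topic-based pattern can be sketched in a few lines. This is an in-process toy, not the ROS API: the `TopicBus` class is hypothetical, and the `/map` and `/cmd_vel` topic names are used only as illustrative labels. Real ROS nodes are separate processes that exchange serialized messages over the network.

```python
from collections import defaultdict
from typing import Any, Callable


class TopicBus:
    """Toy publish/subscribe hub keyed by topic name (not the ROS API)."""

    def __init__(self) -> None:
        self._subscribers = defaultdict(list)

    def subscribe(self, topic: str, callback: Callable[[Any], None]) -> None:
        """Register a callback to run for every message on a topic."""
        self._subscribers[topic].append(callback)

    def publish(self, topic: str, message: Any) -> int:
        """Deliver a message to all subscribers; return how many received it."""
        callbacks = self._subscribers.get(topic, [])
        for callback in callbacks:
            callback(message)
        return len(callbacks)


if __name__ == "__main__":
    bus = TopicBus()
    motor_commands = []

    # "Motor controller" node: consumes velocity commands.
    bus.subscribe("/cmd_vel", motor_commands.append)

    # "Navigation" node: turns each map update into a velocity command.
    def navigate(grid: dict) -> None:
        speed = 0.5 if grid["path_clear"] else 0.0
        bus.publish("/cmd_vel", {"linear_m_s": speed})

    bus.subscribe("/map", navigate)

    # "Mapping" node: publishes a map built from sensor data.
    bus.publish("/map", {"path_clear": True})
    print(motor_commands)
```

The publisher never refers to its subscribers directly. That decoupling is what lets a mapping node be replaced or upgraded without touching navigation or motor control, as long as the message on the topic keeps the same shape.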

Facilitating Remote Sensing and Data Transmission

Remote sensing, particularly from drone and satellite platforms, generates enormous volumes of data—from high-resolution imagery and thermal scans to hyperspectral data and LiDAR point clouds. Transmitting this data efficiently and securely from the collection platform to ground stations, data centers, or cloud-based analytics platforms is a monumental task that protocols handle.

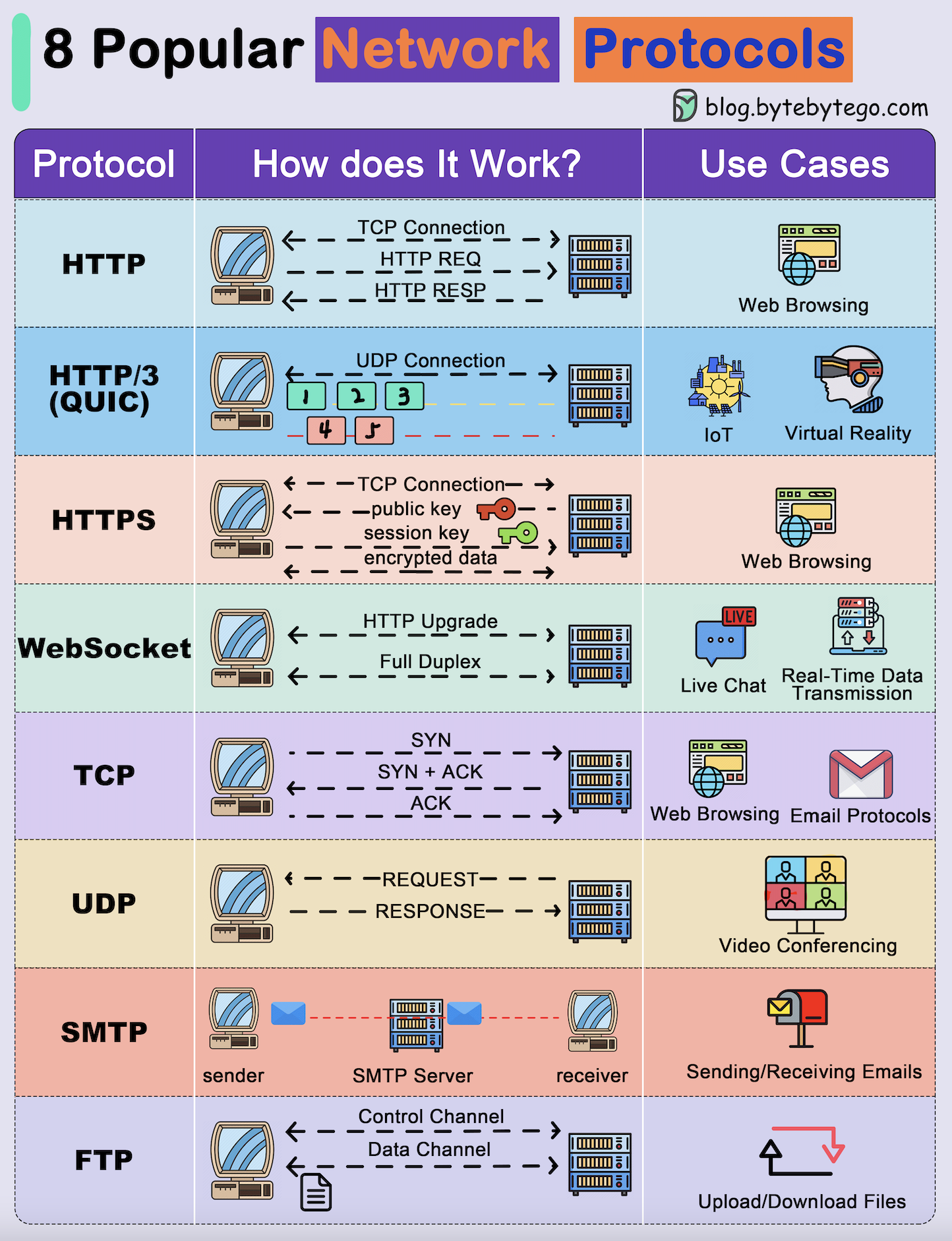

The TCP/IP suite (Transmission Control Protocol/Internet Protocol) forms the backbone of this data transmission over the internet: IP addresses and routes packets between networks, while TCP adds reliable, ordered, and error-checked delivery on top of it. For real-time telemetry or less critical, high-volume sensor data where some loss is acceptable in exchange for speed, UDP (User Datagram Protocol) might be employed instead. Specialized protocols are also in play, such as RTCM, which carries the satellite-positioning correction data used for Real-Time Kinematic (RTK) positioning and is crucial for mapping applications requiring centimeter-level accuracy.
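
The sketch below shows what "some loss is acceptable" can look like on the receiving side of a UDP telemetry stream. The datagram layout (a sequence number followed by one float) and the `LossTolerantReceiver` class are assumptions made for this example. The receiver keeps only fresh samples, counts gaps, and never waits for a missing datagram. The `send_over_loopback` helper uses Python's standard `socket` module to push the datagrams through a real UDP socket on the local machine.

```python
import socket
import struct

# Hypothetical datagram layout: sequence number (uint32), sensor value (float32).
TELEMETRY = struct.Struct("!If")


def encode_sample(seq: int, value: float) -> bytes:
    return TELEMETRY.pack(seq, value)


class LossTolerantReceiver:
    """Keeps the newest samples, counts gaps, and never waits for resends."""

    def __init__(self) -> None:
        self.last_seq = None
        self.lost = 0
        self.values = []

    def handle(self, datagram: bytes) -> bool:
        """Process one datagram; return False if it was stale and ignored."""
        seq, value = TELEMETRY.unpack(datagram)
        if self.last_seq is not None:
            if seq <= self.last_seq:
                return False  # duplicate or late arrival: drop it
            self.lost += seq - self.last_seq - 1  # skipped numbers count as lost
        self.last_seq = seq
        self.values.append(value)
        return True


def send_over_loopback(datagrams):
    """Send datagrams through a UDP socket on 127.0.0.1; return those received."""
    rx = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    tx = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        rx.bind(("127.0.0.1", 0))  # port 0: let the OS pick a free port
        rx.settimeout(1.0)
        for datagram in datagrams:
            tx.sendto(datagram, rx.getsockname())
        received = []
        try:
            for _ in datagrams:
                data, _addr = rx.recvfrom(TELEMETRY.size)
                received.append(data)
        except socket.timeout:
            pass  # UDP gives no delivery guarantee; accept what arrived
        return received
    finally:
        rx.close()
        tx.close()
```

With TCP, a lost segment would hold back everything sent after it until the retransmission arrived. Here a lost sample simply shows up as an increment of `lost`, and the newest reading is always available immediately, which is usually the right trade for fast-changing telemetry.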

Consider a drone conducting a large-scale agricultural survey, collecting multispectral imagery to assess crop health. Over the course of the survey, its onboard systems can capture up to terabytes of data, all of which must be transferred to a processing center. Protocols manage the segmentation of this data into manageable packets, their transmission over wireless links (e.g., Wi-Fi, cellular, or proprietary radio links), their reassembly at the receiving end, and the verification of their integrity. Without these protocols, the raw sensor data could never reach the analytical tools that transform it into actionable insights for farmers.
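
The segmentation, reassembly, and verification steps described above can be sketched as follows. The packet tuple `(index, total, chunk)` and the use of a SHA-256 digest sent alongside the transfer are assumptions chosen for this illustration, not a description of any particular drone data link.

```python
import hashlib


def segment(data: bytes, chunk_size: int):
    """Split data into (index, total, chunk) packets of at most chunk_size bytes."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    total = (len(data) + chunk_size - 1) // chunk_size
    return [(i, total, data[i * chunk_size:(i + 1) * chunk_size])
            for i in range(total)]


def reassemble(packets, expected_sha256: str) -> bytes:
    """Rebuild the original bytes from packets that may arrive in any order."""
    if not packets:
        raise ValueError("no packets received")
    total = packets[0][1]
    by_index = {index: chunk for index, _total, chunk in packets}
    missing = [i for i in range(total) if i not in by_index]
    if missing:
        raise ValueError(f"missing packets: {missing}")
    data = b"".join(by_index[i] for i in range(total))
    if hashlib.sha256(data).hexdigest() != expected_sha256:
        raise ValueError("integrity check failed")
    return data


if __name__ == "__main__":
    image = bytes(range(256)) * 40            # stand-in for sensor data
    digest = hashlib.sha256(image).hexdigest()
    packets = segment(image, chunk_size=1000)
    packets.reverse()                          # simulate out-of-order arrival
    assert reassemble(packets, digest) == image
```

Because each packet carries its own index, the receiver can tolerate reordering and can report exactly which pieces to request again, instead of restarting the whole transfer.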

Powering Connectivity and the IoT Ecosystem

The Internet of Things (IoT) envisions a world where billions of devices, from smart home appliances to industrial sensors, are interconnected, communicating and collaborating to create intelligent environments. Protocols are the glue that holds this vast ecosystem together. They enable devices with vastly different computational capabilities and power constraints to communicate effectively.

Protocols like MQTT (Message Queuing Telemetry Transport) are specifically designed for lightweight publish/subscribe messaging in constrained environments, making them ideal for IoT devices that might run on battery power and have limited processing capabilities. CoAP (Constrained Application Protocol) fills a similar niche for web-style request/response transfers, typically running over UDP. For shorter-range, low-power connectivity, protocols like Zigbee and Bluetooth Low Energy (BLE) are essential for device-to-device communication in smart homes or within a localized sensor network for remote sensing applications.
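
To give a sense of how small such messages are, the sketch below builds an MQTT 3.1.1 PUBLISH packet at QoS 0 by hand, following the packet layout in the MQTT 3.1.1 specification: a one-byte fixed header, a variable-length "remaining length" field, a length-prefixed topic name, and the payload. The function names and the `farm/soil` topic are invented for this example, and a real device would normally use an MQTT client library (and first open a session with CONNECT) rather than assembling bytes itself.

```python
def encode_remaining_length(n: int) -> bytes:
    """Encode the MQTT 'remaining length' field: 7 bits per byte, MSB = more."""
    if not 0 <= n <= 268_435_455:  # largest value that fits in four bytes
        raise ValueError("remaining length out of range")
    out = bytearray()
    while True:
        n, digit = divmod(n, 128)
        if n > 0:
            digit |= 0x80  # continuation bit: more length bytes follow
        out.append(digit)
        if n == 0:
            return bytes(out)


def build_publish_qos0(topic: str, payload: bytes) -> bytes:
    """Build an MQTT 3.1.1 PUBLISH packet with QoS 0, no DUP or RETAIN flags."""
    topic_bytes = topic.encode("utf-8")
    # Variable header: topic name prefixed with its 2-byte big-endian length.
    # QoS 0 packets carry no packet identifier.
    variable_header = len(topic_bytes).to_bytes(2, "big") + topic_bytes
    remaining = len(variable_header) + len(payload)
    fixed_header = bytes([0x30]) + encode_remaining_length(remaining)
    return fixed_header + variable_header + payload


if __name__ == "__main__":
    packet = build_publish_qos0("farm/soil", b"31")
    print(len(packet), packet.hex())
```

For a 9-byte topic and a 2-byte payload, the whole packet is 15 bytes, so the protocol adds only 4 bytes of overhead. That is the kind of economy that suits battery-powered sensors on slow links.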

These protocols facilitate applications such as smart city infrastructure, where traffic sensors communicate with signal lights, or precision agriculture, where soil moisture sensors relay data to automated irrigation systems. Each sensor reading, each command sent to an actuator, is encapsulated and transmitted according to a predefined protocol, making the IoT vision a tangible reality and driving further innovation in connected tech.

Key Components and Types of Protocols

To understand how protocols function, it’s helpful to view them through the lens of layered architectures, which break down complex communication tasks into manageable sub-tasks.

Layers of Protocol Stacks

The concept of a protocol stack, like the well-known TCP/IP model or the more theoretical OSI (Open Systems Interconnection) model, is central to modern networking. These models define different layers, with each layer handling a specific aspect of the communication process. A layer typically communicates only with the layer immediately above and below it, abstracting away complexities and allowing for modular development.

In the TCP/IP model, for example, there are four layers:

- Application Layer: Where user applications interact with the network (e.g., HTTP for web browsing, FTP for file transfer, DNS for domain name resolution).

- Transport Layer: Manages end-to-end communication and data reliability (e.g., TCP for reliable streams, UDP for faster, less reliable datagrams).

- Internet Layer: Handles logical addressing and routing across networks (e.g., IP for assigning addresses and directing packets).

- Network Access Layer (or Link Layer): Deals with the physical transmission of data frames over specific network hardware (e.g., Ethernet, Wi-Fi).

This layered approach ensures that innovations at one layer (e.g., a new physical wireless technology) don’t require fundamental changes to applications or higher-level protocols, thus accelerating technological progress.
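
Layering is easiest to see as encapsulation: each layer wraps the unit handed down from the layer above in its own header, and the receiver peels the headers off in reverse order. The sketch below is deliberately simplified. Real headers are binary structures with many fields, whereas here each "header" is just a readable tag of the form `[name:length]`, and the function names are hypothetical.

```python
def wrap(layer: str, unit: bytes) -> bytes:
    """Prefix a unit with a toy header naming the layer and the unit's length."""
    return f"[{layer}:{len(unit)}]".encode("ascii") + unit


def unwrap(unit: bytes):
    """Strip one toy header; return (layer name, inner unit)."""
    if not unit.startswith(b"["):
        raise ValueError("missing header")
    end = unit.index(b"]")
    layer, _, length = unit[1:end].decode("ascii").partition(":")
    inner = unit[end + 1:]
    if len(inner) != int(length):
        raise ValueError("length mismatch")
    return layer, inner


def send(app_data: bytes, link: str) -> bytes:
    """Pass application data down the stack: transport, internet, then link."""
    segment = wrap("tcp", app_data)
    packet = wrap("ip", segment)
    return wrap(link, packet)


def receive(frame: bytes) -> bytes:
    """Pass a frame up the stack, removing one header per layer."""
    _link, packet = unwrap(frame)
    _internet, segment = unwrap(packet)
    _transport, app_data = unwrap(segment)
    return app_data


if __name__ == "__main__":
    wired = send(b"GET /", link="ethernet")
    wireless = send(b"GET /", link="wifi")
    print(wired)  # b'[ethernet:19][ip:12][tcp:5]GET /'
    # Only the outermost header differs; everything above the link layer is identical.
    assert unwrap(wired)[1] == unwrap(wireless)[1]
    assert receive(wired) == receive(wireless) == b"GET /"
```

Swapping `ethernet` for `wifi` changes nothing above the link layer, which is the modularity the layered model is meant to provide.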

Common Protocol Categories

Protocols can be broadly categorized based on their function and the layer they operate at:

- Network Protocols: These are fundamental to internet connectivity.

  - TCP/IP (Transmission Control Protocol/Internet Protocol): The suite of protocols that underpins the internet, providing reliable, connection-oriented data transmission and addressing.

  - UDP (User Datagram Protocol): A simpler, faster, connectionless protocol often used where speed is more critical than guaranteed delivery, like streaming video or DNS lookups.

  - HTTP/HTTPS (Hypertext Transfer Protocol/Secure): The foundation of data communication for the World Wide Web.

  - FTP (File Transfer Protocol): For transferring files between computers.

  - DNS (Domain Name System): Translates human-readable domain names into IP addresses.

- Data Link Protocols: Govern communication within a local network segment.

  - Ethernet: The most common wired LAN technology.

  - Wi-Fi (IEEE 802.11): Wireless local area network protocols.

- Application-Specific Protocols: Designed for particular applications or domains, often built on top of network protocols.

  - MAVLink: As discussed, essential for drone communication.

  - ROS Protocols: Crucial for inter-node communication within the Robot Operating System.

  - RTCM (Radio Technical Commission for Maritime Services): Used for transmitting differential GPS correction data, vital for high-precision autonomous navigation and mapping.

  - MQTT (Message Queuing Telemetry Transport): Lightweight messaging protocol for IoT devices.

  - DICOM (Digital Imaging and Communications in Medicine): For handling and transmitting medical images, showing how even domain-specific data types require their own protocols for interoperability.

Challenges and Future Directions in Protocol Development

As technology advances, so too must the protocols that support it. The demands of emerging innovations present ongoing challenges and drive future development in the protocol landscape.

Security and Robustness

With the increasing reliance on digital systems, particularly in critical infrastructure and autonomous systems, the security and robustness of protocols are paramount. Protocols must be designed to withstand cyber threats, data tampering, and denial-of-service attacks. The evolution from HTTP to HTTPS, which wraps HTTP in TLS (the successor to SSL) for encryption and server authentication, is a prime example of protocols adapting to security demands. Future protocols for AI-driven autonomous systems will require even more stringent security measures, including advanced encryption, authentication, and anomaly detection, to prevent malicious interference that could have catastrophic real-world consequences.
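
Authentication, one of the measures mentioned above, can be illustrated with a message authentication code. This is a minimal sketch using Python's standard `hmac` and `hashlib` modules; the shared key, the command string, and the `sign`/`verify` helper names are assumptions for the example. It covers tamper detection only: it does not encrypt the message, and it does not prevent an attacker from replaying an old, validly signed command.

```python
import hashlib
import hmac

TAG_LEN = hashlib.sha256().digest_size  # 32 bytes for HMAC-SHA256


def sign(key: bytes, message: bytes) -> bytes:
    """Append an HMAC-SHA256 tag so the receiver can detect tampering."""
    tag = hmac.new(key, message, hashlib.sha256).digest()
    return message + tag


def verify(key: bytes, signed: bytes) -> bytes:
    """Return the original message if the tag is valid, else raise ValueError."""
    if len(signed) < TAG_LEN:
        raise ValueError("message too short to contain a tag")
    message, tag = signed[:-TAG_LEN], signed[-TAG_LEN:]
    expected = hmac.new(key, message, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):  # constant-time comparison
        raise ValueError("authentication failed")
    return message


if __name__ == "__main__":
    key = b"example-shared-secret"  # illustrative only; use a random key in practice
    command = sign(key, b"SET_MODE:RETURN_TO_HOME")
    assert verify(key, command) == b"SET_MODE:RETURN_TO_HOME"

    forged = b"SET_MODE:LAND_NOW!!!!!!" + command[-TAG_LEN:]
    try:
        verify(key, forged)
    except ValueError as exc:
        print("rejected:", exc)
```

A protocol for safety-critical commands would typically combine a check like this with encryption and with a counter or timestamp inside the signed message, so that captured commands cannot be reused.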

Low-Latency and High-Throughput Demands

The push for real-time applications – such as immersive FPV (First Person View) drone flying, instantaneous decision-making in autonomous vehicles, and rapid processing of massive remote sensing data streams – places immense demands on protocols for low latency and high throughput. Reliable protocols such as TCP can introduce delays, for example when a lost packet must be retransmitted before the data behind it can be delivered. New protocols and optimizations are being developed to minimize latency, often by trading some reliability for speed or by leveraging technologies like 5G, which is designed to offer lower latency than earlier cellular generations. The development of protocols specifically tailored for edge computing environments, where processing happens closer to the data source, is also critical for meeting these demands.
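
The trade between reliability and speed can be put into rough numbers. The sketch below uses a deliberately simple model that is an assumption of this example, not a description of any specific protocol: every transmission is lost independently with the same probability, and the sender waits one fixed timeout before each retransmission. Under that model the expected number of failed attempts is p / (1 - p), so the expected delivery time is the one-way latency plus that many timeouts.

```python
def reliable_expected_latency_ms(one_way_ms: float, timeout_ms: float,
                                 loss_prob: float) -> float:
    """Expected delivery time when every lost packet is resent after a timeout.

    Simplified model: independent losses with probability loss_prob and a
    fixed retransmission timeout.
    """
    if not 0 <= loss_prob < 1:
        raise ValueError("loss_prob must be in [0, 1)")
    expected_retries = loss_prob / (1 - loss_prob)
    return one_way_ms + expected_retries * timeout_ms


def unreliable_delivery(one_way_ms: float, loss_prob: float):
    """Without retransmission: fixed latency, but only some packets arrive."""
    if not 0 <= loss_prob <= 1:
        raise ValueError("loss_prob must be in [0, 1]")
    return one_way_ms, 1 - loss_prob


if __name__ == "__main__":
    for p in (0.0, 0.01, 0.1):
        reliable = reliable_expected_latency_ms(20, 200, p)
        latency, delivered = unreliable_delivery(20, p)
        print(f"loss={p:.0%}: reliable ~{reliable:.1f} ms on average, "
              f"unreliable {latency} ms with {delivered:.0%} delivered")
```

On a link with 20 ms one-way latency and a 200 ms timeout, 10% loss roughly doubles the average delivery time under this model, while the unreliable sender stays at 20 ms and simply loses one packet in ten. Whether that is acceptable depends on whether stale data is worth waiting for.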

Interoperability and Open Standards

Despite the existence of many standardized protocols, fragmentation remains a challenge, particularly in niche or emerging tech areas. The drive towards true interoperability across diverse platforms, manufacturers, and industries continues to push for open standards and robust, universally adoptable protocols. Initiatives to create common communication frameworks for smart cities or drone traffic management systems exemplify this trend. The goal is to reduce vendor lock-in, foster greater competition, and accelerate innovation by ensuring that disparate systems can truly communicate and collaborate without proprietary barriers.

Conclusion

Computer protocols are the unsung heroes of the digital revolution, quietly enabling the advances we see every day in technology and innovation. From the basic exchange of data over the internet to the coordination of autonomous vehicles and the collection of remote sensing data, protocols provide the rules that turn raw data into meaningful information and isolated machines into connected, cooperating systems. As AI, pervasive connectivity, and autonomous systems become more widespread, the evolution of these communication rulebooks will remain a critical driver of how technology interacts, learns, and ultimately serves humanity. Understanding what a computer protocol is means more than knowing a technical detail; it means grasping the language that powers our interconnected world.