In an era of instantaneous digital interaction, the seemingly trivial act of sending an emoji on a platform like Snapchat reflects a real design achievement: distilling complex information, emotion, or status into a concise, widely understood visual cue. This kind of rapid, context-dependent communication, in which a single symbol carries a wealth of meaning, has close parallels in drone technology. Just as a heart emoji can signify affection or a flame emoji marks a streak, advanced drone systems increasingly rely on symbolic languages to convey critical operational data, interpret environmental conditions, and support human-machine and machine-machine interaction. This article explores how the principles behind social media’s emoji language are mirrored in the high-stakes domain of aerial robotics and intelligent systems.

The Language of Light and Data: Interpreting Drone Status Indicators

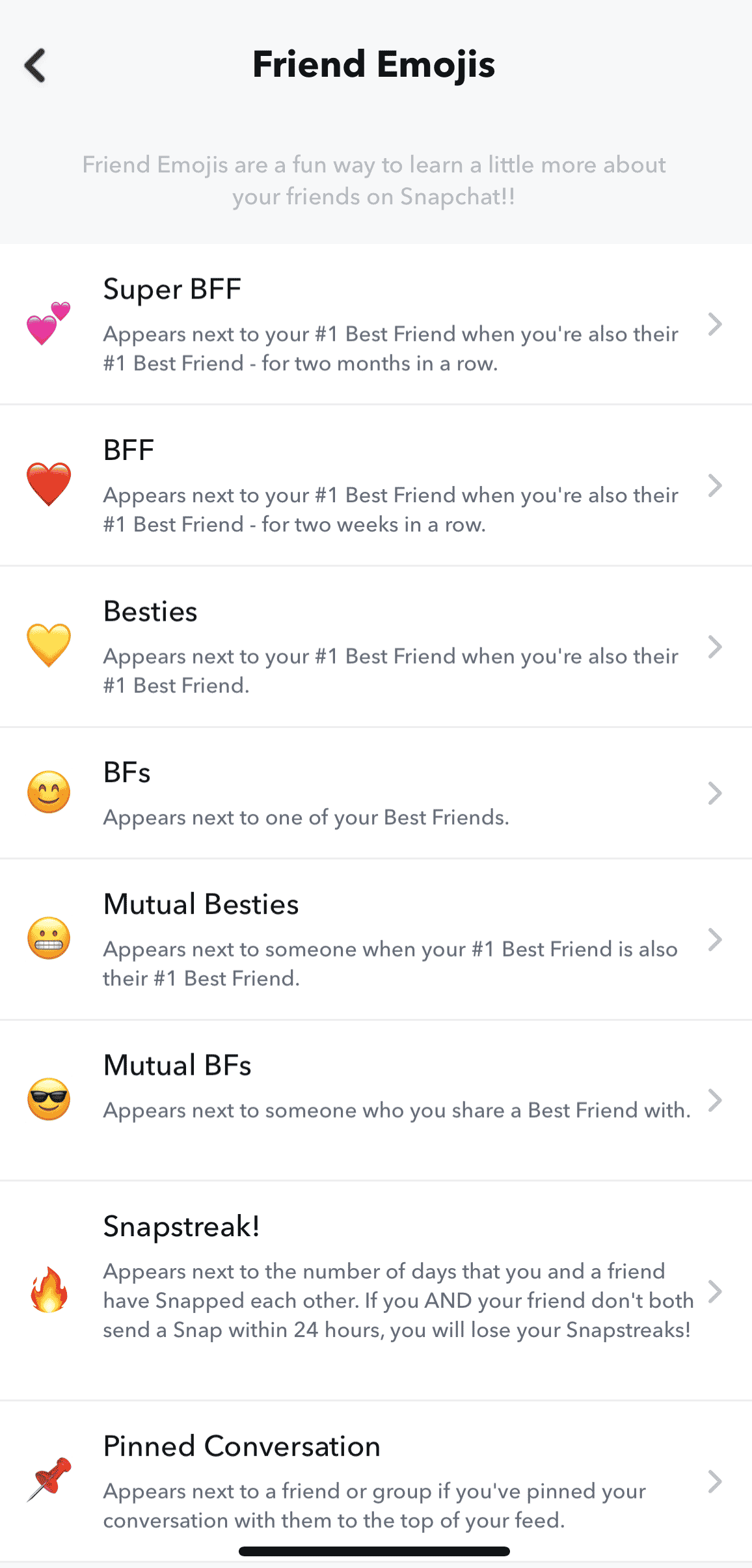

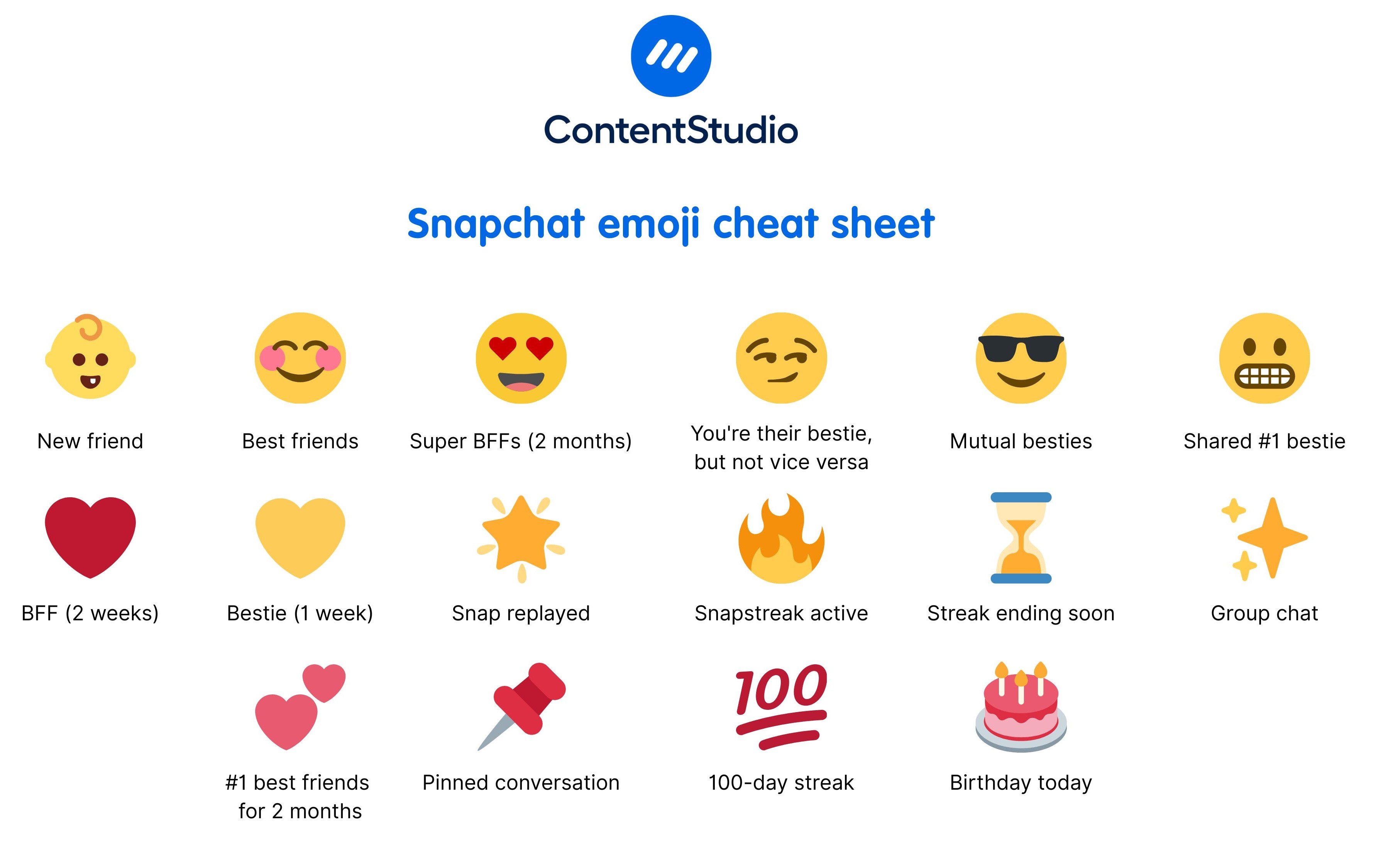

The operational landscape for drones is a dynamic tapestry of environmental variables, mission parameters, and system health metrics. For operators, understanding the drone’s “state of mind” is paramount to safe and successful missions. In much the same way emojis offer a shorthand for human emotions, advanced drone systems leverage a sophisticated array of visual and data-driven indicators to communicate their internal status, external perceptions, and ongoing tasks. These aren’t literal emojis, but rather the operational equivalents: concise, context-rich symbols designed for rapid interpretation.

Beyond Telemetry: Visualizing Operational Health

Traditional telemetry provides raw data – voltage, GPS coordinates, signal strength. However, the true innovation lies in abstracting this data into immediately comprehensible visual cues. Consider the simple LED light sequences on a drone: blinking green might signify GPS lock, while flashing red could indicate a critical error or low battery. These are the drone world’s foundational “emojis,” providing instant, at-a-glance status updates to a pilot.
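To make the idea concrete, here is a minimal Python sketch of how raw telemetry might be collapsed into a single LED pattern. The thresholds, the `Telemetry` fields, and the "blinking yellow" pattern for a missing GPS lock are assumptions made for illustration; real flight controllers each define their own light codes.

```python
from dataclasses import dataclass
from enum import Enum


class LedPattern(Enum):
    BLINK_GREEN = "blinking green"    # GPS lock, ready to fly
    BLINK_YELLOW = "blinking yellow"  # assumed pattern: no GPS lock yet
    FLASH_RED = "flashing red"        # critical error or low battery


@dataclass
class Telemetry:
    battery_pct: float        # remaining battery, 0-100
    gps_satellites: int       # satellites currently tracked
    has_critical_error: bool  # any fault reported by the flight controller


# Hypothetical thresholds, chosen only for this example.
LOW_BATTERY_PCT = 20.0
MIN_SATELLITES_FOR_LOCK = 6


def led_for(t: Telemetry) -> LedPattern:
    """Pick one LED pattern, checking the most urgent condition first."""
    if t.has_critical_error or t.battery_pct < LOW_BATTERY_PCT:
        return LedPattern.FLASH_RED
    if t.gps_satellites < MIN_SATELLITES_FOR_LOCK:
        return LedPattern.BLINK_YELLOW
    return LedPattern.BLINK_GREEN
```

The ordering of the checks is the design choice that matters: several numbers compete for one light, so the most urgent condition has to win.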

Beyond physical lights, the graphical user interfaces (GUIs) of modern drone control applications have evolved significantly. Rather than relying on numerical readouts alone, they present pilots with intuitive icons, color-coded gauges, and dynamic symbols that instantly convey battery level, flight mode, signal integrity, and sensor status. A vibrant green icon for “GPS engaged” registers far faster than a string of coordinates and satellite counts. When a drone enters an “autonomous flight” mode, a distinct symbol replaces the manual control icon, telling the operator at a glance which mode the system is in. This approach reduces cognitive load and shortens the time needed for critical decisions, turning complex data streams into an easily digestible visual language, much as emojis streamline communication in a fast-paced chat.
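A rough sketch of that abstraction step, in Python, might look like the following. The field names, the 40% and 20% cut-offs, and the `AUTO`/`MANUAL` labels are hypothetical and not taken from any particular control app.

```python
from dataclasses import dataclass


@dataclass
class HudInput:
    battery_pct: float  # remaining battery, 0-100
    signal_pct: float   # link quality, 0-100
    autonomous: bool    # True when the drone is flying itself


def gauge_color(value_pct: float,
                warn_below: float = 40.0,
                critical_below: float = 20.0) -> str:
    """Map a percentage to a traffic-light color (thresholds are assumed)."""
    if value_pct < critical_below:
        return "red"
    if value_pct < warn_below:
        return "yellow"
    return "green"


def render_hud(h: HudInput) -> dict[str, str]:
    """Reduce numeric telemetry to the few symbols a pilot reads at a glance."""
    return {
        "battery": gauge_color(h.battery_pct),
        "signal": gauge_color(h.signal_pct),
        "mode_icon": "AUTO" if h.autonomous else "MANUAL",
    }
```

For example, `render_hud(HudInput(35.0, 90.0, True))` yields a yellow battery gauge, a green signal gauge, and the `AUTO` mode icon.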

Real-time Context: Deciphering Environmental Cues

Drones operate in environments teeming with variables – wind speed, obstacles, no-fly zones, dynamic weather patterns. Interpreting these conditions in real-time is crucial for autonomous decision-making and operator intervention. Innovative drone technology employs advanced sensors (LiDAR, radar, computer vision) to perceive the environment, translating raw sensor data into actionable visual indicators.

For instance, obstacle avoidance systems don’t just detect a tree; they might display a vivid red overlay on the pilot’s FPV feed, indicating an imminent collision risk, or project a dynamically calculated safe flight path. Geofencing violations trigger prominent on-screen alerts, often with specific visual symbols indicating the type of restricted airspace entered. Weather applications integrated into drone control software use symbolic representations – a cloud icon for fog, a wind-sock icon for high winds – to convey dynamic conditions without requiring the pilot to sift through meteorological data. This real-time contextual awareness, presented through intuitive visual “emojis,” empowers both autonomous systems and human operators to make informed decisions swiftly, enhancing safety and mission efficiency.
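One simple way to decide when an obstacle overlay should turn red is to estimate the time to collision from distance and closing speed. The Python sketch below assumes that approach and uses made-up thresholds of 2 and 5 seconds; production obstacle avoidance is considerably more involved.

```python
import math
from typing import Optional


def time_to_collision(distance_m: float, closing_speed_mps: float) -> float:
    """Seconds until impact; infinite if the drone is not closing on the obstacle."""
    if closing_speed_mps <= 0:
        return math.inf
    return distance_m / closing_speed_mps


def overlay_color(ttc_s: float,
                  red_below_s: float = 2.0,
                  amber_below_s: float = 5.0) -> Optional[str]:
    """Return the overlay tint for the FPV feed, or None if no warning is needed."""
    if ttc_s < red_below_s:
        return "red"
    if ttc_s < amber_below_s:
        return "amber"
    return None
```

An obstacle 30 m ahead approached at 10 m/s gives a 3-second time to collision and an amber overlay; at 10 m it turns red.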

Rapid Communication Protocols for Autonomous Systems

The future of drone technology is increasingly autonomous and collaborative. Fleets of drones, or individual drones interacting with ground stations and other IoT devices, require sophisticated communication protocols that are not only robust but also capable of conveying complex directives and statuses with the efficiency of a single, well-understood emoji. This is where innovation in inter-drone communication and human-drone interfaces is most critical.

Inter-Drone Communication: A Fleet’s Silent Dialogue

In swarm intelligence or multi-drone operations, individual units must communicate their intentions, status, and findings to their peers. This “silent dialogue” often involves a shared understanding of symbolic data packets – a kind of machine-to-machine “emoji” language. For example, in a search and rescue mission, a drone identifying a target might transmit a specific data signature that instantly signals “target found” and its coordinates to the rest of the swarm, enabling immediate convergence or resource allocation.
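As an illustration of how compact such a “target found” signal can be, the following Python sketch packs a message type, a sender ID, and a coordinate pair into 10 bytes using the standard `struct` module. The message types, field layout, and the choice to store latitude and longitude as integers scaled by 1e7 are assumptions for this example, not a description of any real swarm protocol.

```python
import struct
from enum import IntEnum
from typing import Tuple


class MsgType(IntEnum):
    # Hypothetical message codes shared by every drone in the swarm.
    HEARTBEAT = 0
    TARGET_FOUND = 1
    LOW_BATTERY = 2


# Little-endian, no padding: type (1 byte), sender id (1 byte),
# latitude and longitude as signed 32-bit integers (degrees * 1e7).
_FORMAT = "<BBii"


def encode(msg_type: MsgType, sender_id: int,
           lat_deg: float, lon_deg: float) -> bytes:
    """Pack one status message into a fixed-size binary packet."""
    return struct.pack(_FORMAT, msg_type, sender_id,
                       round(lat_deg * 1e7), round(lon_deg * 1e7))


def decode(packet: bytes) -> Tuple[MsgType, int, float, float]:
    """Unpack a packet back into (type, sender id, latitude, longitude)."""
    msg_type, sender_id, lat_e7, lon_e7 = struct.unpack(_FORMAT, packet)
    return MsgType(msg_type), sender_id, lat_e7 / 1e7, lon_e7 / 1e7
```

The single type byte plays the role of the emoji: every receiver that shares the code table knows what `TARGET_FOUND` means without any further negotiation.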

Collision avoidance in dense airspaces relies on drones transmitting standardized identifiers and trajectory data that other drones can quickly interpret to adjust their flight paths. These communications are highly optimized for bandwidth and latency, ensuring that critical information is conveyed with the urgency and conciseness required for real-time aerial choreography. The development of universal communication standards and symbolic representations for key operational states is paramount for ensuring interoperability and the safe scaling of autonomous drone fleets, mirroring the need for a universally understood set of emojis in human communication.
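Once two drones know each other’s position and velocity, one common way to judge whether they need to adjust course is a closest-point-of-approach calculation. The Python sketch below assumes straight-line, constant-velocity motion and a hypothetical 5 m minimum separation; it is a geometric illustration, not a certified avoidance algorithm.

```python
import math
from typing import Sequence, Tuple

Vec = Sequence[float]


def closest_approach(p1: Vec, v1: Vec, p2: Vec, v2: Vec) -> Tuple[float, float]:
    """Return (time in s, distance in m) of closest approach for two drones.

    Positions are in metres, velocities in metres per second, and both
    drones are assumed to keep their current velocity.
    """
    dp = [b - a for a, b in zip(p1, p2)]  # relative position
    dv = [b - a for a, b in zip(v1, v2)]  # relative velocity
    dv_sq = sum(c * c for c in dv)
    if dv_sq == 0:
        t = 0.0  # same velocity: the gap never changes
    else:
        # Minimise |dp + dv * t|, but never look into the past.
        t = max(0.0, -sum(p * v for p, v in zip(dp, dv)) / dv_sq)
    dist = math.sqrt(sum((p + v * t) ** 2 for p, v in zip(dp, dv)))
    return t, dist


def needs_avoidance(distance_m: float, min_separation_m: float = 5.0) -> bool:
    """Flag a conflict when the predicted miss distance is too small."""
    return distance_m < min_separation_m
```

Two drones flying head-on along the same line from 10 m apart at 1 m/s each reach zero separation after 5 seconds, so `needs_avoidance` returns `True`.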

Human-Drone Interface: Bridging the Information Gap

The interface between human operators and advanced drones is evolving beyond joysticks and simple monitors. Augmented reality (AR) and sophisticated graphical displays are creating more immersive and intuitive interaction experiences, akin to how social media platforms strive for seamless user engagement. AR overlays, for instance, can project crucial operational “emojis” directly onto the live drone feed, showing predicted flight paths, target lock indicators, or hazard warnings within the actual visual context.
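Placing a symbol on the live feed comes down to projecting a 3D point into pixel coordinates. The Python sketch below uses the standard pinhole camera model and assumes the point is already expressed in the camera’s frame (x right, y down, z forward); the `Intrinsics` values are placeholders, and lens distortion is ignored.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class Intrinsics:
    fx: float    # focal length in pixels, horizontal
    fy: float    # focal length in pixels, vertical
    cx: float    # principal point, x
    cy: float    # principal point, y
    width: int   # image width in pixels
    height: int  # image height in pixels


def project(point_cam: Tuple[float, float, float],
            k: Intrinsics) -> Optional[Tuple[int, int]]:
    """Return the pixel where an overlay icon should be drawn, or None.

    None means the point is behind the camera or outside the image.
    """
    x, y, z = point_cam
    if z <= 0:
        return None
    u = k.fx * x / z + k.cx
    v = k.fy * y / z + k.cy
    if not (0 <= u < k.width and 0 <= v < k.height):
        return None
    return round(u), round(v)
```

A hazard 10 m straight ahead lands on the principal point; one metre to the right shifts it by `fx / 10` pixels.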

Voice commands, gesture controls, and even biometric feedback are being explored as ways for humans to issue directives and receive acknowledgments, translating natural human communication into machine-understandable commands and vice-versa. The goal is to create a seamless dialogue where the drone’s responses are as clear and unambiguous as an emoji’s meaning, minimizing misinterpretation and maximizing operational efficiency. This convergence of intuitive human interaction with complex machine operations is a frontier of tech innovation, striving to make human-drone collaboration as effortless as sending a quick message on Snapchat.

AI and Predictive Analytics: Understanding the Unspoken

The power of an emoji lies not just in its explicit meaning, but in the layers of context and inference a human mind applies to it. Similarly, in advanced drone systems, artificial intelligence (AI) and machine learning are crucial for moving beyond simple status indicators to understanding the “unspoken” implications of data: anticipating issues, optimizing performance, and providing deeper contextual intelligence.

Machine Learning for Anomaly Detection

Drones generate vast amounts of operational data – sensor readings, motor temperatures, control inputs. Machine learning algorithms are trained to analyze these continuous streams, identifying subtle deviations from normal operational patterns that might indicate an impending malfunction or degraded performance. These deviations are the “pre-emojis” – the subtle cues that precede a clear problem.

By learning the “normal” operational signature, AI can flag anomalies that a human operator might miss, predicting component failures before they become critical. For example, a slight, consistent increase in motor vibration or a minute drop in battery efficiency over several flights, when combined with specific environmental factors, could trigger a predictive “emoji” alert indicating a required maintenance check. This proactive anomaly detection transforms raw data into intelligent, actionable warnings, significantly enhancing drone reliability and safety.
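The simplest version of “learning normal and flagging deviations” is a z-score check against recent history. The Python sketch below uses that statistical baseline as a stand-in for a trained model; the threshold of 3 standard deviations and the vibration readings are illustrative assumptions.

```python
from statistics import mean, stdev
from typing import Sequence


def is_anomalous(history: Sequence[float], latest: float,
                 z_threshold: float = 3.0) -> bool:
    """Flag a reading that sits far outside the spread of past readings."""
    if len(history) < 2:
        return False  # not enough data to define "normal"
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu  # any change from a perfectly flat baseline
    return abs(latest - mu) / sigma > z_threshold


# Hypothetical motor vibration readings from earlier flights.
baseline = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95]
```

With this baseline, a reading of 1.02 passes quietly while a reading of 2.0 is flagged. A slow drift across many flights would need a trend-based check instead, since each individual reading can stay inside the normal band.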

Contextual Intelligence: Dynamic Interpretation of Visual Cues

The meaning of an emoji often shifts with context – a winking face can be flirtatious, sarcastic, or playful depending on the surrounding text and relationship. Similarly, AI in drone technology is enabling systems to interpret visual and operational cues dynamically, adapting their understanding based on the mission context, environmental conditions, and learned historical data.

Consider a drone operating in a dense urban environment versus an open agricultural field. A detected “obstacle” (represented by a visual “emoji” on the display) would be interpreted differently in each context: a common occurrence in the city requiring evasive action, versus an unusual finding in the field warranting further investigation. AI algorithms can leverage historical data, real-time sensor inputs, and mission objectives to provide a more nuanced interpretation of these “emojis,” offering more intelligent recommendations or autonomous actions. This contextual intelligence is key to developing truly autonomous and adaptive drone systems that can operate effectively in complex, unpredictable real-world scenarios.
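At its most basic, context-dependent interpretation is a lookup keyed on both the cue and the mission context. The Python sketch below hard-codes the urban and agricultural cases described above; the enum names and the `HOLD` fallback are assumptions, and a real system would derive this policy from data rather than a fixed table.

```python
from enum import Enum


class MissionContext(Enum):
    URBAN = "urban"
    AGRICULTURAL = "agricultural"


class Response(Enum):
    EVADE = "evade"
    INVESTIGATE = "investigate"
    HOLD = "hold"  # assumed safe default for unknown combinations


# The same cue maps to different responses depending on where the drone is.
_POLICY = {
    ("obstacle", MissionContext.URBAN): Response.EVADE,
    ("obstacle", MissionContext.AGRICULTURAL): Response.INVESTIGATE,
}


def interpret(cue: str, context: MissionContext) -> Response:
    """Resolve a detected cue into a response for the current mission context."""
    return _POLICY.get((cue, context), Response.HOLD)
```

Keying the table on the pair, rather than on the cue alone, is what lets one symbol carry two meanings.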

The Future of Drone Communication: An Emojified World?

The trajectory of drone technology points towards increasingly sophisticated, autonomous, and interconnected systems. As these systems grow in complexity, the need for clear, concise, and contextually rich communication will only intensify. The principles derived from the widespread adoption of emojis – distilling meaning into easily digestible visual packets – will continue to inspire innovations in how drones communicate with humans, with each other, and with their environment.

Augmented Reality for Enhanced Situational Awareness

The integration of augmented reality (AR) into drone control systems represents a significant leap forward in translating complex data into instantly understandable “emojis.” Imagine a pilot wearing AR goggles, seeing not only the live drone feed but also real-time overlays of flight paths, no-fly zones, battery levels, and sensor readings directly within their field of view. These AR “emojis” provide immediate situational awareness, highlighting critical information without diverting attention from the primary visual task. This could extend to collaborative missions, where multiple pilots see the “emojis” of other drones in their swarm, including their targets, statuses, and warnings, fostering unprecedented coordination.

Standardizing Visual Communication Across Platforms

Just as the Unicode Standard gave emojis common code points across operating systems, even though each platform still draws them in its own style, the drone industry faces a growing need for universal standards in visual and symbolic communication. As more manufacturers, software developers, and regulatory bodies contribute to the drone ecosystem, a common “emoji dictionary” for operational statuses, alerts, and commands will become essential. Such standardization would improve interoperability, enhance safety, and streamline training across diverse drone platforms. It would pave the way for a future where a flashing yellow icon means “caution” universally, regardless of the drone’s origin or control software.
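In software terms, such a dictionary could start as a translation layer from each vendor’s private codes to one shared vocabulary. The Python sketch below is purely hypothetical: `vendor_a`, `vendor_b`, and their status codes are invented for the example, and no such industry standard is assumed to exist today.

```python
from enum import Enum


class StandardSymbol(Enum):
    OK = "ok"
    CAUTION = "caution"
    CRITICAL = "critical"
    UNKNOWN = "unknown"  # fallback for codes the dictionary does not cover


# Invented vendor code tables, one per manufacturer.
VENDOR_TABLES = {
    "vendor_a": {
        "LED_G_SOLID": StandardSymbol.OK,
        "LED_Y_FLASH": StandardSymbol.CAUTION,
        "LED_R_FLASH": StandardSymbol.CRITICAL,
    },
    "vendor_b": {
        "nominal": StandardSymbol.OK,
        "warn": StandardSymbol.CAUTION,
        "fail": StandardSymbol.CRITICAL,
    },
}


def to_standard(vendor: str, code: str) -> StandardSymbol:
    """Translate a vendor-specific status code into the shared vocabulary."""
    return VENDOR_TABLES.get(vendor, {}).get(code, StandardSymbol.UNKNOWN)
```

Here both `to_standard("vendor_a", "LED_Y_FLASH")` and `to_standard("vendor_b", "warn")` resolve to the same `CAUTION` symbol, which is the interoperability the paragraph describes.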

In conclusion, while the title “What Emojis Mean on Snapchat” might initially seem far removed from the realm of drones, it provocatively highlights a fundamental principle of effective communication: the power of concise, context-rich visual symbols to convey complex meaning instantly. From simple LED indicators to sophisticated AI-driven predictive analytics and augmented reality interfaces, the innovative spirit of drone technology is constantly striving to develop its own robust and intuitive “emoji language.” As drones become more autonomous and integrated into our daily lives, mastering this nuanced language of light, data, and symbols will be paramount to unlocking their full potential, ensuring safety, and fostering seamless interaction within the dynamic, evolving landscape of aerial robotics.