In the rapidly evolving landscape of technology and innovation, from autonomous drones navigating complex environments to AI systems making real-time decisions, the underlying structure and organization of data are paramount. Without a meticulously defined framework, the vast streams of information generated by sophisticated sensors, flight systems, and intelligent algorithms would be an unusable deluge. This is where Data Definition Language (DDL) plays an indispensable, often unseen, yet critical role. Far from being a mere technicality, DDL is the foundational blueprint upon which all modern technological applications, particularly those reliant on robust data management like remote sensing, autonomous flight, and AI, are built. It is the architect’s tool for shaping the digital world, ensuring data is not just stored, but structured in a way that is logical, efficient, and capable of supporting cutting-edge innovation.

The Foundational Role of DDL in Tech Ecosystems

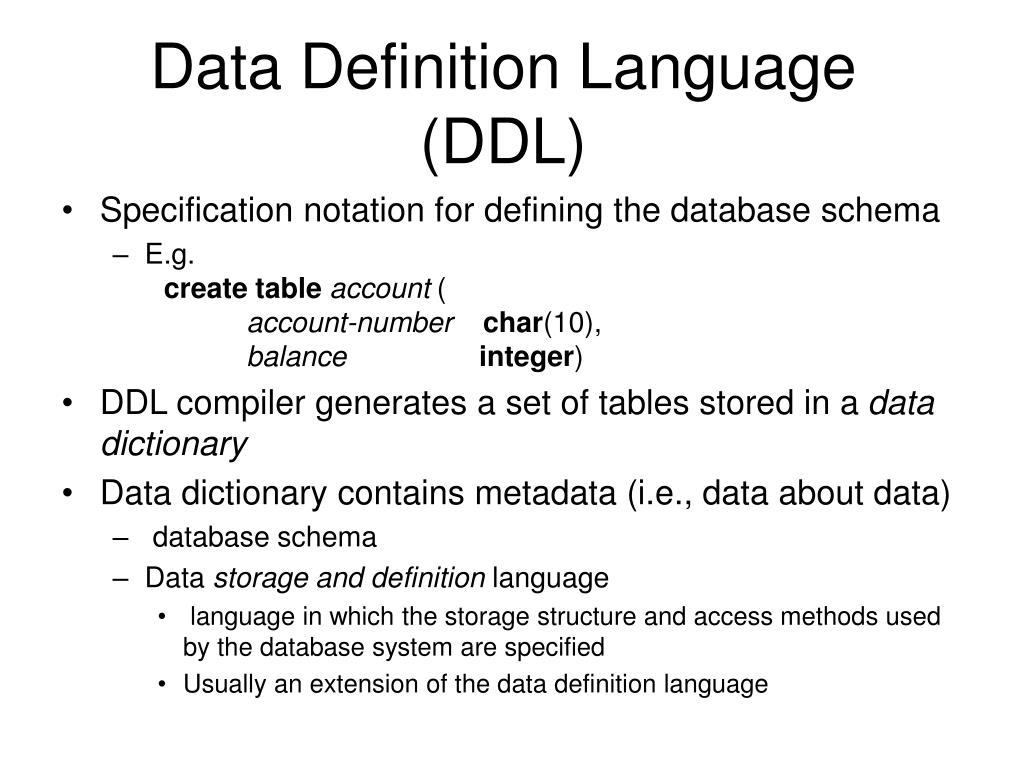

At its core, DDL is a standard for commands used to define and modify the structure of a database, known as its schema. It dictates the types of data that can be stored, how they relate to each other, and the constraints that govern their integrity. In an era where technological advancements are intrinsically linked to the processing and interpretation of data, DDL provides the bedrock.

Databases as the Backbone of Modern Tech

Consider any advanced technological system—be it a drone performing an intricate mapping mission, an AI system analyzing environmental data, or an autonomous vehicle charting its course. Each generates and consumes immense volumes of data: GPS coordinates, sensor readings (lidar, thermal, optical), telemetry, flight paths, obstacle locations, and AI model parameters. This data isn’t simply thrown into a digital bin; it’s meticulously organized within databases. These databases are the central nervous system of these systems, storing everything from historical flight logs and environmental scans to the operational parameters that define a drone’s autonomous behavior or an AI’s learning process.

DDL is the language used to design these databases. Before a single byte of sensor data from a remote sensing drone can be stored, DDL commands are executed to create the tables, specify their columns, define relationships between different data sets, and set rules for data validity. It’s the equivalent of constructing the shelves, drawers, and labels in a vast library before any books are placed. Without DDL, the very concept of a structured database capable of supporting complex queries and high-performance operations in a tech ecosystem would be impossible.

The Imperative of Structured Data for AI and Automation

The efficacy of Artificial Intelligence (AI) and autonomous systems is directly proportional to the quality and structure of the data they process. AI follow modes in drones, for instance, rely on a constant stream of visual and positional data, which must be accurately stored and retrieved for real-time analysis. Autonomous flight systems require structured databases to log flight plans, sensor inputs for obstacle avoidance, and historical performance data for continuous improvement.

DDL ensures that this data is not only structured but also consistent and reliable. By defining data types (e.g., numeric for coordinates, text for descriptions, boolean for status flags), setting primary and foreign keys to establish relationships, and implementing constraints (e.g., NOT NULL, UNIQUE), DDL guarantees the integrity of the information. This integrity is non-negotiable for AI and automation. An AI model trained on inconsistent or poorly structured data will perform suboptimally, leading to errors in decision-making—a critical risk in applications like drone navigation or remote sensing analysis. DDL, therefore, isn’t just about storage; it’s about establishing the framework for intelligent processing and reliable automation.

Core Components and Operations of DDL

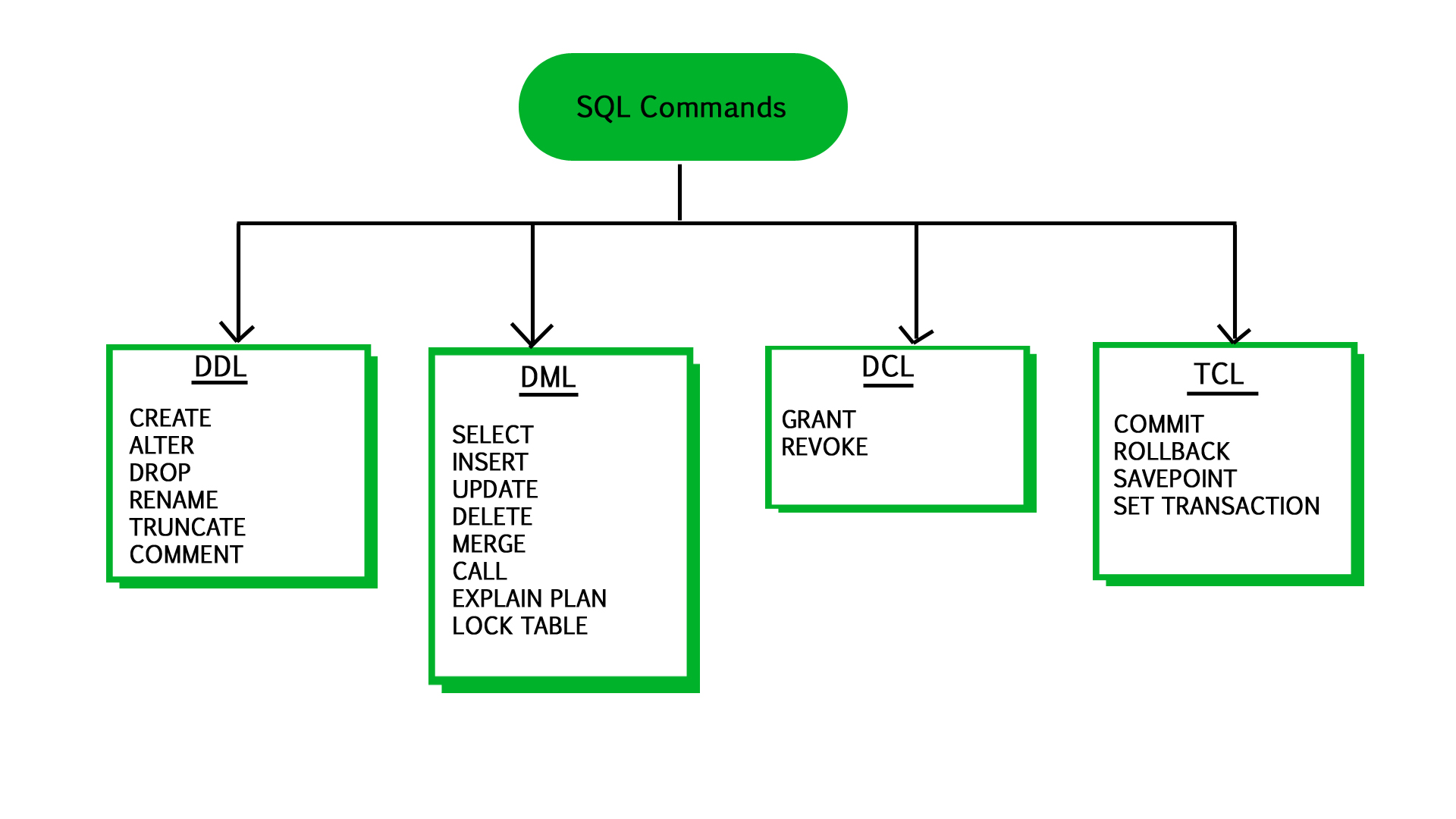

DDL is characterized by a set of powerful commands that empower database administrators and developers to define, modify, and manage the fundamental structure of a database. These commands form the vocabulary for designing the logical schema that underpins all data interactions within advanced tech applications.

Creating Data Structures (CREATE Statement)

The CREATE statement is the cornerstone of DDL, responsible for bringing new database objects into existence. This includes databases themselves, tables, indexes, views, and more. For tech and innovation, its application is broad and fundamental.

Imagine developing a new drone mapping platform. Before any drone takes flight, a database needs to be established to store the vast quantities of data it will collect. Using CREATE DATABASE initiates the storage area. Then, CREATE TABLE commands are used to define the specific structures within that database:

- A

FlightLogtable might be created with columns forFlightID(primary key),DroneID,MissionDate,StartTime,EndTime,RouteCoordinates(perhaps a complex spatial data type),BatteryLevelAtStart,WeatherConditions. - A

SensorDatatable could storeSensorDataID,FlightID,Timestamp,SensorType,ReadingValue,GPSLocation. - An

ImageMetadatatable might captureImageID,FlightID,Timestamp,CameraSettings,GeoTag,Resolution.

These CREATE statements are meticulously designed to reflect the real-world entities and attributes relevant to the drone’s operation and data collection. They define the type of data each column will hold (e.g., INT for IDs, DATETIME for timestamps, VARCHAR for text descriptions, FLOAT or DECIMAL for readings), ensuring data consistency from the very outset. Without these foundational CREATE operations, there would be no structured repository for the invaluable information gathered by advanced tech systems.

Modifying Existing Structures (ALTER Statement)

Technological innovation is dynamic; requirements change, new sensors emerge, and AI models evolve. The ALTER statement provides the flexibility to modify existing database structures without losing the data already stored within them. This adaptability is crucial in fast-paced tech development.

Consider a drone system that initially only captured basic GPS coordinates. Later, the developers decide to integrate a new advanced thermal imaging sensor. To accommodate this, the ImageMetadata table might need a new column for ThermalReading or ThermalImageURL. An ALTER TABLE ADD COLUMN command would seamlessly integrate this new data point. Similarly, if an AI follow mode needs to log a new parameter, such as “ConfidenceScore” for object recognition, an ALTER TABLE command would add this to the relevant log table.

Other ALTER operations include modifying existing column definitions (e.g., changing a VARCHAR length, making a column NOT NULL), adding or dropping constraints (like unique keys or foreign keys to enforce new relationships), and even renaming tables or columns. This flexibility ensures that as tech projects expand and mature, their underlying data structures can evolve alongside them, accommodating new data types and enhancing existing data models without requiring a complete system overhaul.

Deleting Structures (DROP Statement)

While CREATE brings structures into being and ALTER modifies them, the DROP statement is used to remove them entirely. This command is powerful and must be used with caution, as dropping a database object permanently deletes both its structure and all the data contained within it.

In the context of tech innovation, DROP is used when a particular data structure becomes obsolete, when an experimental schema is no longer needed, or during development phases to clean up test environments. For instance, if a legacy DroneModelSpecifications table is replaced by a more comprehensive EquipmentInventory system, the old table might be DROPped after migrating its relevant data. Similarly, if a specific TestFlightData database was created for a temporary project, it can be DROPped to free up resources and simplify the database landscape once the project concludes.

The DROP statement serves as a clean-up mechanism, ensuring that the database schema remains streamlined and relevant to the current operational and developmental needs of advanced tech applications. It’s a critical tool for maintaining a healthy and efficient data environment, preventing the accumulation of redundant or unused structures that could complicate future development and maintenance.

DDL’s Impact on Advanced Technological Applications

The meticulous definition of data structures through DDL is not merely an administrative task; it directly underpins the performance, reliability, and innovative capacity of advanced technological applications. Its influence permeates key areas like remote sensing, autonomous systems, and AI.

Enabling Precision in Remote Sensing and Mapping

Remote sensing and drone-based mapping operations generate colossal amounts of georeferenced data. High-resolution imagery, LiDAR point clouds, multispectral sensor data, and associated metadata (e.g., drone altitude, camera angle, atmospheric conditions) all need to be stored, correlated, and retrieved with extreme precision. DDL is instrumental in defining the schema that supports this.

For instance, DDL can define tables with spatial data types, allowing for efficient storage and querying of geographical information. It establishes relationships between imagery, flight paths, and sensor calibrations, ensuring that when an analyst queries a specific geographical area, all relevant data—from the raw sensor input to the processed map—can be seamlessly assembled. Without DDL’s rigorous definition of data types, constraints, and relationships, integrating diverse data sources for accurate mapping or environmental monitoring would be a chaotic and error-prone endeavor. It ensures that every pixel, every point, every measurement is stored in a way that preserves its context and integrity, enabling precise analysis and actionable insights.

Structuring Data for Autonomous Systems and AI

Autonomous systems, whether in drones, robotics, or vehicles, rely on real-time data processing and decision-making. Their operational intelligence is directly tied to their ability to access, interpret, and act upon structured data. Similarly, AI models, from machine learning for object recognition to deep learning for predictive analytics, are critically dependent on well-organized datasets for training, validation, and inference.

DDL provides the architecture for these data flows. For autonomous drones, DDL defines tables to store navigation parameters, obstacle detection logs, dynamic flight plan adjustments, and real-time sensor fusion results. This structured approach allows the drone’s onboard AI to quickly retrieve relevant information, update its world model, and make swift decisions. For AI development, DDL schemas are used to organize vast training datasets, ensuring labels, features, and metadata are consistently formatted. This consistency is vital for the robustness and performance of AI algorithms. By defining data types and constraints, DDL prevents the injection of erroneous data, which could lead to flawed AI models or dangerous autonomous behaviors. It establishes the “rules of the game” for data, making it interpretable and actionable for intelligent systems.

Ensuring Data Integrity and Scalability for Innovation

In any technological endeavor, the integrity of data is paramount. Corrupted or inconsistent data can lead to catastrophic failures, from incorrect map overlays to autonomous system malfunctions. DDL provides mechanisms to enforce data integrity through constraints such as PRIMARY KEY (unique identifiers), FOREIGN KEY (referential integrity between tables), UNIQUE (ensuring no duplicate values), and CHECK (custom validation rules). These constraints prevent invalid data from entering the database, thereby safeguarding the reliability of the entire tech ecosystem.

Furthermore, as tech innovations scale, their data requirements grow exponentially. DDL contributes to scalability by allowing for the creation of indexes, which significantly speed up data retrieval, crucial for real-time applications. It also facilitates partitioning strategies, enabling databases to handle massive datasets more efficiently by breaking them into smaller, more manageable segments. By providing a clear, structured, and integrity-controlled environment, DDL allows tech innovators to focus on developing new algorithms, advanced sensors, and intelligent behaviors, confident that the underlying data infrastructure is robust, reliable, and capable of growing with their ambitions.

DDL in Practice: From Concept to Deployment

The theoretical understanding of DDL translates into practical application through careful planning and implementation, guiding the journey of data from conceptualization to operational deployment within tech projects.

Schema Design Considerations for Tech Projects

Effective schema design is a critical initial phase for any tech project. It involves translating real-world entities and relationships—such as drones, flights, sensors, and collected imagery—into logical database tables, columns, and constraints. This process is often iterative, requiring collaboration between engineers, data scientists, and domain experts. For a drone-based AI mapping project, for example, the design phase would consider:

- What data is absolutely essential to collect (e.g., GPS, timestamp, image, sensor readings)?

- How frequently will this data be generated and accessed?

- What are the relationships between different pieces of data (e.g., does an image belong to a specific flight, and does a flight belong to a specific drone)?

- What data types best represent the information (e.g.,

DECIMALfor coordinates,BLOBfor binary image data,ENUMfor sensor types)? - What integrity rules are necessary to prevent bad data (e.g., ensuring flight IDs are unique, sensor readings are within a valid range)?

The outcome of this design phase is a comprehensive schema definition, which is then translated into DDL statements. A well-designed schema, enforced by DDL, is the foundation for efficient data storage, retrieval, and analysis, directly impacting the performance and scalability of the entire tech solution.

Version Control and Collaborative DDL Management

In complex tech development environments, multiple teams or individuals might be working on different aspects of a system, requiring changes to the database schema. Managing these changes, particularly in a distributed or agile setup, presents challenges. This is where version control systems, traditionally used for code, are increasingly being applied to DDL scripts.

By storing DDL scripts in a system like Git, teams can track every change made to the database schema, review modifications, revert to previous versions if necessary, and merge contributions from different developers. This practice ensures that the database schema evolves in a controlled, auditable, and collaborative manner. For example, if one team is developing a new feature that requires an ALTER TABLE to add a new column for drone autonomy parameters, and another team is optimizing existing sensor data storage, version control helps manage these concurrent changes without conflicts. This systematic approach to DDL management is crucial for maintaining a coherent and robust data infrastructure, particularly in large-scale tech projects that rely heavily on continuous integration and deployment pipelines.

The Future of Data Definition in an Evolving Tech Landscape

As technology continues its relentless march forward, the demands placed on data infrastructure are becoming ever more complex. DDL, while a mature concept, must adapt to these new realities to remain relevant and effective.

Adapting to Big Data and Real-time Processing

The sheer volume, velocity, and variety of data generated by modern tech—from fleets of autonomous drones continuously streaming high-resolution imagery to AI systems processing petabytes of environmental data—push traditional database architectures to their limits. DDL faces the challenge of enabling schemas that can handle “Big Data” effectively and support real-time processing needs.

This involves DDL extensions for distributed databases, allowing for sharding and partitioning across multiple servers to handle immense loads. It also means incorporating definitions for specialized data types, such as graph structures for complex relationship mapping (e.g., network of drone communications) or time-series data for sensor readings over time. Furthermore, as real-time analytics become critical for autonomous decision-making, DDL must facilitate the creation of structures optimized for rapid ingestion and immediate querying, perhaps through in-memory tables or highly optimized indexing strategies. The evolution of DDL in this context is about enabling databases to not just store data, but to do so at scale and speed that matches the pace of modern technological operations.

DDL and Schema-on-Read for NoSQL Databases

While relational databases, defined strictly by DDL, remain prevalent, the rise of NoSQL databases (e.g., MongoDB, Cassandra) has introduced a paradigm shift, particularly for unstructured or semi-structured data common in some tech applications. NoSQL databases often employ a “schema-on-read” approach, where the structure is inferred at the time of data retrieval rather than being rigidly defined upfront by DDL.

However, even in NoSQL environments, a form of “data definition” is often implicitly or explicitly present. Developers still need to understand and document the expected structure of their JSON documents or key-value pairs to write effective applications. For hybrid systems, where some data benefits from the flexibility of NoSQL and other parts from the rigor of SQL, DDL continues to play its traditional role in defining the relational components. The future may see DDL evolving to encompass a broader spectrum of data definition, providing tools and standards for defining not just rigid relational schemas, but also providing frameworks or guidelines for defining expected structures in more flexible NoSQL paradigms, ensuring better interoperability and manageability across diverse data stores within a single tech ecosystem.

In conclusion, Data Definition Language is far more than a dry set of commands; it is the silent architect of the digital infrastructure that underpins all modern technological innovation. From defining the precise schemas for drone mapping data to structuring the foundational datasets for advanced AI and autonomous systems, DDL ensures that information is not merely present, but meticulously organized, validated, and optimized for high-performance processing. As the world of technology continues to expand, DDL will remain an indispensable tool, constantly evolving to meet the complex demands of data-driven innovation, defining the very fabric of our digital future.