Hate speech, a term frequently encountered in public discourse and legal discussions, refers to public speech that expresses hate or prejudice towards a particular group, especially on the basis of race, religion, or sexual orientation. While the concept may seem straightforward, its definition and the identification of specific examples can be complex, often navigating the delicate balance between freedom of expression and the protection of vulnerable communities. This article aims to clarify the nature of hate speech, explore its multifaceted forms, and provide illustrative examples to foster a deeper understanding of this critical societal issue.

Defining Hate Speech: Beyond Simple Offense

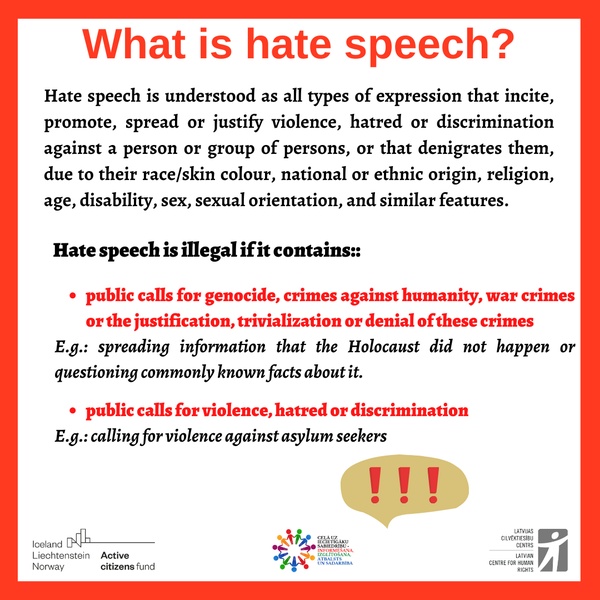

At its core, hate speech is not merely the expression of an opinion, however unpopular or disagreeable. It is characterized by an intent to demean or insult a group, to promote hatred or incite violence against it, or to discriminate against it on the basis of shared characteristics. These characteristics often include, but are not limited to, race, ethnicity, nationality, religion, gender identity, sexual orientation, disability, and political affiliation.

Intent and Impact

A key differentiator of hate speech is its intent to cause harm. This harm can manifest in various ways: psychological distress, social marginalization, and even physical violence. While the speaker’s subjective intent can be a factor, the objective impact on the targeted group is often given significant weight in legal and social analyses. The speech doesn’t need to directly incite immediate violence to be considered hate speech; it can contribute to a climate of hostility and prejudice that makes such violence more likely.

The Nuance of “Hate”

The word “hate” itself carries a strong emotional valence. However, in the context of hate speech, it encompasses a spectrum of animosity, from overt hostility and vilification to subtle but pervasive dehumanization and stereotyping. The target of hate speech is typically a group, rather than an individual, though individuals may be targeted because they represent a particular group.

Freedom of Speech vs. Hate Speech

The discussion of hate speech is inextricably linked to the principle of freedom of speech. Most democratic societies uphold the right to express diverse, even controversial, opinions. However, this right is not absolute. Legal frameworks often draw a line where speech infringes upon the rights and safety of others, although where that line falls varies by jurisdiction. Many countries treat hate speech as falling outside the bounds of protected speech because of its potential to incite harm and undermine social cohesion, while others, notably the United States, protect most such speech unless it amounts to incitement to imminent lawless action or a true threat. The challenge lies in precisely demarcating this boundary, ensuring that legitimate criticism or dissent is not suppressed while simultaneously safeguarding against harmful rhetoric.

Manifestations of Hate Speech: Diverse Forms and Mediums

Hate speech is not confined to a single mode of expression. It can appear in spoken words, written text, visual imagery, and even symbolic gestures. The evolution of communication technologies has also provided new platforms and avenues for hate speech to proliferate.

Verbal and Written Hate Speech

This is perhaps the most traditional form, encompassing direct verbal attacks, slurs, epithets, and derogatory statements. In written form, it can be found in books, articles, online forums, social media posts, comments sections, and personal messages. The anonymity afforded by the internet can embolden individuals to express more extreme views.

- Direct Incitement: Statements that explicitly call for violence, discrimination, or harm against a specific group. For example, urging people to “drive out” or “attack” members of a particular religious community.

- Dehumanization: Portraying a group as less than human, often comparing them to animals or vermin. This is a common tactic used to justify mistreatment and violence. Examples include calling refugees “insects” or a specific ethnic group “filth.”

- Stereotyping and Generalization: Spreading harmful and negative stereotypes about an entire group, attributing their alleged negative characteristics to all members. For instance, asserting that all members of a certain nationality are criminals or lazy.

- Conspiracy Theories: Promoting unfounded theories that falsely accuse a group of orchestrating malicious plots against society. Anti-Semitic conspiracy theories are a prominent example.

- Denial of Historical Atrocities: Denying or minimizing the gravity of events like the Holocaust or other genocides, often with the intent to rehabilitate the perpetrators or incite further animosity.

Visual and Symbolic Hate Speech

Images, symbols, and gestures can also be powerful vehicles for hate speech. These can be more insidious as they can quickly convey a message without explicit words.

- Hate Symbols: Symbols associated with hate groups, such as swastikas, extremist group insignia, or slurs rendered as visual emblems. Displaying these symbols can create a hostile environment for targeted groups.

- Derogatory Cartoons and Memes: Visual media designed to mock, ridicule, and spread negative stereotypes about a group. These can be particularly effective in online spaces due to their viral nature.

- Hate Graffiti: Vandalism involving the spray-painting of hate symbols, slurs, or threatening messages on public or private property, often targeting places of worship or community centers.

- Offensive Imagery: The dissemination of images depicting violence, degradation, or humiliation of a particular group.

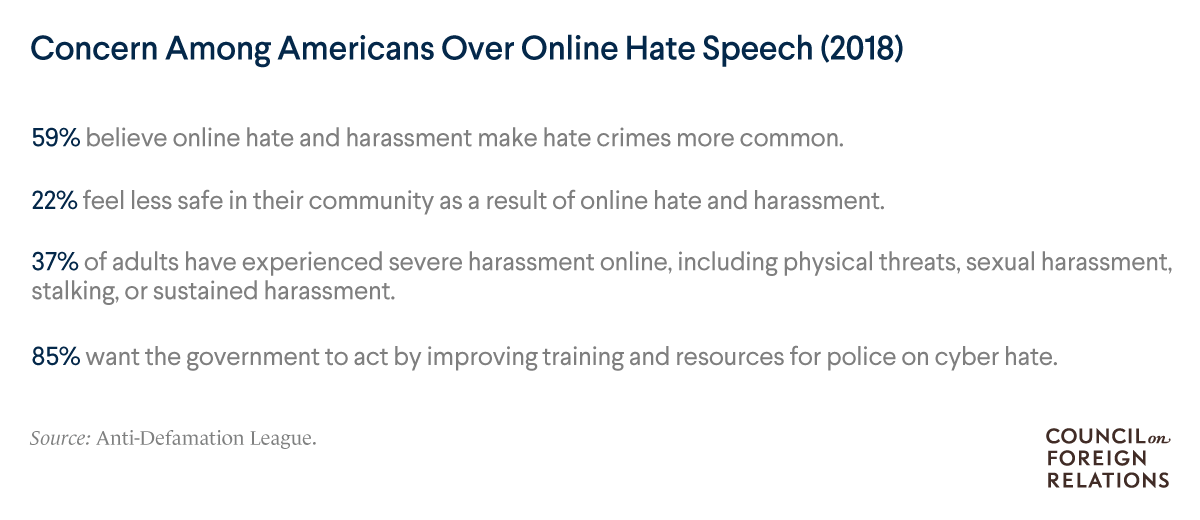

Digital and Online Hate Speech

The internet and social media platforms have become significant battlegrounds for hate speech. The ease of dissemination, global reach, and potential for anonymity present unique challenges.

- Online Harassment Campaigns: Organized efforts to bombard individuals or groups with hateful messages, threats, and misinformation across social media and other online platforms.

- Hate Group Websites and Forums: Dedicated online spaces where individuals can congregate to share hateful ideologies, organize, and recruit.

- Algorithmic Amplification: Recommendation algorithms that optimize for engagement can inadvertently amplify hate speech, because they promote content that users interact with even when that interaction is negative.

- Deepfakes and Manipulated Media: Increasingly sophisticated technologies can be used to create fabricated videos or images designed to spread disinformation and incite hatred against individuals or groups.

Examples of Hate Speech in Practice

To better understand the application of these definitions, consider the following hypothetical, yet representative, examples across different contexts:

Scenario 1: Political Rally

During a political rally, a speaker declares, “We need to cleanse our nation of these [ethnic slur] who are taking our jobs and destroying our culture. They are a disease, and we must eradicate them before it’s too late.”

- Analysis: This statement dehumanizes its targets (“a disease”), uses an ethnic slur, and calls for violence or drastic action (“cleanse,” “eradicate”) against a specific ethnic group. It clearly falls under the definition of hate speech.

Scenario 2: Online Forum

On a private online forum dedicated to discussing social issues, a user posts, “All [religious group] are inherently violent and dangerous. They have a history of terrorism, and we should not trust any of them. The best solution is to ban them from entering our country.”

- Analysis: This example relies on harmful generalizations and stereotypes about an entire religious group. It promotes distrust and advocates for discriminatory action (banning them from the country), contributing to a climate of prejudice.

Scenario 3: Social Media Post

A user posts a meme on a widely used social media platform featuring a derogatory caricature of a person with a disability, accompanied by the caption, “These people are a drain on society. They should be put out of our misery.”

- Analysis: This is a clear instance of hate speech targeting individuals with disabilities. The use of a derogatory caricature, the dehumanizing language (“drain on society”), and the suggestion of violence (“put out of our misery”) are all indicative of hate speech.

Scenario 4: Public Discourse

A politician, in a televised interview, states, “The rising crime rates are a direct result of the uncontrolled immigration of people from [specific country]. They bring their criminal tendencies with them, and our communities are no longer safe.”

- Analysis: While framed as a concern about crime, this statement employs dangerous generalizations and scapegoating. It attributes criminal behavior to an entire nationality, fostering xenophobia and potentially inciting fear and hostility towards immigrants from that country.

Scenario 5: Private Conversation

Two individuals are discussing a recent protest organized by a minority group. One says, “Honestly, they are so loud and demanding. They just want special treatment and are trying to ruin our way of life. They don’t belong here.”

- Analysis: This is a borderline case. The remark is made in private and calls for no specific action, so in some contexts it might be dismissed as mere opinion. Still, the language used (“ruin our way of life,” “don’t belong here”) contributes to marginalization and exclusion. When such sentiments are pervasive and expressed in ways that foster animosity, they can be considered part of a broader pattern of hate speech.

Conclusion: The Importance of Vigilance

Understanding what constitutes hate speech is crucial for fostering inclusive and safe societies. It requires recognizing that speech, especially when amplified and widespread, can have profound and damaging consequences for targeted individuals and communities. While it is vital to protect free speech, it is equally important to condemn and counter rhetoric that dehumanizes, demonizes, and incites hatred. Continuous dialogue, education, and a commitment to challenging hateful ideologies are essential in combating the pervasive influence of hate speech. The examples provided, while illustrative, underscore the need for critical engagement with language and imagery that seeks to divide and demean.