In an era increasingly defined by rapid technological advancement, understanding fundamental concepts is paramount to appreciating the innovations that shape our world. Among these foundational elements is “GHz,” an abbreviation frequently encountered in specifications for everything from computer processors and Wi-Fi routers to drone communication systems and advanced sensing equipment. But what exactly does GHz mean, and why is it so crucial to modern technology and innovation?

GHz, or Gigahertz, is a unit of frequency. At its core, frequency measures how often an event repeats itself over a specific period. In the context of electronics and digital systems, this usually refers to the clock rate at which a processor steps through its work or the rate at which an electromagnetic wave oscillates; the latter in turn influences how much data a wireless link can carry. Understanding Gigahertz is key to understanding the capabilities of artificial intelligence, autonomous flight, sophisticated mapping, and high-precision remote sensing, the very pillars of the “Tech & Innovation” landscape.

The Fundamentals of Frequency: Understanding Hertz and Gigahertz

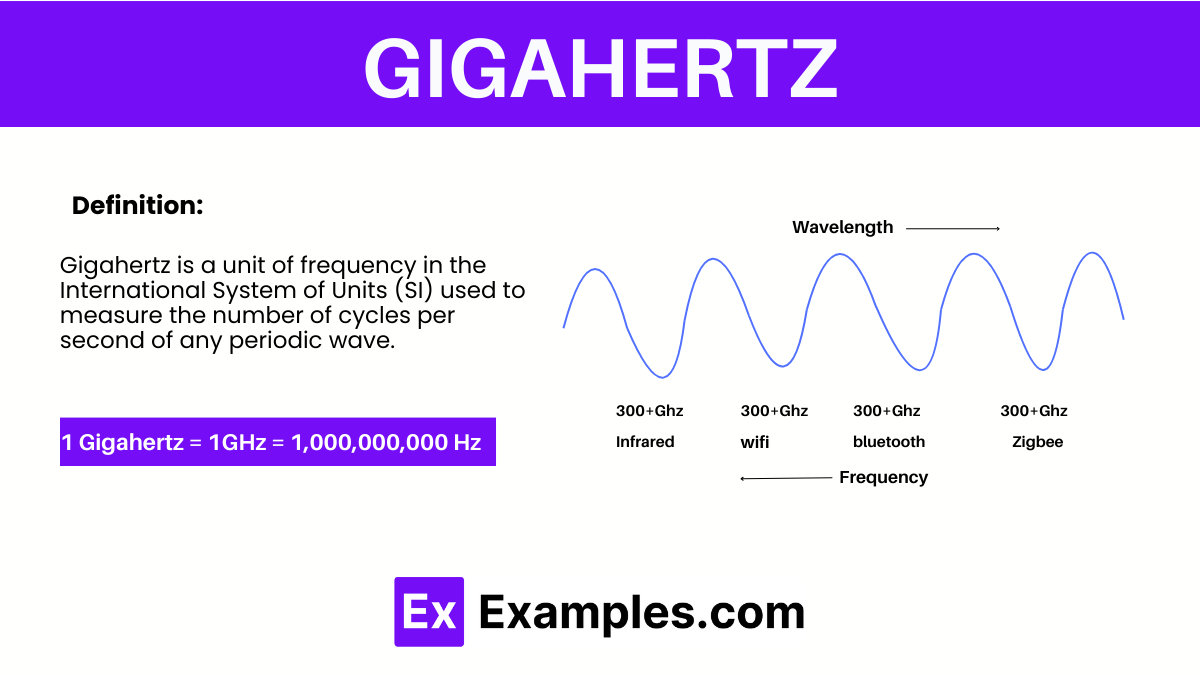

To grasp Gigahertz, we must first understand its parent unit: Hertz (Hz). Named after the German physicist Heinrich Rudolf Hertz, this unit represents one cycle per second. Whether it’s the vibration of a sound wave, the oscillation of an electrical current, or the processing cycle of a computer chip, Hertz provides a standardized way to quantify repetition over time.

From Cycles Per Second to Gigahertz: A Primer

One Hertz (1 Hz) signifies one cycle per second. As technological demands grew, so did the frequencies involved, necessitating larger units:

- Kilohertz (kHz): One thousand (1,000) Hertz.

- Megahertz (MHz): One million (1,000,000) Hertz.

- Gigahertz (GHz): One billion (1,000,000,000) Hertz.

When you see a processor clocked at 3.5 GHz, it means that the internal clock of that processor is cycling 3.5 billion times every second. Each cycle represents an opportunity for the processor to perform an operation, fetch data, or execute an instruction. Similarly, a Wi-Fi signal operating at 2.4 GHz or 5 GHz indicates the frequency at which its electromagnetic waves oscillate, carrying data through the air. These high frequencies are essential for the rapid processing and communication that underpin modern technological advancements.
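The arithmetic above can be sketched in a few lines of Python. This is only an illustration: the names `HZ_PER_UNIT`, `to_hertz`, and `period_seconds` are made up for this example and do not come from any particular library.

```python
# Unit prefixes from the list above, expressed as multiples of 1 Hz.
HZ_PER_UNIT = {"Hz": 1, "kHz": 1_000, "MHz": 1_000_000, "GHz": 1_000_000_000}


def to_hertz(value: float, unit: str) -> float:
    """Convert a frequency given in Hz, kHz, MHz, or GHz to hertz."""
    return value * HZ_PER_UNIT[unit]


def period_seconds(frequency_hz: float) -> float:
    """Duration of one cycle, which is the reciprocal of the frequency."""
    return 1.0 / frequency_hz


cpu_hz = to_hertz(3.5, "GHz")
print(f"{cpu_hz:,.0f} cycles per second")
print(f"{period_seconds(cpu_hz) * 1e9:.3f} ns per cycle")
```

For the 3.5 GHz processor mentioned above, one clock cycle lasts a little under 0.3 nanoseconds.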

Why Frequency Matters in Digital Systems

In digital systems, frequency is closely linked to speed and capacity. All else being equal, a higher clock frequency allows more operations per second, and higher carrier frequencies generally make room for wider channels and faster data transmission. This relationship is critical for virtually every aspect of “Tech & Innovation.” For instance, an AI algorithm running on the same processor design at a higher clock rate can crunch data more quickly, leading to faster decision-making for autonomous vehicles or more rapid pattern recognition for remote sensing applications.

However, frequency isn’t the sole determinant of performance. While higher GHz numbers often imply greater potential, factors like architectural efficiency, instruction sets, core count (for processors), and channel width (for communication) also play significant roles. Nevertheless, a robust understanding of GHz provides the baseline for appreciating the incredible speeds and capabilities embedded within contemporary technology.

GHz in Communication: The Backbone of Connected Innovation

The invisible web that connects our devices, empowers drones, and facilitates data exchange relies heavily on specific frequency bands, predominantly measured in Gigahertz. Wireless communication is the lifeblood of interconnected innovation, enabling everything from real-time data streaming to complex command and control systems.

Wireless Connectivity: Wi-Fi, Bluetooth, and Drone Control

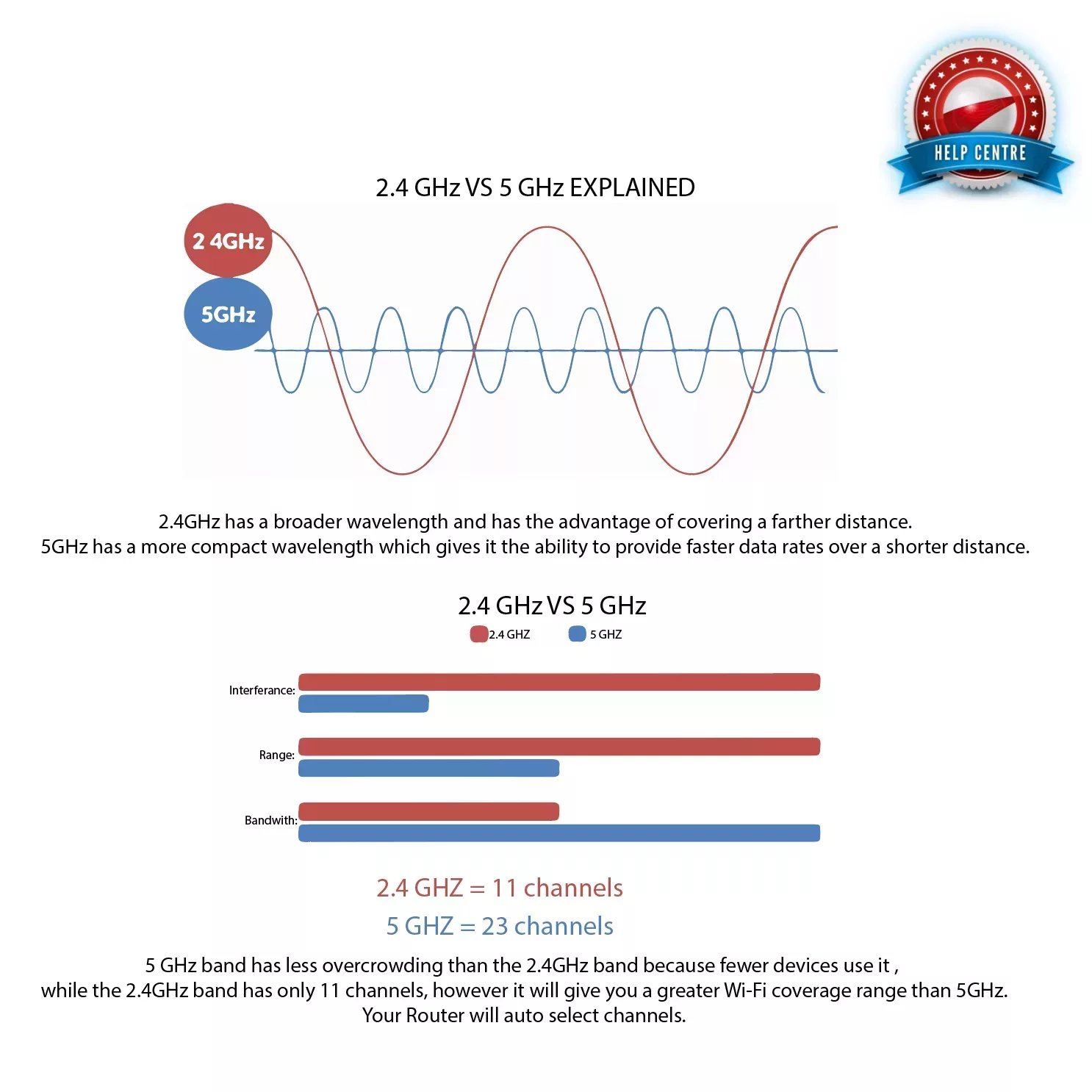

Ubiquitous technologies like Wi-Fi and Bluetooth operate in the Gigahertz range. Standard Wi-Fi networks typically utilize the 2.4 GHz and 5 GHz bands. The 2.4 GHz band offers wider range but can be more susceptible to interference from other household devices (like microwaves or cordless phones). The 5 GHz band provides faster data rates and less interference, but with a shorter range.

For advanced applications like autonomous drone operations, the choice of frequency is critical. Drone controllers and video transmission systems often operate in the 2.4 GHz, 5.8 GHz, or even higher frequency bands (e.g., specific ISM bands) to ensure stable command links, reliable telemetry data, and high-quality FPV (First Person View) feeds. High-frequency signals are crucial for transmitting large amounts of data, such as high-definition video from a drone or complex instructions to an autonomous robot, with minimal latency.

The Spectrum: Advantages and Challenges of High Frequencies

The electromagnetic spectrum is a finite resource, and different frequency bands have unique characteristics. Higher frequencies, particularly in the Gigahertz range and beyond into millimeter-wave (mmWave), offer several advantages:

- Greater Bandwidth: Higher frequencies can accommodate wider channels, allowing for more data to be transmitted simultaneously. This is vital for streaming 4K video from a drone or transmitting vast datasets from remote sensors.

- Smaller Antennas: The physical size of an antenna scales with the signal’s wavelength, which is inversely proportional to frequency. Higher frequencies therefore allow for smaller, more compact antennas, which are ideal for miniature drones or integrated IoT devices.

- Less Congestion (in some bands): Certain higher frequency bands are less crowded than the popular 2.4 GHz band, reducing interference and improving signal reliability.
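The antenna-size point in the list above follows from the relation between wavelength and frequency, wavelength = c / f. The sketch below assumes an idealized quarter-wave antenna in free space; real antennas differ in design and are often shorter or longer than this rule of thumb, and the function names are illustrative only.

```python
SPEED_OF_LIGHT_M_S = 299_792_458  # speed of light in vacuum, in m/s


def wavelength_m(frequency_hz: float) -> float:
    """Free-space wavelength in metres for a given frequency in hertz."""
    return SPEED_OF_LIGHT_M_S / frequency_hz


def quarter_wave_cm(frequency_hz: float) -> float:
    """Length of an idealized quarter-wave antenna, in centimetres."""
    return wavelength_m(frequency_hz) / 4 * 100


# 28 GHz is an assumed example of a millimeter-wave frequency.
for label, freq in [("2.4 GHz", 2.4e9), ("5.8 GHz", 5.8e9), ("28 GHz", 28e9)]:
    print(f"{label}: wavelength {wavelength_m(freq) * 100:.2f} cm, "
          f"quarter-wave element {quarter_wave_cm(freq):.2f} cm")
```

Under these assumptions, the quarter-wave length shrinks from roughly 3 cm at 2.4 GHz to well under half a centimetre at 28 GHz.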

However, higher frequencies also present challenges:

- Reduced Range and Penetration: Gigahertz signals, especially those above 5 GHz, are more susceptible to attenuation and absorption by obstacles like walls, trees, and even rain. This limits their effective range and penetration capabilities.

- Line-of-Sight Requirement: Many high-frequency communication systems perform optimally with a clear line-of-sight between transmitter and receiver, which can be a limitation for ground-based autonomous systems or drones operating in complex environments.
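Part of the range penalty can be seen even without obstacles. The sketch below uses the standard free-space path loss formula, FSPL(dB) = 20·log10(4πdf/c), which assumes a clear line of sight and ideal isotropic antennas. It does not model walls, foliage, or rain, which add further loss on top. The 100 m distance is an arbitrary example value.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458  # speed of light in vacuum, in m/s


def free_space_path_loss_db(distance_m: float, frequency_hz: float) -> float:
    """Free-space path loss in dB between isotropic antennas."""
    return 20 * math.log10(
        4 * math.pi * distance_m * frequency_hz / SPEED_OF_LIGHT_M_S
    )


distance_m = 100.0  # assumed example distance
loss_24 = free_space_path_loss_db(distance_m, 2.4e9)
loss_58 = free_space_path_loss_db(distance_m, 5.8e9)
print(f"2.4 GHz at {distance_m:.0f} m: {loss_24:.1f} dB")
print(f"5.8 GHz at {distance_m:.0f} m: {loss_58:.1f} dB")
print(f"Extra loss at 5.8 GHz: {loss_58 - loss_24:.1f} dB")
```

At the same distance, the 5.8 GHz link loses several decibels more than the 2.4 GHz link under this model, which is one reason higher bands tend to have shorter practical range.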

Data Transmission and Bandwidth Implications

The amount of data that can be transmitted over a wireless link depends on its channel bandwidth (the width of the slice of spectrum it occupies) and on signal quality, and wider channels are generally easier to allocate at higher operating frequencies. Technologies like 5G, for example, use both sub-6 GHz bands and millimeter-wave bands (tens of GHz) to achieve very high data speeds, enabling applications like real-time cloud processing for autonomous vehicles, immersive augmented reality, and low-latency remote control of machinery. This capacity for high-speed data transmission is fundamental to the scalability and responsiveness of future tech and innovation.
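One way to make the bandwidth relationship concrete is the Shannon-Hartley theorem, C = B·log2(1 + SNR), which gives a theoretical upper bound on the data rate of a channel. The sketch below is an idealized estimate, not a prediction of real-world throughput. The 20 MHz and 400 MHz channel widths and the 20 dB signal-to-noise ratio are assumed example values.

```python
import math


def shannon_capacity_bps(bandwidth_hz: float, snr_db: float) -> float:
    """Theoretical maximum data rate in bits per second for a noisy channel."""
    snr_linear = 10 ** (snr_db / 10)  # convert decibels to a plain power ratio
    return bandwidth_hz * math.log2(1 + snr_linear)


snr_db = 20.0  # assumed signal-to-noise ratio
narrow = shannon_capacity_bps(20e6, snr_db)   # assumed 20 MHz channel
wide = shannon_capacity_bps(400e6, snr_db)    # assumed 400 MHz channel
print(f"20 MHz channel:  {narrow / 1e6:.0f} Mbit/s upper bound")
print(f"400 MHz channel: {wide / 1e6:.0f} Mbit/s upper bound")
```

At a fixed signal-to-noise ratio the bound grows in direct proportion to channel width, so the wider channel here has twenty times the theoretical capacity.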

Processor Speed and Computational Power: The Brains of Modern Tech

Beyond communication, Gigahertz is perhaps most famously associated with the “clock speed” of processors – the very brains driving our AI algorithms, autonomous systems, and advanced remote sensing platforms.

CPU Clock Speed: The Engine of AI and Autonomous Systems

The CPU (Central Processing Unit) is the core component that executes instructions, performs calculations, and manages data flow. Its clock speed, measured in Gigahertz, indicates how many cycles it can complete per second. For an AI system to process complex neural networks, recognize objects in real-time, or make near-instantaneous decisions for an autonomous drone, it requires immense computational power. A higher clock speed means more cycles are available per second, which, for a given processor design, translates to faster computations and more responsive intelligent systems.

This processing power is critical for:

- Real-time Object Recognition: Essential for autonomous navigation and safety.

- Complex Algorithm Execution: Driving AI decision-making and predictive analytics.

- Data Fusion: Combining inputs from multiple sensors (cameras, LiDAR, radar) for a comprehensive environmental understanding.

Beyond Clock Speed: Architecture and Efficiency

While clock speed (GHz) is a primary indicator, it’s crucial to remember that it’s not the only factor determining a processor’s performance. Modern processors utilize multi-core architectures, advanced instruction sets, and sophisticated caching mechanisms to maximize efficiency. A 3 GHz multi-core processor with efficient architecture can outperform a 4 GHz single-core processor with older architecture.
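A rough way to see why the lower-clocked chip can win is to estimate peak throughput as clock rate × instructions per cycle (IPC) × core count. The sketch below is a simplified upper bound. It ignores memory stalls and assumes the workload parallelizes perfectly across cores. The IPC and core-count figures are hypothetical values chosen for illustration, not measurements of any real processor.

```python
def peak_instructions_per_second(clock_hz: float, ipc: float, cores: int) -> float:
    """Idealized peak throughput: cycles/s x instructions/cycle x cores."""
    return clock_hz * ipc * cores


# Hypothetical processors for illustration only.
modern = peak_instructions_per_second(clock_hz=3e9, ipc=4.0, cores=4)
older = peak_instructions_per_second(clock_hz=4e9, ipc=1.0, cores=1)

print(f"3 GHz, 4 cores, IPC 4: {modern / 1e9:.0f} billion instructions/s")
print(f"4 GHz, 1 core,  IPC 1: {older / 1e9:.0f} billion instructions/s")
```

With these assumed figures, the 3 GHz design has twelve times the peak throughput of the 4 GHz design despite its lower clock.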

However, the base clock speed still provides a vital metric for raw processing potential. For demanding “Tech & Innovation” applications, engineers strive for a balance: high GHz for sheer speed, coupled with optimized architecture for efficiency and power consumption, especially in battery-constrained devices like drones or remote sensors.

Real-time Processing for Robotics and Remote Sensing

In robotics and remote sensing, the ability to process data in real-time is not just an advantage; it’s a necessity. An autonomous drone mapping a large area needs to process sensor data (like point clouds from LiDAR or imagery from a camera) instantly to adjust its flight path, avoid obstacles, and ensure data quality. This requires processors capable of operating at high Gigahertz speeds to handle the continuous stream of incoming information and execute complex algorithms without delay. The latency introduced by slow processing could be catastrophic for an autonomous system, underscoring the critical role of GHz in these cutting-edge applications.
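The real-time constraint can be framed as a cycle budget: how many clock cycles are available before the next frame of sensor data arrives. The sketch below assumes a single core at 2 GHz processing 1920×1080 camera frames at 30 frames per second. All of these figures are hypothetical, chosen only to show the arithmetic.

```python
def cycles_per_frame(clock_hz: float, frames_per_second: float) -> float:
    """Clock cycles available between two consecutive frames."""
    return clock_hz / frames_per_second


def cycles_per_pixel(clock_hz: float, frames_per_second: float,
                     width: int, height: int) -> float:
    """Clock cycles available per pixel if every pixel must be visited."""
    return cycles_per_frame(clock_hz, frames_per_second) / (width * height)


clock_hz = 2e9   # assumed single-core clock rate
fps = 30         # assumed camera frame rate
print(f"{cycles_per_frame(clock_hz, fps):,.0f} cycles per frame")
print(f"{cycles_per_pixel(clock_hz, fps, 1920, 1080):.1f} cycles per pixel")
```

Under these assumptions there are only a few dozen cycles per pixel, which helps explain why such systems combine high clock rates with multiple cores or dedicated accelerators.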

Sensor Technology and Data Acquisition: The Eyes and Ears of Innovation

Modern “Tech & Innovation” relies heavily on sophisticated sensors that act as the eyes and ears of intelligent systems. Many of these sensors operate or are influenced by Gigahertz frequencies to achieve their precision and capability.

Radar and Lidar Systems: High-Frequency Sensing

Radar (Radio Detection and Ranging) systems transmit radio waves and detect their reflections to determine the range, angle, or velocity of objects. These radio waves often operate in the Gigahertz range, from a few GHz (e.g., S-band and C-band weather radar) up to tens of GHz (e.g., automotive radar at 24 GHz and 76 to 81 GHz) and beyond for some specialized applications. Higher frequencies in radar permit wider signal bandwidths and narrower beams from a given antenna size, which improve resolution and precision, allowing for the detection of smaller objects or more accurate mapping of surfaces, critical for autonomous navigation and remote sensing.
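Two textbook radar relations help quantify this: range resolution ΔR = c / (2B), where B is the signal bandwidth, and Doppler shift f_d = 2·v·f_c / c, where v is the target's speed toward the radar and f_c is the carrier frequency. The sketch below is illustrative. The 3 GHz and 77 GHz carriers, the 30 m/s closing speed, and the bandwidth values are assumed examples, not parameters of any specific system.

```python
SPEED_OF_LIGHT_M_S = 299_792_458  # speed of light in vacuum, in m/s


def range_resolution_m(bandwidth_hz: float) -> float:
    """Smallest range separation two targets can have and still be resolved."""
    return SPEED_OF_LIGHT_M_S / (2 * bandwidth_hz)


def doppler_shift_hz(radial_speed_m_s: float, carrier_hz: float) -> float:
    """Frequency shift of the echo from a target approaching at the given speed."""
    return 2 * radial_speed_m_s * carrier_hz / SPEED_OF_LIGHT_M_S


# Wider bandwidth gives finer range resolution.
for bandwidth in (100e6, 1e9):
    print(f"B = {bandwidth / 1e6:.0f} MHz: {range_resolution_m(bandwidth):.2f} m")

# A higher carrier gives a larger, easier-to-measure Doppler shift.
for carrier in (3e9, 77e9):
    shift = doppler_shift_hz(30.0, carrier)
    print(f"f_c = {carrier / 1e9:.0f} GHz: {shift:.0f} Hz")
```

The same 30 m/s target produces a Doppler shift more than twenty times larger at the higher carrier, and a tenfold increase in bandwidth tightens range resolution by the same factor.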

Lidar (Light Detection and Ranging) uses light pulses (often in the infrared spectrum) rather than radio waves, so its carrier frequency is far above the Gigahertz range, but it still depends on high-speed electronics for signal interpretation. Timing the reflected pulses finely enough to create precise 3D point clouds calls for nanosecond-scale or finer resolution, and turning those measurements into a map in real time demands processors clocked at Gigahertz rates. Both are cornerstones of autonomous flight and robotics.
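The link between timing and distance follows from the time-of-flight relation, range = c·t / 2, where t is the round-trip time of the pulse. The sketch below is a simplified illustration that assumes propagation at the vacuum speed of light; the function names are made up for this example.

```python
SPEED_OF_LIGHT_M_S = 299_792_458  # speed of light in vacuum, in m/s


def range_from_round_trip_m(round_trip_s: float) -> float:
    """Distance to the target given the pulse's round-trip time."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2


def round_trip_ns(range_m: float) -> float:
    """Round-trip time in nanoseconds for a target at the given distance."""
    return 2 * range_m / SPEED_OF_LIGHT_M_S * 1e9


print(f"Target at 100 m: echo returns after {round_trip_ns(100.0):.0f} ns")
print(f"1 ns of timing error: {range_from_round_trip_m(1e-9) * 100:.0f} cm of range error")
```

One nanosecond, which is a single cycle of a 1 GHz clock, corresponds to about 15 cm of range, so centimetre-level precision requires timing finer than a nanosecond.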

The Role of GHz in Imaging and Signal Processing

Beyond dedicated ranging sensors, many advanced imaging systems and signal processors leverage Gigahertz frequencies. High-resolution cameras on drones, for instance, capture vast amounts of pixel data. Processing this data – for image stabilization, real-time stitching of panoramas, or applying AI filters – requires processors running at high Gigahertz speeds.

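Furthermore, any system that processes continuous signals, such as acoustic sensors used in environmental monitoring or radio receivers for remote sensing data, must sample those signals fast enough to capture their highest frequency of interest and then interpret the samples in time. Audio-band signals need only kilohertz sampling rates, while wideband radio receivers may sample at hundreds of megahertz or more; in both cases, processors clocked in the Gigahertz range leave more cycles per sample, so the analysis can be more intricate and nuanced.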
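The sampling requirement is usually stated as the Nyquist criterion: the sampling rate must be at least twice the highest frequency present in the signal, otherwise that frequency "aliases" and shows up as a lower one. The sketch below illustrates both ideas. The 20 kHz, 30 kHz, and 40 kHz figures are assumed example values.

```python
def nyquist_rate_hz(max_signal_hz: float) -> float:
    """Minimum sampling rate needed to capture a given highest frequency."""
    return 2 * max_signal_hz


def alias_frequency_hz(signal_hz: float, sample_rate_hz: float) -> float:
    """Apparent frequency of a sampled tone after folding into 0..fs/2."""
    nearest_multiple = round(signal_hz / sample_rate_hz) * sample_rate_hz
    return abs(signal_hz - nearest_multiple)


print(f"A 20 kHz tone needs at least {nyquist_rate_hz(20e3) / 1e3:.0f} kHz sampling")
print(f"A 30 kHz tone sampled at 40 kHz appears at "
      f"{alias_frequency_hz(30e3, 40e3) / 1e3:.0f} kHz")
```

A tone below half the sampling rate is reported at its true frequency, while one above it folds back into the lower half of the band.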

Advancements in Remote Sensing Data Capture

Remote sensing platforms, whether satellites, aerial drones, or ground-based autonomous vehicles, collect incredible volumes of data about our planet. From monitoring crop health with hyperspectral sensors to mapping urban sprawl with synthetic aperture radar, the ability to capture, transmit, and process this data at speed is paramount. High-frequency communication links (Gigahertz bands) enable rapid data offloading, while high-Gigahertz processors on board perform preliminary analysis, significantly accelerating the insights gained from remote sensing missions and feeding directly into applications like precision agriculture, disaster response, and urban planning.

The Future Landscape: 5G, IoT, and Next-Gen Innovation

The trajectory of “Tech & Innovation” is pushing the boundaries of what’s possible, and Gigahertz frequencies are at the heart of this evolution, particularly with the advent of 5G and the pervasive growth of the Internet of Things (IoT).

Millimeter-Wave Technology and its Implications for Speed

5G technology utilizes a broader range of frequencies, including the traditional sub-6 GHz bands and much higher millimeter-wave (mmWave) frequencies in the tens of Gigahertz. This leap into higher Gigahertz frequencies for commercial communication is a game-changer. mmWave offers massive bandwidth, enabling multi-gigabit-per-second speeds and very low latency. While these higher frequencies have shorter ranges and are more susceptible to obstruction, they are well suited to dense urban areas or specific applications requiring immense data throughput, such as autonomous vehicle communication or high-definition streaming in stadiums. The development of advanced antenna technologies, like beamforming, is helping to mitigate the propagation challenges of mmWave.

The Internet of Things (IoT) and Ubiquitous Connectivity

The IoT envisions a world where countless devices, from smart home appliances to industrial sensors, are interconnected. Many IoT devices communicate wirelessly, often relying on various Gigahertz bands (e.g., Wi-Fi, Bluetooth Low Energy, Zigbee). The sheer volume of data generated by billions of interconnected devices demands robust, high-frequency communication infrastructure and powerful Gigahertz processors for edge computing – where data is processed closer to its source, rather than entirely in the cloud. This distributed intelligence is crucial for real-time responsiveness and efficiency in complex IoT ecosystems.

Driving Autonomous Systems and Smart Cities

The combined power of high-Gigahertz processing, high-frequency communication (like 5G mmWave), and advanced sensors (Gigahertz-band radar and optical LiDAR) is driving the realization of fully autonomous systems and smart cities. Autonomous vehicles require constant communication with each other and infrastructure (V2X communication), real-time processing of sensor data, and rapid decision-making, all facilitated by Gigahertz frequencies. Smart cities leverage IoT devices for everything from traffic management to environmental monitoring, transmitting vast datasets over Gigahertz networks for analysis by AI systems that optimize urban living.

Conclusion

From the rapid cycles within a processor to the invisible waves carrying our data through the air, Gigahertz (GHz) is a fundamental unit that underpins almost every aspect of modern “Tech & Innovation.” It is the heartbeat of computational power, the carrier wave of global communication, and the precise rhythm of advanced sensing technologies. As we venture further into an era of artificial intelligence, autonomous systems, sophisticated mapping, and remote sensing, the relentless pursuit of higher frequencies, coupled with architectural efficiencies, will continue to unlock unprecedented capabilities. Understanding “what is GHz” is not merely academic; it is to understand the very pulse of the technological frontier.