Understanding the Nuances of Data Packet Arrival Times

In digital communication, and especially in systems that depend on timely, continuous data delivery, understanding network jitter is essential. Jitter is most often discussed in relation to Voice over IP (VoIP) and video conferencing, but its effects extend to any real-time data stream. For professionals running networks that support real-time control or high-bandwidth data transfer, a solid grasp of jitter, its causes, and its mitigation strategies is a requirement for good performance and reliability.

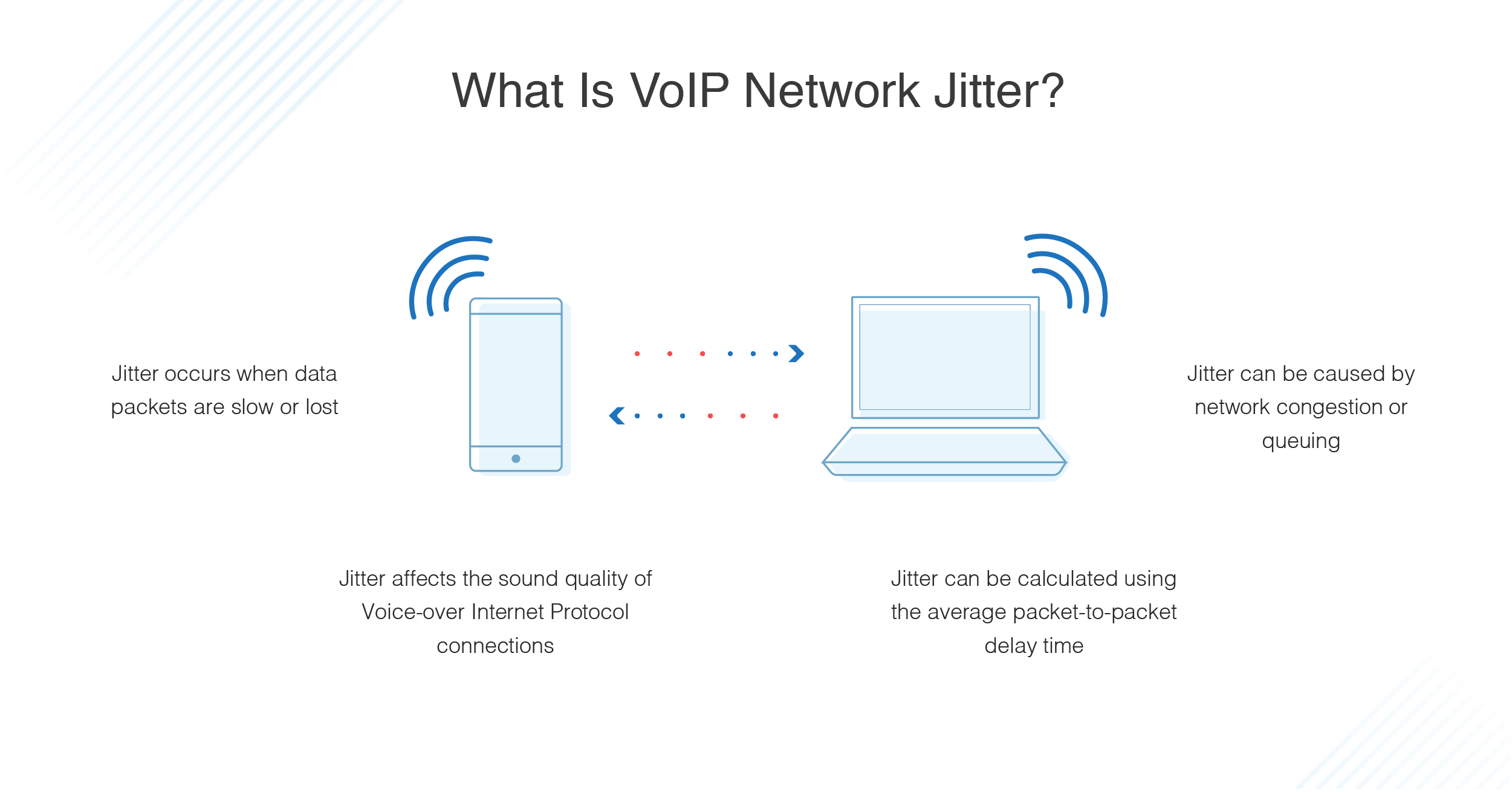

Jitter, in its simplest definition, is the variation in packet latency: the delay between sending and receiving is not the same for every packet. If data packets were cars traveling on a highway, a low-jitter network would have them arriving at their destination at roughly the same intervals at which they departed, like a well-synchronized convoy. High jitter means the cars arrive erratically: some sooner than expected, others significantly delayed, and some so late that they are no longer useful. This unpredictability can wreak havoc on applications that depend on a consistent flow of information.

Defining Jitter and its Impact

Network jitter is a measurement of the variation in delay between successive data packets. It is often expressed in milliseconds (ms). A low jitter value signifies that packet arrival times are consistent, leading to a smooth and predictable data flow. Conversely, a high jitter value indicates significant fluctuations in packet arrival times, which can result in a choppy or interrupted experience for the end-user.
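
As a rough illustration of how such a measurement can be computed, the sketch below follows the running interarrival jitter estimator described in RFC 3550 (the RTP specification), which smooths the change in transit time between successive packets with a gain of 1/16. The function name and the sample timestamps are assumptions made for this example, and sender and receiver clocks are assumed to use the same units.

```python
def interarrival_jitter(send_times_ms, recv_times_ms):
    """Running jitter estimate in the style of RFC 3550, section 6.4.1.

    Both lists hold one timestamp per packet, in milliseconds.
    (Function name and inputs are illustrative, not from the article.)
    """
    jitter = 0.0
    prev_transit = None
    for sent, received in zip(send_times_ms, recv_times_ms):
        transit = received - sent              # one-way transit time of this packet
        if prev_transit is not None:
            d = abs(transit - prev_transit)    # change in transit time vs. previous packet
            jitter += (d - jitter) / 16.0      # exponential smoothing with gain 1/16
        prev_transit = transit
    return jitter


# Packets sent every 20 ms. A constant delay gives zero jitter.
steady = interarrival_jitter([0, 20, 40, 60], [50, 70, 90, 110])
# The same packets with a varying delay give a non-zero estimate.
varying = interarrival_jitter([0, 20, 40, 60], [50, 75, 90, 118])
print(steady, varying)
```

Only differences between transit times matter here, so a fixed clock offset between sender and receiver cancels out.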

The impact of jitter is not uniform across all types of network traffic. Applications that are highly sensitive to timing, such as real-time audio and video streams, are most severely affected. For instance, in a video conference, high jitter can manifest as:

- Choppy Video: Frames may arrive out of order or with significant gaps, leading to jerky playback.

- Audio Dropouts: Spoken words might be cut off or distorted, making conversations difficult to follow.

- Synchronization Issues: Audio and video streams can become desynchronized, leading to a frustrating and unprofessional experience.

Beyond real-time communication, jitter can also impact other time-sensitive applications:

- Online Gaming: Delayed or inconsistent packet arrivals can lead to lag, making it difficult to react to in-game events accurately and affecting the overall gaming experience.

- Industrial Control Systems: In environments where precise timing is critical for operating machinery or complex processes, significant jitter can lead to errors, malfunctions, and potentially dangerous situations.

- Remote Sensing and Data Acquisition: Systems that rely on synchronized data streams from multiple sensors might experience data corruption or loss if packet arrival times are too erratic.

Understanding the root causes of jitter is the first step towards effective management. It’s a complex issue that can arise from various points within a network’s infrastructure.

Sources of Network Jitter

The variability in packet arrival times, or jitter, can originate from several points within a network. Identifying these sources is crucial for implementing effective mitigation strategies. The causes can broadly be categorized into network congestion, hardware and device issues, software and protocol inefficiencies, and factors specific to wireless networks.

Network Congestion

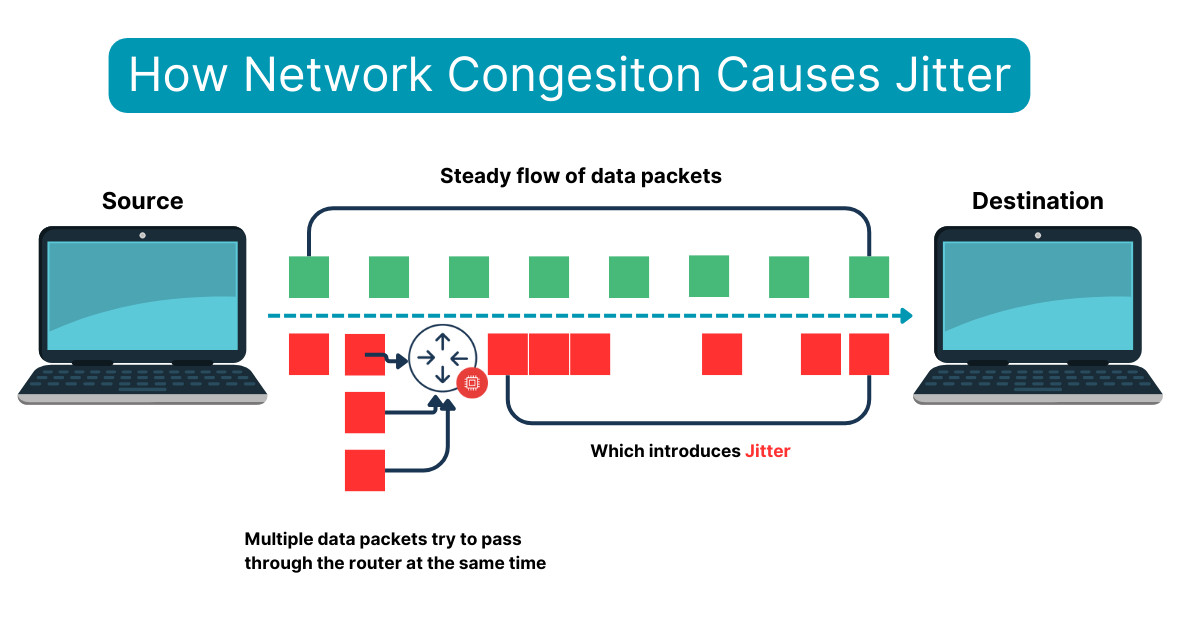

Perhaps the most common culprit behind jitter is network congestion. When a network link or a device, such as a router or switch, becomes overloaded with traffic, packets have to wait in queues before they can be processed and forwarded. The length of these queues fluctuates based on the volume and burstiness of incoming traffic.

- Queueing Delay: Packets are temporarily stored in buffers (queues) when the output rate of a network device is slower than the input rate. The time a packet spends in a queue is variable. Bursty traffic can cause queues to grow rapidly, leading to longer waiting times for subsequent packets.

- Router and Switch Overload: When routers or switches are processing more data than their hardware or software can handle efficiently, packets may be dropped or delayed significantly. This is particularly true for older or less powerful network devices.
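
The effect of burstiness on queueing delay can be seen in a toy model. The sketch below assumes a single FIFO queue feeding a link with a fixed per-packet service time; the function name, the 8 ms service time, and the arrival patterns are all made up for illustration. Both traffic patterns carry the same number of packets over the same period, but only the bursty one builds a queue.

```python
def queueing_delays(arrival_times_ms, service_ms):
    """Waiting time of each packet in one FIFO queue with a fixed service time.

    A deliberately simplified model: one output link, no drops, no priorities.
    """
    delays = []
    link_free_at = 0.0
    for arrival in arrival_times_ms:
        start = max(arrival, link_free_at)   # wait if the link is still busy
        delays.append(start - arrival)       # time spent sitting in the queue
        link_free_at = start + service_ms
    return delays


steady = [i * 10.0 for i in range(8)]                    # one packet every 10 ms
bursty = [0.0, 0.0, 0.0, 0.0, 40.0, 40.0, 40.0, 40.0]    # same packet count, two bursts

print(queueing_delays(steady, service_ms=8.0))
print(queueing_delays(bursty, service_ms=8.0))
```

With steady arrivals every packet finds the link idle, while in each burst the later packets wait progressively longer. That spread in waiting time is what the receiver observes as jitter.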

Hardware and Device Issues

The physical components of a network can also contribute to jitter. The performance and configuration of network devices play a significant role in how consistently data is processed and transmitted.

- Processing Delays: Network devices, like routers and switches, have a finite capacity for processing data packets. If a device is struggling to keep up with the data flow, it can introduce variable delays as it handles each packet. This delay can vary depending on the complexity of the packet and the current load on the device.

- Faulty Hardware: Malfunctioning network interface cards (NICs), damaged cables, or other hardware faults can cause intermittent packet loss or delays, contributing to jitter.

- Resource Contention: In complex network architectures, multiple processes or services might compete for the same hardware resources (e.g., CPU, memory) on a network device. This contention can lead to unpredictable delays in packet handling.

Software and Protocol Inefficiencies

The way data is handled by software and the underlying network protocols can also introduce jitter. Issues with timing, scheduling, and inefficient protocol implementations can all contribute to variations in packet arrival.

- Operating System Scheduling: The operating system of a network device or end-user machine schedules the processing of various tasks, including network packet handling. Inconsistent or suboptimal scheduling can lead to variations in the time it takes to process and transmit packets.

- Protocol Overhead: Network protocols add headers to data packets and, in some cases, extra processing steps such as encapsulation, encryption, or checksum verification. Handling this overhead can introduce small but variable delays.

- Interference and Shared Media: In older network technologies (such as hub-based Ethernet) and in wireless environments that use a shared medium, devices might have to wait for access, leading to unpredictable transmission times. This is uncommon in modern switched wired networks, but it can still be a factor in some scenarios.

- Application-Level Buffering: Applications themselves might implement buffering mechanisms to manage incoming data. If these buffers are not optimally sized or managed, they can contribute to perceived jitter.

Wireless Network Specifics

Wireless networks, by their very nature, are more susceptible to jitter than wired networks due to the inherent unreliability and shared nature of the medium.

- Signal Interference: Wireless signals can be affected by a multitude of environmental factors, including other electronic devices, physical obstructions, and multipath fading (where signals bounce off surfaces and arrive at the receiver via multiple paths, potentially out of sync).

- Channel Access: In Wi-Fi networks, devices must contend for access to the wireless channel. This contention process, governed by protocols like CSMA/CA (Carrier Sense Multiple Access with Collision Avoidance), introduces variability in transmission times.

- Dynamic Bandwidth Allocation: Some wireless technologies dynamically adjust bandwidth allocation based on network conditions, which can lead to fluctuations in data throughput and, consequently, jitter.
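
To give a feel for why channel contention produces variable transmission times, here is a heavily simplified sketch of CSMA/CA-style random backoff. It ignores inter-frame spacing, frame airtime, and the freezing of the backoff counter while the medium is busy, and it models collisions with a fixed probability. The constants are values commonly quoted for OFDM-based Wi-Fi and should be treated as assumptions, as should the function name and the collision probability.

```python
import random

SLOT_US = 9      # assumed slot time in microseconds
CW_MIN = 15      # assumed initial contention window (in slots)
CW_MAX = 1023    # assumed maximum contention window (in slots)


def access_delay_us(rng, collision_prob):
    """Backoff time spent before one frame is sent successfully (simplified)."""
    cw = CW_MIN
    waited_slots = 0
    while True:
        waited_slots += rng.randint(0, cw)      # random backoff within the current window
        if rng.random() >= collision_prob:      # attempt succeeded
            return waited_slots * SLOT_US
        cw = min(2 * (cw + 1) - 1, CW_MAX)      # collision: double the window and retry


rng = random.Random(1)   # fixed seed so the run is repeatable
delays = [access_delay_us(rng, collision_prob=0.2) for _ in range(1000)]
print(min(delays), max(delays))
```

Even with no collisions at all, the random backoff alone spreads access delay across the initial window. Collisions widen the window and lengthen the tail further.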

Understanding these diverse sources is the critical first step toward implementing effective strategies for managing and mitigating network jitter.

Managing and Mitigating Network Jitter

Addressing network jitter requires a multi-faceted approach, focusing on optimizing network performance, implementing appropriate technologies, and configuring devices effectively. The goal is not necessarily to eliminate jitter entirely – which is often an unrealistic objective – but to reduce it to acceptable levels for the specific application.

Network Design and Quality of Service (QoS)

A well-designed network infrastructure is the foundation for minimizing jitter. Implementing Quality of Service (QoS) mechanisms is a primary strategy for prioritizing time-sensitive traffic.

- Traffic Prioritization: QoS allows network administrators to classify different types of network traffic and assign priorities. For instance, VoIP calls or video streams can be given higher priority than bulk data transfers, ensuring they receive preferential treatment in terms of bandwidth and queue management.

- Bandwidth Management: Ensuring adequate bandwidth is available for critical applications is crucial. Over-provisioning bandwidth in key areas, especially for time-sensitive services, can help prevent congestion-related jitter.

- Minimizing Hops and Latency: Designing networks with fewer network devices (hops) between the source and destination can reduce the number of points where jitter can be introduced. Choosing high-performance, low-latency network equipment is also important.

- Network Segmentation: Dividing a large network into smaller, more manageable segments (e.g., using VLANs) can help isolate traffic and prevent congestion in one segment from impacting another.
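
The traffic prioritization idea above can be sketched as a strict-priority scheduler, in which a lower class number is always served first and packets within a class keep their arrival order. The class name, traffic classes, and packet labels are hypothetical. Real devices often use weighted schemes instead, because strict priority can starve low-priority traffic.

```python
import heapq
from itertools import count


class PriorityScheduler:
    """Strict-priority queue: lowest class number first, FIFO within a class."""

    def __init__(self):
        self._heap = []
        self._order = count()   # tie-breaker that preserves arrival order

    def enqueue(self, traffic_class, packet):
        heapq.heappush(self._heap, (traffic_class, next(self._order), packet))

    def dequeue(self):
        _, _, packet = heapq.heappop(self._heap)
        return packet

    def __len__(self):
        return len(self._heap)


VOICE, BULK = 0, 1   # illustrative traffic classes

sched = PriorityScheduler()
sched.enqueue(BULK, "backup-1")
sched.enqueue(BULK, "backup-2")
sched.enqueue(VOICE, "rtp-1")
sched.enqueue(BULK, "backup-3")
sched.enqueue(VOICE, "rtp-2")

order = [sched.dequeue() for _ in range(len(sched))]
print(order)
```

The voice packets leave the queue ahead of the bulk packets that arrived before them, which keeps their queueing delay short and nearly constant even when bulk traffic is heavy.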

Buffering and Jitter Buffers

While network-level solutions are vital, applications themselves often employ buffering mechanisms to cope with jitter.

- Jitter Buffers (De-jitter Buffers): These are memory buffers used by applications, particularly those handling real-time audio and video. Incoming packets are stored in the jitter buffer and then played out at a constant rate. The size of the jitter buffer is a critical parameter:

  - Small Jitter Buffer: Adds little latency, but packets that arrive after their scheduled playout time are discarded, so jitter shows up as packet loss.

  - Large Jitter Buffer: Can absorb more jitter but adds more latency, which can be detrimental to interactive applications like video conferencing.

  - Adaptive Jitter Buffers: More sophisticated jitter buffers dynamically adjust their size based on observed network conditions, attempting to balance latency against resilience to jitter.
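
The size trade-off can be illustrated with a minimal fixed-delay jitter buffer. In this sketch, playout starts a fixed time after the first packet arrives and then advances by one packet interval per packet; anything arriving after its playout deadline counts as late. The function name, the 20 ms packet interval, and the arrival times are assumptions chosen for the example.

```python
def playout(packets, buffer_ms, interval_ms=20):
    """Classify packets as played or late for a fixed playout delay.

    packets: list of (sequence_number, arrival_time_ms), first packet first.
    buffer_ms: delay added before playout of the first packet begins.
    """
    first_seq, first_arrival = packets[0]
    played, late = [], []
    for seq, arrival in packets:
        # Scheduled playout time of this packet.
        deadline = first_arrival + buffer_ms + (seq - first_seq) * interval_ms
        if arrival <= deadline:
            played.append(seq)
        else:
            late.append(seq)   # arrived after its slot, so it would be discarded
    return played, late


# Packet 2 is delayed enough to arrive after packet 3.
arrivals = [(0, 50), (1, 72), (2, 115), (3, 112), (4, 131)]

small = playout(arrivals, buffer_ms=20)
large = playout(arrivals, buffer_ms=60)
print(small, large)
```

With a 20 ms buffer, packet 2 misses its deadline and is lost. With a 60 ms buffer every packet is played, at the cost of 40 ms of extra delay on the whole stream.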

Network Monitoring and Optimization

Continuous monitoring of network performance is essential for identifying jitter-related issues and their root causes.

- Performance Monitoring Tools: Network monitoring software can track key metrics like packet loss, latency, and jitter in real-time. This data helps administrators diagnose problems, identify bottlenecks, and measure the effectiveness of mitigation strategies.

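- Packet Analysis: Packet capture and analysis tools (for example, Wireshark or tcpdump) record the timing of individual packets, while deep packet inspection (DPI) can classify their content. Together they help pinpoint where delays and jitter are introduced.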

- Regular Network Audits: Periodic assessments of network configuration, device performance, and traffic patterns can help proactively identify potential jitter issues before they significantly impact users.
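
As a small example of the metrics a monitoring tool might report, the sketch below summarizes a series of probe results into packet loss, mean latency, and jitter. Jitter is taken here as the mean absolute difference between consecutive received samples, which is one of several definitions in use. The function name, the dictionary keys, and the sample values are hypothetical, and a lost probe is represented as `None`.

```python
from statistics import mean


def summarize(rtts_ms):
    """Summarize probe results; each entry is a round-trip time in ms or None if lost."""
    received = [r for r in rtts_ms if r is not None]
    loss_pct = 100.0 * (len(rtts_ms) - len(received)) / len(rtts_ms)
    # Differences are taken between neighbouring received samples,
    # so a lost probe simply shortens the series.
    diffs = [abs(b - a) for a, b in zip(received, received[1:])]
    return {
        "loss_pct": loss_pct,
        "mean_latency_ms": mean(received) if received else None,
        "jitter_ms": mean(diffs) if diffs else 0.0,
    }


samples = [20.0, 22.0, None, 30.0, 21.0]   # made-up probe results
report = summarize(samples)
print(report)
```

Tracking these three numbers over time, rather than as a single snapshot, makes it easier to tell whether a mitigation change actually helped.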

Hardware and Software Considerations

The choice of hardware and the configuration of software play a significant role in jitter management.

- High-Performance Network Devices: Investing in routers, switches, and network interface cards that offer sufficient processing power and low latency can significantly reduce device-induced jitter.

- Firmware and Driver Updates: Keeping network device firmware and operating system drivers up to date is crucial, as manufacturers often release patches that improve performance and stability, including addressing jitter-related issues.

- Optimized Network Stacks: In some specialized applications, highly optimized network stacks or custom protocol implementations might be necessary to achieve the lowest possible jitter.

By combining these strategies, network professionals can effectively manage and mitigate jitter, ensuring the reliable and high-quality performance of even the most demanding real-time applications. The continuous evolution of network technologies and the increasing demand for seamless real-time data flow mean that understanding and managing jitter will remain a critical skill.