The Virtual Core of Modern Computing

In the ever-evolving landscape of technology, the term “vCPU” has become increasingly ubiquitous, particularly within the realms of cloud computing, virtualization, and high-performance computing. While its name might suggest a direct physical counterpart, a vCPU is fundamentally a software construct, a virtual representation of a central processing unit (CPU) core. Understanding the vCPU is crucial for anyone navigating the complexities of modern IT infrastructure, from system administrators managing large server farms to individuals optimizing their cloud-based applications. This article delves into the nature of vCPUs, their role in virtualization, and their impact on performance and efficiency.

Defining the Virtual CPU

At its core, a vCPU is a single thread of execution that can be scheduled and managed by a hypervisor. A hypervisor, also known as a Virtual Machine Monitor (VMM), is a layer of software that creates and runs virtual machines (VMs). It sits between the physical hardware and the operating systems running on the VMs, allowing multiple operating systems to share the same physical hardware resources.

When a virtual machine is created, it is allocated a certain number of vCPUs. These vCPUs are not physical cores themselves but rather a share of the processing power of the underlying physical CPU cores. The hypervisor is responsible for arbitrating access to these physical cores, distributing the workload of the vCPUs among them. This dynamic allocation and scheduling are what enable the illusion of dedicated processing power for each VM.
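To make the idea of a “share” concrete, here is a minimal sketch under an idealized model: a perfectly fair scheduler with no overhead, where only the vCPUs that want to run at the same moment compete for cores. The function name `per_vcpu_core_share` is hypothetical. Real hypervisor schedulers also weigh priorities, reservations, and limits, which this ignores.

```python
def per_vcpu_core_share(physical_cores: int, runnable_vcpus: int) -> float:
    """Average fraction of one physical core each runnable vCPU can receive.

    Idealized, hypothetical model: a perfectly fair scheduler with no
    switching overhead, counting only vCPUs that are runnable right now.
    """
    if physical_cores <= 0:
        raise ValueError("physical_cores must be positive")
    if runnable_vcpus <= 0:
        return 0.0  # nothing is waiting to run
    # A single vCPU can use at most one core at a time, hence the cap at 1.0.
    return min(1.0, physical_cores / runnable_vcpus)


for demand in (4, 16, 24):
    print(demand, "runnable vCPUs on 8 cores:", per_vcpu_core_share(8, demand))
```

With 8 cores, 4 busy vCPUs can each have a full core, while 16 busy vCPUs get about half a core each; the “illusion of dedicated processing power” holds only while demand stays below the physical supply.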

Physical vs. Virtual: The Fundamental Difference

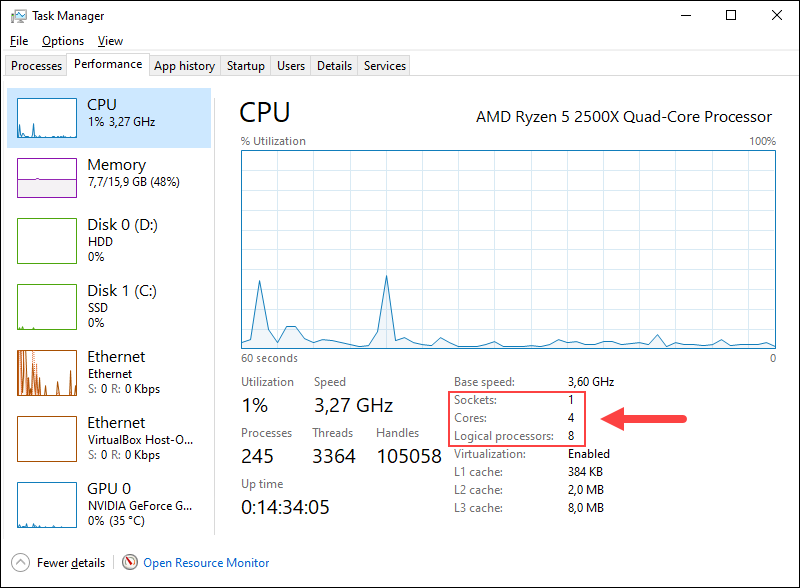

The distinction between a physical CPU core and a vCPU is paramount. A physical CPU core is a tangible piece of silicon within the processor that can execute instructions. Modern CPUs often contain multiple physical cores, each capable of independent processing. Many CPUs also support simultaneous multithreading (SMT), which Intel markets as Hyper-Threading and which many AMD processors implement as well. SMT allows a single physical core to present itself as multiple logical processors (typically two) to the operating system, further enhancing parallelism.

A vCPU, however, is a logical entity. It represents a slice of processing time on a physical CPU core. The hypervisor manages how and when each vCPU gets to execute instructions on a physical core. This means that multiple vCPUs from different VMs might share the same physical core, taking turns to execute their assigned tasks. The speed and responsiveness of a vCPU are directly tied to the performance of the underlying physical CPU and the efficiency of the hypervisor’s scheduling algorithms.
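Because vCPUs are scheduled onto logical processors, it helps to know how many a host exposes. The sketch below does that arithmetic, assuming every socket in the host is identical; the function name and the host dimensions are hypothetical. On platforms that back each vCPU with one hardware thread (AWS documents this for many of its x86 instance types), the result is also the number of vCPUs the host can offer without overcommitment.

```python
def logical_processors(sockets: int, cores_per_socket: int, threads_per_core: int) -> int:
    """Logical processors (hardware threads) exposed by a host with identical sockets."""
    return sockets * cores_per_socket * threads_per_core


# Hypothetical host: 2 sockets x 16 cores each, SMT with 2 threads per core.
physical_cores = 2 * 16
threads = logical_processors(sockets=2, cores_per_socket=16, threads_per_core=2)
print(f"{physical_cores} physical cores, {threads} logical processors")
```

For this host the script prints `32 physical cores, 64 logical processors`. Note that two hardware threads on one core share its execution units, so 64 logical processors do not deliver twice the throughput of 32 cores.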

The Role of the Hypervisor

The hypervisor is the orchestrator of the virtualized environment, and its role in managing vCPUs is critical. It is responsible for:

- Scheduling: The hypervisor determines which vCPU gets to run on which physical CPU core at any given moment. This scheduling is a complex process, aiming to provide fair access and optimize performance for all running VMs.

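- Resource Allocation: When a VM is created, the hypervisor allocates a specific number of vCPUs to it. This allocation can often be adjusted later, allowing administrators to scale resources up or down as needed; depending on the hypervisor and guest operating system, the change may take effect while the VM is running (CPU hot-add) or only after it is restarted.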

- Context Switching: When a vCPU’s time slice on a physical core ends, or when it needs to wait for an I/O operation, the hypervisor performs a context switch. This involves saving the current state of the vCPU and loading the state of another vCPU that is ready to run. Efficient context switching is vital for minimizing performance overhead.

- Isolation: The hypervisor ensures that VMs are isolated from each other, preventing one VM’s processes or errors from affecting others. This isolation extends to vCPU access: a vCPU in one VM cannot read or modify the state of a vCPU in another. Isolation of state is not isolation of performance, however; vCPUs that share physical cores still compete for processing time.
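The scheduling and context-switching duties above can be illustrated with a toy round-robin scheduler. This is a hypothetical model, not how any particular hypervisor works: work is measured in abstract units, and a single integer stands in for the register state a real hypervisor would save and restore. Production schedulers (for example, KVM relying on the Linux scheduler) use far more elaborate policies.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class VCPU:
    name: str
    remaining_work: int  # abstract units of work this vCPU still has to run
    saved_progress: int = 0  # stand-in for the register state saved on a switch


def round_robin(vcpus, physical_cores, time_slice):
    """Toy round-robin scheduler (hypothetical model).

    Each tick, up to `physical_cores` vCPUs are taken from the front of the
    run queue and run for at most `time_slice` units. A vCPU with work left
    is preempted: its state is saved and it rejoins the back of the queue,
    which is where a real hypervisor would perform a context switch.
    """
    if physical_cores < 1 or time_slice < 1:
        raise ValueError("physical_cores and time_slice must be at least 1")
    run_queue = deque(vcpus)
    timeline = []  # (tick, names of vCPUs that ran during that tick)
    preemptions = 0
    tick = 0
    while run_queue:
        running = [run_queue.popleft() for _ in range(min(physical_cores, len(run_queue)))]
        timeline.append((tick, [v.name for v in running]))
        for v in running:
            done = min(time_slice, v.remaining_work)
            v.remaining_work -= done
            v.saved_progress += done  # "save" the updated state
            if v.remaining_work > 0:
                run_queue.append(v)  # preempted: back of the queue
                preemptions += 1
        tick += 1
    return timeline, preemptions


vcpus = [VCPU("A", 2), VCPU("B", 1), VCPU("C", 2)]
timeline, preemptions = round_robin(vcpus, physical_cores=2, time_slice=1)
for tick, ran in timeline:
    print(tick, ran)
print("preemptions:", preemptions)
```

With three vCPUs on two cores, vCPU C waits a full tick before its first turn, and both A and C are preempted once before finishing. Shrinking `time_slice` improves responsiveness but increases the number of switches, which is the overhead trade-off described above.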

Virtualization and the Rise of vCPUs

The advent of virtualization revolutionized computing by enabling the consolidation of multiple workloads onto fewer physical servers. This technology allows for greater resource utilization, increased flexibility, and reduced operational costs. vCPUs are the fundamental building blocks of this virtualized paradigm.

How vCPUs Enable Virtual Machines

Virtual machines are complete virtualized computer systems, each with its own operating system, applications, and configuration. Although the hardware they see is virtual, with hardware-assisted virtualization most guest instructions run directly on the physical CPU rather than being emulated. To run, VMs need access to processing power, memory, storage, and network resources; vCPUs provide the virtual processing power for each VM.

When you provision a VM, you typically specify the number of vCPUs it requires. This tells the hypervisor how many processing threads the VM should have available. For instance, a VM might be configured with 2 vCPUs, meaning its operating system will see and be able to utilize two logical processors. The hypervisor then ensures that these 2 vCPUs receive their allocated share of processing time from the available physical CPU cores.
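From inside the guest, the allocated vCPUs simply appear as logical processors. The sketch below asks the operating system how many the current process may use, a reasonable default for sizing a pool of worker processes for CPU-bound work. `usable_cpus` is a hypothetical helper name; `os.sched_getaffinity` and `os.cpu_count` are standard-library calls.

```python
import os


def usable_cpus() -> int:
    """Logical processors the current process may run on.

    Inside a VM these are the guest's vCPUs, minus any excluded by an
    affinity mask or cpuset.
    """
    if hasattr(os, "sched_getaffinity"):
        # Linux and some other Unix systems: honour the CPU affinity mask.
        return len(os.sched_getaffinity(0))
    # Elsewhere, fall back to the total logical processor count.
    return os.cpu_count() or 1


print(f"This system exposes {usable_cpus()} usable logical processor(s)")
```

In the 2-vCPU example above, this would report 2. On Linux, the `nproc` command reports the same affinity-aware count from the shell. Neither accounts for CPU time quotas, which can cap usage below the number of visible processors.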

Resource Contention and Overcommitment

One of the key challenges in virtualization is managing resource contention, particularly with vCPUs. When the total number of vCPUs allocated across all VMs exceeds the number of available physical CPU cores, a phenomenon known as “overcommitment” occurs. While some level of overcommitment can be beneficial, allowing for higher server utilization, excessive overcommitment can lead to performance degradation.

If too many vCPUs are vying for the attention of a limited number of physical cores, runnable vCPUs must wait in the scheduler’s queue before they get a turn (VMware reports this as CPU ready time; Linux guests see it as steal time), and the scheduler has to switch between them more frequently. This waiting and the extra context switching slow down the execution of tasks for all VMs. The art of virtualization management involves finding the right balance to maximize utilization without compromising performance.
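Overcommitment is usually expressed as a ratio of allocated vCPUs to physical CPUs. The sketch below computes it for a hypothetical host; the VM sizes are illustrative, and whether to count physical cores or hardware threads in the denominator is a policy choice that varies between operators. No particular “safe” ratio is implied, since the right level depends on how busy the VMs actually are.

```python
def overcommit_ratio(vcpus_per_vm, host_cpus):
    """Allocated vCPUs divided by host CPUs; above 1.0 means overcommitted.

    `host_cpus` may count physical cores or hardware threads; the text
    above compares against physical cores.
    """
    if host_cpus <= 0:
        raise ValueError("host_cpus must be positive")
    return sum(vcpus_per_vm) / host_cpus


# Hypothetical host with 32 physical cores running five VMs.
vm_allocations = [4, 4, 8, 16, 16]
ratio = overcommit_ratio(vm_allocations, host_cpus=32)
print(f"{sum(vm_allocations)} vCPUs on 32 cores -> {ratio:.2f}:1")
```

Here 48 vCPUs on 32 cores gives 1.50:1. If every vCPU were busy at once, each could receive on average only about two-thirds of a core; in practice many vCPUs sit idle, which is why moderate overcommitment often works.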

Performance Considerations for vCPUs

The performance experienced by a VM is influenced by several factors related to its vCPUs:

- Number of vCPUs: More vCPUs generally allow a VM to perform more tasks in parallel, improving performance for multi-threaded applications. However, allocating too many vCPUs can sometimes lead to diminishing returns due to scheduling overhead.

- Physical CPU Speed and Architecture: The underlying physical CPU cores are the ultimate source of processing power. Faster cores with more advanced architectures will generally provide better performance for vCPUs.

- Hypervisor Efficiency: The quality and efficiency of the hypervisor’s scheduling algorithms play a significant role. A well-optimized hypervisor can minimize overhead and maximize the performance delivered to vCPUs.

- Workload Characteristics: The type of applications running on the VM also impacts vCPU performance. CPU-bound applications that perform intensive calculations will be more sensitive to vCPU allocation than I/O-bound applications.

- NUMA (Non-Uniform Memory Access): In multi-socket servers, NUMA architectures can introduce latency if a vCPU needs to access memory that is not directly attached to its physical CPU socket. Hypervisors often employ NUMA-aware scheduling to mitigate this.
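The diminishing returns from adding vCPUs can be estimated with Amdahl’s law, which bounds the speedup of a workload whose parallelizable fraction is `p`. This is a swapped-in, idealized model: it assumes perfect parallel execution and ignores the scheduling overhead, contention, and NUMA effects listed above, so real results will be lower. The 90% parallel fraction is an arbitrary illustration.

```python
def amdahl_speedup(parallel_fraction: float, n_vcpus: int) -> float:
    """Ideal speedup over 1 vCPU per Amdahl's law.

    Ignores scheduling overhead, contention, and NUMA effects.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be in [0, 1]")
    if n_vcpus < 1:
        raise ValueError("n_vcpus must be at least 1")
    serial = 1.0 - parallel_fraction
    return 1.0 / (serial + parallel_fraction / n_vcpus)


for n in (1, 2, 4, 8, 16):
    print(f"{n:>2} vCPUs: {amdahl_speedup(0.9, n):.2f}x")
```

For a workload that is 90% parallel, the ideal speedup climbs from about 1.8x at 2 vCPUs to 6.4x at 16 and can never exceed 10x, so doubling from 8 to 16 vCPUs buys much less than doubling from 1 to 2.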

The Benefits of vCPU-Based Virtualization

The adoption of vCPUs and virtualization has brought about numerous advantages for businesses and IT departments:

- Server Consolidation: Multiple physical servers can be replaced by a single, more powerful server running multiple VMs, leading to significant cost savings in hardware, power, cooling, and data center space.

- Resource Optimization: Resources can be allocated dynamically to VMs based on their current needs, improving overall resource utilization and reducing waste.

- Flexibility and Agility: New servers can be provisioned or reconfigured rapidly by creating or modifying VMs, enabling faster deployment of applications and services.

- Improved Disaster Recovery: VMs can be easily backed up, replicated, and migrated to different hardware, enhancing disaster recovery capabilities and business continuity.

- Testing and Development Environments: VMs provide isolated and easily reproducible environments for software development, testing, and quality assurance.

- Cost Efficiency: By reducing hardware footprint and improving resource utilization, virtualization leads to substantial cost savings in IT operations.

vCPUs in Cloud Computing

Cloud computing platforms, such as Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP), are built upon massive infrastructures of physical servers. Virtualization is the foundational technology that allows these platforms to offer resources to millions of users simultaneously. vCPUs are the primary unit of compute offered by cloud providers.

Instance Types and vCPU Allocation

When you launch a virtual server instance in the cloud, you select an “instance type” that defines the hardware resources allocated to your VM. These instance types are characterized by their vCPU count, memory, storage, and network performance. Cloud providers offer a wide array of instance types, catering to various workloads, from general-purpose computing to memory-intensive, compute-optimized, or GPU-accelerated instances.

For example, a basic general-purpose instance might have 2 vCPUs and 4 GB of RAM, while a high-performance compute instance could offer 16 vCPUs and 64 GB of RAM. The pricing of these instances is directly correlated with the number of vCPUs and other resources they provide.
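Choosing an instance type often starts with a simple filter: the smallest type that meets the workload’s vCPU and memory needs. The catalog below is entirely hypothetical (names and sizes are illustrative, loosely echoing the example above, not any provider’s real offerings), and ordering by vCPU count and then memory is only a rough proxy for price.

```python
from typing import NamedTuple, Optional


class InstanceType(NamedTuple):
    name: str
    vcpus: int
    memory_gib: int


# Hypothetical catalog; real providers publish their own instance families.
CATALOG = [
    InstanceType("general-small", 2, 4),
    InstanceType("general-medium", 4, 8),
    InstanceType("general-large", 8, 16),
    InstanceType("compute-xlarge", 16, 64),
]


def smallest_fit(catalog, min_vcpus, min_memory_gib) -> Optional[InstanceType]:
    """Smallest instance type meeting both requirements, or None if none does."""
    candidates = [
        t for t in catalog if t.vcpus >= min_vcpus and t.memory_gib >= min_memory_gib
    ]
    # Fewest vCPUs first, then least memory, as a rough stand-in for lowest cost.
    return min(candidates, key=lambda t: (t.vcpus, t.memory_gib), default=None)


print(smallest_fit(CATALOG, min_vcpus=3, min_memory_gib=6))
```

A workload needing 3 vCPUs and 6 GiB lands on the 4-vCPU type, illustrating how requirements that fall between sizes push you to pay for the next size up.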

Shared vs. Dedicated vCPUs

Cloud environments typically offer two primary models for vCPU allocation:

- Shared vCPUs: In this model, the physical CPU cores are shared among multiple VMs. Depending on the provider and instance type, your VM may be entitled to a baseline share of CPU time (burstable instances, for example, can exceed that baseline temporarily), but its performance can be affected by the activity of other VMs on the same physical host. This is often the most cost-effective option for workloads with variable or non-critical performance requirements.

- Dedicated vCPUs: With dedicated vCPUs, the physical CPU cores are exclusively allocated to your VM. This ensures consistent and predictable performance, making it suitable for demanding applications, databases, and critical workloads. Dedicated instances are generally more expensive than shared ones.
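On a Linux guest, interference from other VMs on shared vCPUs shows up as steal time: time a vCPU was ready to run but the hypervisor was running something else (`top` shows it in the `st` column). The sketch below derives a steal percentage from two snapshots of `/proc/stat`; the helper names are hypothetical, and it assumes the standard column order of the aggregate `cpu` line.

```python
import os
import time


def parse_cpu_line(stat_text):
    """Aggregate 'cpu' counters (in clock ticks) from /proc/stat content."""
    for line in stat_text.splitlines():
        fields = line.split()
        if fields and fields[0] == "cpu":
            return [int(x) for x in fields[1:]]
    raise ValueError("no aggregate 'cpu' line found")


def steal_percent(before, after):
    """Percentage of CPU time stolen by the hypervisor between two snapshots."""
    b, a = parse_cpu_line(before), parse_cpu_line(after)
    if len(b) < 8 or len(a) < 8:
        raise ValueError("kernel does not report a steal column")
    # Columns: user nice system idle iowait irq softirq steal [guest guest_nice].
    # guest and guest_nice are already included in user and nice, so only the
    # first eight columns are summed to avoid double counting.
    deltas = [a[i] - b[i] for i in range(8)]
    total = sum(deltas)
    return 100.0 * deltas[7] / total if total > 0 else 0.0


if os.path.exists("/proc/stat"):  # Linux only
    with open("/proc/stat") as f:
        first = f.read()
    time.sleep(1)
    with open("/proc/stat") as f:
        second = f.read()
    print(f"steal over the last second: {steal_percent(first, second):.1f}%")
```

Consistently high steal on a shared-vCPU instance is a sign that moving to a larger or dedicated instance may pay off; on dedicated vCPUs it should stay near zero.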

The Impact of vCPUs on Cloud Performance and Cost

The number of vCPUs allocated to your cloud instance has a direct impact on both its performance and its cost:

- Performance: For applications that can split their work across threads or processes, more vCPUs allow them to handle more requests, perform complex calculations faster, and complete tasks more efficiently; a single-threaded application gains little from extra vCPUs. Parallelism is particularly important for web servers, application servers, and data processing jobs.

- Cost: Cloud computing is typically billed based on resource consumption. Instances with more vCPUs are more expensive, reflecting the greater share of the underlying physical hardware resources they utilize. Therefore, choosing the appropriate number of vCPUs is a crucial aspect of cost optimization in the cloud.

Optimizing vCPU Usage in the Cloud

To maximize performance and minimize costs, it’s essential to optimize vCPU usage in cloud environments:

- Right-Sizing Instances: Regularly monitor the CPU utilization of your instances. If an instance is consistently underutilized, consider downsizing it to a smaller instance type with fewer vCPUs to reduce costs. Conversely, if an instance is frequently hitting its CPU limits, consider upgrading to a larger instance type.

- Leveraging Auto-Scaling: For applications with fluctuating demand, configure auto-scaling groups. These groups automatically adjust the number of instances (and thus the total number of vCPUs) based on predefined metrics like CPU utilization, ensuring that you have sufficient capacity during peak times and scale down to save costs during off-peak times.

- Choosing the Right Instance Family: Cloud providers offer specialized instance families optimized for different workloads. Selecting the appropriate family (e.g., compute-optimized for CPU-intensive tasks) can provide better performance for a given number of vCPUs.

- Application Profiling: Understand the CPU requirements of your applications. Some applications are inherently more CPU-bound than others. Profiling your applications can help identify bottlenecks and inform your instance selection.
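Right-sizing and auto-scaling both boil down to comparing observed utilization with a target. The sketch below shows one simple version of each, under stated assumptions: right-sizing scales the vCPU count by the ratio of 95th-percentile utilization to a target, and the scaling rule is the proportional formula that Kubernetes’ Horizontal Pod Autoscaler documents (desired = ceil(current × currentMetric / targetMetric)). Cloud auto-scaling groups use their own algorithms, and all function names, targets, and sample values here are hypothetical.

```python
import math


def nearest_rank_p95(samples):
    """95th-percentile value using the nearest-rank method."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return ordered[rank - 1]


def recommend_vcpus(current_vcpus, cpu_util_samples, target_util):
    """vCPU count that would bring p95 utilization (percent) near `target_util`."""
    p95 = nearest_rank_p95(cpu_util_samples)
    return max(1, math.ceil(current_vcpus * p95 / target_util))


def desired_instances(current, current_metric, target_metric, min_size, max_size):
    """Proportional scaling rule, clamped to the group's size limits."""
    desired = math.ceil(current * current_metric / target_metric)
    return max(min_size, min(max_size, desired))


# Hypothetical 8-vCPU instance that rarely exceeds 30% CPU.
samples = [20] * 18 + [30, 35]
print(recommend_vcpus(8, samples, target_util=60))
# Hypothetical group of 4 instances averaging 90% CPU against a 60% target.
print(desired_instances(4, 90, 60, min_size=1, max_size=10))
```

The first call suggests 4 vCPUs for the underused instance, and the second scales the busy group from 4 to 6 instances. In practice the recommended vCPU count would then be rounded up to the nearest instance type the provider actually offers.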

The Future of vCPUs and Virtualization

The concept of vCPUs and virtualization continues to evolve, driven by advancements in hardware and software. As processors become more powerful and hypervisor technologies become more sophisticated, the efficiency and capabilities of vCPUs will only improve.

Emerging Trends and Innovations

Several trends are shaping the future of vCPUs and virtualization:

- Hardware-Assisted Virtualization: Modern CPUs incorporate hardware features that accelerate virtualization, such as Intel VT-x and AMD-V for running guest code and Intel EPT and AMD RVI for translating guest memory addresses. These features reduce the overhead imposed by the hypervisor and improve the performance of vCPUs.

- Containerization: While virtualization isolates entire operating systems, containerization (e.g., Docker, often orchestrated with Kubernetes) isolates applications and their dependencies at the operating system level. Containers share the host OS kernel, making them more lightweight and efficient than traditional VMs. However, containers frequently run on cloud VMs, in which case their processing power still comes from the host VM’s vCPUs, divided among containers through CPU limits enforced by the host kernel.

- Serverless Computing: Serverless platforms abstract away the underlying infrastructure, allowing developers to run code without managing servers or VMs. While developers don’t directly manage vCPUs in a serverless model, the cloud provider’s infrastructure still relies on virtualized environments powered by vCPUs to execute this code.

- Edge Computing: As computing moves closer to data sources at the “edge” of the network, virtualization will play a crucial role in managing distributed resources and workloads on smaller, often resource-constrained, devices.
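Two of these trends leave visible traces on a Linux host. The sketch below, with hypothetical helper names, checks whether an x86 `/proc/cpuinfo` advertises hardware virtualization (`vmx` for Intel VT-x, `svm` for AMD-V; inside a VM these flags are often hidden unless nested virtualization is enabled), and converts a container CPU limit in millicores into a CFS quota. In cgroup v2 that quota and its period appear together in the `cpu.max` file; Kubernetes translates a container’s CPU limit into such a quota, using a 100 ms period by default.

```python
from typing import Optional


def hw_virt_extension(cpuinfo_text: str) -> Optional[str]:
    """Return 'vmx' (Intel VT-x) or 'svm' (AMD-V) if an x86 /proc/cpuinfo lists it."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            flags = line.split(":", 1)[1].split()
            for flag in ("vmx", "svm"):
                if flag in flags:
                    return flag
            return None  # only the first processor's flags are checked
    return None


def cfs_quota_us(millicores: int, period_us: int = 100_000) -> int:
    """CPU time (microseconds) a container may use per CFS period.

    1000 millicores equals one full CPU; with the default 100 ms period,
    a 500m limit allows 50 ms of CPU time every 100 ms.
    """
    return millicores * period_us // 1000


sample = "processor\t: 0\nflags\t\t: fpu vme de pse sse2 vmx ept\n"
print(hw_virt_extension(sample))
print(cfs_quota_us(500), cfs_quota_us(1500))
```

A 1500m limit yields a 150000 µs quota per 100000 µs period, meaning the container may consume the equivalent of one and a half vCPUs, spread across however many of the host’s vCPUs its threads happen to run on.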

The vCPU, though a software abstraction, is a cornerstone of modern computing. Its ability to efficiently share and manage physical processing power has unlocked the potential of virtualization and cloud computing, enabling unprecedented scalability, flexibility, and cost-effectiveness. As technology continues to advance, the role of the vCPU will remain central to delivering the compute resources that power our digital world.