The Crucial Role of C: Drive Management in Tech & Innovation

In technology and innovation work, where breakthroughs often depend on high-performance computing, efficient data management is a necessity rather than a convenience. The C: drive holds the operating system, critical applications, development environments, and often the large datasets generated by mapping, remote sensing, AI model training, and simulation projects, so it can quickly become a bottleneck if it is not actively managed. Knowing exactly what is taking up space on your C: drive is therefore essential for keeping the system responsive, keeping resources available for demanding computational tasks, and avoiding costly delays in research and development cycles.

For engineers, data scientists, software developers, and researchers, a cluttered C: drive can lead to slower application load times, sluggish build processes, insufficient space for compiling code, and even failures in data acquisition or processing pipelines. Imagine an AI model training session grinding to a halt because there’s no space for temporary files, or a complex geospatial mapping project being delayed because new sensor data cannot be processed. These scenarios highlight why proactive and informed disk space analysis is integral to the tech workflow. This article will delve into effective strategies and tools, both built-in and third-party, to accurately identify disk space hogs and implement robust management practices, thereby safeguarding productivity and fostering an environment conducive to continuous innovation.

Leveraging Built-in Windows Tools for Disk Analysis

Windows offers several native utilities that, while sometimes overlooked, provide valuable insights into C: drive usage and offer straightforward solutions for reclaiming space. These tools are often the first line of defense in maintaining a lean and efficient primary storage.

Disk Cleanup Utility

The Disk Cleanup utility is a classic Windows feature designed to identify and remove various types of unnecessary files that accumulate over time. These include temporary internet files, system error memory dump files, Recycle Bin contents, temporary application files, and downloaded program files. For tech professionals, this tool can also target system log files, Windows update cleanup files (which can often consume significant gigabytes after major updates), and old Windows installation files.

To access it, search for “Disk Cleanup” in the Start menu. Upon launching, select your C: drive. For a more comprehensive scan, click “Clean up system files.” This elevated scan reveals additional categories such as previous Windows installations, Windows Update cleanup files, and Windows Defender antivirus files, all of which can be substantial. System restore points and shadow copies are handled separately, on the “More Options” tab that appears after the elevated scan. Regularly running Disk Cleanup, particularly after large system updates or software installations, can free up considerable space without touching project data or development environments. While basic, its effectiveness in clearing system-level clutter makes it a sensible first step in any disk space audit.

Storage Sense and Storage Settings

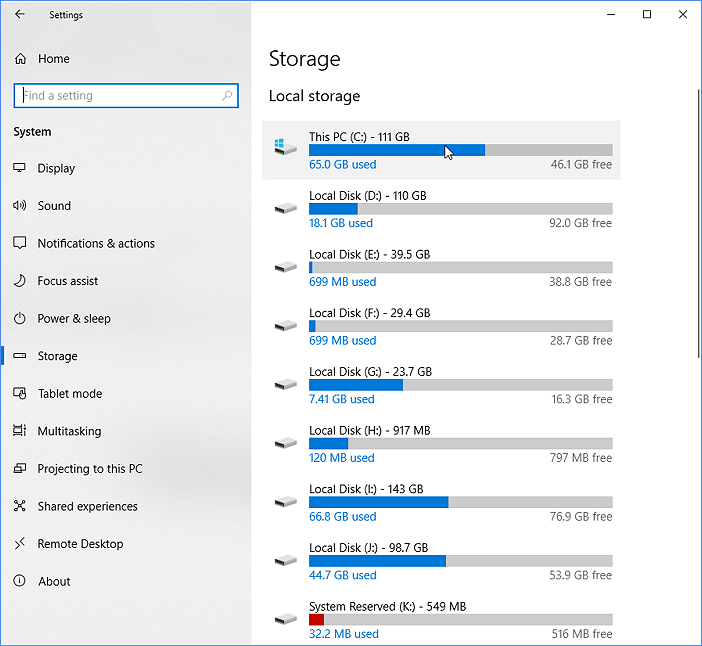

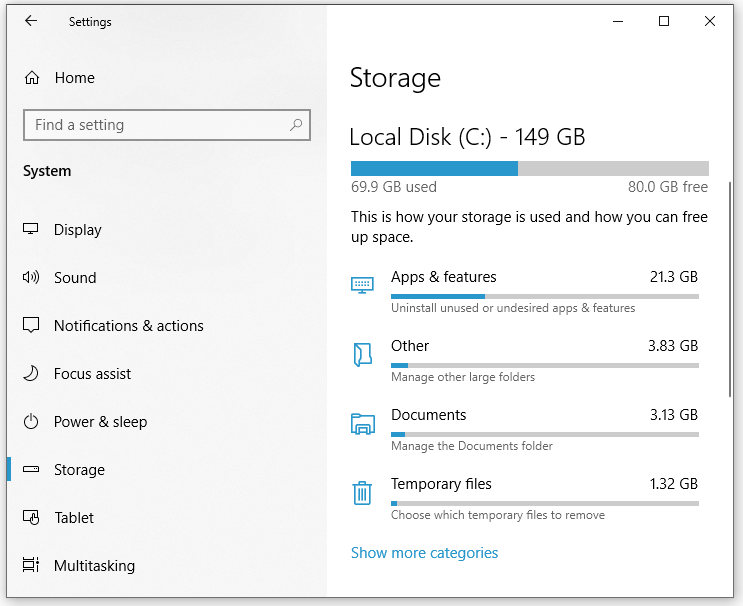

More modern versions of Windows introduce Storage Sense, an automated disk space management feature integrated into the “Storage” section of the Windows Settings app. Storage Sense can automatically free up space by deleting temporary files, emptying the Recycle Bin, and removing files from your Downloads folder that haven’t been opened for a specified period.

To configure Storage Sense, navigate to Settings > System > Storage. Here, you can toggle Storage Sense “On” and then click “Configure Storage Sense or run it now.” This allows for granular control, letting you specify when to run Storage Sense, how often to delete temporary files, and whether to clean up cloud-backed content (for example, turning locally cached OneDrive files back into online-only files once they have gone unopened for a set period).

Crucially, the “Storage” settings also provide a visual breakdown of your C: drive usage. It categorizes files by type (Apps & features, Temporary files, Documents, Others, etc.), showing at a glance where most of your space is allocated. Clicking on each category offers more detail and options for management, such as uninstalling large applications or viewing specific temporary files. For instance, the “Apps & features” section allows you to sort installed software by size, quickly identifying large development tools, simulation software, or datasets associated with specific applications that might be consuming significant resources. This overview is particularly useful for tech professionals trying to understand the footprint of their development environment and associated tools.
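The category view in Storage settings can be roughly approximated in a script when you want the same kind of breakdown for a single folder. The sketch below groups file sizes by extension; the function name `usage_by_extension` and the `"(none)"` label are illustrative choices, not anything Windows provides, and the grouping is by extension rather than by the categories Windows uses.

```python
import os
from collections import Counter
from pathlib import Path


def usage_by_extension(root):
    """Return a Counter mapping lowercase file extension to total bytes under root."""
    totals = Counter()
    # onerror swallows folders we are not allowed to list instead of aborting.
    for dirpath, _dirnames, filenames in os.walk(root, onerror=lambda err: None):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                size = os.lstat(path).st_size  # lstat: do not follow symlinks
            except OSError:
                continue  # file vanished or access was denied; skip it
            ext = Path(name).suffix.lower() or "(none)"
            totals[ext] += size
    return totals
```

Calling `usage_by_extension(Path.home()).most_common(10)` lists the ten extensions that occupy the most space under your user profile. Scanning all of C:\ this way works too, but it can take a long time and will skip protected system folders.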

Advanced Third-Party Solutions for Deep Dive Analysis

While Windows’ built-in tools are good for general maintenance, professionals often require a more granular, visual, and powerful analysis to pinpoint specific directories, project files, or large datasets that are consuming significant space. This is where advanced third-party utilities shine.

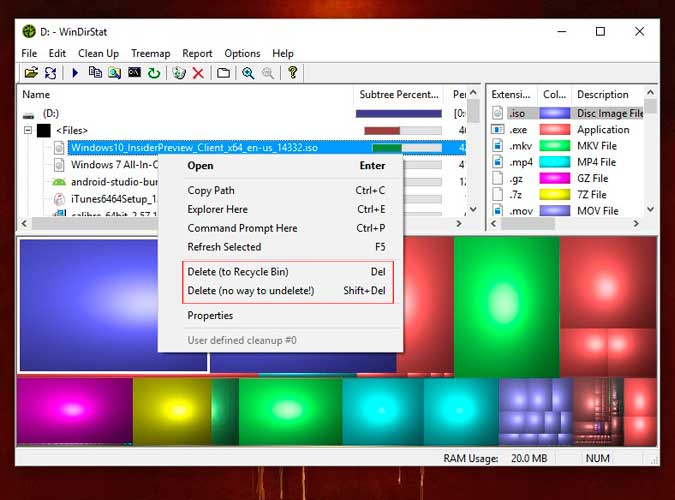

Visualizing Disk Usage with TreeSize Free/WinDirStat

For a truly insightful and often visually striking overview of disk space distribution, tools like TreeSize Free or WinDirStat are invaluable. These utilities go beyond simple categorization, presenting a hierarchical view of your file system, making it incredibly easy to spot large folders and files at a glance.

TreeSize Free is renowned for its speed and interactive interface. It scans your C: drive (or any selected drive/folder) and displays results in a tree-like structure, similar to File Explorer, but augmented with size information for every directory and subdirectory. The most compelling feature is its Treemap chart, which visually represents folders and files as nested, color-coded rectangles. Larger rectangles signify larger folders or files, allowing you to instantly identify where significant chunks of space are being used. This visual representation is exceptionally effective for navigating complex project directories, identifying caches from development tools (e.g., npm, Maven, Docker images), or locating unexpectedly large log files or output data from simulations. TreeSize Free also provides options to delete, move, or open files directly from its interface, streamlining the cleanup process.

WinDirStat offers similar functionality with its own distinctive visualization. It combines a tree view, a list of file extensions and their sizes, and a “treemap” graphic. The treemap in WinDirStat uses colors to represent different file types, providing an additional layer of information. While it may be slower than TreeSize Free on very large drives, its detailed statistics and clear visual hierarchy make it a powerful alternative for those who prefer its specific graphical output. Both tools are well suited to tech professionals who need to quickly ascertain the storage footprint of specific projects, identify rogue temporary files, or audit the size of raw sensor data or processed outputs.
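The core idea behind both tools, summing file sizes per folder and ranking the results, can be sketched in a few lines when a scriptable report is more convenient than a GUI. This is a minimal illustration, not how TreeSize Free or WinDirStat are implemented; the function names `dir_size` and `largest_children` are hypothetical.

```python
import os


def dir_size(path):
    """Total size in bytes of all files under path (symlinks are not followed)."""
    total = 0
    for dirpath, _dirnames, filenames in os.walk(path, onerror=lambda err: None):
        for name in filenames:
            try:
                total += os.lstat(os.path.join(dirpath, name)).st_size
            except OSError:
                pass  # unreadable or vanished file; ignore it
    return total


def largest_children(root, top=10):
    """Return [(size_bytes, name)] for the immediate subfolders of root, largest first."""
    sizes = []
    try:
        with os.scandir(root) as entries:
            for entry in entries:
                if entry.is_dir(follow_symlinks=False):
                    sizes.append((dir_size(entry.path), entry.name))
    except OSError:
        return sizes  # root itself could not be listed
    sizes.sort(reverse=True)
    return sizes[:top]
```

Running `largest_children("C:\\Users")` and then repeating the call on the largest result mimics drilling down through the tree view by hand. The reported sizes are logical file sizes, so they can differ from the on-disk figures the dedicated tools show for compressed or sparse files.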

Specialized Tools for Large Dataset Management

Beyond general disk analysis, certain tech domains deal with exceptionally large and specialized datasets that may require a more tailored approach. For example, in geospatial analysis, remote sensing, and scientific computing, datasets can span terabytes. While TreeSize and WinDirStat can identify these large files, managing them often involves specialized strategies.

Tools for Data Lifecycle Management (DLM) or Storage Resource Management (SRM) can offer features like data tiering, automated archiving to network-attached storage (NAS) or cloud platforms, and data deduplication. While these are often enterprise-level solutions, understanding their principles is valuable. For individual professionals, this translates to utilizing network shares, external hard drives, or cloud storage (e.g., Azure Blob Storage, AWS S3, Google Cloud Storage) to offload large, infrequently accessed datasets from the C: drive. Furthermore, in areas like AI/ML, managing model checkpoints, training data, and intermediate results can be a challenge. Version control systems designed for data, like DVC (Data Version Control), help manage large files and directories by linking them to Git repositories without storing the actual data in Git, effectively externalizing the large data footprint from the primary development drive. Understanding and adopting such practices is crucial for professionals working with massive data volumes generated by modern tech innovation.

Strategies for Proactive Space Optimization in Tech Workflows

Effective C: drive management isn’t just about reactive cleanup; it involves proactive strategies integrated into daily tech workflows. By adopting disciplined practices, professionals can minimize space consumption and maintain a consistently optimized environment.

Managing Development Environments and Project Files

Development environments are notorious for consuming significant disk space. Integrated Development Environments (IDEs) like Visual Studio, IntelliJ IDEA, or Eclipse, along with their associated SDKs, compilers, libraries, and dependencies (e.g., Node.js modules, Python virtual environments, Maven repositories, NuGet packages), can quickly balloon in size.

Key strategies include:

- Centralized Dependency Caches: Configure development tools to use a single, shared cache for dependencies rather than multiple copies across different projects. Regularly clean these caches using built-in commands (e.g., `npm cache clean --force`, `pip cache purge`, `mvn dependency:purge-local-repository`).

- Virtual Environments: Utilize virtual environments (e.g., `venv` for Python) to isolate project dependencies, and a version manager such as `nvm` to keep Node.js runtimes separate. While this might seem to use more space per project, it prevents global pollution and allows for easy deletion of entire environments when projects are completed or no longer needed.

- Docker Image Management: Docker images, containers, and volumes can be huge. Regularly prune unused ones using commands like `docker system prune -a`. This removes all stopped containers, unused networks, every image not used by a container (not only dangling ones), and build cache, often reclaiming gigabytes. Volumes are left in place unless `--volumes` is added.

- Project Archiving: Once a project is completed or put on hold, consider archiving its non-essential files to external storage. Keep only the necessary source code on the C: drive, leveraging version control systems like Git to manage project history efficiently.
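Before cleaning anything, it helps to know which projects carry the heaviest dependency folders. The sketch below walks a projects directory and reports the size of folders whose names match common dependency or build-output directories. The name list in `CACHE_DIR_NAMES` is an assumption for illustration and should be adjusted to your own toolchain; the script only reports and never deletes.

```python
import os

# Folder names commonly created by package managers and build tools.
# Illustrative assumption: extend or trim this set for your own stack.
CACHE_DIR_NAMES = {"node_modules", ".venv", "venv", "__pycache__", ".gradle"}


def tree_size(path):
    """Total size in bytes of all files under path."""
    total = 0
    for dirpath, _dirnames, filenames in os.walk(path, onerror=lambda err: None):
        for name in filenames:
            try:
                total += os.lstat(os.path.join(dirpath, name)).st_size
            except OSError:
                pass
    return total


def find_cache_dirs(root, names=CACHE_DIR_NAMES):
    """Yield (path, size_bytes) for each matching folder under root."""
    for dirpath, dirnames, _filenames in os.walk(root, onerror=lambda err: None):
        matches = [d for d in dirnames if d in names]
        # Prune matches in place so os.walk does not descend into them;
        # nested matches are counted as part of their parent's size.
        dirnames[:] = [d for d in dirnames if d not in names]
        for d in matches:
            full = os.path.join(dirpath, d)
            yield full, tree_size(full)
```

For example, `sorted(find_cache_dirs(r"C:\dev"), key=lambda item: item[1], reverse=True)` lists the heaviest folders first, where `C:\dev` stands in for wherever your projects live. Folders belonging to finished projects are usually safe to remove, since the package manager can recreate them from the lock file.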

Archiving and Offloading Large Datasets

For professionals dealing with large volumes of raw sensor data, processed imagery, simulation outputs, or extensive test datasets, offloading is a critical strategy. The C: drive should ideally be reserved for the operating system, actively used applications, and currently active project files that require high-speed access.

Best practices for data management:

- Categorize Data: Differentiate between “hot” data (frequently accessed, active project files) and “cold” data (archival, completed project data, raw backups).

- External Storage: Utilize external SSDs or HDDs for cold data storage. These are cost-effective solutions for keeping large archives readily accessible but off the primary drive.

- Network Attached Storage (NAS): For team environments or larger personal archives, a NAS provides centralized, network-accessible storage that can scale.

- Cloud Storage: Leverage cloud services (OneDrive, Google Drive, Dropbox, or more enterprise-grade solutions like AWS S3, Azure Blob Storage) for backup, collaboration, and long-term archival of very large datasets. Implement selective sync to only keep active files locally on the C: drive.

- Data Compression: For certain types of data, lossless compression tools can significantly reduce file sizes before archiving.
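The hot/cold split and the compression step above can be sketched together. The example below treats a file as cold when it has not been modified for a given number of days, then writes those files into a DEFLATE-compressed zip. The 180-day default and the function names are assumptions for illustration, and last-modified time is only a proxy for how often a file is actually used. Originals are left in place so the archive can be verified before anything is deleted.

```python
import os
import time
import zipfile


def cold_files(root, max_age_days=180, now=None):
    """Return sorted paths under root last modified more than max_age_days ago."""
    now = time.time() if now is None else now
    cutoff = now - max_age_days * 86400
    result = []
    for dirpath, _dirnames, filenames in os.walk(root, onerror=lambda err: None):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                if os.stat(path).st_mtime < cutoff:
                    result.append(path)
            except OSError:
                continue  # unreadable or vanished file
    return sorted(result)


def archive_files(paths, root, archive_path):
    """Write paths into a lossless zip, storing names relative to root.

    The original files are not removed.
    """
    with zipfile.ZipFile(archive_path, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        for path in paths:
            zf.write(path, arcname=os.path.relpath(path, root))
    return archive_path
```

A typical call would be `archive_files(cold_files(data_dir), data_dir, r"E:\archive\cold.zip")`, with `data_dir` and the `E:` target standing in for your own dataset folder and external drive. Already-compressed formats such as JPEG or most video files will shrink very little, so the gain depends heavily on the data type.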

Regular Maintenance and Monitoring

Proactive management also involves consistent monitoring and scheduled maintenance. Integrating disk space checks into routine system maintenance can prevent critical low-space situations.

- Scheduled Scans: Set a recurring reminder to run Disk Cleanup or a third-party analysis tool (like TreeSize Free) monthly.

- Monitor Alerts: Pay attention to Windows low disk space warnings. These are early indicators that action is needed.

- Review Downloads and Temporary Folders: These folders are common culprits for accumulating unneeded files. Make it a habit to regularly review and clear them.

- Uninstall Unused Software: Periodically review the “Apps & features” list in Windows settings and uninstall any software that is no longer used. Even small applications contribute to overall clutter.
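A scheduled check does not have to wait for the Windows low-space warning. The sketch below uses Python's standard `shutil.disk_usage` to report free space and flag a drive that falls below a threshold. The 15% default is an arbitrary assumption, not a Windows recommendation, and `free_space_report` is a hypothetical helper name.

```python
import shutil


def free_space_report(path, min_free_fraction=0.15):
    """Summarize free space on the drive containing path and flag low space."""
    usage = shutil.disk_usage(path)
    # Guard against pseudo-filesystems that report a total size of zero.
    free_fraction = usage.free / usage.total if usage.total else 0.0
    return {
        "total_gib": usage.total / 1024**3,
        "free_gib": usage.free / 1024**3,
        "free_fraction": free_fraction,
        "low": free_fraction < min_free_fraction,
    }
```

Calling `free_space_report("C:\\")` from a script registered in Task Scheduler, and printing or emailing the result when `low` is true, turns the monthly reminder into an automatic one.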

The Impact of Disk Space on Performance and Innovation

The amount of free space on the C: drive directly affects system performance, stability, and ultimately the capacity for innovation. A system that is low on disk space can show slower boot times, unresponsive applications, failed updates, increased fragmentation on hard disk drives, and, when writes fail partway, incomplete or corrupted files. For tech professionals, this translates into lost productivity, missed deadlines, and a frustrating development experience.

Conversely, a well-managed C: drive ensures that the operating system has room for its page file (the Windows equivalent of swap space), applications can create temporary files without hindrance, and development tools operate at peak efficiency. This optimized environment provides the stability and speed necessary to compile large codebases quickly, run complex simulations, train large AI models, and process vast amounts of sensor data without storage becoming the bottleneck. By actively understanding and managing what occupies the C: drive, professionals can maintain a nimble, high-performing workstation that serves as a reliable foundation for exploring new ideas, pushing technological boundaries, and driving innovation forward. Efficient disk space management is not merely a task; it’s an investment in sustained productivity and technological advancement.