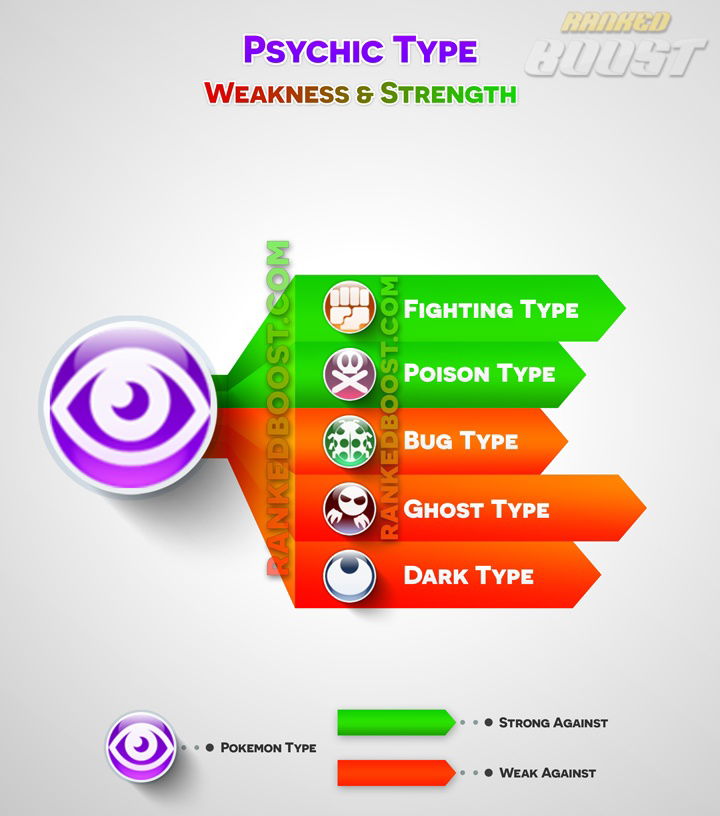

The relentless march of innovation has ushered in an era where autonomous systems exhibit capabilities once confined to science fiction. From drones navigating complex urban environments with uncanny precision to AI algorithms predicting intricate patterns in vast datasets, these systems often demonstrate an almost “psychic” ability to perceive, process, and act. This metaphorical “psychic” power rests on the most advanced techniques available today: sophisticated sensor fusion, advanced machine learning, predictive analytics, and self-optimizing control systems. Yet even these highly evolved systems have inherent vulnerabilities. Understanding what these “psychic” capabilities are weak against is crucial for fortifying future autonomous technologies and ensuring their robust, reliable, and ethical deployment.

The Illusion of Infallibility in Autonomous Systems

The perceived “psychic” abilities of modern autonomous systems—their capacity for real-time environmental comprehension, predictive modeling, and intelligent decision-making—can create an illusion of infallibility. These systems can process torrents of data, identify subtle anomalies, and execute complex maneuvers far beyond human capacity. However, this sophistication masks a delicate interplay of hardware, software, and environmental factors, each presenting a potential point of weakness.

Defining “Psychic” Capabilities in AI

In the context of technology and innovation, “psychic” capabilities refer to advanced functions such as:

- Hyper-Perception: The ability of sensor arrays (LiDAR, radar, thermal, visual) to gather and fuse data, creating a comprehensive understanding of an environment, often detecting things invisible to the human eye. This allows autonomous drones, for example, to “see” through fog with radar or to identify structural weaknesses in infrastructure from a distance.

- Predictive Analytics: AI models that forecast future states or events based on historical and real-time data. This can manifest in predicting equipment failure, optimizing flight paths based on anticipated weather changes, or identifying anomalous behaviors in monitored subjects.

- Autonomous Decision-Making: AI algorithms that process perceived information and execute complex actions without direct human intervention, such as obstacle avoidance, dynamic path planning, or target tracking in unconstrained environments.

- Remote Sensing and Cognitive Mapping: Technologies that enable systems to build detailed, intelligent maps of their surroundings, not just geometrically, but also semantically, understanding the types of objects and their functions within that space.

These capabilities, while impressive, are not magic. They are products of intricate engineering, and like all engineered systems, they are subject to specific limitations and potential failures.

The Core Challenge: Black Box Complexity

One fundamental weakness of these “psychic” systems lies in their inherent complexity, often referred to as the “black box” problem. Many advanced AI models, particularly deep neural networks, operate in ways that are opaque even to their creators. While they may produce highly accurate predictions or actions, the exact reasoning process remains hidden. This lack of transparency makes it difficult to diagnose failures, identify biases, or assure safety and ethical compliance, creating a significant vulnerability when things go awry. If a system fails to “perceive” or “predict” correctly, understanding why it failed is the first step toward mitigation, and the black box nature of the model impedes exactly that step.

Environmental and Physical Countermeasures

The sophisticated sensory and navigational capabilities that give autonomous platforms their “psychic” edge are, paradoxically, also among their most vulnerable points. These systems rely heavily on external environmental signals and uninterrupted data flows, making them susceptible to various forms of interference and disruption.

GPS Spoofing and Jamming: Blinding the Digital Eye

The Global Positioning System (GPS) is the bedrock of modern navigation for autonomous drones and many remote sensing applications. Its reliance on satellite signals, however, presents a significant weakness.

- GPS Jamming: Deliberate emission of radio signals to overwhelm and block legitimate GPS signals. This effectively “blinds” the autonomous system, preventing it from accurately determining its position and velocity, leading to drift, uncontrolled flight, or a forced emergency landing. For a system relying on precise location for mapping or remote sensing, jamming eliminates its ability to contextualize data spatially.

- GPS Spoofing: A more insidious attack where false GPS signals are transmitted, tricking the autonomous system into believing it is at a different location or moving along a different trajectory than it actually is. This can lead to drones veering off course, entering restricted airspace, or even crashing, all while their internal “psychic” navigation system believes it is operating correctly. Such an attack exploits the system’s trust in external data, turning its reliance on accurate positioning into a liability.
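
One receiver-side defense against the attacks above can be sketched as a simple plausibility check. This is a minimal illustration, not how any particular autopilot works: the function names, the 30 m/s speed limit, and the 5 m margin are all assumptions chosen for the example. The check rejects a new fix when the implied jump is larger than the vehicle could physically have travelled since the previous fix.

```python
import math


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two latitude/longitude points."""
    r = 6_371_000.0  # mean Earth radius in metres
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def gps_fix_is_plausible(prev_fix, new_fix, dt_s, max_speed_mps=30.0, margin_m=5.0):
    """Return False when the jump between two fixes exceeds what the vehicle could fly.

    prev_fix and new_fix are (latitude, longitude) tuples in degrees.
    max_speed_mps and margin_m are hypothetical tuning values.
    """
    dist = haversine_m(prev_fix[0], prev_fix[1], new_fix[0], new_fix[1])
    return dist <= max_speed_mps * dt_s + margin_m
```

A check like this would likely catch only abrupt position jumps. A slow, gradual spoof that stays within the speed envelope would pass, which is why such a test is usually paired with an independent reference such as inertial navigation or visual odometry.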

Electromagnetic Interference: The Unseen Disruptor

Beyond GPS, autonomous systems communicate and operate through a myriad of electromagnetic signals. Wi-Fi, radio control links, data telemetry, and internal sensor communications are all susceptible to electromagnetic interference (EMI).

- Deliberate Interference: High-power electromagnetic pulses (EMP) or directed energy weapons can temporarily or permanently disrupt the sensitive electronics within a drone, effectively incapacitating its “psychic” processing and control.

- Ambient Interference: In dense urban environments, high levels of ambient EMI from power lines, communication towers, or other electronic devices can degrade sensor performance, corrupt data transmissions, or induce errors in delicate control circuits. This unseen “noise” can subtly undermine the system’s ability to accurately perceive its environment or communicate its intentions, leading to unpredictable behavior or mission failure.

Sensor Overload and Obscuration: Hiding in Plain Sight

The hyper-perception of “psychic” systems relies on high-fidelity sensor input. Consequently, anything that degrades or overwhelms these sensors constitutes a critical weakness.

- Environmental Obscuration: Dense fog, heavy rain, smoke, or dust can severely limit the effectiveness of optical and LiDAR sensors, and heavy rain can also attenuate some radar bands. While advanced algorithms attempt to compensate, extreme conditions can push even the most sophisticated systems beyond their sensing limits, creating “blind spots” where the system’s “psychic” vision fails.

- Deliberate Obscuration: Simple physical countermeasures, such as reflective materials, smoke screens, or even specially designed camouflage, can render a target invisible or confusing to various sensor types. For thermal cameras, materials that mimic ambient temperature or block heat signatures can be effective. For optical systems, bright lights or lasers can temporarily blind or damage imaging sensors.

- Sensor Saturation/Overload: Bombarding a system’s sensors with excessive or contradictory data can overwhelm its processing capabilities. For instance, an extreme light source directed at an optical sensor can cause saturation, making it impossible to discern details within its field of view. Similarly, rapid, unpredictable movements of multiple objects could confuse tracking algorithms designed for more orderly scenarios.
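
Saturation of an optical sensor is one of the easier failure modes to detect in software. The sketch below assumes an 8-bit grayscale frame flattened into a list of pixel values; the function names and the 25% threshold are hypothetical choices for illustration. It measures how much of the frame is clipped at or near the maximum reading so the system can stop trusting that camera.

```python
def saturated_fraction(pixels, max_value=255, tolerance=2):
    """Fraction of pixels at or near the sensor's maximum reading."""
    if not pixels:
        raise ValueError("empty frame")
    clipped = sum(1 for p in pixels if p >= max_value - tolerance)
    return clipped / len(pixels)


def frame_is_usable(pixels, max_saturated=0.25):
    """Treat a frame as unusable when too much of it is clipped (assumed threshold)."""
    return saturated_fraction(pixels) <= max_saturated
```

When a frame fails this check, a reasonable design is to down-weight or ignore that sensor and rely on the remaining modalities until the reading recovers.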

Data Integrity and Cyber Vulnerabilities

The “psychic” power of autonomous systems is intrinsically linked to the integrity and security of the data they consume, process, and transmit. Any compromise to this data chain represents a profound weakness, capable of turning an advanced system into a liability.

Adversarial Attacks: Manipulating Perceptions

Machine learning models, especially deep learning networks at the heart of many “psychic” capabilities, are surprisingly vulnerable to adversarial attacks. These are subtle, carefully crafted inputs designed to fool the AI.

- Image Perturbations: Small alterations to an image, often imperceptible to a human, can cause an object recognition system to misclassify an object entirely. For example, a drone’s obstacle avoidance system could perceive a harmless street sign as a critical threat or, more dangerously, an actual obstacle as clear sky.

- Audio Spoofing: For systems relying on acoustic data, imperceptible changes to audio signals can trick voice command systems or sound-based anomaly detectors.

- Sensor Data Injection: Malicious actors could inject false sensor readings directly into a system’s data stream, making it “perceive” non-existent threats or opportunities, leading to erroneous decisions in navigation or object interaction. These attacks exploit the AI’s learned patterns, turning its reliance on data consistency against itself.
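
The mechanism behind image perturbations can be shown on a toy model. The sketch below applies the idea of the fast gradient sign method to a hand-written linear classifier, where the gradient of the score with respect to the input is simply the weight vector. The weights, inputs, and function names are invented for illustration; real attacks target deep networks and compute gradients with an autodiff framework.

```python
def score(weights, bias, x):
    """Linear decision score; a positive score means 'obstacle' in this toy setup."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def sign(v):
    """Return -1, 0, or 1 depending on the sign of v."""
    return (v > 0) - (v < 0)


def fgsm_linear(weights, x, epsilon):
    """Shift every feature by at most epsilon in the direction that lowers the score.

    For a linear model the gradient of the score with respect to x is the
    weight vector, so the perturbation is -epsilon * sign(weights).
    """
    return [xi - epsilon * sign(w) for w, xi in zip(weights, x)]


# Hypothetical model and input, chosen only to show a decision flip.
weights = [0.5, -0.25, 1.0, -0.75]
bias = -0.1
x = [0.4, 0.2, 0.3, 0.1]
x_adv = fgsm_linear(weights, x, epsilon=0.15)
```

Here the original input scores above zero and the perturbed input scores below it, even though no feature moved by more than 0.15. The same principle, scaled up to thousands of pixels, is what lets a visually negligible change flip a classifier's output.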

Data Poisoning and Model Drift: Corrupting the Source

The “psychic” predictive power of AI models is only as good as the data they are trained on. This makes the training data a critical point of weakness.

- Data Poisoning: Malicious actors can subtly introduce corrupted or biased data into a training dataset. Over time, this “poison” can embed vulnerabilities or incorrect learned behaviors into the AI model, leading to systematic misclassifications or undesirable actions once deployed. For example, poisoning data used for autonomous navigation could make a drone consistently misidentify certain safe objects as dangerous, or vice versa.

- Model Drift: Even without malicious intent, AI models can degrade over time as the real-world data they encounter deviates from their original training data. Environmental changes, new operational scenarios, or shifts in the types of objects encountered can lead to a gradual reduction in the “psychic” accuracy and reliability of the system, requiring constant monitoring and retraining.
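
The monitoring that model drift calls for can start with something very simple. The sketch below compares the mean of a recent window of one input feature against the training baseline, measured in baseline standard deviations. The function names and the threshold of 3.0 are assumptions for illustration; production systems typically use richer tests over many features.

```python
import statistics


def drift_score(baseline, recent):
    """Shift of the recent mean from the baseline mean, in baseline standard deviations."""
    mu = statistics.fmean(baseline)
    sigma = statistics.stdev(baseline)
    if sigma == 0:
        raise ValueError("baseline has no variance; score is undefined")
    return abs(statistics.fmean(recent) - mu) / sigma


def has_drifted(baseline, recent, threshold=3.0):
    """Flag drift when the shift exceeds an assumed threshold."""
    return drift_score(baseline, recent) > threshold
```

A flag from a check like this does not say what changed or whether accuracy actually dropped. It is a trigger for closer inspection and, if needed, retraining.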

Cybersecurity Gaps: The Digital Achilles’ Heel

The entire digital infrastructure supporting autonomous systems—from ground control stations to cloud-based processing and communication links—is a target for cyberattacks.

- Remote Exploits: Vulnerabilities in a drone’s operating system, communication protocols, or onboard software can be exploited remotely, allowing attackers to hijack control, steal sensitive data, or disable the system entirely. This directly undermines the system’s autonomous decision-making and perceived invincibility.

- Supply Chain Attacks: Compromises introduced during the manufacturing or software development process can embed backdoors or weaknesses long before deployment, making the “psychic” capabilities of a system inherently insecure from day one.

- Data Exfiltration: Advanced remote sensing platforms collect vast amounts of sensitive data. If the communication channels or storage systems are compromised, this data can be stolen, revealing critical intelligence or proprietary information.

Algorithmic Biases and Ethical Limitations

Beyond technical vulnerabilities, the “psychic” capabilities of AI are weakened by biases embedded during development, and they run into fundamental ethical and predictive limits. These weaknesses highlight that even the most advanced algorithms are not truly impartial or omniscient.

Unintended Biases in Training Data

AI systems learn from the data they are fed. If this data reflects societal biases, omissions, or skewed distributions, the “psychic” AI will inadvertently learn and perpetuate those biases.

- Recognition Bias: If an object recognition system is trained predominantly on images from specific demographics or environments, its accuracy may drop significantly when applied to different groups or settings. For a drone performing surveillance, this could lead to misidentification or missed threats depending on the population it monitors.

- Decision Bias: AI systems used for autonomous decision-making, such as predictive maintenance or resource allocation, can absorb biases present in historical human decisions, leading to unfair or suboptimal outcomes. This undermines the perceived objectivity of the “psychic” system and exposes it to ethical and operational failures, all the more so because a decision labeled as data-driven tends to be questioned less.
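
Recognition bias of the kind described above often stays hidden behind a single overall accuracy figure. A minimal audit, sketched here with hypothetical group labels and function names, breaks accuracy down per group and reports the gap between the best and worst served group.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Compute accuracy per group from (group, predicted, actual) tuples."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        hits[group] += int(predicted == actual)
    return {g: hits[g] / totals[g] for g in totals}


def accuracy_gap(records):
    """Difference between the best and worst per-group accuracy."""
    acc = accuracy_by_group(records)
    return max(acc.values()) - min(acc.values())
```

A large gap does not by itself explain the cause, but it is a clear signal that the training data or the model needs a closer look before deployment in the underperforming setting.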

The Boundaries of Predictive Analytics

While AI exhibits impressive predictive “psychic” abilities, these are not without limits. Predictive models operate on probabilities and correlations, not absolute certainties, and their strength diminishes at the edges of known data.

- Novelty and Edge Cases: AI struggles with truly novel situations or rare “edge cases” that fall outside its training data distribution. An autonomous drone, for instance, might flawlessly navigate familiar urban landscapes but falter in an entirely new, unmapped, or highly chaotic environment. Its “psychic” ability to anticipate outcomes is constrained by its past experiences, leaving it vulnerable to the truly unprecedented.

- Lack of Causal Understanding: Modern AI excels at finding correlations but often lacks a genuine causal understanding of the world. It can predict what might happen but not necessarily why. This means that while it can anticipate outcomes, it cannot always adapt intelligently or generalize its knowledge when underlying causal factors shift, making it fragile against scenarios that break established correlations.

Human Oversight as the Ultimate Safeguard

Perhaps the most crucial “weakness” against purely autonomous “psychic” systems is the irreplaceable need for human oversight and intervention.

- Ethical Dilemmas: In scenarios involving potential harm or moral judgment, even the most advanced AI lacks true consciousness or ethical reasoning. A drone with “psychic” targeting capabilities might identify a target based on its programming, but a human must ultimately bear responsibility for the ethical implications of engagement.

- Contextual Nuance: Humans possess a unique ability to interpret complex situations, understand intent, and apply common sense and empathy—nuances that still elude AI. When a system’s “psychic” perception is accurate but its proposed action lacks contextual understanding (e.g., distinguishing between a toy weapon and a real threat), human intervention is paramount.

- Last Resort Control: The ability for human operators to override autonomous systems, assume manual control, or initiate emergency protocols is a fundamental safeguard. This acknowledges that even the most “psychic” technology can encounter unforeseen circumstances where human judgment is the only viable countermeasure to prevent catastrophe.
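
The override principle can be made concrete as an arbitration step that sits between the autonomy stack and the actuators. The sketch below is one possible shape for it, under assumed names and an assumed confidence threshold of 0.9: an operator command always wins, and a low-confidence proposal falls back to a safe holding action.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class Proposal:
    """Action proposed by the autonomy stack, with its self-reported confidence."""
    action: str
    confidence: float


def arbitrate(proposal: Proposal,
              operator_command: Optional[str] = None,
              min_confidence: float = 0.9) -> Tuple[str, str]:
    """Return (action, source). Operator input always takes priority."""
    if operator_command is not None:
        return operator_command, "operator"
    if proposal.confidence < min_confidence:
        # Hypothetical safe default when the system is unsure.
        return "hold_position", "fallback"
    return proposal.action, "autonomy"
```

Returning the source alongside the action makes it easy to log who was in control at each step, which helps later review.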

The Future of Resilience: Fortifying Against the Unseen

Acknowledging what “psychic” capabilities are weak against is not a setback but a roadmap for future innovation. Building truly resilient and trustworthy autonomous systems requires directly addressing these vulnerabilities and turning each weakness into an opportunity for advancement.

Multi-Modal Sensor Fusion and Redundancy

To counteract the weaknesses of individual sensors (like GPS jamming or optical obscuration), future systems must integrate an even broader array of sensors, making their “psychic” perception more robust.

- Diverse Sensor Sets: Combining GPS with inertial navigation systems (INS), visual odometry, LiDAR, radar, acoustic sensors, and even emerging quantum sensors provides multiple, independent streams of data. If one modality is compromised, others can compensate, maintaining situational awareness.

- Redundant Systems: Implementing redundant hardware and software components ensures that if a primary system fails, a backup can seamlessly take over, preventing catastrophic loss of “psychic” capability. This applies not just to sensors but to processing units, communication links, and power systems.
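
A small example shows why independent sources help. The sketch below fuses altitude estimates from three hypothetical sensors by taking the median of whichever sources are currently healthy. The sensor names, the use of `None` for a failed sensor, and the two-source minimum are assumptions made for illustration; real systems more often use a Kalman-style filter.

```python
import statistics


def fuse_altitude(readings, min_sources=2):
    """Median of the available altitude estimates, in metres.

    readings maps a sensor name to its estimate, or to None if the sensor
    has failed. The median tolerates a single wildly wrong source when at
    least three sources report.
    """
    valid = [v for v in readings.values() if v is not None]
    if len(valid) < min_sources:
        raise RuntimeError("not enough healthy sensors to fuse")
    return statistics.median(valid)
```

With three sources reporting, a spoofed GPS altitude of 500 m next to barometer and LiDAR readings near 119 m would be outvoted rather than averaged in, which is the main reason for choosing a median over a mean here.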

Explainable AI (XAI) for Transparency

Addressing the “black box” problem is paramount for trust and troubleshooting. Explainable AI (XAI) aims to develop models that can articulate their reasoning and highlight the factors influencing their decisions.

- Auditable Decision Paths: XAI techniques allow operators to understand why an autonomous drone took a particular action or made a specific prediction, making it easier to identify errors, biases, or malicious manipulation. This transparency transforms the opaque “psychic” process into an intelligible one.

- Human-AI Collaboration: With explainable outputs, humans can better understand the AI’s “perceptions” and predictions, facilitating more effective collaboration and enabling human operators to make informed override decisions when necessary, leveraging the strengths of both human and artificial intelligence.
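
For a simple linear model, an auditable decision path is just the per-feature contribution to the score. The sketch below uses invented feature names and weights to show the idea. Deep networks need dedicated attribution methods, so this should be read as an illustration of what an explanation looks like, not as a general XAI technique.

```python
def explain_linear_decision(weights, features):
    """Rank features by the size of their contribution (weight * value) to the score.

    weights and features are dicts keyed by feature name.
    Returns a list of (name, contribution) pairs, largest magnitude first.
    """
    contributions = {name: weights[name] * features[name] for name in weights}
    return sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)


# Hypothetical model and observation.
weights = {"obstacle_distance": -0.8, "wind_speed": 0.3, "battery": -0.1}
features = {"obstacle_distance": 2.0, "wind_speed": 1.0, "battery": 0.5}
ranking = explain_linear_decision(weights, features)
```

In this example `obstacle_distance` ranks first, which tells an operator which input drove the decision and gives them something concrete to check before deciding whether to override.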

Robust Cybersecurity Architectures

As autonomous systems become more integrated and pervasive, their cybersecurity must evolve beyond traditional IT defenses.

- Hardware-Level Security: Incorporating security features directly into the hardware, such as secure boot mechanisms, trusted execution environments, and tamper-resistant components, creates a more formidable defense against physical and remote attacks.

- Proactive Threat Intelligence: Continuously monitoring for new attack vectors, adversarial techniques, and software vulnerabilities is essential. This includes employing AI-driven cybersecurity tools that can detect and respond to novel threats in real time, essentially turning a “psychic” defense against “psychic” attacks.

- Secure Software Development Lifecycle: Embedding security considerations at every stage of software development, from design to deployment and maintenance, significantly reduces the likelihood of exploitable vulnerabilities. This includes rigorous testing, formal verification, and secure coding practices.
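
One concrete building block for the link-security and data-injection concerns raised earlier is message authentication. The sketch below uses Python's standard `hmac` and `hashlib` modules to tag a telemetry payload with HMAC-SHA256 and to verify it on receipt. The function names and the idea of a pre-shared key are assumptions for the example; key distribution and replay protection are out of scope here.

```python
import hashlib
import hmac


def sign_message(key: bytes, payload: bytes) -> bytes:
    """Return an HMAC-SHA256 tag for the payload."""
    return hmac.new(key, payload, hashlib.sha256).digest()


def verify_message(key: bytes, payload: bytes, tag: bytes) -> bool:
    """Check the tag using a constant-time comparison."""
    return hmac.compare_digest(sign_message(key, payload), tag)
```

A receiver that drops any message failing `verify_message` would reject payloads altered in transit or forged without the key. It would still accept a replayed old message, so a counter or timestamp inside the payload is a common companion measure.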

By systematically addressing the things “psychic” capabilities are weak against, engineers can move closer to developing truly intelligent, resilient, and trustworthy autonomous systems that not only possess advanced “perceptive” powers but are also fortified against the myriad challenges of the real world.