The seemingly simple question, “What’s the difference between grape and cherry tomatoes?”, becomes a hard technical problem when posed to an aerial platform. It captures both the core difficulties and the potential of remote sensing, AI-driven analytics, and autonomous flight in telling apart visually similar objects from an elevated perspective. The challenge pushes the limits of current drone capabilities and drives advances in precision agriculture, environmental monitoring, and other fields where granular differentiation matters.

The Micro-Discrimination Challenge in Remote Sensing

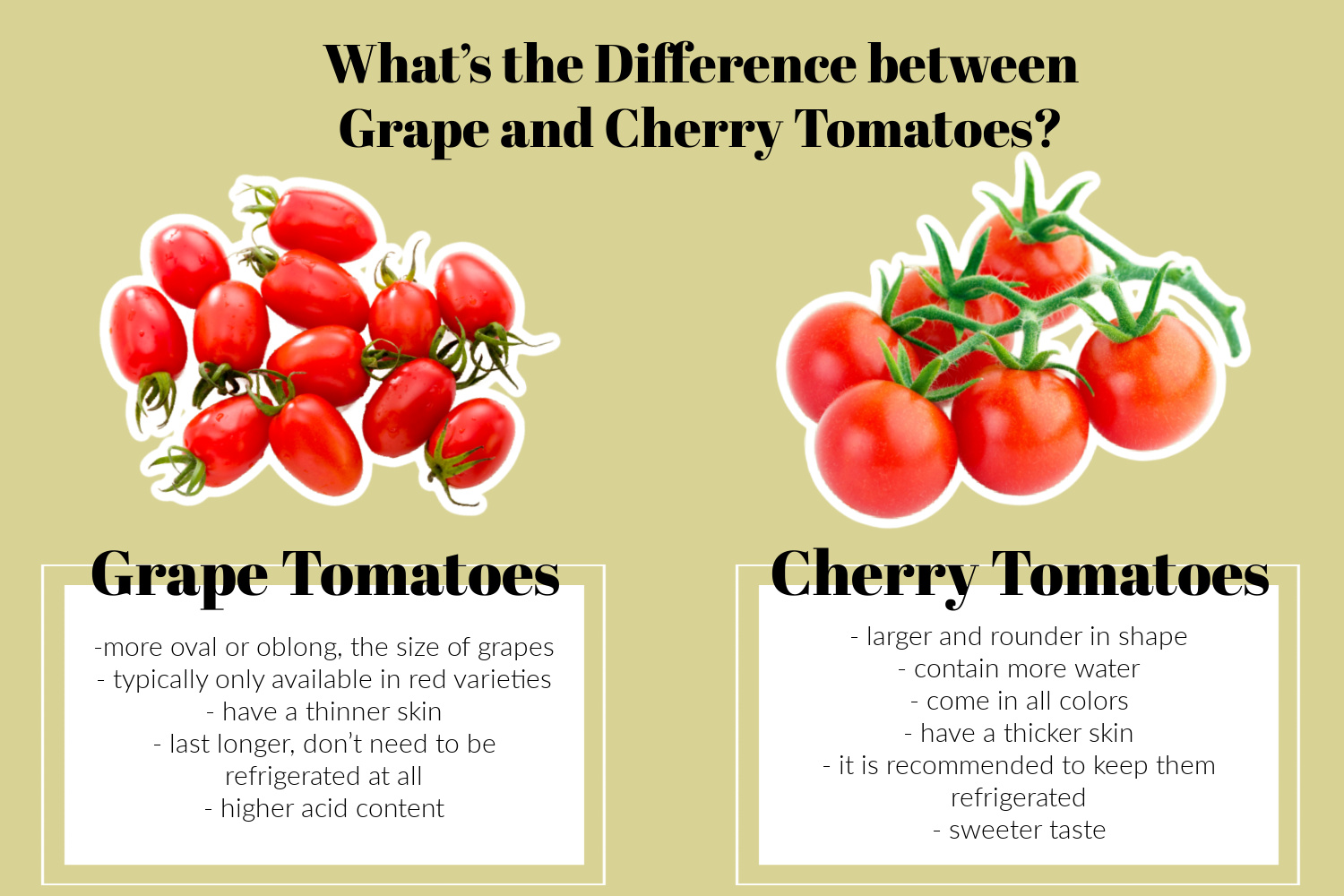

Identifying and classifying objects from aerial imagery is a foundational aspect of remote sensing. The task becomes far harder, however, when the targets are small, share many visual characteristics, and sit within a larger, complex environment. Grape and cherry tomatoes illustrate this micro-discrimination challenge well. Both are small, roundish, red fruits, but they differ subtly in shape (oval vs. round), size, and growth pattern. Those differences are hard for the human eye to discern from a distance, let alone for a drone at altitude.

Distinguishing Phenotypes from Above

For aerial intelligence systems, distinguishing between grape and cherry tomatoes requires an understanding of their specific phenotypes – the observable characteristics resulting from the interaction of their genotype with the environment. Grape tomatoes are typically more oval or oblong, with a firmer flesh and thicker skin, often growing in elongated clusters. Cherry tomatoes, conversely, are generally rounder, juicier, and tend to grow in slightly looser clusters. These subtle variations in morphology, growth habit, and even spectral reflectance present a significant hurdle for automated systems. Traditional remote sensing methods, relying on broad spectral bands or basic shape recognition, frequently fall short when attempting to classify such fine-grained differences, highlighting the need for more sophisticated technological solutions.

Spatial and Spectral Resolution as Limiting Factors

The ability to discern these subtle phenotypic differences depends heavily on the quality and nature of the data acquired by the drone’s sensors. Spatial resolution is expressed as Ground Sample Distance (GSD): the distance on the ground covered by a single image pixel, so a smaller GSD means finer detail. For objects only a few centimeters across, such as individual tomatoes, a very small GSD (well under a centimeter per pixel) is required so that each fruit spans enough pixels for its shape to be measured. Achieving such high resolution across large areas demands precise flight planning and advanced camera optics. Spectral resolution, which refers to the number and width of the spectral bands captured, is equally critical. Standard RGB cameras record only three broad bands (red, green, and blue), which might not be enough to separate objects whose main differences lie in non-visible wavelengths or in narrow spectral features that broad bands average out. Without adequate spatial and spectral fidelity, grape and cherry tomatoes might appear as indistinguishable red blurs to the aerial observer.
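The relationship between flight altitude, camera geometry, and GSD can be sketched with the standard nadir-camera formula. This is a minimal illustration rather than a calibrated model: the camera values in the usage note (13.2 mm sensor width, 8.8 mm focal length, 5472 px image width) and the 2.5 cm fruit diameter are assumptions chosen for the example, not figures from the article.

```python
def ground_sample_distance_cm(sensor_width_mm, focal_length_mm,
                              altitude_m, image_width_px):
    """Return GSD in centimeters per pixel for a camera pointing straight down.

    GSD = (sensor width * altitude) / (focal length * image width in pixels).
    The mm units cancel, leaving meters per pixel, converted here to cm.
    """
    gsd_m = (sensor_width_mm * altitude_m) / (focal_length_mm * image_width_px)
    return gsd_m * 100.0


def pixels_across(object_size_cm, gsd_cm):
    """Approximate number of pixels spanned by an object of the given size."""
    return object_size_cm / gsd_cm


# Hypothetical camera flown at 10 m above the crop.
gsd = ground_sample_distance_cm(13.2, 8.8, 10.0, 5472)
fruit_px = pixels_across(2.5, gsd)  # assumed 2.5 cm fruit diameter
print(f"GSD: {gsd:.3f} cm/px, fruit spans about {fruit_px:.1f} px")
```

With these assumed values the fruit spans only about nine pixels even at 10 m, which suggests why shape measurement on individual tomatoes calls for low flight altitudes or longer focal lengths.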

AI and Machine Learning for Fine-Grained Classification

The advent of Artificial Intelligence and Machine Learning (AI/ML), particularly deep learning, offers a transformative approach to overcoming the micro-discrimination challenge. These advanced algorithms can be trained to recognize patterns and features that are imperceptible to traditional analytical methods, making them ideally suited for differentiating between subtly distinct objects like grape and cherry tomatoes.

Training Deep Learning Models for Subtlety

Convolutional Neural Networks (CNNs) are at the forefront of image recognition tasks, demonstrating remarkable capability in identifying intricate features. For differentiating between tomato varieties, a CNN would be trained on an extensive dataset of aerial imagery containing precisely labeled examples of grape and cherry tomatoes under various conditions (different lighting, growth stages, angles, etc.). This iterative process involves feeding the network vast amounts of data, allowing it to learn hierarchical features – from basic edges and colors to complex textures and cluster shapes. The model learns to identify the unique patterns and spectral signatures associated with each tomato type, developing an internal representation of their subtle differences that transcends simple visual cues. The success of this approach hinges on the quality and diversity of the training data, requiring meticulous data collection and annotation, often performed through a combination of manual and semi-automated techniques.
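To make the idea of learned "basic edges" concrete, the sketch below applies a single hand-written convolution filter followed by a ReLU, which is the basic operation a CNN layer repeats with many learned filters. It is a pure-Python illustration, not a training pipeline: the vertical-edge kernel is fixed by hand here, whereas a real network would learn its kernel weights from labeled imagery. As in most deep learning frameworks, the "convolution" is implemented as cross-correlation (the kernel is not flipped).

```python
def conv2d_valid(image, kernel):
    """Slide the kernel over the image with no padding ('valid' mode)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b]
                for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(feature_map):
    """Zero out negative responses, as a CNN activation layer would."""
    return [[max(0, v) for v in row] for row in feature_map]


# Toy 4x6 image: dark background on the left, bright fruit on the right.
image = [[0, 0, 0, 1, 1, 1] for _ in range(4)]

# Hand-written vertical-edge filter (a learned filter would replace this).
vertical_edge = [[-1, 0, 1],
                 [-1, 0, 1],
                 [-1, 0, 1]]

feature_map = relu(conv2d_valid(image, vertical_edge))
for row in feature_map:
    print(row)
```

The response is strongest where the window straddles the dark-to-bright boundary and zero over uniform regions. Stacking many such filters, with the outputs of one layer feeding the next, is what lets a network build up from edges to textures and cluster shapes.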

Feature Extraction and Pattern Recognition

AI algorithms go beyond simple pixel-level analysis. They can extract distinguishing features such as the aspect ratio of the fruit (length vs. width), the arrangement of fruits within a cluster, or subtle variations in spectral reflectance that indicate ripeness or plant health. For grape and cherry tomatoes, a model can identify and quantify these small geometric and chromatic differences: the slightly more oblong shape of a grape tomato versus the rounder cherry tomato, or subtle differences in skin texture, provided the imagery is fine enough to resolve them. Pattern recognition also lets the model consider the context of the fruits within the plant structure, such as how they cluster and hang, which supplies additional cues for classification. This layered approach makes distinctions feasible that would be very difficult for human operators working from raw aerial imagery.
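One of the geometric features mentioned above, the length-to-width ratio of a fruit, can be estimated from a segmented binary mask using second-order image moments. The sketch below is one possible way to compute it in pure Python; it assumes the fruit has already been segmented into a mask, and the synthetic ellipse masks stand in for real segmentation output.

```python
import math


def elongation(mask):
    """Ratio of major to minor axis of a binary mask (1.0 means circular).

    Uses the eigenvalues of the pixel-coordinate covariance matrix, so the
    result does not depend on how the fruit is rotated in the image.
    """
    points = [(x, y) for y, row in enumerate(mask)
              for x, value in enumerate(row) if value]
    n = len(points)
    if n < 2:
        raise ValueError("mask needs at least two foreground pixels")
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points) / n
    syy = sum((y - mean_y) ** 2 for _, y in points) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points) / n
    half_trace = (sxx + syy) / 2
    root = math.sqrt(((sxx - syy) / 2) ** 2 + sxy ** 2)
    minor = half_trace - root
    if minor <= 0:
        return math.inf  # degenerate mask: all pixels on one line
    return math.sqrt((half_trace + root) / minor)


def ellipse_mask(semi_x, semi_y):
    """Synthetic filled ellipse, used only to exercise elongation()."""
    return [
        [1 if ((x - semi_x) / semi_x) ** 2 + ((y - semi_y) / semi_y) ** 2 <= 1
         else 0
         for x in range(2 * semi_x + 1)]
        for y in range(2 * semi_y + 1)
    ]


round_fruit = elongation(ellipse_mask(10, 10))   # cherry-like outline
oblong_fruit = elongation(ellipse_mask(20, 10))  # grape-like outline
print(f"round: {round_fruit:.2f}, oblong: {oblong_fruit:.2f}")
```

Any cutoff between "round" and "oblong" would have to be derived from labeled data for the varieties and viewing angles in question; no threshold is implied here.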

Overcoming Environmental Variability

A significant hurdle in deploying aerial object recognition systems is the inherent variability of natural environments. Changes in sunlight, shadows cast by foliage, atmospheric haze, and partial occlusions can dramatically alter the appearance of targets. Deep learning models are not robust to these effects automatically, but they can be made more robust through training. Data augmentation (e.g., introducing synthetic shadows or varying brightness) and large, diverse datasets help a model generalize across environmental conditions. The goal is a system that can still separate grape from cherry tomatoes on a sunny midday, on an overcast morning, or under slight foliage cover, although accuracy in real-world agricultural settings has to be verified on field data rather than assumed.
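The two augmentations named above, brightness variation and synthetic shadows, can be sketched on a small grayscale image represented as nested lists. This is an illustrative toy, not a production pipeline: the brightness range (0.6 to 1.4), the shadow width (one third of the image), and the shadow darkness (0.5) are arbitrary assumptions.

```python
import random


def adjust_brightness(image, factor):
    """Scale every pixel by factor, clipping to the 0..255 range."""
    return [[min(255, max(0, round(p * factor))) for p in row] for row in image]


def add_shadow_band(image, start_col, width, darkness=0.5):
    """Darken a vertical band of columns to imitate a cast shadow."""
    return [
        [round(p * darkness) if start_col <= x < start_col + width else p
         for x, p in enumerate(row)]
        for row in image
    ]


def augment(image, rng):
    """Apply a random brightness change followed by a random shadow band."""
    brightened = adjust_brightness(image, rng.uniform(0.6, 1.4))
    columns = len(image[0])
    width = max(1, columns // 3)
    start = rng.randrange(0, columns - width + 1)
    return add_shadow_band(brightened, start, width)


rng = random.Random(0)  # seeded so runs are repeatable
original = [[100] * 6 for _ in range(4)]
variants = [augment(original, rng) for _ in range(3)]
```

Each call to `augment` yields a differently lit copy of the same labeled example, so the label carries over while the appearance varies.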

Advanced Sensor Fusion for Enhanced Differentiation

While AI provides the analytical power, the quality and richness of the input data are paramount. Advanced sensor fusion techniques, integrating data from multiple types of drone-mounted sensors, unlock new dimensions of information that significantly enhance the ability to differentiate between subtly distinct objects.

Beyond RGB: The Power of Multispectral and Hyperspectral Data

Standard RGB cameras capture only a fraction of the information present in the electromagnetic spectrum. Multispectral and hyperspectral sensors record many more, narrower bands and extend beyond human vision, most notably into the red-edge and near-infrared (NIR) regions. For botanical targets like tomato plants, these spectral signatures are highly informative. Different tomato varieties, even if visually similar, may exhibit distinct reflectance patterns in the NIR or red-edge bands due to variations in chlorophyll content, water status, or cellular structure. Multispectral data can reveal such physiological differences: a grape tomato, for instance, might have a slightly different cellular density or water distribution profile than a cherry tomato, detectable in specific bands that RGB alone would miss. Hyperspectral imaging, with hundreds of contiguous narrow bands, offers an even more detailed ‘spectral fingerprint’ that can support very fine classification based on chemical composition.
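Band information of this kind is often condensed into normalized-difference indices. The sketch below computes two widely used ones, NDVI (NIR vs. red) and NDRE (NIR vs. red edge). The reflectance values are invented for illustration, and whether such indices actually separate grape from cherry tomato plants is a hypothesis that would need to be tested on real data.

```python
def normalized_difference(band_a, band_b):
    """Return (a - b) / (a + b), or 0.0 when both bands are zero."""
    total = band_a + band_b
    return 0.0 if total == 0 else (band_a - band_b) / total


def ndvi(nir, red):
    """Normalized Difference Vegetation Index."""
    return normalized_difference(nir, red)


def ndre(nir, red_edge):
    """Normalized Difference Red Edge index."""
    return normalized_difference(nir, red_edge)


# Made-up reflectance values (0..1) for two hypothetical plots.
plots = {
    "plot_a": {"red": 0.08, "red_edge": 0.25, "nir": 0.50},
    "plot_b": {"red": 0.10, "red_edge": 0.30, "nir": 0.45},
}
for name, r in plots.items():
    print(name,
          f"NDVI={ndvi(r['nir'], r['red']):.3f}",
          f"NDRE={ndre(r['nir'], r['red_edge']):.3f}")
```

Indices like these could serve as extra input channels or features alongside RGB imagery when training a classifier.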

LiDAR for Volumetric and Structural Analysis

Light Detection and Ranging (LiDAR) technology provides a complementary, non-imaging data stream that captures precise 3D structural information. By emitting laser pulses and measuring the time it takes for them to return, LiDAR creates dense point clouds that map the topography and volumetric shape of objects. While individual tomatoes might be too small for highly detailed LiDAR mapping, the technology can discern differences in the overall plant canopy structure, the way fruit clusters hang, or even subtle variations in the plant’s architecture that are characteristic of specific varieties. For example, LiDAR could identify slightly denser foliage structures around grape tomato clusters versus the more open arrangement of cherry tomatoes, providing context and structural cues that aid in overall classification when fused with spectral data.
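The time-of-flight principle described above reduces to one formula: range equals the speed of light times the round-trip time, divided by two. The short sketch below also inverts it to show the timing precision a given range resolution would require, which helps explain why centimeter-scale objects are difficult for LiDAR. The 30 m range and 1 cm resolution are illustrative assumptions.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def lidar_range_m(round_trip_time_s):
    """Distance to the target from the pulse's round-trip time."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


def timing_for_resolution_s(range_resolution_m):
    """Round-trip timing precision needed to resolve a given range step."""
    return 2.0 * range_resolution_m / SPEED_OF_LIGHT_M_PER_S


round_trip = 2.0 * 30.0 / SPEED_OF_LIGHT_M_PER_S  # pulse to a target 30 m away
print(f"range: {lidar_range_m(round_trip):.2f} m")
print(f"1 ns of timing error is about {lidar_range_m(1e-9) * 100:.1f} cm of range")
print(f"1 cm resolution needs about {timing_for_resolution_s(0.01) * 1e12:.0f} ps timing")
```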

Integrating Thermal and Other Modalities

Further enhancing the data fusion approach, thermal cameras can capture differences in surface temperature, which might correlate with variations in plant health, metabolic activity, or even ripeness across different tomato varieties. Integrating data from thermal sensors alongside RGB, multispectral, and LiDAR provides a truly comprehensive dataset. This multi-modal data fusion creates a richer, more robust understanding of the target environment. AI algorithms can then process these diverse data streams simultaneously, leveraging the strengths of each sensor to build a more confident and accurate classification model, far surpassing what any single sensor could achieve on its own.
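The article does not specify how the sensor streams would be combined. One simple option, shown here only as an assumption, is late fusion: each sensor's model produces its own class probabilities, and these are merged with a weighted average. The sensor names, probabilities, and weights below are all hypothetical.

```python
def fuse_probabilities(per_sensor, weights):
    """Weighted average of per-sensor class probabilities (late fusion).

    per_sensor maps sensor name -> {class label: probability}.
    weights maps sensor name -> non-negative trust weight.
    """
    total_weight = sum(weights[name] for name in per_sensor)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    labels = next(iter(per_sensor.values())).keys()
    return {
        label: sum(weights[name] * probs[label]
                   for name, probs in per_sensor.items()) / total_weight
        for label in labels
    }


# Hypothetical outputs of three single-sensor classifiers for one plant.
per_sensor = {
    "rgb":           {"grape": 0.55, "cherry": 0.45},
    "multispectral": {"grape": 0.80, "cherry": 0.20},
    "thermal":       {"grape": 0.50, "cherry": 0.50},
}
# Hypothetical trust weights, e.g. derived from validation accuracy.
weights = {"rgb": 1.0, "multispectral": 2.0, "thermal": 0.5}

fused = fuse_probabilities(per_sensor, weights)
print({label: round(p, 3) for label, p in fused.items()})
```

An alternative design is early fusion, where co-registered bands from all sensors are stacked into one input and a single model learns from them jointly. That can capture cross-sensor interactions but requires precise alignment between sensors.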

Autonomous Flight and Data Acquisition Strategies

The success of sophisticated aerial object recognition hinges not only on advanced sensors and AI but also on intelligent, autonomous flight strategies that ensure optimal data acquisition. The precise capture of high-resolution, multi-modal data for minute discrimination demands a level of flight control and automation beyond manual operation.

Precision Flight Paths for Optimal Data Capture

Autonomous flight planning software plays a critical role in generating optimized flight paths that ensure consistent Ground Sample Distance (GSD), appropriate image overlap (both frontal and side), and optimal camera angles. For distinguishing grape from cherry tomatoes, the flight plan must prioritize a very low GSD, often less than a centimeter per pixel, which necessitates flying at lower altitudes. The autonomous system can calculate the most efficient flight corridors to cover the target area, maintaining a steady speed and altitude, minimizing distortion, and maximizing data quality. This contrasts sharply with manual flights, where maintaining such precision and consistency across large areas is virtually impossible, leading to inconsistent data quality detrimental to AI analysis.
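The core arithmetic behind such a flight plan can be sketched as follows: pick the altitude that yields the target GSD, then derive the spacing between flight lines and between exposures from the image footprint and the desired overlaps. The camera parameters and overlap percentages are assumptions for illustration, and the sketch assumes a nadir camera with the image width oriented across the flight direction.

```python
def altitude_for_gsd_m(target_gsd_cm, sensor_width_mm,
                       focal_length_mm, image_width_px):
    """Flight altitude (m above the crop) that gives the target GSD."""
    gsd_m = target_gsd_cm / 100.0
    return gsd_m * focal_length_mm * image_width_px / sensor_width_mm


def survey_spacing_m(gsd_cm, image_width_px, image_height_px,
                     side_overlap, front_overlap):
    """Return (distance between flight lines, distance between exposures)."""
    footprint_across_m = gsd_cm / 100.0 * image_width_px
    footprint_along_m = gsd_cm / 100.0 * image_height_px
    line_spacing = footprint_across_m * (1.0 - side_overlap)
    trigger_distance = footprint_along_m * (1.0 - front_overlap)
    return line_spacing, trigger_distance


# Hypothetical camera: 13.2 mm sensor width, 8.8 mm lens, 5472 x 3648 px.
altitude = altitude_for_gsd_m(0.25, 13.2, 8.8, 5472)
lines, trigger = survey_spacing_m(0.25, 5472, 3648,
                                  side_overlap=0.7, front_overlap=0.8)
print(f"fly at {altitude:.2f} m, lines every {lines:.2f} m, "
      f"photo every {trigger:.2f} m")
```

For this assumed camera, a 0.25 cm/px target puts the aircraft under 10 m above the crop with flight lines only a few meters apart, which illustrates why consistent manual flying at this resolution is hard.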

Dynamic Mission Adaptation and Edge Computing

The next frontier in autonomous data acquisition involves real-time analysis and dynamic mission adaptation. Imagine a drone equipped with edge computing capabilities, where AI models can partially process data on board as it’s being collected. If the initial data from an area yields ambiguous results in differentiating between tomato types, the drone could autonomously adjust its flight path – perhaps performing a closer pass, capturing images from a different angle, or triggering additional sensor readings (e.g., switching to a higher zoom or capturing more spectral bands). This adaptive intelligence minimizes the need for costly re-flights and significantly improves the efficiency and accuracy of data collection, ensuring that even the most subtle distinctions are captured.
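A simple version of this adaptive behavior is a confidence-driven decision rule evaluated on board after each area is classified. The sketch below is hypothetical: the 0.85 confidence threshold, the 3 m minimum altitude, and the halving of altitude on a re-pass are placeholder choices, and the action names do not correspond to any real autopilot API.

```python
def next_action(probabilities, current_altitude_m,
                min_altitude_m=3.0, confidence_threshold=0.85):
    """Decide what to do after classifying one area.

    Returns (action, altitude_m), where action is one of
    'continue', 'repass_lower', or 'flag_for_review'.
    """
    best = max(probabilities.values())
    if best >= confidence_threshold:
        return ("continue", current_altitude_m)
    # Ambiguous result: try a closer pass, but never below the safe minimum.
    lower = max(min_altitude_m, current_altitude_m * 0.5)
    if lower < current_altitude_m:
        return ("repass_lower", lower)
    # Already at minimum altitude: leave the area for a human to check.
    return ("flag_for_review", current_altitude_m)


confident = next_action({"grape": 0.92, "cherry": 0.08}, 10.0)
ambiguous = next_action({"grape": 0.60, "cherry": 0.40}, 10.0)
stuck = next_action({"grape": 0.60, "cherry": 0.40}, 3.0)
print(confident, ambiguous, stuck)
```

Keeping the rule this small matters on an edge device, where the classifier itself already consumes most of the available compute and power budget.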

High-Throughput Data Processing and Cloud Integration

The fusion of multi-modal data at extremely high resolutions generates very large volumes of information, and processing it efficiently requires robust infrastructure. Cloud-based platforms, offering scalable storage and high-performance computing resources, are well suited to storing, organizing, and analyzing drone-derived data that can reach terabytes and, for large operations, potentially petabytes. Training and running deep learning models at this scale is computationally intensive. Cloud integration allows for parallel processing, enabling rapid turnaround of analytical results, from initial data ingestion to final classification maps that highlight where grape tomatoes end and cherry tomatoes begin within a field. This pipeline from autonomous flight to cloud processing is critical for translating raw data into actionable insights for precision agriculture.
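A rough estimate shows how quickly the data volume grows at sub-centimeter resolution. The sketch below counts uncompressed image samples per hectare, including the redundancy introduced by overlapping photos. The inputs (0.25 cm/px, three 8-bit bands, 80% front and 70% side overlap) are assumptions for illustration, and real storage needs depend on compression and file format.

```python
def raw_bytes_per_hectare(gsd_cm, bands, bytes_per_sample,
                          front_overlap, side_overlap):
    """Uncompressed bytes captured per hectare, counting overlapping images."""
    pixels_per_m2 = (100.0 / gsd_cm) ** 2
    unique_pixels = pixels_per_m2 * 10_000  # one hectare is 10,000 m^2
    # Each ground point is imaged roughly this many times due to overlap.
    redundancy = 1.0 / ((1.0 - front_overlap) * (1.0 - side_overlap))
    return unique_pixels * redundancy * bands * bytes_per_sample


rgb_bytes = raw_bytes_per_hectare(0.25, bands=3, bytes_per_sample=1,
                                  front_overlap=0.8, side_overlap=0.7)
print(f"about {rgb_bytes / 1e9:.0f} GB of raw RGB per hectare")
```

Adding multispectral bands, higher bit depths, or repeated flights over a season multiplies this figure further, which is the practical argument for scalable storage and parallel processing.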

The Impact on Precision Agriculture and Beyond

The ability to differentiate between grape and cherry tomatoes, driven by these innovations in aerial object recognition, represents a microcosm of a much broader revolution with profound implications for precision agriculture and numerous other industries.

Granular Crop Management and Yield Optimization

In agriculture, this advanced capability translates directly into unprecedented levels of granular crop management. Farmers can accurately identify the precise location and health status of specific varieties within a field. This enables highly targeted interventions – whether it’s applying specific nutrients, targeted pest control, or precisely timing irrigation for optimal growth. Knowing the exact distribution of different tomato varieties allows for more accurate yield prediction, better resource allocation, and optimized harvest planning, ultimately leading to higher quality produce and reduced waste. The economic impact of being able to precisely manage specific crop types, down to individual plants, is immense.

Automated Quality Control and Harvesting

Looking further into the future, this technology is a precursor to fully automated quality control and selective harvesting. Drones equipped with these advanced AI systems could not only differentiate between grape and cherry tomatoes but also assess their ripeness, detect blemishes, or identify optimal harvest windows. This information could then guide autonomous robotic harvesters, ensuring that only perfectly ripe and high-quality produce is picked. Such automation would revolutionize labor-intensive farming practices, increase efficiency, and ensure consistent product quality for consumers.

Broader Applications of Micro-Discrimination Technology

The underlying principles and technological advancements developed to distinguish between seemingly identical tomato varieties extend far beyond agriculture. This sophisticated micro-discrimination technology has vast implications across various industries: in environmental monitoring, it could differentiate between subtly distinct invasive and native plant species; in infrastructure inspection, it could pinpoint specific types of material fatigue or subtle structural anomalies; in security and defense, it could identify specific vehicle types or camouflaged objects with unprecedented accuracy. The challenge of “what’s the difference between grape and cherry tomatoes” serves as a powerful testament to how pushing the boundaries of aerial tech and innovation can unlock solutions for complex, real-world problems.