The Evolving Language of Human-Drone Interaction

As unmanned aerial vehicles (UAVs) move beyond their initial roles as niche tools and integrate more deeply into sectors ranging from logistics and agriculture to public safety and infrastructure inspection, the complexity of operating them and interacting with them grows rapidly. The sheer volume of data drones collect, the intricacy of their flight paths, and the critical decisions they make in autonomous modes call for a communication paradigm far more intuitive and efficient than traditional telemetry or complex data displays. In this evolving landscape of human-drone collaboration, the very concept of “meaning” is being redefined, extending beyond textual or verbal cues to embrace symbolic, visual communication, akin to the universal language of emojis in digital human interaction.

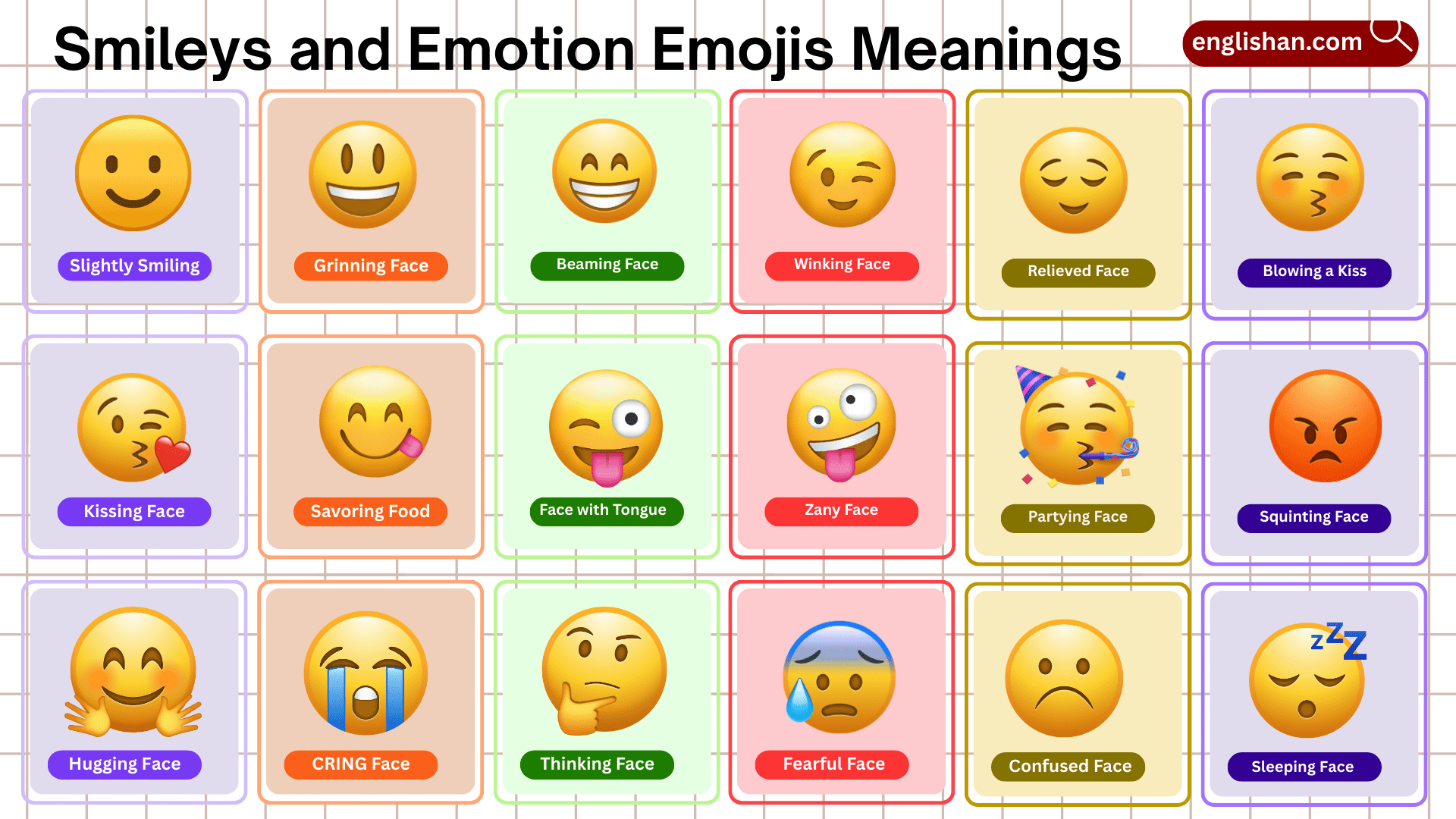

This article examines how technical innovation within the drone industry is addressing this challenge, drawing on artificial intelligence (AI), sensor fusion, and human-computer interaction principles to create a more seamless dialogue between drone and operator and, indeed, between drone and the wider public. The “meaning of emoji” in this context is not about literal smiley faces on a drone’s display. It is about the pursuit of concise, universally understandable visual signals that convey complex states, intentions, or interpretations with immediate clarity, reducing cognitive load and enhancing operational safety and efficiency. This approach is pivotal for the widespread adoption and safe integration of autonomous aerial systems into everyday life.

Interpreting Intent: AI’s Quest for Visual Semiotics

The pinnacle of drone innovation lies in drones’ capacity for autonomous decision-making, which depends critically on their ability to interpret their environment and, above all, human intent. Just as a person deciphers the “meaning” of an emoji in a text message (an emotion, a context, or an abstract concept), advanced drone AI systems are being engineered to decode the visual “emojis” present in their surroundings. This means moving beyond rudimentary object detection to a richer understanding of visual semantics.

Modern computer vision algorithms, fueled by deep learning and neural networks, enable drones to identify not just objects but also their states, relationships, and even potential human intent. For instance, in an AI Follow Mode, a drone isn’t merely tracking a person’s GPS coordinates; it might be interpreting subtle body language, gestures, or movement patterns that communicate a desire to speed up, slow down, or pause. These visual cues, though complex, function as a form of “emoji-like” signal for the drone’s AI, providing rich context that numerical data alone cannot convey. A person waving frantically could be interpreted by an intelligent drone as a distress “emoji,” prompting an autonomous response like hovering for closer inspection or signaling for help.
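To make the waving example concrete, here is a minimal Python sketch of one way such a cue could be flagged. It assumes an upstream pose estimator already supplies the horizontal position of a person’s wrist in each video frame, normalized to the frame width. The names `WaveThresholds`, `count_reversals`, and `looks_like_frantic_wave`, and both threshold values, are hypothetical illustrations, not taken from any real follow-mode product. The idea is simply that a frantic wave shows up as a wrist that reverses direction many times per second across a wide swing.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class WaveThresholds:
    # Hypothetical tuning values; a real system would calibrate them on labeled footage.
    min_reversals_per_second: float = 3.0  # how often the wrist must change direction
    min_amplitude: float = 0.15            # horizontal swing, as a fraction of frame width


def count_reversals(xs: Sequence[float]) -> int:
    """Count direction changes in frame-to-frame horizontal wrist motion."""
    reversals = 0
    prev_dir = 0
    for a, b in zip(xs, xs[1:]):
        step = b - a
        direction = (step > 0) - (step < 0)  # +1 moving right, -1 moving left, 0 still
        if direction == 0:
            continue  # ignore frames with no horizontal movement
        if prev_dir != 0 and direction != prev_dir:
            reversals += 1
        prev_dir = direction
    return reversals


def looks_like_frantic_wave(wrist_x: Sequence[float], fps: float,
                            t: WaveThresholds = WaveThresholds()) -> bool:
    """Flag a wrist track that swings both fast and wide as a possible distress cue."""
    if len(wrist_x) < 2 or fps <= 0:
        return False
    duration_s = (len(wrist_x) - 1) / fps
    rate = count_reversals(wrist_x) / duration_s
    amplitude = max(wrist_x) - min(wrist_x)
    return rate >= t.min_reversals_per_second and amplitude >= t.min_amplitude
```

Requiring both conditions keeps slow gestures (such as pointing) and small hand tremors from triggering a response; a production system would more likely learn this decision from data than hard-code it.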

Furthermore, in remote sensing and mapping applications, AI-powered drones interpret environmental “emojis” such as subtle changes in vegetation color indicating crop stress, specific patterns of damage signifying infrastructure failure, or unusual ground disturbances pointing to geological instability. These are not direct human communications but environmental signals that the AI interprets to derive critical “meaning” for subsequent actions or data reporting. The innovation here lies in training AI models to understand nuanced visual patterns that previously required extensive human analysis, transforming raw visual data into actionable intelligence with the immediacy and clarity often associated with symbolic communication. This capability underpins the future of adaptive mission planning, autonomous navigation in complex environments, and proactive hazard detection, making drones not just data collectors, but intelligent interpreters of the visual world.
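Crop stress is one of the few “environmental emojis” with a well-established numerical reading: the Normalized Difference Vegetation Index, NDVI = (NIR - Red) / (NIR + Red), computed per pixel from near-infrared and red reflectance. Healthy vegetation reflects strongly in the near-infrared and absorbs red light, so its NDVI is high. The plain-Python sketch below computes NDVI and the share of pixels falling under a threshold. The 0.4 cutoff and the function names are assumptions chosen for illustration; appropriate thresholds vary by crop, growth stage, and sensor.

```python
from typing import List

Grid = List[List[float]]  # rows of per-pixel reflectance values


def ndvi(nir: Grid, red: Grid) -> Grid:
    """Per-pixel NDVI = (NIR - Red) / (NIR + Red); pixels with no reflectance map to 0.0."""
    if len(nir) != len(red) or any(len(a) != len(b) for a, b in zip(nir, red)):
        raise ValueError("NIR and red bands must have the same shape")
    out: Grid = []
    for nir_row, red_row in zip(nir, red):
        row = []
        for n, r in zip(nir_row, red_row):
            total = n + r
            row.append((n - r) / total if total > 0 else 0.0)
        out.append(row)
    return out


def stressed_fraction(index: Grid, threshold: float = 0.4) -> float:
    """Share of pixels whose NDVI falls below a (hypothetical) stress threshold."""
    values = [v for row in index for v in row]
    if not values:
        return 0.0
    return sum(v < threshold for v in values) / len(values)
```

A mission planner could, for example, schedule a closer follow-up pass whenever the stressed fraction exceeds some agreed level, turning thousands of pixel values into a single, glanceable signal.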

Communicating Status: Drone-to-Human Visual Narratives

Just as crucial as a drone’s ability to interpret its environment is its capacity to communicate its own status, intentions, and findings back to human operators and, increasingly, to the public. Traditional methods like numerical telemetry on a controller screen or spoken alerts, while functional, often lack the immediacy and universality needed for complex, dynamic operations or interactions with non-expert bystanders. This is where the concept of “meaning of emoji” finds a powerful application in drone-to-human communication: using intuitive, symbolic visual cues to convey critical information instantly and across language barriers.

Imagine a drone indicating a low battery not with a numerical percentage, but with a universally recognized “battery emoji” that slowly depletes, or changes from green to red. An “obstacle detected” alert could manifest as a pulsating red light or a projected “warning sign” on the ground below the drone. These visual narratives simplify complex data into easily digestible formats, reducing the cognitive load on operators and enhancing situational awareness for anyone in the drone’s vicinity. This innovative approach goes beyond simple warning lights; it’s about crafting a visual language that mirrors the efficiency and widespread understanding of emojis.
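The battery example reduces to a simple mapping from a numeric state of charge to a glanceable symbol. As a minimal Python sketch, assuming a five-segment bar glyph and a linear red-to-green color blend (both illustrative choices, not an industry convention):

```python
import math
from typing import Tuple


def _clamp_percent(percent: float) -> float:
    """Keep readings inside 0-100 so noisy sensor values cannot break the display."""
    return max(0.0, min(100.0, percent))


def battery_color(percent: float) -> Tuple[int, int, int]:
    """Blend linearly from red at 0% to green at 100%, as an (R, G, B) tuple."""
    p = _clamp_percent(percent) / 100.0
    return (round(255 * (1 - p)), round(255 * p), 0)


def battery_glyph(percent: float, segments: int = 5) -> str:
    """Render a bar such as [###--]; any remaining charge lights at least one segment."""
    filled = math.ceil(_clamp_percent(percent) * segments / 100)
    return "[" + "#" * filled + "-" * (segments - filled) + "]"
```

Rounding up means the bar reads empty only at 0%; a real interface would likely pair the glyph with a distinct flashing state below a low-battery threshold so that a single lit segment is not mistaken for ample charge.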

Beyond Simple Icons: Dynamic Visual Feedback

The innovation extends beyond static icons to dynamic visual feedback systems. These include LED arrays on the drone’s chassis that can display complex patterns, symbols projected onto the ground, and even deliberate changes in flight behavior designed to be read as communicative signals. For example, a drone performing a slow, methodical orbit might project a “mapping” icon downward to signal its purpose to people below, or a distinct light pattern might indicate “landing sequence initiated.”
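One way to think about such light patterns is as a small lookup table from drone state to blink behavior, which the firmware then samples over time. The Python sketch below assumes three hypothetical states and invents the colors and timings purely for illustration; `DroneState`, `BlinkPattern`, `PATTERNS`, and `led_frames` are illustrative names, and no published standard is implied.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List, Tuple

RGB = Tuple[int, int, int]
OFF: RGB = (0, 0, 0)


@dataclass(frozen=True)
class BlinkPattern:
    color: RGB
    on_ms: int   # how long the LED stays lit each cycle
    off_ms: int  # how long it stays dark each cycle


class DroneState(Enum):
    MAPPING = "mapping"
    LANDING = "landing"
    OBSTACLE = "obstacle"


# Hypothetical vocabulary: faster blinking signals more urgency.
PATTERNS: Dict[DroneState, BlinkPattern] = {
    DroneState.MAPPING: BlinkPattern((0, 0, 255), on_ms=1000, off_ms=1000),  # slow blue
    DroneState.LANDING: BlinkPattern((255, 191, 0), on_ms=250, off_ms=250),  # amber
    DroneState.OBSTACLE: BlinkPattern((255, 0, 0), on_ms=100, off_ms=100),   # rapid red
}


def led_frames(state: DroneState, duration_ms: int, tick_ms: int = 50) -> List[RGB]:
    """Sample the state's pattern every tick_ms and return the LED color at each tick."""
    p = PATTERNS[state]
    period = p.on_ms + p.off_ms
    return [p.color if t % period < p.on_ms else OFF
            for t in range(0, duration_ms, tick_ms)]
```

Encoding urgency in blink rate as well as color keeps the signal readable for bystanders with color-vision deficiencies.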

The development of these expressive interfaces is critical for fostering public trust and ensuring safety as drones become more common in urban airspace. When a drone can clearly communicate its intent (“I am delivering,” “I am surveying,” “I am observing for public safety”), it demystifies its presence and reduces potential anxiety. This requires extensive research into visual semiotics, color psychology, and human perception to ensure that the chosen “visual emojis” are unambiguous and culturally appropriate, preventing misinterpretations that could have serious consequences.

Furthermore, integrating these symbolic visual cues into augmented reality (AR) interfaces for drone operators offers a potent advancement. Instead of glancing down at a controller, operators could see “emoji-like” status indicators, flight path warnings, or mission objectives overlaid directly onto their view of the drone or its environment through AR goggles. This contextual, intuitive feedback loop significantly enhances the operator’s ability to process information and make quick, informed decisions, solidifying the drone’s role as a trusted, communicative partner rather than just a remote machine.
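Placing an icon “directly onto” the operator’s view means converting the drone’s 3D position into a pixel location on the goggles’ display. A common starting point is the pinhole camera model, u = fx·X/Z + cx and v = fy·Y/Z + cy. The Python sketch assumes the drone’s position has already been transformed into the headset camera’s coordinate frame (X right, Y down, Z forward, in meters); `Intrinsics` and `project_to_screen` are hypothetical names, and the intrinsic values used in the example that follows are made up for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Intrinsics:
    fx: float  # horizontal focal length, in pixels
    fy: float  # vertical focal length, in pixels
    cx: float  # principal point (optical center), horizontal pixel coordinate
    cy: float  # principal point, vertical pixel coordinate
    width: int
    height: int


def project_to_screen(point_cam: Tuple[float, float, float],
                      k: Intrinsics) -> Optional[Tuple[float, float]]:
    """Pinhole projection of a camera-frame point; None if it is behind or off screen."""
    x, y, z = point_cam
    if z <= 0:
        return None  # behind the viewer: nothing to draw
    u = k.fx * x / z + k.cx
    v = k.fy * y / z + k.cy
    if not (0 <= u < k.width and 0 <= v < k.height):
        return None  # in front of the viewer but outside the field of view
    return (u, v)
```

For instance, with fx = fy = 800 and a 1280 x 720 display whose optical center is (640, 360), a drone 20 m ahead, 2 m to the right, and 1 m up projects to pixel (720, 320), where the overlay would draw its status icon. Real headsets also need lens-distortion correction and head-pose tracking, which this sketch omits.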

The Future of Expressive Drone-Human Interfaces

Exploring the “meaning of emoji” within drone technology and innovation points toward a future where human-drone interaction is far more intuitive, natural, and effective. This vision encompasses both sides of the communication coin: drones that intelligently interpret the symbolic “emojis” of human intent and environmental cues, and drones that fluently articulate their status and purpose through universally understood visual narratives.

The synergy of these two directions holds the promise of revolutionizing how autonomous systems integrate into society. Imagine drones in logistics that can subtly signal delivery status or potential delays to ground personnel via dynamic light patterns. Consider public safety drones that can communicate immediate hazards or safe zones to crowds during emergencies using projected symbols that cut across language barriers. This intuitive communication paradigm will significantly reduce cognitive load for operators, minimize training requirements for new users, and enhance the safety and public acceptance of drone operations.

Continued innovation in AI, particularly in affective computing and advanced computer vision, will refine the drone’s ability to understand complex human emotional and intentional “emojis” from visual and behavioral data. Concurrently, advances in display technologies and projection systems, along with haptic feedback in operator controllers, will enable drones to express themselves with greater clarity and nuance. The development of standardized visual communication protocols, much like a universal set of digital emojis, will be crucial to ensure consistency and widespread understanding across different drone manufacturers and applications.
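What a shared protocol might standardize is easiest to see in a tiny message format: a version number, a sender identifier, and an intent drawn from a closed vocabulary that every manufacturer renders the same way. The Python sketch below is entirely hypothetical (the field names, intent codes, and JSON encoding are assumptions, not an existing specification) and uses only the standard library.

```python
import json
from typing import Any, Dict

SCHEMA_VERSION = 1

# Hypothetical shared vocabulary; a real standard would publish and govern this list.
INTENTS = frozenset({"DELIVERING", "SURVEYING", "OBSERVING", "LANDING", "LOW_BATTERY"})


def encode_intent(drone_id: str, intent: str, priority: int = 0) -> str:
    """Serialize an intent message, rejecting codes outside the shared vocabulary."""
    if intent not in INTENTS:
        raise ValueError(f"unknown intent: {intent!r}")
    message = {"v": SCHEMA_VERSION, "id": drone_id, "intent": intent, "priority": priority}
    return json.dumps(message, sort_keys=True)


def decode_intent(raw: str) -> Dict[str, Any]:
    """Parse a message and check it against the version and vocabulary this receiver knows."""
    message = json.loads(raw)
    if not isinstance(message, dict):
        raise ValueError("message must be a JSON object")
    if message.get("v") != SCHEMA_VERSION:
        raise ValueError(f"unsupported schema version: {message.get('v')!r}")
    if message.get("intent") not in INTENTS:
        raise ValueError(f"unknown intent: {message.get('intent')!r}")
    return message
```

Because the receiver rejects unknown codes instead of guessing, a display built by one manufacturer would never show an ambiguous symbol for a message from another; extending the vocabulary then becomes a versioned, deliberate change.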

Ultimately, the meaning of emoji in the context of drone tech and innovation is about forging a profound connection between humans and machines through a shared, intuitive visual language. It represents a shift from complex, data-driven interfaces to elegant, symbolic communication that empowers more seamless human-drone collaboration, paving the way for autonomous aerial systems to become not just tools, but integral, communicative partners in building a more efficient, safer, and connected future.