Understanding the Interconnectedness of Drone Sensors

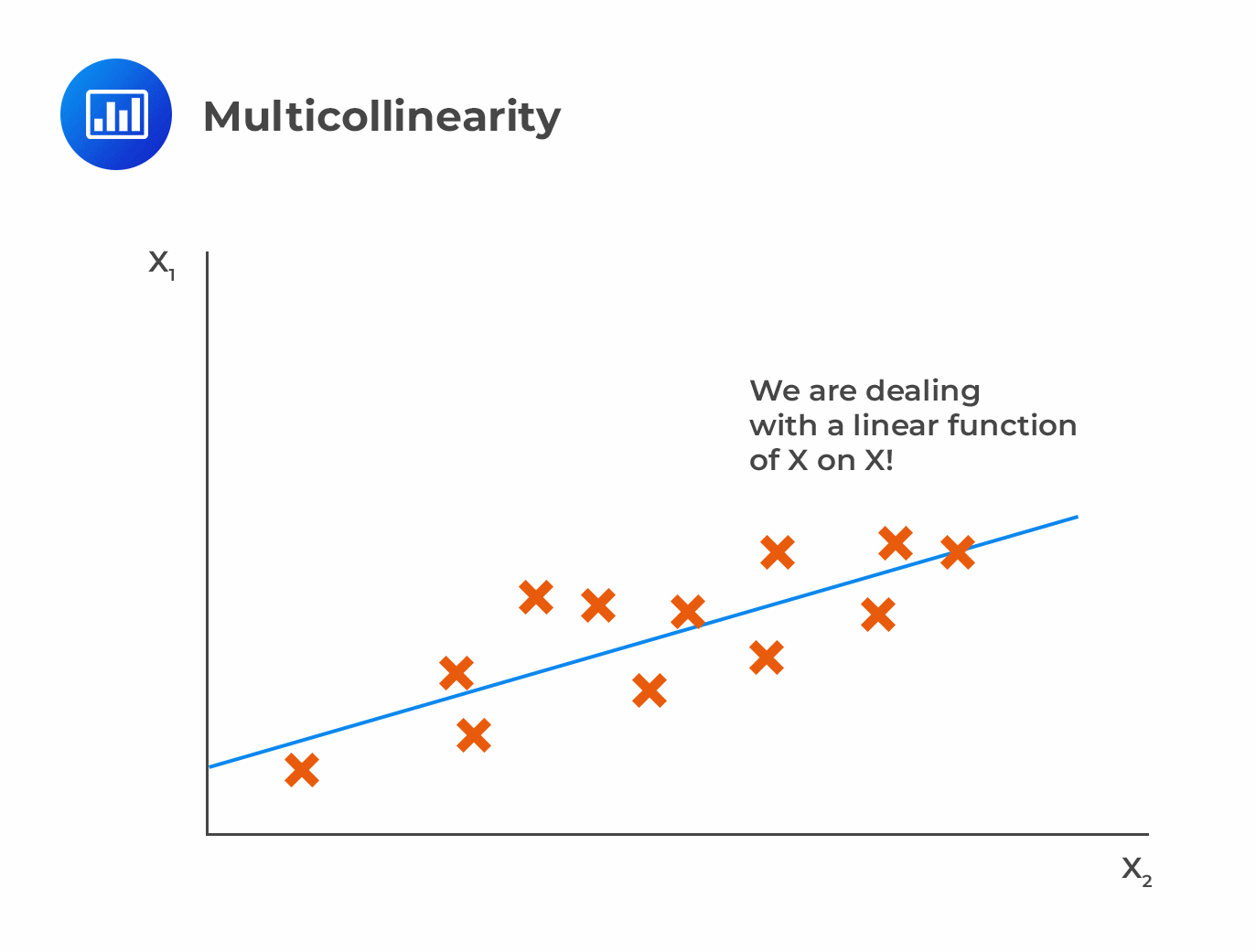

Multicollinearity, a statistical phenomenon that arises when independent variables in a regression model are highly correlated, poses real challenges for drone navigation. Although it is usually discussed in econometrics or the social sciences, it also affects the reliability and accuracy of aerial systems. In essence, multicollinearity occurs when two or more predictor variables (for a drone, typically sensor readings or navigation inputs) carry nearly the same information, which makes it hard for the system to separate their individual contributions and can lead to unstable or misleading outputs.
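
As a rough illustration of this definition, the sketch below computes the Pearson correlation between two hypothetical altitude streams and the matching variance inflation factor (VIF), a standard regression diagnostic. The readings and the names `baro_alt` and `gps_alt` are invented for the example, and the formula 1 / (1 - r²) applies only when there are exactly two predictors.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def vif_two_predictors(x, y):
    """Variance inflation factor for a model with exactly two predictors."""
    r = pearson(x, y)
    return 1.0 / (1.0 - r * r)

# Hypothetical altitude readings in metres (illustrative values only).
baro_alt = [10.0, 10.5, 11.1, 11.4, 12.0, 12.6]
gps_alt = [10.2, 10.4, 11.0, 11.6, 12.1, 12.5]

r = pearson(baro_alt, gps_alt)
vif = vif_two_predictors(baro_alt, gps_alt)
print(f"r = {r:.3f}, VIF = {vif:.1f}")
```

A VIF above roughly 10 is a common rule of thumb for troublesome collinearity, and these two streams land well above it.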

For drones, these “independent variables” are the streams of data pouring in from a multitude of onboard sensors. These can include GPS (Global Positioning System) receivers, Inertial Measurement Units (IMUs) – comprising accelerometers and gyroscopes – barometers, magnetometers, and even visual odometry systems. Each of these components is designed to provide crucial information about the drone’s position, orientation, and velocity. However, the physical world and the limitations of sensor technology mean that these data streams are rarely perfectly independent.

Consider the relationship between GPS and the IMU. GPS provides an absolute global position. The IMU, on the other hand, measures accelerations and angular velocities, which can be integrated over time to estimate changes in position and orientation. While both contribute to determining where the drone is and how it’s moving, they do so in fundamentally different ways. If the GPS signal is weak or intermittent, the navigation system might rely more heavily on the IMU. Conversely, if the IMU experiences drift over time (a common issue), the system will look to the GPS for correction. This inherent interplay, while often beneficial for robust navigation, can, under certain conditions, lead to multicollinearity.

The Data Fusion Challenge

At the heart of drone navigation is data fusion – the process of combining data from multiple sources to produce a more accurate, complete, and reliable estimate than any single sensor could provide alone. Kalman filters and their variants (Extended Kalman Filters, Unscented Kalman Filters) are the workhorses of drone navigation, constantly updating the drone’s state estimate (position, velocity, attitude) from incoming sensor data.

However, these algorithms are sensitive to the statistical properties of the input data. When the inputs are highly correlated, the covariance matrices these filters work with, in particular the innovation covariance that must be inverted at every update, can become ill-conditioned or even singular. The update step then becomes numerically unstable, because small errors in the inputs are amplified into large errors in the filter gain. Instead of a precise refinement of the drone’s position, the system might produce wildly oscillating estimates, or the confidence in the estimate might plummet.
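
To make the ill-conditioning concrete, here is a minimal sketch that assumes two sensors observing the same scalar state. It builds the 2x2 innovation covariance S = H P Hᵀ + R and reports its condition number as the noise correlation `rho` grows. The function names and all numeric values are illustrative assumptions, not taken from any particular autopilot.

```python
import math

def cond_sym_2x2(a, b, d):
    """Condition number (largest / smallest eigenvalue) of [[a, b], [b, d]]."""
    mean = (a + d) / 2.0
    radius = math.hypot((a - d) / 2.0, b)
    lam_max, lam_min = mean + radius, mean - radius
    if lam_min <= 0.0:
        return math.inf  # singular or not positive definite
    return lam_max / lam_min

def innovation_cov(prior_var, r1, r2, rho):
    """Entries (a, b, d) of S = H P H^T + R for two sensors on one scalar state.

    prior_var is the predicted state variance, r1 and r2 are the sensor noise
    variances, and rho is the assumed correlation between the two noise terms.
    """
    cross = prior_var + rho * math.sqrt(r1 * r2)
    return prior_var + r1, cross, prior_var + r2

# Illustrative values: prior variance 1.0, both sensors with noise variance 0.01.
cond_independent = cond_sym_2x2(*innovation_cov(1.0, 0.01, 0.01, rho=0.0))
cond_correlated = cond_sym_2x2(*innovation_cov(1.0, 0.01, 0.01, rho=0.99))
print(f"independent noise: {cond_independent:.0f}")
print(f"correlated noise:  {cond_correlated:.0f}")
```

In this toy setup the condition number rises by about two orders of magnitude as the noise correlation approaches 1, which is the kind of sensitivity the paragraph above describes.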

For instance, imagine a scenario where a drone is attempting to maintain a precise hover. Both the IMU and optical flow sensors (which track movement based on visual patterns) are contributing to this task. If there are highly repetitive visual features in the environment, and the IMU is also experiencing some form of cyclical noise, the data from these two sources might become very similar. The fusion algorithm might then struggle to assign appropriate weights to each sensor, leading to an erratic position hold or horizontal drift.

GPS and IMU Synergies and Conflicts

The synergistic relationship between GPS and IMU is perhaps the most critical in drone navigation. The IMU is excellent for short-term, high-frequency motion tracking, providing smooth and responsive control. However, it suffers from drift, meaning errors accumulate over time due to small inaccuracies in its measurements. GPS, while providing absolute position, has a lower update rate and can be susceptible to signal dropouts, multipath interference (where the signal bounces off surfaces), and atmospheric conditions.

When both GPS and IMU data are clean and reliable, the Kalman filter can effectively fuse them. The GPS corrects the long-term drift of the IMU, and the IMU fills in the gaps during GPS outages, providing smooth motion estimates between GPS updates. This is the ideal scenario.
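
The ideal case can be sketched with a one-dimensional Kalman filter that uses IMU acceleration in the prediction step and GPS position in the update step. This is a simplified illustration under stated assumptions rather than a flight-ready estimator: the class name `GpsImuFilter1D`, the noise variances, the 100 Hz and 5 Hz rates, and the constant accelerometer bias are all hypothetical, and the GPS is treated as noise-free to keep the run deterministic.

```python
class GpsImuFilter1D:
    """Position/velocity Kalman filter: IMU drives predict, GPS drives update."""

    def __init__(self, accel_var, gps_var):
        self.pos, self.vel = 0.0, 0.0
        self.p00, self.p01, self.p11 = 1.0, 0.0, 1.0  # symmetric covariance
        self.accel_var, self.gps_var = accel_var, gps_var

    def predict(self, accel, dt):
        # Propagate the state with the measured acceleration.
        self.pos += self.vel * dt + 0.5 * accel * dt * dt
        self.vel += accel * dt
        # P = F P F^T + Q, with F = [[1, dt], [0, 1]].
        p00 = self.p00 + 2.0 * dt * self.p01 + dt * dt * self.p11
        p01 = self.p01 + dt * self.p11
        g0, g1 = 0.5 * dt * dt, dt  # how accelerometer noise enters the state
        self.p00 = p00 + self.accel_var * g0 * g0
        self.p01 = p01 + self.accel_var * g0 * g1
        self.p11 = self.p11 + self.accel_var * g1 * g1

    def update_gps(self, gps_pos):
        # Measurement model H = [1, 0]: GPS observes position only.
        s = self.p00 + self.gps_var
        k0, k1 = self.p00 / s, self.p01 / s
        innovation = gps_pos - self.pos
        self.pos += k0 * innovation
        self.vel += k1 * innovation
        p00, p01, p11 = self.p00, self.p01, self.p11
        self.p00 = (1.0 - k0) * p00
        self.p01 = (1.0 - k0) * p01
        self.p11 = p11 - k1 * p01

# Hovering drone: true position stays at 0 m, but the accelerometer
# reports a constant 0.1 m/s^2 bias (assumed value).
dt, bias = 0.01, 0.1
kf = GpsImuFilter1D(accel_var=1.0, gps_var=0.25)
dead_reckoned_pos, dead_reckoned_vel = 0.0, 0.0
for step in range(1, 1001):          # 10 s of IMU samples at 100 Hz
    kf.predict(bias, dt)
    dead_reckoned_pos += dead_reckoned_vel * dt + 0.5 * bias * dt * dt
    dead_reckoned_vel += bias * dt
    if step % 20 == 0:               # GPS fix at 5 Hz
        kf.update_gps(0.0)

print(f"IMU only: {dead_reckoned_pos:.2f} m, fused: {kf.pos:.2f} m")
```

Integrating the biased IMU alone drifts by about 5 m over the 10 seconds, while the fused estimate stays well under a metre from the true position because each GPS fix pulls it back.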

However, multicollinearity can arise in situations such as:

- Low Dynamic Motion: In very static or slow-moving scenarios, the IMU’s contribution to estimating changes in position becomes less distinct from the GPS-derived position. Both might be reporting very little movement, leading to high correlation.

- Sensor Malfunctions or Degradation: If an IMU accelerometer begins to consistently report a slight bias, and the GPS also has a corresponding, correlated error (perhaps due to a faulty antenna setup), the data streams can become highly similar, confusing the fusion algorithm.

- Environmental Factors: In canyons or urban environments where GPS signals are reflected, the GPS data itself might have a correlated error pattern. If the IMU also experiences a related artifact (e.g., vibrations affecting its readings in a similar way to the GPS signal degradation), multicollinearity can occur.
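
One way to watch for the conditions listed above at run time is to track the correlation between two sensor streams over a sliding window. The sketch below is a hypothetical monitor: the class name, window length, and 0.95 threshold are assumptions, and the sinusoidal test signals stand in for a shared vibration artifact.

```python
import math
from collections import deque

class CorrelationMonitor:
    """Tracks the Pearson correlation of two streams over a sliding window."""

    def __init__(self, window=50, threshold=0.95):
        self.a = deque(maxlen=window)
        self.b = deque(maxlen=window)
        self.threshold = threshold

    def push(self, sample_a, sample_b):
        self.a.append(sample_a)
        self.b.append(sample_b)

    def correlation(self):
        n = len(self.a)
        if n < 2:
            return 0.0
        mean_a, mean_b = sum(self.a) / n, sum(self.b) / n
        sab = sum((x - mean_a) * (y - mean_b) for x, y in zip(self.a, self.b))
        saa = sum((x - mean_a) ** 2 for x in self.a)
        sbb = sum((y - mean_b) ** 2 for y in self.b)
        if saa == 0.0 or sbb == 0.0:
            return 0.0  # a constant stream has no defined correlation
        return sab / math.sqrt(saa * sbb)

    def is_collinear(self):
        return abs(self.correlation()) >= self.threshold

# Two streams contaminated by the same vibration pattern (synthetic data).
shared = CorrelationMonitor()
# Two streams with unrelated content (synthetic data).
unrelated = CorrelationMonitor()
for k in range(50):
    vibration = math.sin(0.3 * k)
    shared.push(vibration, 0.8 * vibration + 0.01 * math.cos(1.7 * k))
    unrelated.push(vibration, math.cos(0.3 * k))

print(shared.is_collinear(), unrelated.is_collinear())
```

Returning 0.0 for a constant stream is a deliberate choice here: in a static hover both signals may barely change, and an undefined correlation should not be reported as a collinearity alarm.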

Manifestations of Multicollinearity in Flight Performance

The practical consequences of multicollinearity in drone navigation are diverse and can manifest in several critical aspects of flight performance. These are not abstract statistical concepts but tangible issues that directly impact a drone’s safety, mission success, and operational efficiency.

Unstable Position and Attitude Estimation

The most direct impact is on the drone’s state estimation. When multicollinearity is present, the algorithms responsible for calculating the drone’s precise position (latitude, longitude, altitude) and attitude (roll, pitch, yaw) become less confident. Instead of a smooth, steady estimate, the reported values may fluctuate erratically. This can lead to:

- “Jittery” Hover: A drone that should be holding a stable position might appear to “shiver” or subtly drift around its target point, even in calm weather.

- Inaccurate Waypoint Following: During automated flight missions, if the navigation system cannot accurately determine the drone’s position and velocity, it will struggle to follow pre-programmed flight paths. This can result in deviations from the intended route, missed targets, or even collision with obstacles.

- Erratic Orientation: Unstable attitude estimation can lead to unwanted rotations or tilts, making it difficult for the drone to maintain a level camera or perform controlled maneuvers.

Reduced Control Responsiveness

The flight controller, which translates pilot commands or autonomous flight plans into motor commands, relies heavily on accurate state estimation. If the underlying navigation data is compromised by multicollinearity, the flight controller’s response can become sluggish, overly sensitive, or unpredictable.

- Delayed or Exaggerated Control Inputs: A pilot attempting to steer the drone might experience a delay in its response, or a small input might result in a much larger, unintended movement. This is because the flight controller is trying to interpret noisy and correlated sensor data.

- Difficulty in Manual Control: Maintaining fine control during tasks like precise landing or delicate maneuvers becomes significantly harder, increasing the risk of crashes.

- Autopilot Instability: Autonomous functions, such as auto-takeoff, auto-land, or return-to-home, become less reliable. The system might abort these functions unexpectedly or perform them in an unsafe manner.

Over-Reliance and Under-Reliance on Specific Sensors

Multicollinearity can inadvertently cause the navigation system to over-emphasize or under-emphasize the data from certain sensors. When two sensors are providing highly correlated, albeit potentially noisy, information, the fusion algorithm might struggle to discern which one is more trustworthy.

- Over-Reliance on Noisy Data: If a degraded sensor starts producing data that is highly correlated with another sensor’s output, the system might disproportionately trust this combined, yet flawed, information. This can mask the underlying problem and lead to a gradual deterioration of navigation accuracy.

- Ignoring Valuable Data: Conversely, if a sensor is functioning correctly but its output is highly correlated with a sensor experiencing temporary interference, the fusion algorithm might incorrectly down-weight the good data, leading to a reliance on the compromised stream.
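
The weighting problem can be illustrated with the textbook minimum-variance combination of two scalar estimates. In the sketch below, `fuse_two` is a hypothetical helper and the variances are made-up values. It shows that assuming independence when the errors are in fact correlated makes the fused result look more certain than it really is.

```python
import math

def fuse_two(var1, var2, rho=0.0):
    """Minimum-variance weights for two unbiased estimates of one quantity.

    var1 and var2 are the error variances and rho is the correlation between
    the two errors. Returns (weight1, weight2, fused_variance). The formula
    breaks down when var1 == var2 and rho == 1 (zero denominator).
    """
    cov = rho * math.sqrt(var1 * var2)
    denom = var1 + var2 - 2.0 * cov
    w1 = (var2 - cov) / denom
    fused_var = (var1 * var2 - cov * cov) / denom
    return w1, 1.0 - w1, fused_var

# Two sensors with equal error variance of 1.0 (illustrative units).
w_a, w_b, var_indep = fuse_two(1.0, 1.0, rho=0.0)
_, _, var_corr = fuse_two(1.0, 1.0, rho=0.9)
print(f"assumed independent: variance {var_indep:.2f}")
print(f"actually correlated: variance {var_corr:.2f}")
```

With independent errors the fused variance halves, but with a correlation of 0.9 the second sensor adds almost nothing. A filter that wrongly assumes independence would therefore report about twice the confidence it has earned.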

Increased Computational Load and Potential for System Freezes

While less common, severe multicollinearity can sometimes push the limits of computational resources. The calculations involved in state estimation and sensor fusion can become more demanding when the matrices are ill-conditioned, for example when iterative solvers need more iterations to converge or when extra numerical safeguards have to run. In extreme cases, this could lead to:

- Increased Processing Time: Delays in sensor fusion calculations can further exacerbate control issues.

- System Instability or Crashes: In the most severe scenarios, an unresolvable multicollinearity issue could lead to a failure in the navigation software, potentially causing a complete system freeze or emergency landing/shutdown.

Mitigating Multicollinearity in Drone Navigation Systems

Addressing multicollinearity in drone navigation is not about eliminating sensor correlations entirely, as many of these relationships are fundamental to achieving robust flight. Instead, it involves employing sophisticated techniques to manage and mitigate the negative impacts of these correlations, ensuring reliable and accurate navigation across diverse operational conditions.

Advanced Sensor Fusion Algorithms

The cornerstone of mitigating multicollinearity lies in the continued development and application of advanced sensor fusion algorithms. Kalman filters are widely used, and their effectiveness can be improved through adaptive variants or complemented by alternative estimation frameworks.

- Adaptive Kalman Filters: These filters can dynamically adjust their parameters (like process and measurement noise covariances) based on the observed data. If the algorithm detects that two sensor inputs are becoming too similar and potentially problematic, it can adapt its weighting strategy to reduce reliance on the combined problematic input.

- Factor Graphs and Graph-Based SLAM: More advanced techniques like factor graphs and Simultaneous Localization and Mapping (SLAM) algorithms offer a more holistic approach to state estimation. They can explicitly model the dependencies and uncertainties between different sensor measurements and map features, allowing for more robust solutions even in the presence of correlated data.

- Machine Learning Approaches: Neural networks and other machine learning models are increasingly being used for sensor fusion. These models can learn complex, non-linear relationships between sensor inputs and the drone’s state, potentially identifying and compensating for multicollinearity in ways that traditional algorithms might struggle with.
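
As a small illustration of the adaptive Kalman filter idea in the first bullet, the sketch below re-estimates a scalar measurement noise variance from recent innovations. It relies on the relation E[ν²] = P⁻ + R for a scalar measurement with H = 1. The class name, window length, and floor value are assumptions, and using only the latest predicted variance is a simplification.

```python
from collections import deque

class AdaptiveMeasurementNoise:
    """Innovation-based estimate of a scalar measurement noise variance R."""

    def __init__(self, window=20, floor=1e-6):
        self.sq_innovations = deque(maxlen=window)
        self.floor = floor  # keeps the estimate strictly positive

    def update(self, innovation, predicted_var):
        """Add one innovation and return the current estimate of R.

        predicted_var is the filter's predicted state variance P^- (H = 1).
        """
        self.sq_innovations.append(innovation * innovation)
        mean_sq = sum(self.sq_innovations) / len(self.sq_innovations)
        return max(mean_sq - predicted_var, self.floor)

estimator = AdaptiveMeasurementNoise(window=4)
# Large innovations: the sensor looks noisier than the filter expects.
for nu in (2.0, -2.0, 2.0, -2.0):
    r_noisy = estimator.update(nu, predicted_var=1.0)
# Small innovations: the estimate collapses to the floor.
for nu in (0.5, -0.5, 0.5, -0.5):
    r_quiet = estimator.update(nu, predicted_var=1.0)

print(r_noisy, r_quiet)
```

Feeding the returned value back in as the filter's measurement variance lets the weighting follow the sensor's observed behaviour instead of a fixed datasheet figure.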

Sensor Diversity and Redundancy

While multicollinearity can arise from correlated data, employing a diverse set of sensors with different operating principles can help create a more resilient system.

- Complementary Sensors: Pairing sensors that measure fundamentally different aspects of the environment or motion can provide independent information. For example, combining GPS (absolute position) with visual odometry (relative motion from camera) and LiDAR (precise distance measurements) creates a rich set of data streams. If one stream becomes problematic, others can still provide valid, uncorrelated information.

- Redundant Sensors of the Same Type: Having multiple GPS receivers or multiple IMUs can provide cross-validation. If one sensor’s output deviates significantly from the others, it can be identified as faulty and excluded from the fusion process, preventing it from contributing to multicollinearity.
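
Cross-validation between redundant sensors can be as simple as median voting. The following sketch uses a hypothetical `vote_redundant` helper with made-up readings and tolerance. It rejects any reading that sits too far from the median and averages the rest.

```python
import statistics

def vote_redundant(readings, tolerance):
    """Fuse same-type sensor readings, excluding outliers.

    Returns (fused_value, rejected_indices). A reading is rejected when it
    differs from the median of all readings by more than `tolerance`.
    """
    center = statistics.median(readings)
    kept, rejected = [], []
    for index, value in enumerate(readings):
        if abs(value - center) <= tolerance:
            kept.append(index)
        else:
            rejected.append(index)
    if not kept:
        return center, rejected  # no consensus: fall back to the median
    fused = statistics.fmean(readings[i] for i in kept)
    return fused, rejected

# Four redundant altitude readings in metres; the last one is faulty.
fused_alt, rejected_idx = vote_redundant([9.8, 9.9, 10.1, 14.0], tolerance=0.5)
print(f"fused = {fused_alt:.2f} m, rejected sensors: {rejected_idx}")
```

The median is used as the reference because a single faulty unit cannot drag it far, unlike the mean.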

Pre-flight Calibration and Health Monitoring

Rigorous pre-flight calibration and continuous health monitoring of onboard sensors are critical preventive measures.

- Routine Calibration: Ensuring that IMUs are properly calibrated for bias and scale factor, and that magnetometers are compensated for hard-iron and soft-iron effects, minimizes inherent sensor inaccuracies that can lead to correlated errors.

- Real-time Anomaly Detection: Implementing algorithms that continuously monitor the output of each sensor for unexpected behavior, drift, or statistical anomalies can flag potential issues before they become severe enough to cause significant multicollinearity. This might involve checking for expected ranges of readings, rate of change, or consistency across redundant sensors.
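
A minimal version of the range and rate-of-change checks mentioned above might look like the sketch below. The class name `SensorHealthMonitor`, the fault labels, and the barometer limits are illustrative assumptions. Real limits would come from the sensor datasheet and the vehicle's flight envelope.

```python
class SensorHealthMonitor:
    """Flags readings that leave an expected range or change too quickly."""

    def __init__(self, low, high, max_rate):
        self.low, self.high = low, high
        self.max_rate = max_rate  # largest plausible change per second
        self.last_value = None
        self.last_time = None

    def check(self, value, timestamp):
        """Return a list of fault labels for this sample (empty if healthy)."""
        faults = []
        if not (self.low <= value <= self.high):
            faults.append("out_of_range")
        if self.last_time is not None and timestamp > self.last_time:
            rate = abs(value - self.last_value) / (timestamp - self.last_time)
            if rate > self.max_rate:
                faults.append("rate_exceeded")
        self.last_value, self.last_time = value, timestamp
        return faults

# Hypothetical barometric altitude limits: -100..5000 m, at most 20 m/s.
baro = SensorHealthMonitor(low=-100.0, high=5000.0, max_rate=20.0)
first = baro.check(100.0, 0.0)    # healthy
second = baro.check(100.5, 0.1)   # healthy, about 5 m/s
jump = baro.check(130.0, 0.2)     # implausible jump
spike = baro.check(9000.0, 0.3)   # out of range and implausible jump
print(first, second, jump, spike)
```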

Robustness to Environmental Conditions

Understanding how environmental factors can induce correlations and designing systems to cope is paramount.

- GPS Signal Quality Assessment: Navigation systems can incorporate algorithms to assess the quality of the GPS signal (e.g., number of satellites, Dilution of Precision – DOP values). In areas with poor GPS reception, the system can automatically increase its reliance on other sensors and reduce its susceptibility to GPS-induced multicollinearity.

- Vibration Damping: For IMUs, effective vibration damping can prevent external disturbances from introducing correlated noise into the accelerometer and gyroscope readings, which could otherwise lead to spurious correlations with other sensor data.
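
The GPS quality assessment described in the first bullet can be sketched by scaling the measurement variance with HDOP, using the usual approximation that position error is roughly DOP times the user equivalent range error (UERE). The function name, the 5 m UERE, and the rejection thresholds are assumptions chosen for illustration. The four-satellite minimum reflects what a 3D fix requires.

```python
def gps_position_variance(hdop, num_sats, uere_sigma_m=5.0,
                          min_sats=4, max_hdop=5.0):
    """Return a horizontal measurement variance in m^2, or None to skip the fix.

    uere_sigma_m and max_hdop are assumed tuning values, not standard constants.
    """
    if num_sats < min_sats or hdop > max_hdop:
        return None  # too unreliable: let the other sensors carry the estimate
    sigma = hdop * uere_sigma_m
    return sigma * sigma

good_fix = gps_position_variance(hdop=0.9, num_sats=12)
degraded_fix = gps_position_variance(hdop=3.0, num_sats=6)
poor_geometry = gps_position_variance(hdop=8.0, num_sats=5)
too_few_sats = gps_position_variance(hdop=1.0, num_sats=3)
print(good_fix, degraded_fix, poor_geometry, too_few_sats)
```

Passing the returned variance to the filter as its GPS measurement noise lowers the weight of a degraded fix gradually, while a `None` result skips the update entirely.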

By applying these strategies, drone manufacturers and operators can build and deploy aerial systems that handle complex navigation tasks while remaining resilient to the statistical challenges posed by multicollinearity, which in turn supports safer and more effective operations.