The digital age has seen explosive growth in data. From the personal photos we take to the vast datasets collected by scientific instruments, the sheer volume of information being generated is staggering. For years, the terabyte (TB) has been the benchmark for massive storage, a unit of measurement that once seemed almost incomprehensible. Yet, as technology continues its relentless march forward, we are increasingly confronted with a new reality: datasets that exceed the terabyte scale. This isn’t just about larger hard drives; it’s about fundamental shifts in how we generate, process, and store information, particularly within fields like advanced imaging and remote sensing, where every pixel and every scan contributes to an ever-growing digital footprint.

The Insatiable Appetite for Data in Advanced Imaging

The evolution of cameras and imaging technology has been a primary driver behind the need for storage solutions beyond the terabyte. What began with relatively low-resolution digital sensors has blossomed into sophisticated imaging systems capable of capturing unprecedented detail and information. This advancement is not confined to consumer-grade equipment; it permeates scientific research, industrial inspection, and, critically, the ever-expanding world of aerial observation.

High-Resolution Imaging: Beyond Megapixels

Modern imaging sensors, particularly those found in professional photography, scientific instruments, and high-end aerial platforms, boast resolutions that dwarf older technologies. Where a few megapixels was once a headline feature, today’s sensors are measured in tens or even hundreds of megapixels. A single high-resolution photograph, especially when captured in RAW format, can consume tens of megabytes to well over 100 MB. Now, imagine a drone equipped with such a camera, capturing thousands of images over an extended survey or a cinematic production. The raw data quickly accumulates.

RAW vs. Compressed Formats: The Storage Cost of Detail

The difference between RAW and compressed image formats is significant when considering storage. RAW files contain unprocessed data directly from the sensor, offering maximum flexibility in post-processing but at the cost of considerably larger file sizes. A single RAW image from a high-end camera can range from 50 MB to over 100 MB. When you multiply this by thousands of shots, or by the data streams from multiple cameras operating simultaneously, the terabyte barrier is easily breached. Compressed formats like JPEG offer substantial space savings, but they come with an inherent loss of detail and dynamic range, which can be unacceptable for professional applications or detailed scientific analysis.
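
The arithmetic here is plain multiplication. As a minimal sketch, the function below estimates the total for a shoot; the function name and the 10,000-frame shoot are illustrative assumptions, and 1 TB is taken as 1,000,000 MB (decimal units).

```python
def survey_storage_tb(num_images: int, mb_per_image: float) -> float:
    """Total storage in decimal terabytes (1 TB = 1,000,000 MB)."""
    return num_images * mb_per_image / 1_000_000


# Hypothetical shoot: 10,000 RAW frames at 100 MB each.
print(f"{survey_storage_tb(10_000, 100):.2f} TB")  # prints "1.00 TB"

# The same shoot at 50 MB per frame.
print(f"{survey_storage_tb(10_000, 50):.2f} TB")   # prints "0.50 TB"
```

A second camera running in parallel, or a second copy kept as a backup, doubles either figure.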

Multi-Spectral and Hyperspectral Imaging: Adding Dimensions to Data

The need for data exceeding terabytes is particularly acute in the realm of multi-spectral and hyperspectral imaging. These advanced techniques go beyond capturing visible light to record information across a broader range of the electromagnetic spectrum.

Multi-Spectral Imaging: Capturing Key Wavelengths

Multi-spectral cameras capture data in several distinct spectral bands, often including visible light, near-infrared (NIR), and short-wave infrared (SWIR). This allows for the differentiation of materials based on their unique spectral signatures. For instance, in precision agriculture, multi-spectral data can help identify plant health, stress, and nutrient deficiencies invisible to the naked eye. Because every capture is stored once per band, a drone survey using a multi-spectral camera with, say, five to ten bands multiplies the storage needed per image by roughly the same factor. Repeated over many flights, large estates, or a full growing season, these datasets can accumulate to the terabyte scale and beyond.

Hyperspectral Imaging: The Ultimate in Spectral Detail

Hyperspectral imaging takes this concept to an extreme, capturing hundreds of narrow, contiguous spectral bands. This level of detail allows for the identification and characterization of materials with remarkable precision. Applications range from geological mapping and mineral exploration to environmental monitoring and identifying subtle changes in vegetation. The data generated by hyperspectral sensors is exceptionally dense. Each pixel in a hyperspectral image is not just a color value but a complete spectrum. The size of a hyperspectral data cube, which spans two spatial dimensions and one spectral dimension, is the product of those dimensions, so it grows quickly as resolution or band count increases. A survey at high spatial resolution can produce hundreds of gigabytes to terabytes of data, and large campaigns require storage well beyond a single conventional terabyte drive.
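
To make the effect of band count concrete, here is a rough sketch of the uncompressed size of one image cube. The scene dimensions, the 16-bit sample depth, and the function name are assumptions chosen for illustration, not figures from any particular sensor.

```python
def cube_size_bytes(rows: int, cols: int, bands: int, bytes_per_sample: int = 2) -> int:
    """Uncompressed size of one image cube: each pixel stores one sample per band."""
    return rows * cols * bands * bytes_per_sample


# Hypothetical 2000 x 2000 pixel scene with 16-bit (2-byte) samples.
multispectral = cube_size_bytes(2000, 2000, bands=5)
hyperspectral = cube_size_bytes(2000, 2000, bands=300)

print(f"5 bands:   {multispectral / 1e9:.2f} GB")  # prints "5 bands:   0.04 GB"
print(f"300 bands: {hyperspectral / 1e9:.2f} GB")  # prints "300 bands: 2.40 GB"
```

Under these assumptions the 300-band cube is 60 times the size of the 5-band one for the same scene, and a survey consists of many such cubes.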

Video and High-Frame-Rate Capture: The Constant Stream

High-definition video, especially at high frame rates and bit depths, is another significant contributor to data volume. While 4K video has become commonplace, the demands of advanced imaging often push this further.

8K and Beyond: Unprecedented Resolution for Aerial Cinematography

The pursuit of cinematic quality in aerial filmmaking has led to the adoption of 8K and even higher resolution video capture. An 8K video stream, even when compressed, generates an enormous amount of data per second. For context, a single minute of uncompressed 8K RAW video runs from tens to hundreds of gigabytes, depending on bit depth and frame rate, so an hour of footage can reach several terabytes. While professional workflows rarely deal with entirely uncompressed video for extended periods, even compressed 8K footage from a lengthy drone shoot, with multiple takes and backup copies, can push storage needs into the tens of terabytes.

High-Frame-Rate and Slow-Motion Capture: Capturing Fleeting Moments

The ability to capture fast-moving subjects or crucial transient events often necessitates high-frame-rate video recording. Capturing action at 120fps, 240fps, or even higher in resolutions like 4K means that several times more frames are recorded per second than in standard 24 to 30fps video. At a given resolution and bit depth, the uncompressed data rate scales in proportion to the frame rate. For a drone tasked with capturing high-speed industrial processes or wildlife behavior in slow motion, the resulting footage can rapidly consume terabytes of storage.
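
The video data rates in this section can be estimated from resolution, bit depth, and frame rate. The sketch below is one way to do that for uncompressed footage; the function name, the 12-bit depth, and the one-sample-per-pixel RAW model are assumptions, and real cameras and codecs will differ.

```python
def uncompressed_bytes_per_minute(width, height, fps,
                                  bits_per_sample=12, samples_per_pixel=1):
    """Data volume of one minute of uncompressed video.

    samples_per_pixel=1 models Bayer-pattern RAW (one sample per photosite);
    use 3 for full RGB.
    """
    bytes_per_frame = width * height * samples_per_pixel * bits_per_sample / 8
    return bytes_per_frame * fps * 60


uhd_24 = uncompressed_bytes_per_minute(3840, 2160, fps=24)
uhd_240 = uncompressed_bytes_per_minute(3840, 2160, fps=240)
eight_k_24 = uncompressed_bytes_per_minute(7680, 4320, fps=24)

print(f"4K at 24 fps:  {uhd_24 / 1e9:.1f} GB/min")      # 17.9 GB/min
print(f"4K at 240 fps: {uhd_240 / 1e9:.1f} GB/min")     # 179.2 GB/min
print(f"8K at 24 fps:  {eight_k_24 / 1e9:.1f} GB/min")  # 71.7 GB/min
```

Under these assumptions, moving from 24 to 240 fps multiplies the data rate by ten, and moving from 4K to 8K multiplies it by four.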

The Scale of Data in Remote Sensing and Scientific Observation

Beyond conventional imaging, fields like remote sensing and scientific observation are generating data volumes that are fundamentally redefining our understanding of “big data.” These applications often involve the continuous collection of vast datasets over large geographical areas or extended periods.

LiDAR Scanning: Creating Dense 3D Worlds

Light Detection and Ranging (LiDAR) technology uses lasers to measure distances and create highly accurate 3D representations of the environment. LiDAR systems, particularly those mounted on aerial platforms like drones and aircraft, are used for a wide array of applications, including topographic mapping, infrastructure inspection, forestry management, and urban planning.

Point Clouds: The Building Blocks of 3D Models

The output of a LiDAR scan is a point cloud – a collection of millions or even billions of points, each with X, Y, and Z coordinates, and often additional attributes like intensity and color. A single LiDAR survey of a moderately sized area can generate point clouds containing hundreds of millions to billions of points. Storing these massive point clouds, especially when combined with associated data like intensity values, RGB color information, and classification attributes, can push storage requirements to hundreds of gigabytes or into the terabytes. Larger projects, such as city-wide mapping or extensive infrastructure surveys, can reach hundreds of terabytes, and regional or national programs can approach the petabyte (PB) scale.
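
A back-of-the-envelope estimate of point cloud size multiplies the point count by the bytes stored per point. The per-point layout below is an illustrative assumption, not the record layout of any specific file format, and the 10-billion-point survey is hypothetical.

```python
# Assumed uncompressed per-point layout (illustrative only).
BYTES_PER_FIELD = {
    "xyz": 3 * 8,         # three 64-bit floating-point coordinates
    "intensity": 2,       # 16-bit return intensity
    "rgb": 3 * 2,         # three 16-bit color channels
    "classification": 1,  # 8-bit class code
}


def point_cloud_bytes(num_points: int, fields=BYTES_PER_FIELD) -> int:
    """Uncompressed size: point count times the summed field widths."""
    return num_points * sum(fields.values())


# Hypothetical large survey: 10 billion points at 33 bytes each.
size = point_cloud_bytes(10_000_000_000)
print(f"{size / 1e12:.2f} TB")  # prints "0.33 TB"
```

Real formats often store coordinates as scaled integers and apply compression, so files on disk are usually smaller than this estimate, while derived products such as meshes and rasters add to the total.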

Radar Imaging: Penetrating the Surface

Synthetic Aperture Radar (SAR) is an active imaging technology that can penetrate clouds and darkness, providing valuable data for Earth observation, disaster monitoring, and reconnaissance. SAR systems, often deployed on satellites and aircraft, generate complex datasets that require substantial storage.

Interferometric SAR (InSAR): Measuring Displacement

Interferometric SAR (InSAR) is a technique that uses multiple SAR images to measure ground surface deformation with millimeter-level accuracy. This is crucial for monitoring seismic activity, land subsidence, and the structural integrity of large engineering projects. The processing of InSAR data, which involves comparing multiple large SAR datasets, results in further data generation that adds to the overall storage burden.

Environmental Monitoring and Scientific Datasets

Scientific endeavors, particularly in environmental research, often involve continuous data streams from various sensors.

Weather and Climate Data: Global Scale Observations

The collection of weather and climate data, from ground-based sensors and atmospheric probes to satellite observations, generates petabytes of information annually. While not always directly linked to drone imaging, these represent vast datasets that inform our understanding of the planet. Drones equipped with atmospheric sensors and deployed for localized environmental studies contribute to this data stream, further emphasizing the need for beyond-terabyte storage.

Geological and Geophysical Surveys: Deep Earth Insights

Large-scale geological and geophysical surveys, whether conducted from the ground or from aerial platforms, involve the collection of seismic, magnetic, and gravimetric data. These datasets are often massive, requiring specialized storage and processing infrastructure.

Beyond Terabytes: Petabytes and Exabytes

As the scale of data generation continues to accelerate, the terabyte has inevitably been superseded by larger units of measurement.

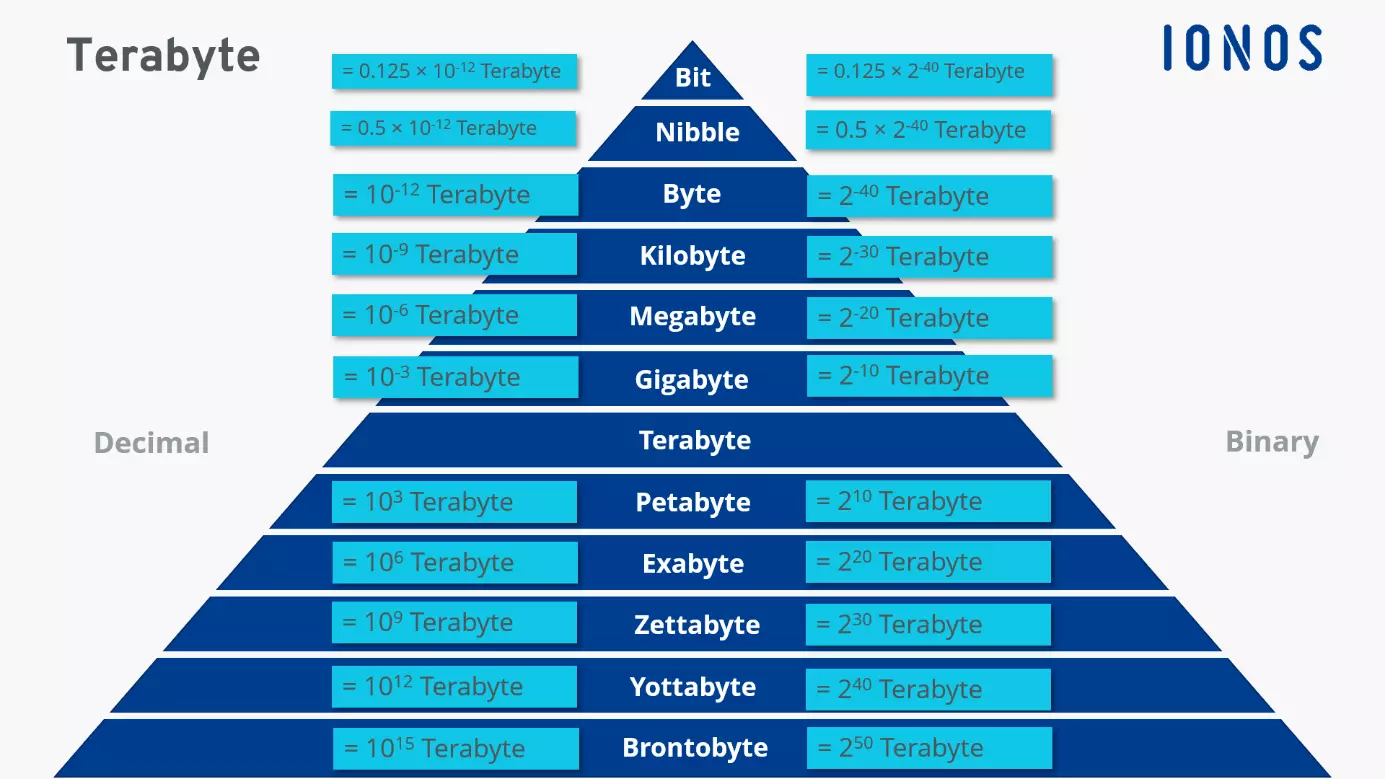

The Petabyte (PB): A Thousand Terabytes

A petabyte (PB) is equal to 1,000 terabytes. This is the scale at which large research institutions, major cloud providers, and national scientific projects operate. For instance, the Large Hadron Collider generates tens of petabytes of data per year. Similarly, comprehensive aerial surveys using multiple advanced sensors over vast geographical regions can reach petabyte scales.

The Exabyte (EB): The Dawn of Immense Data

An exabyte (EB) is 1,000 petabytes, or one million terabytes. This unit of measurement is becoming increasingly relevant as global data creation estimates continue to climb. While exabyte-scale data is still primarily the domain of the largest data centers and global initiatives, the trajectory suggests that the data volumes discussed in advanced imaging and remote sensing will continue their ascent towards these colossal figures.
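
The relationships between these units can be checked with a small conversion helper. This sketch assumes decimal (SI) prefixes, where each step is a factor of 1,000; binary units such as TiB and PiB use factors of 1,024 instead. The names `BYTES_PER_UNIT` and `convert` are illustrative.

```python
# Decimal (SI) storage units expressed in bytes.
BYTES_PER_UNIT = {
    "MB": 10**6,
    "GB": 10**9,
    "TB": 10**12,
    "PB": 10**15,
    "EB": 10**18,
}


def convert(value: float, from_unit: str, to_unit: str) -> float:
    """Convert a quantity between two decimal storage units."""
    return value * BYTES_PER_UNIT[from_unit] / BYTES_PER_UNIT[to_unit]


print(convert(1, "PB", "TB"))  # 1000.0
print(convert(1, "EB", "PB"))  # 1000.0
print(convert(1, "EB", "TB"))  # 1000000.0
```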

Conclusion: Preparing for a Data-Rich Future

The question “what is bigger than a terabyte?” is no longer hypothetical. It is a practical reality for anyone working at the cutting edge of imaging technology, remote sensing, and scientific data collection. Whether it’s capturing the intricate details of a multi-spectral landscape, mapping the precise topography of a region with LiDAR, or filming cinematic vistas in ultra-high definition, the data requirements are pushing us into the realm of petabytes and beyond. Understanding these evolving data scales is crucial for selecting appropriate storage solutions, developing efficient data management strategies, and ultimately, for unlocking the full potential of the insights that advanced imaging and observational technologies can provide. The future of data is undeniably immense, and our capacity to store and process it must evolve in kind.