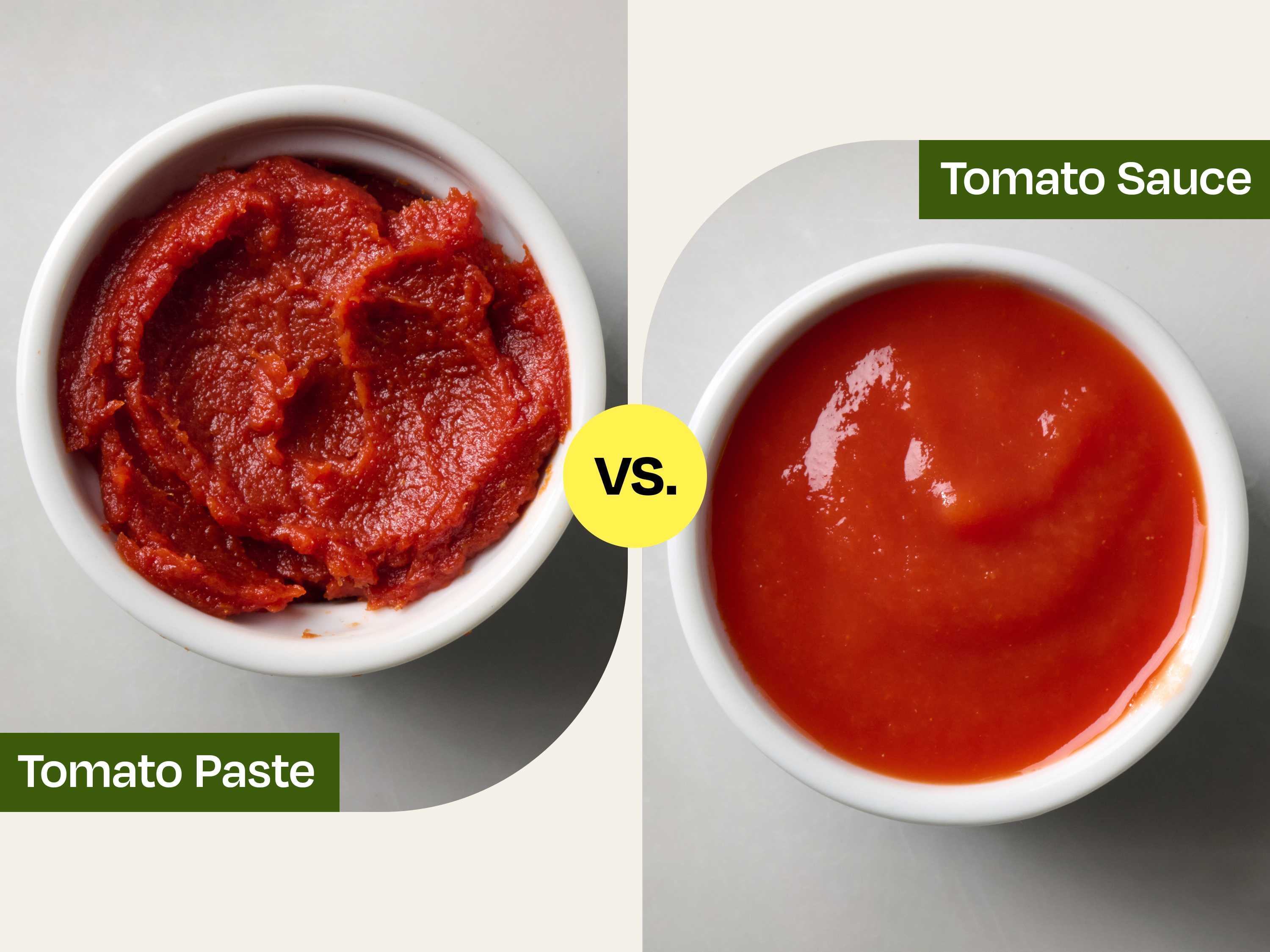

The rapid evolution of unmanned aerial vehicles (UAVs) has transformed them from remote-controlled gadgets into sophisticated flying robots. At the heart of this transformation lies Artificial Intelligence (AI), a broad field that lets drones perceive their surroundings, make decisions, and act with far less pilot input. However, a common misconception conflates the underlying AI algorithms with the integrated features they enable. To draw an analogy from daily life, consider the difference between tomato paste and tomato sauce. Tomato paste is a concentrated, fundamental ingredient: the essence of tomato flavor, which needs further processing and dilution to become a usable sauce. Tomato sauce, on the other hand, is a refined, ready-to-use product, built upon that paste but enhanced with other ingredients and preparation.

Similarly, in the realm of drones, we must distinguish between Core AI Algorithmic Intelligence – the foundational, concentrated computational ‘paste’ of machine learning and deep learning models – and Integrated Autonomous Flight & Smart Features – the refined, user-ready ‘sauce’ that allows drones to perform complex tasks with unprecedented autonomy and intelligence. Understanding this distinction is crucial for appreciating the current capabilities of drone technology and anticipating its future trajectory. It helps engineers, developers, and end-users alike to grasp where the fundamental research is happening versus where practical applications are manifesting.

The Foundation: Core AI Algorithmic Intelligence

At its most fundamental level, core AI algorithmic intelligence refers to the sophisticated computational models and frameworks that allow machines to learn from data, recognize patterns, make decisions, and even adapt without explicit programming for every scenario. These are the raw ingredients, the concentrated essence that gives drones their ‘brainpower.’

Machine Learning & Deep Learning Architectures

The bedrock of drone AI lies in several machine learning (ML) and deep learning (DL) architectures and training paradigms. These include:

- Neural Networks (NNs): The foundational structure, inspired by the human brain, allowing systems to learn from large datasets.

- Convolutional Neural Networks (CNNs): Primarily used for processing visual data, CNNs are vital for drones to “see” and interpret their surroundings. They excel at image recognition, object detection, and classifying elements within a scene, enabling drones to identify obstacles, subjects for tracking, or points of interest for inspection.

- Recurrent Neural Networks (RNNs) & Long Short-Term Memory (LSTM) networks: RNNs, and the LSTM variant designed to retain information over longer sequences, are crucial for processing sequential data such as flight telemetry over time, predicting future movements, or understanding dynamic environmental changes. They give drones a sense of temporal awareness.

- Reinforcement Learning (RL): A powerful paradigm where AI agents learn optimal behaviors by interacting with an environment and receiving rewards or penalties. RL is particularly significant for developing adaptive flight control systems, allowing drones to learn how to navigate complex, dynamic environments or perform intricate maneuvers through trial and error, much like a human pilot gains experience.

These architectures are the “paste” – dense, potent, and capable of a vast array of transformations, but not yet a direct, usable product for the end-user. They represent the mathematical models and computational processes that give rise to intelligence.
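To make the reinforcement learning idea above concrete, here is a minimal sketch of tabular Q-learning. It is not how a production flight controller is trained. The "environment" is a hypothetical one-dimensional corridor of six cells, the agent starts at the left end, and the reward values, learning rate, and exploration rate are all illustrative assumptions. It only shows the reward-and-penalty loop in its simplest form.

```python
import random


def train_corridor_agent(n_cells=6, episodes=2000, alpha=0.5, gamma=0.9,
                         epsilon=0.2, seed=0):
    """Tabular Q-learning on a toy 1-D corridor whose goal is the right-most cell."""
    rng = random.Random(seed)
    moves = (-1, +1)                      # action 0 = left, action 1 = right
    q = [[0.0, 0.0] for _ in range(n_cells)]
    goal = n_cells - 1
    for _ in range(episodes):
        state = 0
        for _ in range(100):              # cap the episode length
            # Epsilon-greedy: mostly exploit the current estimate, sometimes explore.
            if rng.random() < epsilon:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            nxt = min(max(state + moves[action], 0), goal)
            # Reward for reaching the goal, small penalty for every other step.
            reward = 1.0 if nxt == goal else -0.01
            target = reward if nxt == goal else reward + gamma * max(q[nxt])
            q[state][action] += alpha * (target - q[state][action])
            state = nxt
            if state == goal:
                break
    return q


def greedy_policy(q):
    """Best action per non-goal cell according to the learned Q-table."""
    return ["left" if left > right else "right" for left, right in q[:-1]]


policy = greedy_policy(train_corridor_agent())
print(policy)
```

After training, the greedy policy should point toward the goal from every cell. The agent was never told which direction is correct; it inferred this from the rewards alone.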

Data Processing & Perception

Core AI algorithms are the engine behind a drone’s ability to perceive its environment. Drones are equipped with a suite of sensors: high-resolution cameras (RGB, thermal, multispectral), LiDAR scanners, ultrasonic sensors, Inertial Measurement Units (IMUs), and GPS modules. The AI turns this raw sensor data into an understanding of the scene through:

- Object Recognition and Tracking: Using CNNs, AI can accurately identify and classify objects (e.g., people, vehicles, power lines, specific crop diseases). This extends to tracking these objects through complex environments, compensating for occlusions or changes in lighting.

- Semantic Segmentation: This goes beyond simple object detection by classifying every pixel in an image, effectively understanding the “meaning” of different areas (e.g., distinguishing between sky, ground, water, buildings, and vegetation). This detailed environmental understanding is critical for safe navigation and intelligent interaction.

- Simultaneous Localization and Mapping (SLAM): A cornerstone of autonomous navigation, SLAM algorithms allow a drone to build a map of an unknown environment while simultaneously keeping track of its own location within that map. This is vital for indoor flight, GPS-denied environments, or creating 3D models of complex structures. The core AI processes raw sensor data from vision and inertial sensors to continuously update the drone’s understanding of its position and surroundings.
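As a small illustration of the tracking step described above, the sketch below associates existing tracks with new detections using intersection over union (IoU), a common overlap measure for bounding boxes. It assumes a detector (for example a CNN) has already produced boxes in `(x_min, y_min, x_max, y_max)` pixel form. The function names, the greedy matching strategy, and the `min_iou` threshold are illustrative choices, not any vendor's implementation.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    overlap_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    overlap_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = overlap_w * overlap_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def match_tracks(tracks, detections, min_iou=0.3):
    """Greedily assign each track id to the index of its best unused detection."""
    candidates = sorted(
        ((iou(box, det), track_id, j)
         for track_id, box in tracks.items()
         for j, det in enumerate(detections)),
        reverse=True,
    )
    assigned, used = {}, set()
    for score, track_id, j in candidates:
        if score < min_iou:
            break                          # remaining pairs overlap too little
        if track_id in assigned or j in used:
            continue
        assigned[track_id] = j
        used.add(j)
    return assigned


# Boxes from the previous frame, keyed by a hypothetical track id.
tracks = {"person": (10, 10, 50, 90), "car": (100, 40, 180, 80)}
# Detections in the new frame; the last one is an unrelated new object.
detections = [(104, 42, 184, 82), (12, 11, 52, 91), (300, 300, 320, 320)]
print(match_tracks(tracks, detections))
```

Tracks left unmatched here would be the occlusion case mentioned above. A fuller tracker would typically keep them alive for a few frames using a motion model rather than dropping them immediately.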

Predictive Analytics & Decision-Making Frameworks

Beyond mere perception, core AI provides drones with the ability to anticipate and decide. Predictive analytics, driven by ML models, can forecast the trajectory of moving objects, identify potential collision risks, or predict equipment failure based on operational data. Decision-making frameworks, often employing algorithms like decision trees, neural networks, or sophisticated planning agents, allow the drone to choose the best available action in real time, based on its perceived environment and mission objectives. This might involve adjusting a flight path to compensate for a sudden gust of wind, prioritizing a target during a search, or selecting the most energy-efficient route. These capabilities are the intelligent core, working beneath the surface of any visible drone action.
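The simplest form of the collision-risk prediction mentioned above can be sketched with plain geometry rather than a learned model. Assuming both the drone and the other object keep a constant velocity in a flat 2-D plane (a strong simplification), the closest point of approach gives the moment and distance at which they will be nearest. The function name and the five-second horizon are illustrative assumptions.

```python
import math


def closest_approach(p_drone, v_drone, p_obj, v_obj, horizon_s=5.0):
    """Return (time_s, distance_m) of closest approach within the horizon.

    Positions are (x, y) in metres and velocities are (vx, vy) in m/s.
    Both bodies are assumed to hold a constant velocity.
    """
    # Work in the drone's frame: relative position and relative velocity.
    rx, ry = p_obj[0] - p_drone[0], p_obj[1] - p_drone[1]
    vx, vy = v_obj[0] - v_drone[0], v_obj[1] - v_drone[1]
    speed_sq = vx * vx + vy * vy
    # Minimise |r + v t|^2 over t, which gives t = -(r . v) / |v|^2.
    t = 0.0 if speed_sq == 0 else -(rx * vx + ry * vy) / speed_sq
    t = min(max(t, 0.0), horizon_s)       # only look ahead, and only so far
    return t, math.hypot(rx + vx * t, ry + vy * t)


# Drone flying east at 10 m/s; an object crossing its path from the south.
t, d = closest_approach((0, 0), (10, 0), (40, -20), (0, 5))
print(f"closest approach in {t:.1f} s at {d:.1f} m")  # closest approach in 4.0 s at 0.0 m
```

A decision layer could then compare the predicted distance with a safety margin and trigger a reroute when it falls below it. A learned predictor would replace the constant-velocity assumption with a forecast of how the object is actually likely to move.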

The Application Layer: Integrated Autonomous Flight & Smart Features

While core AI algorithmic intelligence forms the backbone, it’s the Integrated Autonomous Flight & Smart Features that represent the “tomato sauce” – the refined, user-friendly applications built upon this powerful foundation. These are the features pilots and operators interact with directly, enabling complex operations with ease and precision.

Autonomous Navigation & Mission Planning

One of the most impactful applications of AI is enabling drones to fly independently. This isn’t just about following pre-set GPS waypoints; it involves dynamic, intelligent navigation:

- Real-time Obstacle Avoidance: Utilizing sophisticated sensor fusion and AI algorithms, drones can detect obstacles (trees, buildings, power lines, even birds) in real-time and dynamically adjust their flight path to bypass them safely. This moves beyond simple “stop and hover” to intelligent rerouting.

- Adaptive Path Planning: For tasks like mapping large areas or inspecting complex structures, AI can optimize flight paths for efficiency, coverage, and sensor data quality, often adapting the plan on the fly based on environmental conditions or unexpected discoveries.

- Complex Mission Execution: AI-driven systems allow drones to perform multi-faceted missions such as automated photogrammetry, cinematic tracking shots, or industrial inspections with minimal human intervention. The drone can autonomously take off, execute a detailed flight plan, capture specific data points, and return to base.
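The difference between "stop and hover" and intelligent rerouting can be shown with a toy planner. The sketch below runs a breadth-first search over a small 2-D occupancy grid and replans when a new obstacle appears. The grid, the 4-connected movement, and the function name are simplifying assumptions. Real systems generally plan in 3-D and often use algorithms such as A* or sampling-based planners.

```python
from collections import deque


def plan_path(grid, start, goal):
    """Shortest 4-connected path on a grid of 0 (free) / 1 (blocked) cells.

    Returns a list of (row, col) cells from start to goal, or None if no path exists.
    """
    rows, cols = len(grid), len(grid[0])
    parent = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:       # walk back through the parents
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] == 0 and (nr, nc) not in parent):
                parent[(nr, nc)] = cell
                queue.append((nr, nc))
    return None


grid = [[0, 0, 0, 0],
        [0, 0, 0, 0],
        [0, 0, 0, 0]]
original = plan_path(grid, (1, 0), (1, 3))
print(len(original))                      # 4 cells: straight across the middle row

grid[1][2] = 1                            # a sensor reports an obstacle on the route
rerouted = plan_path(grid, (1, 0), (1, 3))
print(len(rerouted))                      # 6 cells: the detour around the blocked cell
```

The key point is the second call. Instead of halting in front of the obstacle, the drone updates its map and asks the planner for a new route from where it currently is.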

Intelligent Tracking & Follow Modes

Modern drones often feature advanced tracking capabilities that leverage robust AI:

- Active Subject Tracking: AI algorithms allow drones to identify a human, vehicle, or animal and autonomously follow it, keeping it centered in the frame regardless of its movement. This involves sophisticated object recognition, motion prediction, and real-time camera and gimbal control.

- Spotlight & Point of Interest (POI) Modes: In these modes, the drone’s AI maintains focus on a specified subject or geographical point while the pilot is free to control the drone’s flight path around it. The AI handles the camera’s pan, tilt, and zoom to keep the subject in view, allowing for complex, cinematic shots that would be challenging or impossible for a single pilot to execute manually. These features embody the seamless integration of AI for creative and practical applications.
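The "keep it centered in the frame" behavior can be approximated with a simple proportional controller. In the sketch below, the subject's bounding box is assumed to come from an onboard detector, and the output is a pair of yaw and pitch rates for the gimbal. The function name, gain, deadband, and the linear pixel-to-angle mapping are illustrative assumptions. Shipping products are likely to add motion prediction and smoothing on top.

```python
def centering_rates(bbox, frame_size, fov_deg, gain=1.5, deadband_deg=0.5):
    """Map a subject's bounding box to (yaw_rate, pitch_rate) in deg/s.

    bbox is (x_min, y_min, x_max, y_max) in pixels, frame_size is (width, height),
    and fov_deg is (horizontal, vertical) field of view in degrees.
    """
    x_min, y_min, x_max, y_max = bbox
    width, height = frame_size
    h_fov, v_fov = fov_deg
    # Offset of the box centre from the image centre, as a fraction of the frame.
    dx = ((x_min + x_max) / 2 - width / 2) / width
    dy = ((y_min + y_max) / 2 - height / 2) / height
    # Approximate the angular error as offset fraction times field of view.
    yaw_err = dx * h_fov
    pitch_err = -dy * v_fov               # image y grows downward

    def rate(err):
        # Ignore tiny errors so the gimbal does not jitter around the centre.
        return gain * err if abs(err) > deadband_deg else 0.0

    return rate(yaw_err), rate(pitch_err)


# Subject sits right of centre, vertically centred, in a 1920x1080 frame.
print(centering_rates((1340, 440, 1540, 640), (1920, 1080), (80, 50)))  # (30.0, 0.0)
```

Run on every frame, this loop turns the gimbal toward the subject in proportion to how far off-centre it is, which is the basic mechanism behind the follow and Spotlight-style modes described above.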

Advanced Imaging & Data Capture Systems

AI doesn’t just fly the drone; it also significantly enhances its primary function: data collection.

- AI-Powered Gimbals & Stabilization: While mechanical gimbals provide physical stabilization, AI further refines this by predicting motion and making micro-adjustments to keep footage smooth, even in challenging conditions.

- Intelligent Framing & Composition: Some drones use AI to suggest or automatically adjust camera angles and framing for optimal cinematic composition, mimicking techniques used by professional cinematographers.

- Automated Data Collection for Mapping & Inspection: For applications like surveying, agriculture, or infrastructure inspection, AI-driven systems can automatically identify relevant areas, optimize camera settings, and ensure comprehensive data capture, reducing the need for extensive post-processing and increasing accuracy.
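For mapping missions, "comprehensive data capture" largely comes down to geometry that software can compute before takeoff. The sketch below uses the standard ground sampling distance (GSD) relation for a camera pointing straight down and derives how far apart parallel flight lines should be for a chosen side overlap. The camera figures, the 70% overlap, and the function names are illustrative assumptions, not the specification of any particular drone.

```python
import math


def ground_sampling_distance(sensor_width_mm, focal_length_mm, altitude_m, image_width_px):
    """Ground distance covered by one pixel, in metres, for a nadir-pointing camera."""
    return (sensor_width_mm * altitude_m) / (focal_length_mm * image_width_px)


def survey_lines(area_width_m, footprint_width_m, sidelap=0.7):
    """Return (number_of_lines, spacing_m) for parallel passes across an area.

    Lines are placed at both edges of the area and every spacing_m in between.
    """
    spacing = footprint_width_m * (1 - sidelap)
    lines = math.ceil(area_width_m / spacing) + 1
    return lines, spacing


# Assumed camera: 13.2 mm wide sensor, 8.8 mm lens, 5472 px wide images, flown at 100 m.
gsd = ground_sampling_distance(13.2, 8.8, 100, 5472)
footprint = gsd * 5472                    # ground width covered by one image
lines, spacing = survey_lines(400, footprint)
print(f"GSD {gsd * 100:.2f} cm/px, footprint {footprint:.0f} m, "
      f"{lines} lines about {spacing:.0f} m apart")
```

An automated planner can run this kind of calculation in reverse: given a required resolution, it picks the altitude and line spacing, then generates the waypoints for the operator.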

Bridging the Gap: From Algorithm to Application

The journey from a raw AI algorithm to a polished, integrated feature is complex and requires significant engineering.

The Role of Software Frameworks & SDKs

The gap is often bridged by sophisticated software frameworks and Software Development Kits (SDKs). These provide the tools and interfaces that allow developers to harness the power of core AI algorithms and translate them into specific drone functionalities. Companies like DJI, Autel, and Parrot offer SDKs that expose API access to their drones’ underlying AI capabilities, enabling third-party developers to create specialized applications leveraging features like object detection, real-time telemetry, and flight control. These frameworks abstract away the low-level complexities of the AI, allowing for more efficient application development.

Hardware Integration & Edge Computing

For integrated autonomous features to work in real time, the AI models must run efficiently on the drone itself. This necessitates powerful onboard processors, often specialized GPUs (Graphics Processing Units) or NPUs (Neural Processing Units), capable of performing complex computations at the “edge” (i.e., on the device, rather than relying on cloud processing). Edge computing is critical for applications like obstacle avoidance, where milliseconds can mean the difference between a successful flight and a collision. It lets the drone react to its environment without the added latency of a network round trip, making real autonomy feasible.
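A quick back-of-the-envelope calculation shows why those milliseconds matter. The sketch below estimates how far a drone travels while it is still waiting for a decision, then adds the braking distance under constant deceleration. The speed, deceleration, and latency figures are illustrative assumptions rather than measurements of any specific platform or network.

```python
def distance_during_latency(speed_m_s, latency_ms):
    """Metres travelled before the drone can even begin to react."""
    return speed_m_s * latency_ms / 1000.0


def can_stop_in_time(obstacle_distance_m, speed_m_s, latency_ms, max_decel_m_s2):
    """True if reaction distance plus braking distance fits before the obstacle."""
    reaction = distance_during_latency(speed_m_s, latency_ms)
    braking = speed_m_s ** 2 / (2 * max_decel_m_s2)   # from v^2 = 2 a d
    return reaction + braking <= obstacle_distance_m


speed, decel, obstacle = 15.0, 8.0, 16.0  # m/s, m/s^2, m (assumed values)
for label, latency_ms in (("onboard inference", 20), ("cloud round trip", 200)):
    travelled = distance_during_latency(speed, latency_ms)
    ok = can_stop_in_time(obstacle, speed, latency_ms, decel)
    print(f"{label}: {travelled:.1f} m before reacting, stops in time: {ok}")
```

With these assumed numbers the braking distance alone is about 14 m, so the extra 3 m lost to a 200 ms round trip is the difference between stopping short of the obstacle and hitting it.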

Iterative Development & User Feedback

The development of integrated features is an iterative process. Core AI algorithms are continuously refined, and their applications are rigorously tested in various real-world scenarios. User feedback plays a crucial role, guiding developers in enhancing functionality, improving reliability, and making features more intuitive and robust. This cycle of development, testing, and refinement ensures that the “tomato sauce” (the features) becomes increasingly palatable and effective for the end-user.

The Impact and Future Trajectory

Understanding the distinction between core AI and integrated features illuminates the profound impact AI has on the drone industry and offers a glimpse into its future.

Enhanced Safety & Efficiency

Integrated autonomous features significantly enhance drone safety by reducing human error, automating complex maneuvers, and providing real-time hazard detection. They also dramatically increase operational efficiency across various sectors. Inspections of critical infrastructure can be completed faster and more accurately. Precision agriculture benefits from AI-driven analysis of crop health. Search and rescue operations can cover vast areas more effectively, identifying targets with greater speed.

Expanding Use Cases

The refined applications of AI are continually unlocking new possibilities. Drones are moving beyond simple aerial photography to become indispensable tools in construction for progress monitoring, in logistics for last-mile delivery, in entertainment for dynamic live event coverage, and in environmental monitoring for ecological surveys. Each new feature built upon the AI foundation broadens the drone’s utility and economic value.

The Road Ahead: Greater Autonomy & Swarm Intelligence

The future promises even greater sophistication. We can anticipate drones with heightened levels of autonomy, capable of long-duration missions with minimal human oversight, self-charging, and self-diagnosis. The frontier of swarm intelligence, where multiple drones cooperatively execute complex tasks, is also rapidly advancing. This will involve sophisticated AI algorithms enabling inter-drone communication, collaborative mapping, and synchronized actions, pushing the boundaries of what is currently possible.

In conclusion, just as tomato paste provides the concentrated flavor that forms the basis of a delicious sauce, Core AI Algorithmic Intelligence provides the fundamental computational power and learning capabilities that enable drones to perceive, reason, and act intelligently. Integrated Autonomous Flight & Smart Features, on the other hand, are the refined, user-centric applications built upon this powerful foundation: the ready-to-use ‘sauce’ that allows drones to perform sophisticated tasks with remarkable autonomy and precision. Both are indispensable, but understanding their distinct roles is key to appreciating what drone technology can do today and anticipating the innovations yet to come.