Facebook connects billions of people, and that scale makes managing content one of the platform's hardest problems. Facebook moderators play a critical, though largely unseen, role in addressing it. Although moderation has no direct connection to drone technology or flight, understanding it helps explain the operational complexity of the platforms where drone-related content is shared, from aerial cinematography to FPV racing footage.

The very existence of a platform as expansive as Facebook necessitates robust systems for ensuring that the content shared aligns with community standards and legal regulations. This is where Facebook moderators, also known as content moderators, step in. They are the human gatekeepers, tasked with reviewing and acting upon user-generated content that may violate policies. This encompasses a wide spectrum of material, including hate speech, harassment, misinformation, graphic violence, nudity, and copyright infringements. The sheer volume of content uploaded to Facebook every second demands a large, dedicated workforce to perform these essential tasks.

The Evolving Landscape of Content Moderation

The role of a Facebook moderator has evolved significantly since the platform’s inception. Initially, moderation efforts were likely more rudimentary, relying on automated systems and a smaller human oversight team. However, as the user base and content volume exploded, so did the complexity and variety of problematic content. This led to a substantial scaling up of moderation operations.

The Scale of the Operation

Meta, Facebook's parent company, relies on thousands of content moderators around the world. Many work for third-party outsourcing companies, though some are employed directly by Meta. The global nature of this workforce matters for two reasons. First, it allows 24/7 coverage, so harmful content can be addressed promptly regardless of time zone. Second, it provides linguistic diversity, enabling moderators to understand and enforce policies across many languages and cultural contexts. A phrase that is harmless in one language or culture can be abusive in another, which makes localized expertise indispensable.

The Tools of the Trade

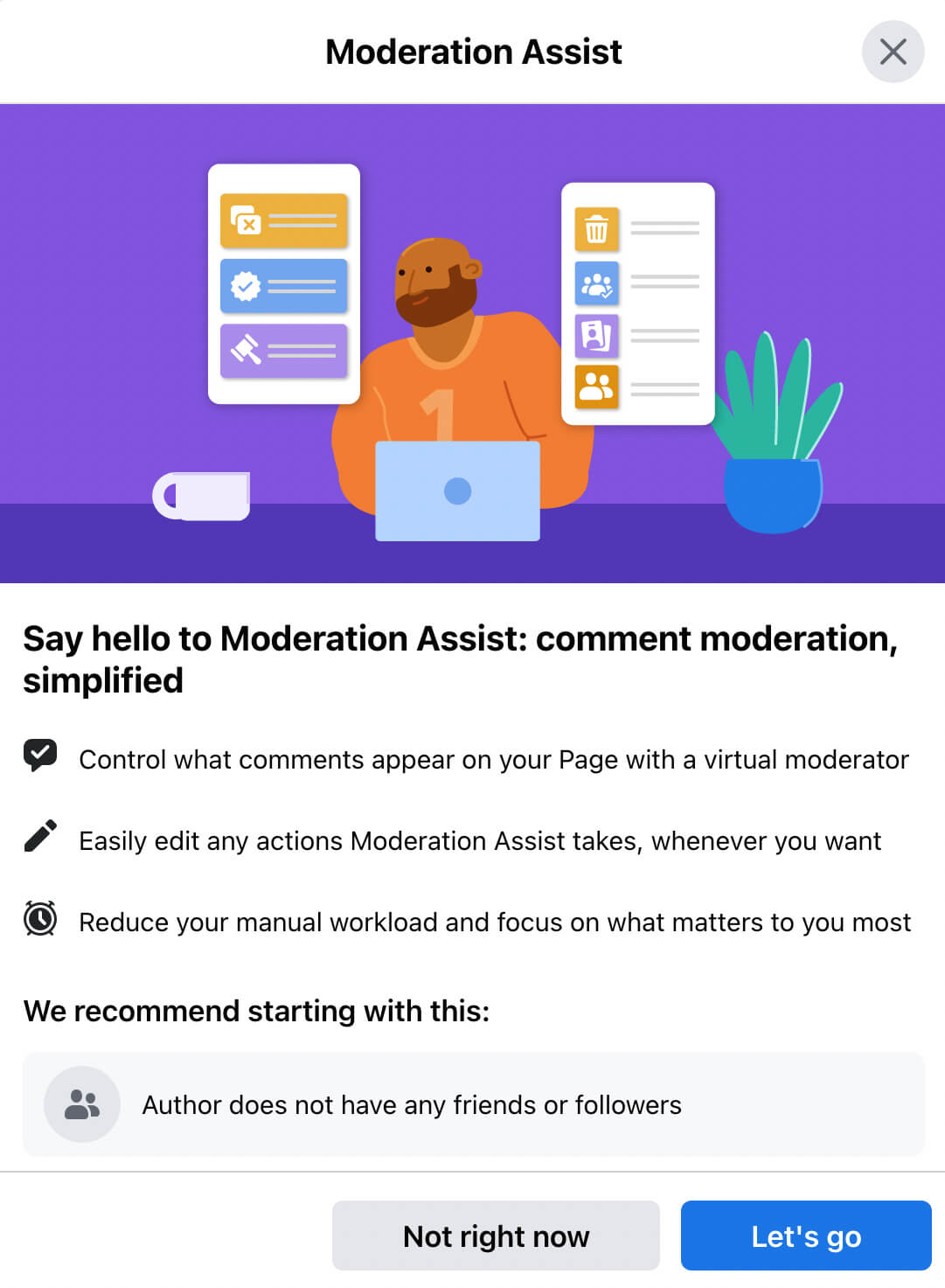

Facebook moderators do not operate in a vacuum. They are equipped with sophisticated internal tools and dashboards designed to streamline the review process. These tools often flag potentially problematic content based on algorithms that detect keywords, patterns, or user reports. Moderators then use these flags as a starting point for their in-depth review. The decision-making process involves carefully assessing the content against a comprehensive set of community standards and policies. This requires not only an understanding of the rules but also the ability to apply them with consistency and fairness, even in ambiguous situations.
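
The flag-then-review flow described above can be illustrated with a minimal sketch. It is not Facebook's actual tooling, which is not public: the keyword list, the report threshold, and the names `Post`, `flag_reasons`, and `build_review_queue` are all hypothetical, chosen only to show how automated signals might feed a queue that a human then reviews.

```python
from dataclasses import dataclass

# Hypothetical values for illustration only; real flagging systems are
# far more sophisticated than a keyword set and a report counter.
FLAGGED_KEYWORDS = {"scam", "attack"}
REPORT_THRESHOLD = 3


@dataclass
class Post:
    post_id: int
    text: str
    user_reports: int = 0


def flag_reasons(post: Post) -> list[str]:
    """Return the reasons, if any, that a post should go to human review."""
    reasons = []
    # Normalize words by stripping common punctuation and lowercasing.
    words = {w.strip(".,!?").lower() for w in post.text.split()}
    matched = sorted(words & FLAGGED_KEYWORDS)
    if matched:
        reasons.append("keyword: " + ", ".join(matched))
    if post.user_reports >= REPORT_THRESHOLD:
        reasons.append(f"user reports: {post.user_reports}")
    return reasons


def build_review_queue(posts: list[Post]) -> list[tuple[Post, list[str]]]:
    """Collect flagged posts with their reasons.

    A flag is only a starting point: the moderator still decides
    whether the content actually violates policy.
    """
    queue = []
    for post in posts:
        reasons = flag_reasons(post)
        if reasons:
            queue.append((post, reasons))
    return queue
```

Keeping the reasons alongside each queued post mirrors the idea that a flag tells the moderator where to start looking, not what to decide.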

The Responsibilities and Challenges of a Facebook Moderator

The responsibilities of a Facebook moderator are multifaceted and demanding. While their core task is to enforce community standards, the nuances of this role extend far beyond a simple binary “approve/reject” decision.

Content Review and Enforcement

The primary responsibility is to review reported content. This can involve text posts, images, videos, comments, and even live streams. Moderators must be adept at identifying content that violates Facebook’s policies, which are extensive and regularly updated. Once a violation is identified, moderators take appropriate action, which can range from removing the content and issuing a warning to a user, to temporarily or permanently disabling accounts. The goal is to create a safer and more respectful online environment for all users.
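
As a rough illustration of that range of actions, the sketch below maps a confirmed violation to one of three outcomes. The thresholds, the `severe` shortcut, and the names `Action` and `choose_action` are assumptions made for this example; Facebook's real escalation rules are not described in this article and are likely more detailed.

```python
from enum import Enum


class Action(Enum):
    REMOVE_AND_WARN = "remove content and warn the user"
    TEMPORARY_DISABLE = "remove content and temporarily disable the account"
    PERMANENT_DISABLE = "remove content and permanently disable the account"


# Hypothetical thresholds, chosen only to make the example concrete.
TEMP_DISABLE_AFTER = 2
PERMANENT_DISABLE_AFTER = 5


def choose_action(prior_violations: int, severe: bool = False) -> Action:
    """Pick an enforcement action for a confirmed violation.

    prior_violations: how many earlier violations the account has.
    severe: assumed flag for violations serious enough to skip escalation.
    """
    if severe or prior_violations >= PERMANENT_DISABLE_AFTER:
        return Action.PERMANENT_DISABLE
    if prior_violations >= TEMP_DISABLE_AFTER:
        return Action.TEMPORARY_DISABLE
    return Action.REMOVE_AND_WARN
```

For example, `choose_action(0)` yields a removal with a warning, while `choose_action(0, severe=True)` goes straight to a permanent disable.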

Dealing with Sensitive and Graphic Material

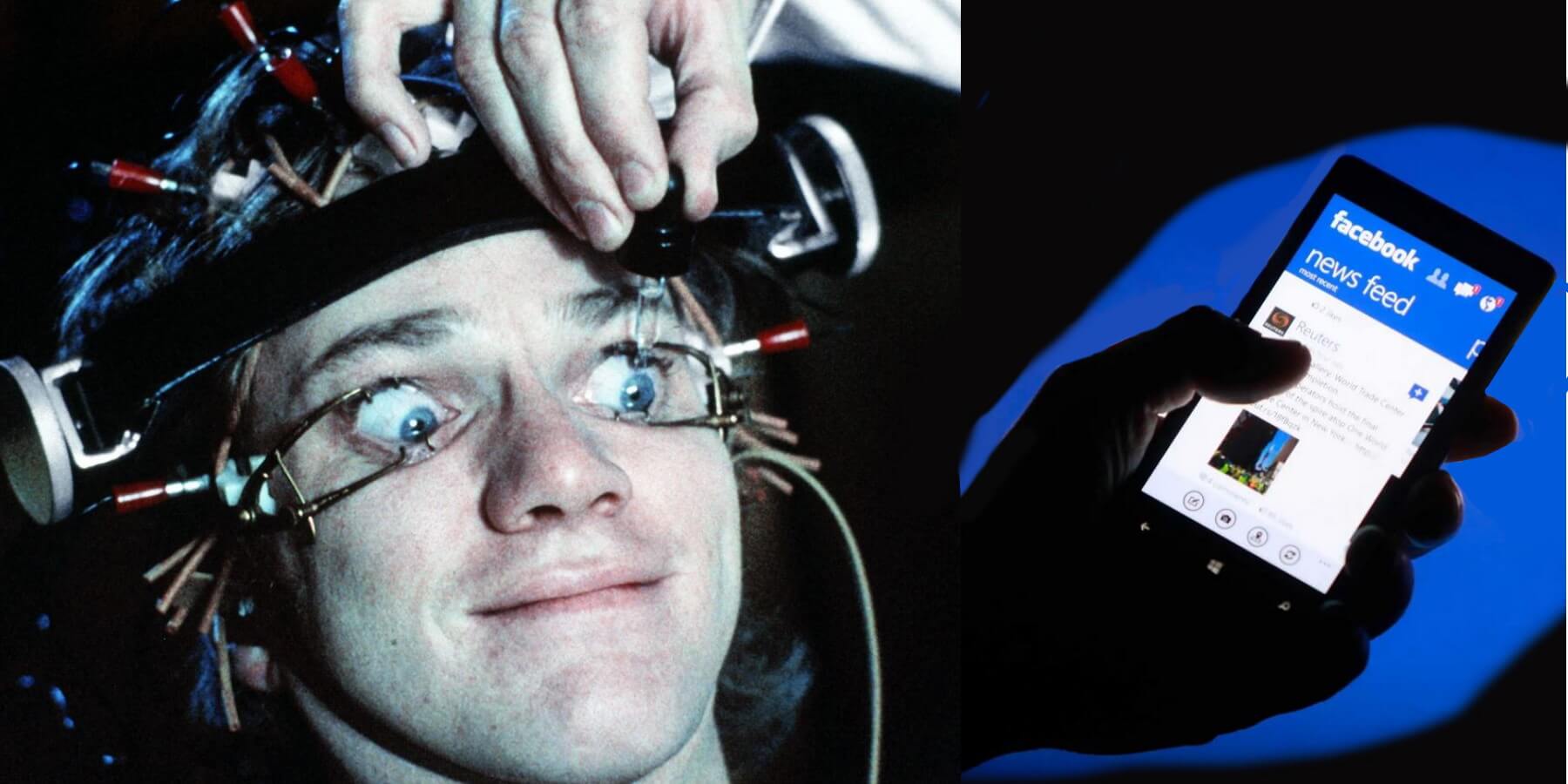

A significant challenge inherent in content moderation is the constant exposure to disturbing and often graphic material. Moderators regularly encounter violent imagery, hate speech, child exploitation material, and other deeply disturbing content. This exposure can take a significant toll on their mental and emotional well-being. Platforms like Facebook have implemented psychological support systems, including counseling and debriefing sessions, to help moderators cope with the emotional burden of their work. However, the effectiveness and sufficiency of these support systems are often subjects of ongoing debate and scrutiny.

The Nuance of Policy Interpretation

Enforcing policies is not always straightforward. Context is crucial. A piece of content that might appear problematic on its own could be permissible when viewed within a specific context, such as educational material or news reporting. Moderators must possess strong critical thinking skills and an ability to discern intent and context. This is particularly true in areas like satire, political commentary, or discussions of sensitive social issues, where lines can be blurred and interpretation can be subjective. Algorithms often struggle with these nuances, making the human moderator’s judgment indispensable.

The Speed-Accuracy Dilemma

Speed matters when addressing harmful content, but so does accuracy, and the two pull against each other. Moderators are often under pressure to review content quickly, especially during major global events or viral trends, when misinformation or hate speech can spread rapidly. Balancing speed against accuracy is a constant operational challenge: a hasty decision can wrongly remove legitimate content or, conversely, leave genuinely harmful material online.

The Impact on the Online Ecosystem

The work of Facebook moderators has a profound impact on the entire online ecosystem. Their efforts, while often behind the scenes, are instrumental in shaping the user experience on one of the world’s largest social platforms.

Shaping User Experience

By removing content that violates policies, moderators contribute to a more positive and less toxic online environment for billions of users. This includes protecting vulnerable groups from harassment and abuse, preventing the spread of dangerous misinformation (such as fake medical advice or election interference), and safeguarding children from inappropriate content. The efficacy of their work directly influences whether users feel safe and comfortable engaging on the platform.

The Interplay with Technological Advancements

While moderators are human, their work is intrinsically linked to the technological advancements of the platforms they serve. Machine learning and artificial intelligence play an increasingly significant role in flagging content, identifying patterns, and automating certain aspects of the review process. However, these technologies are not infallible. They often require human oversight and correction, particularly for complex or novel forms of harmful content. The continuous development of AI in content moderation is an ongoing area of research and implementation, aiming to improve both efficiency and accuracy.
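
One common way to combine automated scoring with human oversight is to route content by model confidence. The sketch below assumes a classifier that outputs a violation score between 0 and 1. The two thresholds and the `route` function are hypothetical and do not describe Meta's actual pipeline.

```python
# Hypothetical thresholds: only very confident predictions are acted on
# automatically; uncertain ones are sent to a human moderator.
AUTO_ACTION_THRESHOLD = 0.95
HUMAN_REVIEW_THRESHOLD = 0.50


def route(score: float) -> str:
    """Decide what happens to content given a model's violation score."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= AUTO_ACTION_THRESHOLD:
        return "auto_action"
    if score >= HUMAN_REVIEW_THRESHOLD:
        return "human_review"
    return "no_action"
```

Lowering `HUMAN_REVIEW_THRESHOLD` sends more borderline content to people, which catches more subtle cases at the cost of a larger review workload.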

The Broader Societal Implications

The policies that moderators enforce, and the way they are applied, have broader societal implications. Decisions about what constitutes acceptable speech, what information is deemed misinformation, and how to handle graphic content can influence public discourse, political processes, and cultural norms. The debate surrounding content moderation often touches upon fundamental issues of free speech, censorship, and the responsibilities of powerful technology companies in shaping the information landscape. The role of the moderator, therefore, is not merely operational but also carries significant ethical and societal weight.

The role of a Facebook moderator is complex, demanding, and essential. Moderators review a vast volume of user-generated content, balancing freedom of expression against the need for safety, respect, and accuracy. Their work, though often emotionally taxing, is fundamental to how social media platforms function and shapes the online experience of billions of people worldwide.