Jupyter Notebooks have become an indispensable tool for data scientists, researchers, and developers across technical domains. Their interactive nature and their ability to combine code, visualizations, and narrative text make them ideal for exploration, experimentation, and reproducible research. While the core functionality remains consistent, practical use of Jupyter Notebooks benefits from a deeper understanding of their installation and configuration within specific technological niches. This guide focuses on the installation process, emphasizing aspects relevant to Tech & Innovation, particularly in areas like AI, autonomous systems, and data-driven insights.

Understanding the Jupyter Ecosystem

Before diving into the installation, it’s crucial to grasp what Jupyter Notebooks represent. Jupyter is an open-source project that supports interactive data science and scientific computing across numerous programming languages. The “Notebook” is the most widely used component, a web application that allows users to create and share documents containing live code, equations, visualizations, and narrative text.

The Jupyter ecosystem extends beyond the notebook itself, encompassing:

- JupyterLab: A next-generation web-based user interface for Project Jupyter. It provides a more flexible and powerful environment, integrating notebooks, code consoles, terminals, and data file viewers.

- JupyterHub: A multi-user server that manages and proxies multiple single-user Jupyter Notebook servers. This is particularly relevant for collaborative environments and large-scale deployments in research institutions or companies.

- Kernels: These are the computational engines that execute code. The most common is the IPython kernel for Python, but Jupyter supports kernels for many other languages, including R, Julia, Scala, and even custom languages.

For those working in Tech & Innovation, particularly with AI and autonomous systems, the ability to rapidly prototype algorithms, visualize sensor data, train machine learning models, and document findings in a single, shareable format is paramount. Jupyter Notebooks facilitate this workflow by lowering the barrier to entry for experimentation and fostering collaboration among diverse technical teams.

Installation Methods: Choosing the Right Path

The installation of Jupyter Notebooks can be approached in several ways, each with its advantages depending on your existing software setup and technical expertise. For Tech & Innovation professionals, efficiency and reproducibility are key.

Method 1: Using Pip (Python Package Installer)

Pip is the standard package manager for Python, and it’s the most direct way to install Jupyter Notebooks and related components. This method is highly recommended for users who have Python already installed and configured on their system.

Prerequisites:

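- Python Installation: Ensure you have a currently supported Python 3 release installed. Notebook 7 and JupyterLab 4 require Python 3.8 or newer; the Python 3.3 minimum applied only to much older Jupyter versions. You can download Python from the official Python website (python.org). It’s good practice to have Python added to your system’s PATH environment variable.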

- Pip: Pip is usually bundled with Python installations from version 3.4 onwards. You can check if it’s installed by opening a terminal or command prompt and typing `pip --version`.
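The prerequisite checks above can also be run from Python itself. The following is a minimal sketch, not an official Jupyter tool: the helper name `check_prerequisites` and the `MIN_PYTHON` value are assumptions chosen for illustration, so adjust the minimum to match the release notes of the Jupyter version you plan to install.

```python
import importlib.util
import sys

# Assumed minimum for illustration; check the release notes of the
# Jupyter version you plan to install.
MIN_PYTHON = (3, 8)


def check_prerequisites(min_version=MIN_PYTHON):
    """Return a dict describing whether this interpreter is ready for a pip-based install."""
    return {
        "python_version": ".".join(str(part) for part in sys.version_info[:3]),
        "python_ok": sys.version_info[:2] >= tuple(min_version),
        # find_spec returns None when the pip package is not importable.
        "pip_available": importlib.util.find_spec("pip") is not None,
    }


for key, value in check_prerequisites().items():
    print(f"{key}: {value}")
```

If `pip_available` is reported as `False`, running `python -m ensurepip --upgrade` is the usual way to bootstrap pip on a standard Python installation.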

Steps:

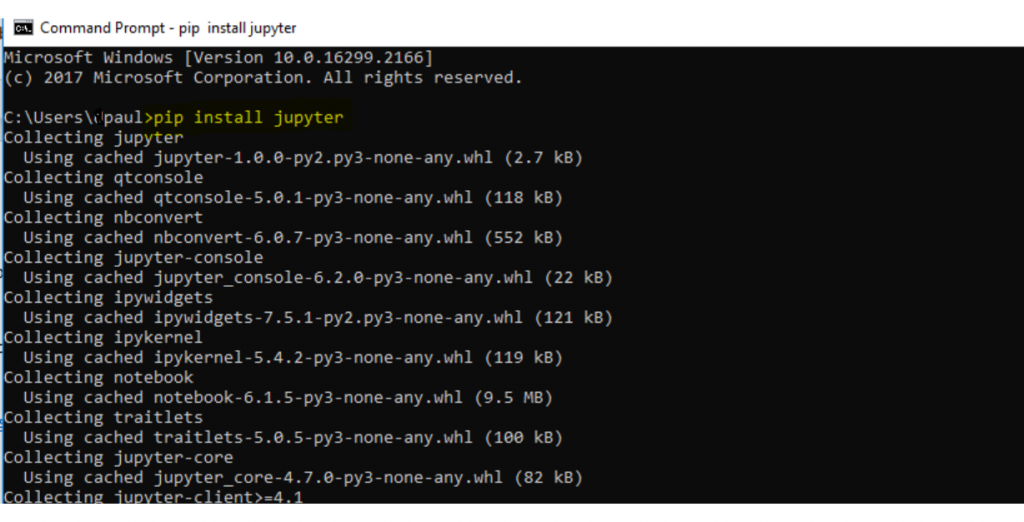

- Open your Terminal or Command Prompt:

  - On Windows: Search for “Command Prompt” or “PowerShell.”

  - On macOS: Open “Terminal” from Applications > Utilities.

  - On Linux: Open your preferred terminal emulator.

- Install Jupyter Notebook:

  The primary command to install the Jupyter Notebook application is:

  ```bash
  pip install notebook
  ```

  This command downloads and installs the core Jupyter Notebook package along with its dependencies.

- Install JupyterLab (Recommended for Enhanced Features):

  For a more modern and integrated experience, especially for complex Tech & Innovation projects involving multiple tools, installing JupyterLab is highly advisable:

  ```bash
  pip install jupyterlab
  ```

  JupyterLab offers a more flexible interface that can host notebooks, terminals, and data viewers within a single window, streamlining workflows for AI development and data analysis.

- Verify Installation:

  After the installation completes, you can verify it by running:

  ```bash
  jupyter --version
  ```

  This command should output the versions of the Jupyter core components.

- Launch Jupyter Notebook/Lab:

  To start the Jupyter Notebook server, simply type:

  ```bash
  jupyter notebook
  ```

  Or, to launch JupyterLab:

  ```bash
  jupyter lab
  ```

  Upon execution, a new tab should open in your default web browser, displaying the Jupyter file browser interface. The terminal will show server activity. To stop the server, return to the terminal and press Ctrl + C twice.
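If the verification step needs to be scripted, for example as part of a project setup check, the same information can be gathered from Python. This is a rough sketch using only the standard library; the helper names and the package list are illustrative assumptions, and it complements rather than replaces `jupyter --version`.

```python
import shutil
from importlib import metadata

# Distributions to look for; extend this tuple as needed.
PACKAGES = ("notebook", "jupyterlab", "ipykernel")


def installed_versions(packages=PACKAGES):
    """Map each distribution name to its installed version, or None if it is missing."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


def jupyter_on_path():
    """Return the full path of the `jupyter` launcher, or None if it is not on PATH."""
    return shutil.which("jupyter")


print(installed_versions())
print("jupyter launcher:", jupyter_on_path())
```

A version of `None` next to a launcher path that points somewhere unexpected usually means the package was installed into a different Python environment than the one currently active.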

Method 2: Using Conda (Anaconda/Miniconda)

Conda is a powerful, cross-platform package and environment management system. It’s particularly popular in the data science and scientific computing communities because it simplifies the installation of complex packages and their dependencies, especially those that might be challenging to build from source. For Tech & Innovation roles that frequently deal with specific software versions, diverse libraries (like TensorFlow, PyTorch, or specialized sensor processing libraries), and the need for isolated development environments, Conda is an excellent choice.

Prerequisites:

- Anaconda or Miniconda Installation: Download and install either Anaconda (a full distribution including many data science packages) or Miniconda (a minimal installer that includes Conda and Python). You can find them on the official Anaconda website (anaconda.com).

Steps:

- Open your Terminal or Conda Prompt:

  - On Windows: Search for “Anaconda Prompt.”

  - On macOS/Linux: Open your regular terminal.

- Install Jupyter Notebook and JupyterLab:

  Conda makes installing both Jupyter Notebook and JupyterLab straightforward. You can install them together for convenience:

  ```bash
  conda install notebook jupyterlab
  ```

  Conda will resolve dependencies and install the necessary packages within your active Conda environment.

- Verify Installation:

  Similar to the pip method, you can verify the installation by checking versions:

  ```bash
  jupyter --version
  ```

- Launch Jupyter Notebook/Lab:

  Once installed within a Conda environment, you launch them the same way as with pip:

  ```bash
  jupyter notebook
  ```

  or

  ```bash
  jupyter lab
  ```

Managing Environments with Conda (Crucial for Tech & Innovation):

A significant advantage of using Conda is its robust environment management. This is vital for Tech & Innovation projects where different libraries or Python versions might be required for distinct tasks.

- Creating a New Environment:

  ```bash
  conda create --name myenv python=3.9  # Replace myenv and 3.9 as needed
  ```

- Activating an Environment:

  ```bash
  conda activate myenv
  ```

- Installing Packages in an Environment:

  After activating an environment, use `conda install` or `pip install` to add packages specifically to that environment.

This isolation prevents conflicts between project dependencies and ensures a clean, reproducible setup for complex Tech & Innovation workflows.
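When several environments exist side by side, it helps to confirm which one a notebook or script is actually running in. The snippet below is a small sketch for that purpose; `describe_environment` is a hypothetical helper name. Note that `CONDA_DEFAULT_ENV` reflects the shell that started the process, so inside a notebook `sys.prefix` and `sys.executable` are the more reliable indicators.

```python
import os
import sys


def describe_environment():
    """Summarize which Python environment the current process is running in."""
    return {
        "executable": sys.executable,
        "prefix": sys.prefix,
        # Set by `conda activate`; None outside an activated Conda environment.
        "conda_env": os.environ.get("CONDA_DEFAULT_ENV"),
        # True inside a venv/virtualenv, where prefix differs from base_prefix.
        "in_venv": sys.prefix != sys.base_prefix,
    }


for key, value in describe_environment().items():
    print(f"{key}: {value}")
```

Running this in the first cell of a notebook is a quick way to catch the common mistake of installing a package into one environment while the kernel runs in another.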

Essential Configurations and Extensions for Advanced Users

While the basic installation gets you up and running, several configurations and extensions can significantly enhance the Jupyter Notebook experience, particularly for users engaged in cutting-edge Tech & Innovation.

Jupyter Kernels for Diverse Languages and Environments

For users working with technologies beyond Python, such as R for statistical analysis or Julia for high-performance scientific computing, installing specific kernels is essential.

- Installing an R Kernel:

  If you have R installed, you can install the IRkernel from the R console:

  ```r
  # In R console:
  install.packages('IRkernel')
  IRkernel::installspec()  # Register the kernel with Jupyter
  ```

  `IRkernel::installspec()` registers the R kernel for the current user, so no separate terminal step is needed. To confirm that Jupyter can see it, run `jupyter kernelspec list` in your terminal.

- Installing a Julia Kernel:

  If you have Julia installed, you can add the IJulia kernel:

  ```julia
  # In Julia REPL:
  using Pkg
  Pkg.add("IJulia")
  ```

  Installing the IJulia package registers a Julia kernel with Jupyter.

These multi-language capabilities are invaluable in Tech & Innovation where interdisciplinary work is common, allowing seamless integration of tools and languages within a single notebook interface.
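Whatever the language, a kernel becomes visible to Jupyter through a kernel spec: a directory containing a `kernel.json` file that tells Jupyter how to start the kernel process. The sketch below writes such a file for an IPython kernel into a temporary directory, purely to show its shape. The directory name `demo-kernel` and the display name are hypothetical, and nothing is registered with Jupyter; in practice `python -m ipykernel install --user --name myenv` (for Python) or the R and Julia steps above create this file for you.

```python
import json
import sys
import tempfile
from pathlib import Path


def write_kernelspec(kernel_dir, display_name="Python (demo)"):
    """Write a minimal kernel.json describing how to start an IPython kernel."""
    spec = {
        # Jupyter substitutes {connection_file} when it launches the kernel.
        "argv": [sys.executable, "-m", "ipykernel_launcher", "-f", "{connection_file}"],
        "display_name": display_name,
        "language": "python",
    }
    kernel_dir = Path(kernel_dir)
    kernel_dir.mkdir(parents=True, exist_ok=True)
    spec_path = kernel_dir / "kernel.json"
    spec_path.write_text(json.dumps(spec, indent=2), encoding="utf-8")
    return spec_path


# Demo only: the spec is written to a throwaway directory and then discarded.
with tempfile.TemporaryDirectory() as tmp:
    demo_path = write_kernelspec(Path(tmp) / "demo-kernel")
    print(demo_path.read_text(encoding="utf-8"))
```

Because `argv` points at a specific interpreter, registering one kernel spec per environment is what lets a single JupyterLab installation run notebooks against several isolated environments.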

JupyterLab Extensions

JupyterLab’s extensibility is one of its major strengths. A rich ecosystem of extensions can augment its capabilities for tasks common in Tech & Innovation, such as version control, debugging, and advanced visualization.

- Installing Extensions:

  Extensions are typically installed using the `pip` or `conda` package managers, or directly via the JupyterLab extension manager.

  ```bash
  # Install an extension via pip
  pip install jupyterlab-git  # Example: Git integration

  # Prebuilt extensions (the norm since JupyterLab 3) only need a JupyterLab restart.
  # Older source extensions additionally require a rebuild:
  jupyter lab build
  ```

  Alternatively, within JupyterLab itself, you can access the Extension Manager from the left sidebar to browse and install extensions graphically.

- Useful Extensions for Tech & Innovation:

  - Git Integration: Track changes, commit, and manage your notebooks directly within JupyterLab, crucial for collaborative development and reproducible research.

  - Variable Inspector: Visualize the current state of your program’s variables, aiding debugging and understanding complex algorithms.

  - Debugger: Step through your code line by line, inspect variables, and set breakpoints, significantly improving the development of sophisticated Tech & Innovation algorithms. A visual debugger is built into JupyterLab 3.0 and later, provided the kernel supports debugging.

  - Interactive Tables: Enhance the display and interactivity of Pandas DataFrames, making data exploration more intuitive.

Notebook Configuration and Customization

For highly specialized Tech & Innovation workflows, further customization of Jupyter Notebooks might be desired.

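- Customizing the Notebook Directory:

  You can specify a different directory where Jupyter will look for notebooks when it starts. Edit or create a configuration file in the Jupyter config directory (its location can be found using `jupyter --config-dir`). The classic Notebook server (version 6 and earlier) reads `jupyter_notebook_config.py`; Notebook 7 and JupyterLab 3 and later run on Jupyter Server, which reads `jupyter_server_config.py`.

  ```python
  c = get_config()

  # Classic Notebook (6 and earlier), in jupyter_notebook_config.py:
  c.NotebookApp.notebook_dir = '/path/to/your/preferred/notebooks'

  # Notebook 7 / JupyterLab 3+, in jupyter_server_config.py:
  c.ServerApp.root_dir = '/path/to/your/preferred/notebooks'
  ```

- Server Settings:

  Advanced configurations for the notebook server itself, such as IP address binding, port selection, and security settings, can also be managed within this configuration file. This is particularly relevant when a server is shared by a Tech & Innovation team. JupyterHub deployments are configured separately, through their own `jupyterhub_config.py`.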
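To make the server settings concrete, here is a sketch that generates a small configuration file. It assumes a Jupyter Server based installation (Notebook 7 or JupyterLab 3 and later), where these options live under `ServerApp`; on the classic Notebook 6 server the equivalent options sit under `NotebookApp`. The helper `render_server_config`, the example path, and the chosen values are illustrative assumptions, not recommended defaults.

```python
import tempfile
from pathlib import Path


def render_server_config(root_dir, port=8888, ip="127.0.0.1", open_browser=False):
    """Return the text of a minimal jupyter_server_config.py file."""
    lines = [
        "c = get_config()  # provided by Jupyter when it loads this file",
        f"c.ServerApp.root_dir = {str(root_dir)!r}",
        f"c.ServerApp.ip = {ip!r}",
        f"c.ServerApp.port = {port}",
        f"c.ServerApp.open_browser = {open_browser}",
    ]
    return "\n".join(lines) + "\n"


# Demo only: write the file to a throwaway directory instead of the real
# config directory reported by `jupyter --config-dir`.
with tempfile.TemporaryDirectory() as tmp:
    config_path = Path(tmp) / "jupyter_server_config.py"
    config_path.write_text(render_server_config("/srv/notebooks", port=9000), encoding="utf-8")
    print(config_path.read_text(encoding="utf-8"))
```

Binding to `127.0.0.1` keeps the server reachable only from the local machine; exposing it on other interfaces should go hand in hand with the security settings mentioned above.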

Best Practices for Tech & Innovation Workflows

Installing Jupyter Notebooks is just the first step. To leverage its full potential in Tech & Innovation, adopting certain practices is highly beneficial.

1. Environment Management is Key

As emphasized earlier, consistently using virtual environments (with venv, virtualenv, or Conda) is non-negotiable. This ensures that each project has its isolated dependencies, preventing version conflicts and making projects portable and reproducible. For Tech & Innovation projects that often integrate with hardware, specific drivers, or low-level libraries, a clean environment is critical for successful deployment and testing.
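For projects that do not use Conda, the standard library's `venv` module offers the same kind of isolation. The following sketch creates an environment in a temporary directory so it can be run safely; the directory name `demo-env` and the helper name are placeholders. A real project would keep the environment next to the code and create it with `with_pip=True` so packages can be installed into it.

```python
import tempfile
import venv
from pathlib import Path


def create_project_env(env_dir):
    """Create an isolated virtual environment and return its directory."""
    env_dir = Path(env_dir)
    # with_pip=False keeps this sketch fast; use with_pip=True for real
    # projects so that packages can be installed into the environment.
    venv.EnvBuilder(with_pip=False, clear=True).create(env_dir)
    return env_dir


# Demo only: the environment is created in a throwaway directory.
with tempfile.TemporaryDirectory() as tmp:
    env = create_project_env(Path(tmp) / "demo-env")
    # pyvenv.cfg records which base interpreter the environment was built from.
    print((env / "pyvenv.cfg").read_text(encoding="utf-8"))
```

This is the programmatic equivalent of running `python -m venv demo-env` in a terminal.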

2. Embrace Reproducibility

Jupyter Notebooks excel at narrative explanations combined with code. Document your experiments, data sources, preprocessing steps, model architectures, and evaluation metrics thoroughly within the notebook. This makes your work understandable and reproducible for colleagues, supervisors, or even your future self. Version control systems like Git are indispensable here.
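One lightweight way to support reproducibility is to record the exact package versions alongside the results. The sketch below collects them with the standard library; `snapshot_environment` is a hypothetical helper, and the output is comparable in spirit to `pip freeze` rather than a substitute for a proper lock file or a Conda environment export.

```python
import platform
from importlib import metadata


def snapshot_environment():
    """Return the Python version plus sorted 'name==version' pins for installed packages."""
    pins = set()
    for dist in metadata.distributions():
        meta = dist.metadata
        if meta is None:  # skip installations with missing metadata
            continue
        name, version = meta.get("Name"), meta.get("Version")
        if name and version:
            pins.add(f"{name}=={version}")
    return {
        "python": platform.python_version(),
        "packages": sorted(pins, key=str.lower),
    }


snapshot = snapshot_environment()
print("Python", snapshot["python"])
for pin in snapshot["packages"][:5]:  # show only the first few pins
    print(pin)
```

Saving this snapshot in the final cell of a notebook, or committing it next to the notebook in Git, gives collaborators a concrete record of the environment that produced the results.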

3. Leverage JupyterLab for Complex Projects

While the classic Jupyter Notebook interface is functional, JupyterLab offers a more integrated and efficient workspace. Its ability to open multiple notebooks, terminals, and file browsers side-by-side streamlines the development of complex Tech & Innovation solutions, allowing for seamless switching between coding, running scripts, and managing files.

4. Optimize for Performance

For computationally intensive tasks common in Tech & Innovation (e.g., training deep learning models, processing large datasets), consider:

- Using Optimized Libraries: Ensure you are using libraries like NumPy, SciPy, Pandas, and specialized AI frameworks (TensorFlow, PyTorch) that are well-optimized for performance.

- GPU Acceleration: If your Tech & Innovation work involves deep learning, configure your environment and notebooks to utilize GPU acceleration, which can drastically reduce training times.

- Efficient Data Handling: For very large datasets, explore out-of-core processing or specialized data formats (like Parquet or HDF5) that can be efficiently read and written.
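The idea behind out-of-core processing is to stream data in bounded chunks instead of loading a whole file into memory. The sketch below illustrates this with the standard library's `csv` module on a tiny generated file; the column name `reading` and the helper names are made up for the example. Libraries such as Pandas offer the same pattern at scale (for instance through the `chunksize` argument of `read_csv`).

```python
import csv
import tempfile
from itertools import islice
from pathlib import Path


def iter_chunks(path, chunk_size):
    """Yield lists of at most chunk_size row dicts without loading the whole file."""
    with open(path, newline="", encoding="utf-8") as handle:
        reader = csv.DictReader(handle)
        while True:
            chunk = list(islice(reader, chunk_size))
            if not chunk:
                return
            yield chunk


def streaming_mean(path, column, chunk_size=1000):
    """Compute the mean of a numeric column one chunk at a time."""
    total, count = 0.0, 0
    for chunk in iter_chunks(path, chunk_size):
        total += sum(float(row[column]) for row in chunk)
        count += len(chunk)
    return total / count if count else float("nan")


# Demo only: a ten-row file with values 0..9, processed four rows at a time.
with tempfile.TemporaryDirectory() as tmp:
    csv_path = Path(tmp) / "readings.csv"
    with open(csv_path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.writer(handle)
        writer.writerow(["reading"])
        writer.writerows([[value] for value in range(10)])
    print(streaming_mean(csv_path, "reading", chunk_size=4))  # 4.5
```

Only one chunk is held in memory at any time, so peak memory use depends on `chunk_size` rather than on the size of the file.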

5. Collaboration and Sharing

- JupyterHub: For team-based Tech & Innovation efforts, setting up JupyterHub provides a centralized platform where multiple users can access their own Jupyter environments, fostering collaboration and knowledge sharing.

- nbconvert: Use `nbconvert` to export notebooks into various formats like HTML, PDF, or Markdown for easy sharing and presentation of results.
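Exporting works because a notebook file is plain JSON: a list of cells, each tagged as code or Markdown. The sketch below pulls the code cells out of a tiny hand-written notebook using only the standard library. It is an illustration of the file format, not how nbconvert is implemented; for real exports use the tool itself, for example `jupyter nbconvert --to html notebook.ipynb` or `jupyter nbconvert --to script notebook.ipynb`. The helper name and the demo notebook content are hypothetical.

```python
import json


def code_cells_to_script(notebook_json):
    """Join the source of every code cell in an nbformat 4 notebook into one script."""
    notebook = json.loads(notebook_json)
    blocks = []
    for cell in notebook.get("cells", []):
        if cell.get("cell_type") != "code":
            continue
        source = cell.get("source", "")
        # nbformat stores source either as one string or as a list of lines.
        if isinstance(source, list):
            source = "".join(source)
        blocks.append(source.rstrip())
    return "\n\n".join(blocks) + "\n"


# A minimal nbformat 4 notebook with one Markdown cell and two code cells.
demo_notebook = json.dumps({
    "nbformat": 4,
    "nbformat_minor": 4,
    "metadata": {},
    "cells": [
        {"cell_type": "markdown", "metadata": {}, "source": ["# Sensor demo"]},
        {"cell_type": "code", "execution_count": None, "metadata": {},
         "outputs": [], "source": ["x = 2\n", "y = x * 21"]},
        {"cell_type": "code", "execution_count": None, "metadata": {},
         "outputs": [], "source": "print(y)"},
    ],
})

print(code_cells_to_script(demo_notebook))
```

Because the format is plain JSON, notebooks can also be diffed and reviewed in Git, although clearing outputs before committing keeps those diffs readable.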

By following these guidelines, installing Jupyter Notebooks becomes the gateway to a powerful, flexible, and reproducible environment that can significantly accelerate work across the ever-evolving landscape of Tech & Innovation.