In the rapidly evolving landscape of Artificial Intelligence, especially with the proliferation of Large Language Models (LLMs), terms like “zero-shot prompting” have emerged as pivotal concepts. Far from being mere technical jargon, zero-shot prompting represents a paradigm shift in how we interact with and extract value from advanced AI systems. It signifies a model’s remarkable ability to perform a task it has never explicitly been trained on, purely based on the instructions provided in a natural language prompt. This capability unlocks unprecedented levels of flexibility, efficiency, and adaptability, propelling AI into new frontiers of application and innovation.

At its core, zero-shot prompting leverages the vast knowledge and sophisticated pattern recognition abilities acquired by foundation models during their extensive pre-training phases. These models, having been exposed to colossal datasets encompassing text, code, and often other modalities from the internet, develop a profound understanding of language, concepts, and how to generalize information. When confronted with a zero-shot prompt, the model doesn’t retrieve a specific answer from its memory for that exact task; instead, it synthesizes an appropriate response or performs the requested action by applying its generalized understanding of language and context to the new, unseen instruction. This article delves into the intricacies of zero-shot prompting, exploring its mechanisms, applications, advantages, challenges, and its profound implications for the future of AI.

The Core Concept of Zero-Shot Prompting

Understanding zero-shot prompting begins with grasping its fundamental difference from traditional machine learning paradigms. It’s a testament to the power of modern AI architectures, particularly transformer models, to achieve a level of generalization that was once considered highly aspirational.

Defining Zero-Shot Learning

Zero-shot learning refers to a model’s capacity to handle classes or tasks for which it saw no labeled examples during training. In the context of language models, this means performing a task without in-prompt examples and without fine-tuning for that particular task. Imagine asking a child who has only seen dogs and cats to identify a “giraffe” based solely on a textual description: “It’s a very tall animal with a long neck and spots.” If the child can pick out the giraffe from a set of candidates, they have performed a zero-shot identification. Similarly, an LLM performs a zero-shot task when it summarizes, translates, or answers a question without having been trained on a task-specific dataset for summarization, translation, or question answering; it infers how to perform the task from its general language understanding and the instruction itself.

How it Differs from Few-Shot and Supervised Learning

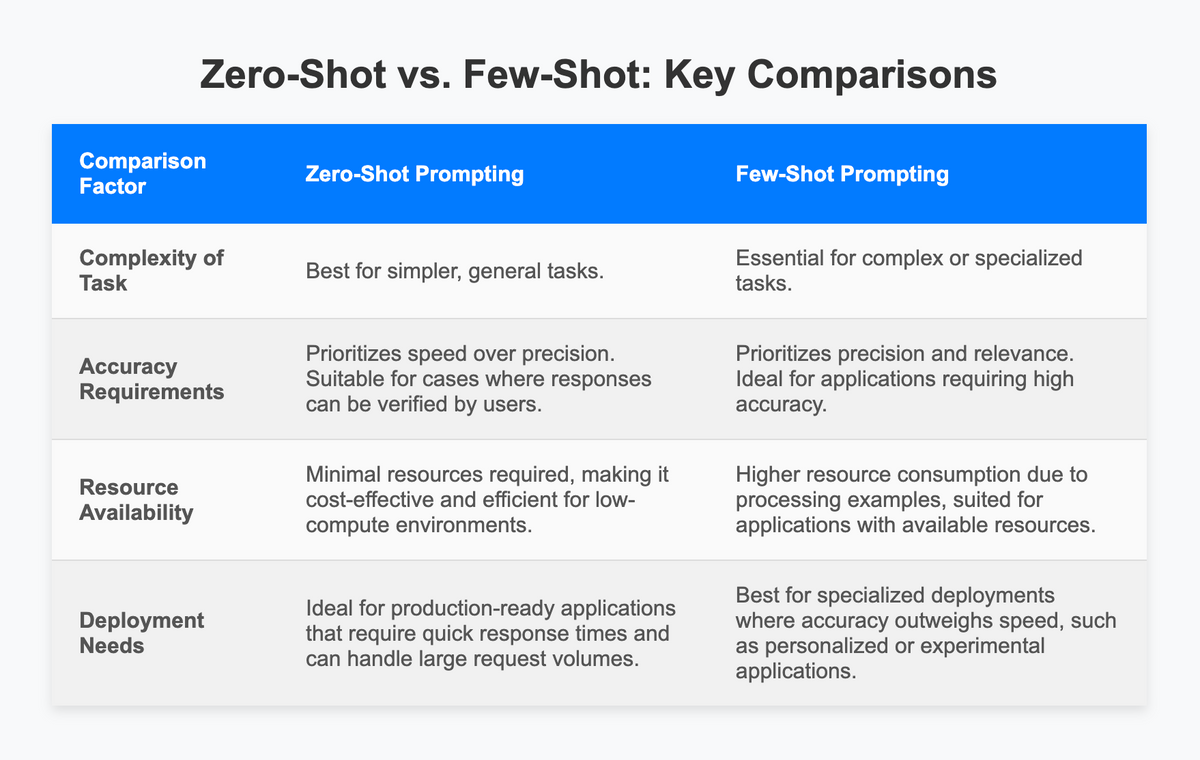

To truly appreciate zero-shot prompting, it’s helpful to contrast it with other common AI learning paradigms:

- Supervised Learning: This is the most traditional form of machine learning, where a model is trained on a large dataset of input-output pairs. For instance, to classify emails as spam or not spam, the model is fed thousands of emails explicitly labeled “spam” or “not spam.” The model learns to map specific input features to specific output labels. It requires extensive, task-specific labeled data.

- Few-Shot Learning: This approach reduces the dependency on vast labeled datasets. Instead of thousands, the model is given a handful of examples (e.g., 3-5) within the prompt itself to guide its understanding of the desired task, and its weights are not updated. For example, to perform sentiment analysis on a new product review, one might provide two positive reviews labeled “positive” and two negative reviews labeled “negative” before asking the model to classify a new review. This helps the model infer the pattern from a limited context.

- Zero-Shot Prompting/Learning: As discussed, this requires no examples within the prompt for the specific task. The model relies solely on its pre-trained knowledge and the instructions given in the prompt. Its ability to generalize allows it to attempt new tasks without in-prompt demonstrations or fine-tuning data for that specific task. It can be seen as an extreme case of transfer learning, where general knowledge is applied directly to novel situations.
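
The difference between the zero-shot and few-shot settings described above comes down to what the prompt contains. The following is a minimal sketch; the function names, instruction wording, and sample reviews are illustrative assumptions, and no model is actually called.

```python
from typing import Sequence, Tuple


def zero_shot_prompt(instruction: str, text: str) -> str:
    """Instruction plus the new input, with no worked examples."""
    return f"{instruction}\n\nReview: {text}\nSentiment:"


def few_shot_prompt(
    instruction: str, examples: Sequence[Tuple[str, str]], text: str
) -> str:
    """Same instruction, preceded by a few labeled demonstrations."""
    demos = "\n\n".join(
        f"Review: {review}\nSentiment: {label}" for review, label in examples
    )
    return f"{instruction}\n\n{demos}\n\nReview: {text}\nSentiment:"


# Illustrative data (made up for this sketch).
INSTRUCTION = "Classify the sentiment of the review as positive or negative."
EXAMPLES = [
    ("Battery lasts all day and setup took two minutes.", "positive"),
    ("Stopped working after a week.", "negative"),
]
NEW_REVIEW = "The screen is sharp but the speakers crackle."

zs = zero_shot_prompt(INSTRUCTION, NEW_REVIEW)
fs = few_shot_prompt(INSTRUCTION, EXAMPLES, NEW_REVIEW)

print(zs)
print("---")
print(fs)
```

Either string would then be sent to a language model. The supervised approach has no counterpart here, because it changes the model's weights rather than the prompt.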

The Role of Pre-trained Language Models

The advent of powerful pre-trained language models, such as the GPT (Generative Pre-trained Transformer) series, BERT, and T5, has been instrumental in making zero-shot prompting a reality. These models undergo a resource-intensive pre-training phase in which they learn predictive tasks (such as predicting the next word in a sentence or filling in masked words) across very large text corpora. This process gives them a statistical model of language that captures grammar, many facts, and a degree of common-sense association. This internalized knowledge is what allows them to interpret novel instructions and attempt new tasks without specific prior examples. They learn a general representation of language that can be adapted to many downstream tasks.
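
To make the "predict the next word" objective concrete, here is a deliberately tiny sketch that counts which word follows which in a toy corpus. It is only an analogy: real pre-trained models use neural networks over subword tokens and far larger corpora, and the corpus and function name below are assumptions made for illustration.

```python
from collections import Counter, defaultdict

# Toy corpus (made up); real pre-training corpora are vastly larger.
corpus = "the cat sat on the mat . the cat ate the fish ."
tokens = corpus.split()

# Count how often each word follows each other word (a bigram model).
counts = defaultdict(Counter)
for prev, nxt in zip(tokens, tokens[1:]):
    counts[prev][nxt] += 1


def predict_next(word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


print(predict_next("the"))  # "cat" follows "the" twice, more than any other word
print(predict_next("dog"))  # None: "dog" never appears in the corpus
```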

Mechanisms and Underlying Principles

The capacity of LLMs to perform zero-shot tasks isn’t magic; it’s a sophisticated interplay of architectural design, massive data exposure, and advanced learning algorithms.

Leveraging Pre-trained Knowledge

The sheer scale of pre-training data is a primary factor. By ingesting hundreds of gigabytes to terabytes of text from diverse sources (books, articles, websites, code repositories), these models build a rich, high-dimensional representation of words, phrases, and concepts. This representation allows them to capture semantic relationships, identify analogies, and infer logical connections across different domains. When a prompt asks for a task the model has not seen, it draws upon this broad knowledge to find patterns and rules that are conceptually similar to what is being requested. For example, if it has learned to summarize news articles, it can apply that underlying skill to summarize a research paper, even if it has never seen “summarize research papers” explicitly.

The Power of Instruction Following

A critical component of effective zero-shot prompting is the model’s ability to interpret and follow instructions embedded within the prompt. Modern LLMs are not just predicting text; they are developing a capacity for “instruction following.” This capability is often enhanced through further training stages like instruction tuning (the approach behind models such as Flan-T5 and InstructGPT), where models are fine-tuned on diverse sets of tasks presented as instructions. This teaches the model to understand the intent behind a prompt and to act as a general-purpose instruction follower. The prompt then acts as a program written in natural language, dictating the desired behavior.
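
The idea of presenting diverse tasks as instructions can be sketched as follows. This is a simplified illustration of the general idea, not the data format of any particular model; the templates, field names, and sample records are all hypothetical.

```python
from typing import Dict

# Hypothetical instruction templates, one per task.
TEMPLATES = {
    "sentiment": "Is the sentiment of this review positive or negative?\n\n{text}",
    "translation": "Translate the following sentence into {target_language}:\n\n{text}",
    "summarization": "Summarize the following passage in one sentence:\n\n{text}",
}


def to_instruction_example(
    task: str, fields: Dict[str, str], answer: str
) -> Dict[str, str]:
    """Recast one labeled example as an instruction/response pair."""
    prompt = TEMPLATES[task].format(**fields)
    return {"prompt": prompt, "completion": answer}


# Made-up labeled examples from two different tasks.
raw = [
    ("sentiment", {"text": "Arrived broken and support never replied."}, "negative"),
    ("translation", {"text": "Good morning.", "target_language": "Spanish"}, "Buenos días."),
]

dataset = [to_instruction_example(task, fields, answer) for task, fields, answer in raw]

for record in dataset:
    print(record["prompt"])
    print("->", record["completion"])
    print()
```

Fine-tuning on many such pairs, drawn from many tasks, is what encourages a model to treat an unfamiliar instruction as something to carry out rather than as text to continue.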

Inductive Bias and Generalization

Zero-shot prompting relies heavily on the inductive biases of the model, both those built into its architecture and those acquired during pre-training. Inductive bias refers to the assumptions a learning algorithm makes in order to generalize beyond the data it was trained on. For LLMs, this means developing an inherent “preference” for certain types of solutions or patterns that are statistically prevalent in human language. This bias, coupled with the transformer architecture’s ability to capture long-range dependencies and hierarchical structures in text, allows the model to extrapolate from its general knowledge base to specific, unseen tasks. It can infer the implicit rules of a task even when no explicit examples are provided.

Practical Applications and Use Cases in AI

The practical implications of zero-shot prompting are vast, touching numerous sectors and applications. Its ability to handle diverse tasks without task-specific training data makes it highly versatile.

Content Generation and Summarization

One of the most common applications is generating and summarizing text. A user can simply prompt an LLM: “Write a short blog post about the benefits of renewable energy for small businesses.” or “Summarize the following article in three bullet points.” The model, without specific training on “blog post writing” or “3-bullet-point summarization,” can execute these tasks effectively, producing coherent and relevant output. This capability streamlines content creation workflows, from marketing copy to academic outlines.

Question Answering and Information Extraction

Zero-shot prompting excels in question-answering scenarios. Users can ask factual questions (“What is the capital of France?”) or more complex, inferential questions (“What might be the implications of quantum computing on cybersecurity?”). The model draws from its vast pre-trained knowledge to formulate an answer. Similarly, for information extraction, one might prompt: “Extract all company names and their corresponding revenue figures from the following financial report.” The model can identify and present the requested data without needing to be fine-tuned on a dataset of financial reports and revenue figures.
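
For information extraction, it often helps to ask for a structured format and to validate whatever comes back. The sketch below assumes a JSON output format and uses hard-coded sample responses in place of a real model call; the function names, key names, and sample strings are assumptions made for illustration.

```python
import json
from typing import Dict, List


def build_extraction_prompt(report: str) -> str:
    """Zero-shot extraction prompt that requests machine-readable output."""
    return (
        "Extract all company names and their corresponding revenue figures "
        "from the following financial report. Respond with only a JSON array "
        'of objects with the keys "company" and "revenue".\n\n'
        f"Report:\n{report}"
    )


def parse_extraction(response: str) -> List[Dict[str, str]]:
    """Parse the model's reply, keeping only well-formed records."""
    try:
        data = json.loads(response)
    except json.JSONDecodeError:
        return []  # the reply was not valid JSON
    if not isinstance(data, list):
        return []
    return [
        item
        for item in data
        if isinstance(item, dict) and {"company", "revenue"}.issubset(item)
    ]


# Hand-written stand-ins for model replies (not real model output).
good_reply = '[{"company": "Acme Corp", "revenue": "$12.4M"}, {"company": "Globex"}]'
chatty_reply = "Sure! Here are the companies you asked for."

prompt = build_extraction_prompt("Acme Corp reported revenue of $12.4M ...")
records = parse_extraction(good_reply)   # the incomplete Globex record is dropped
fallback = parse_extraction(chatty_reply)  # not JSON, so nothing is returned

print(records)
print(fallback)
```

Validating the reply this way guards against the cases where the model ignores the requested format or omits a field.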

Translation and Multilingual Tasks

While specialized translation models exist, LLMs can often perform zero-shot translation between various languages, especially common ones. A prompt like “Translate the following English paragraph into Spanish: [paragraph text]” can yield a reasonable translation. This extends to other multilingual tasks, such as cross-lingual summarization or understanding sentiments in different languages.

Code Generation and Debugging Assistance

For developers, zero-shot prompting has become a powerful tool. Programmers can ask an LLM to “Write a Python function to calculate the factorial of a number” or “Explain what this JavaScript code snippet does and suggest improvements.” The model can often generate working code, explain complex code, and help debug errors, leveraging its understanding of programming languages acquired during pre-training, although its output still needs review and testing. This can significantly boost productivity and broadens access to coding assistance.
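
As an illustration of the first request, the following is a hand-written example of the kind of function such a prompt might yield, followed by a few quick checks. It is not actual model output, and the input validation is a design choice of this sketch.

```python
def factorial(n: int) -> int:
    """Return n! for a non-negative integer n."""
    # bool is a subclass of int in Python, so reject it explicitly.
    if not isinstance(n, int) or isinstance(n, bool):
        raise TypeError("n must be an integer")
    if n < 0:
        raise ValueError("n must be non-negative")
    result = 1
    for i in range(2, n + 1):
        result *= i
    return result


# Quick checks of the sort worth running on any generated code.
assert factorial(0) == 1
assert factorial(1) == 1
assert factorial(5) == 120
print(factorial(10))  # 3628800
```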

Advantages and Challenges of Zero-Shot Prompting

While revolutionary, zero-shot prompting is not without its limitations. Understanding both its strengths and weaknesses is crucial for its responsible and effective deployment.

Key Benefits: Efficiency, Adaptability, Reduced Data Dependency

- Efficiency: It drastically reduces the time and resources required to deploy AI solutions. Instead of months spent on data collection and model training, a well-crafted prompt can achieve results almost instantly.

- Adaptability: LLMs become highly versatile tools capable of tackling an enormous range of tasks without needing re-engineering or retraining. This makes them adaptable to evolving needs and niche applications.

- Reduced Data Dependency: The need for large, task-specific labeled datasets is minimized or eliminated, addressing a significant bottleneck in AI development, especially for rare or emerging tasks where data is scarce.

Current Limitations: Hallucination, Prompt Sensitivity, Performance Variability

- Hallucination: LLMs can sometimes generate plausible-sounding but factually incorrect information, known as “hallucination.” In a zero-shot setting, where there are no examples to guide it, the model might invent details if its knowledge base is insufficient or if the prompt is ambiguous.

- Prompt Sensitivity: The performance of a zero-shot model can be highly sensitive to the exact phrasing, structure, and even subtle nuances of the prompt. A slight change in wording can lead to significantly different, sometimes undesirable, outputs. Crafting effective prompts (“prompt engineering”) becomes a critical skill.

- Performance Variability: While impressive, zero-shot performance for complex or highly specialized tasks might not match that of a fine-tuned, task-specific model. Its generalization has limits, and for high-stakes applications, dedicated training might still be necessary.
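
Prompt sensitivity can be estimated by sending several paraphrases of the same request and checking how often the answers agree. The sketch below uses a deliberately brittle stub in place of a real model so that it runs offline; `stub_model`, the paraphrases, and the agreement metric are all assumptions made for illustration.

```python
from collections import Counter
from typing import Callable, Sequence


def consistency(model: Callable[[str], str], prompts: Sequence[str]) -> float:
    """Fraction of prompts whose answer matches the most common answer."""
    answers = [model(p).strip().lower() for p in prompts]
    most_common_count = Counter(answers).most_common(1)[0][1]
    return most_common_count / len(answers)


def stub_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call; brittle on purpose."""
    # Pretend that the word "tone" pushes the model toward a different label.
    return "neutral" if "tone" in prompt.lower().split() else "negative"


review = "Stopped working after a week."
paraphrases = [
    f"Classify the sentiment of this review: {review}",
    f"Is this review positive or negative? {review}",
    f"What is the tone of this review? {review}",
]

score = consistency(stub_model, paraphrases)
print(f"agreement across paraphrases: {score:.2f}")
```

With a real model in place of the stub, a low agreement score is a signal that the task wording needs tightening or that few-shot examples or fine-tuning may be warranted.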

Ethical Considerations and Bias

Like all AI models, LLMs inherit biases present in their training data. In a zero-shot context, these biases can manifest in unexpected ways, leading to unfair, discriminatory, or harmful outputs, particularly when dealing with sensitive topics or making decisions. Ensuring ethical prompting and scrutinizing outputs for bias are critical responsibilities.

The Future of Zero-Shot Prompting and AI Innovation

Zero-shot prompting is more than just a technique; it’s a foundational element for the next generation of AI systems, promising to reshape how we interact with technology and foster innovation across industries.

Advancements in Model Architectures

Future developments will likely focus on creating even more powerful and robust foundation models. Research is ongoing to improve their reasoning capabilities, reduce hallucination, and enhance their ability to understand complex, multi-step instructions. This includes exploring novel architectural designs beyond the current transformer paradigm and incorporating multimodal learning more deeply.

Towards More Robust and Reliable AI

Efforts are underway to make zero-shot models less sensitive to prompt variations and more consistent in their performance. This involves developing better methods for instruction tuning, incorporating feedback mechanisms, and potentially integrating external knowledge bases to ground responses in verified information, thereby reducing factual errors. The goal is to move towards AI systems that are not only versatile but also trustworthy and reliable in zero-shot scenarios.
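
One way to ground a response in an external knowledge base is to retrieve relevant passages and place them in the prompt. The following is a minimal sketch under simplifying assumptions: retrieval is plain word overlap rather than a real search index, the knowledge base is three hand-written sentences, and all function names are hypothetical.

```python
import re
from typing import List, Sequence, Set

# Tiny hand-written knowledge base (illustrative only).
KNOWLEDGE_BASE = [
    "Paris is the capital of France.",
    "Canberra is the capital of Australia.",
    "The transformer architecture was introduced in 2017.",
]


def _words(text: str) -> Set[str]:
    """Lowercased alphanumeric words in `text`."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: Sequence[str], k: int = 1) -> List[str]:
    """Return the k documents sharing the most words with the question."""
    q = _words(question)
    ranked = sorted(documents, key=lambda d: len(q & _words(d)), reverse=True)
    return ranked[:k]


def grounded_prompt(question: str, documents: Sequence[str], k: int = 1) -> str:
    """Zero-shot prompt that restricts the model to retrieved context."""
    context = "\n".join(f"- {d}" for d in retrieve(question, documents, k))
    return (
        "Answer the question using only the context below. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"
    )


question = "What is the capital of France?"
prompt = grounded_prompt(question, KNOWLEDGE_BASE)
print(prompt)
```

The instruction to admit when the context lacks the answer is what targets hallucination: the model is given an acceptable alternative to inventing one.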

Impact on Human-AI Interaction

As zero-shot prompting becomes more sophisticated, it will profoundly impact human-AI interaction. Users will be able to communicate with AI systems in increasingly natural and intuitive ways, describing desired outcomes rather than needing to program or extensively configure them. This will make AI more accessible to non-experts and enable seamless integration of AI capabilities into everyday tools and workflows, driving innovation in areas like personalized assistance, advanced analytics, and creative collaboration. The ability to simply “ask” an AI to perform complex tasks without prior setup or training will democratize access to powerful computing capabilities, fueling a new wave of technological advancement.

Zero-shot prompting stands as a cornerstone of modern AI, embodying the potential for intelligent systems to generalize and adapt with unprecedented flexibility. Its continued development promises to unlock new frontiers in technological innovation, making AI more intuitive, powerful, and an indispensable partner in solving some of humanity’s most complex challenges.