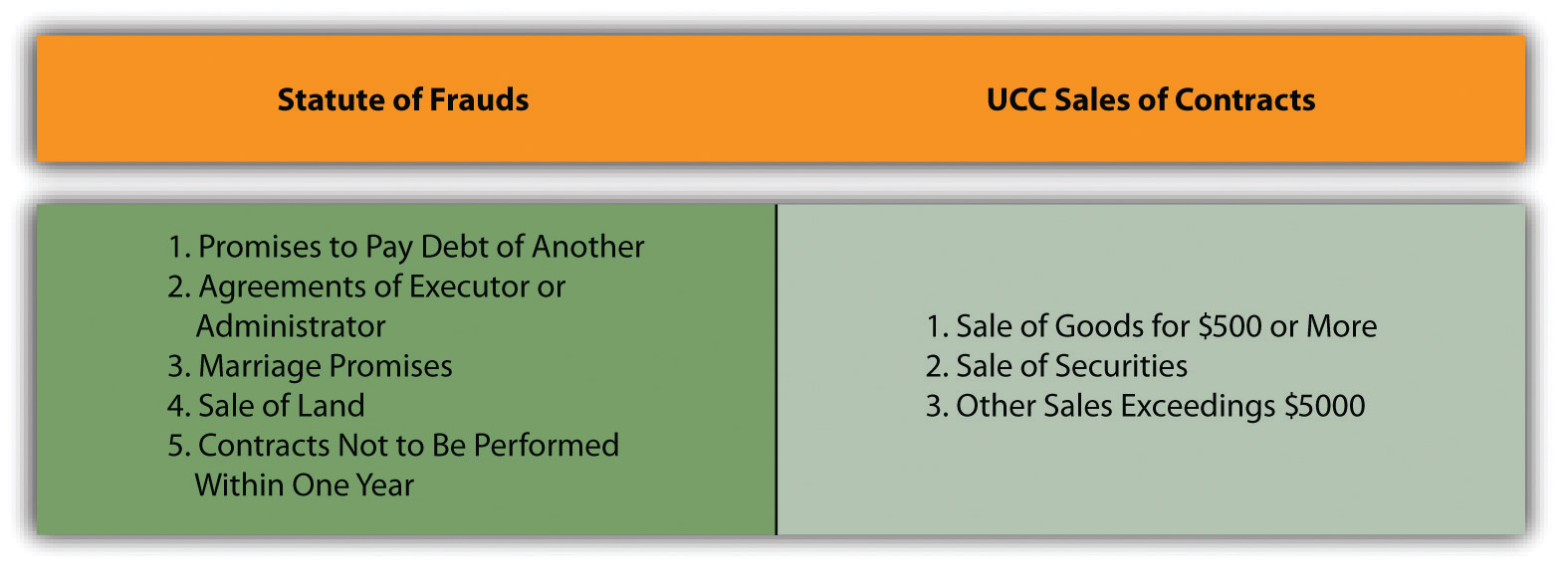

The traditional legal doctrine known as the “Statute of Frauds” requires that certain agreements, often those involving significant value or long-term commitments, be in writing to be legally enforceable. This requirement serves to prevent fraudulent claims, misunderstandings, and misrepresentations by providing clear, durable proof of an agreement’s terms. In the rapidly evolving landscape of artificial intelligence, autonomous systems, and advanced remote sensing, the statute itself doesn’t apply, but its underlying principle has real conceptual relevance: verifiable, tamper-resistant proof is needed to prevent systemic misrepresentation or error. We find ourselves asking: how do we establish a “digital writing” that provides certainty and trust in a world governed by algorithms, sensor data, and machine decisions?

The Digital “Writing” Requirement: Ensuring Verifiable Autonomy

In the realm of autonomous flight, AI-driven decision-making, and sophisticated remote sensing, human oversight is often minimal, deferred, or even entirely absent. Decisions are made at machine speed, data is processed in petabytes, and operations unfold without traditional human intervention points for verification. Here, the “writing” component of the Statute of Frauds becomes the need for an immutable, auditable, and transparent record of every significant input, process, and output. Such a record supports accountability, facilitates troubleshooting, and builds essential trust in these complex systems.

Data Integrity and Immutability in Autonomous Operations

The foundation of trust in any autonomous system begins with the integrity and immutability of its data. Just as a physical contract is signed and stored, digital “agreements” within autonomous platforms (whether an AI choosing a flight path or a remote sensor identifying a critical anomaly) must be securely recorded. This involves more than just logging; it requires robust mechanisms to ensure data cannot be tampered with or altered retroactively without detection. Cryptographic signatures, secure timestamps, and distributed ledger technologies such as blockchain are emerging as powerful tools for creating tamper-evident audit trails. For instance, in drone delivery, every step from package loading to flight path deviation and final delivery confirmation could be recorded on a blockchain, providing a verifiable “written” history. Sensors themselves act as digital “witnesses,” providing raw data that must be authenticated at the point of capture to prevent spoofing or data injection and so protect the veracity of the initial input.
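The tamper-evident audit trail described above can be sketched as a simple hash chain, in which each log entry commits to the hash of the entry before it. This is a minimal illustration rather than a blockchain or a production logger: the function names, the event fields, and the timestamps are all assumptions made for the example, and a chain like this only detects edits if the latest hash is also stored or signed somewhere an attacker cannot rewrite.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event: dict, prev_hash: str) -> str:
    """Hash an event together with the hash of the entry before it."""
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> None:
    """Append an event, linking it to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({"event": event, "prev": prev_hash, "hash": _digest(event, prev_hash)})


def verify_log(log: list) -> bool:
    """Recompute every hash; a retroactive edit breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical drone-delivery events (field names are illustrative only)
delivery_log = []
append_entry(delivery_log, {"step": "package_loaded", "t": 1700000000})
append_entry(delivery_log, {"step": "path_deviation", "t": 1700000420})
append_entry(delivery_log, {"step": "delivered", "t": 1700000900})
intact = verify_log(delivery_log)

# Simulate a retroactive edit on a copy of the log
tampered_log = [dict(e, event=dict(e["event"])) for e in delivery_log]
tampered_log[1]["event"]["step"] = "no_deviation"
tamper_detected = not verify_log(tampered_log)
```

Here `intact` is true for the original log, while rewriting the second event in the copy causes verification to fail, because the stored hash no longer matches the recomputed one.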

Explainable AI (XAI) and Decision Transparency

A core challenge in deploying AI for critical functions is the “black box” problem, where even developers struggle to fully comprehend how a deep learning model arrived at a particular decision. For autonomous systems, especially those operating in sensitive environments or making safety-critical choices, merely logging the final output is insufficient. The “Statute of Frauds” principle here demands a form of “digital writing” that explains the reasoning. Explainable AI (XAI) seeks to provide this transparency, offering insights into the factors influencing an AI’s recommendation or action. This “written explanation” can involve logging the AI model’s inputs, internal states, activation patterns, and the weights assigned to various inputs. Such transparency allows human operators, regulators, and incident investigators to audit AI decisions after the fact, understanding why a drone detected a certain object or why an autonomous vehicle chose a specific route. The AI’s “agreement” thereby becomes auditable, which helps prevent functionally “fraudulent” (i.e., erroneous or unexplainable) behavior.
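As a rough sketch of what such a “written explanation” might look like, the example below logs a decision record for a toy linear scoring model, where each input's contribution is simply its weight times its value. Everything here is assumed for illustration: the feature names, weights, bias, and threshold are hypothetical, and a real deep learning model would need a dedicated attribution method rather than this direct calculation.

```python
import json

# Hypothetical weights for a toy linear "obstacle score" (illustrative values)
WEIGHTS = {
    "lidar_range_m": -0.08,
    "radar_closing_speed_mps": 0.15,
    "camera_confidence": 1.2,
}
BIAS = 0.5
THRESHOLD = 1.0


def decide_with_explanation(inputs: dict) -> dict:
    """Return the action together with the per-input contributions behind it."""
    contributions = {name: WEIGHTS[name] * inputs[name] for name in WEIGHTS}
    score = BIAS + sum(contributions.values())
    return {
        "inputs": inputs,
        "contributions": contributions,
        "score": score,
        "action": "avoid" if score >= THRESHOLD else "continue",
    }


record = decide_with_explanation(
    {"lidar_range_m": 12.0, "radar_closing_speed_mps": 4.0, "camera_confidence": 0.9}
)

# The input with the largest absolute contribution to this decision
top_factor = max(record["contributions"], key=lambda k: abs(record["contributions"][k]))

# Serialized form that could be appended to an audit log
audit_line = json.dumps(record, sort_keys=True)
```

With these assumed values the score crosses the threshold, so the recorded action is `avoid`, and the record shows that the camera confidence contributed most to that outcome.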

Mitigating Digital “Fraud”: Trust Protocols and Validation

In the context of autonomous systems, “fraud” is not solely about malicious intent; it encompasses any situation where a system’s output or action deviates from its intended, verifiable, and trustworthy operation. This can stem from sensor errors, software bugs, communication failures, or unverified data, all of which can lead to misrepresentation of reality and potentially catastrophic autonomous actions. Mitigating this requires sophisticated trust protocols and multi-layered validation mechanisms that go far beyond simple error checking.

Sensor Fusion and Redundancy for Reliable Data

Autonomous systems, whether for navigation, mapping, or remote sensing, rely on a symphony of sensors to perceive their environment. A single sensor can be compromised, experience drift, or encounter environmental interference (e.g., GPS signal loss, camera occlusion). The “Statute of Frauds” principle here dictates that critical perceptions must be cross-validated. Sensor fusion techniques combine data from diverse sources, such as cameras, LiDAR, radar, ultrasonic sensors, and inertial measurement units (IMUs), to build a more robust and accurate picture of reality. Redundancy in these systems means that if one sensor fails or provides anomalous data, others can compensate or flag the discrepancy. This multi-modal validation acts as an internal “fraud detector,” ensuring that the system’s “understanding” of its environment is consistent and trustworthy, preventing reliance on a single, potentially misleading “testimony.”
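The cross-validation idea can be illustrated with a very simple redundancy check: take the median of several independent readings of the same quantity and flag any sensor that disagrees with it by more than a tolerance. This is a sketch of median voting only, not of full sensor fusion (which typically involves filtering and a model of each sensor's error); the sensor names, readings, and tolerance are made up for the example.

```python
from statistics import median


def cross_validate(readings: dict, tolerance: float) -> tuple:
    """Return the consensus value and the sensors that disagree with it."""
    consensus = median(readings.values())
    suspects = sorted(
        name for name, value in readings.items() if abs(value - consensus) > tolerance
    )
    return consensus, suspects


# Hypothetical altitude estimates in metres from four independent sensors
altitude_m = {"barometer": 120.4, "gps": 121.1, "lidar": 119.8, "radar": 87.0}
consensus, suspects = cross_validate(altitude_m, tolerance=5.0)
```

In this example the radar reading is far from the other three, so it is the only sensor flagged, and the consensus altitude is taken from the readings that agree.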

Secure Communication and Authentication Protocols

The interconnectedness of modern autonomous systems means that data is constantly in motion: from sensors to processing units, between vehicles, and to cloud-based analysis platforms. This extensive communication surface presents opportunities for digital “fraud” through data tampering, unauthorized access, or the injection of false information. Robust cybersecurity is therefore an indispensable part of the “Statute of Frauds” framework for autonomous tech. Secure communication protocols, involving strong encryption and mutual authentication, are vital to ensure that data in transit is protected and that only authorized entities can interact with the system. Authenticating connected devices and systems verifies their identity and prevents imposters from providing misleading commands or data. This foundational layer of cybersecurity protects the integrity of the digital “writing” throughout its lifecycle, safeguarding against external attempts to corrupt or misrepresent the system’s operational truth.
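One building block of such protocols is message authentication. The sketch below uses an HMAC over a command so that the receiver can detect tampering in transit. It assumes a pre-shared key and hypothetical command fields, and it covers integrity and origin checking only: it does not encrypt the message, prevent replay, or perform the certificate-based mutual authentication that a protocol such as TLS would provide.

```python
import hashlib
import hmac
import json

SHARED_KEY = b"demo-key-not-for-production"  # hypothetical pre-shared key


def sign_command(command: dict, key: bytes) -> dict:
    """Serialize a command and attach an HMAC-SHA256 tag."""
    body = json.dumps(command, sort_keys=True)
    tag = hmac.new(key, body.encode("utf-8"), hashlib.sha256).hexdigest()
    return {"body": body, "tag": tag}


def verify_command(message: dict, key: bytes) -> bool:
    """Recompute the tag and compare it in constant time."""
    expected = hmac.new(key, message["body"].encode("utf-8"), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, message["tag"])


message = sign_command({"cmd": "return_to_base", "seq": 17}, SHARED_KEY)
genuine_ok = verify_command(message, SHARED_KEY)

# An attacker alters the body in transit but cannot recompute the tag
forged = dict(message, body=message["body"].replace("return_to_base", "land_now"))
forged_ok = verify_command(forged, SHARED_KEY)
```

The genuine message verifies, while the altered one does not, because the tag was computed over the original body with a key the attacker does not hold.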

Operationalizing Trust: Compliance, Ethics, and Public Acceptance

Beyond the technical implementation of data integrity and validation, the conceptual “Statute of Frauds” in autonomous systems extends to the broader frameworks of regulatory compliance, ethical design, and public acceptance. For these advanced technologies to fully integrate into society, they must be not only technically robust but also demonstrably trustworthy and accountable, much as a written legal agreement is enforceable.

Regulatory Compliance and Industry Standards

The absence of a universal “digital writing” standard for autonomous systems poses significant challenges for regulators. To operationalize trust, regulatory bodies are increasingly pushing for mandatory standards concerning data logging, operational transparency, and incident reporting for autonomous vehicles, drones, and remote sensing platforms. These standards serve as the “written law” for autonomous operations, establishing minimum requirements for auditability and verifiability. For instance, flight logs for drones must capture specific parameters, and data from remote sensing used in critical infrastructure planning must have clear provenance and validation trails. Such regulations help to enforce a baseline level of “digital writing,” ensuring that systems are not only safe but also accountable in the event of failure or dispute, and guarding against the systemic “fraud” that non-compliance would otherwise allow.

Ethical AI and Accountability Frameworks

The deployment of autonomous systems raises complex ethical questions, particularly regarding accountability when errors occur. If an AI-powered drone makes an incorrect decision resulting in damage or injury, who is responsible? The conceptual “Statute of Frauds” insists on a clear, verifiable record that provides the basis for post-incident analysis and, crucially, for assigning responsibility. This necessitates building ethical considerations into AI design from the outset, focusing on fairness, bias mitigation, and transparency. The “written record” of an AI’s decision-making process, enabled by XAI and robust logging, is paramount for establishing accountability. By embedding ethical principles and traceability, the aim is to create autonomous systems that are just and transparent by design, upholding the spirit of preventing “fraud” through clarity and verifiable truth, much as the traditional statute intends to do.

Real-World Implications: Mapping, Remote Sensing, and Beyond

The conceptual “Statute of Frauds” finds tangible application across many areas of technology and innovation, from ensuring the veracity of geographical data to guaranteeing the integrity of autonomous logistics. These applications highlight the practical imperative of verifiable digital records and transparent operations in building reliable autonomous ecosystems.

Accurate Mapping and Surveying in a Digital Age

In the era of smart cities and digital twins, accurate and trustworthy mapping and surveying data are foundational. Remote sensing platforms, including drones equipped with advanced LiDAR and photogrammetry, collect vast amounts of geospatial information. The “Statute of Frauds” in this context demands verifiable provenance for all mapping data. This means ensuring that every data point, every contour line, and every feature on a digital map can be traced back to its origin, validated against multiple sources, and authenticated as untampered. From urban planning to agricultural analytics and environmental monitoring, the integrity of this “written” geographical record is paramount. The consequences of inaccurate or fraudulent mapping data, such as miscalculated structural loads or incorrect land use decisions, underscore the critical need for a robust system of digital verification.
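One way to picture traceable provenance is a small catalog in which every dataset is identified by the hash of its contents and records which datasets it was derived from. The sketch below is an assumption-laden illustration: the catalog structure, the source labels, and the byte strings standing in for real survey files are all hypothetical, and an actual geospatial pipeline would carry far richer metadata.

```python
import hashlib

catalog = {}  # content hash -> provenance record


def content_id(data: bytes) -> str:
    """Identify a dataset by the SHA-256 hash of its bytes."""
    return hashlib.sha256(data).hexdigest()


def register(data: bytes, source: str, parents=()) -> str:
    """Record where a dataset came from and what it was derived from."""
    cid = content_id(data)
    catalog[cid] = {"source": source, "parents": list(parents)}
    return cid


def trace_origins(cid: str) -> list:
    """Walk back through parents to the raw captures a dataset depends on."""
    record = catalog[cid]
    if not record["parents"]:
        return [record["source"]]
    origins = []
    for parent in record["parents"]:
        origins.extend(trace_origins(parent))
    return origins


# Stand-ins for real survey files (hypothetical names and contents)
lidar_id = register(b"raw lidar sweep", "drone-A/lidar")
photo_id = register(b"raw photo set", "drone-A/camera")
dem_id = register(b"elevation model", "photogrammetry-pipeline", parents=[lidar_id, photo_id])

origins = trace_origins(dem_id)
unmodified = content_id(b"elevation model") == dem_id
altered = content_id(b"elevation model (edited)") == dem_id
```

Tracing the derived elevation model returns both raw captures, and because the identifier is a content hash, any change to the file yields a different identifier that no longer matches the catalog entry.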

Autonomous Logistics and Secure Chain of Custody

Autonomous drones and ground vehicles are revolutionizing logistics and last-mile delivery. For this transformation to be trustworthy, the entire chain of custody must be digitally verifiable. Imagine a package being picked up, transported through multiple autonomous hubs, and delivered to a customer. Every handoff, every change in location, every flight path deviation must be logged in a tamper-evident record. Automated “proof of delivery” mechanisms, leveraging facial recognition, QR code scanning, or GPS coordinates, must generate digitally signed records that serve as the “written agreement” of delivery completion. This not only protects the consumer and the logistics provider but also provides essential data for regulatory oversight and incident investigation, greatly reducing ambiguity and the potential for “fraudulent” claims within the autonomous delivery process. The continuous, verifiable digital trail makes the journey of every item as transparent and accountable as a meticulously documented legal transaction.
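A basic check on such a trail is continuity: each handoff should start with whoever received the package in the previous one. The sketch below looks for breaks of that kind. The record fields and party names are hypothetical, and the check says nothing about whether the records themselves are authentic, which would require the signing and tamper-evidence mechanisms discussed earlier.

```python
def custody_gaps(handoffs: list) -> list:
    """Return (expected_holder, actual_holder) pairs where the chain breaks."""
    gaps = []
    for previous, current in zip(handoffs, handoffs[1:]):
        if previous["to"] != current["from"]:
            gaps.append((previous["to"], current["from"]))
    return gaps


# Hypothetical handoff records; the hub-to-ground-vehicle transfer is missing
handoffs = [
    {"from": "warehouse", "to": "drone-7", "t": 100},
    {"from": "drone-7", "to": "hub-north", "t": 460},
    {"from": "ground-bot-2", "to": "customer", "t": 900},
]
gaps = custody_gaps(handoffs)
```

Here the package was last recorded at `hub-north` but the next handoff starts from `ground-bot-2`, so the function reports that one unexplained gap for investigation.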