In the rapidly evolving landscape of drone technology and innovation, sophisticated visual rendering is no longer a luxury but a fundamental necessity. From simulating complex flight scenarios for autonomous systems to rendering intricate 3D maps derived from aerial data, the underlying force enabling much of this visual magic is a set of specialized computer programs known as shaders. Often operating behind the scenes, shaders are the unsung heroes of modern graphics processing, dictating how everything from a digital blade of grass to a thermal anomaly is perceived on a screen. Within the realm of drone technology, particularly in areas like mapping, remote sensing, AI-driven autonomous flight, and cutting-edge data visualization, understanding shaders is key to grasping the full potential of these advanced applications.

The Core Concept of Shaders in Tech & Innovation

At their heart, shaders are small programs that run on a computer’s Graphics Processing Unit (GPU), processing graphics data to determine the final appearance of rendered objects and scenes. Unlike traditional fixed-function pipelines where graphics operations were hardwired, shaders offer immense flexibility and programmability. This flexibility is what makes them indispensable in fields demanding high fidelity, dynamic visualization, and complex data interpretation, such as drone technology.

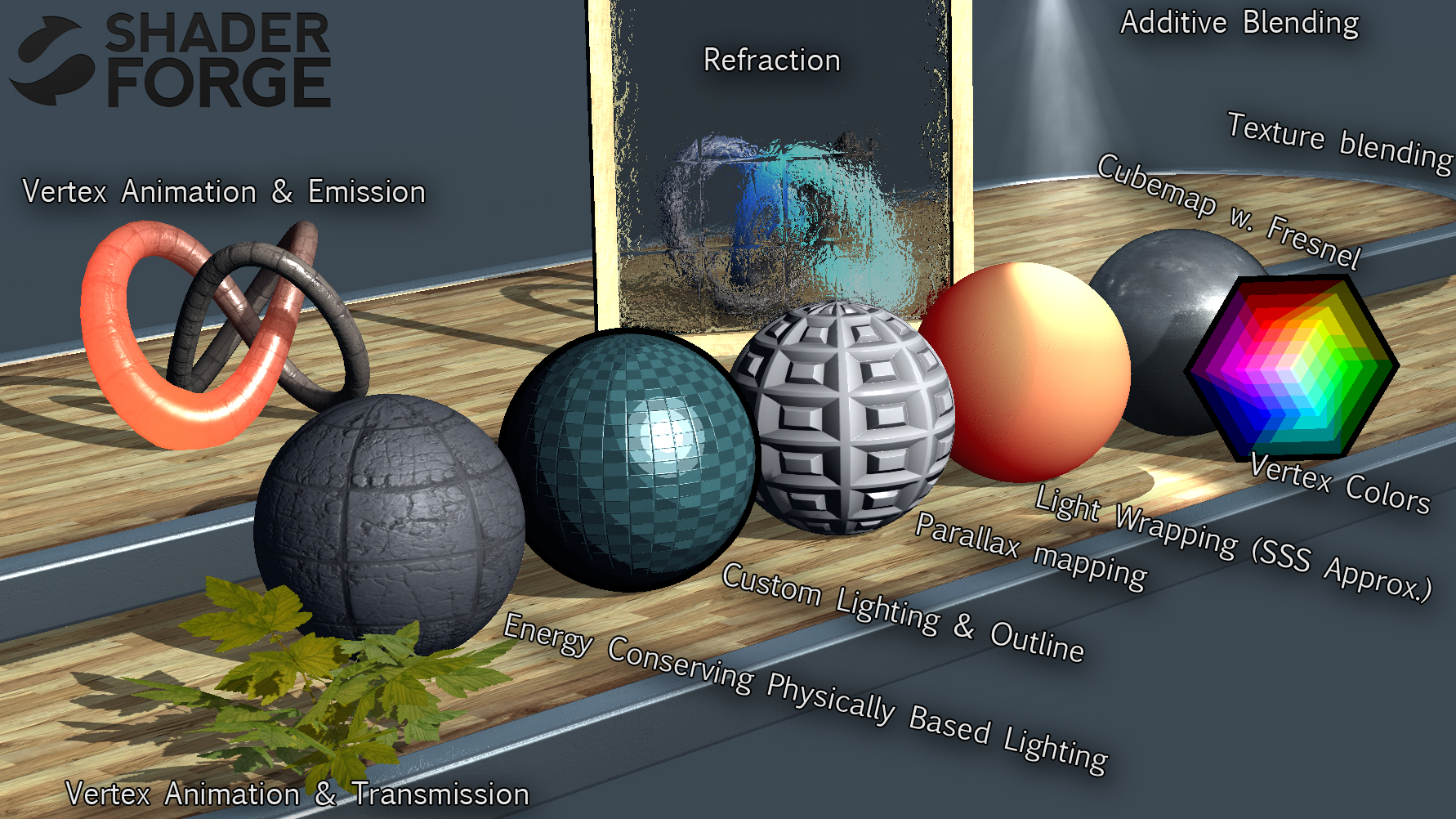

The two most common types of shaders are:

- Vertex Shaders: These shaders process the individual vertices (points) of 3D models. Their primary role is to transform each vertex from 3D model space into the coordinates used to project it onto the 2D screen, and to compute per-vertex properties such as lighting and texture coordinates. In drone tech, this is crucial for accurately positioning and shaping 3D models of terrain, buildings, or drone components within a simulated environment or a reconstructed map.

- Fragment (or Pixel) Shaders: After the vertex shader has determined the position and other properties of vertices, the fragment shader takes over. It calculates the color and other attributes of each individual pixel that makes up the rendered surface. This is where textures are applied, lighting effects are computed (e.g., how sunlight reflects off a rooftop), and advanced visual effects like shadows, reflections, and atmospheric scattering are generated. For drone applications, fragment shaders are essential for rendering realistic ground textures in mapping, applying false colors to remote sensing data, or simulating complex atmospheric conditions for autonomous flight training.
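To make the two stages concrete, here is a minimal CPU-side sketch in Python of what each one computes. It is not GPU shader code, and the function names (`perspective`, `vertex_stage`, `fragment_stage`) are hypothetical, chosen only for illustration. It assumes an OpenGL-style projection matrix and simple Lambertian (diffuse) lighting, which is just one of many possible lighting models.

```python
import math

def perspective(fov_y_deg, aspect, near, far):
    """Build an OpenGL-style perspective projection matrix (row-major)."""
    f = 1.0 / math.tan(math.radians(fov_y_deg) / 2.0)
    return [
        [f / aspect, 0.0, 0.0, 0.0],
        [0.0, f, 0.0, 0.0],
        [0.0, 0.0, (far + near) / (near - far), 2.0 * far * near / (near - far)],
        [0.0, 0.0, -1.0, 0.0],
    ]

def vertex_stage(position, matrix):
    """Vertex-shader analogue: transform one 3D vertex to clip space.

    The final divide by w is normally done by the GPU after the vertex
    shader runs; it is included here so the result is directly usable.
    """
    x, y, z = position
    v = (x, y, z, 1.0)
    clip = [sum(matrix[r][c] * v[c] for c in range(4)) for r in range(4)]
    w = clip[3]
    return (clip[0] / w, clip[1] / w, clip[2] / w)

def fragment_stage(base_color, normal, light_dir):
    """Fragment-shader analogue: Lambertian diffuse shading for one pixel."""
    n_len = math.sqrt(sum(c * c for c in normal))
    l_len = math.sqrt(sum(c * c for c in light_dir))
    n_dot_l = sum(n * l for n, l in zip(normal, light_dir)) / (n_len * l_len)
    intensity = max(0.0, n_dot_l)  # surfaces facing away receive no light
    return tuple(c * intensity for c in base_color)

# Example: a vertex 5 units in front of the camera, and a rooftop pixel
# lit from directly above.
proj = perspective(90.0, 1.0, 0.1, 100.0)
ndc = vertex_stage((1.0, 0.0, -5.0), proj)
lit = fragment_stage((0.8, 0.3, 0.2), (0.0, 0.0, 1.0), (0.0, 0.0, 1.0))
```

On a GPU, the vertex function would run once per vertex and the fragment function once per covered pixel, in parallel across the whole scene.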

Beyond these fundamental types, other shaders like geometry shaders, tessellation shaders, and compute shaders further enhance the GPU’s capabilities, allowing for the generation of new geometry, adaptive detail levels, and general-purpose computation that can accelerate data processing and visualization within drone-centric software. This programmable approach allows drone innovators to push the boundaries of visual realism and analytical insight far beyond what was previously possible.

Shaders in Drone Mapping and 3D Reconstruction

Drone-based mapping and 3D reconstruction are revolutionizing industries from construction and agriculture to urban planning and environmental monitoring. The raw data collected by drones – countless images or LiDAR points – is transformed into highly detailed 2D maps and intricate 3D models. Shaders play a pivotal role in making this transformation visually meaningful and analytically powerful.

Visualizing Complex Geospatial Data

When a drone captures thousands of aerial images, photogrammetry software processes these to create dense point clouds and textured 3D meshes. Shaders are responsible for taking this data and rendering it into a coherent, interactive 3D environment. They manage how textures (derived from the original images) are meticulously draped over the mesh, how light interacts with the reconstructed surfaces to create shadows and highlights, and how intricate details of buildings, trees, and terrain features are faithfully reproduced. Without advanced fragment shaders, the vibrant, detailed textures of a city model or the subtle topographical variations of a landscape would appear flat and unconvincing. Vertex shaders ensure that the millions of points in a point cloud are accurately positioned and scaled, forming a spatially precise representation of the real world.
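As a rough illustration of how a texture derived from aerial images is looked up when it is draped over a mesh, the sketch below performs bilinear sampling on a tiny grayscale texture in plain Python. The function name `sample_bilinear` and the texture layout (a list of rows) are assumptions for this example; real GPUs do this in dedicated sampling hardware and use slightly different texel-center conventions.

```python
def sample_bilinear(texture, u, v):
    """Sample a grayscale texture at normalized coordinates (u, v) in [0, 1].

    texture is a list of rows, each a list of float intensities.
    The result blends the four texels nearest to the sample point.
    """
    height = len(texture)
    width = len(texture[0])
    # Clamp to the valid range, then scale to texel coordinates.
    x = min(max(u, 0.0), 1.0) * (width - 1)
    y = min(max(v, 0.0), 1.0) * (height - 1)
    x0, y0 = int(x), int(y)
    x1 = min(x0 + 1, width - 1)
    y1 = min(y0 + 1, height - 1)
    fx, fy = x - x0, y - y0
    top = texture[y0][x0] * (1.0 - fx) + texture[y0][x1] * fx
    bottom = texture[y1][x0] * (1.0 - fx) + texture[y1][x1] * fx
    return top * (1.0 - fy) + bottom * fy

# Example: a 2x2 texture sampled at its center blends all four texels.
tiny = [[0.0, 1.0],
        [1.0, 2.0]]
center = sample_bilinear(tiny, 0.5, 0.5)
```

A fragment shader would typically receive interpolated (u, v) coordinates from the vertex stage and use the sampled value as the surface color.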

Enhancing Photogrammetry Output

Photogrammetry outputs such as orthomosaics, digital elevation models (DEMs), and 3D models are not just static images but dynamic datasets. Shaders enable advanced visualization techniques that enhance the utility of these outputs. For instance, they can be programmed to display slope analyses by coloring areas based on their incline, or to highlight specific features like impervious surfaces or vegetation health using custom color ramps. In construction site monitoring, shaders can dynamically render overlays showing progress against a BIM model, or visually flag specific deviations from a planned design. This dynamic capability transforms static data into actionable intelligence, allowing stakeholders to interpret complex spatial information quickly and accurately.
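The slope-coloring idea can be sketched on the CPU as follows. This is an illustrative Python version, not shader code: `slope_degrees` and `slope_color` are hypothetical names, the slope is estimated with simple central differences on a DEM grid, and the 10 and 30 degree breakpoints of the green-yellow-red ramp are arbitrary assumptions.

```python
import math

def slope_degrees(dem, row, col, cell_size):
    """Estimate slope at an interior DEM cell using central differences.

    dem is a list of rows of elevations; cell_size is the ground spacing
    between neighboring cells, in the same unit as the elevations.
    """
    dz_dx = (dem[row][col + 1] - dem[row][col - 1]) / (2.0 * cell_size)
    dz_dy = (dem[row + 1][col] - dem[row - 1][col]) / (2.0 * cell_size)
    return math.degrees(math.atan(math.hypot(dz_dx, dz_dy)))

def slope_color(slope_deg, gentle=10.0, steep=30.0):
    """Map a slope to an RGB color: green (gentle) to yellow to red (steep)."""
    t = (slope_deg - gentle) / (steep - gentle)
    t = min(max(t, 0.0), 1.0)
    if t < 0.5:
        return (2.0 * t, 1.0, 0.0)      # green toward yellow
    return (1.0, 2.0 * (1.0 - t), 0.0)  # yellow toward red

# Example: a surface rising 1 m per 1 m cell is a 45 degree slope.
ramp_dem = [[0.0, 1.0, 2.0],
            [0.0, 1.0, 2.0],
            [0.0, 1.0, 2.0]]
color = slope_color(slope_degrees(ramp_dem, 1, 1, 1.0))
```

In a real viewer the same per-cell logic would run in a fragment shader, with the DEM supplied as a texture and the breakpoints as adjustable parameters.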

Shaders for Remote Sensing and Data Interpretation

Remote sensing, often performed by drones equipped with specialized sensors, involves collecting information about an object or area without making physical contact. This data often goes beyond the visible spectrum, encompassing multispectral, hyperspectral, and thermal imagery. Shaders are crucial for translating this abstract data into interpretable visual formats.

Multispectral and Hyperspectral Data Visualization

Drones carrying multispectral sensors capture data across several specific wavelength bands, revealing insights invisible to the human eye, such as plant health (for example, via NDVI, the Normalized Difference Vegetation Index, computed as (NIR - Red) / (NIR + Red)) or soil moisture. Hyperspectral sensors capture even more bands, providing a much richer spectral signature for detailed material identification. Shaders are used to apply false-color composites, where different wavelength bands are mapped to visible colors (red, green, blue) to highlight specific phenomena. For example, a shader can be programmed to render healthy vegetation in bright red and stressed vegetation in dull green, making patterns of agricultural stress immediately apparent. Shaders can also dynamically adjust color scales based on data ranges, letting users fine-tune their visual analysis. This allows researchers and practitioners to quickly identify areas of interest, assess environmental changes, and make informed decisions regarding crop management, water conservation, or ecological monitoring.
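The per-pixel arithmetic behind these visualizations is simple. The Python sketch below computes NDVI from near-infrared and red reflectance, builds a basic false-color composite, and maps NDVI onto an adjustable color ramp. The function names and the specific ramp colors are assumptions made for illustration; band values are assumed to be reflectances in the range 0 to 1.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index: (NIR - Red) / (NIR + Red)."""
    denom = nir + red
    if denom == 0.0:
        return 0.0  # avoid division by zero on empty pixels
    return (nir - red) / denom

def false_color_composite(nir, red, green):
    """Map NIR to the red channel, red to green, and green to blue."""
    return (nir, red, green)

def ndvi_ramp(value, lo=0.0, hi=1.0):
    """Blend from a 'stressed' brown to a 'healthy' green over [lo, hi].

    lo and hi play the role of the user-adjustable data range.
    """
    t = min(max((value - lo) / (hi - lo), 0.0), 1.0)
    stressed = (0.6, 0.4, 0.2)
    healthy = (0.0, 0.6, 0.0)
    return tuple(s + (h - s) * t for s, h in zip(stressed, healthy))

# Example: dense vegetation reflects strongly in NIR and weakly in red.
index = ndvi(0.5, 0.1)
pixel = ndvi_ramp(index, lo=0.2, hi=0.8)
```

Narrowing `lo` and `hi` stretches the ramp over a smaller NDVI range, which is the kind of interactive tuning a shader parameter makes cheap.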

Dynamic Feature Highlighting

Beyond static color mapping, shaders enable dynamic feature highlighting and thresholding. Imagine analyzing thermal imagery from a drone inspecting solar panels or infrastructure. A shader can be set to instantly highlight any area exceeding a certain temperature threshold with a vivid, attention-grabbing color, or to display a smooth gradient indicating temperature variations. Similarly, in environmental applications, shaders can dynamically color-code areas based on water quality parameters or pollutant concentrations, or classify different land cover types in real time or near real time. This ability to instantly transform raw sensor data into visually intuitive representations is a cornerstone of effective remote sensing analysis and decision-making for drone operators and data analysts.
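A thermal thresholding rule of this kind might look like the following Python sketch, shown per pixel as a fragment shader would evaluate it. The function name `thermal_color`, the blue-to-red gradient, and the magenta alert color are all illustrative assumptions.

```python
def thermal_color(temp_c, t_min, t_max, alert_threshold):
    """Color one thermal pixel.

    Temperatures at or above alert_threshold get a vivid alert color;
    everything else falls on a smooth blue (cool) to red (warm) gradient.
    """
    if temp_c >= alert_threshold:
        return (1.0, 0.0, 1.0)  # magenta: stands out against the gradient
    t = (temp_c - t_min) / (t_max - t_min)
    t = min(max(t, 0.0), 1.0)
    return (t, 0.0, 1.0 - t)

# Example: a solar panel scan displayed over 20 to 80 degrees C,
# with hotspots flagged at 85 degrees C and above.
normal_cell = thermal_color(50.0, 20.0, 80.0, 85.0)
hot_cell = thermal_color(90.0, 20.0, 80.0, 85.0)
```

Because the threshold is just a parameter, an operator can drag a slider and see the highlighted regions update immediately.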

Shaders in Autonomous Flight and AI Simulation

The development of truly autonomous drones hinges on rigorous testing and training, much of which occurs in sophisticated virtual environments. Furthermore, understanding how an AI system perceives its surroundings and makes decisions requires advanced visualization techniques. Shaders are indispensable in both these domains.

Realistic Environmental Simulation

For an AI system to learn to navigate complex environments, avoid obstacles, and perform intricate tasks, it needs to be trained in simulations that accurately mimic the real world. Shaders are the backbone of creating these realistic virtual environments. They are used to render:

- Detailed Terrain and Structures: Generating convincing landscapes, urban environments, and varying weather conditions (rain, fog, snow) with realistic lighting and shadows. Vertex and fragment shaders work in tandem to apply high-resolution textures, complex material properties, and dynamic weather effects, bringing the simulated world close enough to reality for the AI’s “eyes” (its simulated sensors) that training carries over to real flight.

- Dynamic Objects and Obstacles: Simulating moving vehicles, pedestrians, animals, and other drones with accurate visual properties and physical interactions. Shaders ensure these dynamic elements blend seamlessly into the environment and react realistically to simulated light and shadows, providing the AI with diverse and challenging scenarios for training.

- Sensor Noise and Imperfections: To truly prepare an AI for the real world, simulations must also account for sensor limitations. Shaders can be used to introduce realistic camera noise, lens distortions, or LiDAR beam scattering effects, ensuring the AI’s training data includes the imperfections it will encounter in actual flight.
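As one way to picture the sensor-imperfection step, the Python sketch below applies a single-coefficient radial lens distortion to normalized image coordinates and adds Gaussian noise to a pixel. Both functions and their names are assumptions for illustration; a simulator would typically run equivalent logic in a post-processing fragment shader, and real lens models use more coefficients.

```python
import random

def distort_radial(x, y, k1):
    """Apply one-term radial distortion to normalized image coordinates.

    (x, y) are measured from the image center. Negative k1 pulls points
    toward the center (barrel distortion); positive k1 pushes them outward.
    """
    r2 = x * x + y * y
    scale = 1.0 + k1 * r2
    return (x * scale, y * scale)

def add_gaussian_noise(pixel, sigma, rng):
    """Add zero-mean Gaussian noise to each channel, clamped to [0, 1]."""
    return tuple(min(1.0, max(0.0, c + rng.gauss(0.0, sigma))) for c in pixel)

# Example: a seeded generator keeps simulated noise reproducible
# between training runs.
rng = random.Random(42)
warped = distort_radial(1.0, 0.0, -0.2)
noisy = add_gaussian_noise((0.5, 0.5, 0.5), 0.05, rng)
```

Seeding the noise source is a deliberate choice here: it lets a failing test scenario be replayed with exactly the same simulated imperfections.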

This hyper-realistic simulation environment, powered by advanced shaders, allows developers to iterate rapidly on AI algorithms, test edge cases safely, and drastically reduce the cost and risk associated with real-world flight tests.

AI Perception and Decision Visualization

As AI-powered drones become more common, understanding their internal processes is crucial for trust and debugging. Shaders play a key role in visualizing what an AI “sees” and how it arrives at its decisions. For example:

- Object Detection Overlays: Shaders can render bounding boxes and labels around detected objects (people, vehicles, obstacles) on a real-time video feed or simulation display, showing the AI’s perception in action. Different colors or transparencies can indicate confidence levels or object classifications.

- Path Planning and Prediction: Shaders can visualize the AI’s planned flight path, predicted trajectories of dynamic obstacles, or areas identified as safe/unsafe for navigation. This provides immediate feedback on the AI’s decision-making process.

- Sensor Fusion Visualization: Drones often use multiple sensors (cameras, LiDAR, radar). Shaders can combine and display the fused data from these diverse inputs, for instance, overlaying LiDAR point clouds onto a camera feed, or visualizing the AI’s interpretation of sensor data differences to highlight potential discrepancies.
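The detection-overlay idea, where transparency reflects confidence, can be sketched per pixel as below. This is an illustrative Python version with hypothetical names (`blend_over`, `overlay_pixel`) and an assumed detection format (a dict with `box`, `color`, and `confidence`); it draws a one-pixel box outline and uses standard alpha blending.

```python
def blend_over(src_rgb, alpha, dst_rgb):
    """Standard alpha blend: src drawn over dst with opacity alpha."""
    return tuple(s * alpha + d * (1.0 - alpha) for s, d in zip(src_rgb, dst_rgb))

def overlay_pixel(frame_rgb, px, py, detections):
    """Return the display color for pixel (px, py).

    Each detection is a dict with:
      box: (x0, y0, x1, y1) inclusive pixel bounds
      color: RGB tuple for the object class
      confidence: value in [0, 1], used as the outline opacity
    Only the box outline is drawn; interior pixels keep the video color.
    """
    out = frame_rgb
    for det in detections:
        x0, y0, x1, y1 = det["box"]
        inside = x0 <= px <= x1 and y0 <= py <= y1
        on_border = inside and (px in (x0, x1) or py in (y0, y1))
        if on_border:
            out = blend_over(det["color"], det["confidence"], out)
    return out

# Example: a low-confidence detection produces a fainter outline.
dets = [{"box": (2, 2, 6, 6), "color": (1.0, 0.0, 0.0), "confidence": 0.5}]
edge = overlay_pixel((0.2, 0.2, 0.2), 2, 4, dets)
```

Evaluating this per pixel mirrors how a fragment shader would composite the overlay onto each video frame.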

By making complex AI processes visually interpretable, shaders empower developers and operators to better understand, validate, and refine the intelligence driving autonomous drones.

The Future of Shaders in Drone Tech

As drone technology continues its rapid advancement, the role of shaders will only grow in importance. Future developments will likely include more sophisticated real-time rendering capabilities for on-board processing, enabling drones themselves to perform complex visual analysis and decision-making with greater autonomy. Advances in neural rendering and real-time photogrammetry, where shaders will be instrumental, could allow drones to build and update 3D models of their environment in real time, adapting to changes as they occur. Furthermore, as augmented reality (AR) and virtual reality (VR) become more integrated into drone operations for control and data review, shaders will be critical in blending real-world drone feeds with virtual information overlays, creating immersive and intuitive user experiences. The continuous innovation in shader technology is not just about making things look prettier; it’s about unlocking deeper insights, enhancing operational safety, and extending what drones can do.