Unmanned Aerial Vehicles (UAVs) have moved beyond remote-controlled flight into an era of increasing autonomy. Yet beneath the advanced algorithms and sensor networks that govern modern drones lies a foundational layer of intelligence that can be described, metaphorically, as the “monkey brain.” The term is not a reference to primate anatomy; it describes the reactive, instinctual, and often rudimentary decision-making that characterizes early or less sophisticated autonomous systems. It captures the difficulty of moving from programmed responses to something closer to cognitive intelligence, and the limits of purely rule-based, stimulus-response mechanisms in complex environments. Understanding the concept helps in appreciating the progress being made in drone AI and the path toward more intelligent, adaptable aerial platforms.

The Dawn of Drone Autonomy: From Reflex to Reason

Early iterations of autonomous drones, and indeed many fundamental processes in contemporary systems, operate on principles akin to biological reflexes. These systems are designed to react swiftly to immediate sensory input according to predefined rules, executing tasks with precision within controlled parameters but often lacking the flexibility, foresight, or contextual understanding that characterizes higher-order intelligence. This forms the basis of what might be termed the “monkey brain” phase of drone autonomy.

Reactive Systems and Rule-Based Logic

At its core, a reactive system is built upon a simple stimulus-response model. A drone encounters an obstacle, and a pre-programmed rule dictates an immediate evasive maneuver: turn left, ascend, or halt. This logic is efficient for specific, well-defined scenarios and forms the backbone of many safety features, such as collision avoidance. Ultrasonic or infrared rangefinders, or a basic computer vision pipeline, detect proximity, and the flight controller executes a programmed action without deeper analysis of the broader environment or long-term consequences. Similarly, basic navigation might involve following GPS waypoints sequentially. Each waypoint reached triggers the next instruction, a linear progression dictated by a fixed plan rather than by dynamic interpretation of the environment. While effective for repetitive tasks in stable conditions, this rule-based approach struggles with novel situations, ambiguous data, or rapidly changing environments where a more nuanced understanding is required. The system’s “intelligence” is confined to its pre-coded responses; it cannot learn or adapt beyond its initial programming.
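The stimulus-response and waypoint logic described above can be sketched in a few lines of Python. This is a minimal illustration, not any real flight controller's API: the `Reading` type, the 2-metre threshold, the action names, and the 1-metre reach radius are all assumptions made for the example.

```python
import math
from dataclasses import dataclass

SAFE_DISTANCE_M = 2.0  # hypothetical clearance threshold


@dataclass
class Reading:
    """One snapshot of range sensors (hypothetical layout), in metres."""
    front_m: float
    left_m: float
    right_m: float


def reactive_action(r: Reading) -> str:
    """Map the latest reading straight to an action; no memory, no planning."""
    if r.front_m >= SAFE_DISTANCE_M:
        return "forward"
    if r.left_m >= SAFE_DISTANCE_M:
        return "turn_left"
    if r.right_m >= SAFE_DISTANCE_M:
        return "turn_right"
    return "halt"


def next_waypoint_index(pos, waypoints, index, reach_radius_m=1.0):
    """Advance to the next waypoint once the current one is within reach."""
    if index < len(waypoints) and math.dist(pos, waypoints[index]) <= reach_radius_m:
        return index + 1
    return index


print(reactive_action(Reading(front_m=1.0, left_m=3.0, right_m=3.0)))  # turn_left
```

Every decision here depends only on the current reading or the current position. Nothing in the code remembers what was tried before or looks ahead, which is exactly the limitation the paragraph describes.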

Sensor Dependence and Environmental Constraints

The “monkey brain” of a drone is highly dependent on its immediate sensory input, much like an animal relying solely on its current perceptions. While advanced sensors like lidar, radar, and multi-spectral cameras provide a rich stream of data, the interpretation layer in rudimentary autonomous systems often processes this data in isolation. For example, a drone might detect an object, but without broader contextual awareness, it might not differentiate between a static tree, a moving bird, or a falling leaf. Its response will be generic, based purely on proximity. Furthermore, environmental constraints significantly impact these systems. Poor lighting, fog, rain, or cluttered electromagnetic environments can degrade sensor performance, leading to unreliable input and consequently, unreliable reactive behavior. A system programmed to avoid obstacles within a certain range might falter if that range is obscured, leading to unpredictable or unsafe maneuvers. The “monkey brain” struggles to infer, predict, or reason under uncertainty, instead defaulting to its programmed responses or simply failing when data is insufficient or contradictory.
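To make the point about degraded input concrete, the sketch below assumes a rangefinder that reports a dropped reading as NaN (a common convention, but an assumption here, as are the thresholds and function names). A naive proximity rule silently treats the missing reading as "clear", while a guarded version refuses to trust it.

```python
import math

SAFE_DISTANCE_M = 2.0   # hypothetical clearance threshold
MAX_RANGE_M = 10.0      # hypothetical maximum valid sensor range


def naive_is_clear(range_m: float) -> bool:
    """Proximity-only rule. Any comparison with NaN is False, so a
    dropped reading (NaN) is reported as clear."""
    return not (range_m < SAFE_DISTANCE_M)


def guarded_is_clear(range_m: float) -> bool:
    """Same rule, but an invalid or out-of-range reading counts as not clear."""
    if math.isnan(range_m) or not (0.0 < range_m <= MAX_RANGE_M):
        return False
    return range_m >= SAFE_DISTANCE_M


dropped = float("nan")  # e.g. no echo returned in fog or rain
print(naive_is_clear(dropped), guarded_is_clear(dropped))  # True False
```

The guard does not make the system any smarter; it only makes the failure explicit. Deciding what to do when the path cannot be confirmed clear still requires reasoning under uncertainty that a purely reactive layer does not have.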

Unpacking the “Monkey Brain” Metaphor in UAVs

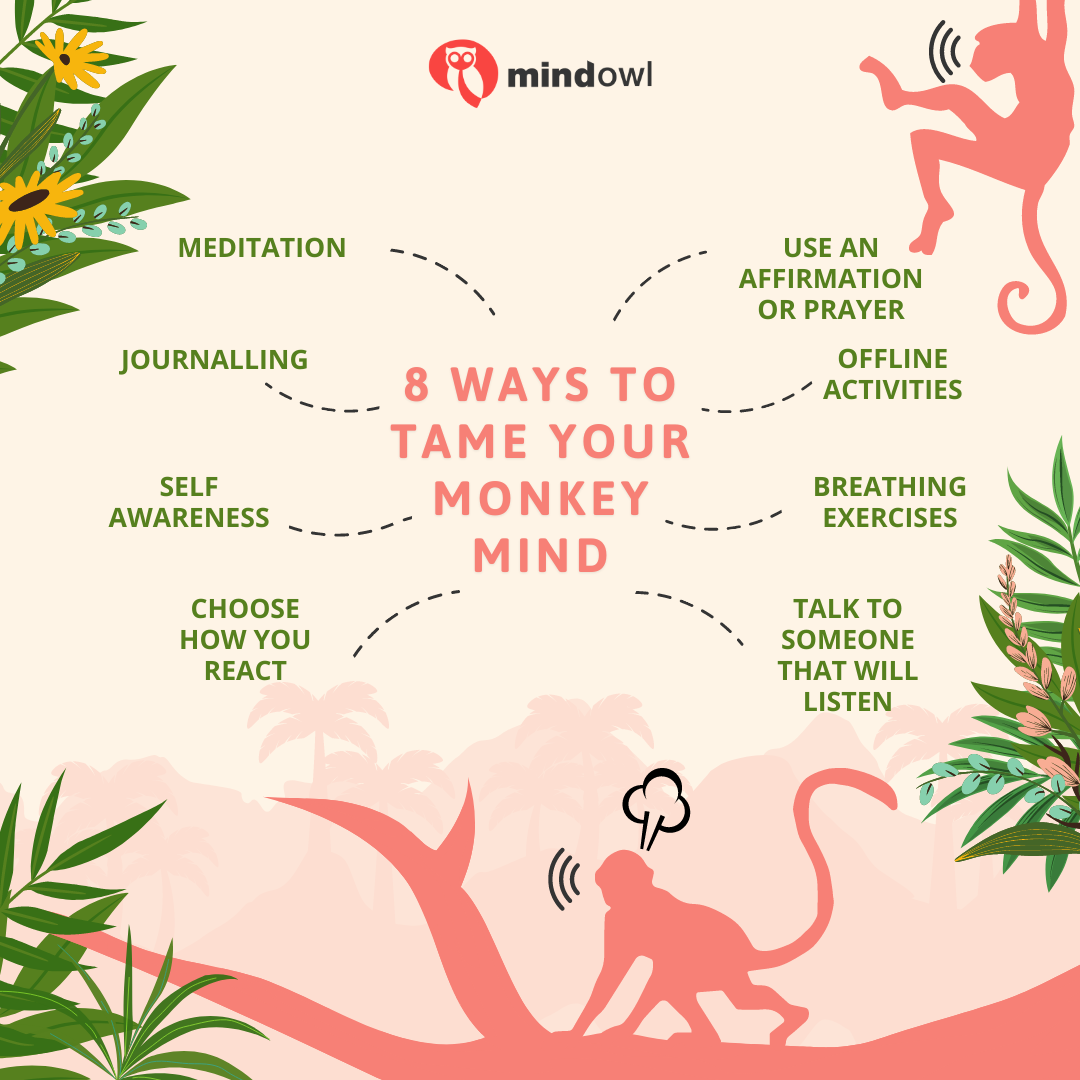

The metaphor of a “monkey brain” serves to illustrate the inherent limitations of these foundational autonomous systems. While functional and essential for basic operations, they represent a stage of intelligence focused on the immediate task at hand, without strategic planning, learning from mistakes, or an understanding of complex causality.

Limitations of Primitive Decision-Making

Primitive decision-making in drones manifests as a lack of sophisticated problem-solving and an inability to generalize. A drone might be expertly programmed to navigate a specific indoor environment, avoiding known obstacles with precision. However, move that drone to a slightly different, unfamiliar indoor environment, and its performance may degrade significantly. It cannot abstract lessons learned in one scenario and apply them flexibly to another, because its “understanding” is tied to specific sensor patterns and predefined responses rather than a deeper, adaptable model of the world. Such systems struggle with ambiguity, novel threats, or situations that require solutions beyond their programmed repertoire. They might get stuck in local optima, repeatedly attempting a maneuver that consistently fails, or oscillate between two conflicting reactive rules with no higher-level logic to resolve the impasse. This reactive loop, a hallmark of the “monkey brain,” consumes resources and can compromise mission effectiveness.
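The oscillation between two conflicting rules is easy to reproduce in a toy simulation. The setup below is entirely hypothetical: a corridor 1.6 m wide, a drone at lateral offset `y` from the centreline, and two rules that each demand at least 1.0 m of clearance from a wall. The corridor is too narrow for both rules to be satisfied at once.

```python
def lateral_command(y: float, half_width: float = 0.8, threshold: float = 1.0) -> int:
    """Return +1 (move right), -1 (move left) or 0, from the current gaps only."""
    left_gap = y + half_width    # distance to the left wall
    right_gap = half_width - y   # distance to the right wall
    if left_gap < threshold:     # rule A: too close to the left wall
        return +1
    if right_gap < threshold:    # rule B: too close to the right wall
        return -1
    return 0


def simulate(steps: int = 6, step_m: float = 0.3) -> list:
    """Apply the rules repeatedly, starting from the centreline."""
    y, commands = 0.0, []
    for _ in range(steps):
        c = lateral_command(y)
        commands.append(c)
        y += c * step_m
    return commands


print(simulate())  # [1, -1, 1, -1, 1, -1]
```

Each rule is locally reasonable, yet together they produce endless side-to-side motion. Breaking the loop takes something the rules themselves do not contain, such as memory of recent commands or a planner that recognises the corridor is too narrow for the configured margin.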

The Cost of “Instinctual” Flight

Relying solely on “instinctual” or reactive flight comes with tangible costs and risks. Energy consumption is often higher as the drone may perform inefficient maneuvers, overcorrecting or taking suboptimal paths due to its lack of foresight. Safety can be compromised when systems cannot anticipate potential dangers beyond their immediate sensor horizon. For instance, a drone might successfully avoid a direct collision but fly into a less obvious hazard just seconds later because its planning horizon is too short. Operational efficiency also suffers, as missions might require more human oversight, frequent manual intervention, or repeated attempts to complete complex tasks that a more intelligent system could accomplish seamlessly. Furthermore, data collection for tasks like mapping or inspection can be less optimal, with gaps or redundancies, if the drone’s flight path is purely reactive rather than strategically planned based on comprehensive environmental understanding. The “monkey brain” makes drones proficient at individual actions but less adept at holistic mission execution and adaptive performance.

Bridging the Gap: Evolving Beyond Basic Intelligence

The limitations of the “monkey brain” in drone autonomy have spurred significant innovation, pushing the boundaries of AI and machine learning to cultivate more sophisticated, adaptable, and truly intelligent aerial platforms. The transition involves moving from reactive responses to proactive planning, from sensor data interpretation to contextual understanding, and from rigid programming to continuous learning.

Machine Learning and Neural Networks

The rapid advancement of machine learning (ML) and artificial neural networks (ANNs) has been instrumental in elevating drone intelligence beyond basic reactivity. Unlike rule-based systems, ML models can learn from large datasets, identifying complex patterns and relationships that are difficult or impossible to hard-code. Deep learning, a subset of ML, uses multi-layered neural networks to process raw sensor data, such as high-resolution camera feeds, and extract meaningful features for object recognition, scene understanding, and semantic segmentation. This allows a drone not just to detect an object but to classify what it is (e.g., a human, a car, a tree) and estimate its state (moving, stationary, damaged). This capability is the foundation for more nuanced decision-making: within the limits of its training data, a drone can distinguish a safe landing zone from uneven terrain, or a familiar face from a potential threat, moving beyond mere proximity alerts to informed interpretation of its environment.

Contextual Awareness and Predictive Analytics

Moving beyond immediate sensor input, modern drone AI is increasingly focused on developing contextual awareness. This involves fusing data from multiple sensors (visual, thermal, lidar, GPS, IMU) over time, alongside external information like weather forecasts, digital terrain models, and mission objectives, to build a comprehensive picture of the operational environment. A drone with contextual awareness doesn’t just see a building; to the extent its data allows, it can also assess the building’s condition and purpose, the presence of people, and how its own flight path relates to prevailing winds. Complementing this is predictive analytics, where AI models forecast future events based on current and historical data. For example, by observing movement patterns, a drone can predict the trajectory of a moving target or anticipate obstacles about to enter its path, allowing for proactive rather than reactive evasive maneuvers. This foresight is a critical leap beyond the “monkey brain,” enabling optimized flight paths, more efficient use of resources, and significantly enhanced safety by avoiding potential issues before they become immediate threats.
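As a minimal illustration of trajectory prediction, the sketch below assumes the simplest possible motion model: both the drone and the other object keep a constant velocity. Under that assumption the time and distance of closest approach have a closed form. Real systems would typically use filtered state estimates and richer motion models; the function names and the 2D example values are hypothetical.

```python
import math


def time_of_closest_approach(rel_pos, rel_vel) -> float:
    """Time in seconds (never negative) at which two constant-velocity
    objects are closest, given their relative position and velocity."""
    speed_sq = sum(v * v for v in rel_vel)
    if speed_sq == 0.0:
        return 0.0  # no relative motion: the gap never changes
    t = -sum(p * v for p, v in zip(rel_pos, rel_vel)) / speed_sq
    return max(t, 0.0)  # a negative time means they are already diverging


def predicted_miss(drone_pos, drone_vel, obj_pos, obj_vel):
    """Return (time of closest approach, distance at that time)."""
    rel_pos = [o - d for o, d in zip(obj_pos, drone_pos)]
    rel_vel = [o - d for o, d in zip(obj_vel, drone_vel)]
    t = time_of_closest_approach(rel_pos, rel_vel)
    closest = [p + v * t for p, v in zip(rel_pos, rel_vel)]
    return t, math.sqrt(sum(c * c for c in closest))


# Head-on encounter: 10 m apart, closing at 2 m/s.
print(predicted_miss((0.0, 0.0), (1.0, 0.0), (10.0, 0.0), (-1.0, 0.0)))  # (5.0, 0.0)
```

A purely reactive rule would respond only once the object came within its proximity threshold. With even this crude prediction, the drone knows five seconds in advance that the current courses intersect and can adjust early and gently.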

The Future of Autonomous Flight: Towards Cognitive Drones

The ongoing advancements in AI, machine learning, and sensor fusion are paving the way for a new generation of cognitive drones. These platforms will not merely execute pre-programmed tasks or react to immediate stimuli, but will possess higher-order intelligence capable of abstract reasoning, long-term planning, and continuous adaptation in highly dynamic and unpredictable environments.

Advanced Decision-Making and Multi-Agent Collaboration

The future of autonomous flight hinges on advanced decision-making frameworks that emulate human-like cognitive abilities. This includes reinforcement learning for policy optimization, where drones learn optimal behaviors through trial and error in simulated or real-world environments, continuously refining their strategies. Symbolic AI, combined with neural networks, will allow drones to reason about complex situations, weigh competing objectives (e.g., speed vs. stealth, energy efficiency vs. data quality), and make trade-offs. This holistic approach moves beyond localized, short-term decision loops to encompass mission-level strategy. Furthermore, multi-agent collaboration is a frontier where individual cognitive drones will work in concert, sharing data, coordinating actions, and collectively achieving complex goals far beyond the capabilities of a single unit. Swarms of drones could autonomously map vast areas, conduct synchronized inspections, or perform intricate search and rescue operations, with each drone making context-aware decisions that contribute to the collective intelligence and efficiency of the group.
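The trial-and-error learning mentioned above can be shown with tabular Q-learning on a deliberately tiny problem. Everything in this sketch is an assumption chosen for illustration: a one-dimensional corridor of five cells, a goal in the last cell, a small per-step penalty, and the hyperparameter values. It is far simpler than anything a real drone would use, but the update rule is the standard Q-learning one.

```python
import random

N_STATES, GOAL = 5, 4   # cells 0..4, goal in the last cell
ACTIONS = (-1, +1)      # action index 0 = move left, 1 = move right


def step(state: int, action_idx: int):
    """Toy environment: move one cell, clamped to the corridor."""
    nxt = min(max(state + ACTIONS[action_idx], 0), N_STATES - 1)
    reward = 1.0 if nxt == GOAL else -0.01  # small cost for every extra step
    return nxt, reward, nxt == GOAL


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn a Q-table by epsilon-greedy trial and error."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(50):  # cap on episode length
            explore = rng.random() < epsilon or q[state][0] == q[state][1]
            a = rng.randrange(2) if explore else q[state].index(max(q[state]))
            nxt, reward, done = step(state, a)
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][a] += alpha * (target - q[state][a])  # Q-learning update
            state = nxt
            if done:
                break
    return q


def greedy_policy(q):
    """Best known action index for each non-goal cell."""
    return [row.index(max(row)) for row in q[:GOAL]]


print(greedy_policy(train()))  # [1, 1, 1, 1], i.e. always move right
```

No rule ever tells the agent that the goal lies to the right; the policy emerges from rewards alone. Scaling this idea to continuous states, noisy sensors, and safety constraints is where most of the practical difficulty lies.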

Ethical AI and Human-Machine Teaming

As drones become more intelligent, the integration of ethical considerations into their AI architecture becomes paramount. This involves developing algorithms that can interpret and adhere to ethical guidelines, legal frameworks, and societal norms, especially in sensitive applications like surveillance, law enforcement, or conflict zones. Principles such as fairness, accountability, and transparency will be embedded into their decision-making processes, ensuring that autonomous actions are not only effective but also responsible. Concurrently, the paradigm of human-machine teaming is evolving. Future cognitive drones will not necessarily replace human operators but will augment them, acting as intelligent assistants capable of understanding complex commands, providing insightful data analysis, and even suggesting optimal strategies. This symbiotic relationship will leverage the drone’s computational prowess and sensor capabilities with human intuition, ethical judgment, and creative problem-solving, leading to unprecedented levels of operational efficiency and safety across various industries, from logistics and agriculture to disaster response and scientific exploration. The journey beyond the “monkey brain” is one toward a future where drones are not just tools, but intelligent, collaborative partners.