The term “Docker daemon” might sound like something out of a science fiction novel, or like an obscure piece of infrastructure. For anyone involved in modern software development, deployment, or IT operations, however, understanding the Docker daemon is fundamental. It is the background engine behind the Docker ecosystem: it manages containers, images, networks, and volumes, and without it the containerization workflow that Docker popularized would not function. This article examines the Docker daemon’s architecture, its core functions, and why it is such an important part of the containerization landscape.

The Heart of Docker: Understanding the Daemon’s Role

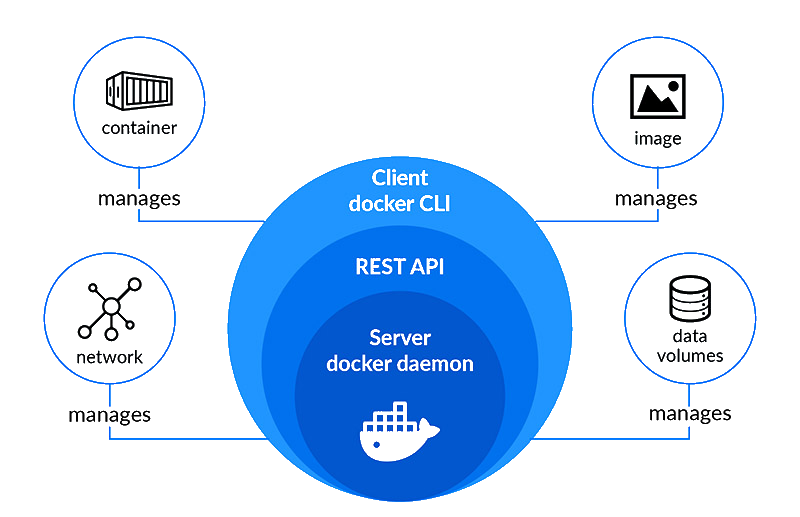

At its core, the Docker daemon, often referred to as dockerd, is a persistent background process that runs on the host machine. Its primary responsibility is to listen for Docker API requests and manage the lifecycle of Docker objects, including containers, images, networks, and volumes. Think of it as the central nervous system of Docker, orchestrating all the actions that users initiate through the Docker client.

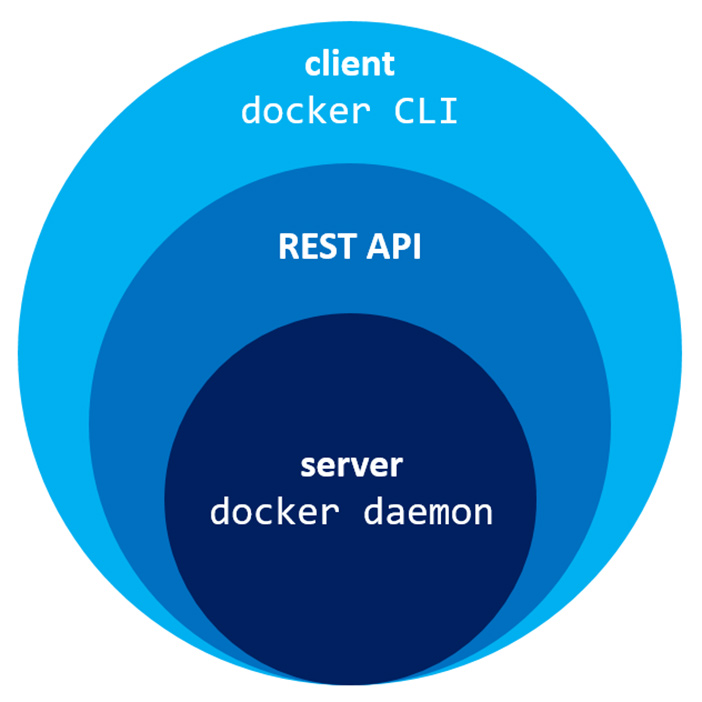

Architecture and Communication

The Docker daemon is written in Go and usually runs as a system service. It is designed to be a long-running, reliable component, so that containerized applications can be built, shipped, and run consistently across different environments.

- The Docker Client: Users interact with Docker primarily through the Docker client (the `docker` command-line interface, or CLI). When a user runs a command such as `docker run`, `docker build`, or `docker ps`, the client translates it into requests to the Docker daemon’s REST API. This communication typically happens over a Unix socket on Linux and macOS, or a named pipe on Windows.

- The Docker Daemon (`dockerd`): The daemon receives these API requests, interprets them, and takes the necessary actions: pulling images from registries, creating and starting containers, managing their networks, and persisting their data.

- The Docker Engine: The Docker daemon is the core component of the Docker Engine, which also includes the REST API and the `docker` CLI client. The Engine provides the tools and runtime environment for building and running containerized applications.
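To make the client-to-daemon path concrete, here is a minimal sketch of what a client does under the hood: it opens the daemon’s Unix socket and speaks plain HTTP over it. The socket path `/var/run/docker.sock` is the conventional Linux default and is an assumption here, and the helper names (`UnixSocketHTTPConnection`, `daemon_get`) are made up for illustration. It uses only the Python standard library.

```python
import http.client
import json
import socket

# Conventional default on Linux; your installation may use a different path.
DOCKER_SOCKET = "/var/run/docker.sock"


class UnixSocketHTTPConnection(http.client.HTTPConnection):
    """HTTP connection that dials a Unix domain socket instead of TCP."""

    def __init__(self, socket_path, timeout=5.0):
        # "localhost" only fills the Host header; no TCP connection is made.
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self):
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def daemon_get(path, socket_path=DOCKER_SOCKET):
    """Send GET <path> to the daemon and return (status, decoded body)."""
    conn = UnixSocketHTTPConnection(socket_path)
    try:
        conn.request("GET", path)
        response = conn.getresponse()
        body = response.read().decode("utf-8")
        content_type = response.getheader("Content-Type", "")
        if content_type.startswith("application/json"):
            return response.status, json.loads(body)
        return response.status, body
    finally:
        conn.close()
```

On a host with a running daemon and sufficient permissions on the socket, `daemon_get("/version")` should return the daemon’s version information, and `daemon_get("/containers/json")` returns roughly the data that `docker ps` displays.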

Key Responsibilities of the Daemon

The Docker daemon is a busy entity, constantly managing various aspects of the containerized environment. Its responsibilities are broad and critical to the functioning of Docker:

- Container Management: This is arguably the daemon’s most significant role. It handles the creation, starting, stopping, pausing, restarting, and deletion of containers. It ensures that containers are isolated from each other and the host system, managing their resources like CPU, memory, and I/O.

- Image Management: Docker images are the blueprints for containers. The daemon is responsible for pulling images from Docker registries (like Docker Hub), building new images from Dockerfiles, and managing the storage and organization of these images on the host.

- Network Management: Containers need to communicate with each other and with the outside world. The daemon sets up and manages virtual networks for containers, enabling them to connect and exchange data. This includes creating bridge networks, host networks, and overlay networks for distributed environments.

- Volume Management: Persistent data is crucial for many applications. The daemon manages Docker volumes, which are the preferred mechanism for persisting data generated by and used by Docker containers. Because volumes are created and managed by Docker rather than tied to a specific host path, they are easier to back up and migrate than bind mounts and are generally the recommended choice for stateful data.

- API Endpoint: As mentioned, the daemon exposes a REST API that the Docker client and other tools use to interact with the Docker host. This API is the gateway to all Docker functionalities.

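- Security: The daemon plays a role in enforcing security policies and managing access to Docker resources. By default, access to the local API is controlled by the permissions on its socket; remote access can be authenticated with TLS client certificates, and finer-grained authorization can be added through authorization plugins.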
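The container management responsibility maps fairly directly onto the daemon’s REST API. The sketch below lists the Engine API endpoints behind the main lifecycle actions and builds a request body for creating a container with CPU and memory limits. The helper names (`lifecycle_request`, `create_body`) and the example image are illustrative assumptions; the code only constructs requests and does not send them.

```python
# Lifecycle actions mapped to (HTTP method, path template) in the Engine API.
LIFECYCLE_ENDPOINTS = {
    "create": ("POST", "/containers/create"),
    "start": ("POST", "/containers/{id}/start"),
    "pause": ("POST", "/containers/{id}/pause"),
    "unpause": ("POST", "/containers/{id}/unpause"),
    "stop": ("POST", "/containers/{id}/stop"),
    "restart": ("POST", "/containers/{id}/restart"),
    "remove": ("DELETE", "/containers/{id}"),
}


def lifecycle_request(action, container_id=""):
    """Return the (method, path) the daemon expects for a lifecycle action."""
    method, template = LIFECYCLE_ENDPOINTS[action]
    return method, template.format(id=container_id)


def create_body(image, command, memory_bytes, cpus):
    """Build a JSON-serializable body for POST /containers/create."""
    return {
        "Image": image,
        "Cmd": list(command),
        "HostConfig": {
            # Memory limit in bytes; enforced by the kernel through cgroups.
            "Memory": memory_bytes,
            # CPU quota in units of 1e-9 CPUs (0.5 CPU -> 500_000_000).
            "NanoCpus": int(cpus * 1_000_000_000),
        },
    }
```

For example, `lifecycle_request("stop", "abc123")` yields `("POST", "/containers/abc123/stop")`, which is the request a client issues when a user stops a container.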

Inside the Daemon: Core Components and Functionality

To better understand how the Docker daemon achieves its responsibilities, it’s helpful to look at its internal workings and the technologies it leverages.

The Runtime: Containerd and Runc

Historically, Docker had its own built-in container runtime. However, over time, the Docker project has evolved to leverage more standardized and modular components. The Docker daemon now relies on containerd as its high-level container runtime.

- containerd: This is an industry-standard core container runtime that manages the complete container lifecycle on its host system, including image transfer and storage, container execution and supervision, and low-level storage and network attachments. It acts as an intermediary between the Docker daemon and the lower-level runtime.

- runc: `runc` is the low-level command-line tool for spawning and running containers according to the Open Container Initiative (OCI) specification. When containerd needs to create a container, it invokes runc through a small shim process. runc then sets up the container’s root filesystem, namespaces, and cgroups, which are fundamental to container isolation.

This modular approach, where the Docker daemon orchestrates via containerd, which in turn uses runc, allows for greater flexibility, standardization, and the adoption of industry best practices in container management.
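To give a feel for what reaches the bottom of this chain, the sketch below builds an abridged OCI runtime configuration of the kind runc reads from a bundle’s `config.json`. The field names follow the OCI runtime specification, but the function name and the chosen values are assumptions for illustration, and the result is deliberately incomplete: it is not what Docker generates verbatim and may not be sufficient on its own to start a container.

```python
import json


def minimal_oci_config(args, rootfs="rootfs", memory_limit_bytes=None):
    """Build an abridged OCI runtime config as a plain dictionary."""
    config = {
        "ociVersion": "1.0.2",
        # The process to run inside the container.
        "process": {
            "args": list(args),
            "cwd": "/",
            "user": {"uid": 0, "gid": 0},
        },
        # Root filesystem, relative to the bundle directory.
        "root": {"path": rootfs, "readonly": True},
        "linux": {
            # Each namespace isolates one kind of kernel resource.
            "namespaces": [
                {"type": kind}
                for kind in ("pid", "network", "ipc", "uts", "mount")
            ],
        },
    }
    if memory_limit_bytes is not None:
        # Resource limits are applied through cgroups.
        config["linux"]["resources"] = {"memory": {"limit": memory_limit_bytes}}
    return config


def to_config_json(config):
    """Serialize the config the way it would be written to config.json."""
    return json.dumps(config, indent=2)
```

The namespaces list and the `resources` section are where the isolation described above becomes visible: the former tells the runtime which kernel namespaces to create, the latter which cgroup limits to apply.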

Image Storage and Distribution

The Docker daemon is responsible for managing Docker images, which are the read-only templates that containers are created from.

- Image Layers: Docker images are built in layers. Instructions in a Dockerfile that modify the filesystem (e.g., `RUN`, `COPY`, `ADD`) each create a new layer. This layering mechanism is efficient because common layers can be shared across multiple images, saving disk space and reducing download times. The daemon manages the storage and retrieval of these layers.

- Image Registries: The daemon interacts with image registries to pull and push images. Docker Hub is the default public registry, but users can also configure private registries. The daemon handles the authentication, download, and caching of images from these registries.
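Layer sharing is easiest to see with a toy content-addressed store. In the sketch below, each layer is identified by the SHA-256 digest of its content, so two images built on the same base store that base only once. The `LayerStore` class is hypothetical and heavily simplified: real layers are filesystem archives, and the daemon’s storage driver does considerably more work than a dictionary lookup.

```python
import hashlib


class LayerStore:
    """Toy content-addressed store: identical layers are kept only once."""

    def __init__(self):
        self.blobs = {}   # digest -> layer content
        self.images = {}  # image name -> ordered list of layer digests

    def add_image(self, name, layers):
        """Register an image made of the given layer contents (bytes)."""
        digests = []
        for content in layers:
            digest = "sha256:" + hashlib.sha256(content).hexdigest()
            # setdefault stores the blob only if this digest is new.
            self.blobs.setdefault(digest, content)
            digests.append(digest)
        self.images[name] = digests
        return digests

    def shared_layers(self, first, second):
        """Return the digests that both images have in common."""
        in_second = set(self.images[second])
        return [d for d in self.images[first] if d in in_second]
```

Adding two images that start from the same base layer leaves three blobs in the store rather than four, which is the disk and download saving described above.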

Networking

Container networking is a complex but crucial aspect of containerization. The Docker daemon provides a robust networking stack.

- Bridge Networks: By default, Docker creates a `bridge` network. When containers are connected to this network, they can communicate with each other using their IP addresses. The daemon manages the network interfaces, IP addressing, and routing for these bridge networks.

- Host Networks: Containers can also be configured to use the host’s network directly, meaning they share the same network namespace as the host. This offers simpler network configuration but sacrifices isolation.

- Overlay Networks: In multi-host Docker deployments (using Docker Swarm), overlay networks allow containers on different hosts to communicate as if they were on the same network. The daemon plays a role in setting up and managing these distributed network environments. Kubernetes clusters solve the same problem with their own network plugins rather than Docker’s overlay driver.
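The IP addressing part of bridge networking can be sketched as a small address allocator. The example below assumes the commonly seen default bridge subnet `172.17.0.0/16`, reserves the first usable address for the bridge itself, and hands out the following addresses to containers in order. The `BridgeNetwork` class is hypothetical; Docker’s real address management is more involved (it reuses released addresses and supports multiple pools), so treat this as an illustration of the idea only.

```python
import ipaddress


class BridgeNetwork:
    """Toy address allocator for a single bridge network."""

    def __init__(self, subnet="172.17.0.0/16"):
        self.subnet = ipaddress.ip_network(subnet)
        self._free = iter(self.subnet.hosts())
        # The first usable address goes to the bridge (the gateway).
        self.gateway = next(self._free)
        self.assigned = {}  # container name -> IP address

    def connect(self, container_name):
        """Attach a container and return its IP address on this network."""
        if container_name in self.assigned:
            return self.assigned[container_name]
        address = next(self._free, None)
        if address is None:
            raise RuntimeError("no free addresses left in " + str(self.subnet))
        self.assigned[container_name] = address
        return address
```

With the assumed subnet, the gateway is `172.17.0.1` and the first two connected containers receive `172.17.0.2` and `172.17.0.3`, which they can then use to reach each other.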

Volumes and Data Persistence

While containers are ephemeral by design, applications often need to store data persistently. Docker volumes are the primary mechanism for this.

- Volume Driver Interface: The Docker daemon exposes a volume driver interface, allowing for the integration of various storage backends. Docker comes with a default `local` volume driver, but integrations with cloud storage providers or enterprise storage solutions are possible.

- Lifecycle Management: The daemon is responsible for creating, attaching, detaching, and removing volumes, ensuring that data is correctly associated with the containers that need it.
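As a rough illustration of the volume lifecycle, the sketch below builds the two pieces of JSON a client would send to the daemon: a body for `POST /volumes/create`, and a mount entry that attaches the volume to a container through `HostConfig.Mounts` when the container is created. The function names and the example volume name are assumptions; the code only builds the payloads and does not contact a daemon.

```python
def volume_create_body(name, driver="local", driver_opts=None):
    """Body for POST /volumes/create; 'local' is Docker's built-in driver."""
    return {
        "Name": name,
        "Driver": driver,
        "DriverOpts": dict(driver_opts or {}),
    }


def volume_mount(volume_name, target, read_only=False):
    """Mount entry for HostConfig.Mounts in a container-create request."""
    return {
        "Type": "volume",
        "Source": volume_name,
        "Target": target,
        "ReadOnly": read_only,
    }


def container_with_volume(image, volume_name, target):
    """Container-create body that attaches one named volume."""
    return {
        "Image": image,
        "HostConfig": {"Mounts": [volume_mount(volume_name, target)]},
    }
```

Because the volume is created separately from any container, removing the container leaves the data in place; the volume is only deleted by an explicit request such as `DELETE /volumes/{name}`.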

Daemon Configuration and Security Considerations

Like any critical system component, the Docker daemon requires careful configuration and attention to security.

Configuration Options

The Docker daemon can be configured through a configuration file (typically /etc/docker/daemon.json on Linux) or via command-line flags. These configurations allow administrators to customize various aspects of the daemon’s behavior, including:

- Storage Driver: The daemon can use different storage drivers for managing the storage of images and containers. `overlay2` is the default and generally recommended option on current Linux distributions; older drivers such as `aufs` and `devicemapper` have been deprecated.

- Network Settings: Customizing network bridge IPs, DNS settings, and other network-related parameters.

- Logging Drivers: Configuring how container logs are collected and forwarded (e.g., `json-file`, `syslog`, `journald`).

- Registry Mirrors: Specifying local mirrors for Docker registries to speed up image pulls.

- Security Options: Enabling or disabling certain security features, such as user namespace remapping.
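The options above correspond to keys in `daemon.json`. The sketch below assembles an example configuration as a Python dictionary and serializes it to JSON. The keys are standard daemon options, but every value is a placeholder assumption: the mirror URL and DNS address are documentation-style examples, and the log rotation settings are arbitrary. Adapt them before using anything like this on a real host.

```python
import json


def build_daemon_config(registry_mirror=None, dns_servers=None):
    """Assemble an example daemon.json as a dictionary."""
    config = {
        # Storage driver for image and container layers.
        "storage-driver": "overlay2",
        # Default logging driver, with rotation so logs cannot grow unbounded.
        "log-driver": "json-file",
        "log-opts": {"max-size": "10m", "max-file": "3"},
        # Map container users to unprivileged host users.
        "userns-remap": "default",
    }
    if registry_mirror:
        config["registry-mirrors"] = [registry_mirror]
    if dns_servers:
        config["dns"] = list(dns_servers)
    return config


def render_daemon_json(config):
    """Serialize the configuration in the form the daemon reads from disk."""
    return json.dumps(config, indent=2, sort_keys=True) + "\n"
```

Calling `render_daemon_json(build_daemon_config("https://mirror.example.com", ["192.0.2.53"]))` produces text that could be reviewed and written to `/etc/docker/daemon.json`; the daemon typically needs to be restarted for changes to take effect.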

Security Best Practices

The Docker daemon runs with elevated privileges and has access to the host system’s resources. Therefore, securing the daemon is paramount.

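- Limit Daemon Access: Restrict access to the Docker daemon’s API socket. On Linux, this means ensuring the socket has appropriate file permissions and is not world-writable. Anyone who can write to the socket effectively has root access to the host, so membership of the `docker` group should be granted sparingly.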

- Use TLS for Remote API Access: If the Docker daemon’s API needs to be accessed remotely, it must be secured with TLS certificates to prevent unauthorized access and man-in-the-middle attacks.

- Run Containers as Non-Root: Configure containers to run with non-root users whenever possible. This reduces the potential impact if a container is compromised.

- User Namespace Remapping: This feature allows containers to run with user IDs that are mapped to different user IDs on the host, enhancing security by isolating container users from host users.

- Keep Docker Updated: Regularly update the Docker daemon and its components to benefit from the latest security patches and features.

- Scan Images for Vulnerabilities: Utilize image scanning tools to identify and mitigate vulnerabilities in the container images being used.
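The first of these practices is easy to check mechanically. The sketch below inspects a file mode and reports whether the path is actually a socket and whether it is accessible to all users. The function names are hypothetical and the default path `/var/run/docker.sock` is the conventional Linux location, assumed here; the check is a small illustration, not a substitute for a full security audit.

```python
import os
import stat


def socket_permission_findings(mode):
    """Return a list of problems found in a socket's file mode."""
    findings = []
    if not stat.S_ISSOCK(mode):
        findings.append("path is not a socket")
    if mode & stat.S_IWOTH:
        findings.append("socket is world-writable")
    if mode & stat.S_IROTH:
        findings.append("socket is world-readable")
    return findings


def audit_socket(path="/var/run/docker.sock"):
    """Stat the socket at `path` and report permission problems."""
    return socket_permission_findings(os.stat(path).st_mode)
```

An empty list from `audit_socket()` means none of these specific problems were found. A typical installation uses mode `0660` with the socket owned by root and the `docker` group, which passes this check.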

The Daemon in Modern Orchestration

While the Docker daemon is the foundation for running containers on a single host, modern containerized applications often run at scale across multiple machines. Here, orchestration tools like Docker Swarm and Kubernetes take over much of the high-level management, but they still rely on the Docker daemon (or another runtime that implements the Container Runtime Interface, such as containerd) running on each node to manage the actual containers.

- Docker Swarm: Docker Swarm is Docker’s native orchestration tool. The Swarm manager distributes tasks to worker nodes, and on each worker node, the Docker daemon is responsible for creating, running, and managing the containers as instructed by the manager.

- Kubernetes: Kubernetes, the de facto standard for container orchestration, also relies on a container runtime on each node. Older Kubernetes releases could use Docker as the runtime through a shim called `dockershim`, which was deprecated and then removed in Kubernetes 1.24 in favor of runtimes that implement the Container Runtime Interface directly, such as containerd. The underlying principle is the same: a daemon or runtime process on each node manages the containers.

In these orchestration scenarios, the daemon or runtime on each node is the interface through which the node agent (the kubelet in Kubernetes, or the Swarm agent built into Docker) starts and stops containers, ensuring that the desired state defined by the orchestrator is realized on each machine.
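The idea of turning desired state into running containers can be sketched as a simple reconciliation step: compare what the orchestrator wants on a node with what is running, and derive the start and stop actions needed to close the gap. The `reconcile` function below is a hypothetical illustration of that pattern and does not reflect how Swarm or the kubelet are actually implemented.

```python
def reconcile(desired, actual):
    """Compute the actions needed to move a node toward its desired state.

    Both arguments map a service name to the number of containers for that
    service on this node. The result is a list of
    (action, service, count) tuples, sorted by service name.
    """
    actions = []
    for name in sorted(set(desired) | set(actual)):
        want = desired.get(name, 0)
        have = actual.get(name, 0)
        if want > have:
            actions.append(("start", name, want - have))
        elif have > want:
            actions.append(("stop", name, have - want))
    return actions
```

In a real system each resulting action would be carried out by the local daemon or runtime, and the comparison would be repeated continuously so that crashed containers are replaced.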

Conclusion

The Docker daemon is the unsung hero of the containerization revolution. It is the complex, powerful, and indispensable engine that enables developers and operators to build, ship, and run applications with unprecedented efficiency and portability. From managing the intricate lifecycle of containers and images to orchestrating complex networking and storage solutions, the daemon is at the heart of every Docker operation. Understanding its architecture, responsibilities, and security considerations is crucial for anyone looking to harness the full potential of container technology. As the container ecosystem continues to evolve, the fundamental role of a robust and reliable container daemon remains constant, ensuring that the promise of portable and scalable applications is realized.