Computer technology is the bedrock upon which the modern world is built. It encompasses the design, development, implementation, and maintenance of computer systems and their applications. This sprawling field touches virtually every aspect of our lives, from the smartphones in our pockets to the complex networks that power global commerce and communication. At its core, computer technology is about information: how it’s processed, stored, transmitted, and utilized to solve problems, automate tasks, and create new possibilities.

The evolution of computer technology is a story of relentless innovation, marked by exponential growth in processing power, storage capacity, and connectivity. From the rudimentary mechanical calculators of centuries past to the sophisticated artificial intelligence systems of today, each leap forward has been driven by a deeper understanding of computation and a desire to harness its potential. This field is not a monolithic entity but rather a constellation of interconnected disciplines, each contributing to the broader ecosystem of digital advancement.

The Foundational Pillars of Computer Technology

At the heart of computer technology lie several interconnected domains, each crucial to the functioning and advancement of computational systems. Understanding these foundational pillars is key to grasping the breadth and depth of what computer technology entails.

Hardware: The Physical Manifestation

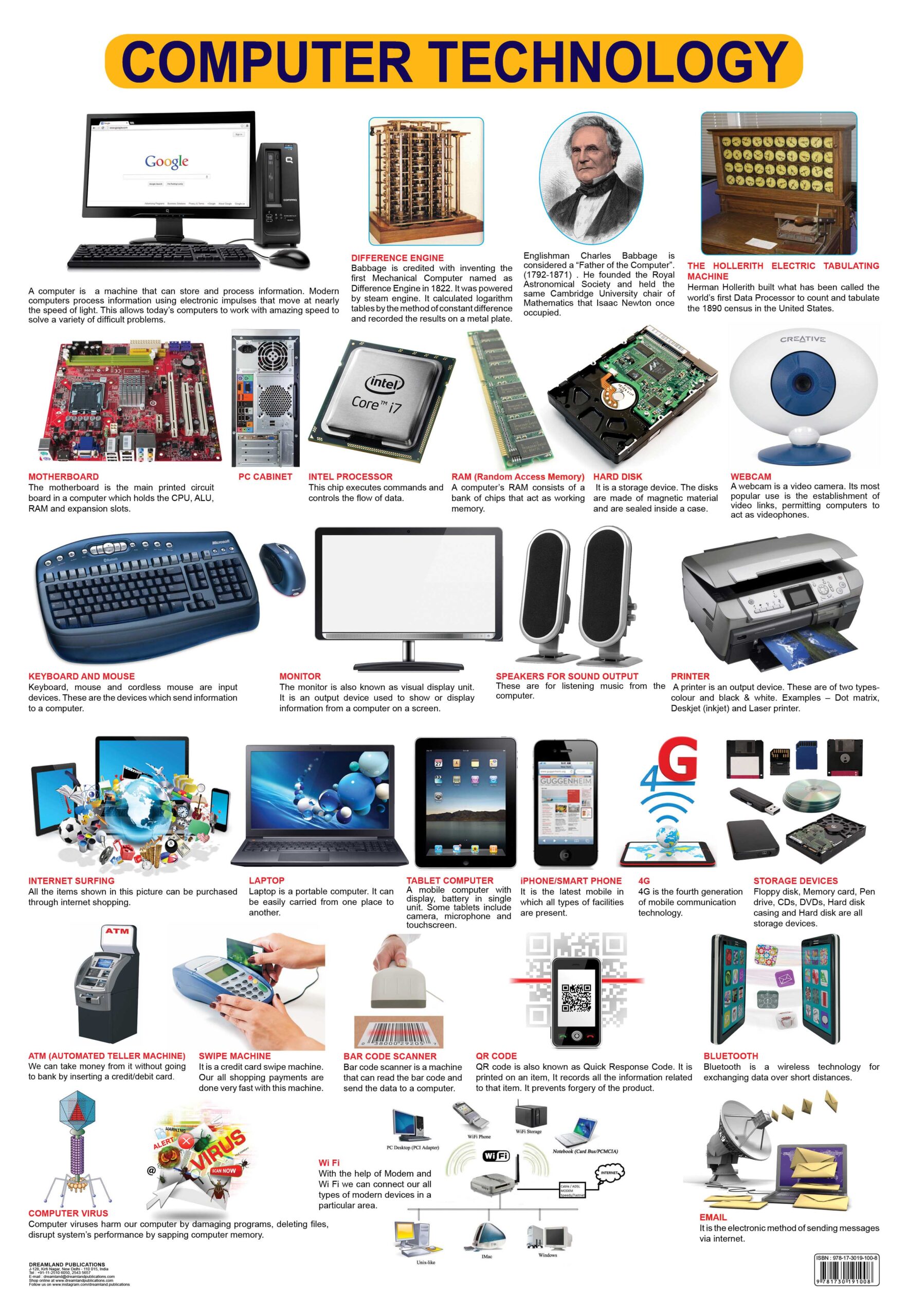

Computer hardware refers to the tangible, physical components that make up a computer system. These range from individual transistors on a chip to the racks of processors that form a supercomputer.

Central Processing Unit (CPU)

The CPU is often called the “brain” of the computer because it performs most of the processing. It repeatedly fetches an instruction from memory, decodes it to determine the operation required, and executes it. This loop is known as the fetch-decode-execute cycle. The speed and efficiency of a CPU are critical determinants of a computer’s overall performance. Advances in CPU technology have dramatically increased computing power. Examples include multi-core processors, which execute several instruction streams in parallel, and improved instruction sets.
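The fetch, decode, and execute steps described above can be made concrete with a toy CPU that has a single register. The instruction set (`LOAD`, `ADD`, `SUB`, `JNZ`, `HALT`) and the `run` function are hypothetical and invented for illustration. Real processors decode binary machine code and have far richer instruction sets.

```python
def run(program: list[tuple], max_steps: int = 1000) -> int:
    """Execute a program on a hypothetical one-register toy CPU; return the accumulator."""
    acc = 0  # accumulator register
    pc = 0   # program counter: index of the next instruction
    for _ in range(max_steps):
        # Fetch: read the instruction at the program counter, then advance it.
        instruction = program[pc]
        pc += 1
        # Decode: split the instruction into an opcode and its operands.
        opcode, *operands = instruction
        # Execute: carry out the operation.
        if opcode == "LOAD":
            acc = operands[0]
        elif opcode == "ADD":
            acc += operands[0]
        elif opcode == "SUB":
            acc -= operands[0]
        elif opcode == "JNZ":  # jump to an address if the accumulator is not zero
            if acc != 0:
                pc = operands[0]
        elif opcode == "HALT":
            return acc
        else:
            raise ValueError(f"unknown opcode: {opcode}")
    raise RuntimeError("step limit exceeded")
```

Consider `run([("LOAD", 3), ("SUB", 1), ("JNZ", 1), ("HALT",)])`. It loops back to the `SUB` instruction until the accumulator reaches zero, then halts and returns 0. The `JNZ` jump shows how changing the program counter lets a CPU implement loops.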

Memory and Storage

Memory, specifically Random Access Memory (RAM), is where the computer temporarily holds the data and program instructions the CPU is actively using. RAM is volatile: its contents are lost when the power is turned off. Storage, on the other hand, refers to non-volatile devices such as hard disk drives (HDDs) and solid-state drives (SSDs), which retain data even when the computer is off. Ongoing advances in both memory and storage, particularly the shift toward faster and higher-capacity SSDs, have greatly improved system responsiveness and data-handling capabilities.

Input/Output (I/O) Devices

These are the devices that allow interaction between the user and the computer, or between the computer and the outside world. Input devices include keyboards, mice, touchscreens, and microphones, which allow users to provide data and commands. Output devices, such as monitors, printers, and speakers, present information to the user. Network interface cards (NICs) and modems facilitate communication with other computers and networks.

Motherboard and Peripherals

The motherboard is the main circuit board that connects all the other hardware components. It houses the CPU, RAM slots, expansion slots for graphics cards and other add-in cards, and connectors for storage devices and peripherals. Peripherals can range from specialized scientific instruments to everyday devices like webcams and external hard drives, all designed to extend the functionality of the core computing system.

Software: The Intangible Intelligence

Software, in contrast to hardware, comprises the set of instructions, data, or programs used to operate computers and execute specific tasks. It is the intangible element that brings hardware to life.

Operating Systems (OS)

The operating system is arguably the most critical piece of software. It acts as an intermediary between users, applications, and the hardware. It manages the computer’s resources, including the CPU, memory, storage, and peripheral devices, and provides a platform on which other applications run. Examples include Windows, macOS, Linux, iOS, and Android. Modern operating systems are complex multitasking environments. They let many programs share the processor by scheduling them across the available cores and switching between them rapidly, which enables seamless user interaction and robust system management.
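Round-robin scheduling is one classic way an operating system can share a CPU among programs. Each process runs for a fixed time slice (the quantum) and then moves to the back of the queue. The sketch below simulates that policy. The process names and burst times are hypothetical. Real schedulers also account for priorities, I/O waits, and multiple cores, and a given OS may use a different policy entirely.

```python
from collections import deque


def round_robin(burst_times: dict[str, int], quantum: int) -> list[tuple[str, int]]:
    """Simulate round-robin CPU scheduling.

    burst_times maps a process name to the CPU time it still needs. Each process
    runs for at most `quantum` units before rejoining the back of the queue.
    Returns the timeline as (process, units_run) slices in execution order.
    """
    if quantum <= 0:
        raise ValueError("quantum must be positive")
    queue = deque(burst_times.items())
    timeline = []
    while queue:
        name, remaining = queue.popleft()
        ran = min(quantum, remaining)
        timeline.append((name, ran))
        remaining -= ran
        if remaining > 0:
            queue.append((name, remaining))  # preempted: wait for another turn
    return timeline
```

Calling `round_robin({"editor": 3, "browser": 5, "music": 2}, quantum=2)` yields `[("editor", 2), ("browser", 2), ("music", 2), ("editor", 1), ("browser", 2), ("browser", 1)]`. No single process monopolizes the CPU, which is what keeps a multitasking system responsive.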

Applications

Applications, or “apps,” are software programs designed to perform specific tasks for the user. These can range from word processors and spreadsheets to web browsers, games, and specialized scientific software. The diversity and sophistication of application software are a testament to the versatility of computer technology.

Programming Languages and Development Tools

These are the languages and tools developers use to create software. Programming languages, such as Python, Java, C++, and JavaScript, provide a structured, human-readable way to write instructions. A compiler or interpreter then translates those instructions into a form the computer can execute. Development tools, including integrated development environments (IDEs), compilers, and debuggers, support developers across the entire software lifecycle, from coding to testing and deployment.

Interconnecting Systems: Networks and the Internet

Computer technology is not confined to isolated machines; it thrives on connectivity. The ability to share information and resources across vast distances is a defining characteristic of modern computing.

Computer Networks

A computer network is a collection of interconnected computers and devices that can communicate with each other. Networks vary in scale, from small Local Area Networks (LANs) within an office to large Wide Area Networks (WANs) spanning continents. Key components include switches, which connect devices within a network; routers, which forward data between networks; and network protocols, which define the rules and message formats that govern data transmission.
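Among other things, network protocols specify how a receiver can tell where one message ends and the next begins. The following sketch shows one common approach, length-prefixed framing. It assumes a hypothetical protocol in which each UTF-8 message is preceded by a 4-byte big-endian length. The format and the function names are assumptions for illustration, not any specific real protocol.

```python
import struct

# Hypothetical framing rule: a 4-byte unsigned length in network (big-endian)
# byte order, followed by that many bytes of UTF-8 payload.
HEADER = struct.Struct("!I")


def encode(message: str) -> bytes:
    """Frame one message as header + payload."""
    payload = message.encode("utf-8")
    return HEADER.pack(len(payload)) + payload


def decode_stream(data: bytes) -> tuple[list[str], bytes]:
    """Split a byte stream into complete messages.

    Returns (messages, remainder), where remainder holds any trailing bytes
    that do not yet form a complete frame.
    """
    messages = []
    offset = 0
    while offset + HEADER.size <= len(data):
        (length,) = HEADER.unpack_from(data, offset)
        start = offset + HEADER.size
        end = start + length
        if end > len(data):
            break  # frame incomplete: wait for more bytes
        messages.append(data[start:end].decode("utf-8"))
        offset = end
    return messages, data[offset:]
```

Data may arrive over a network in pieces. For that reason, `decode_stream` returns any incomplete trailing bytes so the caller can prepend them to the next chunk it receives.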

The Internet and the World Wide Web

The Internet is the global network of interconnected computer networks, linked by a shared suite of communication protocols (TCP/IP). It provides the infrastructure that enables global communication and data sharing. The World Wide Web (WWW) is one service that runs on the Internet. It is a system of interlinked hypertext documents and other resources, which web browsers retrieve using the HTTP protocol. The Web has revolutionized access to information, commerce, and social interaction.

Cloud Computing

Cloud computing represents a paradigm shift in how computing resources are accessed and used. Instead of owning and managing physical servers and data centers, users can access computing services over the Internet (“the cloud”) from a cloud provider. These services include servers, storage, databases, networking, software, analytics, and intelligence. Capacity can be added or released on demand and is typically billed according to use. As a result, the cloud offers scalability, flexibility, and potential cost savings.

Advanced Frontiers and Emerging Trends

The field of computer technology is in a constant state of evolution, with ongoing research and development pushing the boundaries of what’s possible. Several advanced frontiers are reshaping the landscape.

Artificial Intelligence (AI) and Machine Learning (ML)

AI refers to machines that perform tasks normally associated with human intelligence, such as reasoning, perceiving their surroundings, and understanding language. Machine Learning is a subset of AI in which systems learn patterns from data rather than following explicitly programmed rules. AI and ML are driving advancements in areas like natural language processing, computer vision, autonomous systems, and personalized recommendations, transforming industries and everyday experiences.
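The idea of learning from data can be illustrated with the simplest possible model. This sketch fits a straight line y ≈ w*x + b by gradient descent on mean squared error. Nobody tells the program the slope and intercept. Instead, it adjusts them step by step to reduce its prediction error. The data, learning rate, and number of epochs are illustrative assumptions. Practical machine learning uses far larger models, datasets, and specialized libraries.

```python
def fit_line(xs: list[float], ys: list[float],
             learning_rate: float = 0.01, epochs: int = 5000) -> tuple[float, float]:
    """Learn slope w and intercept b for y ≈ w*x + b by gradient descent on MSE."""
    if not xs or len(xs) != len(ys):
        raise ValueError("need two non-empty sequences of equal length")
    w, b = 0.0, 0.0  # start with an uninformed guess
    n = len(xs)
    for _ in range(epochs):
        # Partial derivatives of MSE = (1/n) * sum((w*x + b - y)^2)
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        # Step against the gradient to reduce the error
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b
```

Suppose the points are generated by y = 2x + 1, as in `fit_line([0, 1, 2, 3, 4], [1, 3, 5, 7, 9])`. The learned parameters then converge to approximately w = 2 and b = 1.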

Data Science and Big Data

The explosion of digital data has led to the rise of data science, an interdisciplinary field that uses scientific methods, processes, algorithms, and systems to extract knowledge and insights from data. Big Data refers to extremely large data sets that may be analyzed computationally to reveal patterns, trends, and associations. This field is crucial for informed decision-making, scientific discovery, and business strategy.
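The Pearson correlation coefficient is a small example of revealing an association in data. It measures how strongly two variables move together linearly, on a scale from -1 to +1. The sample values in the usage note below are made up for illustration. Real data-science work applies such measures, often through specialized libraries, to far larger datasets.

```python
import math


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation: +1 perfect positive, -1 perfect negative, near 0 no linear association."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Covariance and variances (the common 1/n factors cancel in the ratio)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when a variable is constant")
    return cov / math.sqrt(var_x * var_y)
```

For the hypothetical pairs `pearson([1, 2, 3, 4, 5], [52, 55, 61, 64, 70])`, the result is about 0.99, which indicates a strong positive association. Correlation alone does not establish causation.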

Cybersecurity

As our reliance on computer technology deepens, so does the need for robust cybersecurity. This domain focuses on protecting computer systems, networks, and data from theft, damage, or unauthorized access. It involves a range of technologies, processes, and practices designed to prevent, detect, and respond to cyber threats.
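One concrete prevention practice is to store passwords as salted, deliberately slow hashes rather than in plain text. That way a stolen database does not directly reveal users' passwords. This sketch uses the Python standard library's `hashlib.pbkdf2_hmac` and `hmac.compare_digest`. The function names and the iteration count are illustrative assumptions. The work factor should be set according to current security guidance.

```python
import hashlib
import hmac
import os

ITERATIONS = 200_000  # illustrative work factor; tune to current guidance


def hash_password(password: str) -> tuple[bytes, bytes]:
    """Return (salt, digest) for storage; the plain-text password is never stored."""
    salt = os.urandom(16)  # random per-password salt defeats precomputed lookup tables
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    """Recompute the hash for a login attempt and compare it in constant time."""
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return hmac.compare_digest(digest, expected)
```

The system stores only `salt` and `digest`. At login it recomputes the hash from the submitted password, and the constant-time comparison avoids leaking information through timing differences.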

Quantum Computing

Quantum computing is an emerging field that harnesses the principles of quantum mechanics to perform calculations. Classical computers use bits, each of which is either 0 or 1. Quantum computers use qubits, which can exist in a superposition of 0 and 1. A qubit's state is described by two amplitudes, and their squared magnitudes give the probabilities of measuring 0 or 1. Superposition, combined with entanglement and interference, offers the potential to solve certain complex problems that are intractable for even the most powerful classical supercomputers. This has implications for drug discovery, materials science, and cryptography.
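A single qubit can be simulated on a classical computer to show what superposition means. In this sketch, the state is a pair of complex amplitudes for the outcomes 0 and 1. The Hadamard gate turns the definite state 0 into an equal superposition, so a measurement yields 0 or 1 with probability 0.5 each. The function and variable names are illustrative. Simulating n qubits this way requires 2^n amplitudes, which is one reason large quantum systems are hard to simulate classically.

```python
import math
import random

Amplitudes = tuple[complex, complex]  # (amplitude of outcome 0, amplitude of outcome 1)

ZERO: Amplitudes = (1 + 0j, 0j)  # the definite state 0


def hadamard(state: Amplitudes) -> Amplitudes:
    """Apply the Hadamard gate: 0 -> (0 + 1)/sqrt(2), 1 -> (0 - 1)/sqrt(2)."""
    alpha, beta = state
    s = 1 / math.sqrt(2)
    return (s * (alpha + beta), s * (alpha - beta))


def probabilities(state: Amplitudes) -> tuple[float, float]:
    """Each outcome's probability is the squared magnitude of its amplitude."""
    alpha, beta = state
    return abs(alpha) ** 2, abs(beta) ** 2


def measure(state: Amplitudes, rng: random.Random) -> int:
    """Sample a measurement outcome, 0 or 1, according to the probabilities."""
    p0, _ = probabilities(state)
    return 0 if rng.random() < p0 else 1
```

Applying `hadamard` twice returns the qubit to the state 0, up to floating-point rounding. This is a simple case of interference: the two contributions to outcome 1 cancel out.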

In conclusion, computer technology is a dynamic and multifaceted field that continues to drive innovation and shape the future. Its fundamental principles of information processing, coupled with rapid advancements in hardware, software, networking, and artificial intelligence, ensure its continued relevance and transformative impact on society.