A web archive is a digital repository that preserves snapshots of websites as they existed at specific points in time. Much like a historical archive preserves physical documents and artifacts, a web archive aims to capture the dynamic and ever-changing landscape of the World Wide Web. This preservation is crucial because websites are not static entities; they are frequently updated, redesigned, or, sadly, disappear altogether. Without web archives, vast swathes of digital information and cultural heritage would be lost to the ephemeral nature of the internet.

The concept of web archiving emerged as the internet began to mature and its significance as a platform for communication, information dissemination, and cultural expression became evident. Early efforts were often decentralized and driven by individual enthusiasts or academic institutions. However, as the volume of web content exploded, the need for more systematic and comprehensive archiving solutions became apparent. This led to the development of sophisticated tools and organizations dedicated to capturing and preserving the digital record.

The primary goal of a web archive is to provide access to past versions of web pages, enabling researchers, historians, journalists, and the general public to study the evolution of online content, track the development of ideas, and recover information that is no longer available through standard search engines. This access can be invaluable for understanding historical events, monitoring public discourse, analyzing trends in technology and society, and even for legal or academic verification.

The Mechanics of Web Archiving

The process of web archiving involves several key stages, from identifying content to be archived to ensuring its long-term accessibility. At its core, web archiving relies on automated programs called “crawlers” or “spiders.” These bots systematically browse the web, following links from one page to another, much like a human user would, but at a much greater scale and speed.

Crawling and Capturing Content

When a crawler visits a webpage, it downloads the HTML source code, along with any associated assets such as images, stylesheets (CSS), JavaScript files, and other embedded media. The crawler meticulously records the Uniform Resource Locator (URL) of the page and the date and time of the capture. This metadata is essential for contextualizing the archived content and understanding its origin.

The challenge in crawling is not just in downloading the content but in capturing the entirety of a website’s presence at a given moment. This includes handling dynamic content generated by JavaScript, interactive elements, and user-generated content. Advanced archiving systems employ sophisticated techniques to capture these complexities, often by simulating user interaction or using specialized rendering engines.

Data Storage and Organization

Once content is captured, it needs to be stored in a way that is both efficient and allows for future retrieval. Web archives typically store captured data in specialized formats. One of the most widely adopted formats is the “Web ARChive” (WARC) file format, which is an extension of the older ARC format. WARC files are designed to store crawl data in a standardized, append-only manner, facilitating efficient storage and retrieval of web resources.

Within these WARC files, each captured resource (HTML page, image, script, etc.) is stored along with its HTTP headers, the capture date, and the originating URL. This detailed metadata is crucial for reconstructing the webpage as it appeared and functioned. The sheer volume of data generated by web archiving means that robust, scalable storage solutions are essential. This often involves distributed storage systems and advanced data management techniques.

Access and Retrieval

Making archived content accessible to users is the ultimate goal. Web archives typically provide search interfaces that allow users to query for specific websites, keywords, or date ranges. When a user requests an archived page, the system retrieves the relevant files from its repository and reconstructs the page.

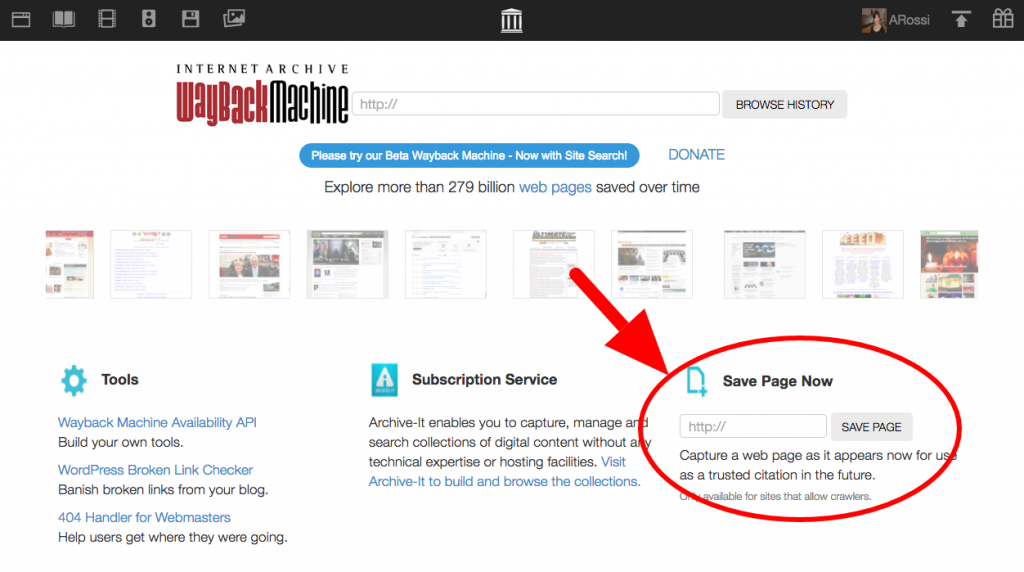

This reconstruction process can be complex. For example, a webpage might rely on stylesheets and JavaScript files that were also captured. The archive needs to link these resources correctly to render the page as accurately as possible. Tools like the Wayback Machine, one of the most well-known web archives, have developed sophisticated methods for presenting archived content in a user-friendly manner, allowing users to navigate through different historical versions of a site.

Types of Web Archives and Their Purpose

Web archives vary in their scope, focus, and operational models. Some are global in their ambition, while others concentrate on specific regions, institutions, or types of content. Understanding these distinctions helps in appreciating the diverse efforts to preserve the digital record.

National and Institutional Archives

Many national libraries and cultural heritage institutions around the world are engaged in web archiving as part of their mandate to preserve national memory and cultural output. These institutions often focus on archiving websites deemed culturally or historically significant, such as government websites, news outlets, academic publications, and digital art. Examples include the U.S. Library of Congress, the British Library, and the National Library of Australia.

These archives often have a long-term perspective, aiming to preserve digital content for future generations. They invest in robust infrastructure, develop preservation policies, and work to ensure the legal and technical frameworks are in place for sustained digital preservation.

Thematic and Research Archives

Beyond national efforts, numerous thematic and research archives exist, focusing on specific areas of interest. These might include archives dedicated to preserving the history of scientific research, the evolution of online communities, or the development of specific software or technologies.

For example, archives might focus on preserving early internet forums, the digital outputs of a particular scientific discipline, or the websites of social movements. These archives are often driven by the needs of specific research communities and provide valuable, curated datasets for scholarly inquiry.

Personal and Community Archives

On a smaller scale, individuals and communities also engage in web archiving. This can range from saving important personal websites or online correspondence to archiving the digital presence of local organizations or events. While not always as formalized as institutional archives, these efforts contribute to the broader goal of preserving digital history.

The Role of Organizations

Prominent organizations play a critical role in advancing web archiving. The Internet Archive, a non-profit digital library, is perhaps the most well-known, operating the Wayback Machine. The International Internet Preservation Consortium (IIPC) is another key player, bringing together institutions globally to develop standards, tools, and best practices for web archiving. These organizations collaborate to address the technical, legal, and ethical challenges of preserving the web at scale.

Challenges and Future of Web Archiving

Despite significant progress, web archiving faces ongoing challenges. The sheer volume and complexity of web content, coupled with rapid technological change, present continuous hurdles.

Technical Challenges

The dynamic nature of the web poses a significant technical challenge. Websites increasingly rely on complex JavaScript, single-page applications, and personalized content, making it difficult to capture a stable and representative snapshot. Furthermore, the rise of ephemeral content, such as social media posts or live-streaming events, presents unique preservation problems. Ensuring that archived content is not only captured but also rendered accurately and accessibly in the future requires constant innovation in crawling, rendering, and storage technologies.

Legal and Ethical Considerations

Copyright is a major legal hurdle in web archiving. While many believe that archiving content for preservation and research purposes falls under fair use or similar exceptions, the legal landscape is complex and varies by jurisdiction. Obtaining explicit permission from every copyright holder for every piece of content is practically impossible. This often leads to a tension between the desire to preserve and the need to comply with copyright law.

Ethical considerations also arise, particularly concerning privacy. Archived personal data, sensitive information, or content that was not intended for permanent public display can raise privacy concerns. Archiving organizations must develop policies and technical measures to mitigate these risks, such as anonymizing or redacting sensitive information.

Funding and Sustainability

Web archiving is a resource-intensive endeavor. It requires significant investment in infrastructure, personnel, and ongoing maintenance. Securing consistent funding and ensuring the long-term sustainability of archiving projects, especially for non-profit organizations, remains a persistent challenge. The exponential growth of the web means that archiving efforts must continually scale to keep pace.

The Future of Web Archiving

The future of web archiving likely involves greater collaboration, technological innovation, and a deeper understanding of the value of preserving our digital heritage. We may see advancements in artificial intelligence and machine learning being used to identify and prioritize content for archiving, as well as to improve the accuracy of content reconstruction. New formats and standards may emerge to better handle the complexities of modern web applications.

Furthermore, as the internet becomes increasingly central to human activity and culture, the importance of web archives will only grow. They are not just digital libraries; they are essential tools for understanding our present and our past, offering a crucial window into the ever-evolving digital world. The ongoing efforts to build and maintain robust web archives are vital for ensuring that future generations can access and learn from the vast tapestry of information that exists online today.