The concept of a cofactor is fundamental in linear algebra, playing a crucial role in determinant calculations, inverse matrix computation, and solving systems of linear equations. While seemingly abstract, understanding cofactors unlocks deeper insights into the structure and properties of matrices, which have direct and impactful applications in sophisticated drone navigation and control systems.

Understanding the Building Blocks: Minors and Cofactors

Before delving into the cofactor itself, it’s essential to grasp the concept of a minor. The minor of an element $a_{ij}$ in a matrix $A$, denoted as $M_{ij}$, is the determinant of the submatrix formed by deleting the $i$-th row and the $j$-th column of $A$. This process of systematically reducing the matrix size by removing specific rows and columns is a key step in various matrix operations.

Consider a general $n \times n$ matrix $A$:

$$
A = \begin{pmatrix}
a_{11} & a_{12} & \cdots & a_{1n} \\
a_{21} & a_{22} & \cdots & a_{2n} \\
\vdots & \vdots & \ddots & \vdots \\
a_{n1} & a_{n2} & \cdots & a_{nn}
\end{pmatrix}
$$

To find the minor $M_{11}$, we would remove the first row and the first column, leaving an $(n-1) \times (n-1)$ submatrix. The determinant of this smaller matrix is $M_{11}$. This process can be repeated for any element $a_{ij}$ in the original matrix to find its corresponding minor $M_{ij}$.

Calculating Minors

The calculation of a minor is a recursive process. For a $2 \times 2$ matrix:

$$
A = \begin{pmatrix}
a_{11} & a_{12} \\
a_{21} & a_{22}
\end{pmatrix}
$$

The minor $M_{11}$ is simply the determinant of the submatrix formed by removing the first row and first column, which is the scalar $a_{22}$. Similarly, $M_{12} = a_{21}$, $M_{21} = a_{12}$, and $M_{22} = a_{11}$.

For a $3 \times 3$ matrix:

$$
A = \begin{pmatrix}
a_{11} & a_{12} & a_{13} \\
a_{21} & a_{22} & a_{23} \\
a_{31} & a_{32} & a_{33}
\end{pmatrix}
$$

The minor $M_{11}$ is the determinant of the $2 \times 2$ submatrix:

$$
\begin{pmatrix}
a_{22} & a_{23} \\
a_{32} & a_{33}
\end{pmatrix}
$$

So, $M_{11} = a_{22}a_{33} - a_{23}a_{32}$. This process continues for all elements, and the minors themselves will involve determinants of smaller matrices.
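The deletion step described above can be sketched in a few lines of Python. This is a minimal illustration for the $3 \times 3$ case only; the helper names `submatrix`, `det2`, and `minor_3x3` and the numeric matrix are assumptions made for the example, not part of any library.

```python
def submatrix(A, i, j):
    """Return a copy of A with row i and column j removed (0-based indices)."""
    return [row[:j] + row[j + 1:] for k, row in enumerate(A) if k != i]


def det2(B):
    """Determinant of a 2x2 matrix given as a list of two rows."""
    return B[0][0] * B[1][1] - B[0][1] * B[1][0]


def minor_3x3(A, i, j):
    """Minor M_ij of a 3x3 matrix, using the text's 1-based indices."""
    return det2(submatrix(A, i - 1, j - 1))


# Illustrative matrix (an arbitrary choice for this sketch).
A = [[2, 1, 3],
     [0, 4, 1],
     [-1, 2, 5]]

print(submatrix(A, 0, 0))  # [[4, 1], [2, 5]]
print(minor_3x3(A, 1, 1))  # 4*5 - 1*2 = 18
```

The matrix is stored as a list of row lists, so deleting a row is a filter and deleting a column is a slice; the original matrix is left unchanged.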

The Significance of the Minor

The minor $M_{ij}$ measures, up to a sign, how strongly the element $a_{ij}$ influences the overall determinant of the matrix: with all other entries held fixed, the determinant changes linearly in $a_{ij}$ at a rate of $\pm M_{ij}$, where the sign is the positional factor supplied by the cofactor. Concretely, $M_{ij}$ is the determinant of the matrix that remains when the row and column containing $a_{ij}$ are removed. This concept is crucial for understanding how changes in individual elements propagate through the matrix’s determinant.

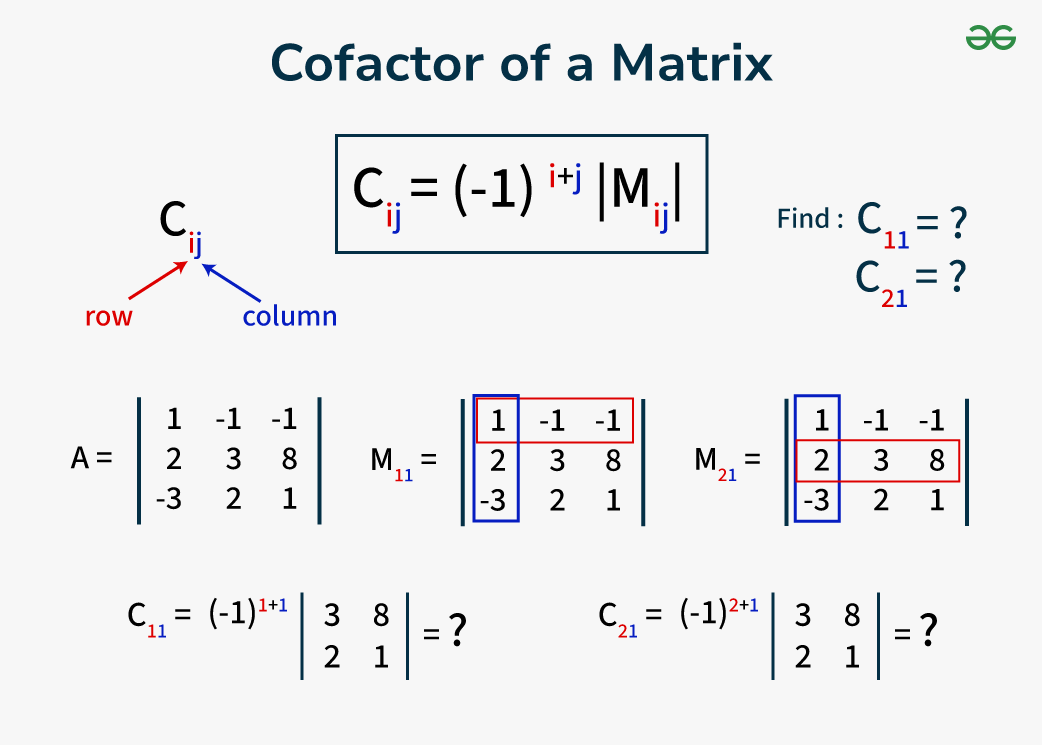

Defining the Cofactor

The cofactor of an element $a_{ij}$ in a matrix $A$, denoted as $C_{ij}$, is closely related to its minor $M_{ij}$ but includes a sign adjustment based on the position of the element. The formula for the cofactor is:

$$
C_{ij} = (-1)^{i+j} M_{ij}
$$

where $i$ is the row index and $j$ is the column index of the element $a_{ij}$. The term $(-1)^{i+j}$ acts as a sign toggle. If the sum of the row and column indices ($i+j$) is even, the cofactor is equal to the minor ($C_{ij} = M_{ij}$). If the sum ($i+j$) is odd, the cofactor is the negative of the minor ($C_{ij} = -M_{ij}$).

The Checkerboard Pattern of Signs

This sign adjustment can be visualized as a checkerboard pattern applied to the matrix, where the signs alternate:

$$
\begin{pmatrix}
+ & - & + & \cdots \\
- & + & - & \cdots \\
+ & - & + & \cdots \\
\vdots & \vdots & \vdots & \ddots
\end{pmatrix}
$$

For an element in the first row and first column ($i=1, j=1$), $i+j = 2$ (even), so $C_{11} = +M_{11}$. For an element in the first row and second column ($i=1, j=2$), $i+j = 3$ (odd), so $C_{12} = -M_{12}$. This pattern continues for all elements.

Calculating Cofactors

Let’s revisit the $3 \times 3$ matrix example to calculate its cofactors.

$$
A = \begin{pmatrix}
a_{11} & a_{12} & a_{13} \\
a_{21} & a_{22} & a_{23} \\
a_{31} & a_{32} & a_{33}
\end{pmatrix}
$$

We’ve already found $M_{11} = a_{22}a_{33} - a_{23}a_{32}$. Since $i=1, j=1$, $i+j=2$ (even), the cofactor is:

$C_{11} = (-1)^{1+1} M_{11} = +M_{11} = a_{22}a_{33} - a_{23}a_{32}$

Now let’s find $C_{12}$. First, the minor $M_{12}$ is the determinant of the submatrix formed by removing the first row and second column:

$$
\begin{pmatrix}
a_{21} & a_{23} \\
a_{31} & a_{33}
\end{pmatrix}
$$

So, $M_{12} = a_{21}a_{33} - a_{23}a_{31}$. Since $i=1, j=2$, $i+j=3$ (odd), the cofactor is:

$C_{12} = (-1)^{1+2} M_{12} = -M_{12} = -(a_{21}a_{33} - a_{23}a_{31}) = a_{23}a_{31} - a_{21}a_{33}$

This process is repeated for all elements in the matrix. The resulting cofactors form a new matrix, known as the matrix of cofactors.
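Repeating the minor-and-sign calculation for every position can be sketched as follows. This is a minimal illustration restricted to $3 \times 3$ matrices; the function names and the numeric matrix are assumptions chosen for the example.

```python
def submatrix(A, i, j):
    """Return a copy of A with row i and column j removed (0-based indices)."""
    return [row[:j] + row[j + 1:] for k, row in enumerate(A) if k != i]


def det2(B):
    """Determinant of a 2x2 matrix given as a list of two rows."""
    return B[0][0] * B[1][1] - B[0][1] * B[1][0]


def cofactor_matrix_3x3(A):
    """Matrix of cofactors C_ij = (-1)^(i+j) * M_ij for a 3x3 matrix.

    The loop indices are 0-based; i + j has the same parity as with
    1-based indices, so the checkerboard of signs is unchanged.
    """
    return [[(-1) ** (i + j) * det2(submatrix(A, i, j)) for j in range(3)]
            for i in range(3)]


# Illustrative matrix (an arbitrary choice for this sketch).
A = [[2, 1, 3],
     [0, 4, 1],
     [-1, 2, 5]]

for row in cofactor_matrix_3x3(A):
    print(row)
# [18, -1, 4]
# [1, 13, -5]
# [-11, -2, 8]
```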

The Cofactor Expansion for Determinants

The primary application of cofactors is in the calculation of the determinant of a matrix. The determinant of an $n \times n$ matrix $A$, denoted as $\det(A)$ or $|A|$, can be computed by a cofactor expansion along any row or any column.

Expansion Along a Row

To expand along the $i$-th row, the determinant is given by:

$$
\det(A) = a_{i1}C_{i1} + a_{i2}C_{i2} + \cdots + a_{in}C_{in} = \sum_{j=1}^{n} a_{ij}C_{ij}
$$

This formula states that the determinant is the sum of the products of each element in a chosen row (or column) and its corresponding cofactor.

Expansion Along a Column

Similarly, to expand along the $j$-th column, the determinant is given by:

$$
\det(A) = a_{1j}C_{1j} + a_{2j}C_{2j} + \cdots + a_{nj}C_{nj} = \sum_{i=1}^{n} a_{ij}C_{ij}
$$

The choice of row or column for expansion can often simplify the calculation, especially if the chosen row or column contains many zero elements. For instance, if $a_{ik} = 0$, then the term $a_{ik}C_{ik}$ becomes zero, effectively removing that term from the summation.

Example: Determinant of a $3 \times 3$ Matrix

Let’s calculate the determinant of the following matrix using cofactor expansion along the first row:

$$
A = \begin{pmatrix}
2 & 1 & 3 \\
0 & 4 & 1 \\
-1 & 2 & 5
\end{pmatrix}
$$

We need the cofactors $C_{11}$, $C_{12}$, and $C_{13}$.

$M_{11} = \det \begin{pmatrix} 4 & 1 \\ 2 & 5 \end{pmatrix} = (4)(5) - (1)(2) = 20 - 2 = 18$.

$C_{11} = (-1)^{1+1} M_{11} = +18$.

$M_{12} = \det \begin{pmatrix} 0 & 1 \\ -1 & 5 \end{pmatrix} = (0)(5) - (1)(-1) = 0 - (-1) = 1$.

$C_{12} = (-1)^{1+2} M_{12} = -1$.

$M_{13} = \det \begin{pmatrix} 0 & 4 \\ -1 & 2 \end{pmatrix} = (0)(2) - (4)(-1) = 0 - (-4) = 4$.

$C_{13} = (-1)^{1+3} M_{13} = +4$.

Now, using the cofactor expansion along the first row:

$\det(A) = a_{11}C_{11} + a_{12}C_{12} + a_{13}C_{13}$

$\det(A) = (2)(18) + (1)(-1) + (3)(4)$

$\det(A) = 36 - 1 + 12$

$\det(A) = 47$

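This method can be applied to matrices of any size, but the recursion generates on the order of $n!$ terms, so for larger matrices it quickly becomes computationally impractical. Elimination-based methods such as LU decomposition, which need on the order of $n^3$ operations, are preferred there.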
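The claim that any row or any column gives the same determinant can be checked with a short recursive sketch. The function names below are assumptions made for illustration; the matrix is the one from the worked example.

```python
def submatrix(A, i, j):
    """Return a copy of A with row i and column j removed (0-based indices)."""
    return [row[:j] + row[j + 1:] for k, row in enumerate(A) if k != i]


def det(A):
    """Determinant by recursive cofactor expansion along the first row."""
    n = len(A)
    if n == 0:
        return 1  # determinant of the empty matrix, used as the base case
    total = 0
    for j in range(n):
        if A[0][j] == 0:
            continue  # a zero entry contributes nothing, so skip its minor
        total += (-1) ** j * A[0][j] * det(submatrix(A, 0, j))
    return total


def expand_along_row(A, i):
    """Sum of a_ij * C_ij over the entries of row i (0-based)."""
    return sum((-1) ** (i + j) * A[i][j] * det(submatrix(A, i, j))
               for j in range(len(A)))


def expand_along_column(A, j):
    """Sum of a_ij * C_ij over the entries of column j (0-based)."""
    return sum((-1) ** (i + j) * A[i][j] * det(submatrix(A, i, j))
               for i in range(len(A)))


A = [[2, 1, 3],
     [0, 4, 1],
     [-1, 2, 5]]

print([expand_along_row(A, i) for i in range(3)])     # [47, 47, 47]
print([expand_along_column(A, j) for j in range(3)])  # [47, 47, 47]
```

Skipping zero entries is the programmatic version of choosing a row or column with many zeros: each skipped entry saves the evaluation of an entire smaller determinant.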

The Adjugate Matrix and Inverse

Cofactors are also indispensable in finding the inverse of a matrix. The adjugate (or classical adjoint) of a matrix $A$, denoted as $\text{adj}(A)$, is the transpose of the matrix of cofactors.

Constructing the Matrix of Cofactors

First, calculate all the cofactors $C_{ij}$ for the matrix $A$. This forms the matrix of cofactors:

$$
C = \begin{pmatrix}
C_{11} & C_{12} & \cdots & C_{1n} \\
C_{21} & C_{22} & \cdots & C_{2n} \\
\vdots & \vdots & \ddots & \vdots \\
C_{n1} & C_{n2} & \cdots & C_{nn}
\end{pmatrix}
$$

Transposing to Get the Adjugate

The adjugate matrix is then obtained by transposing the matrix of cofactors:

$$
\text{adj}(A) = C^T = \begin{pmatrix}
C_{11} & C_{21} & \cdots & C_{n1} \\
C_{12} & C_{22} & \cdots & C_{n2} \\
\vdots & \vdots & \ddots & \vdots \\
C_{1n} & C_{2n} & \cdots & C_{nn}
\end{pmatrix}
$$

Calculating the Inverse

A fundamental theorem in linear algebra states that for an invertible matrix $A$ (i.e., $\det(A) \neq 0$), its inverse $A^{-1}$ can be calculated using the adjugate matrix:

$$
A^{-1} = \frac{1}{\det(A)} \text{adj}(A)
$$

This formula highlights the critical role of both the determinant and the cofactors in matrix inversion.

Example: Inverse of a $2 \times 2$ Matrix

For a $2 \times 2$ matrix $A = \begin{pmatrix} a & b \\ c & d \end{pmatrix}$, the determinant is $\det(A) = ad - bc$.

The matrix of cofactors is $C = \begin{pmatrix} d & -c \\ -b & a \end{pmatrix}$.

The adjugate matrix is $\text{adj}(A) = C^T = \begin{pmatrix} d & -b \\ -c & a \end{pmatrix}$.

Therefore, the inverse is $A^{-1} = \frac{1}{ad-bc} \begin{pmatrix} d & -b \\ -c & a \end{pmatrix}$.

This simplified formula for $2 \times 2$ matrices is derived directly from the general cofactor method.
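The adjugate formula can be sketched for a general square matrix as follows. This is an illustrative implementation, not an efficient one: it assumes integer entries so that `fractions.Fraction` keeps the arithmetic exact, and the function names are chosen for this example only.

```python
from fractions import Fraction


def submatrix(A, i, j):
    """Return a copy of A with row i and column j removed (0-based indices)."""
    return [row[:j] + row[j + 1:] for k, row in enumerate(A) if k != i]


def det(A):
    """Determinant by recursive cofactor expansion along the first row."""
    if len(A) == 0:
        return 1  # empty matrix: base case of the recursion
    return sum((-1) ** j * A[0][j] * det(submatrix(A, 0, j))
               for j in range(len(A)))


def adjugate(A):
    """Transpose of the matrix of cofactors: entry (i, j) is C_ji."""
    n = len(A)
    return [[(-1) ** (i + j) * det(submatrix(A, j, i)) for j in range(n)]
            for i in range(n)]


def inverse(A):
    """A^{-1} = adj(A) / det(A); assumes integer entries (exact fractions)."""
    d = det(A)
    if d == 0:
        raise ValueError("matrix is singular; no inverse exists")
    return [[Fraction(c, d) for c in row] for row in adjugate(A)]


A = [[2, 1, 3],
     [0, 4, 1],
     [-1, 2, 5]]

print(adjugate(A))  # [[18, 1, -11], [-1, 13, -2], [4, -5, 8]]
for row in inverse(A):
    print([str(x) for x in row])
```

Note the swapped indices in `adjugate`: building entry $(i, j)$ from the cofactor $C_{ji}$ performs the transpose without constructing the matrix of cofactors first.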

Relevance to Drone Navigation and Control

The ability to invert matrices is paramount in advanced flight control systems for drones. When processing sensor data (like IMU readings, GPS coordinates, and vision data) to estimate the drone’s state (position, velocity, orientation), complex systems of linear equations are often encountered. Solving these systems efficiently and accurately relies on matrix operations, particularly inversion.

For instance, in state estimation algorithms like the Kalman Filter, the covariance matrices are manipulated. The Kalman Gain, a crucial component of the filter, is often calculated using matrix inversions. How reliably such a matrix can be inverted depends on its conditioning. If the determinant is exactly zero, the matrix is singular and its inverse does not exist, leading to a breakdown in the control loop. If the matrix is merely close to singular (ill-conditioned), the inverse exists, but because every entry of the inverse is a cofactor divided by the determinant, small errors in the sensor data can be greatly amplified. Since the determinant also changes when a matrix is simply rescaled, a small determinant alone is not a reliable test; the condition number is the standard measure. Understanding cofactors, therefore, provides a theoretical foundation for the stability and predictability of drone flight control systems.
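The effect of a shrinking determinant on the inverse can be illustrated with a small sketch. The $2 \times 2$ matrix below is a hypothetical stand-in for the kind of symmetric matrix a filter might need to invert; it is not taken from any real flight controller, and the function names are assumptions for this example.

```python
def inverse_2x2(S):
    """Inverse of a 2x2 matrix via the adjugate formula."""
    (a, b), (c, d) = S
    det = a * d - b * c
    if det == 0:
        raise ZeroDivisionError("matrix is singular; no inverse exists")
    return [[d / det, -b / det],
            [-c / det, a / det]]


def largest_entry(M):
    """Largest absolute value among the entries of M."""
    return max(abs(x) for row in M for x in row)


# As eps shrinks, the two rows become nearly identical and det(S) = eps -> 0.
for eps in (1.0, 1e-3, 1e-6):
    S = [[1.0, 1.0],
         [1.0, 1.0 + eps]]
    print(eps, largest_entry(inverse_2x2(S)))
```

The entries of the inverse grow roughly like $1/\varepsilon$, so any error in the vector that the inverse multiplies is scaled up by a comparable factor.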

Applications Beyond Determinants and Inverses

While determinant and inverse calculations are the most prominent applications of cofactors, their utility extends to other areas of linear algebra and its practical implementations.

Solving Systems of Linear Equations (Cramer’s Rule)

Cramer’s Rule offers a method for solving systems of linear equations using determinants. For a system $Ax = b$, where $A$ is an $n \times n$ matrix of coefficients, $x$ is the vector of unknowns, and $b$ is the vector of constants, the solution for the $j$-th variable $x_j$ is given by:

$$
x_j = \frac{\det(A_j)}{\det(A)}
$$

where $A_j$ is the matrix formed by replacing the $j$-th column of $A$ with the vector $b$. The calculation of $\det(A_j)$ and $\det(A)$ heavily relies on the concept of cofactors. While not always the most computationally efficient method for large systems, Cramer’s Rule provides a direct analytical solution and is valuable for theoretical understanding and small-scale problems.
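A minimal sketch of Cramer’s Rule follows, assuming integer coefficients so that exact fractions can be used. The function names and the right-hand side `b` are illustrative choices; `b` was picked so that the solution is $x = (1, 1, 1)$.

```python
from fractions import Fraction


def submatrix(A, i, j):
    """Return a copy of A with row i and column j removed (0-based indices)."""
    return [row[:j] + row[j + 1:] for k, row in enumerate(A) if k != i]


def det(A):
    """Determinant by recursive cofactor expansion along the first row."""
    if len(A) == 0:
        return 1  # empty matrix: base case of the recursion
    return sum((-1) ** j * A[0][j] * det(submatrix(A, 0, j))
               for j in range(len(A)))


def cramer_solve(A, b):
    """Solve A x = b with x_j = det(A_j) / det(A); assumes integer entries."""
    d = det(A)
    if d == 0:
        raise ValueError("det(A) is zero; Cramer's Rule does not apply")
    solution = []
    for j in range(len(A)):
        # A_j is A with its j-th column replaced by the vector b.
        A_j = [row[:j] + [b[i]] + row[j + 1:] for i, row in enumerate(A)]
        solution.append(Fraction(det(A_j), d))
    return solution


A = [[2, 1, 3],
     [0, 4, 1],
     [-1, 2, 5]]
b = [6, 5, 6]  # equals A applied to (1, 1, 1)

print([str(x) for x in cramer_solve(A, b)])  # ['1', '1', '1']
```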

Eigenvalue Problems

Eigenvalues and eigenvectors are fundamental to understanding the behavior of linear transformations and are essential in areas like vibration analysis, stability analysis, and signal processing. The characteristic equation for finding eigenvalues, $\det(A - \lambda I) = 0$, involves calculating the determinant of a matrix that includes a variable $\lambda$. This determinant calculation, especially for symbolic manipulation, often employs cofactor expansion. Understanding the sensitivity of the determinant to changes in matrix elements (which cofactors help quantify) can provide insights into the robustness of eigenvalue solutions.
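As a small worked case, take the $2 \times 2$ matrix with entries $a, b, c, d$ used in the inverse example. Expanding the characteristic determinant gives a quadratic in $\lambda$ whose coefficients are the trace and the determinant of $A$:

$$
\det(A - \lambda I) = \det \begin{pmatrix} a - \lambda & b \\ c & d - \lambda \end{pmatrix} = (a - \lambda)(d - \lambda) - bc = \lambda^2 - (a + d)\lambda + (ad - bc)
$$

The eigenvalues are the roots of this polynomial. For larger matrices the same expansion applies, with each cofactor itself a polynomial in $\lambda$.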

Understanding Matrix Properties

The values of a matrix’s minors and cofactors are intrinsically linked to its properties. A non-zero determinant, which is required for invertibility, is by the cofactor expansion a sum of products of elements and their cofactors. The rank of a matrix, which is the number of linearly independent rows (or columns), can also be determined from minors of various sizes: it equals the size of the largest square submatrix whose determinant is non-zero. A rank-deficient $n \times n$ matrix therefore has a zero determinant, and every minor larger than its rank vanishes, which has implications for the solvability of associated linear systems.
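The link between rank and minors can be sketched by brute force: search for the largest square submatrix with a non-zero determinant. The function names are assumptions for this example, and the exact `!= 0` test is only appropriate for integer or rational entries; with floating-point data a tolerance would be needed.

```python
from itertools import combinations


def det(A):
    """Determinant by recursive cofactor expansion along the first row."""
    if len(A) == 0:
        return 1  # empty matrix: base case of the recursion
    return sum((-1) ** j * A[0][j]
               * det([row[:j] + row[j + 1:] for row in A[1:]])
               for j in range(len(A)))


def rank_by_minors(A):
    """Size of the largest square submatrix with a non-zero determinant."""
    rows, cols = len(A), len(A[0])
    for k in range(min(rows, cols), 0, -1):
        for r in combinations(range(rows), k):
            for c in combinations(range(cols), k):
                sub = [[A[i][j] for j in c] for i in r]
                if det(sub) != 0:
                    return k
    return 0  # every entry is zero


full = [[2, 1, 3], [0, 4, 1], [-1, 2, 5]]
deficient = [[1, 2, 3], [2, 4, 6], [1, 0, 1]]  # second row is twice the first

print(rank_by_minors(full))       # 3
print(rank_by_minors(deficient))  # 2
```

This search is exponential in the matrix size and is meant only to make the definition concrete; practical rank computations use elimination or the singular value decomposition.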

Data Analysis and Machine Learning

In more complex computational fields, such as machine learning and advanced data analysis, matrices are ubiquitous. Techniques like Principal Component Analysis (PCA) rely on the eigendecomposition of covariance matrices. The stability and interpretability of PCA results can be indirectly influenced by the underlying properties of these matrices, which are, in turn, governed by concepts like determinants and cofactors. While direct cofactor calculation might not be the computationally favored method for extremely large matrices in these fields, the theoretical understanding derived from cofactor analysis provides a foundational grasp of matrix behavior and the conditions under which certain algorithms will perform robustly.

The journey through understanding the minor, the cofactor, and their applications in determinants and matrix inverses reveals a powerful framework for analyzing and manipulating matrices. This mathematical foundation underpins many sophisticated technologies, including the precise navigation and control systems that enable advanced drone operations.