Spatial disorientation is one of the most insidious and dangerous threats in aviation. It has claimed many lives and jeopardized missions across all flight domains. At its core, spatial disorientation is a pilot’s inability to accurately determine the aircraft’s attitude, altitude, velocity, or position relative to the Earth’s surface or other objects. This confusion arises when the sensory information a pilot receives conflicts with reality, leading to erroneous judgments and potentially catastrophic control inputs. While often discussed in terms of human physiology and psychology, the fight against spatial disorientation is also a technological one: a continuous evolution of flight technology designed to provide objective, reliable truth in the face of deceptive sensory inputs. From rudimentary compasses to sophisticated multi-sensor arrays and advanced stabilization systems, the drive to accurately perceive and maintain spatial awareness is at the very heart of flight technology. This article examines the phenomenon of spatial disorientation and explores how advances in flight technology counter it, supporting safer and more reliable operations, whether the aircraft is flown by a human or, increasingly, by an autonomous system.

The Human Element: Understanding Sensory Conflict

For human pilots, spatial disorientation is primarily a sensory conflict, a battle between what the body perceives and what is actually happening. Our brains are remarkably adept at integrating sensory input from our eyes, ears (specifically the vestibular system), and proprioceptors (nerve endings in muscles and joints) to construct a coherent understanding of our orientation in space. However, these systems, evolved for life on the ground, are easily deceived in the three-dimensional, high-speed environment of flight, particularly when visual references are limited or absent.

Visual System Deception

The visual system is typically our most dominant sense for orientation. When visual cues are clear and consistent, such as a visible horizon, ground features, or stars, a pilot can easily maintain spatial awareness. However, when these cues are degraded or misleading, the visual system can become a source of disorientation. Flying into clouds or fog, or at night over unlit terrain, can strip away visual references entirely. On a night approach over featureless ground or water, this produces the “black hole” illusion: the runway lights are the only visible cue, height and distance become very hard to judge, and the pilot is tempted into a dangerously low approach. Conversely, misleading visual cues, such as sloped cloud decks mistaken for a horizon, or ground lights appearing to tilt, can induce false sensations of banking or climbing, compelling the pilot to react incorrectly to what their eyes are telling them. Even runway geometry can deceive: a runway narrower than the pilot is used to creates the illusion of being too high, prompting an unsafe descent, while an unusually wide runway creates the opposite illusion of being too low.

Vestibular System Illusions

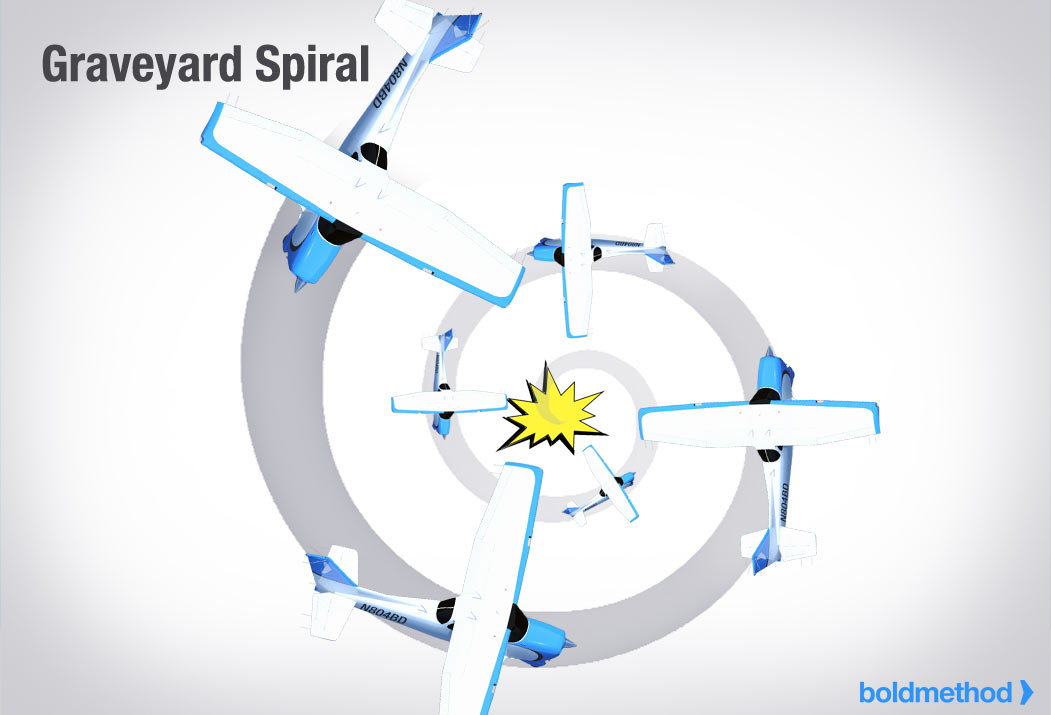

The vestibular system, located in the inner ear, contains two primary components: the semicircular canals, which detect angular acceleration (changes in rotation), and the otolith organs (utricle and saccule), which detect linear acceleration and gravity. While effective on the ground, these systems are notorious sources of disorientation in flight. The semicircular canals, for instance, can be fooled during prolonged turns. If a pilot enters a turn gradually and holds it, the fluid in the canals catches up with the canal walls and the sensation of turning fades. When the pilot then levels the wings, the fluid’s inertia signals a roll in the opposite direction, so wings-level flight feels like a bank the other way. This illusion, known as “the leans,” can cause a pilot to roll back into the original turn without realizing it. Similarly, rapid forward acceleration stimulates the otolith organs, giving the sensation of pitching up, known as the “somatogravic illusion.” If a pilot attempts to correct for this perceived climb, they may inadvertently push the nose down, potentially flying into the ground or water.
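The size of the somatogravic illusion can be estimated with a simple model. The sketch below assumes level flight and a sustained forward acceleration, and treats the perceived “down” as the combined direction of gravity and inertial force, which the otoliths cannot tell apart. The function name and the example acceleration are illustrative, not taken from any standard.

```python
import math

G = 9.81  # standard gravity, m/s^2


def perceived_pitch_deg(forward_accel_ms2: float) -> float:
    """Apparent nose-up angle (degrees) felt during forward acceleration.

    Simplified model: the felt "vertical" tilts back by atan(a / g),
    which the body interprets as the aircraft pitching up.
    """
    return math.degrees(math.atan2(forward_accel_ms2, G))


if __name__ == "__main__":
    # Hypothetical example: a 2 m/s^2 acceleration on a dark-night takeoff.
    print(f"{perceived_pitch_deg(2.0):.1f} degrees of false pitch-up")
```

Under this model, even a modest 2 m/s² acceleration feels like a pitch-up of more than ten degrees, which is why the illusion is so dangerous after takeoff or during a go-around with no visible horizon.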

Proprioceptive System Cues

The proprioceptive system provides information about the position and movement of our body parts, contributing to our sense of balance and “seat of the pants” feel. While crucial for coordination, it’s a weak and often misleading source of spatial orientation in flight. Centrifugal forces during turns, turbulence, or unusual attitudes can create sensations that are easily misinterpreted. For example, a pilot performing a coordinated turn might feel like they are flying straight and level, despite the aircraft banking. Conversely, in uncoordinated flight, a pilot might feel a strong side force and assume the aircraft is skidding, when in fact it might be slipping. Relying solely on these subtle and often inaccurate body sensations can quickly lead to a loss of control, especially when other sensory inputs are compromised.

The Peril of Conflicting Information

The true danger of spatial disorientation arises when these sensory systems provide conflicting information. Imagine a pilot flying into clouds at night. Their eyes see nothing but blackness, offering no horizon. Their vestibular system might be providing misleading cues from a gradual turn, suggesting they are banking when they are not, or vice versa. Their proprioceptive system offers unreliable “seat of the pants” sensations. In this scenario, the pilot’s internal reference frame collapses, replaced by a confusing and often terrifying set of false perceptions. Without an objective, external reference, the pilot is left to make critical flight decisions based on faulty sensory data, which can quickly lead to loss of control, controlled flight into terrain (CFIT), or mid-air collisions. This is precisely where flight technology steps in as the indispensable guardian of spatial awareness.

Flight Technology as the Objective Arbiter

Recognizing the inherent limitations of human physiology in the flight environment, flight technology has continuously evolved to provide objective, unambiguous data about an aircraft’s state and position. These systems act as a constant, reliable arbiter, offering the truth about attitude, altitude, heading, and speed, irrespective of external visual cues or a pilot’s internal sensations. They are the bedrock upon which modern aviation safety is built, transforming the cockpit from a place of sensory deception into a hub of precise, data-driven awareness.

Global Positioning Systems (GPS) for Absolute Positioning

The Global Positioning System (GPS) revolutionized navigation by providing highly accurate, three-dimensional positional information anywhere on Earth. A GPS receiver measures its distance to several orbiting satellites from the travel time of their signals, then solves for its own location, a process known as trilateration (not triangulation, which uses angles). At least four satellites are needed, because the receiver must solve for its own clock error in addition to latitude, longitude, and altitude. This absolute positioning capability is a powerful countermeasure against spatial disorientation. Even when visual references are nil, GPS allows pilots (or autonomous systems) to know where they are on a map, their ground speed, and their track. Augmented variants, such as differential GPS (DGPS) or a Satellite-Based Augmentation System (SBAS) such as WAAS (Wide Area Augmentation System), offer even greater accuracy, crucial for precision approaches and operations in challenging environments. For autonomous drones, GPS is often the primary means of navigation, allowing them to follow pre-programmed flight paths and hold position with remarkable stability, effectively removing the human element of spatial disorientation from the core navigation task.
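The idea of locating a receiver from measured distances can be illustrated with a deliberately simplified sketch. It works in two dimensions with three fixed reference points and perfect range measurements; a real GPS solution is three-dimensional, uses moving satellites, and also solves for receiver clock bias. The function name and the sample coordinates are hypothetical.

```python
import math


def trilaterate_2d(anchors, ranges):
    """Solve for (x, y) given three known points and the distance to each.

    Subtracting the first circle equation from the other two removes the
    squared unknowns and leaves a 2x2 linear system, solved by Cramer's rule.
    """
    (x1, y1), (x2, y2), (x3, y3) = anchors
    r1, r2, r3 = ranges
    a11, a12 = 2 * (x2 - x1), 2 * (y2 - y1)
    a21, a22 = 2 * (x3 - x1), 2 * (y3 - y1)
    b1 = r1**2 - r2**2 + x2**2 - x1**2 + y2**2 - y1**2
    b2 = r1**2 - r3**2 + x3**2 - x1**2 + y3**2 - y1**2
    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-12:
        raise ValueError("reference points are collinear; no unique fix")
    x = (b1 * a22 - a12 * b2) / det
    y = (a11 * b2 - a21 * b1) / det
    return x, y


if __name__ == "__main__":
    anchors = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0)]
    true_position = (3.0, 4.0)
    ranges = [math.dist(true_position, a) for a in anchors]
    print(trilaterate_2d(anchors, ranges))
```

The collinearity check reflects a real property of such systems: poor geometry among the reference points degrades or prevents a position fix.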

Inertial Measurement Units (IMUs) for Relative Orientation

While GPS provides absolute position, an Inertial Measurement Unit (IMU) is critical for tracking an aircraft’s attitude and motion without any external reference. Comprising accelerometers and gyroscopes, an IMU measures linear acceleration along three axes and angular velocity about them (roll, pitch, and yaw rates). These raw measurements are then integrated over time to calculate the aircraft’s current orientation (attitude) and velocity. Because small sensor errors accumulate during integration, the result drifts and is usually corrected against other references. Unlike GPS, which relies on external signals, IMUs are self-contained and provide continuous, high-frequency updates on how the aircraft is moving and oriented. This makes them indispensable for stabilization systems, providing immediate feedback on any deviation from the desired attitude. When a pilot experiences “the leans” or the “somatogravic illusion,” the IMU provides the objective truth: the aircraft’s actual bank angle or pitch attitude, displayed clearly on the Attitude Indicator (AI), also called the artificial horizon. Modern IMUs are often miniaturized and highly accurate, forming the core sensing component for drone flight controllers and advanced stabilization systems in manned aircraft.
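Integrating gyroscope rates gives a smooth attitude estimate, but any bias in the sensor accumulates as drift. One common remedy, shown here as an illustration and not as the method of any particular product, is a complementary filter that blends the integrated gyro angle with the pitch implied by the accelerometer’s gravity reading. The axis and sign conventions, the blend factor `alpha`, and the bias value are all assumptions made for this sketch.

```python
import math


def accel_pitch_deg(accel_x, accel_z):
    """Pitch implied by the gravity direction (valid only when not accelerating)."""
    return math.degrees(math.atan2(accel_x, accel_z))


def complementary_step(pitch_deg, gyro_rate_dps, accel_x, accel_z, dt, alpha=0.98):
    """One filter update: trust the gyro short-term, the accelerometer long-term."""
    gyro_pitch = pitch_deg + gyro_rate_dps * dt  # integrate angular rate
    return alpha * gyro_pitch + (1.0 - alpha) * accel_pitch_deg(accel_x, accel_z)


def simulate_stationary(seconds=60.0, dt=0.01, gyro_bias_dps=0.5):
    """Level, motionless aircraft whose gyro reports a small constant bias."""
    raw = fused = 0.0
    for _ in range(int(round(seconds / dt))):
        raw += gyro_bias_dps * dt  # pure integration: the bias accumulates
        fused = complementary_step(fused, gyro_bias_dps, 0.0, 9.81, dt)
    return raw, fused


if __name__ == "__main__":
    raw, fused = simulate_stationary()
    print(f"gyro only: {raw:.2f} deg, fused: {fused:.3f} deg")
```

In this toy scenario the gyro-only estimate drifts to about 30 degrees after one minute, while the fused estimate settles near a quarter of a degree. Production systems typically use more elaborate estimators, but the principle of correcting drift with an independent reference is the same.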

Advanced Stabilization Systems

Building upon the data provided by IMUs, advanced stabilization systems actively work to counteract unwanted movements and maintain a stable flight attitude. These systems, whether autopilots in manned aircraft or flight controllers in drones, constantly monitor the IMU’s output and make rapid adjustments to control surfaces (or propeller speeds in multirotors) to damp oscillations and maintain a desired orientation. For drones especially, these systems are paramount: without them, a multirotor would be inherently unstable and effectively unflyable. The flight controller, acting on IMU data, makes hundreds to thousands of small adjustments per second to maintain level flight, hold position, or execute smooth maneuvers. This technological stability mitigates spatial disorientation not by correcting a human pilot’s perception, but by keeping the aircraft itself in a stable and predictable state. A human operator then receives consistent visual feedback (e.g., from an FPV camera), and an autonomous system can execute its mission without environmental disturbances causing unexpected attitude changes.
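The monitor-and-correct loop described above is often built around a PID (proportional-integral-derivative) controller. The text does not say which control law any given system uses, so the following is only a minimal sketch of that one common choice. The gains, the loop rate, and the toy roll dynamics (controller output treated directly as angular acceleration) are assumptions for illustration.

```python
class PID:
    """Textbook PID controller with no output limits or anti-windup."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, setpoint, measurement, dt):
        error = setpoint - measurement
        self.integral += error * dt
        # No derivative term on the first call, when there is no previous error.
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate_roll_recovery(initial_roll_deg=20.0, seconds=5.0, dt=0.005):
    """Drive a disturbed roll angle back to level with a 200 Hz control loop."""
    pid = PID(kp=8.0, ki=0.0, kd=4.0)  # hypothetical gains for this toy model
    roll, roll_rate = initial_roll_deg, 0.0
    for _ in range(int(round(seconds / dt))):
        command = pid.update(0.0, roll, dt)  # treated as deg/s^2
        roll_rate += command * dt
        roll += roll_rate * dt
    return roll


if __name__ == "__main__":
    print(f"roll after 5 s: {simulate_roll_recovery():.4f} deg")
```

The derivative term provides the damping mentioned in the text; with it set to zero, this toy model would oscillate around level indefinitely.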

Enhancing Awareness and Preventing Disorientation

Beyond the fundamental systems, flight technology continues to evolve, creating increasingly sophisticated ways to enhance a pilot’s (or an autonomous system’s) spatial awareness and prevent the onset of disorientation. This involves intelligent data integration, environmental awareness, and intuitive display technologies.

Redundant Sensor Systems and Data Fusion

Reliance on a single sensor, no matter how advanced, always carries a risk. Therefore, modern flight technology heavily emphasizes redundancy and data fusion. Aircraft often employ multiple GPS receivers, several IMUs (sometimes using different underlying technologies like MEMS and fiber optic gyros), magnetometers, barometric altimeters, and airspeed sensors. The data from these diverse sources is then fed into a central flight management system or flight controller. Through advanced algorithms, this system performs “data fusion,” intelligently combining and cross-referencing all available information. If one sensor provides anomalous data, the system can detect it, flag it, and often disregard it in favor of more consistent data from other sensors. This comprehensive approach provides a robust and resilient picture of the aircraft’s state, making it far more difficult for any single sensor failure or environmental anomaly to induce spatial disorientation. For autonomous drones, robust sensor fusion is critical for reliable navigation, especially in complex or GPS-denied environments.
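The detect, flag, and disregard behavior can be sketched with simple median voting across redundant sensors. Real flight systems generally use more sophisticated estimators and fault logic; this example only shows the core idea. The sensor names, the tolerance, and the readings are hypothetical.

```python
from statistics import median


def fuse_redundant(readings, tolerance):
    """Average the readings that agree with the median; report the rest.

    readings:  dict mapping a sensor name to its measured value
    tolerance: maximum allowed distance from the median to stay trusted
    Returns (fused_value, sorted list of rejected sensor names).
    """
    mid = median(readings.values())
    trusted = {name: v for name, v in readings.items() if abs(v - mid) <= tolerance}
    if not trusted:
        raise ValueError("no sensors agree within tolerance")
    rejected = sorted(set(readings) - set(trusted))
    fused = sum(trusted.values()) / len(trusted)
    return fused, rejected


if __name__ == "__main__":
    # Hypothetical altitude readings in metres; one altimeter has failed high.
    altitudes = {"baro_1": 1502.0, "baro_2": 1498.0, "baro_3": 2100.0}
    print(fuse_redundant(altitudes, tolerance=25.0))
```

With three sensors, a single faulty unit cannot move the median far, which is one reason triple redundancy is a common arrangement.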

Obstacle Avoidance Technologies

Spatial disorientation isn’t just about knowing one’s attitude; it’s also about knowing one’s position relative to other objects. Obstacle avoidance technologies directly address this aspect of spatial awareness, especially critical for low-altitude flight, precision maneuvers, and autonomous operations. Systems like radar, lidar, ultrasonic sensors, and computer vision (using cameras) constantly scan the aircraft’s surroundings for potential hazards. These sensors detect obstacles and provide real-time information to the pilot (via displays and alerts) or directly to the flight control system for autonomous evasive action. By building a dynamic, real-time “map” of the surrounding environment, these technologies prevent collisions that could otherwise result from a pilot’s misjudgment of distance or orientation, or an autonomous system lacking sufficient environmental awareness. For drones, obstacle avoidance is a rapidly developing field, crucial for operating safely in complex urban or industrial environments.
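One simple way to turn a range measurement into an alert or an evasive command is to compute time to collision, the measured distance divided by the closing speed. The sketch below assumes a single obstacle directly ahead; the thresholds and action names are invented for illustration and do not come from any certified system.

```python
def time_to_collision(range_m, closing_speed_ms):
    """Seconds until impact at the current closing speed (inf if not closing)."""
    if closing_speed_ms <= 0:
        return float("inf")
    return range_m / closing_speed_ms


def avoidance_action(range_m, closing_speed_ms, warn_s=10.0, evade_s=3.0):
    """Map time to collision onto a hypothetical three-level response."""
    ttc = time_to_collision(range_m, closing_speed_ms)
    if ttc <= evade_s:
        return "evade"   # hand control to the automatic avoidance maneuver
    if ttc <= warn_s:
        return "warn"    # alert the pilot or operator
    return "clear"


if __name__ == "__main__":
    for rng in (100.0, 40.0, 10.0):
        print(rng, avoidance_action(rng, closing_speed_ms=5.0))
```

Basing the decision on time instead of raw distance means a fast-closing obstacle far away can trigger a response sooner than a slow one nearby.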

Synthetic Vision and Augmented Reality Displays

Even with accurate sensor data, how that information is presented to the pilot is crucial. Traditional “steam gauge” instruments require mental interpretation and can be slow to read. Modern glass cockpits, with their large, integrated displays, have significantly improved information presentation. Synthetic Vision Systems (SVS) take this a step further by generating a realistic, three-dimensional depiction of the outside world on the cockpit display, complete with terrain, obstacles, and runway outlines, even when external visibility is zero. This “virtual window” directly combats visual disorientation by providing a consistent, true-to-life visual reference, regardless of actual weather conditions. Augmented Reality (AR) displays, often projected onto a Head-Up Display (HUD) or even directly onto a pilot’s visor (especially in FPV drone applications), overlay critical flight data and navigational cues directly onto the real-world view. This allows pilots to keep their eyes outside the cockpit while simultaneously receiving essential instrumentation, reducing the cognitive load and potential for sensory conflict that can lead to disorientation.

The Future of Spatial Awareness in Autonomous Flight

As aviation increasingly moves towards greater autonomy, the concept of spatial disorientation shifts from a human physiological challenge to a technological one: how can machines reliably and robustly understand their own state and environment without human intuition? The goal remains the same—accurate spatial awareness—but the methods become more sophisticated, leveraging cutting-edge AI and sensing paradigms.

AI and Machine Learning for Environmental Understanding

Autonomous systems, particularly drones, rely heavily on Artificial Intelligence (AI) and Machine Learning (ML) to process complex sensor data and build a comprehensive understanding of their spatial context. Unlike rule-based systems, AI can learn to interpret ambiguous or novel environmental conditions, effectively “perceiving” the world in a more nuanced way. This includes object recognition, semantic mapping (identifying different types of terrain or structures), and predicting the movement of dynamic obstacles. For instance, AI-powered computer vision systems can analyze camera feeds to estimate velocity relative to ground features (optical flow), even in GPS-denied environments, providing a form of visual “proprioception” for the drone. ML algorithms can fuse data from numerous dissimilar sensors (e.g., lidar, radar, vision, IMU) to create a robust and continuous spatial model, making the autonomous system resilient to individual sensor failures or environmental challenges that might disorient a human.
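The optical-flow idea mentioned above rests on a simple geometric relation. For a downward-facing camera over flat ground, image motion in pixels per second scales with ground speed divided by height. The sketch assumes a pinhole camera, known height above ground, and a gyro measurement used to remove the part of the flow caused by the drone rotating. Parameter names and sign conventions are assumptions.

```python
def ground_speed_from_flow(flow_px_per_s, height_m, focal_length_px, gyro_rate_rad_s=0.0):
    """Estimate speed over ground (m/s) along one image axis.

    flow_px_per_s:    measured image motion along that axis
    height_m:         height above flat ground (e.g. from a rangefinder)
    focal_length_px:  camera focal length expressed in pixels
    gyro_rate_rad_s:  rotation rate that also shifts the image; small rotations
                      add roughly rate * focal_length pixels per second of flow
    """
    translational_flow = flow_px_per_s - gyro_rate_rad_s * focal_length_px
    return translational_flow * height_m / focal_length_px


if __name__ == "__main__":
    # Hypothetical numbers: 200 px/s of flow seen from 10 m with a 500 px focal length.
    print(ground_speed_from_flow(200.0, 10.0, 500.0), "m/s")
```

The dependence on height is why optical-flow sensors are usually paired with a rangefinder: the same image motion can mean slow flight near the ground or fast flight high above it.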

Collaborative Sensing and Swarm Intelligence

The future of spatial awareness for autonomous systems isn’t just about individual aircraft; it’s about interconnected networks. Collaborative sensing involves multiple autonomous vehicles sharing their individual spatial data (positions, detected obstacles, environmental conditions) to create a richer, more complete shared understanding of the operational space. This “swarm intelligence” enhances overall spatial awareness, allowing for more efficient navigation, collective obstacle avoidance, and robust operations in complex environments. If one drone loses GPS signal, it can potentially infer its position from the known positions of nearby drones. This distributed spatial awareness fundamentally changes how disorientation is managed, moving from an individual problem to a collective, self-correcting challenge.

Robustness in GPS-Denied Environments

While GPS is foundational, reliance on it creates vulnerabilities in environments where signals are weak, jammed, or spoofed. Future flight technology for autonomous systems is heavily focused on achieving spatial awareness without GPS. This involves visual-inertial odometry (VIO), where cameras and IMUs work in tandem to track motion and build 3D maps of the environment. Lidar-based simultaneous localization and mapping (SLAM) algorithms allow drones to create detailed maps of unknown environments while simultaneously localizing themselves within those maps. Furthermore, magnetic field navigation, celestial navigation (for higher altitude/space applications), and ultra-wideband (UWB) radio ranging are being explored as redundant or alternative means of precise positioning, so that autonomous systems can maintain their orientation and navigate effectively even in challenging or hostile electromagnetic environments.

Conclusion

Spatial disorientation remains a critical concern in aviation, a testament to the persistent challenge of accurately perceiving one’s place in three-dimensional space. While the human element is profoundly susceptible to sensory deceptions, the relentless march of flight technology has provided increasingly sophisticated and reliable countermeasures. From the precise positioning offered by GPS and the unblinking truth of IMUs to the active stability provided by advanced flight controllers, technology acts as the objective arbiter, guiding pilots and autonomous systems with unwavering accuracy. As we venture further into the age of autonomous flight, the evolution continues, with AI, machine learning, and collaborative sensing pushing the boundaries of spatial awareness beyond anything previously imagined. Ultimately, the ongoing development of flight technology is not just about enhancing performance; it is fundamentally about ensuring that the objective reality of flight always prevails over the subjective perils of disorientation, making the skies safer and more accessible for all.