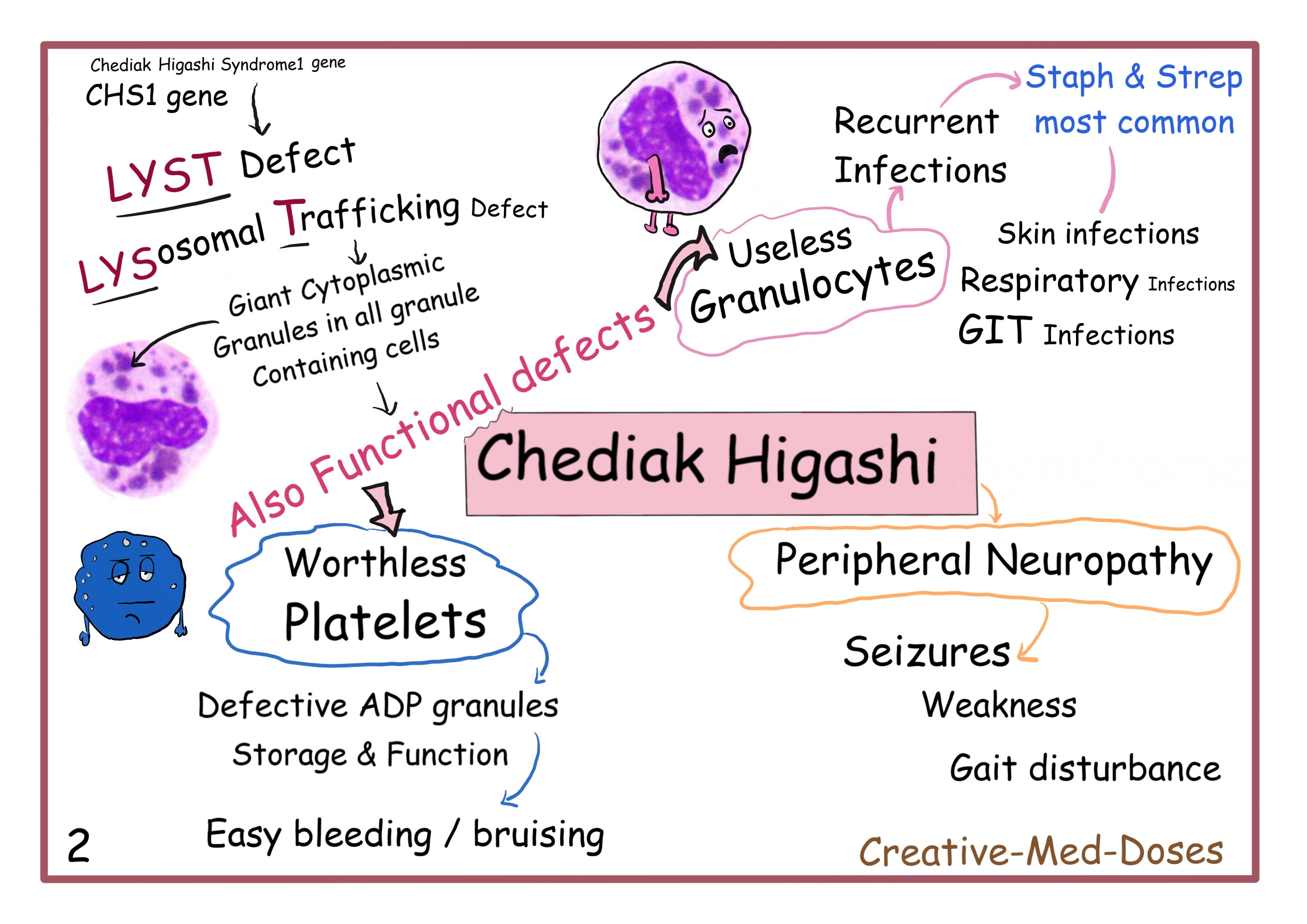

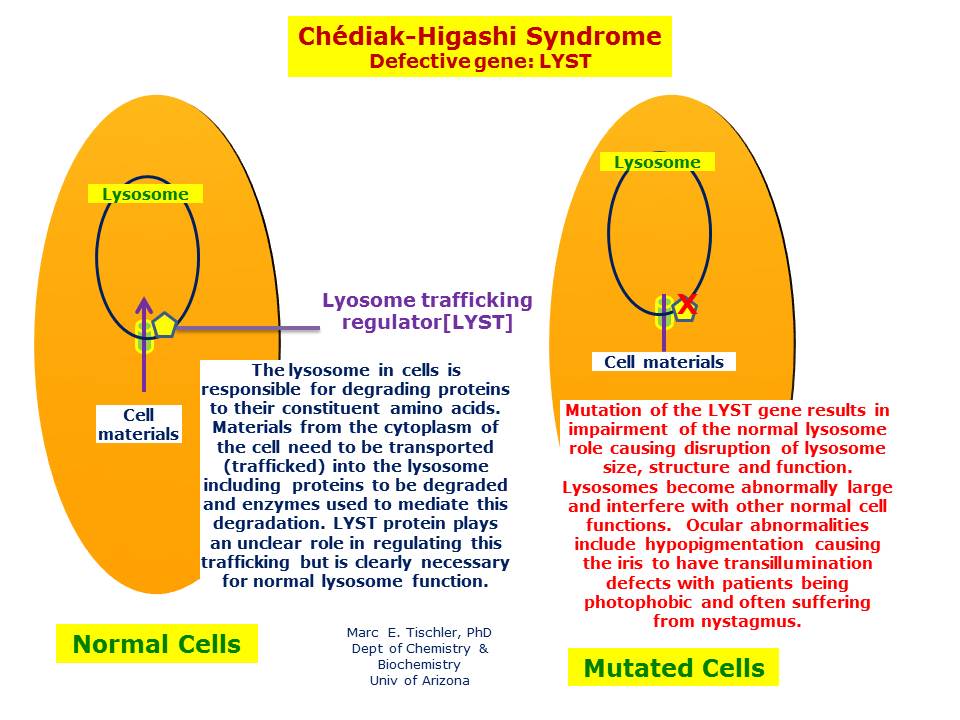

In the specialized field of autonomous drone development and complex remote sensing, “Chediak-Higashi syndrome”, a rare inherited disorder from clinical pathology, can serve as a powerful technical metaphor. In the context of Tech & Innovation, specifically unmanned aerial vehicle (UAV) fleet management and AI-driven autonomous navigation, the term is used here for a rare but catastrophic failure of data aggregation. The biological condition involves defective transport of intracellular materials and the formation of oversized, non-functional granules. By analogy, the technological “syndrome” describes a state in which an AI’s perception pipeline over-aggregates sensor data into massive, unprocessable “data clumps” that paralyze the vehicle’s decision-making.

As autonomous flight, mapping, and remote sensing advance, understanding this bottleneck matters for engineers and innovators. It captures a central challenge in high-density data processing: ensuring that the drone’s “immune system”, its ability to filter noise and prioritize critical flight-path information, does not succumb to its own internal processing inefficiencies.

The Architecture of Systemic Vulnerability in Autonomous UAVs

Modern autonomous drones rely on a delicate balance of sensor fusion. To achieve true autonomy, a drone must simultaneously process inputs from LiDAR, ultrasonic sensors, GPS, and optical flow cameras. When these systems operate correctly, data flows in a streamlined, granular fashion, allowing AI follow modes and navigation algorithms to make micro-adjustments within milliseconds. When a “Chediak-Higashi” event occurs within the software architecture, however, this streamlined flow breaks down.

Defining the Data Aggregation Bottleneck

In the realm of Tech & Innovation, a data aggregation bottleneck occurs when the central processing unit (CPU) or the edge-computing AI module fails to distribute incoming sensor packets efficiently. Instead of seeing a continuous stream of individual obstacles, the system begins to “clump” these inputs together. The result is oversized data granules (metaphorical “giant lysosomes” of information) that the navigation logic cannot break down quickly enough.
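
One common way to keep a sensor stream from piling up is to bound the buffer and discard the oldest packets, so the planner always works on recent data instead of an ever-growing backlog. The sketch below is illustrative only: `SensorPacket` and `FreshestPacketBuffer` are hypothetical names, and the capacity is an assumed tuning parameter rather than a value from any particular flight stack.

```python
from __future__ import annotations

from collections import deque
from dataclasses import dataclass


@dataclass
class SensorPacket:
    sensor: str       # illustrative label, e.g. "lidar" or "gps"
    timestamp: float  # capture time in seconds


class FreshestPacketBuffer:
    """Hypothetical bounded buffer: evicts the oldest packet rather than growing a backlog."""

    def __init__(self, capacity: int) -> None:
        # deque(maxlen=...) discards items from the left end once it is full
        self._queue: deque[SensorPacket] = deque(maxlen=capacity)
        self.dropped = 0

    def push(self, packet: SensorPacket) -> None:
        if len(self._queue) == self._queue.maxlen:
            self.dropped += 1  # the oldest packet is evicted by the append below
        self._queue.append(packet)

    def pop_batch(self, n: int) -> list[SensorPacket]:
        """Return up to n packets, oldest first, for one navigation step."""
        batch: list[SensorPacket] = []
        while self._queue and len(batch) < n:
            batch.append(self._queue.popleft())
        return batch

    def __len__(self) -> int:
        return len(self._queue)
```

Pushing ten packets into a buffer of capacity four keeps only the last four and counts six drops; the trade-off is that stale data is lost deliberately so that the backlog, and therefore the latency, stays bounded.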

For a drone on a high-speed autonomous mapping mission, this delay can be fatal. The system becomes “blinded” by its own internal data density. The sensors are physically functioning and the cameras are capturing high-resolution imagery, yet the internal logic cannot separate a tree branch from the background sky, because the data has aggregated into a single, unreadable block. This failure of intracellular (or intra-systemic) transport within the drone’s software pipeline is what this article calls the “Chediak-Higashi” failure of AI.
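
The branch-versus-sky failure can be illustrated with simple Euclidean (single-linkage) clustering, where a distance threshold decides which points belong together. The article does not name a specific algorithm, so this is an assumed stand-in: with a tight radius the branch and the background stay separate, while an overly generous radius merges everything into one “clump”. The brute-force neighbour search is for clarity only; production pipelines typically use a spatial index such as a k-d tree.

```python
import math


def euclidean_clusters(points, radius):
    """Label points so that any two within `radius` of each other share a cluster.

    points: sequence of coordinate tuples (x, y, z). Returns one integer label per point.
    Brute-force O(n^2) search, intended only as an illustration.
    """
    n = len(points)
    labels = [-1] * n  # -1 means "not yet assigned"
    cluster = 0
    for seed in range(n):
        if labels[seed] != -1:
            continue
        labels[seed] = cluster
        stack = [seed]
        # Grow the cluster by following chains of close neighbours
        while stack:
            i = stack.pop()
            for j in range(n):
                if labels[j] == -1 and math.dist(points[i], points[j]) <= radius:
                    labels[j] = cluster
                    stack.append(j)
        cluster += 1
    return labels


branch = [(0.0, 0.0, 5.0), (0.1, 0.0, 5.0), (0.2, 0.0, 5.0)]
background = [(1.0, 0.0, 5.0), (1.1, 0.0, 5.0)]
print(euclidean_clusters(branch + background, radius=0.15))  # two separate objects
print(euclidean_clusters(branch + background, radius=1.0))   # one merged "clump"
```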

The Parallel Between Biological and Synthetic Logic

The metaphor fits because of a striking parallel between biological systems and high-level autonomous flight. In biology, the syndrome affects both the immune response and the pigmentation of the organism. In drone technology, the “immune response” corresponds to the obstacle avoidance system, and “pigmentation” to the spectral accuracy of remote sensing data.

When a drone fleet is deployed for complex tasks such as multispectral agricultural analysis or search and rescue under dense forest canopies, the integrity of every individual data packet is vital. If the system suffers from this “syndrome”, the “pigmentation” (the quality of the thermal and optical data) becomes blotchy and unusable. The drone loses its ability to “defend” itself against environmental hazards, much like an immunocompromised organism, which can lead to mid-air collisions or total system lockouts.

Remote Sensing and the “Giant Granule” Phenomenon in LiDAR

LiDAR (Light Detection and Ranging) is perhaps the area of drone technology most susceptible to these systemic anomalies. LiDAR development has moved toward higher point densities, with some sensors producing millions of points per second to build high-fidelity 3D maps. The more data we collect, however, the higher the risk of the “giant granule” phenomenon, the core of the Chediak-Higashi metaphor.

Impact on Real-Time Mapping Accuracy

In a healthy autonomous system, LiDAR point clouds pass through a series of filters that categorize objects by their spatial coordinates. In a system experiencing an aggregation failure, the AI begins to lose resolution. Instead of perceiving a power line as a distinct, thin line, the software merges it with returns from surrounding airborne dust and with electrical noise into a massive, seemingly impenetrable wall.
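
A standard filter for keeping sparse dust and noise returns from merging with a real structure is radius outlier removal: a point is kept only if enough other points lie within a given radius. The sketch below assumes that technique (the article does not specify one), and the radius and neighbour count are illustrative values chosen for the toy data, not recommended settings.

```python
import math


def radius_outlier_filter(points, radius, min_neighbors):
    """Keep points that have at least `min_neighbors` other points within `radius`.

    Isolated returns (e.g. airborne dust) have few neighbours and are dropped,
    while dense structures such as a sampled power line survive. O(n^2) for clarity.
    """
    kept = []
    for i, p in enumerate(points):
        neighbours = sum(
            1 for j, q in enumerate(points) if j != i and math.dist(p, q) <= radius
        )
        if neighbours >= min_neighbors:
            kept.append(p)
    return kept


# Hypothetical scene: a power line sampled every 5 cm at 10 m altitude, plus two dust returns
line = [(i * 0.05, 0.0, 10.0) for i in range(11)]
dust = [(0.25, 0.8, 10.0), (0.1, -0.7, 10.3)]
filtered = radius_outlier_filter(line + dust, radius=0.12, min_neighbors=2)
print(len(filtered))  # only the line points remain
```

Filtering before clustering means the later grouping step never sees the dust, so it has nothing to merge the line with.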

This “giant granule” in the digital twin of the environment prevents the drone from finding a path forward. It is not a hardware failure: the laser is firing and the receiver is catching the returns. It is a failure of the filtering algorithms. This illustrates the need for “distributed intelligence”, in which processing is handled at the sensor level rather than sent to a central node that can be overwhelmed by data clumps.
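
One way sensor-level processing can reduce the load on a central node is voxel-grid downsampling: the space is divided into cubes and each occupied cube is replaced by the centroid of its points. This is an assumed example of “distributed intelligence”, not a method the article specifies; `voxel_downsample` is a hypothetical helper and the voxel size is arbitrary.

```python
import math
from collections import defaultdict


def voxel_downsample(points, voxel_size):
    """Replace all points falling in the same cubic voxel with their centroid.

    points: iterable of (x, y, z) tuples. Output order follows first appearance of each voxel.
    """
    buckets = defaultdict(list)
    for p in points:
        # Integer voxel index along each axis
        key = tuple(math.floor(c / voxel_size) for c in p)
        buckets[key].append(p)
    # Centroid of each voxel: average each coordinate axis separately
    return [
        tuple(sum(axis) / len(pts) for axis in zip(*pts))
        for pts in buckets.values()
    ]


cloud = [(0.25, 0.25, 0.25), (0.75, 0.75, 0.75), (1.5, 0.5, 0.5)]
print(voxel_downsample(cloud, voxel_size=1.0))  # three points reduced to two
```

Running this on the sensor side trades fine detail for a bounded, predictable data rate; the voxel size sets how much detail is given up.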

Challenges in Autonomous Navigation and Obstacle Avoidance

Autonomous flight relies on a hierarchy of priorities. Avoiding a stationary object such as a building is a lower priority, while reacting to a moving object such as a bird or another drone is a higher priority. The Chediak-Higashi error in the software stack causes something akin to a “priority inversion”: because the data is clumped together, the AI can no longer distinguish a high-priority moving target from low-priority environmental noise, so the noise can consume processing time that the moving target needs.
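
The hierarchy described above can be expressed as a priority queue, provided each obstacle has been separated out and labelled as moving or static (exactly what clumped data prevents). In this sketch, `triage` is a hypothetical function, and the ranking rule (moving before static, then nearest first) is an assumption for illustration.

```python
import heapq


def triage(obstacles):
    """Order obstacles by urgency.

    obstacles: iterable of (name, is_moving, distance_m) tuples.
    Assumed rule: moving objects outrank static ones; ties are broken by distance.
    Returns obstacle names, most urgent first.
    """
    heap = []
    for name, is_moving, distance_m in obstacles:
        # Rank 0 for moving, 1 for static; heapq pops the smallest tuple first
        heapq.heappush(heap, (0 if is_moving else 1, distance_m, name))
    return [heapq.heappop(heap)[2] for _ in range(len(heap))]


scene = [
    ("building", False, 12.0),
    ("bird", True, 30.0),
    ("drone", True, 18.0),
    ("tree", False, 5.0),
]
print(triage(scene))  # moving objects first, nearest first within each group
```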

This leads to “stuttering” flight paths. You may have seen a drone in AI follow mode twitch or pause erratically; this can be the result of the system struggling to break down an oversized data granule. Innovation in this space is currently focused on “de-clumping” algorithms that act as a digital enzyme, breaking complex data structures into manageable parts so that motion through three-dimensional space stays smooth and fluid.
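
A simple form of “de-clumping” is to split an oversized batch into fixed-size chunks and process one chunk per control tick, so no single tick does unbounded work and stalls the flight loop. This is a sketch under that assumption; `chunked`, `process_over_ticks`, and the `handle` callback are hypothetical names, and a real controller would run its other tasks between chunks.

```python
from itertools import islice


def chunked(items, chunk_size):
    """Yield consecutive lists of at most `chunk_size` items."""
    if chunk_size < 1:
        raise ValueError("chunk_size must be positive")
    it = iter(items)
    while True:
        chunk = list(islice(it, chunk_size))
        if not chunk:
            return
        yield chunk


def process_over_ticks(items, chunk_size, handle):
    """Process one chunk per (simulated) control tick and return the number of ticks used.

    `handle` is a hypothetical per-chunk callback, e.g. an obstacle detector.
    """
    ticks = 0
    for chunk in chunked(items, chunk_size):
        handle(chunk)  # bounded work per tick
        ticks += 1
    return ticks


sizes = []
ticks = process_over_ticks(range(10), chunk_size=4, handle=lambda c: sizes.append(len(c)))
print(ticks, sizes)
```

The chunk size is the design knob: smaller chunks give smoother control timing at the cost of taking more ticks to digest a large batch.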

Diagnostic AI: Treating the “Syndrome” in Drone Fleets

As we move toward a future where drone swarms and autonomous fleets are the norm, the “health” of the system becomes an architectural priority. Innovators are now developing “diagnostic AI” that sits alongside the primary flight controller to monitor for the early signs of data clumping. This is the drone equivalent of preventative medicine.
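
A diagnostic monitor of this kind could, for example, track an exponentially weighted moving average (EWMA) of per-cycle processing latency and raise a flag when it exceeds a budget. The article does not describe how such a monitor works, so `ClumpWatchdog`, the smoothing factor, and the budget are assumptions for illustration.

```python
class ClumpWatchdog:
    """Hypothetical early-warning monitor for growing processing latency.

    Smooths latency with an EWMA so a single spike does not trigger an alarm,
    but a sustained rise does.
    """

    def __init__(self, latency_budget_ms: float, alpha: float = 0.2) -> None:
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.latency_budget_ms = latency_budget_ms
        self.alpha = alpha  # weight given to the newest sample
        self.ewma_ms = None

    def observe(self, latency_ms: float) -> bool:
        """Record one cycle's latency; return True if the smoothed value exceeds the budget."""
        if self.ewma_ms is None:
            self.ewma_ms = latency_ms
        else:
            self.ewma_ms = self.alpha * latency_ms + (1 - self.alpha) * self.ewma_ms
        return self.ewma_ms > self.latency_budget_ms


wd = ClumpWatchdog(latency_budget_ms=20.0, alpha=0.5)
print([wd.observe(x) for x in (10.0, 10.0, 50.0, 10.0)])
```

With `alpha=0.5` the smoothed latency goes 10, 10, 30, 20 ms, so only the third cycle is flagged; a smaller `alpha` would make the monitor slower to react but less prone to false alarms.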

Redundancy Protocols and Self-Healing Networks

One of the most exciting innovations in UAV tech is the development of self-healing software networks. When a drone identifies that its obstacle avoidance data is beginning to aggregate abnormally—suffering from the “syndrome”—it can initiate a redundancy protocol. This involves offloading the processing load to other drones in the swarm or to a ground-based edge server.
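
The offloading decision can be sketched as a latency-budget check: process locally if the drone can meet the budget, otherwise pick the peer drone or ground server with the lowest estimated compute-plus-network time, and fall back to a safe behaviour if nothing fits. `ComputeNode`, `choose_node`, and the latency figures are hypothetical; the article does not specify how the redundancy protocol chooses a target.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ComputeNode:
    name: str
    compute_ms: float   # estimated time to process one batch on this node
    link_rtt_ms: float  # network round-trip time to reach it (0 for the drone itself)


def choose_node(local: ComputeNode, remotes: List[ComputeNode], budget_ms: float) -> Optional[str]:
    """Return the name of the node that should process the next batch, or None.

    None signals that no node can meet the budget, so the caller should fall back
    to a safe behaviour (for example, slowing down or holding position).
    """
    if local.compute_ms <= budget_ms:
        return local.name
    candidates = [n for n in remotes if n.compute_ms + n.link_rtt_ms <= budget_ms]
    if not candidates:
        return None
    return min(candidates, key=lambda n: n.compute_ms + n.link_rtt_ms).name


overloaded = ComputeNode("self", compute_ms=80.0, link_rtt_ms=0.0)
helpers = [
    ComputeNode("peer-drone-2", compute_ms=20.0, link_rtt_ms=15.0),
    ComputeNode("ground-edge", compute_ms=5.0, link_rtt_ms=40.0),
]
print(choose_node(overloaded, helpers, budget_ms=50.0))
```

Counting the network round trip matters: the ground server computes fastest here but loses to the nearby peer once its longer link is included.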

By distributing the “lysosomal” load, the drone avoids forming the giant data granules that lead to a crash. This level of communication between units is a hallmark of the next generation of autonomous flight. It turns a collection of individual units into a single, cohesive organism that is more resistant to the logic failures that plague isolated systems.

The Role of Edge Computing in Eliminating Latency “Clumps”

Latency is a primary catalyst for the Chediak-Higashi effect in drone technology. Whenever data arrives faster than it can be processed, the delay between capture and processing grows and the information piles up. Edge computing, which processes data on the drone’s own hardware (or a nearby node) rather than in a distant cloud, is one of the most promising “cures” for this syndrome.
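
The pile-up can be made concrete with a simple deterministic approximation, using illustrative numbers rather than measurements. If packets arrive at rate $\lambda$ and are processed at rate $\mu < \lambda$, the backlog $Q(t)$ grows linearly, and a packet arriving at time $t$ waits roughly $W(t)$ behind it:

```latex
\begin{aligned}
Q(t) &= (\lambda - \mu)\,t, \qquad W(t) \approx \frac{Q(t)}{\mu} \\
\lambda = 1200,\ \mu = 1000\ \text{packets/s},\ t = 5\ \text{s}
  &\;\Rightarrow\; Q = 200 \times 5 = 1000\ \text{packets},
  \quad W \approx \frac{1000}{1000} = 1\ \text{s}
\end{aligned}
```

At 10 m/s, a one-second-old obstacle estimate means the drone has already travelled about 10 m since the data was captured, which is why keeping processing close to the sensor matters.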

Modern drone controllers are increasingly built with dedicated AI accelerators, such as neural processing units (NPUs), designed to handle high-velocity data streams. These chips help keep data from aggregating into unmanageable clumps. By keeping the information flow “lean and granular”, they allow drones to navigate dense urban environments or thick forests with the grace of a biological organism, free from the systemic bottlenecks of previous generations.

Future Horizons in Robust Drone Innovation

The study of systemic failures like the one captured by the Chediak-Higashi metaphor is driving the next wave of innovation in the drone industry. We are moving away from simply adding more sensors and toward a focus on “intelligent filtration”. The goal is not just to see more, but to process what we see more efficiently.

The future of autonomous flight lies in the ability of AI to maintain its “systemic health” in the face of overwhelming environmental data. As we develop more advanced remote sensing tools, such as hyperspectral cameras and solid-state LiDAR, the risk of data aggregation errors is likely to increase. By identifying these “syndromes” early in the development cycle, however, engineers can create more resilient, robust, and truly autonomous machines.

In conclusion, “Chediak-Higashi syndrome” as a tech metaphor is a vital reminder that more data is not always better. The real innovation lies in the architecture: the ability to transport, process, and act on information without letting it clump into the giant, paralyzing granules of systemic failure. As we continue to refine the neural networks that power our drones, we move closer to autonomous systems that are as fluid, responsive, and reliable as the natural world they are designed to navigate.