In the realm of aerial imaging, light is the fundamental currency. Whether you are capturing a cinematic sunset with a high-end gimbal camera or conducting a specialized thermal inspection of a solar farm, your drone’s sensor is essentially a sophisticated collector of light wavelengths. To the uninitiated, a wavelength is simply a measurement of distance between peaks of an electromagnetic wave. However, to a drone pilot or a digital imaging specialist, understanding light wavelength is the key to mastering color accuracy, image sharpness, and the specialized data collection that happens beyond the visible spectrum.

Every photon captured by a drone’s CMOS sensor carries information determined by its wavelength. This information dictates the color we see, the amount of energy the sensor records, and how the light interacts with the glass elements of the lens. By diving deep into the mechanics of wavelengths, we can better understand how drone cameras translate the physical world into a digital masterpiece.

The Physics of Light in Aerial Imaging

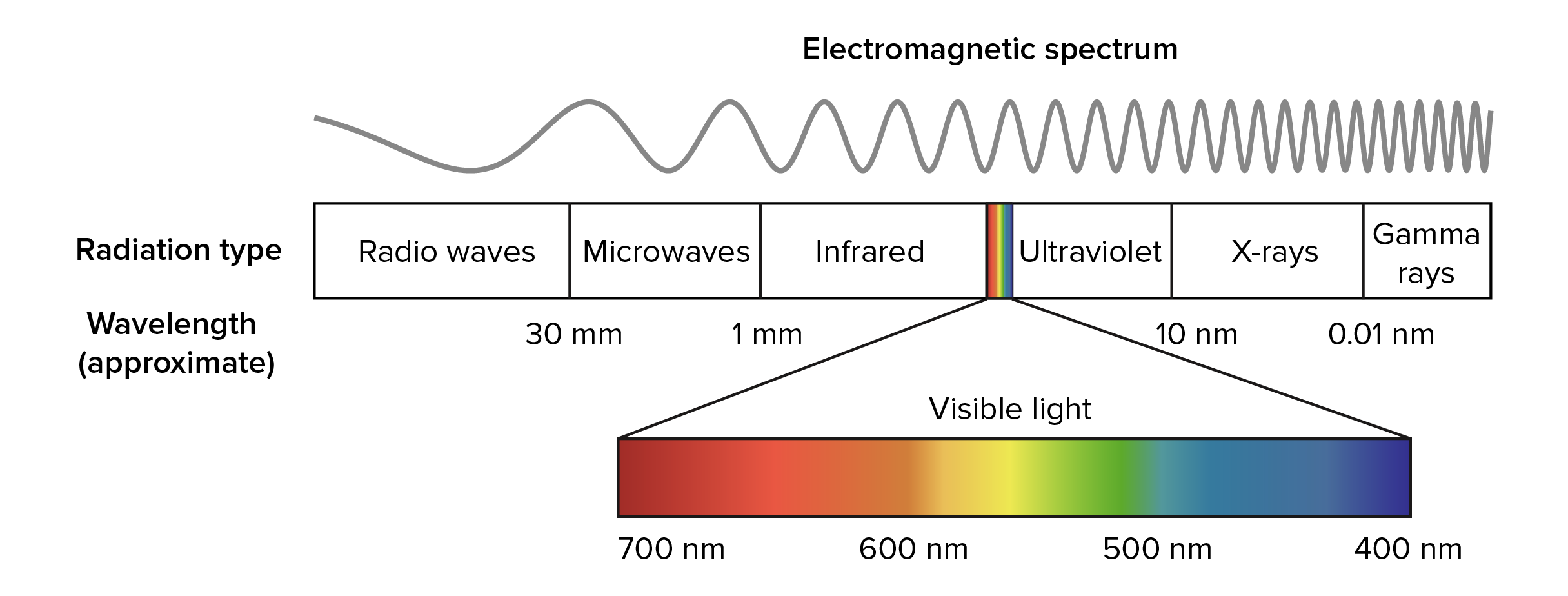

At its core, light is electromagnetic radiation that travels in waves. The “wavelength” refers to the spatial period of the wave—the distance over which the wave’s shape repeats. In the context of drone technology, we primarily deal with the electromagnetic spectrum ranging from ultraviolet to long-wave infrared.

Defining the Electromagnetic Spectrum for Drone Pilots

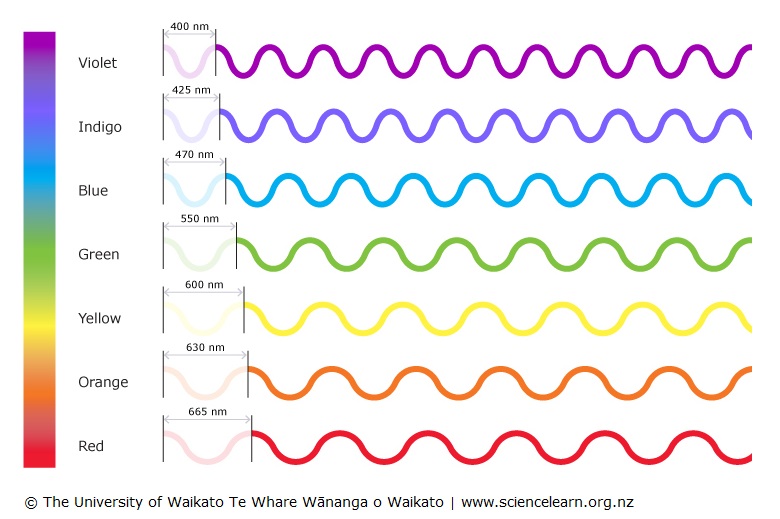

The human eye is sensitive to a very narrow band of the electromagnetic spectrum, roughly from 380 nanometers (nm) to 750 nm. This is what we call visible light. Within this range, different wavelengths correspond to different colors. Short wavelengths (around 400 nm) appear as violet or blue, while long wavelengths (around 700 nm) appear as red.
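Wavelength also fixes how much energy each photon carries, through the relation E = hc / wavelength. The short sketch below is illustrative only; the function name `photon_energy_ev` is an assumption introduced here, not something from a camera SDK. It shows that a violet photon carries noticeably more energy than a red one.

```python
# Physical constants (exact SI values).
PLANCK_J_S = 6.62607015e-34       # Planck constant, joule-seconds
LIGHT_SPEED_M_S = 299_792_458.0   # speed of light in vacuum, metres per second
JOULES_PER_EV = 1.602176634e-19   # one electronvolt expressed in joules


def photon_energy_ev(wavelength_nm: float) -> float:
    """Energy of a single photon in electronvolts, E = h * c / wavelength."""
    if wavelength_nm <= 0:
        raise ValueError("wavelength must be positive")
    wavelength_m = wavelength_nm * 1e-9
    return PLANCK_J_S * LIGHT_SPEED_M_S / wavelength_m / JOULES_PER_EV


# Compare the two ends of the visible band and the middle.
for label, nm in [("violet", 400), ("green", 550), ("red", 700)]:
    print(f"{label:6s} {nm} nm -> {photon_energy_ev(nm):.2f} eV")
```

Shorter wavelengths give larger energies, which is one reason ultraviolet and visible light behave so differently from long-wave infrared at the sensor.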

When a drone is hovering 400 feet in the air, the light reaching its sensor has often traveled through significant atmospheric interference. Shorter blue wavelengths scatter far more readily than longer red wavelengths, a phenomenon known as Rayleigh scattering, whose strength grows with the inverse fourth power of the wavelength. This is why the sky appears blue and why distant horizons in aerial photos often have a bluish, hazy tint. Professional drone cameras must account for these variations in wavelength to produce clear, high-contrast imagery.
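To put a rough number on the haze effect, Rayleigh scattering strength scales with 1 / wavelength^4. The sketch below compares blue and red light under that law alone; it ignores larger particles such as dust and water droplets, and the function name is a hypothetical helper.

```python
def rayleigh_relative_scattering(wavelength_nm: float, reference_nm: float = 700.0) -> float:
    """How many times more strongly wavelength_nm scatters than reference_nm.

    Uses only the 1 / wavelength^4 dependence of Rayleigh scattering.
    """
    if wavelength_nm <= 0 or reference_nm <= 0:
        raise ValueError("wavelengths must be positive")
    return (reference_nm / wavelength_nm) ** 4


# Blue (450 nm) compared with red (700 nm).
ratio = rayleigh_relative_scattering(450.0)
print(f"450 nm scatters about {ratio:.1f}x more than 700 nm")
```

Under this simplified model, blue light at 450 nm scatters close to six times more than red light at 700 nm, which is consistent with the bluish cast of distant terrain.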

How Sensors Interpret Wavelengths as Color

Standard drone cameras, such as those found on the DJI Mavic or Autel EVO series, utilize a Bayer filter mosaic over the sensor. This is a grid of red, green, and blue (RGB) filters that allow only specific wavelengths to reach individual pixels. Because the sensor itself is color-blind—only measuring the intensity of light—the wavelength filtering is what allows the onboard processor to reconstruct a full-color image.
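As a rough illustration of how filtered intensities become color, the sketch below collapses each 2x2 tile of a Bayer mosaic into a single RGB pixel. It assumes an RGGB layout and uses the simplest possible "superpixel" approach; real image processors interpolate at full resolution with far more sophisticated methods, and the function names here are hypothetical.

```python
def bayer_channel(row: int, col: int) -> str:
    """Color filter covering pixel (row, col) in an assumed RGGB mosaic."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def demosaic_superpixel(raw):
    """Collapse each 2x2 RGGB tile of raw intensities into one (R, G, B) tuple."""
    height, width = len(raw), len(raw[0])
    if height % 2 or width % 2:
        raise ValueError("mosaic dimensions must be even")
    rgb = []
    for r in range(0, height, 2):
        out_row = []
        for c in range(0, width, 2):
            red = raw[r][c]                              # top-left: red filter
            green = (raw[r][c + 1] + raw[r + 1][c]) / 2  # two green samples averaged
            blue = raw[r + 1][c + 1]                     # bottom-right: blue filter
            out_row.append((red, green, blue))
        rgb.append(out_row)
    return rgb


# Illustrative raw intensities only; each number is a brightness, not a color.
raw = [
    [200, 120, 180, 100],
    [110,  40,  90,  30],
]
print(demosaic_superpixel(raw))
```

Each raw value records only brightness; color appears once the processor knows which filter sat over which pixel.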

The efficiency with which a sensor converts incoming photons of a given wavelength into electrical signal is known as its “quantum efficiency.” High-end aerial cameras are designed to maximize this efficiency across the visible spectrum, ensuring that the subtle gradients of a landscape are preserved. “Bit depth” and “dynamic range,” by contrast, describe intensity rather than wavelength: bit depth sets how finely the camera can quantize brightness levels in each color channel, and dynamic range sets the span between the darkest and brightest signals it can record in a single exposure.

Wavelengths Beyond the Human Eye: Thermal and Multispectral Sensors

One of the most powerful aspects of modern drone technology is the ability to “see” wavelengths that are invisible to the human eye. This capability has transformed industries ranging from precision agriculture to search and rescue.

Infrared and Heat Mapping (Long-wave IR)

Thermal cameras, such as the Zenmuse H20T or the FLIR Boson integrated into various enterprise drones, do not “see” visible light at all. Instead, they detect Long-wave Infrared (LWIR) radiation, which typically falls in the 8,000 to 14,000 nm (8-14 microns) range.

Everything with a temperature above absolute zero emits infrared radiation. As an object gets hotter, it emits more radiation overall and the peak of its emission shifts toward shorter wavelengths. Thermal sensors measure the intensity of radiation in the long-wave band and translate it into a “thermogram” or heat map. In industrial drone inspections, detecting a “hot spot” on a power line means identifying a localized rise in infrared emission, which can indicate excess electrical resistance or impending failure.
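Wien's displacement law gives a quick way to see why thermal cameras target the 8 to 14 micron band: it relates an object's temperature to the wavelength at which its emission peaks. The sketch below assumes an ideal blackbody, which real surfaces only approximate, and the function name is a hypothetical helper.

```python
WIEN_B_UM_K = 2897.771955  # Wien displacement constant, micrometre-kelvins


def peak_wavelength_um(temperature_c: float) -> float:
    """Peak emission wavelength in micrometres for an ideal blackbody."""
    kelvin = temperature_c + 273.15
    if kelvin <= 0:
        raise ValueError("temperature must be above absolute zero")
    return WIEN_B_UM_K / kelvin


# Everyday scene temperatures in degrees Celsius.
for label, temp_c in [("cool roof", 20.0), ("skin", 34.0), ("overheated connector", 100.0)]:
    print(f"{label:20s} {temp_c:6.1f} C -> peak near {peak_wavelength_um(temp_c):.2f} um")
```

Objects at ordinary outdoor temperatures peak at roughly 8 to 10 microns, squarely inside the LWIR window.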

Near-Infrared (NIR) for Agricultural Analysis

In the world of “Ag-tech” drones, the focus shifts to the Near-Infrared (NIR) spectrum, roughly between 700 nm and 1,100 nm. Healthy vegetation reflects a large amount of NIR light while absorbing most of the visible red light for photosynthesis.

Multispectral drone cameras, like those on the P4 Multispectral or the MicaSense series, carry multiple sensors, each dedicated to a specific narrow band of wavelengths (e.g., Red, Green, Blue, Red Edge, and NIR). By comparing red and NIR reflectance through the Normalized Difference Vegetation Index (NDVI), calculated as (NIR - Red) / (NIR + Red), farmers can assess the health of a crop before the plants show visible signs of stress to the naked eye. This is a direct application of understanding how specific surfaces interact with different light wavelengths.
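NDVI is computed per pixel as (NIR - Red) / (NIR + Red), giving a value between -1 and 1. The following is a minimal sketch; the reflectance numbers are made up for illustration, and returning 0.0 for a pixel with no signal in either band is an assumption of this example rather than a standard.

```python
def ndvi(nir: float, red: float) -> float:
    """Normalized Difference Vegetation Index for one pixel.

    nir and red are reflectance values (0 to 1) in the NIR and red bands.
    """
    total = nir + red
    if total == 0:
        return 0.0  # assumption: treat a pixel with no signal as neutral
    return (nir - red) / total


# Illustrative reflectance pairs (nir, red); not measured data.
samples = {
    "dense canopy": (0.50, 0.08),
    "stressed crop": (0.30, 0.20),
    "bare soil": (0.25, 0.22),
}
for label, (nir, red) in samples.items():
    print(f"{label:14s} NDVI = {ndvi(nir, red):.2f}")
```

Higher values indicate strong NIR reflection combined with strong red absorption, the pattern described above for healthy vegetation.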

Optical Quality and the Management of Wavelengths

While the sensor records the light, the lens is responsible for delivering it. The way different wavelengths of light pass through glass is one of the greatest challenges in optical engineering for drones.

Combatting Chromatic Aberration in Drone Lenses

“Dispersion” is the variation of a material’s refractive index with wavelength. Because glass has a slightly higher refractive index for short wavelengths, blue light bends more than red light, so a simple lens cannot bring all colors to the same focal point.

In lower-quality drone cameras, this results in “chromatic aberration,” often seen as purple or green fringing around high-contrast edges, such as a white building against a blue sky. High-end drone optics, like the Hasselblad camera on the Mavic 3, use Extra-low Dispersion (ED) glass elements. These specialized materials are engineered to minimize the separation of wavelengths, ensuring they all land on the same spot on the sensor, resulting in the razor-sharp imagery required for professional cinematography and mapping.
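The size of the focus shift can be estimated with a simple model. The sketch below uses the Cauchy approximation n = A + B / wavelength^2 with approximate textbook coefficients for a BK7-like crown glass, and treats the lens as a single thin element whose power is proportional to (n - 1). Both the coefficients and the 24 mm design focal length are assumptions for illustration; real drone lenses combine many elements precisely to cancel this effect.

```python
# Approximate Cauchy coefficients for a BK7-like crown glass (assumed values).
CAUCHY_A = 1.5046
CAUCHY_B_UM2 = 0.00420  # square micrometres


def refractive_index(wavelength_nm: float) -> float:
    """Cauchy approximation n = A + B / wavelength^2 (wavelength in micrometres)."""
    wavelength_um = wavelength_nm / 1000.0
    return CAUCHY_A + CAUCHY_B_UM2 / wavelength_um ** 2


def focal_length_mm(wavelength_nm: float,
                    design_focal_mm: float = 24.0,
                    design_wavelength_nm: float = 550.0) -> float:
    """Focal length of a single thin lens at wavelength_nm.

    With fixed surface curvatures, 1/f is proportional to (n - 1), so the
    focal length scales by the ratio of (n - 1) at the two wavelengths.
    """
    n_design = refractive_index(design_wavelength_nm)
    n_actual = refractive_index(wavelength_nm)
    return design_focal_mm * (n_design - 1.0) / (n_actual - 1.0)


for label, nm in [("blue", 450), ("green", 550), ("red", 650)]:
    print(f"{label:5s} {nm} nm  n = {refractive_index(nm):.4f}  f = {focal_length_mm(nm):.2f} mm")
```

In this model blue light focuses slightly in front of green and red slightly behind it, which is the longitudinal separation that low-dispersion glass is meant to reduce.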

The Importance of Anti-Reflective Coatings and Filters

Light can also bounce off the surface of the lens or the sensor itself, causing lens flare or “ghosting.” To prevent this, drone lenses are treated with multi-layer anti-reflective coatings. Each layer is typically about a quarter of a wavelength thick (measured inside the coating material). Light reflected from the top and bottom of the layer then emerges half a cycle out of step and cancels through destructive interference, so less light is reflected and more passes through to the sensor.
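The interference idea can be made concrete with the simplest case: a single quarter-wave layer at normal incidence. The sketch below assumes a magnesium fluoride style coating (index about 1.38) on crown glass (index about 1.52); these indices, the 550 nm design wavelength, and the function names are illustrative assumptions, and real multi-layer stacks perform better across the whole visible band.

```python
def quarter_wave_thickness_nm(design_wavelength_nm: float, coating_index: float) -> float:
    """Physical thickness of a layer whose optical thickness is a quarter wavelength."""
    return design_wavelength_nm / (4.0 * coating_index)


def normal_reflectance(n_outside: float, n_glass: float, n_coating=None) -> float:
    """Fraction of light reflected at normal incidence.

    Without n_coating: bare surface (Fresnel reflection).
    With n_coating: a single quarter-wave layer, evaluated at its design wavelength.
    """
    if n_coating is None:
        r = (n_outside - n_glass) / (n_outside + n_glass)
    else:
        r = (n_outside * n_glass - n_coating ** 2) / (n_outside * n_glass + n_coating ** 2)
    return r * r


thickness = quarter_wave_thickness_nm(550.0, 1.38)
bare = normal_reflectance(1.0, 1.52)
coated = normal_reflectance(1.0, 1.52, 1.38)
print(f"layer thickness: {thickness:.1f} nm")
print(f"bare glass reflects {bare:.1%}, coated surface reflects {coated:.1%}")
```

Even this single layer cuts the reflection from a little over 4% to a little over 1% per surface, which adds up quickly in a lens with many glass-air interfaces.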

Furthermore, drone pilots frequently use Neutral Density (ND) filters or Polarizers. An ND filter reduces the intensity of all visible wavelengths roughly equally, which allows slower shutter speeds in bright conditions. A polarizer works by filtering out light waves that are vibrating in a specific orientation, usually light that has reflected off a non-metallic surface like water or glass. Polarization is a property of the wave’s orientation rather than its wavelength, so by blocking this polarized glare the drone can capture what is beneath the surface of the water or reduce the reflections on a windshield, significantly enhancing the visual data collected.
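How much glare a polarizer removes depends on how it is rotated. For fully polarized light passing through an ideal polarizer, Malus's law gives the transmitted fraction as cos^2 of the angle between the light's polarization and the filter axis. The sketch below assumes that ideal case; real reflections are only partially polarized, so the suppression in practice is less complete.

```python
import math


def transmitted_fraction(angle_deg: float) -> float:
    """Fraction of fully polarized light passed by an ideal polarizer (Malus's law).

    angle_deg is the angle between the light's polarization and the filter axis.
    """
    return math.cos(math.radians(angle_deg)) ** 2


# Rotating the filter from aligned (0 degrees) to crossed (90 degrees).
for angle in (0, 30, 45, 60, 90):
    print(f"{angle:3d} degrees -> {transmitted_fraction(angle):.2f} of the glare passes")
```

This is why rotating the filter ring changes how much surface reflection remains in the frame.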

Practical Applications: Enhancing Aerial Filmmaking and Industrial Inspection

Understanding the science of wavelengths allows drone operators to make more informed decisions during flight, whether they are chasing a cinematic look or gathering critical infrastructure data.

Color Science and Cinematic Dynamic Range

In aerial filmmaking, “color science” refers to how a camera manufacturer maps specific wavelengths to digital values. This is why a shot from a Sony Airpeak might look different from a shot from a DJI Inspire 3, even if they are filming the same scene. The way the sensor and image signal processor (ISP) handle “warm” wavelengths (yellows and oranges) during the “golden hour” determines the emotional impact of the footage.

Professional cinematographers often utilize LOG profiles (like D-Log or S-Log). These profiles apply a logarithmic tone curve that preserves more tonal information across the dynamic range of the sensor, allowing for more flexibility in post-production. “Highlights” and “shadows” describe regions of high and low light intensity rather than particular wavelengths; with that tonal detail retained, editors can use the color wheels to shift the color balance of each tonal range, creating a stylized or hyper-realistic look.

Remote Sensing and Atmospheric Correction

For drone pilots involved in mapping and 3D modeling, wavelengths play a crucial role in “photogrammetry.” When creating a high-resolution map, the software must align thousands of photos based on “tie points.” If the lighting changes or if atmospheric haze shifts the dominant wavelengths in the image (making it look flatter or more washed out), the software may struggle to align the images correctly.

Advanced remote sensing workflows include a radiometric calibration step, often loosely described as “atmospheric correction,” in which the pilot photographs a calibrated reflectance panel on the ground. Because the panel reflects a known percentage of light in each band, the software can scale the drone’s data to account for the specific illumination and atmospheric conditions of that day. This keeps the “reflectance” values consistent, whether the sun is high at noon or obscured by light clouds.
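The panel-based adjustment amounts to a per-band scale factor. The sketch below shows the simplest version, assuming a linear sensor with no dark offset and a single panel; the function name and the pixel values are hypothetical, and commercial processing software applies additional corrections.

```python
def to_reflectance(pixel_value: float, panel_value: float, panel_reflectance: float) -> float:
    """Convert a raw band value to reflectance using a calibrated panel.

    panel_value is the raw value measured over the panel in the same band,
    and panel_reflectance is the panel's known reflectance (0 to 1).
    """
    if panel_value <= 0:
        raise ValueError("panel_value must be positive")
    if not 0 < panel_reflectance <= 1:
        raise ValueError("panel_reflectance must be in (0, 1]")
    return pixel_value * panel_reflectance / panel_value


# Same crop imaged under bright and overcast light (illustrative raw values).
bright = to_reflectance(pixel_value=16000, panel_value=40000, panel_reflectance=0.5)
overcast = to_reflectance(pixel_value=8000, panel_value=20000, panel_reflectance=0.5)
print(bright, overcast)
```

Although the raw values differ by a factor of two between the flights, both convert to the same reflectance, which is what makes data from different days comparable.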

The Future of Wavelength Tech in Drones

As we look toward the future of drone-based imaging, the focus is shifting toward “Hyperspectral” imaging. While multispectral cameras look at 5 or 6 broad bands of wavelengths, hyperspectral sensors can see hundreds of very narrow bands. This allows drones to identify the chemical composition of objects from the air. For example, a hyperspectral drone could differentiate between different types of plastics in a landfill or identify specific mineral deposits in a quarry based on their unique “spectral signature”—the specific pattern of wavelengths they reflect and absorb.
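One common way to compare a measured spectrum against known signatures is the spectral angle: treat each spectrum as a vector and measure the angle between them, which ignores overall brightness. The sketch below uses made-up five-band signatures with placeholder material names purely for illustration; real hyperspectral libraries contain hundreds of bands per material.

```python
import math


def spectral_angle(a, b) -> float:
    """Angle in radians between two spectra treated as vectors."""
    if len(a) != len(b):
        raise ValueError("spectra must have the same number of bands")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("spectra must be non-zero")
    # Clamp to guard against floating-point values just outside [-1, 1].
    cosine = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.acos(cosine)


def best_match(measured, library) -> str:
    """Name of the library signature with the smallest spectral angle."""
    return min(library, key=lambda name: spectral_angle(measured, library[name]))


# Hypothetical reflectance signatures; the values are invented for this example.
LIBRARY = {
    "material_a": [0.10, 0.15, 0.40, 0.45, 0.20],
    "material_b": [0.30, 0.28, 0.25, 0.22, 0.20],
}
measured = [0.05, 0.08, 0.21, 0.22, 0.11]  # roughly material_a at half brightness
print(best_match(measured, LIBRARY))
```

Because the angle depends on the shape of the spectrum rather than its magnitude, a dimly lit sample can still match its signature.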

In conclusion, a light wavelength is far more than a physics definition. In the context of drones, it is the bridge between the physical world and digital insight. From the glass in the lens to the silicon in the sensor, every component of a drone’s imaging system is a tool designed to manipulate, capture, and interpret wavelengths. By mastering this science, drone professionals can push the boundaries of what is possible in both the visible and invisible worlds.