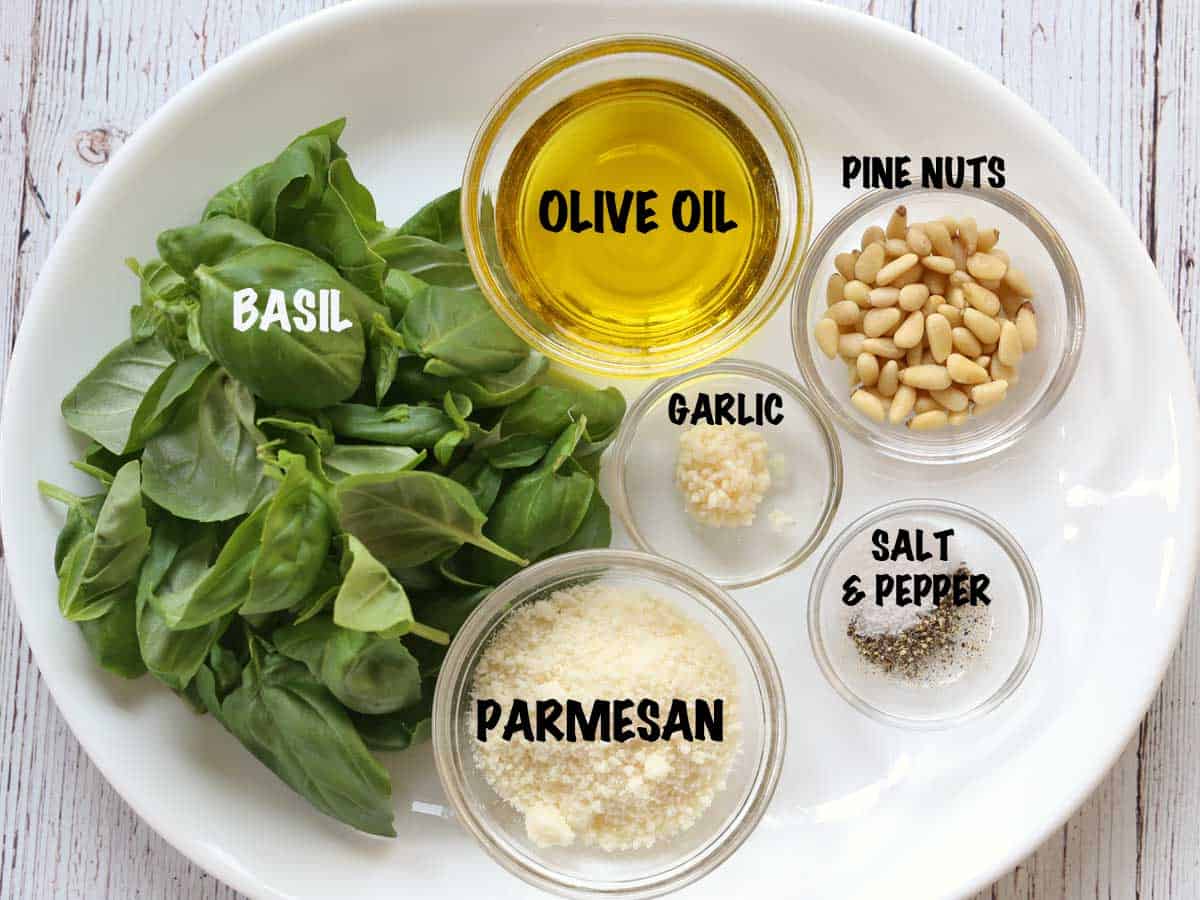

In the rapidly evolving landscape of unmanned aerial vehicles (UAVs) and autonomous systems, the term “traditional” is often used to describe the established standard of technology that allows a machine to perceive, navigate, and interact with its environment without human intervention. Just as a master chef relies on a precise “recipe” of specific elements to create a cohesive result, the field of drone tech and innovation relies on a foundational framework of high-tech ingredients. To understand what makes a modern autonomous system function, one must look at the “ingredients” of the P.E.S.T.O. framework: Perception, Environmental mapping, Sensing, Telemetry, and Operations. These five pillars represent the traditional core of state-of-the-art drone innovation, blending complex software with robust hardware to achieve the pinnacle of flight technology.

Perception and the AI Core: The Essential Base

The first and most critical ingredient in any sophisticated autonomous drone system is perception, which in modern systems is driven by Artificial Intelligence (AI) and Machine Learning (ML). For a drone to be more than a remote-controlled toy, it must be able to “see” and “understand” its surroundings in real time. This process begins with Computer Vision (CV), the field of AI concerned with interpreting images and video.

Computer Vision and Neural Networks

At the heart of a drone’s perception system are Convolutional Neural Networks (CNNs), deep learning models designed to process pixel data. Features marketed as “AI Follow Mode” or “ActiveTrack” typically rely on networks of this kind. The drone’s onboard processor analyzes video frames at high speed, often 30 to 60 frames per second, extracting shapes, colors, and patterns. Because the network has been trained on large labeled datasets, the drone can distinguish between a person, a vehicle, a tree, or a power line. This recognition is the “basil” of the recipe: the primary flavor that defines the system’s character. Without high-level perception, autonomous flight becomes a series of blind movements rather than a coordinated mission.
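
To make the idea of "processing pixel data" concrete, here is a minimal sketch of the sliding-window operation that a convolutional layer applies to an image. The patch values and the hand-written edge kernel are hypothetical; a trained CNN learns its kernel weights from data and stacks many such layers.

```python
def convolve2d(image, kernel):
    """Valid-mode 2D cross-correlation, the core operation of a CNN layer."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

# Hypothetical 4x4 grayscale patch: dark left half, bright right half.
patch = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]

# Hand-written vertical-edge kernel; a real network learns such weights.
edge_kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = convolve2d(patch, edge_kernel)
print(feature_map)
```

Every window in this small patch straddles the dark-to-bright boundary, so every output value is large and positive. A uniform patch would produce zeros instead.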

AI Follow Mode and Predictive Pathing

Modern innovation has moved beyond simple object recognition into the realm of predictive analytics. Using “Follow Mode” as a primary example, the drone does not just react to where a subject is; it predicts where the subject is going. By estimating the subject’s velocity and trajectory and accounting for nearby obstacles, the drone’s AI can adjust its flight path before a change is required. This level of autonomy depends on Edge AI: processing the data on the drone itself rather than sending it to a cloud server. Doing so removes the network round trip and keeps latency low, which is vital for high-speed tracking in complex environments like forests or urban centers.
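
As a rough illustration of prediction, the sketch below extrapolates a subject's position from its last two observed positions, assuming constant velocity. The function name and the sample numbers are hypothetical, and production trackers generally use richer motion models and filtering.

```python
def predict_position(p_prev, p_curr, dt, horizon):
    """Extrapolate a 2D position `horizon` seconds ahead at constant velocity.

    p_prev and p_curr are (x, y) positions in metres observed dt seconds apart.
    """
    vx = (p_curr[0] - p_prev[0]) / dt
    vy = (p_curr[1] - p_prev[1]) / dt
    return (p_curr[0] + vx * horizon, p_curr[1] + vy * horizon)

# Subject moved from (0, 0) to (1, 0.5) in half a second: 2 m/s east, 1 m/s north.
predicted = predict_position((0.0, 0.0), (1.0, 0.5), dt=0.5, horizon=1.0)
print(predicted)
```

The drone can then steer toward the predicted point rather than the last observed one, which is what lets it stay ahead of a moving subject.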

Environmental Mapping and Remote Sensing: Building Spatial Context

If perception is how a drone sees, environmental mapping is how it remembers and contextualizes that information. Drone mapping has evolved from simple 2D overhead imagery to complex 3D reconstructions. This ingredient provides the substance of the autonomous experience, allowing the UAV to create a digital twin of the world it inhabits.

LiDAR and Photogrammetry

The two primary technologies driving this innovation are LiDAR (Light Detection and Ranging) and Photogrammetry. LiDAR is often considered the “premium” ingredient in the drone recipe. It works by emitting rapid laser pulses and measuring the time it takes for them to bounce back from objects. This creates a “point cloud”: a highly accurate 3D map of the environment that is largely independent of ambient lighting.
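
The timing principle behind LiDAR can be sketched in a few lines. The pulse travels to the target and back, so the range is half the round-trip path. The helper names and the sample timing are hypothetical, and the conversion assumes the beam direction is known as an azimuth and elevation angle.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0  # metres per second, in vacuum

def range_from_round_trip(round_trip_s):
    """Distance to the target: the pulse covers it twice, so halve the path."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0

def to_point(range_m, azimuth_rad, elevation_rad):
    """Convert one range and beam direction into an (x, y, z) point."""
    horizontal = range_m * math.cos(elevation_rad)
    return (
        horizontal * math.cos(azimuth_rad),
        horizontal * math.sin(azimuth_rad),
        range_m * math.sin(elevation_rad),
    )

# A hypothetical return arriving 200 nanoseconds after the pulse left.
distance_m = range_from_round_trip(200e-9)  # roughly 30 m
point = to_point(distance_m, azimuth_rad=0.0, elevation_rad=0.0)
print(distance_m, point)
```

Repeating this for every pulse in a scan yields the set of points that makes up the point cloud.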

Photogrammetry, on the other hand, uses high-resolution images taken from different angles to triangulate the coordinates of points in space. While photogrammetry is more accessible and provides better visual detail (textures and colors), LiDAR offers unmatched precision through dense vegetation and in low-light scenarios. Innovations in remote sensing now allow drones to switch between these modes or fuse their data, providing a comprehensive environmental profile that informs every second of flight.
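
The simplest case of triangulation uses two images taken side by side. In the sketch below, depth follows from how far a feature shifts between the two views (its disparity). It assumes two parallel, rectified views with a known focal length in pixels and a known baseline. Real photogrammetry pipelines solve a more general problem with many overlapping images and estimated camera poses, and the numbers here are hypothetical.

```python
def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Depth of a point seen in two parallel, rectified views.

    focal_px:     focal length expressed in pixels
    baseline_m:   distance between the two camera positions, in metres
    disparity_px: horizontal shift of the point between the two images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px

# Hypothetical values: 800 px focal length, 0.5 m baseline, 20 px shift.
depth_m = depth_from_disparity(focal_px=800.0, baseline_m=0.5, disparity_px=20.0)
print(depth_m)
```

Nearby points shift more between views than distant ones, so halving the disparity doubles the estimated depth.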

Real-Time SLAM (Simultaneous Localization and Mapping)

The most impressive technological leap in mapping is SLAM. Simultaneous Localization and Mapping is the algorithmic process that allows a drone to map an unknown environment while simultaneously keeping track of its own location within that map. This is essential for autonomous flight in GPS-denied environments, such as inside warehouses, tunnels, or under dense forest canopies. By using a combination of visual odometry and inertial data, a drone can “paint” its surroundings digitally, ensuring it can find its way back to its starting point even if its external navigation signals are severed.
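
A full SLAM system is well beyond a short example, but the localization half can be hinted at with dead reckoning: accumulating small motion estimates into a pose. The sketch below assumes a flat 2D world and noise-free increments, both simplifications. In practice these estimates drift, and SLAM corrects that drift by recognizing previously mapped features.

```python
import math

def integrate_odometry(pose, steps):
    """Accumulate (distance, turn) increments into an (x, y, heading) pose.

    Each step moves `distance_m` along the current heading, then rotates
    the heading by `turn_rad`.
    """
    x, y, heading = pose
    for distance_m, turn_rad in steps:
        x += distance_m * math.cos(heading)
        y += distance_m * math.sin(heading)
        heading += turn_rad
    return (x, y, heading)

# Hypothetical flight: four 1 m legs with a 90 degree left turn after each.
square_loop = [(1.0, math.pi / 2)] * 4
final_pose = integrate_odometry((0.0, 0.0, 0.0), square_loop)
print(final_pose)
```

With perfect increments the drone ends up back at its starting point, apart from tiny floating-point error. With real sensor noise the loop would not close exactly, which is the gap SLAM is designed to fill.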

Sensor Fusion: The Sharp Reality of Obstacle Avoidance

A “traditional” autonomous setup requires more than just cameras; it requires a suite of sensors that act as the drone’s nervous system. Sensor fusion is the process of combining data from multiple sensors (ultrasonic, infrared, monocular vision, and stereo vision) to produce an estimate that is more accurate than any single sensor could provide on its own.
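
One common way to see why fusion beats a single sensor is the inverse-variance weighted average. The sketch below assumes each sensor reports an independent, unbiased distance with a known noise variance. The readings are hypothetical, and flight controllers typically use more elaborate filters.

```python
def fuse(measurements):
    """Inverse-variance weighted mean of (value, variance) pairs.

    Returns the fused value and its variance. Less noisy sensors get
    proportionally more weight.
    """
    weights = [1.0 / variance for _, variance in measurements]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, measurements)) / total
    return value, 1.0 / total

# Hypothetical readings: a noisy ultrasonic range and a cleaner stereo range.
fused_m, fused_var = fuse([(10.0, 4.0), (12.0, 1.0)])
print(fused_m, fused_var)
```

The fused variance comes out lower than that of the better sensor alone, which is the sense in which the combined estimate is more accurate than either input.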

The Multi-Directional Array

Innovation in obstacle avoidance has led to the development of omnidirectional sensing. Earlier drones often had only forward-facing sensors, but modern autonomous systems use an array of sensors covering all six sides of the aircraft.

- Vision Sensors: Dual-camera setups that provide depth perception, similar to human eyes.

- Ultrasonic Sensors: Used primarily for altitude hold and ground detection, especially over reflective surfaces like water where vision sensors might fail.

- Infrared (ToF) Sensors: Time-of-Flight sensors measure the time it takes for an infrared light signal to travel to an object and back, providing highly accurate distance measurements at short ranges.

By fusing this data, the drone creates a “virtual bubble” around itself. If an object enters this bubble, the drone’s autonomous logic can decide whether to brake, hover, or navigate around the obstacle using pre-calculated bypass algorithms.
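
The “virtual bubble” logic can be sketched as a conservative check on the nearest valid reading in a given direction. The radii and action names below are hypothetical placeholders, and treating missing data as a reason to hover is an assumption rather than a documented behavior of any particular drone.

```python
def nearest_obstacle(readings):
    """Closest valid distance among several sensors; None if none reported."""
    valid = [r for r in readings if r is not None]
    return min(valid) if valid else None

def avoidance_action(distance_m, brake_radius_m=2.0, caution_radius_m=6.0):
    """Pick an action from the distance to the nearest obstacle."""
    if distance_m is None:
        return "hover"      # no usable data: hold position
    if distance_m <= brake_radius_m:
        return "brake"      # obstacle inside the inner bubble
    if distance_m <= caution_radius_m:
        return "reroute"    # obstacle inside the outer bubble
    return "continue"

# Hypothetical forward readings: stereo vision, ultrasonic (dropout), ToF.
forward = [5.2, None, 4.8]
action = avoidance_action(nearest_obstacle(forward))
print(action)
```

Taking the minimum is deliberately cautious: if any sensor sees something close, the drone reacts, even when the other sensors disagree.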

Redundant Systems and Failure Mitigation

In high-stakes industrial or mapping missions, redundancy is a key technological ingredient. This involves having dual IMUs (Inertial Measurement Units), dual compasses, and dual barometers. If one sensor provides an outlier reading—perhaps due to electromagnetic interference from a power line—the drone’s software can identify the discrepancy and rely on the secondary sensor. This focus on reliability is what separates experimental tech from the industrial-grade innovations currently reshaping fields like search and rescue and infrastructure inspection.
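
With only two sensors, a disagreement alone does not reveal which one is wrong, so some reference is needed. The sketch below assumes the flight software has a predicted value available, for example from its motion model, and trusts whichever sensor is closer to it. The function name and threshold are hypothetical, and compass wrap-around at 360 degrees is ignored for brevity.

```python
def select_reading(primary, secondary, predicted, max_disagreement):
    """Choose between two redundant sensor readings.

    If the sensors agree within `max_disagreement`, keep the primary.
    Otherwise trust the one closer to the model's predicted value.
    """
    if abs(primary - secondary) <= max_disagreement:
        return primary
    if abs(primary - predicted) <= abs(secondary - predicted):
        return primary
    return secondary

# Normal case: both compasses report a heading near 90 degrees.
normal = select_reading(90.0, 91.0, predicted=90.5, max_disagreement=5.0)

# Interference case: the primary compass jumps to 140 degrees.
disturbed = select_reading(140.0, 91.0, predicted=90.5, max_disagreement=5.0)
print(normal, disturbed)
```

In the second case the primary reading is rejected because it is far from both the secondary sensor and the prediction.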

Telemetry and Connectivity: The Flow of Information

No autonomous system is an island. The “olive oil” that keeps the entire drone ecosystem moving is the flow of data through telemetry and high-bandwidth connectivity. As drones become more autonomous, the volume of data they generate grows sharply, necessitating breakthroughs in how that data is transmitted and processed.

5G Integration and Low-Latency Transmission

The integration of 5G technology is perhaps the most significant recent innovation in drone connectivity. Direct radio links on the 2.4 GHz and 5.8 GHz bands are limited by line-of-sight and distance. Cellular networks such as 5G can support Beyond Visual Line of Sight (BVLOS) operations, where regulations permit, because the drone stays connected wherever there is coverage. This allows a drone to be controlled or monitored from far beyond radio range, with latency that depends on the network path between the operator and the aircraft.

Furthermore, 5G supports the high data throughput required for real-time remote sensing. Instead of waiting for a drone to land to download a 3D map, 5G allows the drone to stream processed point clouds, 4K video, or thermal feeds directly to a command center. This connectivity ensures that the “ingredients” of the mission are shared instantly with the people or systems that need them.

MAVLink and Standardized Protocols

Technological innovation also happens at the protocol level. MAVLink (Micro Air Vehicle Link) has become the “traditional” language of communication between the flight controller and the ground station. By standardizing how telemetry data (battery life, GPS coordinates, pitch, roll, and yaw) is packaged, developers can create cross-platform applications that work across different drone manufacturers. This interoperability is a catalyst for innovation, allowing third-party apps to add specialized “flavors” to the drone’s functionality, such as automated crop spraying or precise magnetic field mapping.
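
To show what “standardizing how telemetry is packaged” means in practice, here is a simplified sketch of a fixed binary layout with a checksum. It is not the actual MAVLink wire format: the field layout, the `Telemetry` type, and the one-byte checksum are all hypothetical, chosen only to illustrate why an agreed layout lets any sender and receiver interoperate.

```python
import struct
from typing import NamedTuple

class Telemetry(NamedTuple):
    battery_pct: int   # 0 to 100
    lat_e7: int        # latitude in degrees * 1e7
    lon_e7: int        # longitude in degrees * 1e7
    roll: float        # radians
    pitch: float       # radians
    yaw: float         # radians

# Little-endian: uint8, int32, int32, then three float32 values (21 bytes).
_LAYOUT = struct.Struct("<Biifff")

def encode(sample):
    """Pack a sample and append a one-byte additive checksum."""
    payload = _LAYOUT.pack(*sample)
    return payload + bytes([sum(payload) % 256])

def decode(frame):
    """Verify the checksum and unpack the fields."""
    payload, checksum = frame[:-1], frame[-1]
    if sum(payload) % 256 != checksum:
        raise ValueError("checksum mismatch")
    return Telemetry(*_LAYOUT.unpack(payload))

sample = Telemetry(87, 473977420, 85455940, 0.25, -0.5, 1.5)
frame = encode(sample)
print(len(frame), decode(frame))
```

Because both sides agree on the byte layout, a ground station written by one vendor can decode frames produced by another. That same principle is what a shared protocol such as MAVLink provides across manufacturers.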

Autonomous Operations: The Binding Agent

The final ingredient in the recipe of drone tech is the operational logic that binds everything together. This is where AI, mapping, sensing, and telemetry converge into a purposeful action. Autonomous operations represent the shift from the drone as a “vehicle” to the drone as a “worker.”

Pathfinding Algorithms and Mission Planning

At the center of autonomous operations are pathfinding algorithms such as A* (A-star) and Dijkstra’s algorithm. These graph-search algorithms find the lowest-cost path from point A to point B, where the cost can reflect variables like battery life and wind speed, and “no-fly” zones are excluded from the search. In mapping and remote sensing, this manifests as “Waypoints,” where a user plots a complex 3D grid and the drone executes the flight with centimeter-level precision using RTK (Real-Time Kinematic) positioning.
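
A compact sketch of A* on a 2D grid shows how no-fly zones shape the route. It assumes four-directional moves with unit cost and a Manhattan-distance heuristic. The grid and cell encoding are hypothetical, and a real planner would work in 3D with costs for wind and battery use.

```python
import heapq

def a_star(grid, start, goal):
    """Shortest 4-connected path on a grid; cells equal to 1 are no-fly zones.

    Returns a list of (row, col) cells from start to goal, or None.
    """
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance never overestimates on a unit-cost grid.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]
    came_from = {}
    best_cost = {start: 0}
    while open_heap:
        _, cost, current = heapq.heappop(open_heap)
        if current == goal:
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]
        if cost > best_cost[current]:
            continue  # stale heap entry, a cheaper route was already found
        r, c = current
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = cost + 1
                if new_cost < best_cost.get(nxt, float("inf")):
                    best_cost[nxt] = new_cost
                    came_from[nxt] = current
                    heapq.heappush(
                        open_heap, (new_cost + heuristic(nxt), new_cost, nxt)
                    )
    return None

# Hypothetical map: a no-fly strip with a single gap on the right.
grid = [
    [0, 0, 0, 0],
    [1, 1, 1, 0],
    [0, 0, 0, 0],
]
path = a_star(grid, (0, 0), (2, 0))
print(path)
```

The goal sits directly below the start, but the planner has to detour through the gap at the far right because the restricted cells are never expanded.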

The Shift Toward Full Level 5 Autonomy

Innovation is currently pushing toward what is often called “Level 5” autonomy, a label borrowed from the SAE scale for driving automation, in which the drone requires no human intervention from takeoff to landing. This includes “Drone-in-a-Box” solutions, where a UAV lives in a weather-sealed docking station, exits automatically to perform a scheduled inspection or security patrol, and returns to charge, all governed by AI. This level of operation is the ultimate goal of the “traditional pesto” of drone tech: a blend of ingredients that creates a self-sustaining, intelligent system capable of transforming industries.

As we look toward the future, the “ingredients” of this technology will continue to be refined. AI will become more intuitive, sensors will become smaller and more powerful, and connectivity will become more ubiquitous. Yet the core recipe remains: a balance of perception, mapping, sensing, data flow, and operational logic. For any organization or enthusiast looking to leverage the power of modern UAVs, understanding these essential components is the first step in mastering the art and science of flight innovation.