In a courtroom, a judge who deliberately disregards the evidence presented can face accusations of judicial misconduct or bias, and the case may even end in a mistrial, undermining the basic principles of justice. Such an act signals a failure of the system to uphold fairness and truth, and it can produce incorrect or unjust outcomes. When we carry this legal question into technology, however, and particularly into autonomous systems, artificial intelligence (AI), and data-driven operations, the idea of a “judge ignoring evidence” takes on a very different, yet equally critical, meaning.

In technology, the “judge” is often an AI algorithm, an autonomous decision-making system, or a data processing pipeline. The “evidence” is the stream of sensor data, environmental inputs, historical patterns, and real-time observations that these systems are built to collect, analyze, and interpret. When such a system effectively “ignores” this evidence, the cause lies in its design, implementation, or operating parameters rather than in human intent. The resulting miscalculations and erroneous decisions can have catastrophic consequences in high-stakes applications such as autonomous flight, remote sensing, and intelligent automation. This article examines what “ignoring evidence” means in a technological sense, how such failures can be classified, what they lead to, and the strategies being developed to protect data integrity and system reliability in the age of intelligent machines.

The Digital ‘Judge’: How Autonomous Systems Process Data

The foundation of any intelligent or autonomous system is its ability to perceive its environment, gather information, and make decisions based on that input. This is where the “digital judge” comes in: a combination of hardware and software designed to perform specific human perceptual and decision-making tasks, and in narrow domains sometimes to exceed human performance. These systems receive a continuous flood of “evidence,” which they must filter, process, and weigh to reach an “informed judgment.”

Sensor Fusion and Data Integrity

At the heart of an autonomous system’s perception is sensor fusion. Modern drones, autonomous vehicles, and remote sensing platforms carry an array of sensors: LiDAR, radar, ultrasonic rangefinders, optical cameras, thermal imagers, GPS, IMUs (Inertial Measurement Units), and more. Each sensor provides a different slice of “evidence” about the environment, such as distance, velocity, visual appearance, temperature, position, or orientation. Sensor fusion combines these disparate data streams into a single, coherent model of the world that is more accurate than any one sensor could provide on its own. In an autonomous drone, for instance, GPS might provide a general location, the IMUs track short-term changes in motion and orientation, and optical cameras identify specific landing zones or obstacles.
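A common way to combine two noisy measurements of the same quantity is inverse-variance weighting. For a static state, this is also what a one-dimensional Kalman filter update reduces to. The sketch below is a minimal illustration under these assumptions:

- the two position estimates are independent and unbiased;
- their variances are known;
- the `Estimate` type, the `fuse` function, and the numbers are hypothetical and are not taken from any particular drone stack.

```python
from dataclasses import dataclass


@dataclass
class Estimate:
    """A one-dimensional estimate (e.g. position in metres) and its variance."""
    value: float
    variance: float


def fuse(a: Estimate, b: Estimate) -> Estimate:
    """Inverse-variance weighted fusion of two independent estimates.

    The less noisy input gets proportionally more weight, and the fused
    variance is smaller than either input variance.
    """
    if a.variance <= 0 or b.variance <= 0:
        raise ValueError("variances must be positive")
    wa, wb = 1.0 / a.variance, 1.0 / b.variance
    value = (wa * a.value + wb * b.value) / (wa + wb)
    return Estimate(value=value, variance=1.0 / (wa + wb))


# Hypothetical readings: GPS is coarse (~3 m std dev), visual odometry finer (~1 m).
gps = Estimate(value=102.0, variance=9.0)
vision = Estimate(value=100.0, variance=1.0)
fused = fuse(gps, vision)
print(f"{fused.value:.2f} m, variance {fused.variance:.2f}")  # 100.20 m, variance 0.90
```

The fused estimate leans toward the vision reading. If the GPS variance were understated, the fusion would trust a bad GPS fix too much, which is one concrete way a fusion stage can end up effectively ignoring better evidence.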

The integrity of this data is paramount. If a sensor malfunctions, provides corrupted data, or if the fusion algorithm misinterprets inputs, it’s akin to a judge receiving faulty or misleading evidence. Ensuring data integrity involves rigorous calibration, noise reduction techniques, and validation checks to confirm that the “evidence” being considered is accurate and reliable. Without this foundational integrity, any subsequent decision-making is inherently compromised.
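Validation checks can be as simple as rejecting three kinds of reading:

- non-finite values;
- values outside a sensor's physical range;
- readings too old to describe the present.

The sketch below assumes a hypothetical `Reading` record and illustrative limits (a 0.1 to 100 m range window and a 0.2 s age limit). Real limits would come from each sensor's datasheet and the system's timing budget. The function returns the list of problems rather than silently dropping the reading, so a rejection can itself be recorded.

```python
import math
from dataclasses import dataclass


@dataclass
class Reading:
    sensor_id: str
    value: float      # e.g. range to the nearest obstacle, in metres
    timestamp: float  # seconds, on the same clock as `now`


def validate(reading: Reading, now: float, lo: float, hi: float,
             max_age: float) -> list:
    """Return a list of problems with `reading`; an empty list means it passed."""
    problems = []
    if not math.isfinite(reading.value):
        problems.append("non-finite value")
    elif not lo <= reading.value <= hi:
        problems.append("out of physical range")
    if now - reading.timestamp > max_age:
        problems.append("stale")
    return problems


# Hypothetical LiDAR limits: 0.1 m to 100 m; readings older than 0.2 s are stale.
limits = dict(lo=0.1, hi=100.0, max_age=0.2)
print(validate(Reading("lidar", 12.5, 10.0), now=10.05, **limits))         # []
print(validate(Reading("lidar", -3.0, 9.5), now=10.0, **limits))           # ['out of physical range', 'stale']
print(validate(Reading("lidar", float("nan"), 10.0), now=10.0, **limits))  # ['non-finite value']
```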

The Role of Algorithms in Decision-Making

Once data is collected and fused, algorithms act as the “reasoning engine” of the digital judge. These sets of instructions define how the system interprets patterns, predicts outcomes, and makes choices. Machine learning algorithms, particularly deep learning networks, are trained on large datasets to recognize objects, classify situations, and even anticipate actions. For example, an AI follow-mode algorithm on a drone learns to distinguish its target from background clutter and to predict the target’s trajectory so the drone can follow it smoothly.

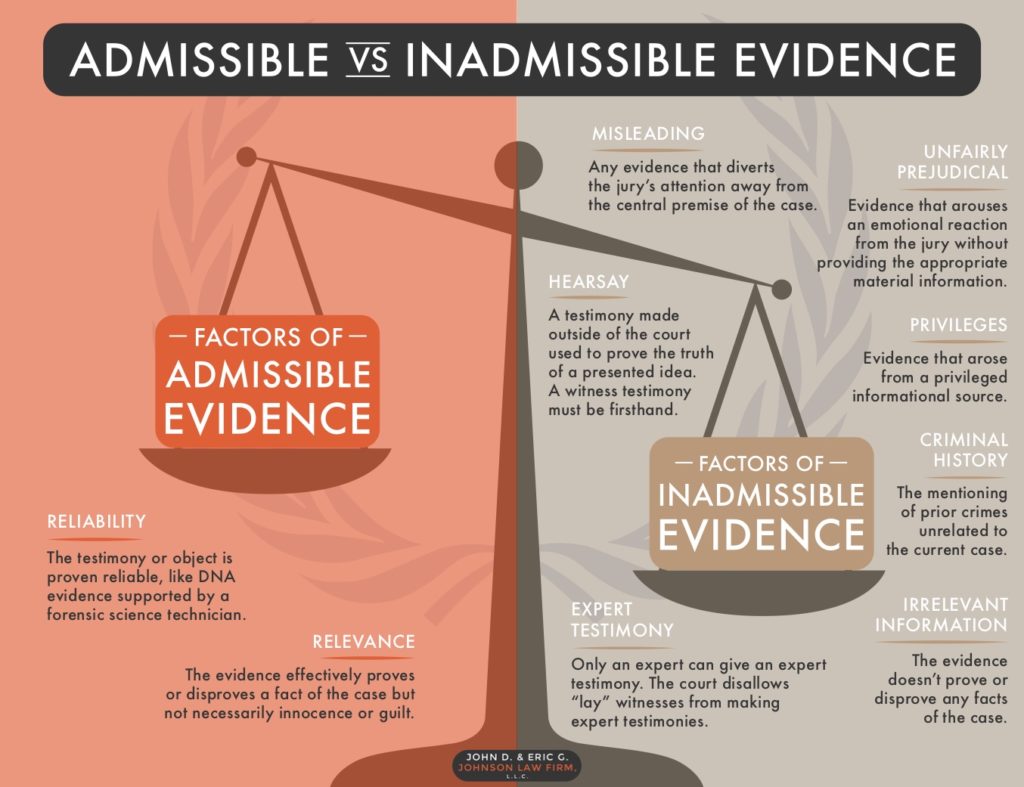

These algorithms operate from a “rulebook” that is sometimes explicitly coded and sometimes learned implicitly through training. They weigh different pieces of “evidence” according to their programmed priorities and confidence levels. A critical question is how they handle conflicting evidence or uncertainty. A well-designed algorithm has mechanisms to flag discrepancies, request more data, or fall back to safer, pre-programmed responses when faced with ambiguity. When an algorithm “ignores evidence,” the cause is often a flaw in its programming, in its training data, or in its handling of edge cases, where its learned rules do not apply or critical data is overlooked.
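The sketch below shows this kind of conflict handling for a single go/no-go obstacle decision. It assumes each sensor reports whether it sees an obstacle, plus a confidence in [0, 1]. The `Action` values, the `decide` function, and the 0.7 confidence threshold are hypothetical.

The key design choice is asymmetry:

- A confident obstacle report is never outvoted by sensors that saw nothing.
- Weak or conflicting evidence triggers a request for more data.
- An empty input is treated as a reason to fall back, not as an all-clear.

```python
from enum import Enum


class Action(Enum):
    PROCEED = "proceed"
    REQUEST_MORE_DATA = "request_more_data"
    SAFE_FALLBACK = "safe_fallback"  # e.g. hover or hold position


def decide(reports: dict, min_confidence: float = 0.7) -> Action:
    """reports maps sensor name -> (obstacle_detected, confidence in [0, 1])."""
    if not reports:
        # No evidence at all is not the same as evidence of a clear path.
        return Action.SAFE_FALLBACK
    obstacle = [conf for hit, conf in reports.values() if hit]
    clear = [conf for hit, conf in reports.values() if not hit]
    if obstacle:
        # A confident obstacle report wins over any number of "clear" reports.
        if max(obstacle) >= min_confidence:
            return Action.SAFE_FALLBACK
        # Weak obstacle evidence is ambiguous: ask for more rather than discard it.
        return Action.REQUEST_MORE_DATA
    if min(clear) < min_confidence:
        return Action.REQUEST_MORE_DATA
    return Action.PROCEED


print(decide({"lidar": (False, 0.9), "camera": (False, 0.95)}))  # Action.PROCEED
print(decide({"lidar": (True, 0.85), "camera": (False, 0.95)}))  # Action.SAFE_FALLBACK
print(decide({"lidar": (True, 0.4), "camera": (False, 0.9)}))    # Action.REQUEST_MORE_DATA
```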

When the System ‘Ignores’ Evidence: Classification of Failures

Describing a technological system as “ignoring evidence” does not imply willful negligence; it is shorthand for a set of critical failure modes. These failures take several forms, each posing its own challenges to the reliability and safety of advanced technologies.

Data Omission and Incomplete Input

One primary way a system can “ignore evidence” is through the simple omission of critical data. This isn’t an active rejection but a passive failure to acquire or integrate necessary information.

- Sensor Blind Spots: Physical limitations of sensors can create blind spots where critical objects or environmental conditions are simply not detected. For instance, an optical camera might struggle in dense fog, or a radar system might fail to detect certain materials. If the system relies solely on that sensor, it’s effectively operating without that piece of “evidence.”

- Communication Failures: In distributed systems, data transfer between sensors, processing units, and control modules can be interrupted. A lost data packet from a critical sensor might mean the system never receives crucial information about an impending obstacle.

- Filtering Errors: Sometimes, data is intentionally filtered to reduce noise or highlight relevant features. An overly aggressive filter might inadvertently discard vital “evidence” that, in a specific context, was actually highly relevant. For example, filtering out small, fast-moving objects might be efficient but could lead to ignoring a small, rapidly approaching drone.
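The filtering pitfall can be made concrete. The sketch below compares a size-only filter with one that also considers closing speed. The `Track` record, the 0.5 m size cut-off, and the 5 m/s closing-speed threshold are hypothetical. They are chosen only to show how a reasonable-looking filter can discard exactly the object that matters.

```python
from dataclasses import dataclass


@dataclass
class Track:
    name: str
    size_m: float            # estimated object size, metres
    closing_speed_ms: float  # metres per second; positive means approaching


def naive_filter(tracks, min_size=0.5):
    """Drop every object smaller than `min_size`, regardless of its motion."""
    return [t for t in tracks if t.size_m >= min_size]


def context_aware_filter(tracks, min_size=0.5, max_closing_speed=5.0):
    """Drop small objects only when they are not approaching quickly."""
    return [t for t in tracks
            if t.size_m >= min_size or t.closing_speed_ms > max_closing_speed]


tracks = [
    Track("leaf", 0.05, 0.3),
    Track("bird", 0.3, 2.0),
    Track("small drone", 0.35, 15.0),
    Track("building", 30.0, 0.0),
]
print([t.name for t in naive_filter(tracks)])          # ['building']
print([t.name for t in context_aware_filter(tracks)])  # ['small drone', 'building']
```

Neither filter is correct in general. The point is that a filter's discard rule is itself a decision about which evidence to ignore, and it should be tested against the scenarios it is meant to protect.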

Algorithmic Bias and Misinterpretation

Even when all data is present, the “digital judge” can still effectively “ignore” evidence if its algorithms are biased or fundamentally misinterpret the input.

- Training Data Bias: AI models learn from the data they are trained on. If this data is incomplete, unrepresentative, or contains biases, the algorithm will inherit and perpetuate these biases. For instance, an object recognition system trained predominantly on clear weather conditions might “ignore” critical visual cues in rain or snow, misidentifying objects or failing to detect them entirely. The system isn’t ignoring the current visual input, but rather misinterpreting it based on skewed learned patterns.

- Feature Overweighting/Underweighting: Algorithms might be programmed to prioritize certain types of evidence over others, or through their learning process, might implicitly assign undue weight to certain features. This can lead to situations where a less impactful piece of evidence is prioritized over a more critical one, effectively sidelining the latter. For example, an autonomous system might prioritize GPS coordinates over visual confirmation in a cluttered environment, leading it astray if GPS signals are momentarily inaccurate.

- Lack of Contextual Understanding: Current AI systems often excel at pattern recognition but struggle with nuanced contextual understanding. A system might “see” all the data (evidence) but fail to grasp the deeper implications or specific real-world context, leading it to an illogical conclusion, akin to a human judge failing to understand the circumstances surrounding an incident.
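Training-data bias of the kind described above is easiest to catch before training, by checking how well each operating condition is represented. The sketch below is one simple coverage check. It assumes each training sample carries a hypothetical condition label such as `"rain"` or `"fog"`, and the 10% minimum share is arbitrary. Conditions the system is expected to face but that never appear in the data are reported with an explicit zero share. Good coverage does not prove a model will behave well in a condition, but poor coverage is a strong hint that it may not.

```python
from collections import Counter


def coverage_report(condition_labels, expected_conditions=(), min_share=0.1):
    """Return each condition's share of the dataset and the sorted list of
    conditions whose share is below `min_share`."""
    counts = Counter(condition_labels)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("empty dataset")
    # Expected conditions that never occur get an explicit zero share
    # (Counter returns 0 for keys it has not seen).
    all_conditions = set(counts) | set(expected_conditions)
    shares = {c: counts[c] / total for c in all_conditions}
    underrepresented = sorted(c for c, s in shares.items() if s < min_share)
    return shares, underrepresented


# Hypothetical label distribution for an object-detection training set.
labels = ["clear"] * 900 + ["rain"] * 70 + ["snow"] * 20 + ["fog"] * 10
shares, weak = coverage_report(labels, expected_conditions=["dust"])
print(weak)  # ['dust', 'fog', 'rain', 'snow']
```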

System Overrides and Human Error

Not every such failure originates in the algorithm itself. Human actions, software defects, and hardware faults can also cause a system to “ignore evidence.”

- Human Override: In many semi-autonomous systems, human operators can override automated decisions. If an operator ignores system warnings or takes manual control based on incomplete information or overconfidence, it can lead to the system’s “evidence” being effectively disregarded.

- Software Glitches and Bugs: Unexpected software bugs can cause parts of the system to freeze, miscalculate, or simply fail to process certain inputs, leading to crucial evidence being unintentionally overlooked.

- Hardware Malfunctions: Beyond sensors, processing units themselves can malfunction, leading to computational errors or a failure to execute the algorithms that are supposed to process the incoming evidence.
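A frozen or crashed module is dangerous because it fails silently: downstream code keeps using the last value it received. One common mitigation is a heartbeat watchdog, sketched minimally below. The module names and timeouts are hypothetical. `now` can be passed explicitly so the logic can be tested without a real clock.

```python
import time
from typing import Dict, List, Optional


class Watchdog:
    """Track heartbeats from processing modules and report the ones that
    have been silent for longer than their allowed timeout (seconds)."""

    def __init__(self, timeouts: Dict[str, float]):
        self.timeouts = timeouts
        self.last_seen: Dict[str, float] = {}

    def heartbeat(self, module: str, now: Optional[float] = None) -> None:
        self.last_seen[module] = time.monotonic() if now is None else now

    def stalled(self, now: Optional[float] = None) -> List[str]:
        now = time.monotonic() if now is None else now
        stalled = []
        for module, timeout in self.timeouts.items():
            last = self.last_seen.get(module)
            # A module that has never reported counts as stalled as well.
            if last is None or now - last > timeout:
                stalled.append(module)
        return stalled


wd = Watchdog({"lidar_driver": 0.1, "planner": 0.5})
wd.heartbeat("lidar_driver", now=0.0)
wd.heartbeat("planner", now=0.0)
wd.heartbeat("lidar_driver", now=0.3)
print(wd.stalled(now=0.35))  # []
print(wd.stalled(now=0.45))  # ['lidar_driver']
```

The response to a stalled module (switching to a backup, degrading gracefully, or landing) is a separate policy decision. The watchdog only ensures that the silence is noticed.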

Consequences of ‘Ignored Evidence’ in Tech Applications

The ramifications of a technological system “ignoring evidence” extend far beyond theoretical discussions of algorithmic integrity. In real-world applications within the Tech & Innovation domain, these failures can have severe practical, safety, and ethical consequences.

Safety Implications in Autonomous Flight

In autonomous flight, particularly with drones (UAVs) and future air taxis, ignoring evidence can be catastrophic.

- Collision Risks: If an obstacle avoidance system “ignores” LiDAR data indicating a tree, or fails to process visual evidence of a power line because of an algorithmic misinterpretation or a sensor blind spot, the result could be a collision, property damage, or even injury.

- Loss of Control: An autonomous drone’s stabilization system might “ignore” IMU data showing a sudden attitude disturbance caused by a wind gust, leading to instability and a crash. Similarly, an AI-powered delivery drone might misinterpret landing zone evidence in poor lighting, leading to an unsafe or damaging descent.

- Navigation Errors: For drone mapping and remote sensing missions, if a GPS receiver provides inaccurate data and other sensors (like visual odometry) are “ignored” or inadequately fused, the drone could deviate from its planned flight path, enter restricted airspace, or even get lost.

Inaccurate Mapping and Remote Sensing Outcomes

Remote sensing and mapping applications heavily rely on the meticulous collection and interpretation of data. When “evidence” is ignored, the resulting outputs can be severely compromised.

- Flawed Datasets: If a drone performing agricultural mapping “ignores” spectral evidence indicating stressed crops due to an issue with its multispectral sensor or processing pipeline, farmers could miss critical intervention opportunities, leading to yield losses.

- Incorrect Environmental Assessments: Environmental monitoring via drones often involves identifying pollution, deforestation, or wildlife populations. If thermal imaging “evidence” of illegal dumping or specific animal signatures is overlooked, critical environmental damage might go unnoticed and unaddressed.

- Compromised Infrastructure Inspections: Drones inspecting bridges, pipelines, or wind turbines collect visual and thermal evidence of structural integrity. If an AI analysis system “ignores” subtle cracks or heat anomalies, critical structural failures could be missed, leading to potential disasters.
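The sketch below is a deliberately simple illustration of how an analysis pipeline might flag thermal anomalies. It marks grid cells more than `k` standard deviations above the image mean. The 5 × 5 grid, the temperatures, and `k = 3` are hypothetical.

A single global threshold like this is crude. A small crack or a faint hot spot in a large, varied image can stay below it, which is exactly how subtle evidence gets “ignored”. A real inspection pipeline would likely need local or learned baselines.

```python
import statistics


def hotspots(temps, k=3.0):
    """Return (row, col) of cells more than k population standard deviations
    above the mean temperature of the whole grid."""
    flat = [t for row in temps for t in row]
    mean = statistics.fmean(flat)
    sd = statistics.pstdev(flat)
    if sd == 0:
        return []  # perfectly uniform image: nothing stands out
    return [(r, c)
            for r, row in enumerate(temps)
            for c, t in enumerate(row)
            if (t - mean) / sd > k]


# Hypothetical 5 x 5 thermal image (deg C) with one hot cell.
grid = [[20.0] * 5 for _ in range(5)]
grid[2][3] = 45.0
print(hotspots(grid))  # [(2, 3)]
```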

Ethical Dilemmas in AI Decision-Making

Beyond immediate operational failures, systems “ignoring evidence” introduce significant ethical challenges, especially as AI permeates more aspects of daily life.

- Bias Reinforcement: If an AI system used in hiring or loan applications “ignores” evidence of an applicant’s qualifications due to biases learned from historical data, it perpetuates discrimination and denies individuals fair opportunities.

- Lack of Accountability: When an autonomous system makes a flawed decision because it effectively “ignored” crucial evidence, determining accountability becomes complex. Is it the fault of the programmer, the sensor manufacturer, the training data provider, or the operator?

- Erosion of Trust: A persistent failure of autonomous systems to reliably process and act upon “evidence” erodes public trust in these technologies, hindering their adoption and preventing society from benefiting from their potential.

Strategies for Ensuring Data-Driven Integrity

Preventing systems from “ignoring evidence” is a cornerstone of responsible technology development. It requires a multi-faceted approach, integrating robust engineering practices, advanced algorithmic design, and stringent validation protocols.

Redundancy and Cross-Verification

One of the most effective strategies is to build redundancy into sensor arrays and data processing.

- Multiple Sensors: Employing diverse types of sensors to gather the same kind of “evidence” (e.g., LiDAR, radar, and cameras for obstacle detection). If one sensor fails or provides anomalous data, others can cross-verify or take over, ensuring that critical evidence is not entirely lost or ignored.

- Data Fusion Algorithms with Anomaly Detection: Advanced sensor fusion techniques are designed not just to combine data but also to detect discrepancies. If different sensors provide conflicting “evidence,” the system should flag the anomaly, attempt to resolve it using statistical methods, or escalate the issue to a human operator rather than simply ignoring the conflicting input.

- Software Redundancy: Running multiple instances of critical algorithms or using diverse algorithms for the same task. If one algorithm produces an unexpected result, the output of others can serve as a cross-check.
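Cross-verification among three or more redundant sources is often done by majority or median voting. The sketch below median-votes three hypothetical altitude sources. Rather than silently discarding the odd one out, it returns that source's name so the disagreement can be logged or escalated. The sensor names and the 2 m tolerance are illustrative assumptions.

Median voting tolerates one faulty source out of three. It does not tolerate two sources that fail in the same way, which is one reason for using diverse sensor types.

```python
import statistics


def vote(readings: dict, tolerance: float):
    """Median-vote across redundant sources measuring the same quantity.

    Returns the voted value and the sorted names of sources that disagree
    with it by more than `tolerance`, so they can be flagged, not dropped.
    """
    if len(readings) < 3:
        raise ValueError("median voting needs at least three sources")
    voted = statistics.median(readings.values())
    outliers = sorted(name for name, v in readings.items()
                      if abs(v - voted) > tolerance)
    return voted, outliers


value, suspects = vote({"baro": 120.4, "gps": 135.0, "lidar_alt": 119.8},
                       tolerance=2.0)
print(value, suspects)  # 120.4 ['gps']
```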

Explainable AI (XAI) and Transparency

For AI systems, particularly machine learning models, fostering transparency is crucial to understanding why a decision was made and what evidence was considered (or ignored).

- Interpretability Tools: Developing tools that allow developers and users to understand the internal workings of AI models. This includes visualizing which features (pieces of evidence) an algorithm weighted most heavily in its decision, helping to uncover hidden biases or instances where critical evidence was overlooked.

- Decision Logging and Auditing: Implementing comprehensive logging mechanisms that record all input data, internal states, and final decisions of autonomous systems. This creates an audit trail, making it possible to retrospectively analyze what evidence was present when a system made a particular choice, and whether any crucial evidence was effectively ignored.

- Clear Operating Parameters: Defining and communicating the operational envelopes and limitations of autonomous systems. Users need to understand the conditions under which the “digital judge” can reliably process evidence and when its capabilities might be compromised, preventing misapplication.
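For a linear scoring model, each input's contribution to a decision is its weight times its value, which makes a complete audit record easy to produce. The sketch below combines that attribution with JSON-lines logging. The feature names, weights, and threshold are hypothetical. For nonlinear models such as deep networks, per-feature attributions require dedicated methods and are approximations. In this sketch, a missing input is recorded and blocks the decision instead of being quietly treated as zero.

```python
import io
import json
import time


def decide_and_log(weights, features, threshold, log):
    """Score `features` with a linear model, append one JSON audit record to
    the text stream `log`, and return that record."""
    missing = sorted(set(weights) - set(features))
    contributions = {n: weights[n] * features[n] for n in weights if n in features}
    score = sum(contributions.values())
    # Missing evidence blocks the decision rather than silently counting as zero.
    decision = "proceed" if not missing and score >= threshold else "hold"
    record = {
        "time": time.time(),
        "inputs": features,
        "contributions": contributions,
        "missing": missing,
        "score": score,
        "decision": decision,
    }
    log.write(json.dumps(record) + "\n")
    return record


weights = {"landing_zone_clear": 2.0, "wind_ok": 1.0, "gps_quality": 0.5}
log = io.StringIO()  # stands in for an append-only log file
r1 = decide_and_log(weights, {"landing_zone_clear": 1.0, "wind_ok": 0.0,
                              "gps_quality": 1.0}, threshold=3.0, log=log)
r2 = decide_and_log(weights, {"landing_zone_clear": 1.0, "wind_ok": 1.0},
                    threshold=3.0, log=log)
print(r1["decision"], r1["contributions"]["wind_ok"])  # hold 0.0
print(r2["decision"], r2["missing"])                   # hold ['gps_quality']
```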

Robust Testing and Validation Protocols

Thorough testing and validation are indispensable for identifying and rectifying instances where systems might “ignore evidence” before deployment.

- Real-World and Simulated Testing: Conducting extensive testing in diverse real-world scenarios, including edge cases and adverse environmental conditions, and complementing this with high-fidelity simulations. These tests are designed to expose situations where sensors fail, data is corrupted, or algorithms misinterpret critical “evidence.”

- Adversarial Testing: Intentionally attempting to trick or confuse the system by feeding it misleading or ambiguous “evidence” to stress-test its robustness and ability to distinguish valid inputs from noise or malicious attacks.

- Continuous Learning and Update Cycles: Autonomous systems should be designed for continuous improvement. Data from real-world operations, including cases where the system made suboptimal decisions (effectively “ignoring evidence”), should be fed back into the development cycle to refine algorithms and sensor configurations. This iterative process helps the “digital judge” learn from its mistakes and become better at weighing all available evidence.
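Fault injection is one concrete form of adversarial testing: deliberately corrupt inputs and check that the system still behaves as specified. The sketch below is self-contained. It assumes a hypothetical median voter over three redundant sensors (named `a`, `b`, `c`), with illustrative noise, spike size, and tolerance. A seeded random generator keeps each run reproducible, which matters when a failure has to be reproduced and fixed.

```python
import random
import statistics


def vote(readings, tolerance):
    """Median-vote redundant readings; return (value, sorted outlier names)."""
    voted = statistics.median(readings.values())
    outliers = sorted(n for n, v in readings.items() if abs(v - voted) > tolerance)
    return voted, outliers


def fault_injection_trial(n_faulty, steps=1000, truth=100.0, spike=50.0,
                          noise=0.1, tolerance=2.0, seed=0):
    """Corrupt `n_faulty` of three sensors per step with a same-direction
    spike; count steps where the voter misses the truth or blames the
    wrong sensors."""
    rng = random.Random(seed)
    names = ["a", "b", "c"]
    failures = 0
    for _ in range(steps):
        # Healthy sensors: truth plus small bounded noise.
        readings = {n: truth + rng.uniform(-noise, noise) for n in names}
        victims = rng.sample(names, n_faulty)
        sign = rng.choice([1.0, -1.0])
        for v in victims:
            readings[v] += sign * spike  # common-mode fault when n_faulty > 1
        voted, outliers = vote(readings, tolerance)
        if abs(voted - truth) > tolerance or outliers != sorted(victims):
            failures += 1
    return failures


print(fault_injection_trial(n_faulty=1))  # 0
print(fault_injection_trial(n_faulty=2))  # 1000
```

With one corrupted sensor per step, the voter passes every trial. With two sensors corrupted in the same direction, it fails every trial. A test suite that exercised only the first case would never reveal that limit, so tests should push past the design assumptions rather than stop at them.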

In conclusion, the question of what it is called when a judge ignores evidence belongs to the courtroom. Applied metaphorically to Tech & Innovation, however, it highlights a critical challenge in developing and deploying autonomous systems. It forces us to examine rigorously how our digital “judges” perceive, process, and act on the data they are given. We can build more reliable, safer, and ethically sound intelligent systems by doing two things. First, we must understand how technology can overlook or misinterpret evidence. Second, we must adopt robust strategies for data integrity, transparency, and validation. Systems built this way consider all the “evidence” before making their critical judgments.