In the rapidly evolving landscape of autonomous drone technology, measuring true performance is a complex challenge. Traditional metrics often conflate a drone’s inherent capabilities with a myriad of external factors. That makes it difficult to pinpoint areas for improvement or to compare different systems accurately. This challenge mirrors a long-standing analytical dilemma in sports, particularly baseball, where a pitcher’s results can be heavily influenced by the defense behind them. Enter “Fielding Independent Pitching” (FIP), a statistical approach in baseball designed to strip away defensive variables and focus on the outcomes a pitcher most directly controls.

By analogy, we can apply the core philosophy of FIP to autonomous drones under the name Autonomous Flight Performance Independent of External Variables (AFPIEV). The aim is to quantify a drone’s intrinsic operational efficiency and reliability, isolated from fluctuating environmental conditions, human intervention, and unforeseen obstacles that are beyond its algorithmic control. AFPIEV matters to developers, operators, and regulators who need to evaluate, benchmark, and improve self-navigating aerial platforms objectively. Rather than stopping at mission success rates, it examines the computational integrity and robustness of the autonomous system itself, paving the way for more dependable and sophisticated drone applications across industries.

Defining Autonomous Flight Performance Independent of External Variables (AFPIEV)

At its core, AFPIEV seeks to create a purified metric for drone autonomy. Baseball’s FIP counts only a pitcher’s strikeouts, walks, hit-by-pitches, and home runs, which are events largely independent of the fielders. In the same way, AFPIEV focuses on the autonomous system’s ability to execute its programmed directives and adapt to expected scenarios, regardless of confounding external variables. The shift is from merely observing mission outcomes to analyzing the underlying algorithmic resilience and hardware precision.
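For readers less familiar with the baseball side of the analogy, FIP itself is a short formula over exactly those four outcomes. The sketch below uses the standard weights: 13 for home runs, 3 for walks plus hit-by-pitches, and -2 for strikeouts. The additive constant is recalculated every season so that league-average FIP matches league-average ERA. The default of 3.10 here is therefore an illustrative placeholder, not any particular season's value.

```python
def fip(hr: int, bb: int, hbp: int, k: int, ip: float, constant: float = 3.10) -> float:
    """Fielding Independent Pitching: only home runs, walks, hit-by-pitches and
    strikeouts enter the numerator; balls in play (and hence fielders) are ignored."""
    if ip <= 0:
        raise ValueError("innings pitched must be positive")
    # ip must be true innings: 200 1/3 innings is 200.333..., not the box-score "200.1".
    return (13 * hr + 3 * (bb + hbp) - 2 * k) / ip + constant


# Illustrative (invented) season line: 20 HR, 50 BB, 5 HBP, 200 K over 200 innings.
print(round(fip(hr=20, bb=50, hbp=5, k=200, ip=200.0), 3))  # 3.225
```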

The Core Concept: Isolating Intrinsic Drone Capability

The fundamental premise of AFPIEV is to disentangle a drone’s inherent technological performance from factors that are random, external, or beyond the scope of its designed autonomous control. For instance, a drone might fail a delivery mission because of an unexpected, unforecast gust of wind, sudden GPS interference, or an unforeseen human obstruction. These are critical operational considerations, but they do not necessarily reflect a flaw in the drone’s core navigation algorithms, sensor fusion capabilities, or AI decision-making. AFPIEV filters out these “fielding errors” of the operational environment so that we can evaluate the drone’s “pitching”: its own autonomous execution. This isolation tells developers whether underperformance stems from a design flaw in the autonomy stack or from an external variable that should be mitigated through operational planning or more robust environmental sensing. With the external noise stripped away, the drone’s true potential and limitations become clearer, and development effort can be targeted accordingly.
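One way to make this filtering concrete is to tag each logged failure with an attribution before any scoring happens. The sketch below is a minimal illustration. It assumes a hypothetical flight log in which every failure carries a cause label. The label names and the `CAUSE_ATTRIBUTION` table are invented for the example. Unknown causes default to internal, so an unexplained failure gets investigated rather than silently excused.

```python
from dataclasses import dataclass
from enum import Enum


class Attribution(Enum):
    INTERNAL = "internal"  # the drone's own "pitching": counts toward AFPIEV
    EXTERNAL = "external"  # an environmental "fielding error": filtered out


# Hypothetical cause labels; a real log schema would define its own.
CAUSE_ATTRIBUTION = {
    "navigation_algorithm_error": Attribution.INTERNAL,
    "sensor_fusion_fault": Attribution.INTERNAL,
    "decision_logic_error": Attribution.INTERNAL,
    "unforecast_gust": Attribution.EXTERNAL,
    "gps_interference": Attribution.EXTERNAL,
    "human_obstruction": Attribution.EXTERNAL,
}


@dataclass
class FailureEvent:
    mission_id: str
    cause: str


def attribute(event: FailureEvent) -> Attribution:
    # Unrecognized causes are treated as internal until shown otherwise.
    return CAUSE_ATTRIBUTION.get(event.cause, Attribution.INTERNAL)


def internal_failures(events: list[FailureEvent]) -> list[FailureEvent]:
    """Keep only the failures that reflect on the autonomy stack itself."""
    return [e for e in events if attribute(e) is Attribution.INTERNAL]


log = [
    FailureEvent("m1", "unforecast_gust"),
    FailureEvent("m2", "sensor_fusion_fault"),
    FailureEvent("m3", "battery_connector_loose"),  # not in the table
]
print([e.mission_id for e in internal_failures(log)])  # ['m2', 'm3']
```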

Analogy to Traditional Metrics: Beyond Raw Mission Success Rates

Traditional metrics for drone performance include mission success/failure rates, flight duration, payload capacity, and distance covered. These are valuable, but they resemble a baseball pitcher’s Earned Run Average (ERA): they report the result without fully explaining why. An ERA can be inflated by poor defense, such as balls in play that better fielders would have turned into outs. Similarly, a drone’s mission success rate can be skewed by factors external to its autonomy. AFPIEV, much like FIP, aims to be a more predictive and diagnostic tool. It judges a drone’s autonomous prowess by its ability to manage its trajectory, avoid detected obstacles, maintain stable flight, and execute predetermined tasks using only its internal processing and control loops. Dissecting performance this way moves the assessment past surface-level outcomes to the root causes of success or failure in autonomous operation. This allows more accurate comparisons between drone platforms and clearer tracking of iterative improvements in their AI and control systems.
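The difference between the ERA-style and FIP-style views can be shown with two hypothetical drones. The sketch assumes each mission outcome is logged as a `(succeeded, external_cause)` pair, where `external_cause` marks a failure already attributed to the environment. Both the representation and the numbers are invented for illustration.

```python
def raw_success_rate(outcomes: list[tuple[bool, bool]]) -> float:
    """ERA-style view: every failure counts, whatever caused it."""
    return sum(ok for ok, _ in outcomes) / len(outcomes)


def intrinsic_success_rate(outcomes: list[tuple[bool, bool]]) -> float:
    """FIP-style view: failures attributed to external causes are dropped
    from both numerator and denominator before computing the rate."""
    kept = [ok for ok, external in outcomes if ok or not external]
    if not kept:
        raise ValueError("no missions left after excluding external failures")
    return sum(kept) / len(kept)


# Drone A flew in calm conditions: 9 successes, 1 internal failure.
drone_a = [(True, False)] * 9 + [(False, False)]
# Drone B flew in a gusty region: 7 successes, 2 wind-caused failures, 1 internal failure.
drone_b = [(True, False)] * 7 + [(False, True)] * 2 + [(False, False)]

for name, outcomes in (("A", drone_a), ("B", drone_b)):
    print(name, raw_success_rate(outcomes), round(intrinsic_success_rate(outcomes), 3))
# A 0.9 0.9
# B 0.7 0.875
```

On raw success, B trails A by 20 percentage points. Once the wind-caused failures are set aside, the gap shrinks to 2.5 points.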

Key Components of AFPIEV Measurement

To effectively calculate and interpret AFPIEV, it’s essential to meticulously categorize the various factors influencing a drone’s flight. This involves clearly distinguishing between variables that an autonomous system is designed to control or predict, and those that are genuinely external and unpredictable.

Controllable Variables: Onboard AI, Navigation Algorithms, Sensor Fusion

These are the “pitches” a drone throws. Controllable variables encompass all aspects of the drone’s internal intelligence and execution capabilities. They include the sophistication and efficiency of its onboard AI for real-time decision-making, the precision and robustness of its navigation algorithms (e.g., SLAM, path planning), and the seamless integration and interpretation of data from its various sensors (e.g., LiDAR, cameras, IMUs, altimeters) through sensor fusion. A high AFPIEV would indicate superior performance in these areas, demonstrating accurate waypoint adherence, efficient path optimization, reliable object identification and avoidance based on detected threats, and stable flight control under expected conditions. Evaluating these components provides direct feedback on the core autonomous technology, highlighting the effectiveness of software updates, hardware upgrades, and algorithmic refinements.

Uncontrollable Variables: Environmental Factors, Signal Interference, Unpredictable Obstacles

These are the “fielding errors” or external influences that a drone’s autonomy cannot reasonably account for or control, at least within its current design parameters. This category includes sudden, unforecasted extreme weather events (e.g., microbursts, unexpected heavy rain), temporary but significant GPS signal degradation or jamming, electromagnetic interference, and the sudden, unannounced appearance of dynamic, un-mapped obstacles (e.g., an animal darting into the flight path, a kite appearing from nowhere) that exceed its detection range or processing speed. While robust systems aim to mitigate the impact of such events through resilience and failsafe protocols, AFPIEV acknowledges that these factors inherently test the operational environment rather than solely the drone’s autonomous capability. By isolating these, we avoid penalizing a drone’s core intelligence for challenges outside its immediate control or design scope.
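Deciding whether an obstacle was beyond the drone’s control can be framed as a kinematic feasibility check. The sketch below is a simplified, braking-only model, and all parameter names are hypothetical. Because it ignores lateral evasion, fewer encounters come out as avoidable, so the model leans lenient toward the drone. The stopping distance is the ground covered during a reaction latency plus the braking distance v²/(2a). The function takes a fixed reference latency rather than the drone’s own measured latency, so slow onboard processing still counts against the drone. Whether to excuse a platform’s own processing limits instead is a policy choice that an AFPIEV definition would have to settle.

```python
def stopping_distance_m(speed_mps: float, latency_s: float, max_decel_mps2: float) -> float:
    """Distance travelled while reacting plus braking distance v^2 / (2a)."""
    if max_decel_mps2 <= 0:
        raise ValueError("deceleration must be positive")
    return speed_mps * latency_s + speed_mps ** 2 / (2.0 * max_decel_mps2)


def encounter_was_avoidable(
    appearance_distance_m: float,
    sensor_range_m: float,
    speed_mps: float,
    reference_latency_s: float,
    max_decel_mps2: float,
) -> bool:
    """True if the obstacle was detectable early enough to stop before reaching it.

    An obstacle that appears beyond sensor range is first detected at sensor range,
    so the usable warning distance is the smaller of the two.
    """
    warning_distance = min(appearance_distance_m, sensor_range_m)
    return warning_distance >= stopping_distance_m(speed_mps, reference_latency_s, max_decel_mps2)


# At 10 m/s with a 0.2 s reference latency and 5 m/s^2 braking, stopping takes
# 10 * 0.2 + 100 / 10 = 12 m.
print(encounter_was_avoidable(8.0, 30.0, 10.0, 0.2, 5.0))   # False: appeared 8 m away
print(encounter_was_avoidable(50.0, 30.0, 10.0, 0.2, 5.0))  # True: detected at 30 m
```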

Core Events for Evaluation: Autonomous Waypoint Accuracy, Collision Avoidance Efficacy, Task Completion Rate

To quantify AFPIEV, we must define specific, measurable “events” that reflect the drone’s intrinsic performance. These are analogous to strikeouts, walks, and home runs in FIP.

- Autonomous Waypoint Accuracy (AWA): Measures the drone’s ability to navigate precisely to designated waypoints and hold position there, including in the presence of minor atmospheric disturbances or GPS jitter that fall within its design tolerance. Deviations beyond a set threshold indicate algorithmic or control-system inaccuracies.

- Collision Avoidance Efficacy (CAE): Assesses the drone’s success in detecting and autonomously avoiding detectable obstacles, both static and dynamic, within its sensory and processing limits. Failures here would highlight shortcomings in perception, decision-making, or response time. This excludes avoidance failures caused by obstacles appearing too quickly or too close for any system to react.

- Task Completion Rate (TCR) under optimal conditions: Evaluates the drone’s success in executing its primary mission tasks (e.g., object recognition, data collection, payload deployment) when environmental factors are stable and within expected parameters. This separates a task failure caused by a sensor malfunction (internal) from one caused by unforeseen obscuring fog (external).

By carefully tracking and weighting these events, developers can generate a comprehensive AFPIEV score that reflects the drone’s autonomous intelligence rather than its operating environment.
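A minimal sketch of how the three events might be tallied from test logs follows. The input shapes are assumptions made for illustration:

- waypoints are planned and achieved 3-D positions in metres;
- obstacle encounters are `(avoidable, avoided)` pairs, with avoidability decided beforehand;
- tasks are `(conditions_in_tolerance, completed)` pairs.

AWA is scored here as the fraction of waypoints reached within a tolerance, which is one of several reasonable choices.

```python
import math


def _rate(flags: list[bool]) -> float:
    if not flags:
        raise ValueError("no qualifying events to score")
    return sum(flags) / len(flags)


def awa(planned, achieved, tolerance_m: float) -> float:
    """Autonomous Waypoint Accuracy: share of waypoints reached within tolerance_m."""
    if len(planned) != len(achieved):
        raise ValueError("planned and achieved waypoint lists differ in length")
    return _rate([math.dist(p, a) <= tolerance_m for p, a in zip(planned, achieved)])


def cae(encounters: list[tuple[bool, bool]]) -> float:
    """Collision Avoidance Efficacy: avoided / avoidable; unavoidable encounters are excluded."""
    return _rate([avoided for avoidable, avoided in encounters if avoidable])


def tcr_optimal(tasks: list[tuple[bool, bool]]) -> float:
    """Task Completion Rate counting only tasks attempted with conditions in tolerance."""
    return _rate([completed for in_tolerance, completed in tasks if in_tolerance])


planned = [(0, 0, 10), (100, 0, 10), (100, 100, 10)]
achieved = [(0.5, 0, 10), (100, 3, 10), (100.2, 99.9, 10)]
encounters = [(True, True), (True, True), (True, False), (False, False)]
tasks = [(True, True), (True, True), (True, True), (True, False), (False, False)]

print(round(awa(planned, achieved, tolerance_m=1.0), 3))  # 0.667 (the 3 m miss fails)
print(round(cae(encounters), 3))  # 0.667
print(tcr_optimal(tasks))  # 0.75
```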

Calculating and Interpreting AFPIEV

Deriving a meaningful AFPIEV score requires a structured methodology that combines quantitative data from controlled test environments with advanced analytical models. The goal is to produce a single, digestible metric that reflects the sum total of a drone’s intrinsic autonomous capabilities.

The AFPIEV Formula: Quantifying Intrinsic Performance

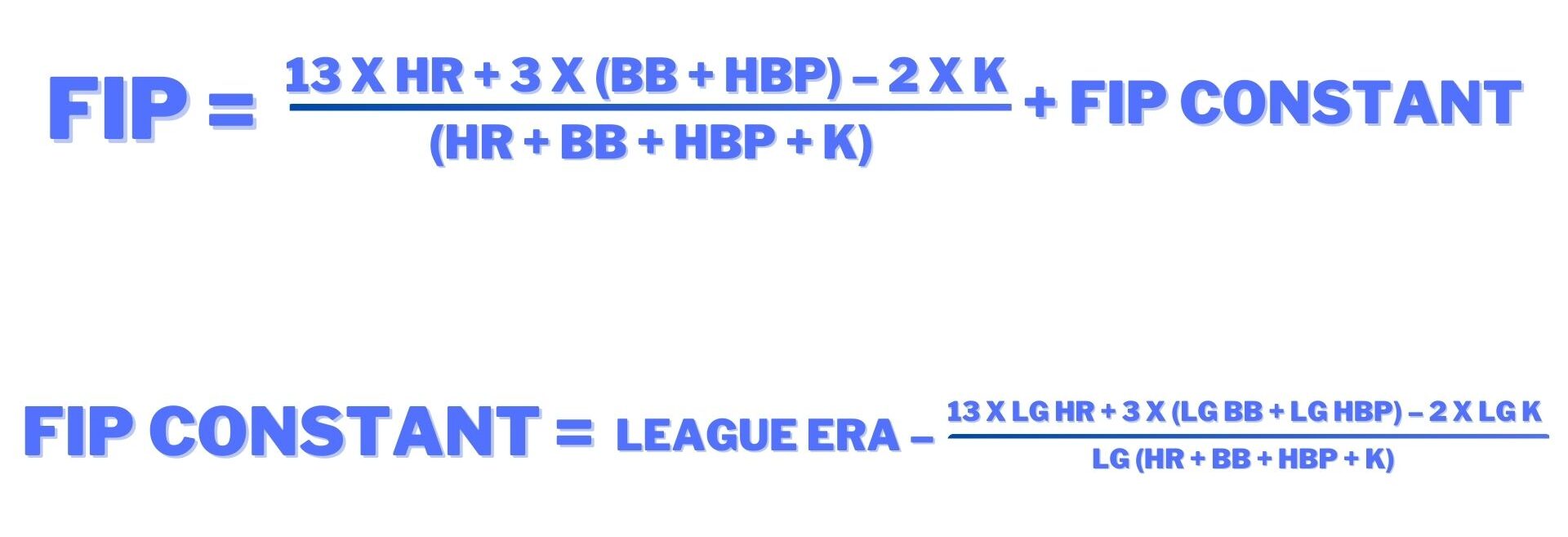

The precise formula for AFPIEV would be complex and customized to the specific drone application, but it would generally involve a weighted sum of the core controllable events, normalized to a baseline or a desired performance standard. Conceptually, it could look something like this:

AFPIEV = (w1 * AWA) + (w2 * CAE) + (w3 * TCR_optimal) - (w4 * AvoidableErrors)

Where:

- AWA (Autonomous Waypoint Accuracy): A score reflecting deviation from intended path/waypoints. Higher score for lower deviation.

- CAE (Collision Avoidance Efficacy): A score reflecting successful avoidance of detectable obstacles. Higher score for more successful avoidances.

- TCR_optimal (Task Completion Rate under optimal conditions): A score reflecting success in completing mission tasks when external factors are within tolerance. Higher score for more successful tasks.

- AvoidableErrors: Penalties for errors solely attributable to the drone’s internal systems (e.g., software bugs, sensor miscalibration, processing delays) even if external conditions were challenging but manageable.

- w1, w2, w3, w4: Weighting factors adjusted based on the criticality of each component to the drone’s mission. These weights would be determined through extensive analysis and expert consensus for specific drone types (e.g., a delivery drone might heavily weight AWA, while a surveillance drone might prioritize CAE).

The AFPIEV score would then be normalized to a scale (e.g., 0-100, or a relative index) to allow easier interpretation and comparison.
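The conceptual formula translates directly into code. This sketch makes several assumptions:

- the component scores lie in [0, 1];
- `avoidable_errors` is a rate, for example avoidable internal errors per mission;
- the 0-100 normalization divides by w1 + w2 + w3 (the best achievable raw score) and clips at both ends.

The example weights for a hypothetical delivery drone that emphasizes AWA are likewise illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AfpievWeights:
    w1: float  # Autonomous Waypoint Accuracy
    w2: float  # Collision Avoidance Efficacy
    w3: float  # Task Completion Rate under optimal conditions
    w4: float  # penalty per unit of avoidable-error rate


def afpiev_raw(awa: float, cae: float, tcr_optimal: float,
               avoidable_errors: float, w: AfpievWeights) -> float:
    """AFPIEV = w1*AWA + w2*CAE + w3*TCR_optimal - w4*AvoidableErrors."""
    return w.w1 * awa + w.w2 * cae + w.w3 * tcr_optimal - w.w4 * avoidable_errors


def afpiev_score(awa: float, cae: float, tcr_optimal: float,
                 avoidable_errors: float, w: AfpievWeights) -> float:
    """Map the raw value onto 0-100; 100 means perfect components and no avoidable errors."""
    best = w.w1 + w.w2 + w.w3
    ratio = afpiev_raw(awa, cae, tcr_optimal, avoidable_errors, w) / best
    return 100.0 * min(max(ratio, 0.0), 1.0)


# Hypothetical delivery-drone weighting that prioritizes waypoint accuracy.
delivery = AfpievWeights(w1=0.5, w2=0.3, w3=0.2, w4=0.5)
print(round(afpiev_score(awa=0.9, cae=0.8, tcr_optimal=0.95,
                         avoidable_errors=0.1, w=delivery), 1))  # 83.0
```

Clipping keeps a heavily penalized run from dropping below zero. A relative index could be used instead when only comparisons between platforms matter.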

Benchmarking and Comparative Analysis

One of the most powerful applications of AFPIEV is benchmarking. With a standardized calculation, drone manufacturers can objectively compare the autonomous capabilities of different models or of successive generations of their own systems. Likewise, organizations procuring drone fleets can evaluate potential suppliers on verified intrinsic performance rather than on anecdotal evidence or on mission success rates shaped by varied operational conditions. This fosters healthy competition and encourages innovation in core autonomous technologies. A common industry-wide AFPIEV standard would also make drone capabilities easier to communicate clearly, building trust and transparency.
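Once every platform is scored under the same protocol and weights, comparison reduces to ranking the scores or expressing them as a relative index against a reference system. The sketch below assumes the scores have already been computed. The platform names and values are invented.

```python
def rank_platforms(scores: dict[str, float]) -> list[str]:
    """Platform names ordered from highest to lowest AFPIEV score."""
    return sorted(scores, key=scores.get, reverse=True)


def relative_index(scores: dict[str, float], baseline: str) -> dict[str, float]:
    """Express each score relative to a baseline platform, which maps to 100."""
    base = scores[baseline]
    if base <= 0:
        raise ValueError("baseline score must be positive")
    return {name: 100.0 * s / base for name, s in scores.items()}


# Hypothetical scores for two in-house generations and one supplier candidate.
scores = {"gen1": 80.0, "gen2": 88.0, "supplier_x": 72.0}
print(rank_platforms(scores))  # ['gen2', 'gen1', 'supplier_x']
print({k: round(v, 1) for k, v in relative_index(scores, "gen1").items()})
# {'gen1': 100.0, 'gen2': 110.0, 'supplier_x': 90.0}
```

A relative index makes generational progress easy to read. It is only meaningful, though, when all platforms were tested under the same conditions and weights.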

Applications in Drone Development and Deployment

For developers, AFPIEV serves as a diagnostic tool. A low AFPIEV score, even if mission success rates are acceptable due to favorable conditions, would flag areas where the underlying AI or control systems need improvement. It helps prioritize R&D efforts, ensuring resources are allocated to strengthen the drone’s core intelligence rather than simply compensating for environmental variances. In deployment, AFPIEV can inform operational guidelines, indicating the inherent reliability of a drone in diverse scenarios and helping operators understand its true limitations before encountering real-world challenges. It also assists in regulatory compliance by providing a quantifiable measure of autonomous safety and dependability.
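The diagnostic use described here, a weak component hidden behind an acceptable mission success rate, can be expressed as a simple check. The sketch assumes per-component target values chosen by the development team. The targets, the 0.9 success threshold, and the numbers are all hypothetical.

```python
def diagnose(components: dict[str, float], targets: dict[str, float],
             mission_success_rate: float, acceptable_success: float = 0.9):
    """Return (components below target, whether raw mission success is masking them)."""
    weak = sorted(name for name, value in components.items() if value < targets[name])
    masked = bool(weak) and mission_success_rate >= acceptable_success
    return weak, masked


components = {"AWA": 0.95, "CAE": 0.70, "TCR_optimal": 0.92}
targets = {"AWA": 0.90, "CAE": 0.85, "TCR_optimal": 0.90}
print(diagnose(components, targets, mission_success_rate=0.96))  # (['CAE'], True)
```

In this example the fleet looks healthy on mission success alone, while collision avoidance sits well below target. That is where R&D effort would go first.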

Significance and Future Implications

The concept of AFPIEV, or Fielding Independent Pitching for autonomous systems, represents a critical step forward in drone technology. Its widespread adoption promises to profoundly impact how drones are designed, evaluated, and trusted.

Elevating Autonomous Drone Reliability and Trust

Currently, public trust in autonomous drones is often hampered by spectacular failures or perceived unreliability. AFPIEV addresses this by offering a transparent, data-driven metric of a drone’s inherent reliability. By showcasing a drone’s performance under its own control, independent of “external fielding errors,” it helps build confidence among consumers, businesses, and regulatory bodies. As AFPIEV scores become standardized and publicly available, they will serve as a clear indicator of a drone’s dependable autonomous performance, fostering greater acceptance and accelerating the integration of drones into various societal functions, from logistics to emergency services. This higher level of trust will be essential for scaling drone operations globally.

Driving Innovation in AI and Robotics

By focusing development efforts on factors directly controlled by the drone’s internal systems, AFPIEV naturally drives innovation in core AI, robotics, and sensor fusion technologies. When designers and engineers are judged on their ability to improve the AFPIEV score, they are incentivized to develop more sophisticated algorithms for navigation, more robust decision-making AI, and more resilient control systems. This analytical discipline will push the boundaries of what autonomous systems can achieve, leading to breakthroughs in areas like real-time adaptive learning, predictive analytics for obstacle avoidance, and ultra-precise flight control. The continuous pursuit of a higher AFPIEV will become a cornerstone of competitive advantage in the drone industry.

Towards a Universal Standard for Drone Autonomy

Ultimately, the vision for AFPIEV is to become a universal standard, much like safety ratings in the automotive industry or energy efficiency labels for appliances. A widely adopted AFPIEV framework would enable clear, unambiguous communication about the autonomous capabilities of any drone system, regardless of its manufacturer or intended application. This standardization would simplify procurement processes, streamline regulatory approvals, and create a common language for engineers and researchers worldwide. It would ensure that progress in drone autonomy is not only measured but also understood and validated in a consistent, rigorous manner, propelling the entire industry towards a future where autonomous drones are not just innovative, but inherently trustworthy and exceptionally capable.