In the relentless march of technological progress, efficiency and performance stand as twin pillars supporting innovation. Yet one often-overlooked but fundamentally critical phenomenon persistently challenges engineers and scientists: dissipation. Far from a mere technical nuisance, dissipation must be understood, managed, and mitigated to unlock the next generation of advancements across artificial intelligence, autonomous systems, advanced computing, and beyond. This article examines dissipation within the realm of Tech & Innovation: how it manifests, what it costs, and which solutions are being developed to contain it.

The Fundamental Nature of Dissipation in Technology

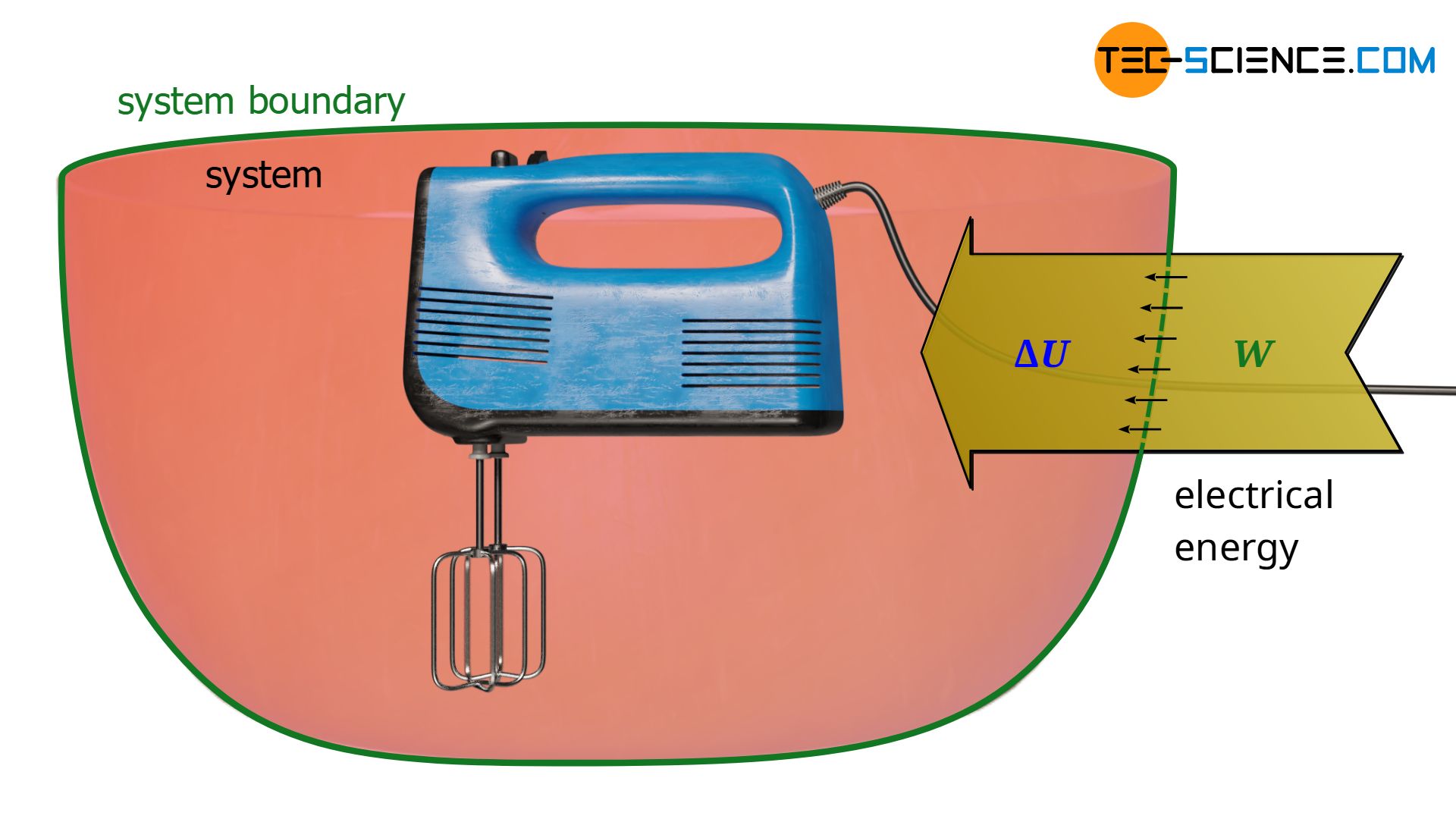

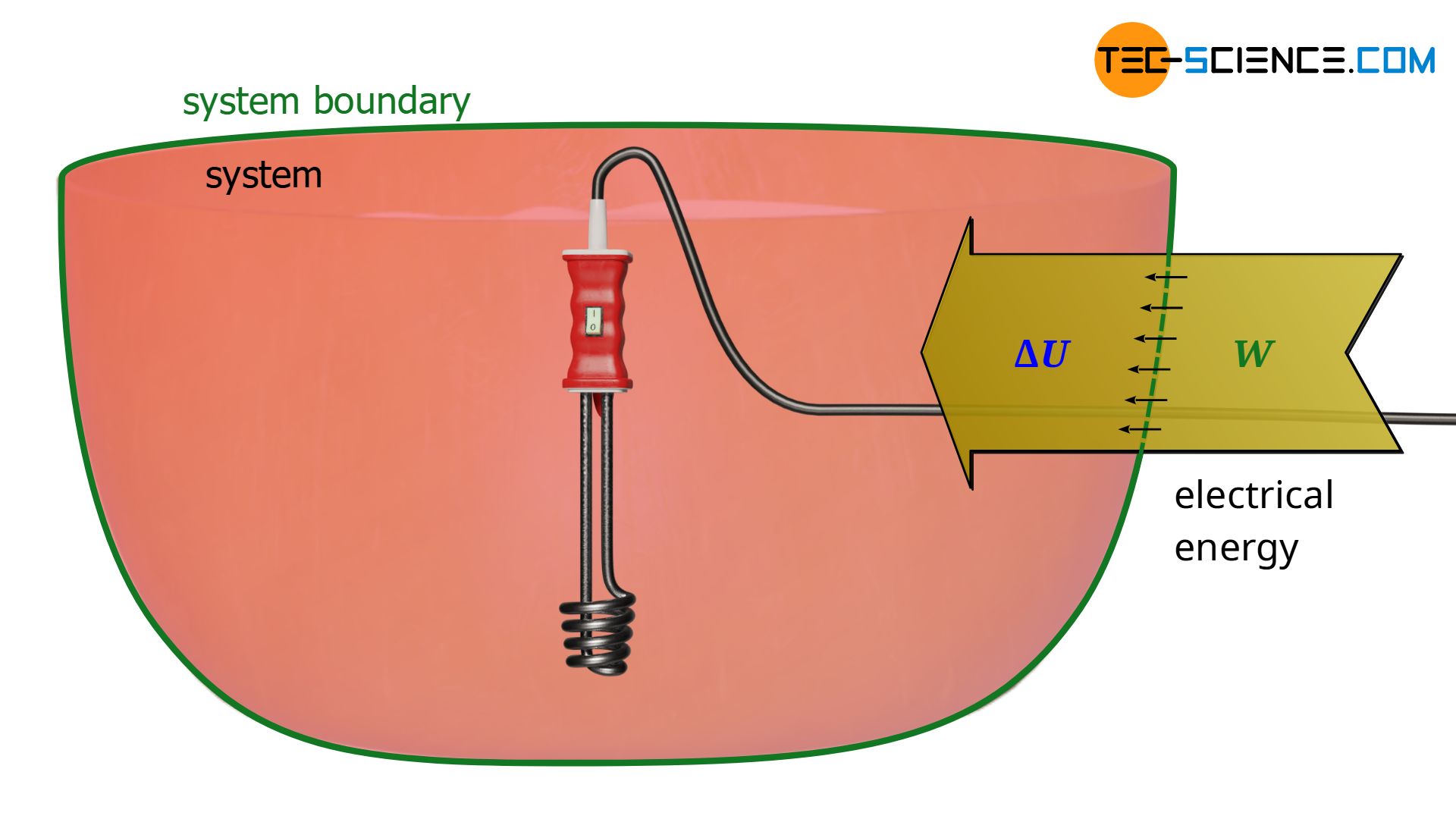

At its core, dissipation refers to the process by which useful energy is converted into less useful or unusable forms, most commonly heat, and spread throughout a system or environment. It is an inherent consequence of physical processes, dictated by the immutable laws of thermodynamics. In the context of technology, this translates directly to challenges in power consumption, thermal management, and overall system efficiency, impacting everything from battery life in a smartphone to the operational costs of a supercomputer.

Defining Dissipation: Beyond the General Sense

While the general definition of dissipation might evoke images of things scattering or fading away, in engineering and technology it carries a very specific weight. It refers to the irreversible transformation of energy from an organized, useful form (electrical, mechanical) into a disorganized, often unwanted form (thermal, acoustic). Every electronic component, from a simple resistor to a complex processor, dissipates energy. Motors dissipate energy as heat and sound. Radio transmitters dissipate part of their input power as heat in their amplifier stages, and any radiated energy that never reaches the intended receiver is also lost to the link. This energy loss is not just about waste; it directly impacts the performance, reliability, and lifespan of technological devices.

The Inevitability of Energy Loss: The Second Law in Action

The pervasive nature of dissipation is rooted in the Second Law of Thermodynamics, which states that the total entropy of an isolated system never decreases: it increases in any real process and stays constant only in the idealized, reversible case. For technological systems, this means that no real process converts energy into useful work with perfect efficiency; some of the energy is always degraded into a form that can no longer do useful work, typically low-grade heat. Whether an electric current flows through a wire, a transistor switches states, or a mechanical system moves, resistance and friction invariably convert part of the energy into heat. This fundamental principle underscores why managing dissipation is not about eliminating it entirely, but about minimizing its effects and developing systems that can efficiently cope with its presence.

Why Dissipation Matters: Efficiency, Performance, and Longevity

The implications of dissipation are profound and far-reaching in technology. First, it directly affects efficiency. A device that dissipates a significant amount of energy consumes more power than necessary to perform its function, leading to shorter battery life for portable devices and higher electricity bills for data centers. Second, it impacts performance. Overheating can cause processors to throttle down, reducing their clock speed and computational power to prevent damage. This thermal throttling is a direct bottleneck for high-performance computing. Third, it significantly influences longevity and reliability. Elevated temperatures accelerate the degradation of electronic components, leading to premature failures and reduced operational lifespans for expensive equipment. Thus, mastering dissipation is not merely an engineering nicety; it is a critical determinant of technological viability and progress.

Dissipation’s Impact on Advanced Computing and AI

The exponential growth in computational demand, particularly driven by artificial intelligence (AI) and machine learning (ML), has brought the challenge of dissipation to the forefront. Modern processors, designed to execute billions of operations per second, generate immense amounts of heat, threatening to undermine the very performance they are designed to deliver.

The Thermal Challenge of AI Processors and GPUs

AI workloads, characterized by vast parallel processing and intensive matrix operations, push the limits of modern silicon. Graphics Processing Units (GPUs), initially designed for rendering graphics, have become the workhorses of AI due to their parallel architectures. However, as the number of transistors on a chip grows and clock speeds increase, the power density (power dissipated per unit area) rises sharply. High power density translates directly to intense heat generation. Without effective cooling, these powerful AI processors would quickly overheat, leading to catastrophic failure or significantly reduced performance through thermal throttling, a self-preservation mechanism that slows down the chip to reduce heat output. This thermal barrier is a primary constraint on the computational horsepower available for training complex neural networks and executing sophisticated AI algorithms.
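The definition of power density given above can be made concrete with a short calculation. The following is a minimal sketch; the function name and the 400 W / 800 mm² figures are hypothetical and chosen only for illustration, not taken from any specific chip.

```python
def power_density_w_per_cm2(power_w: float, die_area_mm2: float) -> float:
    """Power dissipated per unit die area, in W/cm^2."""
    if die_area_mm2 <= 0:
        raise ValueError("die area must be positive")
    area_cm2 = die_area_mm2 / 100.0  # 100 mm^2 equals 1 cm^2
    return power_w / area_cm2


# Hypothetical accelerator: 400 W spread over an 800 mm^2 die (assumed values).
density = power_density_w_per_cm2(400.0, 800.0)
print(f"Power density: {density:.1f} W/cm^2")
```

Holding power constant while shrinking the die, or raising power on the same die, both increase this figure, which is why the cooling problem tends to get harder with each generation.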

Managing Heat in High-Performance Computing

In high-performance computing (HPC) environments, such as supercomputers and large data centers, managing dissipation is an engineering feat in itself. These facilities house thousands of processors, each generating significant heat. Traditional air cooling, while still prevalent, often struggles to keep up with the increasing heat loads. Liquid cooling solutions, including direct-to-chip cooling, immersion cooling, and two-phase cooling, are increasingly deployed. Direct-to-chip systems pump coolant through cold plates mounted on the processors, immersion systems submerge the hardware in a dielectric fluid, and two-phase systems exploit the boiling and condensing of a working fluid. All of them transfer heat far more efficiently than air. The scale of these cooling infrastructures highlights the critical role dissipation management plays in enabling the massive computational power required for scientific simulations, big data analytics, and advanced AI research.

From Data Centers to Edge Devices: Dissipation’s Scale

The challenge of dissipation scales from massive data centers down to compact edge AI devices. While data centers grapple with megawatts of heat, edge devices face constraints of size, weight, and passive cooling capabilities. Running AI inference on drones, smart cameras, or wearable devices demands highly efficient, low-power AI accelerators that minimize heat generation. Designers must carefully balance computational capability with thermal design power (TDP) to ensure the device remains cool enough to operate reliably without bulky heatsinks or active fans, which are often impractical for mobile or embedded applications. The push for more powerful and compact edge AI solutions is inextricably linked to innovations in low-dissipation chip architectures and ultra-efficient power management.
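The balance between compute capability and thermal budget can be sketched with the standard steady-state thermal resistance model, in which junction temperature equals ambient temperature plus power times the junction-to-ambient thermal resistance. The function names and all numeric values below are assumptions for illustration; real devices need datasheet values and transient analysis.

```python
def junction_temp_c(power_w: float, t_ambient_c: float, r_theta_c_per_w: float) -> float:
    """Steady-state junction temperature: T_j = T_ambient + P * R_theta."""
    return t_ambient_c + power_w * r_theta_c_per_w


def max_sustained_power_w(t_junction_max_c: float, t_ambient_c: float,
                          r_theta_c_per_w: float) -> float:
    """Largest continuous power that keeps T_j at or below its limit."""
    if r_theta_c_per_w <= 0:
        raise ValueError("thermal resistance must be positive")
    return (t_junction_max_c - t_ambient_c) / r_theta_c_per_w


# Hypothetical fanless edge device (assumed values):
# 95 C junction limit, 35 C ambient, 12 C/W junction-to-ambient resistance.
budget_w = max_sustained_power_w(95.0, 35.0, 12.0)
temp_at_4w = junction_temp_c(4.0, 35.0, 12.0)
print(f"Sustained power budget: {budget_w:.1f} W")
print(f"Junction temperature at 4 W: {temp_at_4w:.1f} C")
```

Under these assumed numbers, any accelerator that draws more than the computed budget for long periods would need either a lower-resistance thermal path (a heatsink or fan) or throttling.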

Dissipation in Autonomous Systems and Robotics

Autonomous systems, ranging from self-driving cars and industrial robots to drones, represent another frontier where dissipation management is critical. These systems rely on a complex interplay of sensors, powerful processors, and actuators, all of which contribute to the thermal load and demand efficient energy use for extended operation.

Powering Intelligent Machines: Energy Conversion Losses

Autonomous systems are inherently power-hungry. Their ability to perceive, process, and act upon their environment requires significant energy. Electrical power supplied to these systems is never fully converted into useful work. For instance, DC motors, which drive robotic limbs or drone propellers, are subject to resistive losses in their windings and friction in their bearings, leading to heat generation. Power converters, which step up or step down voltage levels to suit various components, also experience energy losses, typically in the form of heat, as they perform their function. These cumulative energy conversion losses directly impact the operational duration of battery-powered autonomous systems and the overall energy efficiency of their powered counterparts. Minimizing these losses is crucial for extending mission times, reducing recharging cycles, and achieving sustainable operation.
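To illustrate how these losses accumulate, the sketch below computes resistive (I²R) loss in a motor winding and multiplies per-stage efficiencies along a power chain. The stage efficiencies, current, and resistance are hypothetical values chosen for the example, not measurements.

```python
def winding_loss_w(current_a: float, resistance_ohm: float) -> float:
    """Resistive heating in a winding: P = I^2 * R."""
    return current_a ** 2 * resistance_ohm


def chain_efficiency(*stage_efficiencies: float) -> float:
    """Overall efficiency of stages connected in series (product of each)."""
    total = 1.0
    for eta in stage_efficiencies:
        if not 0.0 < eta <= 1.0:
            raise ValueError("each efficiency must be in (0, 1]")
        total *= eta
    return total


# Assumed chain: DC-DC converter 95%, motor driver 97%, motor 85%.
overall = chain_efficiency(0.95, 0.97, 0.85)
# Assumed winding: 10 A through 0.05 ohm.
loss = winding_loss_w(10.0, 0.05)
print(f"Overall efficiency: {overall:.3f}")
print(f"Winding loss: {loss:.1f} W")
```

Even when each stage looks efficient on its own, the product shows that more than a fifth of the input power in this assumed chain ends up as heat.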

Cooling for Reliability: Sensors, Actuators, and Control Units

Beyond the primary processing units, the myriad components within autonomous systems also face dissipation challenges. Advanced sensors, such as LiDAR, radar, and high-resolution cameras, often contain their own processing units that generate heat. Actuators, including motors and hydraulic pumps, generate heat during operation. The central control units, which integrate data from various sensors and execute complex decision-making algorithms, are themselves powerful embedded computers that require robust thermal management. Reliable operation of these systems in diverse and often harsh environments (e.g., extreme temperatures in industrial settings or direct sunlight for outdoor drones) hinges on effective cooling. Overheated sensors can provide inaccurate readings, compromised actuators can fail to respond correctly, and a malfunctioning control unit can lead to catastrophic system failure.

The Energy-Efficiency Imperative for Extended Operations

For many autonomous applications, particularly those involving drones or remote sensing platforms, extended operational time is a key performance metric. Every watt of power dissipated as heat represents a watt that could have been used to power motors for longer flight, run sensors for more comprehensive data collection, or fuel onboard intelligence for more sophisticated tasks. The drive for energy efficiency in autonomous systems is therefore a direct battle against dissipation. Engineers are constantly seeking ways to reduce quiescent power consumption, optimize motor control algorithms to minimize resistive losses, and design power electronics with higher conversion efficiencies. These efforts are not just about saving energy; they are about enabling entirely new capabilities and expanding the practical utility of autonomous technology.
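The link between conversion efficiency and operating time can be shown with a rough endurance estimate. This is a simplified sketch assuming a constant useful load and a single lumped efficiency figure; the battery capacity, load, and efficiencies are hypothetical.

```python
def endurance_minutes(battery_wh: float, useful_power_w: float, efficiency: float) -> float:
    """Runtime when the battery must supply useful_power / efficiency."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    if useful_power_w <= 0:
        raise ValueError("useful power must be positive")
    drawn_power_w = useful_power_w / efficiency  # includes dissipated share
    return battery_wh / drawn_power_w * 60.0


# Assumed drone: 100 Wh battery, 200 W of useful mechanical and compute load.
baseline = endurance_minutes(100.0, 200.0, 0.80)
improved = endurance_minutes(100.0, 200.0, 0.90)
print(f"Endurance at 80% efficiency: {baseline:.1f} min")
print(f"Endurance at 90% efficiency: {improved:.1f} min")
```

In this assumed case, raising overall efficiency by ten percentage points extends flight time by about an eighth without touching the battery.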

Addressing Dissipation: Innovative Solutions and Future Directions

The ongoing struggle against dissipation has spurred incredible innovation. Researchers and engineers are exploring a diverse array of solutions, from fundamental material science to advanced software optimization, to push the boundaries of what’s possible.

Advanced Cooling Technologies: Beyond Fans and Heatsinks

While traditional air-cooled heatsinks and fans remain workhorses, the escalating power densities of modern chips demand more sophisticated approaches. Liquid cooling, involving the circulation of cooling fluids (like water, specialized dielectric coolants, or refrigerants) directly over chips or through microchannels embedded in them, offers significantly higher heat transfer coefficients than air. Vapor chambers and heat pipes, which leverage the phase change of a working fluid inside a sealed, evacuated enclosure, can transport heat very efficiently from a hot spot to a larger cooling surface. Solid-state cooling with thermoelectric (Peltier) devices can actively pump heat, although the electrical power such devices consume adds to the total heat that must be rejected. More experimental approaches include magnetic refrigeration. The pursuit is not just about moving heat away, but about doing so quietly, reliably, and within compact form factors.
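The practical effect of a higher heat transfer coefficient can be sketched with Newton's law of cooling, q = h · A · ΔT. The coefficients below are order-of-magnitude assumptions for forced air and forced liquid convection, not measured values, and the heat load and wetted area are hypothetical.

```python
def surface_temp_rise_k(heat_w: float, h_w_per_m2k: float, area_m2: float) -> float:
    """Temperature rise of a surface above the coolant: dT = q / (h * A)."""
    if h_w_per_m2k <= 0 or area_m2 <= 0:
        raise ValueError("coefficient and area must be positive")
    return heat_w / (h_w_per_m2k * area_m2)


# Illustrative, assumed convection coefficients in W/(m^2 K).
H_FORCED_AIR = 100.0
H_FORCED_LIQUID = 5000.0

# Assumed load: 200 W rejected through 0.01 m^2 of surface.
rise_air = surface_temp_rise_k(200.0, H_FORCED_AIR, 0.01)
rise_liquid = surface_temp_rise_k(200.0, H_FORCED_LIQUID, 0.01)
print(f"Rise with forced air: {rise_air:.0f} K")
print(f"Rise with forced liquid: {rise_liquid:.0f} K")
```

With the same surface area, the air-cooled case in this sketch would need a much larger finned area to reach a workable temperature rise, which is the role a heatsink plays.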

Material Science Innovations for Thermal Management

Breakthroughs in material science are also crucial in the battle against dissipation. New highly conductive materials like advanced ceramics, carbon nanotubes, and graphene composites are being developed for heatsinks, thermal interface materials (TIMs), and even directly integrated into chip packaging. These materials offer superior thermal conductivity, allowing heat to spread and dissipate more efficiently. Research into phase-change materials (PCMs) explores substances that can absorb and release large amounts of latent heat as they melt and solidify, providing a temporary thermal buffer for intermittent high-power loads. Furthermore, improvements in dielectric materials for insulation and interconnect materials within chips themselves aim to reduce resistive losses at the fundamental level.
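The buffering role of a phase-change material can be estimated from its latent heat alone. The sketch below is a deliberately simplified model that ignores sensible heating and the time heat takes to conduct into the material; the mass, latent heat (roughly paraffin-like), and excess power are assumed values.

```python
def pcm_buffer_seconds(mass_kg: float, latent_heat_j_per_kg: float,
                       excess_power_w: float) -> float:
    """Time a PCM can absorb excess heat while melting: t = m * L / P."""
    if excess_power_w <= 0:
        raise ValueError("excess power must be positive")
    return mass_kg * latent_heat_j_per_kg / excess_power_w


# Assumed: 50 g of PCM, 200 kJ/kg latent heat, 20 W above what the
# passive cooling path can reject during a burst.
buffer_s = pcm_buffer_seconds(0.05, 200_000.0, 20.0)
print(f"Burst duration absorbed by the PCM: {buffer_s:.0f} s")
```

Once the material has fully melted it provides no further buffering until it re-solidifies, which is why PCMs suit intermittent loads rather than sustained ones.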

Software and Algorithmic Approaches to Energy Optimization

Dissipation isn’t solely a hardware problem; software plays a significant role. Dynamic Voltage and Frequency Scaling (DVFS) allows processors to adjust their operating voltage and clock speed based on workload, significantly reducing power consumption and heat generation during periods of lower demand. Power management algorithms in operating systems and firmware intelligently control component states, putting unused peripherals to sleep or throttling performance when thermal limits are approached. For AI, model compression techniques and efficient inference algorithms aim to reduce the computational resources required for specific tasks, thereby lowering power draw and heat. Software optimization, therefore, acts as a crucial layer of defense against unnecessary dissipation.
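Why DVFS is so effective follows from the usual first-order model of CMOS switching power, P ≈ α · C · V² · f, where power falls with the square of the voltage. The sketch below applies that model to two hypothetical operating points; the effective capacitance, voltages, and frequencies are assumptions, and leakage power is ignored.

```python
def dynamic_power_w(c_eff_f: float, voltage_v: float, freq_hz: float,
                    activity: float = 1.0) -> float:
    """First-order CMOS switching power: P = activity * C * V^2 * f."""
    return activity * c_eff_f * voltage_v ** 2 * freq_hz


# Hypothetical operating points (not from any datasheet).
high = dynamic_power_w(1e-9, 1.0, 3.0e9)  # full voltage and clock
low = dynamic_power_w(1e-9, 0.8, 2.0e9)   # scaled-down voltage and clock
savings = 1.0 - low / high
print(f"High point: {high:.2f} W, low point: {low:.2f} W")
print(f"Dynamic power saved: {savings:.0%}")
```

In this sketch the clock drops by a third, but because the voltage drops as well, switching power falls by more than half.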

The Pursuit of Adiabatic Computing

Looking further into the future, researchers are exploring adiabatic computing. This paradigm aims to minimize energy dissipation by moving charge slowly and in a controlled way, so that circuit operation approaches thermodynamic reversibility. Instead of dissipating energy as heat every time a logical state changes, adiabatic circuits aim to recover and reuse that energy, which in theory could reduce power consumption by orders of magnitude. The price is typically slower switching and more complex clocking and power-supply design. While still largely experimental and facing immense engineering challenges, adiabatic computing represents one of the most ambitious frontiers in the fight against dissipation, with the promise of ultra-efficient, low-power processing.
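The potential saving can be sketched with a textbook comparison. Charging a capacitance C abruptly to voltage V through a resistance dissipates about ½·C·V², whereas charging it with a slow linear ramp of duration T dissipates roughly (RC/T)·C·V², an approximation that holds only when T is much longer than RC. The component values below are assumptions for illustration.

```python
def conventional_switch_energy_j(c_f: float, v: float) -> float:
    """Energy dissipated when a capacitor is charged abruptly: 0.5 * C * V^2."""
    return 0.5 * c_f * v ** 2


def adiabatic_switch_energy_j(c_f: float, v: float, r_ohm: float,
                              ramp_time_s: float) -> float:
    """Approximate dissipation for a slow linear ramp: (RC / T) * C * V^2.

    Only valid when ramp_time_s is much larger than R * C.
    """
    if ramp_time_s <= 0:
        raise ValueError("ramp time must be positive")
    return (r_ohm * c_f / ramp_time_s) * c_f * v ** 2


# Assumed node: 1 fF load, 1 V swing, 1 kOhm path, 100 ps ramp (RC = 1 ps).
e_conv = conventional_switch_energy_j(1e-15, 1.0)
e_adia = adiabatic_switch_energy_j(1e-15, 1.0, 1e3, 1e-10)
print(f"Conventional: {e_conv:.2e} J, adiabatic: {e_adia:.2e} J")
print(f"Reduction factor: {e_conv / e_adia:.0f}x")
```

The model makes the trade-off explicit: dissipation falls in proportion to how slowly the node is ramped, so energy savings are bought with switching speed.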

The Broader Implications for Tech & Innovation

Effectively addressing dissipation is not just about making existing technologies better; it’s about enabling entirely new paradigms and pushing the boundaries of what’s conceivable in the world of Tech & Innovation.

Miniaturization and Form Factor Challenges

The ability to manage heat efficiently is a direct enabler of miniaturization. As components become smaller and more densely packed, the power density increases, making thermal management exceptionally difficult. Innovations in cooling and low-dissipation components are crucial for developing ever-smaller, more powerful devices, whether they are micro-drones, wearable sensors, or implantable medical devices. Without advancements in dissipation control, the physical limits of miniaturization would be reached much sooner.

Sustainability and Energy Consumption

In a world increasingly concerned with environmental impact and energy sustainability, minimizing dissipation is not just an economic imperative but also an ecological one. Reducing the energy consumption of data centers, industrial robots, and consumer electronics translates directly into lower carbon footprints and less strain on global power grids. The pursuit of energy efficiency, driven by the need to combat dissipation, aligns perfectly with broader sustainability goals, making technological progress more responsible and environmentally friendly.

Pushing the Boundaries of Performance and New Frontiers

Ultimately, controlling dissipation liberates technological performance. It allows AI models to train faster, autonomous systems to operate longer and more reliably, and high-performance computing to tackle problems of ever-greater complexity. The ability to manage heat effectively removes a significant barrier to innovation, opening doors to new materials, architectures, and computational approaches that were previously deemed impractical due to thermal constraints. From quantum computing to advanced biotechnology, understanding and mastering dissipation remains a critical enabler for the next wave of disruptive technologies. As we continue to innovate, our capacity to manage the fundamental physics of energy loss will undoubtedly dictate the pace and potential of our progress.