Few components have shaped modern computing as much as the Graphics Processing Unit, or GPU. Although it is best known for video game visuals and rendering in professional design software, the GPU’s capabilities extend well beyond graphical output. It is a specialized processor designed for massively parallel computation, and that characteristic has put it at the center of many recent advances, particularly in Artificial Intelligence and in AI-driven applications such as drone technology, advanced imaging, and autonomous systems.

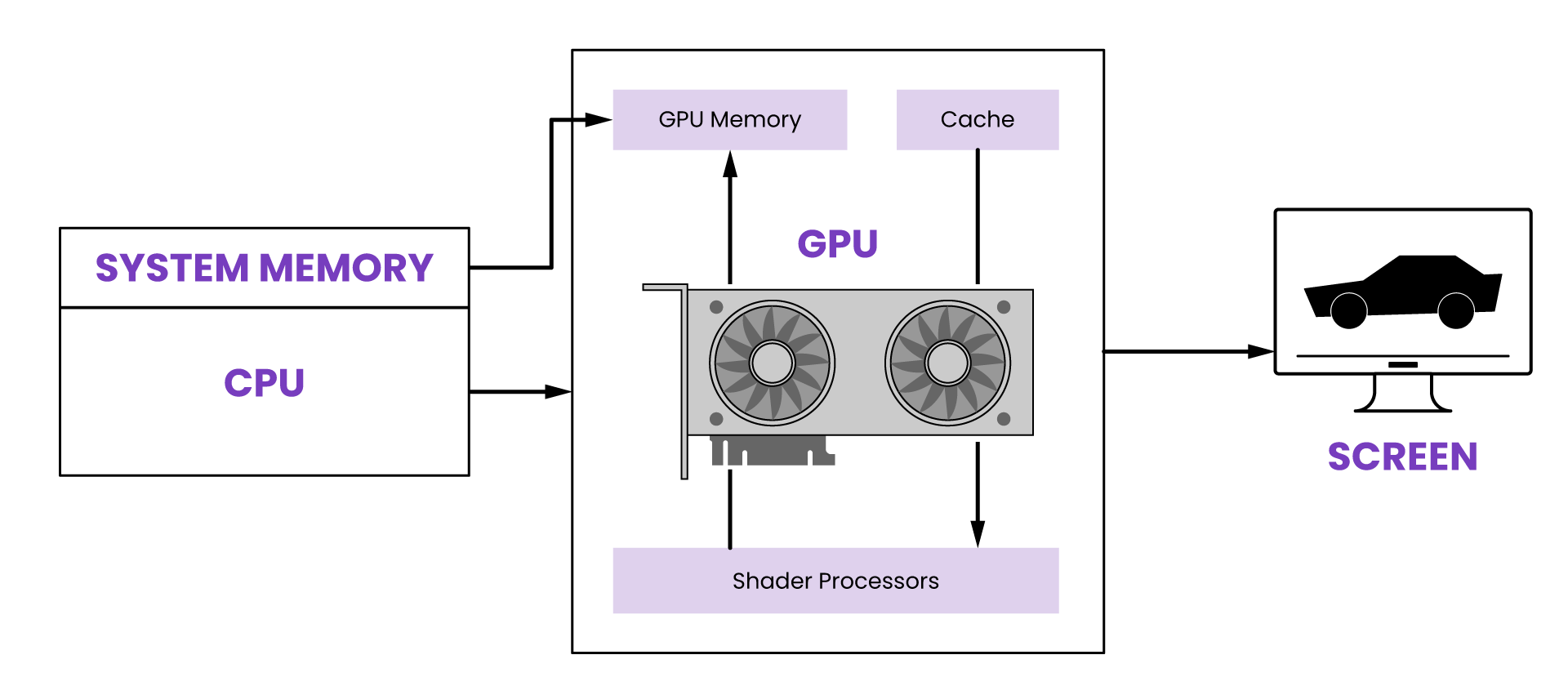

The fundamental difference between a CPU (Central Processing Unit) and a GPU lies in their architecture and intended purpose. A CPU has a small number of powerful cores built to handle a wide variety of tasks, and it excels at sequential work that involves complex decision-making and intricate logic. Think of it as a highly skilled conductor of an orchestra, meticulously directing each individual instrument to play its part. A GPU, on the other hand, is built to perform a multitude of simpler tasks simultaneously. Its architecture is akin to an army of specialized workers, each performing the same, relatively simple operation on a different piece of a vast dataset at the same time. This parallel processing capability is what unlocks the GPU’s potential.

The GPU was initially conceived to accelerate the rendering of 2D and 3D graphics. Its ability to process thousands of threads concurrently made it exceptionally good at the repetitive yet computationally intensive calculations required to display pixels on a screen. As computing evolved, researchers and engineers recognized that this parallelism could be applied to a much broader range of problems, a concept known as General-Purpose computing on Graphics Processing Units (GPGPU). This shift has reshaped how complex computational problems are approached, widening access to high-performance computing and driving innovation across diverse industries.

The Evolution and Architecture of the GPU

The journey of the GPU from a dedicated graphics accelerator to a versatile computational powerhouse is a testament to its adaptable architecture and the relentless pursuit of processing power. Its evolution has been marked by increasing core counts, advancements in memory bandwidth, and the development of specialized instruction sets designed to optimize for parallel workloads. Understanding this evolution is crucial to appreciating the GPU’s current significance.

From Pixels to Parallelism: The Genesis of the GPU

The origins of the GPU can be traced back to the early days of computer graphics. As the demand for more realistic and dynamic visual experiences grew, dedicated hardware was developed to offload the burdensome task of rendering from the CPU. Early graphics cards were relatively simple, focusing on accelerating basic tasks like drawing polygons and applying textures. Even so, the fundamental idea of processing visual data in parallel was already present, laying the groundwork for future innovations. The introduction of specialized fixed-function pipelines allowed common graphics operations to execute rapidly, significantly improving frame rates and enabling more complex scenes to be rendered in real time.

The true turning point came with the advent of programmable shaders. This innovation allowed developers to write custom code that ran directly on the GPU, giving them unprecedented control over the rendering process. This opened the door to a new era of visual fidelity, enabling sophisticated lighting effects, realistic material simulations, and advanced post-processing techniques. The shift from fixed-function hardware to programmable shaders marked a fundamental change in how graphics were processed, transforming the GPU from a dedicated accelerator into a more flexible and powerful processing unit.

Massively Parallel Architecture: The Core Strength

The defining characteristic of a GPU is its massively parallel architecture. Unlike a CPU, which might have a few powerful cores optimized for complex tasks, a GPU has thousands of smaller, simpler cores. These cores are grouped into larger units, which NVIDIA calls streaming multiprocessors (SMs) and AMD calls compute units, each capable of executing a large number of threads concurrently. This design is inherently suited to workloads where the same operation needs to be applied to vast amounts of data simultaneously.
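As a rough illustration of how many lightweight threads can cover a dataset, the sketch below emulates in plain Python the index arithmetic commonly used in CUDA-style kernels, where threads are grouped into blocks. The names `vector_add_kernel`, `launch`, `block_dim`, and `grid_dim` are hypothetical and chosen for this example only. The loops run sequentially here; on a real GPU each (block, thread) pair would execute concurrently.

```python
import math

def vector_add_kernel(block_idx, thread_idx, block_dim, a, b, out):
    """Body executed by one logical thread: handles at most one element."""
    i = block_idx * block_dim + thread_idx  # global index of this thread
    if i < len(out):                        # guard: the last block may overshoot
        out[i] = a[i] + b[i]

def launch(kernel, n, block_dim, *args):
    """Sequential stand-in for a GPU launch with enough blocks to cover n items."""
    grid_dim = math.ceil(n / block_dim)     # number of blocks needed
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            kernel(block_idx, thread_idx, block_dim, *args)
    return grid_dim

a = [float(i) for i in range(10)]
b = [2.0 * i for i in range(10)]
out = [0.0] * 10
blocks = launch(vector_add_kernel, len(out), 4, a, b, out)
```

With 10 elements and 4 threads per block, three blocks are needed, and the bounds check keeps the two surplus threads in the last block from touching memory that does not exist.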

Consider the task of rendering a high-resolution image. Each pixel on the screen requires a series of calculations to determine its final color. A CPU would typically process these pixels one by one, or in small batches. A GPU, however, can spread the calculations for different sets of pixels across its thousands of cores, because each pixel can largely be computed independently of the others. This division of labor dramatically reduces the overall processing time, allowing for fluid animation and complex visual effects. The same parallel processing power is not limited to graphics; it is what makes the GPU useful in fields beyond visual rendering.
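The per-pixel independence described here can be sketched in a few lines of Python. The `shade` function and the tiny image size are hypothetical; the point is only that when a pixel's color depends on nothing but its own coordinates, the order in which pixels are evaluated does not matter, which is what allows the work to be distributed freely across cores.

```python
import random

WIDTH, HEIGHT = 8, 4  # tiny hypothetical image

def shade(x, y):
    """Color of one pixel as a pure function of its coordinates (a simple gradient)."""
    r = 255 * x // (WIDTH - 1)
    g = 255 * y // (HEIGHT - 1)
    return (r, g, 128)

coords = [(x, y) for y in range(HEIGHT) for x in range(WIDTH)]

# Row-by-row evaluation, as a single sequential loop would do it.
in_order = {c: shade(*c) for c in coords}

# Evaluation in an arbitrary order, standing in for many cores
# finishing their pixels at different times.
shuffled = coords[:]
random.Random(0).shuffle(shuffled)
any_order = {c: shade(*c) for c in shuffled}

same_image = in_order == any_order  # identical result either way
```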

GPU Computing Beyond Graphics: The GPGPU Revolution

The realization that the GPU’s parallel processing capabilities could be harnessed for non-graphical computations was a watershed moment in computer science. This shift, known as General-Purpose computing on Graphics Processing Units (GPGPU), lets the GPU tackle problems that were previously impractical or prohibitively time-consuming on traditional CPUs.

The Rise of Parallel Algorithms

The effectiveness of GPGPU hinges on the ability to break down complex problems into smaller, independent tasks that can be executed in parallel. This requires a shift in algorithmic thinking. Instead of sequential processing, developers must design algorithms that can leverage the GPU’s architecture. This often involves data-parallel operations, where the same set of instructions is applied to different data elements simultaneously.
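One common example of this shift in thinking is summation. A sequential loop adds one element at a time, so each step waits on the previous one. A pairwise (tree) reduction instead adds independent pairs in each step, so the number of dependent steps grows with the logarithm of the input size. The sketch below is a plain-Python illustration of that pattern, not a GPU implementation, and `tree_sum` is a hypothetical name.

```python
def tree_sum(values):
    """Sum by repeated pairwise addition; additions within one step are independent."""
    data = list(values)
    steps = 0
    while len(data) > 1:
        if len(data) % 2:      # pad odd-length levels with the identity element
            data.append(0)
        # Each pair below could be added by a separate thread in the same step.
        data = [data[i] + data[i + 1] for i in range(0, len(data), 2)]
        steps += 1
    return (data[0] if data else 0), steps

total, steps = tree_sum(range(1, 17))  # 16 values are reduced in 4 steps
```

The total amount of arithmetic is about the same as in the sequential loop; what changes is how much of it can happen at once.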

Examples of such algorithms are abundant. In scientific simulations, for instance, complex models of fluid dynamics, molecular interactions, or astrophysical phenomena can be broken down into millions of individual calculations that can be performed in parallel by the GPU. Similarly, in data analysis, tasks like matrix multiplication, Fourier transforms, and statistical calculations are highly amenable to parallelization and benefit immensely from GPU acceleration. This has led to significant speedups in research and development across numerous scientific disciplines.
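Matrix multiplication shows why such tasks parallelize so well: every entry of the result is a dot product that reads only the two inputs and never another output entry. The following sketch, with the hypothetical helpers `matmul_cell` and `matmul`, isolates the work a single logical thread would do. It runs sequentially in plain Python and is meant only to expose the structure.

```python
def matmul_cell(a, b, row, col):
    """Work for one logical thread: the dot product giving C[row][col]."""
    return sum(a[row][k] * b[k][col] for k in range(len(b)))

def matmul(a, b):
    """Build C = A x B; every cell depends only on A and B, never on another cell."""
    rows, cols = len(a), len(b[0])
    return [[matmul_cell(a, b, r, c) for c in range(cols)] for r in range(rows)]

A = [[1, 2, 3],
     [4, 5, 6]]
B = [[7, 8],
     [9, 10],
     [11, 12]]
C = matmul(A, B)  # 2 x 2 result, four independent dot products
```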

Key Frameworks and Technologies

To facilitate GPGPU programming, several key frameworks and technologies have emerged. CUDA (Compute Unified Device Architecture), developed by NVIDIA, is one of the most prominent. CUDA provides a parallel computing platform and programming model that allows developers to use C, C++, and Fortran to write programs that leverage the power of NVIDIA GPUs. It offers a set of extensions to these languages, along with an API, that enable direct access to the GPU’s parallel execution resources.

OpenCL (Open Computing Language), an open standard maintained by the Khronos Group, is another significant framework. It supports programs that execute across heterogeneous platforms, including CPUs, GPUs, and other processors. Unlike CUDA, which is proprietary to NVIDIA hardware, OpenCL is designed to be vendor-neutral, promoting interoperability across different hardware manufacturers. These frameworks, along with others like ROCm (originally short for Radeon Open Compute platform) for AMD GPUs, have been instrumental in broadening access to GPU computing power and fostering a vibrant ecosystem of GPGPU applications.

GPU Applications in Modern Technology

The influence of the GPU extends far beyond gaming and professional graphics. Its computational prowess has become indispensable in a rapidly growing number of fields, driving innovation and enabling capabilities that were once the stuff of science fiction.

Artificial Intelligence and Machine Learning

Perhaps the most significant impact of the GPU in recent years has been in the field of Artificial Intelligence (AI) and Machine Learning (ML). Training deep neural networks, the backbone of many modern AI systems, involves an enormous number of matrix multiplications and other parallelizable operations. GPUs are exceptionally well-suited for these tasks, enabling researchers and developers to train increasingly complex models in a fraction of the time it would take using CPUs alone.
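To give a sense of scale, the sketch below counts the multiply-accumulate operations in one forward pass of a small fully connected network. The layer widths and batch size are hypothetical values chosen purely for illustration, and the count ignores biases and activation functions.

```python
def dense_layer_macs(batch, n_in, n_out):
    """Multiply-accumulate count for one (batch x n_in) @ (n_in x n_out) product."""
    return batch * n_in * n_out

# Hypothetical layer widths for a small fully connected network.
layer_sizes = [784, 512, 512, 10]
batch = 64

forward_macs = sum(
    dense_layer_macs(batch, n_in, n_out)
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
)
```

Even this toy configuration needs roughly 43 million multiply-accumulates per forward pass of a single batch, and training repeats that work, plus the backward pass, over many batches. Because each of those operations belongs to a matrix product, they map naturally onto the GPU’s parallel cores.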

The ability to rapidly iterate on model architectures and hyperparameters, thanks to GPU acceleration, has been a key driver of recent progress in AI. From natural language processing and computer vision to recommendation systems and autonomous driving, GPUs supply much of the compute behind these technologies. The ongoing development of specialized AI accelerators and further optimizations in GPU architectures continue to push the boundaries of what AI can achieve.

Scientific Research and Data Analysis

Beyond AI, GPUs are revolutionizing scientific research and data analysis across a multitude of disciplines. In fields like computational fluid dynamics, weather forecasting, climate modeling, and particle physics, GPUs enable researchers to run simulations at unprecedented scales and resolutions. This leads to more accurate predictions, deeper insights into complex phenomena, and the ability to explore scientific questions that were previously computationally infeasible.

The healthcare industry also benefits significantly. GPUs are used to accelerate medical image processing, drug discovery through molecular simulations, and genomic analysis. Financial institutions use GPUs for workloads such as trading analytics, fraud detection, and risk management, where the ability to process vast amounts of data quickly is critical. In essence, any field that involves large datasets and computationally intensive, parallelizable tasks can find a powerful ally in the GPU.

High-Performance Computing and Emerging Technologies

The rise of High-Performance Computing (HPC) clusters has been significantly fueled by the inclusion of GPUs. These clusters, composed of numerous interconnected nodes, often integrate GPUs to provide massive parallel processing power for tackling the world’s most complex computational challenges. This is crucial for scientific endeavors, national security initiatives, and the development of groundbreaking technologies.

Looking ahead, the GPU’s role is likely to expand even further. Its ability to process data rapidly and efficiently makes it a key component in advanced robotics, in the Internet of Things (IoT) with its ever-increasing data streams, and in quantum computing research, where GPUs are used to simulate quantum circuits on classical hardware. As computational demands continue to grow, the GPU, with its inherent parallelism and continuous evolution, will remain a cornerstone of technological advancement.