GPT-4o, released by OpenAI in May 2024, marks a significant step forward for conversational AI. Rather than an incremental update to GPT-4, it changes how the model handles input and output, and it does so more efficiently than its predecessor. The “o” in GPT-4o stands for “omni,” signifying its ability to process and generate information across text, audio, and vision within a single model. This moves beyond the text-based interactions that defined earlier models and opens up new ways to interact with and build on artificial intelligence.

The implications of GPT-4o extend across many technological domains, but its core strength is the way it combines multimodal processing with practical speed and cost. This article covers what GPT-4o is, its main features, and the impact it is poised to have on AI-driven technologies. We will look at its architecture, its performance, and the applications its capabilities unlock.

The Architecture and Evolution of GPT-4o

GPT-4o builds upon the foundational advancements of its predecessors, most notably GPT-4, while introducing crucial architectural changes that enhance its performance and versatility. Understanding these changes is key to appreciating the significance of this new model.

From Text-Centric to Multimodal Processing

Previous generations of large language models (LLMs) were primarily designed to excel in processing and generating text. While some could interact with other modalities through separate, specialized models, the integration was often clunky and inefficient. GPT-4o, however, is inherently multimodal. This means it was trained from the ground up to understand and generate content across different types of data simultaneously.

OpenAI describes GPT-4o as a single neural network trained end to end across text, audio, and visual information. This unified approach allows the model to develop a deeper, more contextual understanding of user inputs, regardless of their format. For instance, it can listen to spoken language, interpret an image, and then respond with a coherent, contextually relevant text explanation or with generated audio. This is a fundamental departure from earlier setups such as the previous ChatGPT Voice Mode, which OpenAI described as a pipeline of separate models for transcription, text reasoning, and speech synthesis. In such a pipeline the text model never hears tone of voice, multiple speakers, or background noise, and each handoff between components adds delay.
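The difference between a chain of separate components and a single model can be illustrated with a toy sketch. Nothing here reflects how GPT-4o is actually implemented: `SpokenInput`, `cascaded_pipeline`, and `unified_model` are hypothetical names, and the "models" are plain functions that only show which information survives each route.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SpokenInput:
    """Toy stand-in for an audio clip: the words plus how they were said."""
    words: str
    tone: str  # e.g. "frustrated" or "cheerful"


@dataclass
class ModelView:
    """What the reasoning step actually gets to see."""
    words: str
    tone: Optional[str]


def cascaded_pipeline(clip: SpokenInput) -> ModelView:
    """Speech-to-text first, then a text-only model (hypothetical stages)."""
    transcript = clip.words  # the transcription stage keeps words only
    return ModelView(words=transcript, tone=None)  # tone never reaches the model


def unified_model(clip: SpokenInput) -> ModelView:
    """A single model receives the clip directly, so tone is still available."""
    return ModelView(words=clip.words, tone=clip.tone)


clip = SpokenInput(words="My order still has not arrived.", tone="frustrated")
print("cascaded:", cascaded_pipeline(clip))
print("unified: ", unified_model(clip))
```

In the cascaded route the tone field is empty by the time the reasoning step runs, which is the kind of information loss described above.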

Enhanced Efficiency and Speed

A major practical gain with GPT-4o is its improved efficiency and speed. OpenAI reports that, in the API, GPT-4o is about twice as fast and half the price of GPT-4 Turbo. This optimization is crucial for enabling real-time, interactive AI experiences that were previously infeasible. Faster processing of complex requests lets users hold more natural, fluid conversations with the AI, and lets applications use it for tasks that require rapid decisions or immediate feedback.

OpenAI has not published the architectural details behind these gains. Plausible contributors include more efficient attention mechanisms and a more streamlined inference process, but these are informed guesses rather than documented facts. Whatever the cause, lower computational overhead for multimodal inputs makes advanced AI more accessible and practical for a wider range of applications and users. It also translates to lower operational costs for businesses deploying AI solutions, which should further accelerate adoption.

Improved Performance Across Benchmarks

According to OpenAI, GPT-4o matches GPT-4 Turbo on English text and code benchmarks while improving on non-English text, vision, and audio understanding. Its multimodal nature allows it to excel in tasks that require integrating information from different sources. For example, it can analyze an image of a graph and answer questions about the data it represents, or it can listen to a spoken explanation of a problem and then provide a detailed written solution.
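As a rough illustration of the graph example, the sketch below builds a request body that pairs a question with an image. The field names (`messages`, `content`, `type`, `image_url`) follow the Chat Completions message format as publicly documented at the time of writing; treat them as assumptions and check the current API reference before relying on them. The helper name `build_image_question` and the placeholder bytes are hypothetical, and the code only constructs the payload without sending it.

```python
import base64
import json


def build_image_question(image_bytes: bytes, question: str, model: str = "gpt-4o") -> dict:
    """Return a request body pairing a text question with an inline PNG image."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": question},
                    {
                        "type": "image_url",
                        # Assumed format: image passed inline as a base64 data URL.
                        "image_url": {"url": f"data:image/png;base64,{encoded}"},
                    },
                ],
            }
        ],
    }


# Placeholder bytes (just the PNG file signature), not a real chart.
fake_png = b"\x89PNG\r\n\x1a\n"
request = build_image_question(fake_png, "Which quarter shows the highest revenue?")
print(json.dumps(request, indent=2))
```

Keeping the text and the image in the same user message is what lets the model answer the question with reference to the picture, rather than handling each input in isolation.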

The model also shows significant improvements in its ability to understand nuances, context, and emotional tone in spoken language, making it a more empathetic and responsive conversational agent. This enhanced understanding contributes to more human-like interactions, which is a critical step towards developing AI that can truly collaborate with humans in complex tasks.

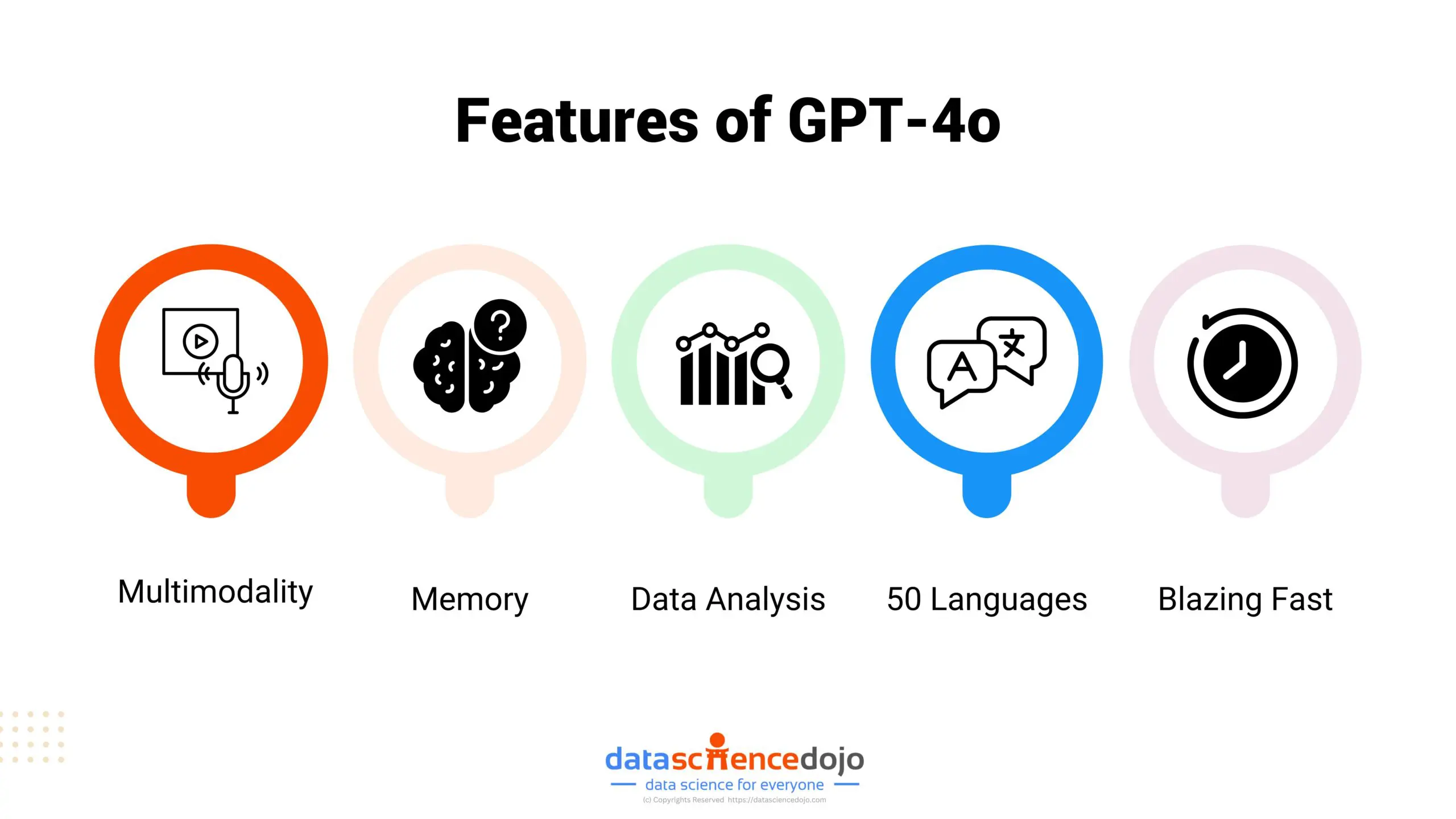

Groundbreaking Capabilities of GPT-4o

The architecture of GPT-4o underpins a suite of groundbreaking capabilities that set it apart from previous AI models and pave the way for novel technological applications.

Real-time Voice and Vision Interaction

One of the most compelling features of GPT-4o is its ability to engage in near real-time voice and vision interactions. Older systems often had a noticeable delay between a spoken command and a response, or between an image upload and its analysis. OpenAI reports that GPT-4o can respond to audio input in as little as 232 milliseconds, with an average of about 320 milliseconds, which is similar to human response time in conversation.
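For interactive use, the delay before the first piece of a response arrives often matters more than the total time. The sketch below measures both for any stream of text chunks. It is a minimal illustration, not a benchmark of GPT-4o: `fake_stream` is a hypothetical stand-in for a streaming response, and its delay is arbitrary.

```python
import time
from typing import Iterable, Iterator, Tuple


def fake_stream(chunks: Iterable[str], delay_s: float = 0.01) -> Iterator[str]:
    """Hypothetical stand-in for a streaming response; sleeps before each chunk."""
    for chunk in chunks:
        time.sleep(delay_s)
        yield chunk


def measure_stream(stream: Iterable[str]) -> Tuple[str, float, float]:
    """Consume a stream and return (full text, seconds to first chunk, total seconds)."""
    start = time.perf_counter()
    first_chunk_at = None
    parts = []
    for chunk in stream:
        if first_chunk_at is None:
            first_chunk_at = time.perf_counter() - start
        parts.append(chunk)
    total = time.perf_counter() - start
    if first_chunk_at is None:  # empty stream: no first chunk ever arrived
        first_chunk_at = total
    return "".join(parts), first_chunk_at, total


text, first, total = measure_stream(fake_stream(["Hello", ", ", "world"]))
print(f"text={text!r} first_chunk={first:.3f}s total={total:.3f}s")
```

The same `measure_stream` function could wrap a real streaming client, since it only assumes an iterable of strings.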

This capability enables a more natural and intuitive way for users to interact with AI. Imagine having a conversation with an AI assistant where you can interrupt, ask clarifying questions, and receive immediate, context-aware responses, all while showing it visual aids. For example, you could point your phone camera at a complex piece of machinery and ask the AI to explain its function, or show it a challenging math problem written on a whiteboard, and receive a step-by-step audio explanation. This level of interactivity has the potential to revolutionize education, customer support, and personal assistance.

Sophisticated Emotional Tone Detection and Generation

GPT-4o has shown a remarkable ability to detect and even generate emotional tones in conversation. This is a significant advancement in making AI interactions feel more human and empathetic. The model can analyze vocal inflections, word choice, and contextual cues to infer the emotional state of the speaker.

Conversely, GPT-4o can also generate responses with specific emotional characteristics. This means it can tailor its communication style to be more encouraging, empathetic, formal, or even playful, depending on the situation and user preference. This capability is crucial for applications where emotional intelligence is paramount, such as mental health support chatbots, educational tutors, or customer service agents designed to de-escalate situations. The nuanced understanding and generation of tone can foster deeper trust and more effective communication between humans and AI.

Enhanced Reasoning and Problem-Solving

Beyond its multimodal capabilities, GPT-4o demonstrates enhanced reasoning and problem-solving skills. Its ability to process information from various sources simultaneously allows it to tackle more complex and abstract problems. For instance, it can analyze a research paper (text), a diagram within it (vision), and then synthesize this information to answer intricate questions or propose novel solutions.

In the realm of coding, GPT-4o can understand code snippets, identify bugs, suggest optimizations, and generate new code from natural language descriptions. This analytical power makes it a valuable tool for developers, researchers, and anyone whose work involves intricate logical deduction and creative problem-solving. These improvements make the model more broadly useful across domains, though they should not be read as evidence of artificial general intelligence; its output still needs human review.

Impact on Tech & Innovation

The introduction of GPT-4o is poised to be a transformative force across the entire landscape of technology and innovation, unlocking new possibilities and accelerating existing trends.

Democratizing Advanced AI Capabilities

With its increased efficiency and reduced operational costs, GPT-4o makes sophisticated AI capabilities more accessible than ever before. This democratization means that smaller businesses, individual developers, and researchers with limited resources can now leverage cutting-edge AI without the prohibitive costs associated with previous models. This can lead to a surge in innovation, as a broader range of creators can experiment with and build upon advanced AI.

The ease of integration and use of GPT-4o, particularly its multimodal interaction, will lower the barrier to entry for developing AI-powered applications. This could lead to a proliferation of smart devices, personalized services, and intelligent tools that were previously only imaginable in science fiction.

Revolutionizing Human-Computer Interaction

GPT-4o is fundamentally changing how humans interact with computers. The shift from typing commands to natural, conversational dialogue, enhanced with visual and auditory cues, makes technology more intuitive and user-friendly. This is particularly impactful for individuals with disabilities or those who are less technologically proficient.

The ability of GPT-4o to understand context, emotion, and intent allows for more personalized and adaptive user experiences. AI assistants can become more proactive and helpful, anticipating user needs and providing assistance before being explicitly asked. This creates a more seamless and integrated technological environment where AI acts as a true partner rather than just a tool.

Driving Future AI Research and Development

The advancements seen in GPT-4o are likely to spur further research and development in the field of AI. Its success in unified multimodal processing should encourage more exploration into how to train and optimize models for even more complex data integrations and cognitive tasks. The gains in efficiency and speed also expand what is computationally feasible, opening doors to larger and more powerful models.

Furthermore, the ethical considerations and societal impacts of such advanced AI will become increasingly important areas of study and policy development. As AI becomes more capable and integrated into our lives, understanding its limitations, potential biases, and responsible deployment will be paramount. GPT-4o, by pushing the envelope of AI capabilities, also accelerates the conversation around these critical issues, guiding the future trajectory of AI innovation.

In conclusion, GPT-4o represents a major step forward in artificial intelligence. Its inherently multimodal architecture, improved efficiency, and strong performance across tasks position it as a key enabler of future technological advancements. From changing how humans interact with computers to broadening access to sophisticated AI, GPT-4o is set to expand what is possible in technology and innovation, ushering in more intelligent, intuitive, and integrated AI experiences.