Rapid advances in artificial intelligence have ushered in an era where digital content can be generated, manipulated, and disseminated with unprecedented ease and sophistication. Among the most controversial and ethically fraught applications of this technology is AI pornography. Far from being a niche concern, it represents a significant technological and societal challenge, shaping discussions on privacy, consent, digital authenticity, and trust in online interactions. The phenomenon calls for a thorough examination of its technical underpinnings, its ethical implications, and the countermeasures being developed.

The Emergence of AI Pornography: A Technological Landscape

AI pornography refers to sexually explicit content, whether still images or video, that has been generated or manipulated using artificial intelligence algorithms, typically without the consent of the individuals depicted. This goes beyond traditional image manipulation by leveraging powerful AI models to create highly realistic fabrications that are often indistinguishable from authentic footage. Its rise is a direct consequence of the democratization of sophisticated AI tools, which has made previously complex tasks accessible to a wider audience.

Defining AI Pornography

At its core, AI pornography encompasses content where an individual’s likeness (face, body, voice) is synthetically placed into explicit scenarios they never participated in, or where an entirely new, lifelike explicit image or video of a non-existent person is created. The key distinction lies in the AI’s role in synthesizing or altering the content, making it appear authentic. This can range from “deepfakes,” where a person’s face is swapped onto another body in an existing video, to entirely AI-generated content (often referred to as “synthetic media”) that fabricates everything from scratch using complex models trained on vast datasets. The target is often public figures, but increasingly, private individuals are also falling victim, highlighting the severe privacy implications.

The Underlying AI Technologies

The backbone of AI pornography lies in several cutting-edge AI technologies, primarily generative adversarial networks (GANs) and other deep neural network architectures. A GAN consists of two competing neural networks: a generator that creates new data (e.g., an image) and a discriminator that tries to distinguish between real and fake data. Through this adversarial process, the generator learns to produce increasingly convincing fakes.
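The adversarial process can be stated compactly. The formulation below is the standard textbook GAN objective rather than anything specific to a particular tool; the symbols are notation introduced here: G is the generator, D the discriminator, x a real sample, and z the random noise fed to the generator.

```latex
\min_{G} \max_{D} \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}(x)} \big[ \log D(x) \big]
  + \mathbb{E}_{z \sim p_{z}(z)} \big[ \log \big( 1 - D(G(z)) \big) \big]
```

The discriminator is rewarded for scoring real samples high and generated samples low, while the generator is rewarded when its output is scored as real. This same tension is what later makes detection an arms race.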

Deep learning models, especially convolutional neural networks (CNNs) and recurrent neural networks (RNNs), are integral to analyzing and synthesizing visual and auditory data. For deepfakes, sophisticated autoencoders and variational autoencoders (VAEs) are often used to encode and decode facial features, allowing for seamless face swapping. Furthermore, advancements in natural language processing (NLP) and text-to-image models (like Stable Diffusion or DALL-E) are making it possible to generate explicit images from simple text prompts, vastly expanding the ease of creation and posing new challenges.

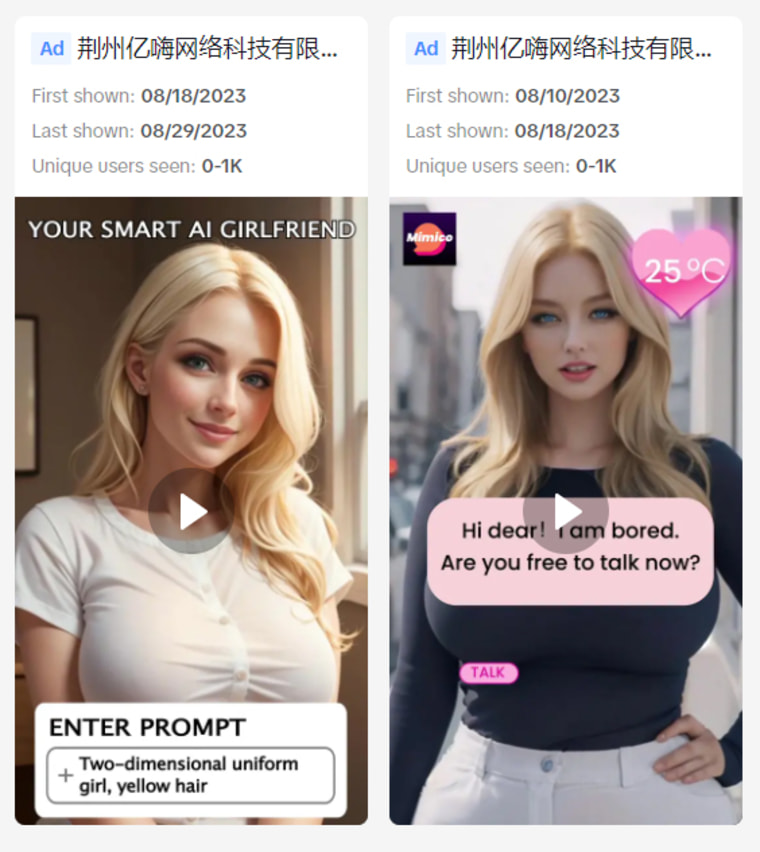

The Accessibility and Democratization of Creation

What makes AI pornography particularly insidious is the increasingly low barrier to entry for its creation. While early deepfake creation required significant technical expertise and computational power, open-source tools, user-friendly applications, and cloud-based services have dramatically simplified the process. Software packages and online platforms now allow individuals with minimal technical knowledge to create convincing AI-generated explicit content. This democratization of creation means that the technology is no longer confined to state-sponsored actors or highly skilled researchers but is readily available to anyone with malicious intent, amplifying the potential for widespread abuse and harassment. The accessibility extends not just to faces but also to body parts, voices, and entire scenarios, enabling comprehensive synthetic identity manipulation.

Technical Mechanisms and Methodologies

Understanding the technical methodologies behind AI pornography is crucial for grasping its capabilities and developing effective countermeasures. These mechanisms exploit the sophisticated pattern recognition and generation abilities of AI to craft convincing fictions.

Synthesizing Images and Videos

The primary method for creating AI pornography involves synthesizing images and videos. For deepfakes, the process typically involves training an AI model on a large dataset of images and videos of the target individual’s face from various angles and lighting conditions. Concurrently, another dataset of the source body/scene is used. The AI then learns to map the target’s facial features onto the source, ensuring expressions, head movements, and lighting are consistent. This often involves techniques like perceptual loss functions to ensure visual fidelity and temporal consistency algorithms to reduce flickering or unnatural movements in videos.

Beyond face-swapping, entirely synthetic images can be generated using text-to-image models. Users simply input a descriptive prompt, and the AI renders a novel image based on its training data, which might include explicit content. These models rely on diffusion processes, iteratively refining random noise into a coherent image guided by the provided text, offering immense creative freedom, for good or ill.
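For readers who want the idea of "refining random noise" in symbols, one common formulation of diffusion training (the denoising diffusion setup, given here as a general illustration and not as a description of any specific product) is sketched below. The notation is an assumption introduced for this sketch: x_0 is a training image, ε is Gaussian noise, ᾱ_t is a fixed noise schedule, c is the text conditioning, and ε_θ is the network being trained.

```latex
x_t = \sqrt{\bar{\alpha}_t}\, x_0 + \sqrt{1 - \bar{\alpha}_t}\, \epsilon,
  \qquad \epsilon \sim \mathcal{N}(0, I)

\mathcal{L}(\theta) =
  \mathbb{E}_{x_0,\, \epsilon,\, t}
  \Big[ \big\lVert \epsilon - \epsilon_\theta(x_t, t, c) \big\rVert^2 \Big]
```

The network learns to predict the noise that was added at each step. Generation then runs the process in reverse, starting from pure noise and removing a little of it at a time.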

Voice Cloning and Deep Audio

Complementing visual manipulation is the rise of voice cloning and “deep audio.” AI models, often based on neural networks like WaveNet or Tacotron, can learn to mimic a person’s voice and speech patterns from just a few seconds of audio. This cloned voice can then be used to generate new speech, saying anything the creator desires. When combined with deepfake videos, deep audio can create incredibly convincing synthetic media where the target individual not only appears in a fabricated scenario but also speaks fabricated dialogue. This adds another layer of authenticity and makes detection more challenging, as discrepancies between visual and auditory cues are often key indicators of manipulation.

Automated Content Generation and Personalization

A more advanced and disturbing aspect is the potential for automated content generation and personalization. AI can be trained on vast datasets of explicit material to automatically generate new content tailored to specific preferences or scenarios. This could involve creating “virtual porn stars” who don’t exist in reality, or generating explicit content featuring a specific likeness on demand. The personalization aspect means that content could be endlessly varied, dynamically adapted, and even made interactive, blurring the lines between reality and simulation in deeply unsettling ways. This capability threatens to flood the internet with an unprecedented volume of synthetic pornography, making content moderation and individual protection far more difficult.

The Broader Implications for Tech and Society

The technological prowess of AI pornography casts a long shadow, raising profound questions about ethics, digital trust, and the future of online regulation. It is a critical area where technological innovation collides directly with fundamental human rights and societal values.

Ethical Dilemmas and Consent

The most pressing ethical dilemma revolves around consent. AI pornography, particularly deepfakes, inherently involves the non-consensual use of an individual’s likeness for sexual exploitation. This constitutes a severe violation of privacy, dignity, and autonomy. It can lead to immense psychological distress, reputational damage, and even real-world harassment for victims. The technology facilitates revenge porn and sextortion on an industrial scale, allowing perpetrators to create and disseminate highly personalized and damaging content without ever having obtained the subject’s explicit permission. The legal frameworks around image rights and consent are struggling to keep pace with the fluidity and realism of AI-generated content.

The Challenge to Digital Trust and Authenticity

AI pornography fundamentally erodes digital trust and challenges the very notion of authenticity. When highly convincing synthetic media can be easily produced, the ability to discern truth from fiction becomes severely compromised. This “liar’s dividend” phenomenon, where genuine evidence can be dismissed as “just a deepfake,” has implications far beyond pornography, affecting journalism, legal proceedings, and political discourse. It fosters an environment of pervasive skepticism, making it harder to establish facts and hold individuals accountable. The technology weaponizes verisimilitude, forcing society to re-evaluate how it verifies information and establishes credibility in a digitally saturated world.

Regulatory and Policy Responses in the Digital Age

Governments and regulatory bodies worldwide are grappling with how to effectively address AI pornography. Responses vary, from outright bans and criminalization (e.g., in some U.S. states and countries like the UK) to requiring clear disclosure of AI-generated content. Key challenges include defining what constitutes “harm,” establishing jurisdiction across international borders, and balancing free speech concerns with the protection of individuals. Tech companies, as custodians of digital platforms, are also facing increasing pressure to implement robust content moderation policies and utilize AI detection tools to identify and remove such content. The debate often centers on whether to regulate the technology itself, its malicious use, or the platforms that host it.

Innovation in Detection and Countermeasures

In response to the proliferation of AI pornography, significant innovation is occurring in developing detection technologies and countermeasures. The fight against malicious AI-generated content is often an “AI vs. AI” battle, where advanced algorithms are used to combat the very technology that created the problem.

AI for AI: Developing Detection Algorithms

The most prominent countermeasure involves using AI to detect AI-generated content. Researchers are developing sophisticated detection algorithms that can identify subtle artifacts, inconsistencies, or unique digital fingerprints left by generative AI models. These detectors look for anomalies in facial expressions, eye blinking rates, lighting inconsistencies, pixel noise patterns, and other minute details that human eyes might miss but are characteristic of synthetic media. Machine learning models, trained on vast datasets of both real and fake content, are crucial in this arms race, constantly adapting as generative AI improves. This involves forensic analysis of the digital data itself, looking for traces of algorithmic manipulation.
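As a rough illustration of what "pixel noise patterns" can mean in practice, the sketch below computes one toy forensic feature: the share of an image's spectral energy that sits at high spatial frequencies. The function name `high_freq_energy_ratio` and the `cutoff` value are assumptions made for this example. A real detector would feed many such features, or the raw pixels, into a trained classifier, so this is a single input signal and not a detector in itself.

```python
import numpy as np

def high_freq_energy_ratio(gray, cutoff=0.25):
    """Fraction of spectral energy above `cutoff` cycles/pixel (toy feature)."""
    gray = np.asarray(gray, dtype=np.float64)
    gray = gray - gray.mean()                      # drop the DC component
    power = np.abs(np.fft.fftshift(np.fft.fft2(gray))) ** 2

    # Radial frequency of every FFT bin, in cycles per pixel.
    h, w = gray.shape
    fy = np.fft.fftshift(np.fft.fftfreq(h))
    fx = np.fft.fftshift(np.fft.fftfreq(w))
    radius = np.sqrt(fy[:, None] ** 2 + fx[None, :] ** 2)

    total = power.sum()
    if total == 0:                                 # perfectly flat image
        return 0.0
    return float(power[radius > cutoff].sum() / total)

# Hypothetical inputs: a smooth pattern versus pure noise.
x = np.linspace(0, 2 * np.pi, 64, endpoint=False)
smooth = np.sin(x)[:, None] + np.cos(x)[None, :]
noisy = np.random.default_rng(0).standard_normal((64, 64))

smooth_ratio = high_freq_energy_ratio(smooth)
noisy_ratio = high_freq_energy_ratio(noisy)
```

A feature like this only becomes useful when compared across large sets of known-real and known-synthetic images, which is why the trained models described above matter more than any single hand-built measurement.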

Digital Watermarking and Provenance

Another promising area of innovation is digital watermarking and provenance tracking. This involves embedding imperceptible digital signatures or metadata into images and videos at the point of creation, indicating their origin and whether they have been altered. Blockchain technology is also being explored to create immutable records of content provenance, allowing users to verify the authenticity of media by tracing its history. The idea is to create a trusted chain of custody for digital media, making it difficult to pass off synthetic content as genuine. However, challenges remain in making these watermarks resilient to sophisticated removal techniques and ensuring widespread adoption by content creators and platforms.
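The chain-of-custody idea can be sketched in a few lines. The example below binds a creator label to a hash of the media bytes and signs the pair, so any later change to the file invalidates the record. It is a minimal sketch under stated assumptions: the function names and the manifest fields are hypothetical, and a shared-secret HMAC stands in for the public-key signatures a real provenance system would use.

```python
import hashlib
import hmac
import json

def _signed_payload(media_bytes, creator):
    # Canonical JSON of the fields covered by the signature.
    record = {"creator": creator,
              "sha256": hashlib.sha256(media_bytes).hexdigest()}
    return record, json.dumps(record, sort_keys=True).encode("utf-8")

def make_manifest(media_bytes, creator, key):
    """Create a provenance record for the media at capture/creation time."""
    record, payload = _signed_payload(media_bytes, creator)
    record["signature"] = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return record

def verify_manifest(media_bytes, manifest, key):
    """Return True only if the media still matches its signed record."""
    _, payload = _signed_payload(media_bytes, manifest["creator"])
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, manifest["signature"])

# Hypothetical usage with placeholder bytes and a demo key.
key = b"demo-signing-key"
original = b"\x89PNG...original image bytes"
manifest = make_manifest(original, "newsroom-camera-01", key)

ok_original = verify_manifest(original, manifest, key)
ok_tampered = verify_manifest(original + b"edited", manifest, key)
```

Note the limitation this shares with the watermarking schemes discussed above: a record like this proves that a signed file is unchanged, but it says nothing about media that never carried a record in the first place.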

Platform Responsibility and Content Moderation Technologies

Technology platforms play a crucial role in mitigating the spread of AI pornography. This includes implementing robust content moderation policies, investing in advanced AI-powered detection and filtering systems, and proactively removing violative content. Many platforms now employ combinations of AI and human moderators to identify and take down deepfakes and other forms of AI pornography. Beyond removal, platforms are also exploring mechanisms for rapid reporting, victim support, and cooperation with law enforcement. The development of AI-driven content moderation tools that can process vast amounts of data in real-time is essential, given the sheer volume of content uploaded daily, to effectively curb the dissemination of harmful synthetic media.
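One building block often associated with this kind of filtering is hash matching: once an item has been confirmed as violative, the platform stores a compact fingerprint of it and checks new uploads against that list. The sketch below uses a simple "average hash" as the fingerprint. This is an assumption made for illustration, with hypothetical function names and threshold; production systems rely on more robust perceptual hashes.

```python
import numpy as np

def average_hash(gray, size=8):
    """64-bit perceptual fingerprint: block means thresholded at their mean."""
    gray = np.asarray(gray, dtype=np.float64)
    h, w = gray.shape
    h, w = h - h % size, w - w % size              # crop to a multiple of size
    blocks = gray[:h, :w].reshape(size, h // size, size, w // size)
    means = blocks.mean(axis=(1, 3))               # size x size grid of means
    return (means > means.mean()).flatten()

def hamming(a, b):
    """Number of differing bits between two fingerprints."""
    return int(np.count_nonzero(a != b))

def matches_blocklist(gray, blocklist, max_distance=6):
    """True if the image is within max_distance bits of any known hash."""
    candidate = average_hash(gray)
    return any(hamming(candidate, known) <= max_distance for known in blocklist)

# Hypothetical data: a flagged image, a re-encoded copy, an unrelated image.
rng = np.random.default_rng(0)
flagged = rng.random((64, 64))
reupload = flagged * 0.9 + 0.05                    # brightness/contrast shift
unrelated = rng.random((64, 64))

blocklist = [average_hash(flagged)]
hit = matches_blocklist(reupload, blocklist)
miss = matches_blocklist(unrelated, blocklist)
```

Matching on fingerprints rather than exact file hashes lets a re-encoded or slightly adjusted copy still be caught, and the platform only needs to retain the fingerprints, not the harmful media itself. It does nothing for newly created content, which is where the detection models and human moderators come in.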

The Future Trajectory of AI and Content Creation

The trajectory of AI and content creation is one of continuous evolution, bringing both immense creative potential and unprecedented risks. The challenge lies in harnessing AI’s power responsibly while mitigating its potential for harm.

Evolving Capabilities and Risks

AI’s capabilities in content generation are evolving at an astonishing pace. Future models will likely produce even more realistic, high-resolution, and contextually aware synthetic media, making detection increasingly difficult. We may see AI capable of generating entire narratives, films, or interactive experiences from minimal prompts, blurring the lines between human and machine creativity. Alongside this, the risks of sophisticated influence campaigns, hyper-personalized scams, and the erosion of shared reality will intensify. The “generative AI” revolution is still in its nascent stages, promising both wondrous artistic tools and terrifying instruments of deception.

The Imperative for Responsible AI Development

Addressing AI pornography and similar harms requires responsible AI development. This includes incorporating ethical guidelines, privacy-by-design principles, and safeguards into AI models from their inception. Researchers and developers must consider the potential for misuse of their technologies, build in technical limitations, and use “red-teaming” to identify vulnerabilities before deployment. Developing “explainable AI” (XAI) that provides transparency into how models make decisions can also aid in detecting malicious outputs. Promoting a culture of ethical AI among developers and institutions is paramount to ensure that technological progress serves humanity rather than undermining it.

Educating Users and Fostering Digital Literacy

Finally, a crucial aspect of navigating the future of AI and content creation involves educating users and fostering digital literacy. Citizens need to understand how AI-generated content works, recognize the signs of manipulation, and critically evaluate the authenticity of digital media. Media literacy programs must adapt to include discussions on synthetic media, the concept of digital provenance, and the importance of skepticism in a post-truth era. Empowering individuals with the knowledge and tools to discern genuine from fabricated content is a vital defense against the harms of AI pornography and other forms of digital deception. As AI continues to reshape our digital landscape, a collective commitment to ethical technology, robust regulation, and informed citizenry will be essential in navigating its complex future.