Every engineer, data scientist, and project manager eventually hits a moment when things simply don’t go as planned. We’ve all been there: an AI model delivers baffling results, an autonomous system veers unexpectedly, remote sensing data is riddled with inexplicable noise, or a complex mapping project yields an output that feels, well, a little “salty.” The word, borrowed from a colloquial expression of disappointment or frustration, captures the point at which a meticulously planned technical effort produces something off-kilter, problematic, or simply unappetizing. It’s not just a minor bug; it’s a fundamental deviation from expectation that threatens the integrity or success of the entire operation.

This article looks at what to do when our technological “fish” turns “salty,” focusing on AI follow mode, autonomous flight, mapping, and remote sensing. We will explore systematic approaches to diagnosing and correcting these problems, and to turning moments of frustration into opportunities for deeper understanding and more robust solutions. From deciphering anomalous data to recalibrating complex autonomous systems and fostering a culture of resilience, we’ll navigate the challenges of the unexpected.

Decoding the “Salty” Data: Identifying Anomalies in Remote Sensing and AI

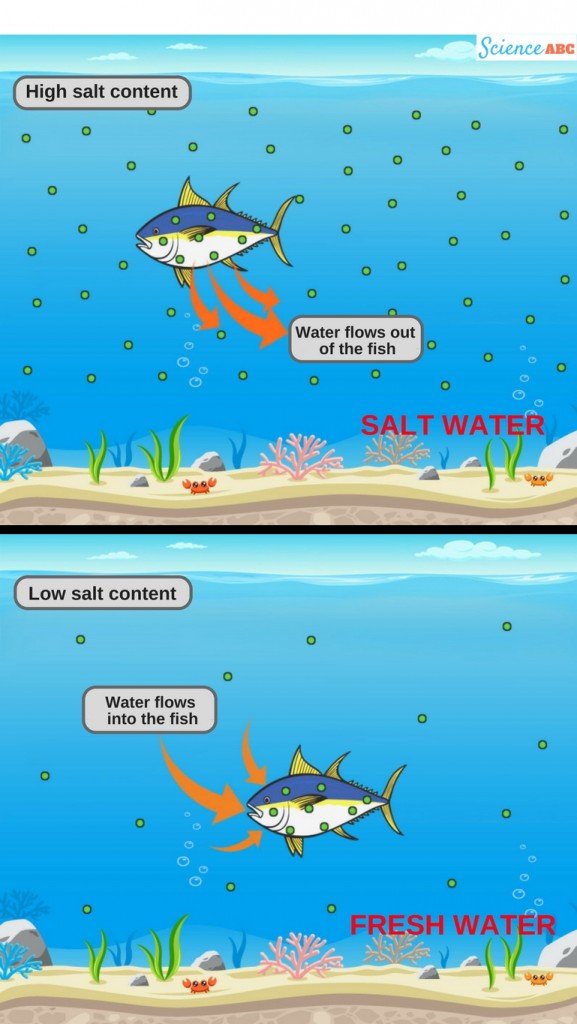

The bedrock of any innovative technology project, especially in areas like remote sensing and AI, is data. When this data, our digital “fish,” turns “salty”—meaning it’s inconsistent, corrupted, or misleading—the subsequent analyses and decisions become unreliable. Identifying these anomalies is the first critical step toward salvaging a project. This isn’t merely about finding missing values; it’s about discerning subtle patterns of error or deviation that can throw off an entire autonomous system or misguide an AI model.

Data Ingestion and Pre-processing Pitfalls

The journey of data from raw capture to insightful analysis is fraught with potential missteps. In remote sensing, data can be “salty” from the moment of ingestion due to sensor calibration issues, atmospheric interference, or transmission errors. For AI, training data can harbor hidden biases, incorrect labels, or incomplete records. The pre-processing phase, intended to clean and prepare data, can inadvertently introduce new problems if not handled with extreme care. For instance, aggressive normalization might obscure genuine outliers that hold critical information, while insufficient cleaning can propagate noise through an entire machine learning pipeline. When facing unexpected results, it’s imperative to re-examine the earliest stages: are the data sources reliable? Were calibration procedures followed rigorously? Is the data pipeline robust enough to handle varying input qualities? Tools for data lineage tracking and versioning become invaluable here, allowing engineers to pinpoint exactly where deviations might have been introduced and to roll back to a known good state if necessary. Validating data integrity at each stage—from acquisition to storage—is a proactive measure against “salty” outcomes.
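To make stage-by-stage validation concrete, here is a minimal sketch in Python. The `Reading` record, its field names, and the range limits are assumptions made for illustration; they are not taken from any particular sensor or pipeline. The idea is to reject obviously bad readings with a recorded reason, and to fingerprint each batch so two pipeline stages can be compared.

```python
from dataclasses import dataclass
import hashlib
import math


@dataclass(frozen=True)
class Reading:
    """Hypothetical sensor record; field names are illustrative only."""
    sensor_id: str
    timestamp: float  # seconds since epoch
    value: float      # measured quantity in whatever unit the sensor reports


def validate_readings(readings, lo, hi):
    """Split readings into (valid, rejected); each rejection carries a reason."""
    valid, rejected = [], []
    last_ts = {}  # last accepted timestamp per sensor
    for r in readings:
        if math.isnan(r.value) or math.isinf(r.value):
            rejected.append((r, "non-finite value"))
        elif not lo <= r.value <= hi:
            rejected.append((r, "out of physical range"))
        elif r.timestamp <= last_ts.get(r.sensor_id, float("-inf")):
            rejected.append((r, "timestamp not increasing"))
        else:
            valid.append(r)
            last_ts[r.sensor_id] = r.timestamp
    return valid, rejected


def batch_fingerprint(readings):
    """SHA-256 digest of a batch, so two stages can confirm they hold the same data."""
    h = hashlib.sha256()
    for r in readings:
        h.update(f"{r.sensor_id}|{r.timestamp!r}|{r.value!r}\n".encode())
    return h.hexdigest()
```

Storing the fingerprint alongside each stage’s output gives a cheap check that nothing changed between acquisition and storage; it does not replace a proper lineage or versioning tool.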

Spotting Irregularities in AI Model Outputs

Even with pristine data, AI models can produce “salty” outputs. This could manifest as an AI follow mode losing its target erratically, a mapping algorithm creating distorted terrain models, or a remote sensing classification incorrectly identifying land cover. Such irregularities are often symptoms of deeper issues within the model’s architecture, training methodology, or deployment environment. Observing poor performance is only the start; working out why the output is salty requires a multi-faceted approach. Explainable AI (XAI) techniques, such as SHAP or LIME, can help uncover which features or input segments are driving peculiar decisions. Visualizing model activations or attention mechanisms can reveal if the model is focusing on irrelevant aspects of the input. Furthermore, monitoring model performance against a diverse set of real-world scenarios, not just the training set, can expose edge cases where the model fails. A “salty” output isn’t always a sign of a broken model. Sometimes the model is simply encountering conditions it wasn’t adequately prepared for, which points to gaps in how well it generalizes or in how robust its learned features are.
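SHAP and LIME are full libraries in their own right, so the sketch below uses a simpler, model-agnostic relative instead: permutation importance. It shuffles one input feature at a time and measures how much accuracy drops. The function names and the tuple-based row format are assumptions for this example, and `model` is any callable that maps a row to a label.

```python
import random


def accuracy(model, rows, labels):
    """Fraction of rows for which the model's output equals the label."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)


def permutation_importance(model, rows, labels, n_repeats=20, seed=0):
    """Return (baseline accuracy, mean accuracy drop per feature).

    rows is a list of equal-length tuples. A feature whose shuffling barely
    changes accuracy is one the model is not relying on.
    """
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(len(rows[0])):
        drops = []
        for _ in range(n_repeats):
            column = [r[j] for r in rows]
            rng.shuffle(column)  # break the link between feature j and the label
            shuffled = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
            drops.append(baseline - accuracy(model, shuffled, labels))
        importances.append(sum(drops) / n_repeats)
    return baseline, importances
```

If a feature that should be irrelevant (say, image brightness in a follow-mode tracker) shows a large drop, that is a hint the model has latched onto the wrong signal.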

Ground Truthing and Validation Strategies

When theoretical models and observed realities diverge, ground truthing becomes the ultimate arbiter. In remote sensing and mapping, this means physically verifying features on the ground that have been identified or measured from aerial or satellite platforms. If an autonomous agricultural drone, for instance, uses remote sensing to detect crop stress, ground truthing involves on-site inspection and lab analysis of plant samples to confirm the drone’s assessment. For AI systems, particularly those involved in autonomous flight or object recognition, validation extends beyond simple accuracy metrics. It involves rigorous testing in simulated environments that mimic real-world complexity, followed by controlled field tests. A “salty” outcome might indicate that the model performs well on metrics but fails on crucial real-world behaviors or safety constraints. Robust validation involves diverse datasets, adversarial testing, and blind evaluations to ensure the system is not merely accurate on paper but truly reliable and trustworthy in its intended application. When the “fish is salty,” the answer often lies in going back to basics and comparing digital predictions with physical realities.
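As a small illustration of comparing digital predictions with physical realities, the sketch below tallies model predictions against field-verified labels. The class names (“stressed”, “healthy”) and the sample data in the usage note are invented for the example.

```python
from collections import Counter


def confusion_counts(predicted, ground_truth):
    """Count (true label, predicted label) pairs."""
    if len(predicted) != len(ground_truth):
        raise ValueError("predicted and ground_truth must have equal length")
    return Counter(zip(ground_truth, predicted))


def per_class_recall(predicted, ground_truth):
    """For each true class, the fraction of its samples the model got right."""
    counts = confusion_counts(predicted, ground_truth)
    totals = Counter(ground_truth)
    return {c: counts[(c, c)] / totals[c] for c in totals}
```

With predictions `["stressed", "healthy", "healthy", "stressed", "healthy"]` and field labels `["stressed", "stressed", "healthy", "stressed", "healthy"]`, overall accuracy is 4 out of 5, yet recall for the stressed class is only 2 out of 3. That is the kind of gap a single headline metric can hide.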

Course Correction in Autonomous Systems: Re-calibrating for Smooth Operations

Autonomous systems, from drones with AI follow mode to self-navigating mapping vehicles, are designed to operate independently. However, their complex interplay of sensors, software, and actuators means that unexpected deviations can occur, making the “fish salty.” These deviations demand swift and precise course correction to maintain operational integrity and safety.

Sensor Fusion and GPS Drift Management

A common source of “salty” behavior in autonomous systems is the subtle degradation or misinterpretation of sensor data. GPS drift, inherent in satellite navigation, can cause an autonomous drone to gradually deviate from its intended flight path. When GPS is fused with other sensors such as IMUs (Inertial Measurement Units), LiDAR, or visual odometry, minor discrepancies between the sources can compound into significant errors if the fusion is poorly tuned. If an autonomous drone’s AI follow mode starts to lag or overshoot its target, it’s often a sign that sensor inputs are not being harmonized effectively. The “what do I do” here involves sensor fusion algorithms, such as Kalman filters or extended Kalman filters, that combine data from multiple sources, weight each source by its estimated reliability, and mitigate individual sensor noise. Regular calibration of all onboard sensors, especially after environmental changes or system updates, is paramount. Furthermore, integrating differential GPS (DGPS) or RTK (Real-Time Kinematic) GPS systems can dramatically reduce positional errors, ensuring that the autonomous “fish” swims precisely where it’s supposed to.
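The weighting idea behind a Kalman filter is easiest to see in one dimension. The sketch below is a deliberately simplified scalar filter, assuming position is predicted from an IMU-derived velocity and corrected by a GPS fix; the parameter names and the noise values `q` and `r` are placeholders, and a real flight stack would use a multi-state (and usually extended) filter.

```python
def kalman_step(x, p, velocity, dt, gps_position, q, r):
    """One predict/update cycle of a scalar Kalman filter.

    x, p          current position estimate and its variance
    velocity, dt  IMU-derived velocity and time step (prediction input)
    gps_position  GPS measurement of position
    q, r          process and measurement noise variances (tuning assumptions)
    """
    # Predict: dead-reckon forward; uncertainty grows by the process noise.
    x_pred = x + velocity * dt
    p_pred = p + q
    # Update: the gain k is the share of trust given to the GPS fix.
    k = p_pred / (p_pred + r)
    x_new = x_pred + k * (gps_position - x_pred)
    p_new = (1.0 - k) * p_pred
    return x_new, p_new, k


def fuse_track(x0, p0, velocities, gps_positions, dt, q, r):
    """Run the filter over paired velocity and GPS sequences."""
    x, p = x0, p0
    estimates = []
    for v, z in zip(velocities, gps_positions):
        x, p, _ = kalman_step(x, p, v, dt, z, q, r)
        estimates.append(x)
    return estimates
```

A large `r` (noisy GPS) pushes the gain toward zero, so the estimate follows the IMU prediction; a small `r` pulls it toward the GPS fix. Lag or overshoot in follow mode is often a sign that these variances no longer match the sensors’ real behavior.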

Adaptive Path Planning and Obstacle Avoidance Adjustments

An autonomous system’s ability to plan and execute paths while avoiding obstacles is central to its utility. When this fails—e.g., an autonomous mapping drone deviates into restricted airspace or an AI follow mode system repeatedly misjudges distances—the “fish is salty.” The solution often lies in enhancing the system’s adaptive capabilities. This involves not just static obstacle maps but dynamic, real-time sensing and re-planning. If a drone encounters an unexpected gust of wind or an unforeseen temporary obstruction, its path planning algorithm must be robust enough to recalculate an optimal, safe route instantly. Advanced techniques like Model Predictive Control (MPC) allow the system to predict future states and optimize control inputs over a receding horizon, making it more resilient to disturbances. For obstacle avoidance, the ability to discern static versus dynamic objects, and to predict the movement of dynamic objects, is crucial. This requires sophisticated perception algorithms, often leveraging machine learning on LiDAR, radar, and camera data. Regular updates to these algorithms, trained on diverse real-world scenarios, are essential to keep the autonomous system’s navigation capabilities sharp and responsive.
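Model Predictive Control is too involved for a short listing, so the sketch below shows only the re-planning half of the story on a toy occupancy grid: keep the current path while it is clear, and search again from the current cell when a newly sensed obstacle blocks it. The grid encoding (0 free, 1 blocked) and the function names are assumptions for this example, and breadth-first search stands in for whatever planner a real system uses.

```python
from collections import deque


def shortest_path(grid, start, goal):
    """Breadth-first search on a 4-connected grid; returns a list of cells or None."""
    rows, cols = len(grid), len(grid[0])
    parent = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:  # walk parents back to the start
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] == 0 and nxt not in parent):
                parent[nxt] = cell
                queue.append(nxt)
    return None  # goal unreachable


def replan_if_blocked(grid, path, position, goal):
    """Keep the rest of the path if it is still free, otherwise plan again."""
    remaining = path[path.index(position):]
    if all(grid[r][c] == 0 for r, c in remaining):
        return remaining
    return shortest_path(grid, position, goal)
```

A `None` result is itself useful information: it is the planner saying there is no safe route, which is a natural trigger for a hover, a return-to-home, or a request for operator input.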

Human-in-the-Loop Protocols for Autonomous Failures

Despite advancements, complete autonomy without any human oversight is often undesirable, especially when the “fish is salty.” Establishing clear human-in-the-loop protocols is a vital safety net. This means defining explicit thresholds and conditions under which an autonomous system signals for human intervention or automatically reverts to a failsafe mode. For an AI follow mode drone, the trigger could be the target moving beyond a preset distance or the battery dropping below a set level, either of which starts a return-to-home sequence or a request for manual control. For large-scale autonomous mapping operations, operators must be able to pause, redirect, or abort missions remotely if anomalies are detected in real-time data feeds or flight parameters. The design of these human interfaces must be intuitive, providing critical information succinctly to allow for rapid, informed decision-making. Training operators to recognize “salty” behaviors and to execute emergency procedures is just as important as the underlying autonomous technology. A well-designed human-in-the-loop system turns potentially catastrophic failures into manageable incidents, ensuring that even when the autonomous “fish” struggles, a skilled human hand is ready to guide it back to calm waters.
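Explicit thresholds are easy to state and easy to get wrong, so it helps to write them down as code that can be reviewed and tested. The sketch below is one hypothetical way to do that; the limit values, field names, and the choice of which condition maps to which action are all assumptions for illustration, not recommendations for any real aircraft.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    REQUEST_MANUAL_CONTROL = "request_manual_control"
    RETURN_TO_HOME = "return_to_home"


@dataclass(frozen=True)
class Limits:
    """Hypothetical thresholds; real values depend on the platform and mission."""
    max_target_distance_m: float = 30.0
    min_battery_fraction: float = 0.25
    max_link_silence_s: float = 2.0


def decide(target_distance_m, battery_fraction, link_silence_s, limits=Limits()):
    """Map the current telemetry to a failsafe action, most urgent check first."""
    if battery_fraction < limits.min_battery_fraction:
        return Action.RETURN_TO_HOME
    if link_silence_s > limits.max_link_silence_s:
        # No point asking the operator for help over a silent link.
        return Action.RETURN_TO_HOME
    if target_distance_m > limits.max_target_distance_m:
        return Action.REQUEST_MANUAL_CONTROL
    return Action.CONTINUE
```

Ordering the checks by urgency keeps the behavior predictable when several limits are crossed at once, which is exactly when operators most need the system to do the obvious thing.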

Innovative Solutions for “Salty” Project Outcomes: Leveraging Advanced Tech

When the initial attempts at troubleshooting a “salty” tech outcome fall short, it’s time to elevate the approach. This involves leveraging the very innovations that define the “Tech & Innovation” category to develop more sophisticated, often predictive, solutions to mitigate future issues and optimize performance.

AI-Driven Diagnostics and Predictive Maintenance

One of the most potent answers to a “salty” system is to let AI analyze its own health. Instead of reactively fixing issues, AI-driven diagnostics can monitor system parameters, sensor readings, and operational logs to detect subtle patterns indicative of impending failures. For autonomous drones, this might mean an AI model analyzing motor vibrations, battery discharge rates, or IMU noise to predict when a component is likely to fail before it affects an autonomous flight or mapping mission. This transitions from traditional troubleshooting to predictive maintenance, where components are replaced or serviced proactively, preventing “salty” incidents from occurring in the first place. Machine learning models, trained on historical failure data and operational logs, can identify anomalous behavior that a human might miss, providing early warnings and actionable insights. This proactive approach significantly reduces downtime, enhances reliability, and ensures that the “fish” remains fresh.
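A trained failure-prediction model needs historical data that a short example cannot supply, so the sketch below shows a much simpler statistical baseline instead: a rolling z-score that flags readings far outside the recent norm. The window size, the threshold, and the idea of feeding it motor vibration amplitudes are assumptions for illustration.

```python
from collections import deque
import statistics


def rolling_zscore_alerts(samples, window=20, threshold=3.0):
    """Return indices of samples that deviate strongly from the preceding window.

    Each sample is compared against the previous `window` samples and then
    added to the history. Nothing is flagged until the window is full.
    """
    history = deque(maxlen=window)
    alerts = []
    for i, x in enumerate(samples):
        if len(history) == window:
            mean = statistics.fmean(history)
            stdev = statistics.pstdev(history)
            # A zero spread means no basis for a z-score, so skip the check.
            if stdev > 0 and abs(x - mean) / stdev > threshold:
                alerts.append(i)
        history.append(x)
    return alerts
```

A baseline like this is a sensible first step before investing in a learned model: if it already catches the failures you care about, the extra complexity may not be needed, and if it doesn’t, its misses tell you what the learned model has to do better.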

Reinforcement Learning for System Optimization

When an autonomous system consistently exhibits suboptimal or “salty” behavior in specific scenarios, traditional programming might struggle to provide a comprehensive fix. This is where reinforcement learning (RL) shines. An RL agent learns behavior through trial and error, interacting with an environment and receiving rewards or penalties for its actions. For an AI follow mode, an RL agent could learn to adjust its speed, altitude, and tracking parameters dynamically to maintain a smoother, more consistent follow in various terrains and weather conditions, without explicit programming for every scenario. In autonomous mapping, RL could optimize sensor placement and flight patterns to maximize data collection efficiency and quality under different environmental constraints. By allowing the system to discover good control policies through experience, reinforcement learning offers a powerful paradigm for moving beyond reactive fixes to proactive, self-improving performance, turning “salty” behaviors into finely tuned, optimized operations.
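To show the trial-and-error loop in its smallest form, here is a toy tabular Q-learning sketch. The environment is invented for the example: the state is the drone’s offset from its ideal follow position (five discrete values), each action shifts that offset by one step or holds, and the reward is the negative squared offset. A real follow mode would have a continuous, far richer state and would normally be trained in simulation.

```python
import random

OFFSETS = [-2, -1, 0, 1, 2]  # discrete offset from the ideal follow position
ACTIONS = [-1, 0, 1]         # shift the offset down, hold, or shift it up


def step(offset, action):
    """Toy environment: apply the action, clamp to range, penalize squared error."""
    new_offset = max(-2, min(2, offset + action))
    return new_offset, -float(new_offset ** 2)


def train(episodes=500, steps=10, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning with epsilon-greedy exploration."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in OFFSETS for a in ACTIONS}
    for _ in range(episodes):
        s = rng.choice(OFFSETS)
        for _ in range(steps):
            if rng.random() < epsilon:
                a = rng.choice(ACTIONS)  # explore
            else:
                a = max(ACTIONS, key=lambda act: q[(s, act)])  # exploit
            s2, reward = step(s, a)
            best_next = max(q[(s2, act)] for act in ACTIONS)
            q[(s, a)] += alpha * (reward + gamma * best_next - q[(s, a)])
            s = s2
    return q


def greedy_policy(q):
    """Best known action for each state."""
    return {s: max(ACTIONS, key=lambda act: q[(s, act)]) for s in OFFSETS}
```

Nothing in the code says “move toward zero offset”; that behavior emerges from the reward alone. The flip side is that a badly chosen reward produces a confidently wrong policy, so reward design deserves the same scrutiny as any other specification.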

Blockchain for Data Integrity and Trust

In complex ecosystems involving multiple autonomous systems, vast datasets, and various stakeholders (e.g., in smart city mapping or remote sensing for environmental monitoring), ensuring data integrity and fostering trust can be a significant challenge, often leading to “salty” disputes over data authenticity. Blockchain technology offers one way to address this. By recording data transactions, sensor readings, and system operations in an append-only, distributed ledger, a blockchain makes later changes to those records evident and helps establish the provenance of data. If an autonomous drone records environmental data, that data (or a cryptographic hash of it) can be time-stamped and recorded on a blockchain, providing an auditable trail for its origin and integrity. This is particularly valuable in applications where data is used for regulatory compliance or scientific research. When data is questionable or “salty,” the ledger can quickly reveal whether it has been altered since it was recorded. It cannot, however, show that a reading was accurate when it was first captured, so sensor calibration and validation remain necessary. Used this way, the technology deters malicious data manipulation and builds a foundation of trust among participants, so that the insights derived from the data rest on a verifiable record.
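The tamper-evidence property comes from chaining hashes, and that part can be sketched in a few lines. The example below is a single-machine hash chain, not a blockchain: it has no distribution, consensus, or signatures, and the record layout and function names are assumptions for illustration.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(prev_hash, payload):
    """Hash the previous record's hash together with a canonical JSON payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_record(chain, payload):
    """Append a payload, linking it to the hash of the record before it."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({
        "payload": payload,
        "prev": prev_hash,
        "hash": record_hash(prev_hash, payload),
    })


def first_invalid_index(chain):
    """Return the index of the first record that fails verification, or None."""
    prev_hash = GENESIS
    for i, block in enumerate(chain):
        expected = record_hash(prev_hash, block["payload"])
        if block["prev"] != prev_hash or block["hash"] != expected:
            return i
        prev_hash = block["hash"]
    return None
```

Because every hash depends on the one before it, editing an old reading invalidates that record, and recomputing its hash to hide the edit breaks the link to the next one. What a real blockchain adds is many independent copies of the chain, so that no single party can quietly rewrite all of it.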

Cultivating Resilience: Building Robustness into Your Tech Ventures

Ultimately, addressing “salty” outcomes in tech and innovation isn’t just about fixing immediate problems; it’s about building systems and processes that are inherently resilient, capable of anticipating, withstanding, and recovering from future challenges.

Iterative Development and Agile Methodologies

The journey of innovation is rarely a straight line. When an autonomous system or AI model encounters unexpected behaviors, trying to achieve perfection in one go often leads to more “salty” experiences. Embracing iterative development and agile methodologies is crucial. Instead of monolithic development cycles, breaking projects into smaller, manageable sprints allows for frequent testing, feedback, and adaptation. If an initial AI follow mode struggles with rapid changes in target speed, an agile approach allows developers to quickly iterate on the algorithm, test the fix, and deploy an improved version without overhauling the entire system. This continuous cycle of planning, execution, evaluation, and adaptation means that “salty” issues are identified early, addressed promptly, and fed into subsequent iterations, leading to a more robust and refined product over time. It transforms problems from roadblocks into learning opportunities.

Establishing Comprehensive Testing Frameworks

The antidote to many “salty” tech outcomes lies in exhaustive testing. This extends beyond basic unit and integration tests to encompass a wide array of simulation, stress, and adversarial testing. For autonomous flight systems, this means simulating millions of flight hours under diverse weather conditions, sensor failures, and unexpected scenarios that would be impractical or dangerous to conduct in the real world. For AI models, adversarial testing involves deliberately feeding the model inputs designed to trick or confuse it, thereby identifying vulnerabilities before they can be exploited. Comprehensive testing also includes continuous integration/continuous deployment (CI/CD) pipelines, ensuring that every code change is automatically tested, reducing the likelihood of introducing new “salty” bugs. A robust testing framework acts as a critical immune system for your tech projects, proactively identifying weaknesses and building resilience against unforeseen challenges.
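Simulated sensor failures are one of the cheaper forms of this kind of testing to set up. The sketch below is a small fault-injection harness, assuming three redundant altimeters and a median vote; the fault values, noise level, and function names are invented for the example. It corrupts one sensor per trial and checks that the fused altitude stays within the range of the two healthy readings.

```python
import random
import statistics


def fused_altitude(readings):
    """Median vote across redundant altimeters; tolerates one wild reading in three."""
    return statistics.median(readings)


def run_fault_injection(fuse=fused_altitude, trials=1000, seed=0):
    """Corrupt one of three sensors per trial and collect any invariant violations."""
    rng = random.Random(seed)
    failures = []
    for t in range(trials):
        true_alt = rng.uniform(5.0, 120.0)
        readings = [true_alt + rng.gauss(0.0, 0.2) for _ in range(3)]
        faulty = rng.randrange(3)
        healthy = [v for i, v in enumerate(readings) if i != faulty]
        # Injected fault: stuck-at-zero or a wild spike in either direction.
        readings[faulty] = rng.choice([0.0, 1e6, -1e6])
        fused = fuse(readings)
        if not min(healthy) <= fused <= max(healthy):
            failures.append((t, readings, fused))
    return failures
```

Running the same harness with `fuse=statistics.fmean` produces a long list of failures, which is the point: the test is only worth keeping if it can tell a fault-tolerant design from a fragile one. A harness like this slots naturally into a CI pipeline so the check runs on every change.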

Fostering a Culture of Continuous Improvement

Beyond technical solutions, the most powerful tool against “salty” tech outcomes is a culture that embraces continuous improvement and learning from failures. When an autonomous drone misbehaves or an AI model underperforms, the response shouldn’t be blame, but inquiry. What went wrong? Why? How can we prevent it from happening again? This involves conducting thorough post-mortem analyses, documenting lessons learned, and sharing insights across teams. Implementing feedback loops from field operations back to R&D is vital. Encouraging experimentation, even if it leads to occasional “salty fish,” fuels innovation. Companies that cultivate an environment where asking “What do I do?” is met with collaborative problem-solving and a commitment to evolution will ultimately build more resilient, innovative, and successful technologies. The “salty fish” becomes not a failure, but a catalyst for growth and a stepping stone towards more refined and reliable technological solutions.

Conclusion

The journey through Tech & Innovation is one of constant discovery, punctuated by moments when our meticulously crafted systems or carefully gathered data reveal unexpected flaws – when our “fish turns salty.” Far from being insurmountable obstacles, these challenges are invaluable opportunities for growth. By systematically decoding anomalous data, recalibrating autonomous systems with precision, deploying innovative AI and blockchain solutions, and embedding a culture of resilience through iterative development and comprehensive testing, we transform these “salty” moments into profound advancements. The question “What do I do?” is not one of helplessness, but a call to action, driving us to refine our understanding, enhance our technologies, and ultimately, engineer a future where our innovations are not just intelligent, but also robust, reliable, and truly revolutionary.