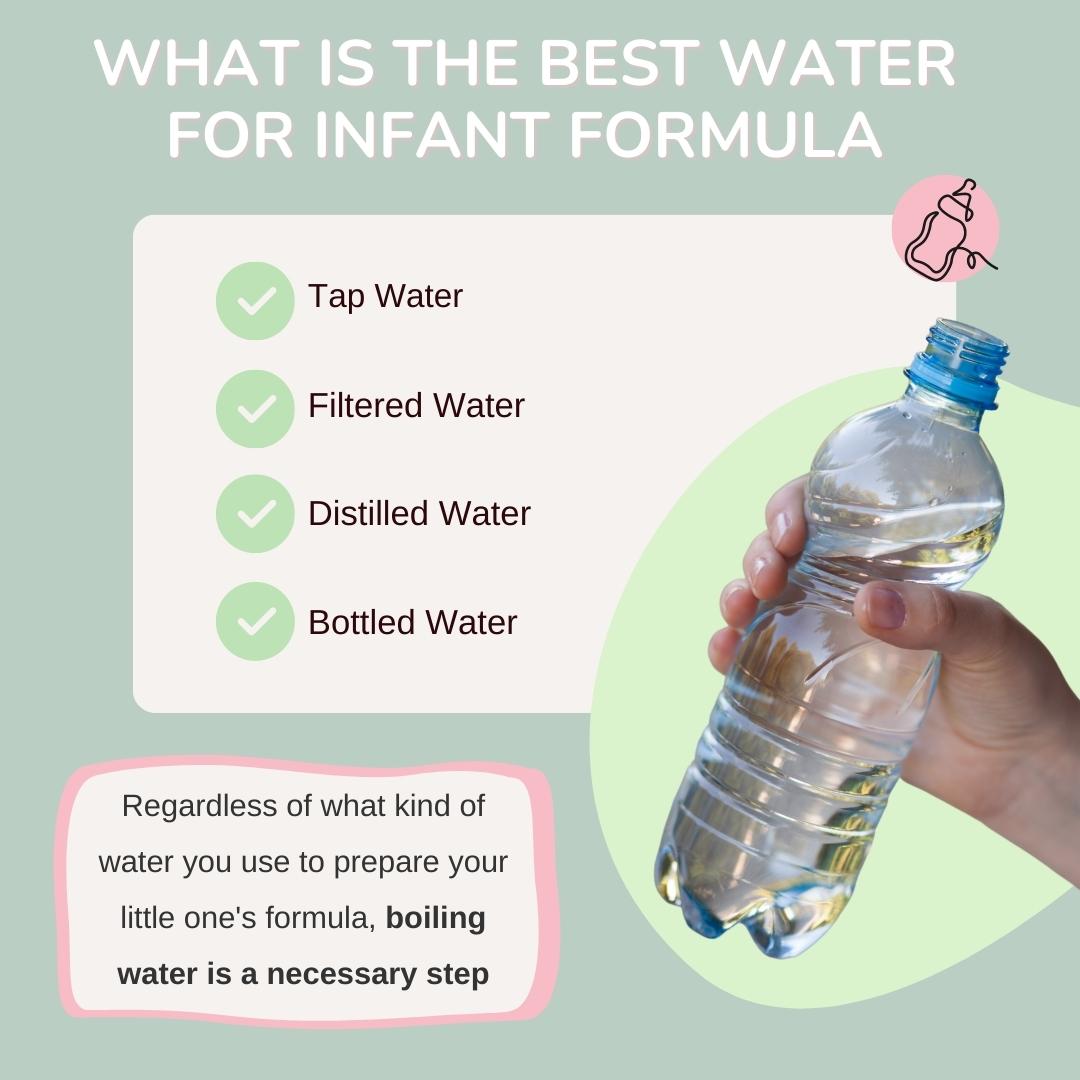

As AI-driven autonomous systems, sophisticated mapping, and remote sensing become commonplace, the quality of the underlying data matters more than ever. Just as the type and purity of water are critical when preparing baby formula, the “water” that feeds our intelligent systems, namely data, determines their effectiveness, reliability, and ethical standing. Without clean, well-sourced, and appropriately processed data, even the most advanced algorithms risk becoming ineffective, biased, or dangerous. This article covers the main considerations for selecting and refining the data foundation behind the next generation of tech and innovation.

The Critical Role of Data Purity in AI and Autonomous Systems

The pursuit of truly intelligent machines hinges entirely on the information they consume and learn from. For AI Follow Mode on drones, precise obstacle avoidance in autonomous flight, or hyper-accurate mapping and remote sensing, the integrity of data is not merely an advantage; it is an absolute necessity.

“Garbage In, Garbage Out”: The Fundamental Truth of AI Training

The adage “garbage in, garbage out” (GIGO) rings particularly true in machine learning and AI development. If an autonomous drone is trained on visual data that is blurry, mislabeled, or incomplete, its ability to accurately identify objects, track targets, or navigate complex environments will be severely compromised. Similarly, a remote sensing platform processing noisy or improperly calibrated data will yield inaccurate maps, leading to flawed insights in agriculture, urban planning, or disaster response. The robustness and reliability of an AI system depend heavily on the quality and relevance of its training data.

Bias and Noise: Contaminants in the Data Stream

Data is rarely perfectly pristine. It can be plagued by various contaminants, much like water can contain impurities.

- Bias: This is perhaps the most insidious contaminant. Training data can carry societal biases (e.g., facial recognition systems trained predominantly on certain demographics perform poorly on others) or operational biases (e.g., flight data collected only in ideal weather leads to poor performance in adverse weather). A model trained on such data will tend to reproduce these biases and can amplify them, leading to discriminatory outcomes or significant operational failures.

- Noise: Random errors, irrelevant information, or inconsistencies within datasets constitute noise. In sensor data for autonomous navigation, noise can manifest as erratic readings from GPS, IMUs, or LiDAR, leading to jittery movements or misinterpretations of the environment. While some noise is inherent, excessive noise obscures meaningful patterns, making it challenging for AI models to learn effectively.

- Missing Data: Incomplete records leave gaps in an AI’s understanding. The model is then forced to make assumptions, or the system fails when it encounters scenarios its training did not cover. For critical systems like autonomous flight, even minor data omissions can have catastrophic consequences.
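
One way to surface these contaminants before training is a simple dataset audit. The sketch below is illustrative only: it assumes each record is a dictionary with hypothetical `weather` and `altitude_m` fields (invented here, not taken from any real dataset), and it reports how each condition is represented and how often a field is missing.

```python
from collections import Counter

def condition_shares(records, key):
    """Return the fraction of records observed under each value of `key`."""
    counts = Counter(r.get(key) for r in records)
    total = sum(counts.values())
    return {value: count / total for value, count in counts.items()}

def missing_rate(records, key):
    """Return the fraction of records where `key` is absent or None."""
    return sum(1 for r in records if r.get(key) is None) / len(records)

# Hypothetical flight log records, invented for illustration.
flights = [
    {"weather": "clear", "altitude_m": 50.0},
    {"weather": "clear", "altitude_m": 48.5},
    {"weather": "clear", "altitude_m": None},
    {"weather": "rain", "altitude_m": 51.2},
]

shares = condition_shares(flights, "weather")  # how often each condition appears
gaps = missing_rate(flights, "altitude_m")     # share of records lacking altitude
```

A heavily skewed `shares` result (here, mostly clear-weather flights) is a hint of operational bias, while a high `gaps` value points to a missing-data problem that needs handling before training.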

Ethical Implications of Compromised Data

Beyond operational failures, compromised data carries significant ethical weight. Biased algorithms can perpetuate discrimination in hiring, law enforcement, or financial services. Privacy breaches due to inadequately protected or anonymized data can erode public trust. In the context of drone technology, imagine an AI-powered surveillance drone whose object recognition system is biased, leading to misidentification and incorrect actions, or a delivery drone making decisions based on incomplete spatial data, potentially endangering public safety. Ensuring data purity is not just a technical challenge but a profound ethical responsibility.

Sourcing the “Water”: Diverse Data Streams for Robust Systems

Just as a balanced diet requires various nutrients, robust AI and autonomous systems thrive on diverse data streams. The source and nature of data profoundly impact an AI’s adaptability and intelligence.

Sensor Fusion: Integrating Multi-Modal Data for Comprehensive Understanding

Modern autonomous platforms, such as drones, are equipped with an array of sensors: cameras (RGB, thermal, multispectral), LiDAR, radar, GPS, IMUs (Inertial Measurement Units), and more. Each sensor provides a unique “perspective” on the environment. Sensor fusion is the process of combining data from multiple sensors to gain a more complete and accurate understanding than any single sensor could provide alone. For instance, a drone using AI for autonomous navigation might fuse visual data from cameras with depth information from LiDAR and positional data from GPS/IMU to create a highly accurate 3D map of its surroundings, enhancing obstacle avoidance and path planning even in low-light conditions. This multi-modal approach reduces uncertainty and improves resilience to individual sensor failures.
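
To make the idea concrete, here is a minimal sketch of one simple fusion rule, inverse-variance weighting, applied to two independent estimates of the same quantity. It is an assumption-laden illustration rather than how any particular drone does it: real systems typically use Kalman-style filters over full state vectors, and the altitude values and variances below are made up.

```python
def fuse_estimates(measurements):
    """Fuse independent estimates of one quantity by inverse-variance weighting.

    measurements: list of (value, variance) pairs with variance > 0.
    Returns (fused_value, fused_variance).
    """
    weights = [1.0 / variance for _, variance in measurements]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, measurements)) / total_weight
    fused_variance = 1.0 / total_weight
    return fused_value, fused_variance

# Hypothetical altitude readings in metres: (value, variance).
gps_altitude = (52.0, 4.0)    # noisier source
lidar_altitude = (50.0, 1.0)  # more precise source

value, variance = fuse_estimates([gps_altitude, lidar_altitude])
# The fused value sits closer to the more precise sensor, and the fused
# variance is lower than either input variance.
```

The fused variance is smaller than the variance of either sensor alone, which is the sense in which fusion "reduces uncertainty".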

Curated Databases and Labeled Datasets: The Gold Standard

For supervised machine learning, curated and meticulously labeled datasets are the “gold standard” for training. These datasets, often painstakingly created by human annotators, provide clear examples of inputs mapped to desired outputs (e.g., an image of a person labeled as “person,” or a specific flight maneuver labeled as “takeoff”).

- ImageNet, COCO, Open Images: These large datasets have been instrumental in advancing computer vision, providing labeled images at scale for classification, object detection, and segmentation.

- Custom Labeled Datasets: For highly specialized applications, such as identifying specific crop diseases from multispectral drone imagery or detecting subtle structural faults in infrastructure, custom datasets often need to be created and meticulously labeled by domain experts. The investment in high-quality labeling directly translates to the performance ceiling of the resultant AI model.

Real-World vs. Synthetic Data: Bridging the Gap

While real-world data is invaluable for grounding AI in reality, it can be expensive, time-consuming, and sometimes dangerous to collect at scale, especially for rare events or hazardous scenarios. This is where synthetic data comes into play.

- Synthetic Data Generation: Using simulations, procedural generation, or advanced generative AI models (like GANs or diffusion models), synthetic data can mimic real-world phenomena. For training autonomous flight systems, simulators can generate vast amounts of flight scenarios, including extreme weather, emergency landings, and crowded airspace, which would be impractical or unsafe to capture in reality.

- Benefits: Labels are known by construction, the data distribution can be controlled (which can help reduce bias), and specific edge cases can be generated on demand.

- Challenges: The primary challenge is the “sim-to-real gap”: synthetic data may not capture the complexities and nuances of the real world. Bridging this gap often involves domain randomization and transfer learning techniques.
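
Domain randomization can be sketched as sampling a fresh set of simulation parameters for every training scene, so that a model never sees the same lighting, weather, or sensor noise twice. The parameter names and ranges below are hypothetical and not tied to any particular simulator.

```python
import random

def sample_scene(rng):
    """Draw one randomized set of (hypothetical) simulator parameters."""
    return {
        "light_intensity": rng.uniform(0.2, 1.5),    # relative brightness
        "fog_density": rng.uniform(0.0, 0.3),
        "wind_speed_mps": rng.uniform(0.0, 15.0),
        "camera_noise_std": rng.uniform(0.0, 0.05),
        "texture_id": rng.randrange(100),            # index into a texture bank
    }

rng = random.Random(42)  # fixed seed so the sampled scenes are reproducible
scenes = [sample_scene(rng) for _ in range(1000)]
```

Each dictionary would then be handed to the simulator to render one scene. Widening the ranges trades realism for coverage, which is a tuning decision rather than a fixed rule.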

Purification Processes: Refining Data for Optimal Performance

Even well-sourced data often requires “purification”: a series of preprocessing steps to clean, transform, and optimize it for AI consumption. These processes are analogous to water filtration, which removes impurities and makes the water suitable for its intended use.

Data Preprocessing and Cleaning Techniques

Before feeding data into an AI model, several cleaning steps are typically necessary:

- Normalization and Standardization: Scaling numerical data to a common range (e.g., 0 to 1) or standardizing it to zero mean and unit variance helps prevent features with larger values from dominating the learning process.

- Handling Missing Values: This can involve imputation (filling in missing values with estimated ones, e.g., mean, median, or predictive models) or removal of records/features with excessive missing data.

- Noise Reduction: Techniques like smoothing filters (for sensor data), outlier removal, or advanced denoising algorithms can reduce the impact of random variations.

- Data Deduplication: Removing duplicate records keeps the model from giving redundant examples extra weight.
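
The cleaning steps above can be sketched with Python's standard library alone. This is a minimal illustration on a single numeric column with made-up values. Median imputation is only one of several reasonable choices, and a production pipeline would more likely use a dataframe library.

```python
import statistics

def impute_median(values):
    """Replace None entries with the median of the known values."""
    known = [v for v in values if v is not None]
    median = statistics.median(known)
    return [median if v is None else v for v in values]

def min_max_scale(values):
    """Scale values linearly into the range 0 to 1."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]

def standardize(values):
    """Shift and scale values to zero mean and unit variance."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    if std == 0:
        return [0.0 for _ in values]
    return [(v - mean) / std for v in values]

def deduplicate(records):
    """Drop repeated records while keeping the first occurrence and the order."""
    seen = set()
    unique = []
    for record in records:
        if record not in seen:
            seen.add(record)
            unique.append(record)
    return unique

# Hypothetical altitude readings with two gaps.
altitudes = [10.0, None, 30.0, 20.0, None]
filled = impute_median(altitudes)
scaled = min_max_scale(filled)
z_scores = standardize(filled)

# Hypothetical (image id, label) pairs with one exact duplicate.
labels = deduplicate([("img_001", "person"), ("img_002", "car"), ("img_001", "person")])
```

Noise reduction is left out here because the right filter depends heavily on the sensor and sampling rate.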

Anomaly Detection and Outlier Management

Outliers are data points that significantly deviate from other observations. While sometimes representing valid but rare events, they can also be the result of measurement errors or data corruption.

- Impact: Outliers can skew statistical analyses and negatively affect machine learning models, leading them to learn incorrect patterns.

- Detection Methods: Statistical methods (e.g., Z-scores, IQR), clustering algorithms (e.g., DBSCAN), and specialized machine learning models can identify outliers.

- Management: Depending on their cause and impact, outliers can be removed, transformed (e.g., capping), or treated as a separate class for anomaly detection tasks (e.g., identifying unusual drone flight patterns indicating a malfunction).
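
As a rough sketch of the statistical detection methods and the capping strategy mentioned above, the following uses Z-scores and the IQR rule on a small set of made-up sensor readings. The thresholds (2.5 standard deviations, 1.5 times the IQR) are common conventions assumed for the example, not values prescribed by this article.

```python
import statistics

def zscore_outliers(values, threshold=3.0):
    """Return indices whose absolute Z-score exceeds the threshold."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    if std == 0:
        return []
    return [i for i, v in enumerate(values) if abs(v - mean) / std > threshold]

def iqr_outliers(values, k=1.5):
    """Return indices outside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    lo, hi = q1 - k * iqr, q3 + k * iqr
    return [i for i, v in enumerate(values) if v < lo or v > hi]

def cap(values, lo, hi):
    """Clamp every value into the range [lo, hi]."""
    return [min(max(v, lo), hi) for v in values]

# Hypothetical range readings in metres with one corrupted sample at the end.
readings = [10.1, 9.9, 10.0, 10.2, 9.8, 10.0, 10.1, 9.9, 55.0]

flagged_by_z = zscore_outliers(readings, threshold=2.5)
flagged_by_iqr = iqr_outliers(readings)
capped = cap(readings, 9.5, 10.5)
```

In a sample this small, a single extreme value inflates the standard deviation enough that the usual threshold of 3 would miss it, which is why the example lowers the threshold to 2.5. The IQR rule is less sensitive to that masking effect because quartiles are barely moved by one bad reading.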

Feature Engineering: Distilling Raw Data into Meaningful Insights

Feature engineering is the art and science of transforming raw data into features that better represent the underlying problem to the predictive models, thereby improving model accuracy. It’s about distilling the “pure essence” from the raw “water.”

- Example in Drone Tech: Instead of feeding raw pixel values to a model, it can be more effective to use features like edges, corners, textures, or the more abstract representations learned by convolutional neural networks (CNNs) for object recognition. For flight control, instead of just raw IMU readings, calculated features like filtered angular rates, acceleration changes over time, or derived positional vectors might be more informative.

- Importance: Well-engineered features can drastically reduce the complexity of the learning problem, allow simpler models to perform better, and improve model interpretability. With the rise of deep learning, some feature engineering is automated (e.g., CNNs learning hierarchical features), but human ingenuity in crafting relevant features remains invaluable, especially for structured data.
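
For the flight-control case, a minimal sketch of turning raw accelerometer samples into derived features might look like this. The sample values, the choice of horizontal acceleration magnitude, and the finite-difference rate of change are all illustrative assumptions.

```python
import math

def rate_of_change(values, timestamps):
    """Finite-difference derivative between consecutive samples."""
    return [
        (values[i] - values[i - 1]) / (timestamps[i] - timestamps[i - 1])
        for i in range(1, len(values))
    ]

# Hypothetical IMU samples: time in seconds, acceleration (x, y, z) in m/s^2.
timestamps = [0.0, 0.1, 0.2, 0.3]
accel = [(0.0, 0.0, 9.8), (0.3, 0.4, 9.8), (0.6, 0.8, 9.8), (0.6, 0.8, 9.8)]

# Feature 1: magnitude of horizontal acceleration, independent of heading.
horizontal = [math.hypot(ax, ay) for ax, ay, _ in accel]

# Feature 2: how quickly that magnitude changes over time.
horizontal_rate = rate_of_change(horizontal, timestamps)
```

Derived features like these are often easier for a simple model to use than the three raw axes, since they encode quantities (how hard and how abruptly the vehicle is accelerating) that relate more directly to the control problem.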

Tailoring the “Formula”: Data Specificity for Diverse Applications

Just as baby formula is tailored for different age groups or dietary needs, data needs to be specifically prepared and optimized for various tech and innovation applications. The “right water” for one application might be unsuitable for another.

Mapping and Remote Sensing: Precision in Geospatial Data

For applications in mapping, agriculture, geology, or environmental monitoring using drones, the “water” is geospatial data.

- Key Data Types: High-resolution RGB imagery, multispectral and hyperspectral data, LiDAR point clouds, Synthetic Aperture Radar (SAR) data.

- Purity Requirements: Absolute and relative georeferencing accuracy is paramount. Data must be free from geometric distortions, radiometric inconsistencies, and cloud cover (or methods to mitigate its impact).

- Processing: Orthorectification, photogrammetric reconstruction, atmospheric correction, radiometric calibration, and robust change detection algorithms are crucial to derive actionable insights from drone-acquired geospatial data. The precision of this “water” directly impacts the reliability of maps, volumetric calculations, or disease detection in crops.

Autonomous Navigation: Real-time Perception and Prediction

The “water” for autonomous navigation systems in drones and other robotic platforms is a complex, dynamic stream of real-time sensor data.

- Key Data Types: High-frame-rate camera feeds, LiDAR scans for depth, radar data for velocity and ranging, GPS/IMU for pose estimation.

- Purity Requirements: Low latency, high refresh rates, robustness to varying lighting and environmental conditions, and accurate synchronization across multiple sensors are vital. The data needs to be clean enough to enable instantaneous decision-making.

- Processing: Simultaneous Localization and Mapping (SLAM), object detection and tracking, motion prediction, and semantic segmentation must all run in real time. The AI “formula” here must quickly transform raw sensor inputs into a reliable, continuously updated understanding of the vehicle’s position, surroundings, and potential hazards.
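
The synchronization requirement can be illustrated with a small sketch that pairs each camera frame with the nearest LiDAR scan in time and rejects pairs that are too far apart. The timestamps and the 10 ms tolerance are hypothetical, and real platforms often rely on hardware triggering or middleware-level synchronization instead.

```python
import bisect

def match_nearest(reference_ts, other_ts, tolerance):
    """For each reference timestamp, return the index of the nearest timestamp
    in other_ts (which must be sorted), or None if it is beyond the tolerance."""
    matches = []
    for t in reference_ts:
        i = bisect.bisect_left(other_ts, t)
        # Only the neighbours on either side of the insertion point can be nearest.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(other_ts)]
        best = min(candidates, key=lambda j: abs(other_ts[j] - t), default=None)
        if best is not None and abs(other_ts[best] - t) <= tolerance:
            matches.append(best)
        else:
            matches.append(None)
    return matches

# Hypothetical timestamps in seconds: a ~30 Hz camera and a ~20 Hz LiDAR.
camera_ts = [0.000, 0.033, 0.066, 0.100]
lidar_ts = [0.001, 0.051, 0.101]

pairs = match_nearest(camera_ts, lidar_ts, tolerance=0.010)
```

Frames that come back as `None` have no scan close enough in time, so a fusion step would either skip them or interpolate between neighbouring scans.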

AI Follow Mode and Object Recognition: Granularity in Visual Data

For features like AI Follow Mode, where a drone automatically tracks a subject, or for broader object recognition tasks (e.g., identifying wildlife, inspecting power lines), the “water” is primarily visual data.

- Key Data Types: High-resolution video streams, images from RGB or thermal cameras.

- Purity Requirements: High contrast, good lighting, minimal motion blur, and a diverse range of subjects and backgrounds in training data are crucial. For follow mode, consistent object identity across frames is vital.

- Processing: Advanced computer vision algorithms handle object detection, classification, tracking, and pose estimation. The “formula” here involves segmenting objects from their background, understanding their movement patterns, and maintaining a consistent lock, often integrating with predictive algorithms to anticipate subject motion.
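
One simple way to keep object identity consistent from frame to frame is to match the previously tracked bounding box against new detections by intersection over union (IoU). The sketch below assumes boxes in `(x1, y1, x2, y2)` pixel coordinates and a hypothetical minimum IoU of 0.3. Production trackers usually add motion models and appearance features on top of this.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    intersection = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0

def reacquire(tracked_box, detections, min_iou=0.3):
    """Pick the detection that best overlaps the tracked box, or None if
    nothing overlaps enough to be considered the same subject."""
    best = max(detections, key=lambda d: iou(tracked_box, d), default=None)
    if best is None or iou(tracked_box, best) < min_iou:
        return None
    return best

# Hypothetical boxes: the subject in the previous frame and two new detections.
previous = (100, 100, 200, 200)
detections = [(300, 300, 400, 400), (110, 105, 210, 205)]

current = reacquire(previous, detections)
```

Returning `None` when no detection overlaps enough lets the caller fall back to a predicted position instead of locking onto the wrong subject.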

The Future of Data Sourcing and Management in Innovation

As tech and innovation continue their relentless march forward, the methods for sourcing, purifying, and managing data must evolve in tandem. The future promises even more sophisticated approaches to ensure our AI systems are fed the purest “water” possible.

Decentralized Data Architectures and Blockchain for Trust

The provenance and integrity of data are becoming increasingly important. Decentralized data architectures, potentially leveraging blockchain technology, could offer new ways to:

- Track Data Lineage: Providing an immutable record of where data originated, how it was collected, and what transformations it underwent. This is crucial for auditing, compliance, and ensuring ethical data sourcing.

- Enhance Security and Privacy: Distributing data storage and access control can reduce single points of failure and enhance privacy, especially when dealing with sensitive information for autonomous systems or remote sensing.

- Foster Data Marketplaces: Creating transparent and trustworthy environments for sharing and monetizing high-quality, specialized datasets, which could accelerate innovation across various domains.
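
The lineage-tracking idea can be sketched as a hash chain, in which every record stores the hash of the one before it so that later tampering becomes detectable. This is deliberately not a blockchain (there is no distribution or consensus), and the event fields are hypothetical. It only illustrates the tamper-evidence property.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry

def _digest(event, prev_hash):
    """Hash an event together with the hash of the previous entry."""
    payload = json.dumps({"event": event, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append_entry(chain, event):
    """Append a lineage event linked to the current end of the chain."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"event": event, "prev_hash": prev_hash, "hash": _digest(event, prev_hash)})

def verify(chain):
    """Return True only if every entry still matches its recorded hash and link."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True

# Hypothetical processing history for one dataset.
lineage = []
append_entry(lineage, {"step": "capture", "sensor": "rgb_camera"})
append_entry(lineage, {"step": "orthorectify"})
append_entry(lineage, {"step": "label", "annotator": "expert_01"})

is_intact = verify(lineage)
```

Editing any earlier event changes its digest, which breaks verification for the chain, so an auditor can tell that the recorded history was altered.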

AI-Assisted Data Annotation and Generation

The bottleneck of human-intensive data labeling is increasingly being addressed by AI itself.

- Active Learning: AI models identify data points they are most uncertain about, requesting human annotation only for those specific examples, dramatically reducing the labeling effort.

- Weak Supervision: Leveraging heuristic rules, knowledge bases, or other less precise sources to automatically label large datasets, which are then refined by human experts.

- Generative AI: Beyond simulation-based synthetic data, GANs and diffusion models can create realistic and diverse datasets, and may help fill in missing data or augment existing datasets so that they better reflect real-world variability. This could be transformative for training robust perception systems for autonomous drones.
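
Active learning is often implemented as uncertainty sampling: score each unlabeled example by how unsure the model is, then send only the top few to human annotators. The sketch below uses prediction entropy as the uncertainty score. The frame identifiers and class probabilities are invented, and entropy is just one of several possible scoring rules.

```python
import math

def entropy(probabilities):
    """Shannon entropy (in nats) of a predicted class distribution."""
    return -sum(p * math.log(p) for p in probabilities if p > 0)

def select_for_labeling(predictions, budget):
    """Return the ids of the `budget` samples with the most uncertain predictions."""
    ranked = sorted(predictions, key=lambda item: entropy(item[1]), reverse=True)
    return [sample_id for sample_id, _ in ranked[:budget]]

# Hypothetical model outputs: (sample id, class probabilities).
predictions = [
    ("frame_001", [0.98, 0.01, 0.01]),
    ("frame_002", [0.40, 0.35, 0.25]),
    ("frame_003", [0.70, 0.20, 0.10]),
    ("frame_004", [0.34, 0.33, 0.33]),
]

to_label = select_for_labeling(predictions, budget=2)
```

Confident predictions such as `frame_001` are skipped, so annotation effort goes to the frames where a human label is likely to teach the model the most.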

Continuous Learning and Adaptive Data Strategies

The real world is dynamic, and AI systems must adapt. The concept of continuous learning, where AI models are constantly updated with new, relevant data, is gaining traction.

- Online Learning: Models learn incrementally from a continuous stream of data, adapting in real time or near real time.

- Reinforcement Learning: Agents learn by interacting with their environment, generating their own “experience data” through trial and error, which is highly relevant for autonomous navigation and control.

- Data Drift Detection: Monitoring input data for changes in its distribution, which can signal that the model’s performance may degrade, prompting a need for retraining with fresh, relevant data. This ensures that the “water” being fed to the AI “formula” remains pure and relevant over time.
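
A minimal drift check compares the distribution of a feature in recent data against a reference window. The sketch below uses the two-sample Kolmogorov-Smirnov statistic, which is one common choice and is an assumption here rather than something this article prescribes. The feature values and the 0.5 alert threshold are likewise hypothetical.

```python
import bisect

def ks_statistic(reference, current):
    """Two-sample Kolmogorov-Smirnov statistic: the largest gap between the
    empirical cumulative distribution functions of the two samples."""
    ref = sorted(reference)
    cur = sorted(current)
    largest_gap = 0.0
    for x in ref + cur:
        cdf_ref = bisect.bisect_right(ref, x) / len(ref)
        cdf_cur = bisect.bisect_right(cur, x) / len(cur)
        largest_gap = max(largest_gap, abs(cdf_ref - cdf_cur))
    return largest_gap

# Hypothetical feature values (e.g., mean image brightness per flight).
reference = [0.50, 0.52, 0.48, 0.51, 0.49, 0.50, 0.53, 0.47]
recent_stable = [0.49, 0.51, 0.50, 0.52, 0.48, 0.50, 0.47, 0.53]
recent_shifted = [v + 0.2 for v in reference]

DRIFT_THRESHOLD = 0.5  # hypothetical alert level, to be tuned per feature
stable_alert = ks_statistic(reference, recent_stable) > DRIFT_THRESHOLD
shifted_alert = ks_statistic(reference, recent_shifted) > DRIFT_THRESHOLD
```

A statistic near 0 means the two windows look alike, while a value near 1 means they barely overlap. In practice the threshold is tuned, or replaced by a significance test, to balance false alarms against missed drift.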

In conclusion, just as the proper preparation of baby formula demands careful selection and purification of water, the development of cutting-edge tech and innovation requires an unwavering commitment to data quality, thoughtful sourcing, and rigorous preprocessing. The “water” of our digital age, data, is the fundamental input that determines the health, intelligence, and ethical integrity of our AI and autonomous systems. By prioritizing pure inputs, we pave the way for powerful, reliable, and ethically sound outcomes, driving innovation that truly benefits society.