In an era defined by rapid technological advancement and groundbreaking innovation, the intricate language of mathematics often remains a silent, yet indispensable, force. Far from being a mere academic exercise, mathematical notation is the bedrock upon which virtually all modern technology is built. It is the precise, unambiguous, and universal language that allows engineers, scientists, and innovators to conceptualize, design, analyze, and communicate complex ideas with unparalleled clarity and efficiency. Without it, the sophisticated algorithms driving artificial intelligence, the intricate control systems guiding autonomous vehicles, and the robust frameworks underpinning data science would simply not exist. Mathematical notation transforms abstract concepts into concrete instructions, enabling the leap from theoretical discovery to practical application, making it a cornerstone of contemporary tech and innovation.

The Universal Language of Innovation

At its core, mathematical notation is a system of symbols and rules used to represent mathematical objects, operations, and relationships. It’s a specialized form of written communication designed for conciseness, precision, and global understanding. While natural languages like English or Chinese can be ambiguous and context-dependent, mathematical notation strives for absolute clarity, ensuring that a given expression holds the same meaning for any trained individual, anywhere in the world. This universality is crucial in a globalized tech landscape where collaboration and standardized understanding are paramount.

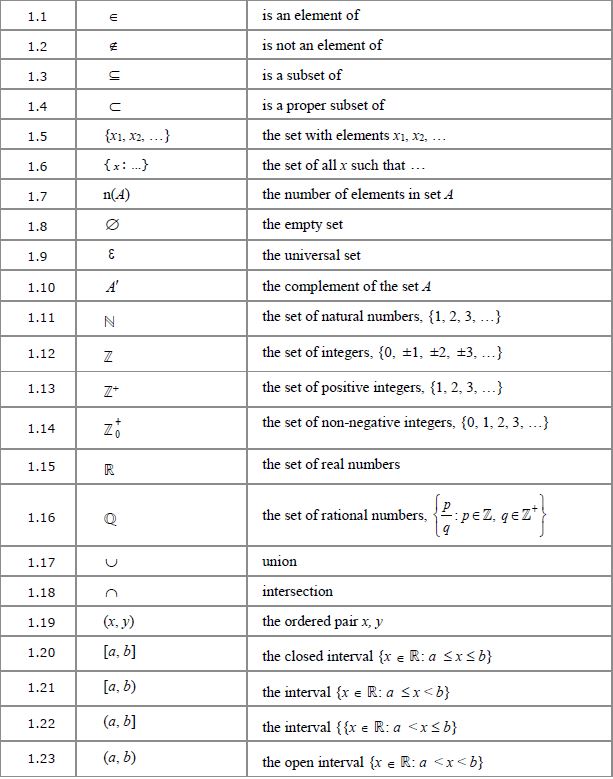

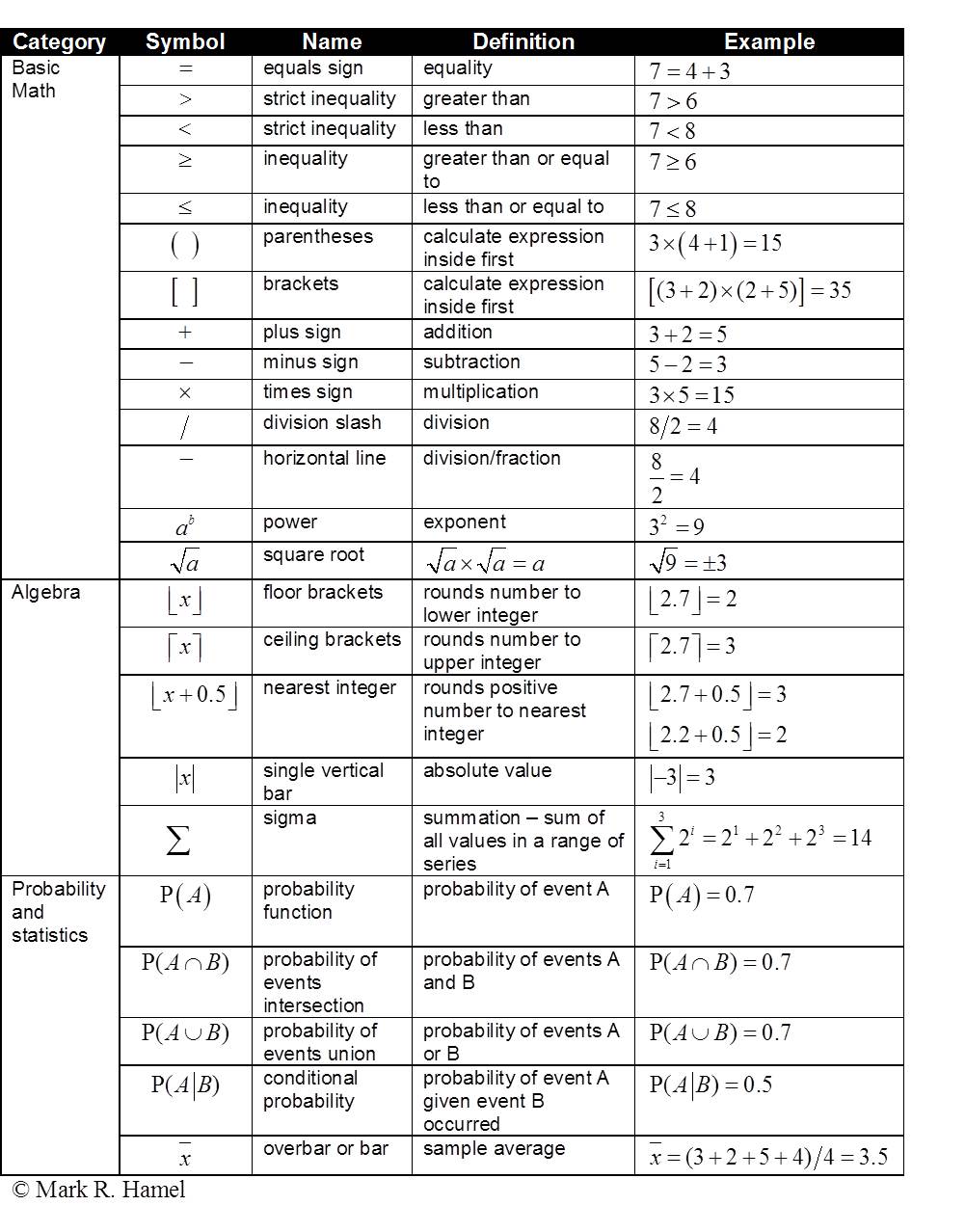

Beyond Numbers: Symbols of Precision

A common misconception is that mathematical notation is primarily about numbers. While numbers are fundamental, notation extends far beyond them. It encompasses symbols for variables (e.g., x, y, z), constants (e.g., π, e), operations (e.g., +, -, ×, ÷, ∫, Σ), relationships (e.g., =, <, >), sets (e.g., { }, ∈), functions (e.g., f(x)), and logical operators (e.g., ∧, ∨, ¬). Within a given context each symbol has an agreed meaning, which limits the ambiguity common in everyday language. This precision is what makes mathematical notation well suited to describing the complex systems and processes found in cutting-edge technology. For instance, the simple equation E=mc² encapsulates a profound physical relationship with a brevity and exactitude that prose would struggle to match. In software development, logical operators are foundational to conditional statements and loops, determining program flow according to well-defined rules.

The Efficiency of Abstraction

One of the most powerful aspects of mathematical notation is its ability to abstract complex ideas into concise forms. Abstraction is the process of focusing on essential properties and omitting non-essential details. In technology, this allows developers and researchers to work with high-level concepts without getting bogged down in every minute detail simultaneously. For example, a differential equation might model the dynamics of a complex system, such as an aircraft’s flight path or the spread of a virus, using just a few symbols. The notation allows us to manipulate and analyze these models without needing to constantly re-describe the physical system in full. This efficiency is critical in the fast-paced world of tech and innovation, where rapidly prototyping, testing, and iterating on ideas is essential. It enables engineers to solve problems that would otherwise be intractable due to their sheer complexity, paving the way for innovations from optimized algorithms to sophisticated hardware designs.
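As a rough illustration of how a few symbols can stand in for a whole system, the sketch below integrates the logistic equation dI/dt = r · I · (1 − I/K) with the forward Euler method. Choosing the logistic equation as a stand-in for "the spread of a virus" is an assumption made here for brevity, and the function names and parameter values are hypothetical.

```python
import math


def simulate_logistic(i0, r, k, dt, steps):
    """Forward-Euler integration of dI/dt = r * I * (1 - I / K)."""
    values = [i0]
    current = i0
    for _ in range(steps):
        # One Euler step: new value = old value + dt * (rate of change)
        current += dt * r * current * (1 - current / k)
        values.append(current)
    return values


def logistic_exact(i0, r, k, t):
    """Closed-form solution of the same equation, used as a cross-check."""
    return k / (1 + (k - i0) / i0 * math.exp(-r * t))


# Illustrative parameters: 10 initial cases, growth rate 0.5, capacity 1000
trajectory = simulate_logistic(i0=10.0, r=0.5, k=1000.0, dt=0.01, steps=2000)
print(trajectory[-1], logistic_exact(10.0, 0.5, 1000.0, 20.0))
```

The one-line equation is the whole model; the loop is just a mechanical way of evaluating it, which is the kind of economy the paragraph above describes.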

Building Blocks of Modern Tech

The diverse landscape of mathematical notation provides the essential building blocks for various technological disciplines. Each domain leverages specific notational systems to articulate its unique challenges and solutions, underpinning everything from software architecture to advanced data analytics.

Algebraic and Functional Notations: Programming’s Core

Algebraic notation, with its use of variables, equations, and expressions, shaped the syntax of nearly all programming languages. When a programmer writes `x = y + 5;` or `function calculateArea(radius) { return Math.PI * radius * radius; }`, they are borrowing algebraic and functional notation. One difference is worth noting: in most languages `=` denotes assignment (store the value of `y + 5` in `x`) rather than a statement of equality. Variables store data, operators perform computations, and functions encapsulate reusable logic. This close correspondence helps mathematical reasoning carry over to software, making program behavior easier to predict and verify. From simple mobile apps to complex enterprise systems, the clarity of algebraic expressions is indispensable for creating reliable and efficient code. The evolution of programming paradigms, such as functional programming, further emphasizes the direct application of mathematical functions as first-class citizens, promoting immutability and predictability in software design.
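A minimal Python sketch of the same ideas follows: the area formula A = πr² written as a function, and function composition (f ∘ g) treating functions as first-class values. The helper names `compose` and `double` are hypothetical and introduced only for illustration.

```python
import math


def calculate_area(radius: float) -> float:
    """A = pi * r^2, the same formula as the calculateArea example above."""
    return math.pi * radius * radius


def compose(f, g):
    """Return the function x -> f(g(x)), i.e. the composition f ∘ g."""
    return lambda x: f(g(x))


def double(x):
    return 2 * x


# Functions are ordinary values, so they can be combined like any other data
area_of_doubled_radius = compose(calculate_area, double)

y = 3
x = y + 5  # assignment: x now holds 8; this is not an equation to solve
print(x, calculate_area(1.0), area_of_doubled_radius(1.0))
```

Because both functions are pure (their output depends only on their input), the composed function can be reasoned about algebraically: doubling the radius multiplies the area by four.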

Calculus and Differential Equations: Engineering the Future

Calculus, with its notation for derivatives (rate of change, e.g., dy/dx) and integrals (accumulation, e.g., ∫ f(x) dx), is the cornerstone of engineering and physics, and by extension, much of modern tech. Differential equations, which describe how quantities change over time, are used to model dynamic systems found everywhere in technology. Consider autonomous vehicles: their navigation systems rely on solving differential equations to predict motion, control steering, and manage acceleration. Robotics engineers use calculus to optimize robot arm movements for precision and efficiency. In signal processing, the Fourier Transform, represented by its integral notation, is crucial for converting signals between time and frequency domains, a technique vital for wireless communication, audio processing, and image compression. Without the precise language of calculus, designing and optimizing these complex, dynamic systems would be virtually impossible.
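To make dy/dx and ∫ f(x) dx concrete, the sketch below approximates both numerically: a central difference for the derivative and the trapezoidal rule for the integral. These are two of several standard approximations, chosen here as an assumption for simplicity; the step size and interval count are illustrative.

```python
import math


def derivative(f, x, h=1e-5):
    """Central-difference approximation of dy/dx at the point x."""
    return (f(x + h) - f(x - h)) / (2 * h)


def integral(f, a, b, n=1000):
    """Trapezoidal-rule approximation of the integral of f from a to b."""
    width = (b - a) / n
    total = 0.5 * (f(a) + f(b))  # endpoints carry half weight
    for i in range(1, n):
        total += f(a + i * width)
    return total * width


slope = derivative(math.sin, 0.0)         # exact value: cos(0) = 1
area = integral(math.sin, 0.0, math.pi)   # exact value: 2
print(slope, area)
```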

Set Theory and Logic: Data Structures and AI Foundations

Set theory notation (e.g., {a, b, c}, ∪, ∩, ∈) and logical notation (e.g., ∧, ∨, ¬, ⇒) are fundamental to the architecture of data structures, database design, and the very fabric of artificial intelligence. In computer science, data is often organized into sets, and operations on these sets (union, intersection, difference) are critical for managing information. Boolean logic, expressed through logical notation, is the foundation of digital circuits and decision-making processes within algorithms. Every if-else statement, every database query, and every search algorithm implicitly or explicitly uses principles derived from set theory and logic. In AI, particularly in areas like knowledge representation, formal logic provides a framework for reasoning. Machine learning algorithms, too, rely heavily on statistical and algebraic notations but are often built upon foundational logical structures that dictate how decisions are made or patterns are recognized.
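The correspondence between this notation and everyday code is fairly direct. The sketch below maps ∪, ∩, set difference, and ∈ onto Python's built-in set operators, and expresses ⇒ and one of De Morgan's laws with boolean operators. The example sets and the `implies` helper are hypothetical.

```python
admins = {"ana", "bo"}
editors = {"bo", "cy"}

union = admins | editors          # A ∪ B
intersection = admins & editors   # A ∩ B
difference = admins - editors     # A \ B
is_member = "ana" in admins       # ana ∈ A


def implies(p: bool, q: bool) -> bool:
    """Material implication p ⇒ q, equivalent to (¬p) ∨ q."""
    return (not p) or q


# De Morgan's law: ¬(p ∧ q) ≡ (¬p) ∨ (¬q), checked over all truth assignments
de_morgan_holds = all(
    (not (p and q)) == ((not p) or (not q))
    for p in (False, True)
    for q in (False, True)
)
print(union, intersection, difference, is_member, de_morgan_holds)
```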

Statistical Notation: Unlocking Data Insights

In the era of big data, statistical notation (e.g., Σ for summation, μ for mean, σ for standard deviation, P(X) for probability) has become exceptionally critical for tech and innovation. It provides the tools to describe, analyze, and make predictions from vast datasets. Machine learning models, which are at the heart of AI, are essentially sophisticated statistical models. Notation for vectors and matrices is indispensable for representing and manipulating large volumes of data, especially in deep learning. Data scientists use statistical notation to perform hypothesis testing, build predictive models, and quantify uncertainty. From optimizing advertising campaigns and personalizing user experiences to predicting equipment failure and developing new medical diagnostics, statistical notation is the language that allows technology to extract meaningful insights and drive data-driven decision-making.
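As a small worked example, the sketch below turns three pieces of notation into code: the mean μ = (1/N) Σ xᵢ, the population standard deviation σ = √((1/N) Σ (xᵢ − μ)²), and an empirical estimate of P(X > μ). The sample values are made up for illustration, and using the population form of σ (dividing by N rather than N − 1) is an assumption.

```python
import math


def mean(xs):
    """mu = (1/N) * sum of x_i."""
    return sum(xs) / len(xs)


def population_std(xs):
    """sigma = sqrt((1/N) * sum of (x_i - mu)^2)."""
    mu = mean(xs)
    return math.sqrt(sum((x - mu) ** 2 for x in xs) / len(xs))


def empirical_probability(xs, event):
    """Fraction of samples for which event(x) is true."""
    return sum(1 for x in xs if event(x)) / len(xs)


samples = [2, 4, 4, 4, 5, 5, 7, 9]
mu = mean(samples)                 # 40 / 8 = 5.0
sigma = population_std(samples)    # sqrt(32 / 8) = 2.0
p_above_mean = empirical_probability(samples, lambda x: x > mu)  # 2 / 8
print(mu, sigma, p_above_mean)
```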

From Abstract to Application: Notation in Action

The true power of mathematical notation is fully realized when it transitions from abstract theory to tangible technological applications. It serves as the blueprint for complex systems, enabling innovations that redefine industries and daily life.

Powering Artificial Intelligence and Machine Learning

The entire edifice of Artificial Intelligence (AI) and Machine Learning (ML) is constructed upon mathematical notation. Neural networks, the engine behind many AI breakthroughs, are represented as networks of weighted connections and activation functions, all defined using linear algebra (matrices, vectors) and calculus (the gradients used by optimizers such as gradient descent). The notation for loss functions, regularization terms, and optimization algorithms specifies the core elements that allow AI models to learn from data, recognize patterns, and make predictions. Without a precise notational system for defining these components and their interactions, developing, debugging, and improving AI models would be far harder. The ability to write W * x + b (a matrix-vector product followed by vector addition) and then apply a non-linear activation function σ(z), where z = W * x + b, is what lets a layer transform raw data into useful features, in applications from natural language processing to computer vision.
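The expression σ(W * x + b) can be sketched in a few lines of plain Python. This is a minimal illustration rather than how a production framework implements it; taking σ to be the logistic sigmoid is an assumption, and the weights, inputs, and biases are made-up values.

```python
import math


def sigmoid(z):
    """Logistic activation: sigma(z) = 1 / (1 + e^(-z))."""
    return 1.0 / (1.0 + math.exp(-z))


def dense_layer(W, x, b):
    """Compute sigma(W x + b), with W given as a list of rows."""
    outputs = []
    for row, bias in zip(W, b):
        # Dot product of one weight row with the input, plus that row's bias
        z = sum(w * xi for w, xi in zip(row, x)) + bias
        outputs.append(sigmoid(z))
    return outputs


W = [[1.0, -1.0],
     [0.5, 0.5]]
x = [2.0, 2.0]
b = [0.0, 0.0]

activations = dense_layer(W, x, b)  # pre-activations are z = [0.0, 2.0]
print(activations)
```

In practice the same computation is usually written with an array library so that the matrix-vector product runs as a single vectorized operation.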

Engineering Autonomous Systems and Robotics

Autonomous systems, including self-driving cars, delivery drones, and industrial robots, are marvels of engineering that rely profoundly on mathematical notation. The algorithms governing their navigation, perception, and decision-making are expressed through complex mathematical models. Control theory, a discipline heavily reliant on differential equations and linear algebra, dictates how these systems maintain stability, follow desired trajectories, and react to their environment. For instance, the Kalman filter, a recursive estimation algorithm usually written in matrix notation, is widely used for sensor fusion: combining data from various sensors (GPS, lidar, radar) to estimate an object’s state, such as its position and velocity, along with the uncertainty of that estimate. This allows an autonomous vehicle to maintain a reliable estimate of where it is and where it’s going, even amidst noisy sensor readings. The formalization offered by mathematical notation lets engineers analyze and verify that these systems behave safely, efficiently, and predictably in complex, dynamic environments.
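The predict and update steps of a Kalman filter can be sketched in scalar form, where every matrix collapses to a single number. This is a deliberately simplified illustration, assuming one sensor, a state that is roughly constant, and known noise variances; the function name, parameters, and readings are hypothetical, and a real vehicle would use the full matrix form with a motion model.

```python
def kalman_1d(measurements, meas_var, process_var, x0=0.0, p0=1.0):
    """Scalar Kalman filter for a (nearly) constant quantity.

    x is the state estimate and p is the variance of that estimate.
    """
    x, p = x0, p0
    estimates = []
    for z in measurements:
        # Predict: the state is modeled as constant, so only uncertainty grows
        p = p + process_var
        # Update: the gain k weighs the new measurement against the prediction
        k = p / (p + meas_var)
        x = x + k * (z - x)
        p = (1 - k) * p
        estimates.append(x)
    return estimates, p


# Noisy readings of a quantity whose true value is assumed to be about 10
readings = [10.2, 9.7, 10.1, 9.9, 10.3, 9.8, 10.0, 10.1]
estimates, final_var = kalman_1d(
    readings, meas_var=0.09, process_var=1e-5, x0=0.0, p0=1000.0
)
print(estimates[-1], final_var)
```

Starting with a large `p0` tells the filter that the initial guess is unreliable, so the first measurement dominates; as more readings arrive, the estimate settles and its variance shrinks below that of any single measurement.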

Cryptography and Cybersecurity: The Language of Secure Data

In cybersecurity, mathematical notation is the working language of security. Cryptography, the science of secure communication, is based on deep mathematical principles, particularly number theory (including modular arithmetic) and abstract algebra. Algorithms like RSA or ECC (Elliptic Curve Cryptography), which secure everything from online banking to private messaging, are precisely defined using mathematical notation. The notation expresses public and private keys, encryption and decryption functions, and the operations that make it computationally infeasible, as far as is publicly known, for unauthorized parties to decipher information. When such schemes are implemented and used correctly, this mathematical rigor underpins the confidentiality, integrity, and authenticity of digital information, forming the backbone of trust in the digital age. Without precise and unambiguous notation, designing and verifying secure cryptographic systems would be impractical, leaving digital interactions vulnerable.
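The modular arithmetic behind RSA can be shown with a toy example: encryption is c = mᵉ mod n and decryption is m = cᵈ mod n, where d is the inverse of e modulo φ(n). The sketch below uses tiny textbook primes and no padding, so it is for illustration only and offers no security; it assumes Python 3.8 or later for the modular inverse via `pow(e, -1, phi)`.

```python
# Toy key generation with tiny primes (real keys use primes of 1024+ bits)
p, q = 61, 53
n = p * q                  # public modulus: 3233
phi = (p - 1) * (q - 1)    # Euler's totient of n: 3120
e = 17                     # public exponent, coprime with phi
d = pow(e, -1, phi)        # private exponent: e * d ≡ 1 (mod phi)


def encrypt(message: int) -> int:
    """c = m^e mod n, using the public key (n, e)."""
    return pow(message, e, n)


def decrypt(ciphertext: int) -> int:
    """m = c^d mod n, using the private key (n, d)."""
    return pow(ciphertext, d, n)


message = 65
ciphertext = encrypt(message)
recovered = decrypt(ciphertext)
print(d, ciphertext, recovered)
```

Production systems rely on vetted cryptographic libraries, large keys, and padding schemes rather than raw exponentiation like this.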

Modeling and Simulation: Digital Twins of Reality

Mathematical notation is the critical tool for creating sophisticated models and simulations, including the “digital twins” that engineers keep synchronized with a specific physical asset. From simulating airflow over an aircraft wing to modeling financial markets or predicting climate change, these virtual representations allow engineers and scientists to test hypotheses, optimize designs, and predict outcomes while reducing the need for costly and time-consuming physical experiments. Finite element analysis (FEA), computational fluid dynamics (CFD), and agent-based modeling are all expressed through systems of equations and algorithms using mathematical notation. This enables iterative design processes, rapid prototyping, and the ability to explore a vast parameter space, leading to innovations in materials science, product development, and complex system optimization. The clarity and precision of the notation make each model’s assumptions explicit, so its results can be checked against the physical or economic realities it seeks to represent.

The Evolving Landscape of Mathematical Notation in Tech

As technology advances, so too does the complexity of the mathematical underpinnings required. The evolution of mathematical notation within tech is a continuous journey of standardization, interpretation, and accessibility, ensuring it remains a potent tool for future innovations.

Standardizing Complex Systems

In highly specialized fields within tech, proprietary or domain-specific notations often emerge to handle unique complexities. However, for broader adoption and interoperability, there’s a constant push towards standardization. The LaTeX typesetting system, the MathML markup standard, and tools for rendering mathematical expressions in web environments (like MathJax) all highlight the importance of consistent representation. This standardization allows research and development to be shared, verified, and built upon across different teams and organizations, accelerating the pace of innovation. For instance, in quantum computing, specialized notation for qubits, quantum gates, and superposition is crucial, and agreeing on standard ways to represent these will be important for the field’s wider adoption and development.
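As an illustration of what such shared representation looks like, here is how two expressions might be typed in LaTeX source: the Fourier transform under one common convention (others differ in where the factor of 2π goes) and a single-qubit state in Dirac notation. The `\lvert` and `\rvert` delimiters assume the amsmath package is loaded.

```latex
% Fourier transform, using the convention with 2*pi in the exponent
\[
  \hat{f}(\xi) = \int_{-\infty}^{\infty} f(x)\, e^{-2\pi i x \xi}\, dx
\]

% A single-qubit state in Dirac notation with its normalization condition
\[
  \lvert \psi \rangle = \alpha \lvert 0 \rangle + \beta \lvert 1 \rangle,
  \qquad \lvert \alpha \rvert^{2} + \lvert \beta \rvert^{2} = 1
\]
```

Because the source is plain text, the same expression can be version-controlled, rendered in a PDF, or displayed on a web page by a renderer such as MathJax.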

Visualizing and Interpreting Advanced Notations

With the rise of data visualization and interactive computing, the interpretation of complex mathematical notation is becoming more dynamic. Tools that can not only render mathematical expressions but also graph functions, animate simulations, and visually represent data structures derived from complex equations are transforming how engineers and scientists interact with mathematics. This visual interpretation aids in understanding, debugging, and communicating intricate mathematical models to a broader audience, fostering better collaboration between mathematicians, programmers, and even non-technical stakeholders. Interactive notebooks like Jupyter, which seamlessly integrate code, mathematical equations, and visualizations, exemplify this trend, making the abstract more tangible.

Bridging the Gap: Education and Accessibility

Despite its undeniable importance, the perceived barrier to entry for understanding advanced mathematical notation can be a bottleneck for innovation. Bridging this gap through improved educational methodologies and accessible tools is a growing area of focus. Initiatives that demystify mathematical notation and its applications in real-world tech scenarios are crucial for cultivating the next generation of innovators. Moreover, developing intuitive software interfaces that abstract away some of the notational complexity for users, while retaining the underlying mathematical rigor, allows for broader application of powerful tools. Ultimately, making mathematical notation more approachable, without compromising its precision, is key to democratizing advanced technology and ensuring its continued growth.

In conclusion, mathematical notation is not merely a collection of arcane symbols; it is the universal language of precision, efficiency, and abstraction that underpins virtually every aspect of modern technology and innovation. From the fundamental algorithms driving AI to the intricate control systems of autonomous vehicles, and the secure protocols guarding our digital lives, notation provides the framework for conceptualizing, developing, and communicating complex ideas. Its continuous evolution and integration into new technological paradigms underscore its role as a catalyst for future breakthroughs and as a cornerstone of technology and innovation.