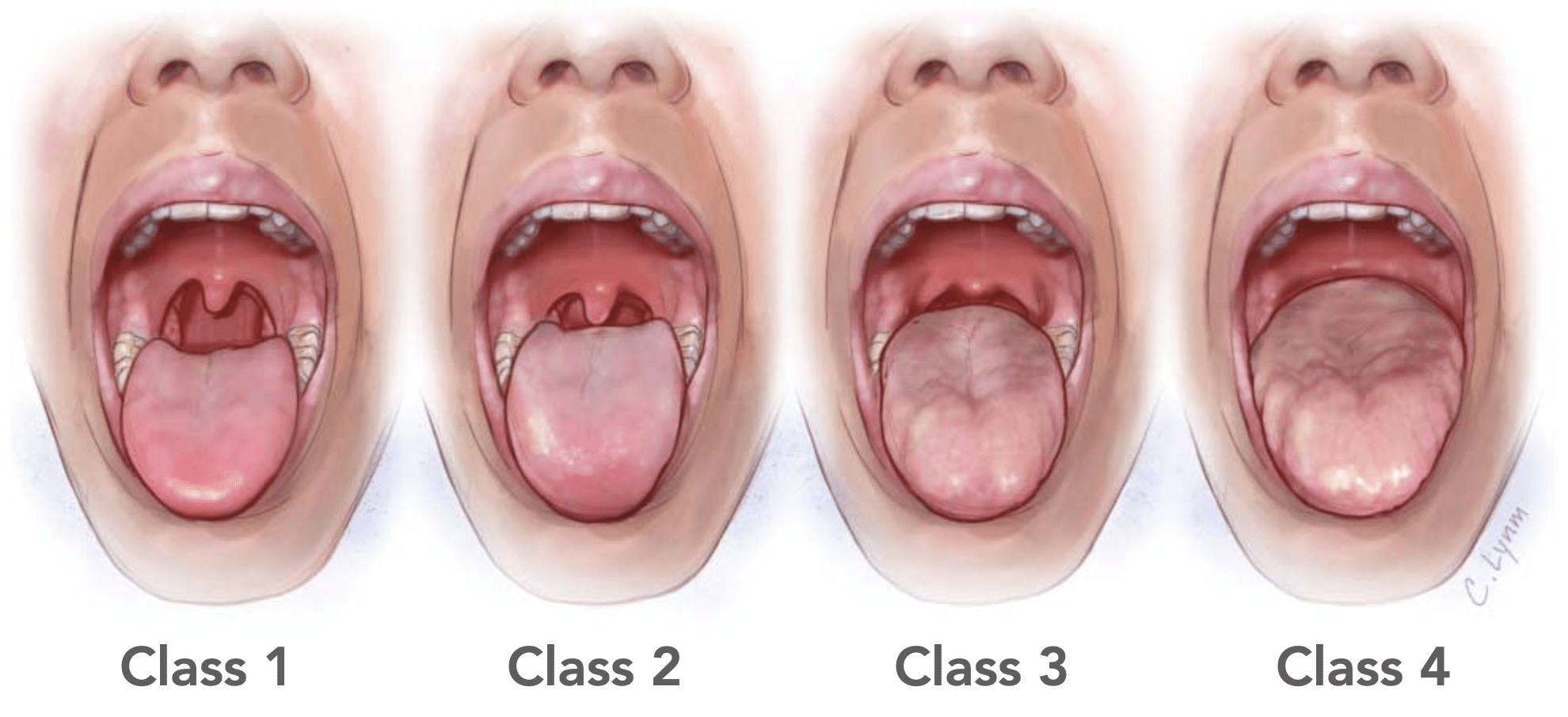

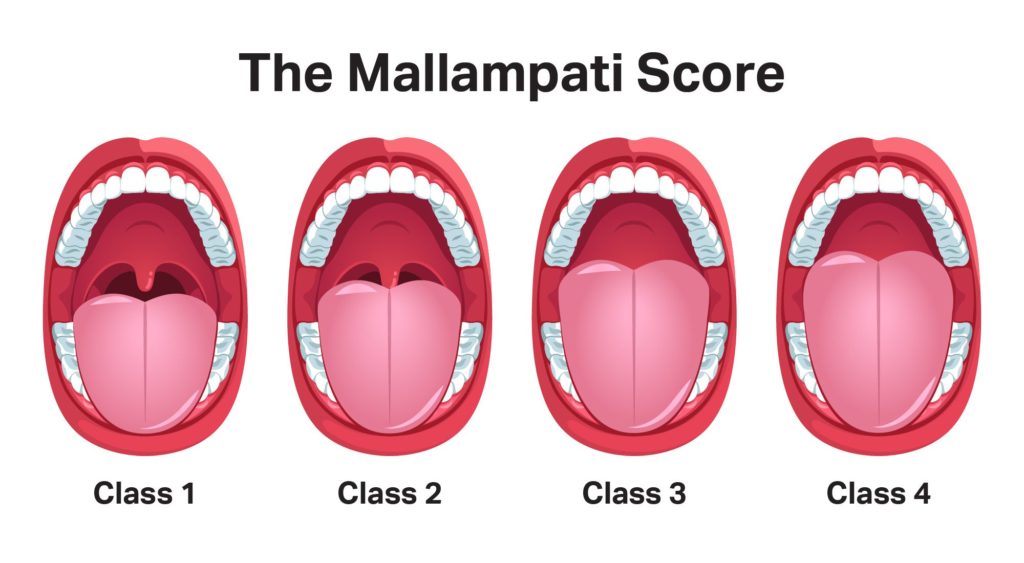

In the rapidly evolving world of uncrewed aerial vehicles (UAVs) and autonomous flight, the ability to accurately perceive and interpret the environment is paramount. In medicine, the Mallampati classification grades how much of the throat is visible in order to predict how difficult intubation will be. In the same way, the efficacy of a drone’s onboard perception systems, its “eyes” and “ears”, is profoundly influenced by ambient conditions. To provide a standardized framework for evaluating environmental visual clarity for flight technology, we introduce the concept of the Mallampati Score within this domain.

The Mallampati Score, repurposed and redefined for flight technology, is a conceptual classification system designed to quantify and categorize the prevailing visual conditions impacting a drone’s optical, LiDAR, and other perception-based navigation and obstacle avoidance systems. It is intended as a metric for flight planning, sensor configuration, and the dynamic adaptation of autonomous algorithms. By standardizing the assessment of environmental visual impedance, the Mallampati Score lets operators and AI systems make informed decisions about flight safety, operational feasibility, and data acquisition quality.

The Genesis of the Mallampati Score in Flight Technology

The inspiration for adapting a classification system like the Mallampati Score stems from the critical need for a universal language to describe environmental challenges in autonomous flight. While human pilots inherently assess visual conditions before and during flight, autonomous systems require predefined parameters and robust decision-making frameworks.

Bridging Human Perception to Autonomous Systems

Human pilots instinctively gauge factors like haze, glare, fog, and precipitation, making real-time adjustments to their flight path, speed, and even the decision to fly. For a drone’s flight controller and its suite of sensors, such nuanced human interpretation must be translated into quantifiable data. The original Mallampati classification, by systematically categorizing the visibility of anatomical structures, provides an elegant template for such an adaptation. We envision our Mallampati Score for flight technology as a systematic way to categorize the ‘visibility’ of the operational environment for a drone’s perception systems, enabling a more human-like, intuitive assessment to be integrated into autonomous decision-making processes. It aims to quantify the degree to which environmental factors obscure critical visual information, influencing everything from visual odometry accuracy to the performance of computer vision algorithms. Satellite positioning such as GPS does not depend on visual clarity, which is why it becomes the fallback as the score worsens.

The Need for Standardized Visual Assessment

Currently, flight planning often relies on generalized weather reports, which, while useful, lack the granular detail required for precise autonomous operations. Terms like “partly cloudy” or “light fog” are subjective and do not directly translate into predictable impacts on sensor performance or navigation accuracy. A standardized visual assessment system, like our adapted Mallampati Score, provides an objective, measurable scale. This allows for:

- Consistent Operational Planning: Teams can use a common language to discuss flight conditions globally.

- Predictive Performance Modeling: Developers can simulate sensor performance under specific Mallampati Score conditions, refining algorithms and hardware.

- Dynamic Flight Adaptation: Autonomous systems can, in real-time, assess their Mallampati Score and adjust flight parameters, sensor sensitivities, or even abort missions when conditions exceed safe thresholds.

- Post-Flight Analysis: Incident investigations can utilize the Mallampati Score to understand environmental contributions to operational anomalies.

Without such a system, the reliability and safety of autonomous drone operations remain susceptible to unpredictable environmental variables, making advanced applications like package delivery, infrastructure inspection, and precision agriculture less dependable.

Deconstructing the Mallampati Score Levels

Our Mallampati Score for Flight Technology is divided into four distinct classes, each representing a progressive level of environmental visual impedance. These classes are designed to be universally applicable across various drone platforms and sensor technologies, offering a graduated scale of challenge to autonomous perception.

Class I: Optimal Visual Clarity (Clear Line of Sight)

Description: This represents ideal flying conditions where environmental factors pose minimal to no obstruction to visual or optical sensors. The atmosphere is clear, with excellent visibility, minimal glare, and no precipitation.

Impact on Flight Technology:

- Sensor Performance: All optical, thermal, LiDAR, and ultrasonic sensors operate at peak efficiency. Computer vision algorithms exhibit high confidence in object detection, tracking, and environmental mapping.

- Navigation & Obstacle Avoidance: GPS positioning, which does not depend on visual conditions, is complemented by Visual Odometry (VO) and Simultaneous Localization and Mapping (SLAM) systems that function optimally, providing precise positional data and robust obstacle avoidance.

- Data Quality: High-resolution imagery and video data are crisp and artifact-free, suitable for detailed analysis and cinematic production.

Operational Recommendation: Unrestricted autonomous flight, ideal for precision tasks, high-speed operations, and data-intensive missions.

Class II: Minor Visual Obstructions (Haze, Glare)

Description: Conditions involve subtle environmental factors that introduce minor visual impedance. This might include light atmospheric haze, mild glare from the sun at certain angles, distant heat shimmer, or very light, scattered cloud cover.

Impact on Flight Technology:

- Sensor Performance: Optical sensors may experience slight reduction in contrast and range, particularly in challenging light angles. LiDAR returns might show minor noise. Thermal sensors are largely unaffected.

- Navigation & Obstacle Avoidance: GPS positioning is unaffected, but VO/SLAM systems might show slightly increased error margins or require more processing power to maintain accuracy, especially when tracking distant objects. There is a minor risk of false positives and false negatives in obstacle detection due to glare.

- Data Quality: Imagery might exhibit slight softness or reduced dynamic range. Post-processing might be required to enhance quality for critical applications.

Operational Recommendation: Most autonomous functions remain reliable, but mission planning should account for potential glare angles. Consider adjusting flight paths or sensor settings to mitigate minor visual interference.

Class III: Moderate Visual Impairment (Fog, Light Precipitation)

Description: Characterized by significant environmental factors that moderately impair visual clarity. This includes light to moderate fog, mist, light rain or snow, moderate dust, or significant atmospheric pollution. Visibility is reduced, making distant objects appear blurred or indistinct.

Impact on Flight Technology:

- Sensor Performance: Optical sensors (cameras) are significantly impacted, with reduced effective range and clarity. Color perception may be altered. LiDAR penetration is reduced, and returns can be sparse or noisy. Ultrasonic sensors may struggle with precipitation. Thermal sensors become more critical as primary perception tools.

- Navigation & Obstacle Avoidance: GPS and Inertial Measurement Units (IMUs) become primary navigation sources. VO/SLAM systems may struggle with feature extraction and tracking, leading to degraded localization accuracy and potential drift. Obstacle avoidance systems must rely more heavily on redundant sensors (e.g., radar, thermal) or operate at reduced confidence levels.

- Data Quality: Images and video are visibly degraded, with reduced detail, contrast, and color fidelity. Unsuitable for missions requiring high visual precision without significant post-processing or specialized sensors.

Operational Recommendation: Autonomous flight is feasible but requires caution. Mission parameters should be conservative (e.g., slower speeds, shorter standoff distances so that targets stay within the reduced sensor range, shorter mission ranges). Heavy reliance on non-optical sensors and redundant systems is advised, and an operator should be ready to take manual control.

Class IV: Severe Visual Degradation (Heavy Fog, Night, Smoke)

Description: Represents extremely challenging conditions where visual clarity is severely compromised or nonexistent. This includes heavy fog, dense smoke, white-out snowstorms, heavy rain, or complete darkness without external illumination. Objects are largely invisible to the human eye and conventional optical sensors.

Impact on Flight Technology:

- Sensor Performance: Optical sensors are effectively rendered blind or provide minimal usable data. LiDAR performance is severely degraded, with extremely limited range and high signal attenuation. Ultrasonic sensors are often overwhelmed by dense particulate matter or precipitation.

- Navigation & Obstacle Avoidance: GPS and IMU become almost solely responsible for primary navigation, making reliance on absolute positioning critical. VO/SLAM systems are largely non-functional. Obstacle avoidance relies entirely on radar, robust thermal imaging (if contrast exists), or pre-mapped environments with high positional accuracy.

- Data Quality: Optical data is generally unusable. Thermal imagery may provide some utility if temperature differentials are present, but overall data acquisition for visual assessment is compromised.

Operational Recommendation: Autonomous flight is highly risky and generally discouraged or impossible without specialized, purpose-built systems (e.g., radar-centric drones, military-grade thermal cameras). Only critical missions with pre-defined, obstacle-free paths and robust non-optical perception should be attempted. Often, this class implies mission postponement or cancellation for safety reasons.
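The four classes above can be captured as a small lookup structure that planning tools and onboard software share. The following is a minimal sketch, not a reference implementation: the names `VisualClass`, `ClassProfile`, `PROFILES`, and `recommendation_for` are hypothetical, and the short strings are paraphrases of the descriptions above.

```python
from dataclasses import dataclass
from enum import IntEnum


class VisualClass(IntEnum):
    """The four classes described above; a higher value means worse visibility."""
    I = 1    # Optimal visual clarity
    II = 2   # Minor visual obstructions (haze, glare)
    III = 3  # Moderate visual impairment (fog, light precipitation)
    IV = 4   # Severe visual degradation (heavy fog, night, smoke)


@dataclass(frozen=True)
class ClassProfile:
    title: str
    vo_slam_status: str   # paraphrased from the navigation bullets
    recommendation: str   # paraphrased from the operational recommendations


# Hypothetical lookup table; wording is condensed from the class descriptions.
PROFILES = {
    VisualClass.I: ClassProfile(
        "Optimal Visual Clarity", "optimal",
        "Unrestricted autonomous flight"),
    VisualClass.II: ClassProfile(
        "Minor Visual Obstructions", "slightly increased error",
        "Fly, but plan around glare angles"),
    VisualClass.III: ClassProfile(
        "Moderate Visual Impairment", "degraded, may drift",
        "Fly cautiously with conservative parameters"),
    VisualClass.IV: ClassProfile(
        "Severe Visual Degradation", "largely non-functional",
        "Postpone unless purpose-built for these conditions"),
}


def recommendation_for(visual_class: VisualClass) -> str:
    """Look up the operational recommendation for a class."""
    return PROFILES[visual_class].recommendation
```

Using an integer-backed enum keeps the classes ordered, so a check such as `current >= VisualClass.III` reads naturally wherever a threshold is needed.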

Application in Autonomous Navigation and Sensor Performance

The implementation of the Mallampati Score in flight technology is not merely an academic exercise; it has profound practical implications for the development and deployment of autonomous drone systems.

Optimizing Sensor Configuration and Redundancy

A key application of the Mallampati Score is guiding the optimal configuration and strategic redundancy of a drone’s sensor payload. When a drone operates in a predicted Class III environment, for instance, flight planners can instruct the system to prioritize radar or thermal cameras over optical ones, dynamically adjust exposure settings, or increase the sensitivity of specific sensors. Furthermore, knowing the expected Mallampati Score for a mission can inform the initial design phase, ensuring that drones destined for operations in typically challenging environments (e.g., industrial inspections in dusty conditions) are equipped with the appropriate array of complementary sensors. This proactive approach minimizes sensor blind spots and maximizes situational awareness regardless of environmental conditions.
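One way to picture this prioritization is as a table of per-sensor trust levels that is normalized into fusion weights for the current class. The sketch below assumes classes are encoded as integers 1 to 4; the sensor names, the numbers in `RAW_TRUST`, and both function names are illustrative assumptions, not measured or recommended values.

```python
from typing import Dict, List

# Illustrative, unnormalized trust per sensor for each class (1 to 4).
# These numbers are assumptions for the sketch, not measurements.
RAW_TRUST: Dict[int, Dict[str, float]] = {
    1: {"camera": 1.0, "lidar": 1.0, "thermal": 0.5, "radar": 0.5},
    2: {"camera": 0.8, "lidar": 0.9, "thermal": 0.5, "radar": 0.5},
    3: {"camera": 0.3, "lidar": 0.5, "thermal": 0.8, "radar": 1.0},
    4: {"camera": 0.0, "lidar": 0.1, "thermal": 0.6, "radar": 1.0},
}


def fusion_weights(visual_class: int) -> Dict[str, float]:
    """Normalize the trust levels for a class so the weights sum to 1."""
    raw = RAW_TRUST[visual_class]
    total = sum(raw.values())
    return {name: value / total for name, value in raw.items()}


def prioritized_sensors(visual_class: int) -> List[str]:
    """Return sensor names ordered from most to least trusted."""
    weights = fusion_weights(visual_class)
    return sorted(weights, key=weights.get, reverse=True)
```

With these placeholder values, `prioritized_sensors(3)` puts radar and thermal ahead of the camera, which mirrors the Class III example in the paragraph above.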

Enhancing Obstacle Avoidance Systems

Obstacle avoidance is perhaps the most critical safety function for autonomous drones, and the conditions that the Mallampati Score describes directly affect its effectiveness. In Class I conditions, standard stereo vision or LiDAR systems might suffice. However, as conditions degrade to Class III or IV, reliance must shift dramatically to other sensors. A system aware of its current Mallampati Score can:

- Adapt Detection Thresholds: Increase the sensitivity of radar or thermal sensors in low visibility.

- Modify Flight Paths: Opt for wider, more conservative flight paths or higher altitudes to compensate for reduced sensor range.

- Prioritize Known Obstacles: Focus processing power on detecting static, pre-mapped obstacles, or dynamically updating known potential hazards.

- Trigger Emergency Protocols: Initiate a safe landing or return-to-home sequence if the visual degradation exceeds the system’s safe operational limits, especially in areas with dynamic, unmapped obstacles.

This dynamic adaptability, driven by an understanding of environmental visual clarity, significantly enhances the robustness and reliability of obstacle avoidance systems, moving beyond static decision trees.
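The adaptations listed above could be expressed as a policy function that maps the current class to avoidance settings. This is a sketch under stated assumptions: classes are integers 1 to 4, and `AvoidancePolicy`, `avoidance_policy`, the speed caps, and the clearance distances are all hypothetical placeholders that a real platform would have to derive from its own testing.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class AvoidancePolicy:
    max_speed_mps: float              # speed cap (illustrative value)
    min_clearance_m: float            # buffer kept around obstacles (illustrative)
    primary_sensors: Tuple[str, ...]  # sensors the avoidance logic leans on
    return_to_home: bool              # True if the emergency protocol should trigger


def avoidance_policy(visual_class: int,
                     unmapped_obstacles_likely: bool) -> AvoidancePolicy:
    """Pick avoidance settings for a class; all numbers are placeholders."""
    if visual_class == 1:
        return AvoidancePolicy(15.0, 2.0, ("stereo", "lidar"), False)
    if visual_class == 2:
        return AvoidancePolicy(12.0, 3.0, ("stereo", "lidar"), False)
    if visual_class == 3:
        # Slower and wider, with non-optical sensors taking the lead.
        return AvoidancePolicy(6.0, 6.0, ("radar", "thermal", "lidar"), False)
    if visual_class == 4:
        # Abort only where dynamic, unmapped obstacles are expected;
        # otherwise continue slowly on radar and thermal.
        return AvoidancePolicy(3.0, 10.0, ("radar", "thermal"),
                               unmapped_obstacles_likely)
    raise ValueError(f"unknown class: {visual_class}")
```

Keeping the policy a pure function of the class and one context flag makes it easy to test on the ground before it is wired into a flight controller.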

Predictive Modeling for Flight Planning

The Mallampati Score can be integrated into predictive modeling tools used for flight planning. By translating meteorological forecasts into an expected Mallampati Score for the mission area and time window, operators can gain an informed estimate of mission feasibility and potential challenges.

- Mission Feasibility Assessment: Determine if a mission is even possible or safe under forecasted conditions, preventing costly and dangerous aborted flights.

- Resource Allocation: Allocate appropriate drone platforms (e.g., a radar-equipped drone for anticipated Class III conditions) and personnel (e.g., requiring more experienced human oversight for riskier flights).

- Dynamic Route Optimization: Pre-plan routes that minimize exposure to areas prone to specific visual impairments (e.g., avoiding low-lying areas known for fog in Class III scenarios, or flying higher to escape ground haze).

By incorporating the Mallampati Score, flight planning moves from reactive responses to proactive strategic decisions, significantly improving operational efficiency and safety across the entire drone ecosystem.
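A planning tool might turn a forecast into an expected class and then filter the fleet by what each platform is rated for. The sketch below is illustrative only: the visibility thresholds (200 m, 2,000 m, 10,000 m) are placeholder assumptions that this article does not define, and `expected_class`, `feasible_platforms`, and the fleet names are hypothetical.

```python
from typing import Dict, List


def expected_class(visibility_m: float, is_dark: bool = False) -> int:
    """Map forecast visibility to a class 1 to 4 using placeholder thresholds."""
    if visibility_m < 0:
        raise ValueError("visibility must be non-negative")
    if is_dark or visibility_m < 200:    # assumed cutoff for Class IV
        return 4
    if visibility_m < 2_000:             # assumed cutoff for Class III
        return 3
    if visibility_m < 10_000:            # assumed cutoff for Class II
        return 2
    return 1


def feasible_platforms(visibility_m: float, is_dark: bool,
                       fleet: Dict[str, int]) -> List[str]:
    """Return platforms whose rated worst class covers the forecast.

    `fleet` maps a platform name to the highest class it is rated to fly in.
    """
    needed = expected_class(visibility_m, is_dark)
    return sorted(name for name, rated in fleet.items() if rated >= needed)
```

For example, with a hypothetical fleet `{"camera_quad": 2, "radar_hex": 3}` and a forecast visibility of 1,500 m, only `radar_hex` is returned, matching the resource allocation example above.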

Challenges and Future of the Mallampati Score

While the conceptual Mallampati Score for Flight Technology offers immense potential, its practical implementation faces several challenges and holds exciting future prospects.

Dynamic Environmental Factors

The primary challenge lies in the dynamic and often unpredictable nature of environmental factors. Fog can roll in quickly, rain can intensify, and lighting conditions change with remarkable speed. For the Mallampati Score to be truly effective, drones must be able to assess their environment and classify the current score in real-time, or near real-time. This requires sophisticated onboard processing capabilities and highly reliable environmental sensing. Developing sensors that can accurately quantify atmospheric particulate matter, suspended water droplet density, and light diffusion across various spectra will be crucial for real-time Mallampati Score calculation. Furthermore, the localized nature of many environmental phenomena (e.g., a patch of fog or a pocket of dust) means that a single score for an entire mission area might not be sufficient; a localized, spatially varying Mallampati map could be required.
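A spatially varying map can be as simple as a grid of per-cell classes that candidate routes are scored against. The following sketch assumes a 2D grid of integers 1 to 4 and routes given as lists of `(row, col)` cells; `worst_class_along` and `pick_route` are hypothetical names, and scoring a route by its worst cell is one design choice among several.

```python
from typing import Dict, List, Sequence, Tuple

Cell = Tuple[int, int]  # (row, col) index into the class map


def worst_class_along(route: Sequence[Cell],
                      class_map: List[List[int]]) -> int:
    """Return the highest (worst) class among the cells a route crosses."""
    if not route:
        raise ValueError("route must contain at least one cell")
    return max(class_map[row][col] for row, col in route)


def pick_route(routes: Dict[str, Sequence[Cell]],
               class_map: List[List[int]]) -> str:
    """Prefer the route with the lowest worst-case class, then the shortest."""
    return min(
        routes,
        key=lambda name: (worst_class_along(routes[name], class_map),
                          len(routes[name])),
    )
```

With a map that has a Class III fog patch in the middle row, a longer detour through Class I cells wins over the direct path, because the worst cell dominates the comparison and route length only breaks ties.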

Integration with AI and Machine Learning

The future of the Mallampati Score is inextricably linked with advancements in AI and machine learning. Instead of relying on predefined thresholds, AI models could be trained on vast datasets of sensor data correlated with human-labeled Mallampati scores. This would enable drones to:

- Self-Assess Mallampati Score: AI algorithms could analyze raw sensor feeds (camera, LiDAR, thermal, radar) and autonomously classify the current environmental clarity, moving beyond simple meteorological data.

- Predict Mallampati Score Changes: Machine learning models could predict how the Mallampati Score might evolve along a flight path or over time, based on current conditions and local microclimates.

- Learn from Experience: Drones could learn from previous flights how different Mallampati scores impacted their performance, continuously refining their adaptive strategies.

The integration of AI would transform the Mallampati Score from a static classification into a dynamic, intelligent assessment tool, enabling unprecedented levels of adaptability in autonomous flight.
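As a toy illustration of self-assessment from labeled data, the sketch below uses a nearest-centroid classifier, a deliberately simple stand-in for the larger models this section envisions. Everything here is an assumption: the two features (image contrast and LiDAR return ratio, both scaled to 0 to 1), the function names, and the `TOY_SAMPLES` values, which are invented for the example and are not real measurements.

```python
from collections import defaultdict
from math import dist
from typing import Dict, Iterable, Tuple

Features = Tuple[float, ...]


def fit_centroids(samples: Iterable[Tuple[Features, int]]) -> Dict[int, Features]:
    """Average the feature vectors of each labeled class."""
    grouped = defaultdict(list)
    for features, label in samples:
        grouped[label].append(features)
    return {
        label: tuple(sum(column) / len(rows) for column in zip(*rows))
        for label, rows in grouped.items()
    }


def predict(centroids: Dict[int, Features], features: Features) -> int:
    """Return the class whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


# Invented toy data: (image_contrast, lidar_return_ratio) -> human-labeled class.
TOY_SAMPLES = [
    ((0.95, 0.98), 1), ((0.90, 0.95), 1),
    ((0.75, 0.90), 2), ((0.70, 0.85), 2),
    ((0.40, 0.55), 3), ((0.35, 0.50), 3),
    ((0.05, 0.10), 4), ((0.10, 0.15), 4),
]
```

Calling `predict(fit_centroids(TOY_SAMPLES), (0.38, 0.50))` lands on class 3 with this toy data. A production system would need far richer features and a properly validated model.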

Towards Real-time Adaptive Flight Systems

Ultimately, the goal is to develop real-time adaptive flight systems that seamlessly integrate the dynamically assessed Mallampati Score. This would mean:

- Automatic Flight Parameter Adjustment: As the Mallampati Score changes, the drone could instantly adjust its speed, altitude, sensor fusion weights, and even choose alternative navigation strategies.

- Intelligent Sensor Management: The system could dynamically power on/off or reconfigure sensors, optimizing power consumption and processing load while maintaining safety and data quality.

- Proactive Risk Mitigation: By predicting future score changes, the drone could proactively take mitigating actions, such as changing course or initiating a safe landing before conditions become critical.
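Proactive mitigation needs some estimate of how soon conditions will cross a limit. One simple option, sketched below, is to fit a straight line to recent visibility estimates and extrapolate to a threshold. This is an assumption-laden illustration: the function names are hypothetical, real visibility rarely changes linearly, and the threshold and lead time would come from the platform's own safety case.

```python
from typing import Optional, Sequence, Tuple


def seconds_until_threshold(samples: Sequence[Tuple[float, float]],
                            threshold_m: float) -> Optional[float]:
    """Extrapolate a least-squares line through (time_s, visibility_m) samples.

    Returns seconds from the latest sample until visibility is predicted to
    fall to `threshold_m`, 0.0 if it is already there, or None if visibility
    is not trending downward (or there is too little data).
    """
    if samples and samples[-1][1] <= threshold_m:
        return 0.0
    n = len(samples)
    if n < 2:
        return None
    mean_t = sum(t for t, _ in samples) / n
    mean_v = sum(v for _, v in samples) / n
    var_t = sum((t - mean_t) ** 2 for t, _ in samples)
    if var_t == 0:
        return None
    slope = sum((t - mean_t) * (v - mean_v) for t, v in samples) / var_t
    if slope >= 0:
        return None
    latest_t = samples[-1][0]
    fitted_now = mean_v + slope * (latest_t - mean_t)
    return max(0.0, (threshold_m - fitted_now) / slope)


def should_mitigate(samples: Sequence[Tuple[float, float]],
                    threshold_m: float, lead_time_s: float) -> bool:
    """True if the threshold is predicted to be reached within the lead time."""
    eta = seconds_until_threshold(samples, threshold_m)
    return eta is not None and eta <= lead_time_s
```

For instance, visibility falling from 3,000 m to 2,600 m over 20 seconds extrapolates to a 2,000 m threshold 30 seconds later, so a drone that needs 60 seconds to land safely would start mitigating now.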

The Mallampati Score, when fully integrated into such intelligent systems, promises to unlock a new era of robust, reliable, and truly autonomous drone operations, making complex missions in challenging environments not just possible, but consistently safe and efficient.