As communication moves online, libel and slander have taken on new dimensions. Defamation was traditionally understood in terms of print, broadcast, and the spoken word, but the internet, social media, artificial intelligence, and sophisticated data collection methods (including drones and remote sensing) have reshaped how defamation law applies. Understanding what libel and slander mean today requires a grasp of the legal definitions and an appreciation of how technology affects the creation, dissemination, and legal consequences of potentially defamatory statements. This article covers the core principles of defamation, then explores how they interact with the tools and platforms of the digital age.

Understanding Defamation: The Traditional Framework

At its core, defamation is the act of harming the reputation of another by making a false statement to a third person. Defamation law protects individuals and entities from falsehoods that could cause them significant professional, personal, or financial damage. The law recognizes two primary forms of defamation: libel and slander.

Libel vs. Slander: Key Distinctions

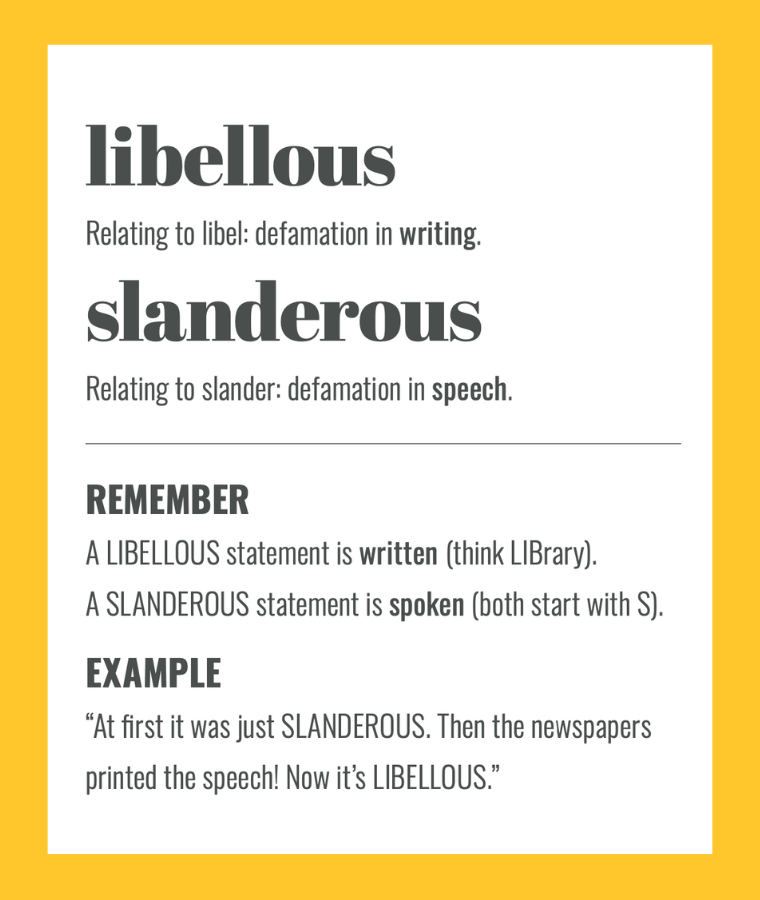

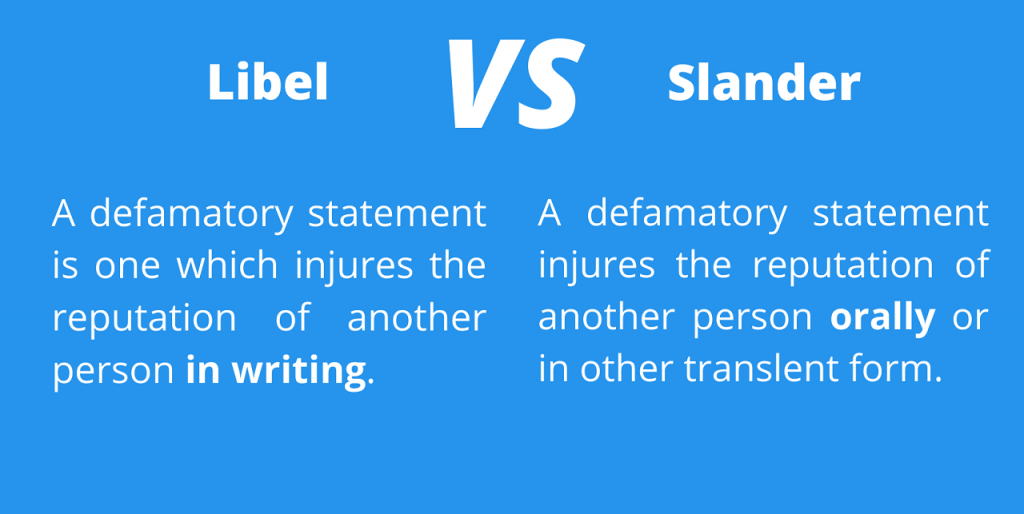

Historically, the distinction between libel and slander hinged primarily on the medium through which the defamatory statement was conveyed:

- Libel refers to defamation in a permanent or visible form. This traditionally included written words (newspapers, magazines, books), printed images, and signs; many jurisdictions later extended it to radio and television broadcasts. Because libel is permanent, damage was often presumed, so a plaintiff did not have to prove specific financial loss. Its enduring nature also allowed for wider dissemination and a longer-lasting impact on reputation.

- Slander refers to defamation in a transient or spoken form. This typically involved oral statements, gestures, or other non-permanent expressions. Because slander was often fleeting and had a more limited audience, plaintiffs generally had to prove “special damages” – actual, quantifiable financial loss – resulting directly from the defamatory statement. Certain categories of slander, deemed “slander per se” (e.g., falsely accusing someone of a crime, implying professional incompetence, or alleging a loathsome disease), were exceptions where damages could be presumed.

Online, these distinctions have become increasingly blurred. A tweet, a social media post, a YouTube video, or a podcast recording may begin as casual or spoken expression, yet it often has the permanence and reach traditionally associated with libel. Many jurisdictions now treat digital defamation as libel, regardless of its original format, because of its potential for lasting impact and global dissemination.

Essential Elements of a Defamation Claim

Regardless of whether it’s classified as libel or slander, a plaintiff typically must prove several key elements to establish a defamation claim:

- A False Statement of Fact: The statement must be presented as a fact, not merely an opinion, and it must be untrue. In most jurisdictions, truth is a complete defense to defamation.

- Publication/Communication to a Third Party: The defamatory statement must have been communicated to at least one person other than the plaintiff and the defendant. This element is crucial in the digital age, as online content inherently involves communication to a broad “third party” audience.

- Identification of the Plaintiff: The statement must be “of and concerning” the plaintiff, meaning a reasonable person could understand that the statement refers to them. This can be direct (naming the person) or indirect (describing them uniquely).

- Harm to Reputation: The statement must cause injury to the plaintiff’s reputation, exposing them to hatred, contempt, ridicule, or causing them to be shunned or injured in their business or profession.

- Fault: The defendant must have acted with a certain level of fault regarding the falsity of the statement. For private figures, this typically means negligence (failing to exercise reasonable care to ascertain the truth). For public officials and public figures, a higher standard applies under U.S. law: “actual malice” must be proven, meaning the defendant knew the statement was false or acted with reckless disregard for its truth or falsity. Where a private figure sues over a matter of public concern, the required showing varies by jurisdiction and by the type of damages sought.
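The elements above work like a checklist: a claim fails if any one of them is missing. The sketch below models that reasoning in Python. It is a deliberately simplified illustration, not a statement of any jurisdiction's law. The names `Claim`, `PlaintiffType`, `Fault`, `required_fault`, and `missing_elements` are hypothetical, and the code assumes only two plaintiff categories and that a showing of actual malice also satisfies a negligence requirement.

```python
from dataclasses import dataclass
from enum import Enum


class PlaintiffType(Enum):
    PRIVATE_FIGURE = "private figure"
    PUBLIC_FIGURE = "public figure"


class Fault(Enum):
    # Ordered by severity; this ordering is an assumption of the sketch.
    NONE = 0
    NEGLIGENCE = 1
    ACTUAL_MALICE = 2


@dataclass
class Claim:
    false_statement_of_fact: bool
    published_to_third_party: bool
    identifies_plaintiff: bool
    harms_reputation: bool
    plaintiff_type: PlaintiffType
    fault_shown: Fault


def required_fault(plaintiff_type: PlaintiffType) -> Fault:
    """Return the minimum fault level the plaintiff must show (simplified)."""
    if plaintiff_type is PlaintiffType.PUBLIC_FIGURE:
        return Fault.ACTUAL_MALICE
    return Fault.NEGLIGENCE


def missing_elements(claim: Claim) -> list[str]:
    """List the elements that the claim, as described, does not satisfy."""
    missing = []
    if not claim.false_statement_of_fact:
        missing.append("false statement of fact")
    if not claim.published_to_third_party:
        missing.append("publication to a third party")
    if not claim.identifies_plaintiff:
        missing.append("identification of the plaintiff")
    if not claim.harms_reputation:
        missing.append("harm to reputation")
    if claim.fault_shown.value < required_fault(claim.plaintiff_type).value:
        missing.append("fault")
    return missing


# A public figure who can show only negligence fails on the fault element.
claim = Claim(True, True, True, True, PlaintiffType.PUBLIC_FIGURE, Fault.NEGLIGENCE)
print(missing_elements(claim))
```

The same facts with `PlaintiffType.PRIVATE_FIGURE` would return an empty list, which shows how the fault element alone can decide a case.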

The Digital Revolution and Its Impact on Defamation

The internet and its attendant technologies have fundamentally altered the dynamics of communication, giving rise to new challenges and considerations for defamation law. The speed, reach, and permanence of digital content have transformed how defamatory statements are made and how they inflict harm.

Amplification and Reach: The Internet’s Role

Before the internet, a defamatory statement typically had a limited reach—the circulation of a newspaper, the audience of a TV show, or the attendees of a meeting. Today, a single post on social media, an article on a blog, or a video uploaded to a sharing platform can instantaneously reach millions globally. This unparalleled amplification means that a false statement can inflict widespread and irreparable damage to a reputation in mere moments, often before the subject is even aware of its existence. The virality of content means that even if an initial defamatory statement is removed, screenshots, re-posts, and shares can ensure its persistent presence online.

Anonymity and Jurisdiction Challenges

The perceived anonymity offered by the internet complicates defamation claims. While pseudonyms and VPNs can mask identities, legal avenues exist to unmask anonymous defamers. However, this process can be lengthy, costly, and not always successful. Furthermore, the global nature of the internet poses significant jurisdictional challenges. A defamatory statement made in one country can be accessed and cause harm in another, raising complex questions about which country’s laws apply and where a lawsuit can be filed. This international dimension often requires a sophisticated understanding of cross-border legal frameworks, a challenge that rarely arose on this scale in traditional defamation cases.

New Technologies: Drones, AI, and the Evolving Landscape of Defamation

The rapid advancements in areas like autonomous systems, artificial intelligence, and advanced data collection tools (such as drones with sophisticated sensors) introduce novel scenarios and complexities into the realm of defamation. These technologies offer unprecedented capabilities but also carry potential for misuse that can lead to reputational harm.

Drone-Captured Data and Misinformation

Drones are increasingly used for everything from aerial photography and cinematography to mapping, remote sensing, and surveillance. While incredibly beneficial, the data they collect (images, videos, and spatial information) can be weaponized for defamation.

- Miscontextualized Footage: Drone footage, while appearing objective, can be deliberately or accidentally manipulated or presented out of context to convey a false and damaging narrative. For instance, footage of an individual or property could be edited, selectively shown, or accompanied by false commentary to imply wrongdoing, illegal activity, or unethical behavior.

- False Claims through AI Analysis: As AI capabilities in image and video analysis advance, there’s a risk of AI algorithms misinterpreting drone data, or worse, being intentionally fed biased data to generate false “insights” that are then presented as fact. For example, AI-powered analysis of drone-captured thermal imagery could be falsely used to “prove” certain activities are occurring on a property, leading to defamatory accusations.

- Privacy Invasion Leading to Defamation: While privacy and defamation are distinct legal areas, privacy violations facilitated by drone technology can sometimes precipitate defamation. Illegally captured drone footage of private moments, if published with false accompanying commentary, could constitute both a privacy breach and a defamatory statement.

AI-Generated Content and Deepfakes

Perhaps one of the most significant challenges that new technology poses for defamation law is the rise of AI-generated content, particularly deepfakes. These synthetic media can present convincing but entirely fabricated images, audio, or video of individuals saying or doing things they never did.

- Fabricated Statements: Deepfakes allow for the creation of completely false “statements” attributed to a person, making them appear to utter defamatory remarks, endorse controversial views, or engage in illicit activities. The convincing nature of deepfakes makes it incredibly difficult for the average viewer to discern truth from falsehood, leading to rapid and widespread reputational damage.

- Attribution and Responsibility: Determining who is responsible for creating and disseminating a deepfake—and therefore liable for defamation—is a complex legal puzzle. Is it the creator of the deepfake? The platform hosting it? The individual who initially shared it? The algorithms themselves? This blurring of lines challenges traditional notions of fault and publication.

- Pre-emptive Strikes: The potential for deepfakes to be used for defamation raises questions about the need for pre-emptive legal measures or technological solutions to detect and flag synthetic media, preventing its spread before it causes harm.

The Blurring Lines of Responsibility

Online, the traditional understanding of a “publisher” or “communicator” becomes ambiguous. Is a social media platform a publisher when users post defamatory content? What about an AI system that generates text or images? Many jurisdictions offer “safe harbor” protections to platforms that merely host user-generated content (in the United States, Section 230 of the Communications Decency Act is the best-known example), but the increasing involvement of algorithms in content curation, recommendation, and even generation complicates these protections. If an AI system, for instance, synthesizes information (perhaps from drone data and other sources) and inadvertently generates a false and damaging statement, where does the liability lie?

Protecting Reputation in a Technologically Advanced World

Navigating the complexities of libel and slander in an age of rapid technological change requires both legal acumen and a proactive understanding of digital ethics and technological safeguards.

Proactive Measures and Digital Ethics

Individuals and organizations must adopt proactive strategies to protect their reputations in the digital sphere. This includes:

- Digital Footprint Management: Actively monitoring one’s online presence, utilizing tools to track mentions and content related to oneself or one’s brand.

- Fact-Checking and Verification: Promoting rigorous fact-checking before sharing information, especially in professional contexts involving data from drones or other remote sensing technologies.

- Ethical AI Development: Developers of AI and autonomous systems bear a responsibility to integrate ethical considerations, including safeguards against the generation or propagation of false and harmful content, into their design from the outset.

- Transparency and Disclosure: Clearly labeling AI-generated content and being transparent about the source and context of digital information, particularly when derived from automated collection methods like drones.
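As one illustration of digital footprint management, the sketch below scans a batch of already-collected posts for mentions of a name or brand. It is a minimal example under stated assumptions: the `posts` structure (dictionaries with `url` and `text` keys), the function name `find_mentions`, and the sample data are all hypothetical, and a real monitoring tool would also need to collect the posts and handle nicknames or misspellings.

```python
import re


def find_mentions(posts, names):
    """Return the posts whose text mentions any of the given names.

    Matching is case-insensitive and uses word boundaries, so this sketch
    assumes each name starts and ends with a letter or digit.
    """
    patterns = {
        name: re.compile(r"\b" + re.escape(name) + r"\b", re.IGNORECASE)
        for name in names
    }
    hits = []
    for post in posts:
        matched = [name for name, pattern in patterns.items()
                   if pattern.search(post["text"])]
        if matched:
            hits.append({"url": post["url"], "matched": matched})
    return hits


# Hypothetical sample data for illustration only.
posts = [
    {"url": "https://example.com/1", "text": "ACME Surveying faked its drone survey"},
    {"url": "https://example.com/2", "text": "Nothing relevant in this post"},
]
for hit in find_mentions(posts, ["Acme Surveying"]):
    print(hit["url"], hit["matched"])
```

A match only flags content for human review. Whether a flagged post is actually false or defamatory is a judgment the code cannot make.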

Legal Recourse in the Digital Age

Despite the challenges, legal recourse for defamation remains a vital tool. However, it often requires a specialized approach:

- Swift Action: Given the speed of digital dissemination, prompt action is often critical. This might involve issuing cease-and-desist letters, requesting takedowns from platforms, or seeking injunctive relief.

- Digital Forensics: Proving defamation in the digital age often necessitates sophisticated digital forensics to trace origins, demonstrate publication, and quantify harm.

- Evolving Legal Interpretations: Courts continually grapple with applying existing defamation laws to new technologies. Precedent is being set regarding platform liability, the definition of “publication” for AI-generated content, and the standards for fault in a hyper-connected world.

- International Cooperation: For cross-border defamation, international legal cooperation may be required to unmask anonymous defendants or enforce judgments.
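Because online content can be edited or deleted quickly, preserving what was published and when is often the first practical step in the measures above. The sketch below shows one possible way to keep a tamper-evident log of captured content using SHA-256 hashes, with each entry chained to the previous one. It is an illustrative assumption, not a forensic standard: the function names, the log format, and the sample URL are hypothetical, and whether such a log is admissible as evidence depends on the jurisdiction.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _hash_entry(entry):
    """Hash every field of an entry except its own entry_hash."""
    payload = {k: v for k, v in entry.items() if k != "entry_hash"}
    serialized = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(serialized).hexdigest()


def append_entry(log, url, captured_at, content):
    """Append a record of captured content (bytes) to the log."""
    entry = {
        "url": url,
        "captured_at": captured_at,
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS_HASH,
    }
    entry["entry_hash"] = _hash_entry(entry)
    log.append(entry)
    return entry


def verify_log(log):
    """Return True if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["entry_hash"] != _hash_entry(entry):
            return False
        prev_hash = entry["entry_hash"]
    return True


# Hypothetical captures for illustration only.
log = []
append_entry(log, "https://example.com/post/1", "2024-01-01T10:00:00Z", b"original post text")
append_entry(log, "https://example.com/post/1", "2024-01-02T10:00:00Z", b"edited post text")
print(verify_log(log))
```

Chaining means that changing any earlier entry invalidates every later hash, so the log can show that the captured record itself was not modified after the fact.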

In conclusion, the question of what libel and slander are is no longer confined to legal textbooks. It is an evolving challenge at the intersection of law, ethics, and technology. As drones collect ever more data, AI generates increasingly sophisticated content, and digital platforms enable instantaneous global communication, understanding, preventing, and addressing defamation becomes essential for individuals, businesses, and society at large.