In the rapidly evolving world of drone technology, where innovation pushes the boundaries of autonomous flight, AI-driven capabilities, and sophisticated data collection, the reliability of software is paramount. Every advanced feature, from precise GPS navigation to real-time object avoidance and intelligent mapping, depends on many software components interacting correctly. Within this ecosystem, integration testing is not merely a best practice but a prerequisite for the safety, performance, and successful deployment of next-generation unmanned aerial vehicles (UAVs).

Integration testing is a critical phase in the software development lifecycle that focuses on exposing defects in the interfaces and interactions between integrated software modules. Unlike unit testing, which scrutinizes individual components in isolation, integration testing validates that these distinct units, once combined, function correctly as a group. For drone technology, this means verifying that the flight control algorithm communicates effectively with sensor data processing units, that the AI path planning module seamlessly hands off commands to the propulsion system, and that ground control station (GCS) software accurately interprets telemetry from the aircraft. Without robust integration testing, even perfectly crafted individual modules can lead to catastrophic system failures when combined, turning potential innovation into operational hazards.

The Imperative of Integration Testing in Drone Software Development

The very nature of drone technology—operating in real-world environments, often performing critical tasks, and interacting with complex physical systems—elevates integration testing from a mere quality assurance step to a fundamental safety and performance requirement.

Beyond Unit Purity: The Reality of Interconnected Systems

Modern drone software is seldom a monolithic block; it’s a distributed system comprising numerous modules, microservices, and specialized components. Consider the software stack of an autonomous drone designed for infrastructure inspection: it includes modules for flight stabilization, obstacle detection using multiple sensor types (Lidar, camera, ultrasonic), AI for anomaly identification, GPS for precise positioning, communication protocols for ground control, and data logging for post-flight analysis. Each of these modules might pass its unit tests with flying colors, but the real test is whether they work together. Does the object avoidance module correctly interpret sensor data from different sources? Does it integrate seamlessly with the flight controller to execute evasive maneuvers? Does the AI vision system correctly tag anomalies and log their coordinates, and are those coordinates then transmitted intact to the GCS? Integration testing answers these questions, revealing defects that only manifest when components interact: interface mismatches, incorrect data formats or units, timing issues, and unexpected side effects between modules.

Why Drone Software Demands Robust Integration

The consequences of software integration failures in drones can range from mild operational inefficiencies to severe incidents. A simple communication glitch between a navigation module and the autopilot could cause a drone to deviate from its flight path, leading to data loss or even collision. In more advanced scenarios involving autonomous missions or swarm operations, poorly integrated AI modules could produce uncoordinated behavior, incorrect decisions, or failed mission objectives. Even the “fail-safe” mechanisms crucial for drone operation rely on the flawless integration of monitoring and response systems. Without comprehensive integration testing, developers are essentially crossing their fingers, hoping that individual pieces will magically form a functional whole. A safety-critical industry simply cannot afford such an approach.

Mitigating Risks in Complex Autonomous Systems

Autonomous drones are inherently complex systems where software directly interfaces with hardware, environmental data, and often, human operators. This complexity introduces numerous points of potential failure during integration. Integration testing acts as a critical risk mitigation strategy. By systematically verifying the interfaces and interactions, it helps identify and rectify issues early in the development cycle, when they are significantly cheaper and easier to fix. Catching an integration bug that could lead to an autonomous drone crashing during a simulated flight is immeasurably better than discovering it during an actual mission. This proactive approach not only saves development costs and time but, more importantly, enhances the safety of drone operations and builds trust in the reliability of advanced drone technologies for critical applications like search and rescue, logistics, or environmental monitoring.

Core Principles and Methodologies of Integration Testing for UAVs

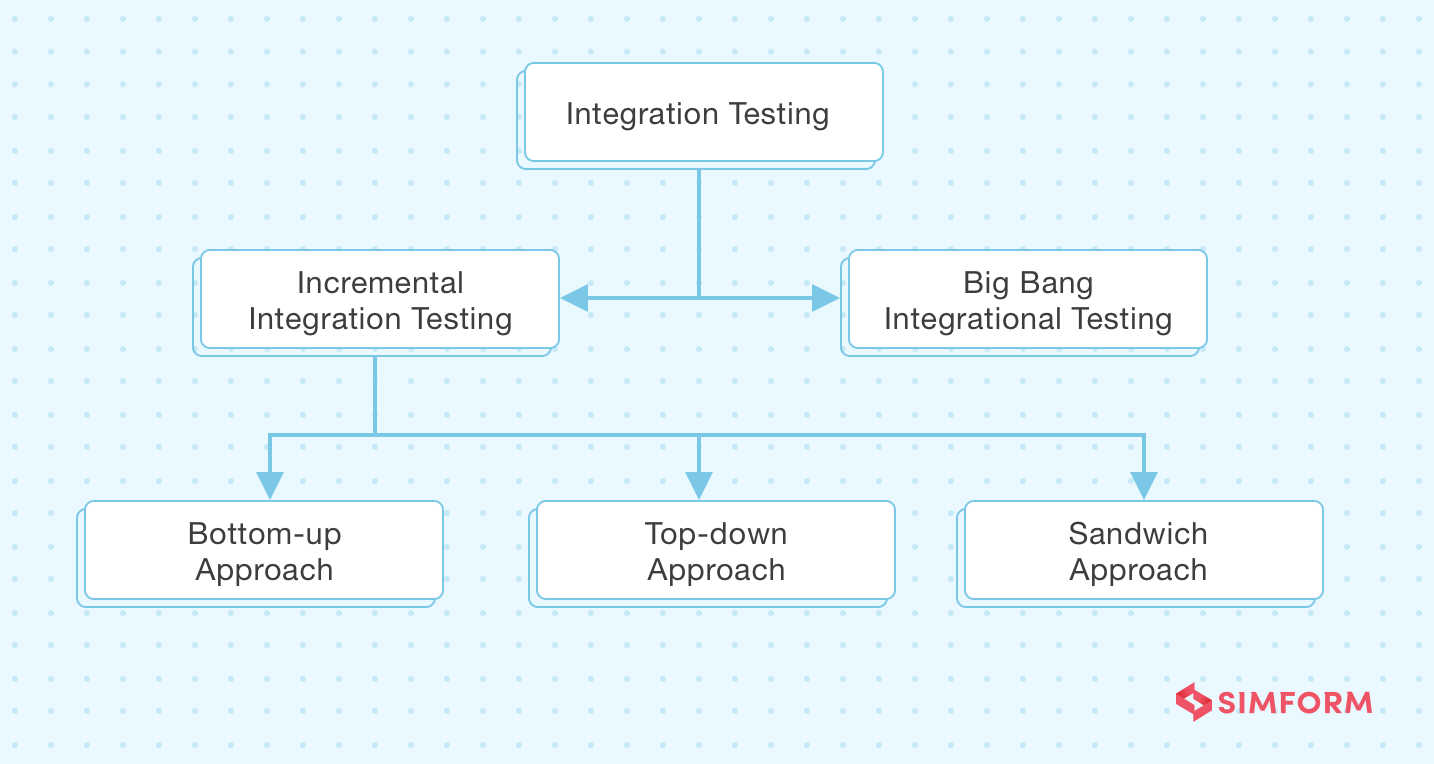

Implementing effective integration testing for drone software requires a strategic approach, considering the dependencies and complexity involved. Various methodologies exist, each with its strengths and suitable applications depending on the architecture and development phase.

Top-Down vs. Bottom-Up: Strategic Approaches

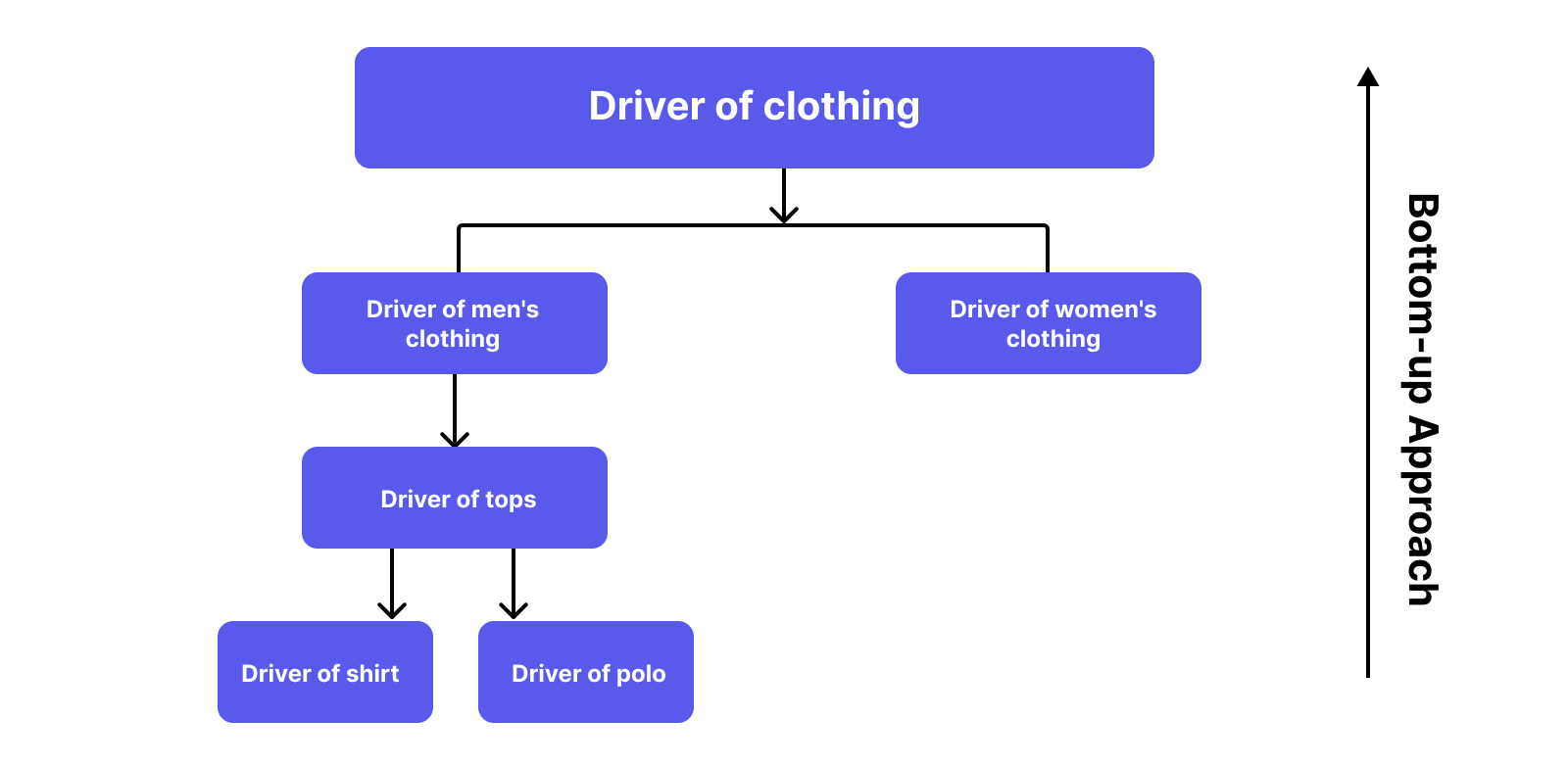

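- Bottom-Up Integration: This approach starts by testing the lowest-level modules first. Once these are integrated and tested, they are combined with the next level up, and the process continues until all modules are integrated. Because the higher-level callers do not exist yet, temporary test drivers (small harness programs, not to be confused with hardware drivers) invoke the modules under test.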

- Application in Drones: This is often effective for hardware-centric drone systems, where low-level drivers (e.g., sensor interfaces, motor controllers) are integrated and verified first, then built upon by higher-level flight control logic. It ensures that foundational components are solid before more complex systems are layered on.

- Top-Down Integration: Conversely, this method begins with the highest-level modules (e.g., the main control unit, mission planner) and progressively integrates lower-level modules. Stubs (dummy programs) are used to simulate the behavior of modules that are not yet integrated.

- Application in Drones: Ideal for scenarios where the overall system architecture or user interface (e.g., Ground Control Station) is critical early on. You might test the mission planning interface and its communication with a “stub” flight controller, allowing early validation of high-level workflows before lower-level components are fully developed.
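
As a minimal sketch of the top-down idea, the example below tests a high-level mission planner against a stub flight controller. Everything here is hypothetical and for illustration only: `Waypoint`, `MissionPlanner`, `StubFlightController`, and the `upload_waypoint` method are not taken from any real autopilot API, and the 120 m ceiling is an arbitrary value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Waypoint:
    lat: float
    lon: float
    alt_m: float


class StubFlightController:
    """Stand-in for a flight controller that is not integrated yet.

    It acknowledges every waypoint and remembers what it was sent.
    """

    def __init__(self):
        self.received = []

    def upload_waypoint(self, wp: Waypoint) -> bool:
        self.received.append(wp)
        return True  # canned response: always acknowledge


class MissionPlanner:
    """Hypothetical high-level module under test."""

    def __init__(self, flight_controller, max_alt_m: float = 120.0):
        self.fc = flight_controller
        self.max_alt_m = max_alt_m  # arbitrary ceiling for this sketch

    def upload_mission(self, waypoints) -> int:
        """Send waypoints in order, clamping altitude; return the number acknowledged."""
        acked = 0
        for wp in waypoints:
            safe = Waypoint(wp.lat, wp.lon, min(wp.alt_m, self.max_alt_m))
            if self.fc.upload_waypoint(safe):
                acked += 1
        return acked


def test_planner_uploads_clamped_mission():
    fc = StubFlightController()
    planner = MissionPlanner(fc, max_alt_m=120.0)
    mission = [Waypoint(47.0, 8.0, 50.0), Waypoint(47.001, 8.001, 150.0)]

    assert planner.upload_mission(mission) == 2
    assert [wp.alt_m for wp in fc.received] == [50.0, 120.0]


test_planner_uploads_clamped_mission()
```

Once the real flight controller interface is available, the same test can be pointed at it (or at a simulator) in place of the stub, which is what makes the early high-level test worth keeping.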

The Sandwich Approach: Balancing Efficiency and Coverage

Often considered a hybrid, the sandwich approach combines elements of both top-down and bottom-up strategies. It integrates higher-level modules using a top-down approach and lower-level modules using a bottom-up approach, meeting in the middle.

- Application in Drones: This is particularly useful for complex drone software where there’s a clear hierarchical structure. For example, testing core flight control algorithms (middle layer) might happen simultaneously with both the high-level mission planning/AI systems (top layer) using stubs, and low-level sensor/actuator drivers (bottom layer) being integrated upwards. This parallelization can accelerate development while maintaining comprehensive coverage.

Stubbing and Mocking: Simulating the Drone Environment

A crucial technique in integration testing, especially for drone software that often interfaces with physical hardware or external services, is the use of stubs and mocks.

- Stubs: These are simple dummy programs that mimic the behavior of a component that isn’t yet available or is too complex to use in a test. They provide canned responses to method calls.

- Application in Drones: When testing the AI-driven obstacle avoidance module, you might use stubs to simulate sensor readings (Lidar, camera) without needing actual sensors or a physical drone. This allows the AI’s decision-making logic and its interface with the flight controller to be tested in isolation from hardware dependencies.

- Mocks: Mocks go a step further than stubs. In addition to returning predefined data, they record how they were called, so a test can verify the interactions between components and not just the final result.

- Application in Drones: To test the integration of a mission planner with a flight logging service, a mock logging service could be used. The test could then verify that the mission planner correctly called the logging service methods with the expected flight parameters, even if the actual service is an external cloud API or not yet fully implemented.

These techniques allow developers to isolate the specific interaction being tested, controlling external factors and ensuring repeatable test results, which is vital for the deterministic behavior required by autonomous systems.
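
The difference between the two can be sketched with Python's standard `unittest.mock` module. In this illustrative example, `StubLidar`, `ObstacleAvoidance`, `send_command`, and `log_event` are hypothetical names invented for the sketch; only `Mock`, `call`, and the `assert_called_once_with` method come from the standard library.

```python
from unittest.mock import Mock, call


class StubLidar:
    """Stub: returns a canned range reading, no hardware involved."""

    def __init__(self, range_m: float):
        self._range_m = range_m

    def read_range_m(self) -> float:
        return self._range_m


class ObstacleAvoidance:
    """Hypothetical module under test: picks a command, sends it, and logs it."""

    def __init__(self, lidar, flight_controller, logger, min_range_m: float = 5.0):
        self.lidar = lidar
        self.fc = flight_controller
        self.logger = logger
        self.min_range_m = min_range_m

    def step(self) -> str:
        distance = self.lidar.read_range_m()
        command = "BRAKE" if distance < self.min_range_m else "CONTINUE"
        self.fc.send_command(command)
        self.logger.log_event("avoidance", command=command, range_m=distance)
        return command


def test_brakes_and_logs_when_obstacle_is_close():
    fc = Mock()      # mock: records calls so the test can verify them
    logger = Mock()
    module = ObstacleAvoidance(StubLidar(range_m=2.0), fc, logger)

    assert module.step() == "BRAKE"
    fc.send_command.assert_called_once_with("BRAKE")
    logger.log_event.assert_called_once_with("avoidance", command="BRAKE", range_m=2.0)


def test_continues_when_path_is_clear():
    fc = Mock()
    module = ObstacleAvoidance(StubLidar(range_m=30.0), fc, Mock())

    assert module.step() == "CONTINUE"
    assert fc.send_command.call_args_list == [call("CONTINUE")]


test_brakes_and_logs_when_obstacle_is_close()
test_continues_when_path_is_clear()
```

The stub only feeds data in; the mocks are where the test checks that the module talked to its neighbors correctly.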

Key Areas of Integration Testing in Drone Tech & Innovation

For drones, almost every advanced capability relies on multiple software components working in concert. Integration testing is thus broadly applied across the entire software stack.

Flight Control Systems and Sensor Fusion

At the heart of any drone is its flight control system. This involves integrating algorithms that process data from multiple sensors (IMU, GPS, barometer, magnetometer) to determine the drone’s position, orientation, and velocity.

- Integration Focus: Verifying that sensor data from different inputs is correctly fused and filtered to provide accurate state estimation. Testing the seamless handoff of control between different flight modes (e.g., GPS hold, altitude hold, manual). Ensuring that motor commands derived from the flight controller are accurately transmitted and executed by the electronic speed controllers (ESCs) and motors.
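
To make the fusion check concrete, here is a deliberately simplified sketch: an inverse-variance weighted average of a GPS altitude and a barometric altitude, plus a consistency gate. Production autopilots typically use a Kalman-filter-based estimator instead; the function names and the 3-sigma threshold are assumptions made for this example.

```python
def fuse_altitude(gps_alt_m, gps_var, baro_alt_m, baro_var):
    """Inverse-variance weighted average of two independent altitude estimates.

    Returns (fused_altitude_m, fused_variance).
    """
    if gps_var <= 0 or baro_var <= 0:
        raise ValueError("variances must be positive")
    w_gps = 1.0 / gps_var
    w_baro = 1.0 / baro_var
    fused = (w_gps * gps_alt_m + w_baro * baro_alt_m) / (w_gps + w_baro)
    fused_var = 1.0 / (w_gps + w_baro)
    return fused, fused_var


def sources_consistent(gps_alt_m, gps_var, baro_alt_m, baro_var, n_sigma=3.0):
    """True if the two readings agree within n_sigma of their combined uncertainty."""
    return abs(gps_alt_m - baro_alt_m) <= n_sigma * (gps_var + baro_var) ** 0.5


def test_fusion_is_between_inputs_and_more_certain():
    fused, var = fuse_altitude(100.0, 4.0, 102.0, 1.0)
    assert 100.0 < fused < 102.0
    assert var < 1.0  # better than either source alone


def test_unit_mismatch_is_flagged():
    baro_alt_ft = 102.0 * 3.28084  # a driver that reports feet instead of metres
    assert sources_consistent(100.0, 4.0, 102.0, 1.0)
    assert not sources_consistent(100.0, 4.0, baro_alt_ft, 1.0)


test_fusion_is_between_inputs_and_more_certain()
test_unit_mismatch_is_flagged()
```

The second test is the integration-flavored one: each sensor driver could pass its own unit tests while still disagreeing on units at the interface.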

AI, Autonomous Navigation, and Object Avoidance

These are the distinguishing features of advanced drones, enabling complex missions without constant human intervention.

- Integration Focus: Testing how AI perception modules (e.g., for object recognition or SLAM-based mapping) integrate with the path planning engine. Verifying that the autonomous navigation system correctly interprets planned routes and obstacle-detection sensor inputs, and adapts flight paths in real time. Ensuring that AI-driven decision-making (e.g., for follow mode or target tracking) correctly interfaces with the flight controller to execute maneuvers. This often involves simulating dynamic environments and complex scenarios.
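
A small sketch of the perception-to-planner handoff: detections arrive in metres, an adapter converts them to grid cells, and a planner routes around them. The grid planner is a plain breadth-first search standing in for a real path planning engine, and `detections_to_cells` is a hypothetical adapter, so treat this as an illustration of what the test checks and not of how a production planner works.

```python
from collections import deque


def detections_to_cells(detections, cell_size_m):
    """Hypothetical perception adapter: metric (x, y) detections -> (row, col) cells."""
    return {(int(y // cell_size_m), int(x // cell_size_m)) for x, y in detections}


def plan_path(grid_size, start, goal, obstacles):
    """Breadth-first search on a 4-connected grid; returns a list of cells or None."""
    rows, cols = grid_size
    blocked = set(obstacles)
    if start in blocked or goal in blocked:
        return None
    parents = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = parents[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nxt[0] < rows and 0 <= nxt[1] < cols
            if inside and nxt not in blocked and nxt not in parents:
                parents[nxt] = cell
                queue.append(nxt)
    return None


def test_planner_routes_around_detected_obstacles():
    # Two detections in metres; with 2 m cells they block (row 0, col 2) and (row 1, col 2).
    blocked = detections_to_cells([(4.5, 0.5), (5.0, 2.9)], cell_size_m=2.0)
    path = plan_path((5, 5), start=(0, 0), goal=(0, 4), obstacles=blocked)

    assert path is not None
    assert path[0] == (0, 0) and path[-1] == (0, 4)
    assert not blocked.intersection(path)


test_planner_routes_around_detected_obstacles()
```

Note the (x, y) to (row, col) swap inside the adapter. Axis-order and unit conventions like this are exactly the kind of interface detail that only an integration test exercises.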

Data Processing, Mapping, and Remote Sensing Modules

Drones are increasingly sophisticated data collection platforms, requiring robust integration for processing and transmitting captured information.

- Integration Focus: Testing the integration between imaging hardware interfaces (e.g., 4K camera gimbals, thermal sensors) and the onboard image processing software. Verifying that geo-referencing data (from GPS and IMU) is accurately combined with imaging data for precise mapping and 3D model generation. Ensuring that remote sensing data (e.g., multispectral, Lidar) is correctly processed, packaged, and transmitted to ground stations or cloud services for analysis.
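
Geo-referencing usually comes down to matching each image's capture time to the position log. The sketch below linearly interpolates between the two nearest GPS fixes, which is a reasonable approximation only over short intervals. The tuple layout and function names are assumptions made for this example, and it presumes the camera and GPS timestamps share one time base, which is itself something an integration test should confirm.

```python
from bisect import bisect_right


def interpolate_position(fixes, t):
    """Linearly interpolate (lat, lon, alt) at time t from time-sorted GPS fixes.

    fixes: list of (timestamp_s, lat_deg, lon_deg, alt_m) tuples, sorted by timestamp.
    Raises ValueError if t falls outside the logged interval (no extrapolation).
    """
    times = [f[0] for f in fixes]
    if not fixes or t < times[0] or t > times[-1]:
        raise ValueError(f"timestamp {t} outside GPS log")
    i = bisect_right(times, t)
    if i == len(fixes):  # t equals the last fix time
        return fixes[-1][1:]
    t0, *p0 = fixes[i - 1]
    t1, *p1 = fixes[i]
    frac = (t - t0) / (t1 - t0)
    return tuple(a + frac * (b - a) for a, b in zip(p0, p1))


def georeference_images(image_times, fixes):
    """Map each image name to its interpolated (lat, lon, alt)."""
    return {name: interpolate_position(fixes, t) for name, t in image_times.items()}


def test_image_between_two_fixes_gets_midpoint():
    fixes = [(10.0, 47.0, 8.0, 100.0), (12.0, 47.002, 8.004, 120.0)]
    tags = georeference_images({"img_0001.jpg": 11.0}, fixes)
    lat, lon, alt = tags["img_0001.jpg"]

    assert abs(lat - 47.001) < 1e-9
    assert abs(lon - 8.002) < 1e-9
    assert abs(alt - 110.0) < 1e-9


test_image_between_two_fixes_gets_midpoint()
```

Refusing to extrapolate is a design choice for the sketch: an image stamped outside the log is more likely a clock problem than a valid sample, so failing loudly is more useful than guessing a position.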

Ground Control Station (GCS) and Communication Links

The GCS serves as the human-machine interface for drone operations, and its integration with the drone’s onboard systems is critical.

- Integration Focus: Testing the bidirectional communication links (e.g., telemetry, command & control) between the GCS software and the drone. Verifying that mission planning tools in the GCS correctly generate flight plans that the drone’s autopilot can execute. Ensuring real-time display of flight parameters, video feeds, and warning messages on the GCS accurately reflects the drone’s status and environment. This also includes testing the integration of auxiliary applications or cloud services with the GCS.
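
One way to test a telemetry link is a round-trip check: encode on the aircraft side, decode on the GCS side, and confirm both the values and the error handling. The frame layout below is invented for this sketch and is not MAVLink or any other real protocol; only `struct` and `zlib.crc32` are real standard-library calls.

```python
import struct
import zlib

# Hypothetical frame layout (NOT a real protocol), little-endian, no padding:
# seq (uint16), lat and lon (int32, degrees * 1e7), alt (int32, millimetres),
# battery (uint8, percent), followed by a CRC-32 of the payload (uint32).
_PAYLOAD = struct.Struct("<HiiiB")
_CRC = struct.Struct("<I")


def encode_telemetry(seq, lat_deg, lon_deg, alt_m, battery_pct):
    """Aircraft side: pack one telemetry sample into a checksummed frame."""
    payload = _PAYLOAD.pack(seq, round(lat_deg * 1e7), round(lon_deg * 1e7),
                            round(alt_m * 1000), battery_pct)
    return payload + _CRC.pack(zlib.crc32(payload))


def decode_telemetry(frame):
    """GCS side: validate length and checksum, then unpack into a dict."""
    if len(frame) != _PAYLOAD.size + _CRC.size:
        raise ValueError("unexpected frame length")
    payload, crc_bytes = frame[:_PAYLOAD.size], frame[_PAYLOAD.size:]
    (crc,) = _CRC.unpack(crc_bytes)
    if zlib.crc32(payload) != crc:
        raise ValueError("checksum mismatch")
    seq, lat, lon, alt_mm, battery = _PAYLOAD.unpack(payload)
    return {"seq": seq, "lat_deg": lat / 1e7, "lon_deg": lon / 1e7,
            "alt_m": alt_mm / 1000, "battery_pct": battery}


def test_round_trip_preserves_values():
    msg = decode_telemetry(encode_telemetry(7, 47.3977, 8.5456, 120.5, 83))
    assert msg["seq"] == 7 and msg["battery_pct"] == 83
    assert abs(msg["lat_deg"] - 47.3977) < 1e-7
    assert abs(msg["lon_deg"] - 8.5456) < 1e-7
    assert abs(msg["alt_m"] - 120.5) < 1e-3


def test_corrupted_frame_is_rejected():
    frame = encode_telemetry(7, 47.3977, 8.5456, 120.5, 83)
    corrupted = bytes([frame[0] ^ 0x01]) + frame[1:]  # flip one bit
    try:
        decode_telemetry(corrupted)
    except ValueError:
        return
    raise AssertionError("corrupted frame was accepted")


test_round_trip_preserves_values()
test_corrupted_frame_is_rejected()
```

Keeping the encoder and decoder in one test catches the classic mismatch where each side was updated to a different field order or scale factor.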

Challenges and Best Practices in Drone Software Integration Testing

While crucial, integration testing for drones presents unique challenges that require tailored solutions and best practices.

Simulating Real-World Conditions and Environmental Variables

Drones operate in dynamic, often unpredictable environments. Testing how software behaves under varying conditions like wind, GPS signal degradation, lighting changes (for vision systems), or sensor noise is challenging.

- Best Practice: Leverage advanced simulation environments (digital twins) that can accurately model physics, sensor noise, weather patterns, and interference. This allows for repeatable testing of complex scenarios that would be dangerous or impractical in real flight. Hardware-in-the-loop (HIL) and software-in-the-loop (SIL) simulations are invaluable here.
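
Repeatability is the key property of simulated testing, and a seeded random source is the simplest way to get it. The toy software-in-the-loop run below pits a PD altitude controller against a point-mass vertical model with Gaussian gusts. The gains, saturation limit, and gust model are assumptions chosen for illustration and are far simpler than a real physics simulator.

```python
import random


def simulate_altitude_hold(target_m, wind_std, seed, steps=2000, dt=0.01):
    """Tiny software-in-the-loop run: PD controller vs. a point-mass vertical model.

    Returns the altitude trace in metres. Gust acceleration is Gaussian noise from
    a seeded RNG, so a given (wind_std, seed) pair always reproduces the same flight.
    """
    rng = random.Random(seed)
    kp, kd = 4.0, 3.0  # hypothetical gains, not tuned for any real airframe
    alt, vel = 0.0, 0.0
    trace = []
    for _ in range(steps):
        accel_cmd = kp * (target_m - alt) - kd * vel
        accel_cmd = max(-5.0, min(5.0, accel_cmd))  # actuator saturation
        gust = rng.gauss(0.0, wind_std)
        vel += (accel_cmd + gust) * dt
        alt += vel * dt
        trace.append(alt)
    return trace


def test_holds_altitude_in_gusts():
    trace = simulate_altitude_hold(target_m=10.0, wind_std=2.0, seed=42)
    # After settling, the last 5 simulated seconds should stay near the target.
    assert max(abs(a - 10.0) for a in trace[-500:]) < 0.5


def test_same_seed_reproduces_the_same_flight():
    first = simulate_altitude_hold(10.0, wind_std=2.0, seed=42)
    second = simulate_altitude_hold(10.0, wind_std=2.0, seed=42)
    assert first == second


test_holds_altitude_in_gusts()
test_same_seed_reproduces_the_same_flight()
```

When a scenario fails, logging the seed alongside the failure lets anyone replay the exact same disturbance sequence while debugging.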

Managing Dependencies in Hardware-Software Integration

Drone software is deeply intertwined with its hardware. Testing the software often requires access to specific hardware components or their precise emulations.

- Best Practice: Employ clear interface definitions and protocols between software modules and hardware abstraction layers. Utilize robust mock objects and stubs for hardware components when testing software in isolation. Invest in specialized HIL testing rigs that incorporate actual flight controllers, sensors, and actuators to validate software behavior under realistic hardware loads.
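
A clear interface definition can be as small as a typed protocol that both the real driver and its test double must satisfy. In this sketch, `Barometer`, `SimulatedBarometer`, and `pressure_altitude_m` are hypothetical names; the altitude conversion is the widely used standard-atmosphere approximation for the lower atmosphere.

```python
from typing import Protocol

SEA_LEVEL_PA = 101_325.0


class Barometer(Protocol):
    """Hardware abstraction: anything that can report static pressure in pascals."""

    def read_pressure_pa(self) -> float: ...


class SimulatedBarometer:
    """Test double that satisfies the Barometer interface without hardware."""

    def __init__(self, pressure_pa: float):
        self.pressure_pa = pressure_pa

    def read_pressure_pa(self) -> float:
        return self.pressure_pa


def pressure_altitude_m(baro: Barometer, reference_pa: float = SEA_LEVEL_PA) -> float:
    """Altitude from the standard-atmosphere barometric approximation."""
    ratio = baro.read_pressure_pa() / reference_pa
    return 44_330.0 * (1.0 - ratio ** (1.0 / 5.255))


def test_altitude_from_simulated_pressure():
    assert abs(pressure_altitude_m(SimulatedBarometer(SEA_LEVEL_PA))) < 1e-6
    # Roughly the standard-atmosphere pressure at 1000 m.
    assert abs(pressure_altitude_m(SimulatedBarometer(89_875.0)) - 1000.0) < 5.0


test_altitude_from_simulated_pressure()
```

Because the unit is part of the method name, a driver that reports hectopascals has to convert explicitly to satisfy the interface, which removes one common source of integration defects. The same test can later run on a HIL rig by passing in the real driver object.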

Continuous Integration (CI) for Drone Development

Given the rapid pace of innovation and iterative development in drone tech, manual integration testing can quickly become a bottleneck.

- Best Practice: Implement a strong Continuous Integration (CI) pipeline. Every code commit should trigger automated builds and a suite of integration tests. This helps catch integration regressions early and maintains a continuously deployable codebase. For drones, CI often extends to continuous deployment (CD) for firmware updates.

The Role of Automated Testing Frameworks

Manual integration testing is tedious, error-prone, and unsustainable for complex systems.

- Best Practice: Adopt robust automated testing frameworks that can execute integration tests programmatically. These frameworks should support defining complex test scenarios, simulating user interactions (e.g., GCS commands), and asserting expected outcomes. They should also provide clear reporting for quick identification of failures. Python with pytest is a common choice, as are the testing tools that ship with ROS (Robot Operating System) for projects built on it.
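
Scenario-driven tests are easiest to maintain as a table of inputs and expected outcomes. The sketch below uses plain Python so it runs without extra dependencies; with pytest, the same table could be fed to `@pytest.mark.parametrize`. The fail-safe policy and its thresholds are hypothetical and exist only to give the scenarios something to exercise.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    battery_pct: int
    link_ok: bool
    gps_ok: bool
    expected_action: str


def failsafe_action(battery_pct: int, link_ok: bool, gps_ok: bool) -> str:
    """Hypothetical fail-safe policy combining three monitoring inputs."""
    if battery_pct < 10:
        return "LAND"
    if not gps_ok:
        return "HOLD_ALTITUDE"
    if not link_ok or battery_pct < 25:
        return "RETURN_TO_HOME"
    return "CONTINUE"


SCENARIOS = [
    Scenario("nominal", 80, True, True, "CONTINUE"),
    Scenario("link lost", 80, False, True, "RETURN_TO_HOME"),
    Scenario("low battery", 20, True, True, "RETURN_TO_HOME"),
    Scenario("critical battery overrides link loss", 5, False, True, "LAND"),
    Scenario("no GPS, cannot navigate home", 80, False, False, "HOLD_ALTITUDE"),
]


def run_scenarios(scenarios):
    """Return (name, expected, actual) for every failing scenario."""
    failures = []
    for s in scenarios:
        actual = failsafe_action(s.battery_pct, s.link_ok, s.gps_ok)
        if actual != s.expected_action:
            failures.append((s.name, s.expected_action, actual))
    return failures


failures = run_scenarios(SCENARIOS)
assert failures == [], failures
```

Naming each scenario pays off in the report: a failure reads as "critical battery overrides link loss: expected LAND, got RETURN_TO_HOME" instead of an anonymous assertion error.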

The Future of Drone Software Reliability through Advanced Testing

As drones become more autonomous, intelligent, and ubiquitous, the sophistication of their software—and by extension, the testing methodologies applied to it—must keep pace.

AI-Driven Test Generation and Orchestration

The sheer number of possible interaction paths in highly autonomous drone software can overwhelm traditional manual test case generation.

- Future Trend: AI and machine learning will play an increasing role in generating test cases, identifying critical execution paths, and orchestrating complex integration test scenarios. This includes using AI to analyze code changes and predict areas most likely to experience integration issues, or to generate realistic anomaly scenarios for testing robust fault tolerance.

Digital Twins and High-Fidelity Simulation

While current simulation is powerful, the future promises even more realistic and comprehensive digital twins of drones and their operating environments.

- Future Trend: Highly detailed digital twins will allow for integration testing in virtual environments that are almost indistinguishable from reality. These twins will model not just the drone’s internal systems but also precise environmental factors, communication interference, and interactions with other autonomous agents. This will enable exhaustive testing of complex swarm behaviors, urban air mobility scenarios, and even drone-human interaction in a safe, repeatable, and cost-effective manner.

Enhancing Safety and Performance for Next-Gen UAVs

Ultimately, the drive for advanced integration testing is fueled by the need to build safer, more reliable, and higher-performing drones.

- Impact: By rigorously testing every interface and interaction, developers can push the boundaries of what drones can achieve, from fully autonomous package delivery and precision agriculture to advanced security surveillance and environmental monitoring. Flawless integration ensures that innovations like dynamic mission re-planning, multi-drone coordination, and real-time edge AI processing translate into dependable real-world performance. Integration testing is not just about finding bugs; it’s about validating the very promise of autonomous flight and realizing the full potential of drone technology as a force for innovation across countless industries.