In an era increasingly defined by seamless human-computer interaction, Apple’s Voice Control shows how far accessibility and intuitive user interfaces have come. Far beyond the rudimentary voice commands of yesteryear, Voice Control is a sophisticated, on-device system that lets users operate an iPhone, iPad, or iPod touch using only their voice. It blends advanced speech recognition with an awareness of what is on screen to offer a truly hands-free experience. This isn’t merely a convenience; it’s a powerful tool that enhances accessibility for individuals with motor disabilities, while also offering all users a new way to interact with their devices.

The Evolution of Voice Interaction: A Tech Innovation Journey

The journey to sophisticated voice control is a long and fascinating one, deeply rooted in the history of artificial intelligence and human-computer interaction. Understanding iPhone Voice Control requires appreciating the technological milestones that paved its way.

Early Beginnings: Command and Control Systems

The concept of controlling devices with voice has been a staple of science fiction for decades, but its real-world implementation began much more modestly. Early voice recognition systems, emerging in the mid-20th century, were rudimentary. They could only recognize a handful of spoken words, required extensive training for each user, and often operated within highly constrained environments. These systems were primarily command-and-control, meaning they could execute specific, predefined actions based on a limited vocabulary. Think of dictating a few numbers or simple commands – a far cry from natural language processing. The computational power required, coupled with the nascent state of acoustic modeling and language processing algorithms, meant these early innovations remained largely niche applications, often in specialized industrial or military settings. Yet, they laid the foundational groundwork for more complex interactions to come, proving the viability of voice as an input method.

Siri and the Rise of Intelligent Personal Assistants

The landscape of voice interaction dramatically shifted with the introduction of Apple’s Siri in 2011. Siri wasn’t just a command processor; it was marketed as an “intelligent personal assistant.” It could understand natural language, answer complex questions, set reminders, send messages, and perform a wide array of tasks by tapping into various internet services. Siri brought voice interaction into the mainstream, demonstrating the potential for conversational interfaces. While initially reliant on cloud processing for much of its intelligence, Siri marked a pivotal moment, shifting user expectations from mere command execution to genuine, albeit often humorous, interaction. This move from rigid commands to flexible, context-aware queries represented a major leap in AI and natural language understanding, paving the way for more integrated and powerful voice interfaces like Voice Control. Other tech giants soon followed suit with their own personal assistants, solidifying voice as a critical interface for modern technology.

Voice Control: Beyond Basic Commands and Assistants

While Siri focuses on answering questions and performing tasks, iPhone Voice Control (distinct from Siri in its primary function) extends voice interaction to full device navigation and control. It’s not just about asking a question or issuing a task; it’s about standing in for physical touch. Users can tap, swipe, type, and even fine-tune selections on their screen using only spoken commands. This marks a significant step in accessibility technology, designed to empower users who find traditional touch interactions challenging. Unlike Siri, Voice Control operates primarily on-device, offering a level of responsiveness and privacy that cloud-based assistants sometimes struggle to match. It is among the most capable voice-driven interfaces on mobile today, showcasing deep integration with the operating system and a sophisticated understanding of the visual elements on the screen.

Demystifying iPhone Voice Control: Accessibility and Power

iPhone Voice Control is a marvel of modern tech innovation, blending advanced AI with a user-centric design philosophy. Its core strength lies in its ability to empower users by transforming spoken words into precise actions, making the digital world more accessible and manageable.

Core Functionality: Navigating Your iPhone Hands-Free

The fundamental premise of iPhone Voice Control is hands-free operation of your device. Nearly every action you would perform with a finger, from launching apps and typing messages to adjusting settings and interacting with web content, has a spoken equivalent. For instance, instead of tapping an app icon, you can simply say “Open Photos.” To scroll, you can say “Scroll up” or “Scroll down.” More complex interactions are also streamlined: if you need to tap a specific button on screen, Voice Control can display numbers next to all tappable elements, allowing you to say “Tap 3” to select the desired option. It also understands gestures like “Swipe left” or “Pinch open,” and provides tools for precise cursor control and text selection. All of this is powered by on-device speech recognition that, while Voice Control is active, listens continuously and interprets spoken input against what is currently on screen.
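The numbered overlay can be pictured as a simple lookup from spoken numbers to on-screen elements. The Python sketch below is a toy model under that assumption, not Apple’s implementation; `Element`, `number_overlay`, and `resolve_tap` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Element:
    """A hypothetical on-screen element (not an Apple API type)."""
    label: str
    tappable: bool


def number_overlay(elements):
    """Give each tappable element a 1-based number, in screen order."""
    tappable = [e for e in elements if e.tappable]
    return {n: e for n, e in enumerate(tappable, start=1)}


def resolve_tap(command, overlay):
    """Turn a phrase like 'Tap 3' into the element it refers to, or None."""
    words = command.strip().split()
    if len(words) != 2 or words[0].lower() != "tap" or not words[1].isdecimal():
        return None
    return overlay.get(int(words[1]))


screen = [
    Element("Back", True),
    Element("Title", False),  # static text, so it gets no number
    Element("Share", True),
    Element("Delete", True),
]
overlay = number_overlay(screen)
target = resolve_tap("Tap 3", overlay)
print(target.label if target else "no match")  # prints "Delete"
```

Numbering only the tappable elements keeps the spoken vocabulary small, which is presumably part of why a numbered overlay is useful when element names are long or ambiguous.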

Enhancing Accessibility for All Users

While Voice Control is a powerful feature for everyone, its most profound impact is on accessibility. For individuals with limited mobility, tremors, or other physical disabilities that make traditional touch interaction difficult or impossible, Voice Control is a game-changer. It unlocks the full potential of the iPhone, transforming it from a challenging interface into an intuitive extension of their will. The ability to control every aspect of the device without physical contact allows users to communicate, work, learn, and engage with media with unprecedented independence. This dedication to inclusive design is a hallmark of Apple’s innovation strategy, ensuring that technology serves a broader spectrum of human needs. It not only provides functional access but also fosters digital inclusion, enabling more people to participate fully in the digital age.

The Underlying Technology: Speech Recognition and AI

The magic behind Voice Control is a sophisticated interplay of speech recognition and artificial intelligence, predominantly operating on-device. Unlike cloud-based assistants, Voice Control processes your speech directly on your iPhone. This local processing offers several key advantages: enhanced privacy (your voice data doesn’t leave your device), faster responsiveness (no internet latency), and robust offline functionality. The technology leverages neural networks trained on vast datasets of spoken language to transcribe speech into text. Beyond simple transcription, the system interprets your commands relative to what is currently displayed on your screen: it knows the names of UI elements and their positions. For example, if you say “Tap send,” the system looks for an element named “send” in the current application and activates it. The name it matches is the element’s accessibility label, which may differ from the text you see on the button; if a name isn’t recognized, the name and number overlays show what to say instead. This contextual awareness, combined with advanced acoustic models, allows for remarkably fluid and accurate hands-free operation.
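One way to picture how a spoken name is resolved to a control is as a match against the labels of the elements currently on screen. The Python sketch below is a simplified illustration, not how Apple implements it: `find_by_name` and `handle_tap` are hypothetical helpers, and the fallback to partial matches is an assumption made for the example.

```python
def find_by_name(spoken_name, labels):
    """Return labels matching the spoken name, ignoring case and extra spaces."""
    wanted = " ".join(spoken_name.lower().split())
    exact = [l for l in labels if " ".join(l.lower().split()) == wanted]
    if exact:
        return exact
    # Assumed fallback: accept labels that contain the spoken words,
    # so "send" can still find a button labelled "Send Message".
    return [l for l in labels if wanted in l.lower()]


def handle_tap(command, labels):
    """Resolve 'Tap <name>' to an outcome and the labels it applies to."""
    prefix = "tap "
    if not command.lower().startswith(prefix):
        return ("ignored", [])
    matches = find_by_name(command[len(prefix):], labels)
    if len(matches) == 1:
        return ("tap", matches)
    if matches:
        # More than one candidate: the user has to pick, for example by number.
        return ("disambiguate", matches)
    return ("not_found", [])


labels = ["Cancel", "Send Message", "Add Attachment"]
print(handle_tap("Tap send", labels))
```

Returning an explicit "disambiguate" outcome rather than guessing reflects the general design concern that a wrong tap is worse than a follow-up question.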

Setting Up and Customizing Voice Control for Optimal Experience

Maximizing the utility of iPhone Voice Control involves not just enabling it, but also understanding how to personalize its settings to suit individual needs and preferences. This customization is a key aspect of Apple’s innovative approach to user experience.

Enabling Voice Control: A Step-by-Step Guide

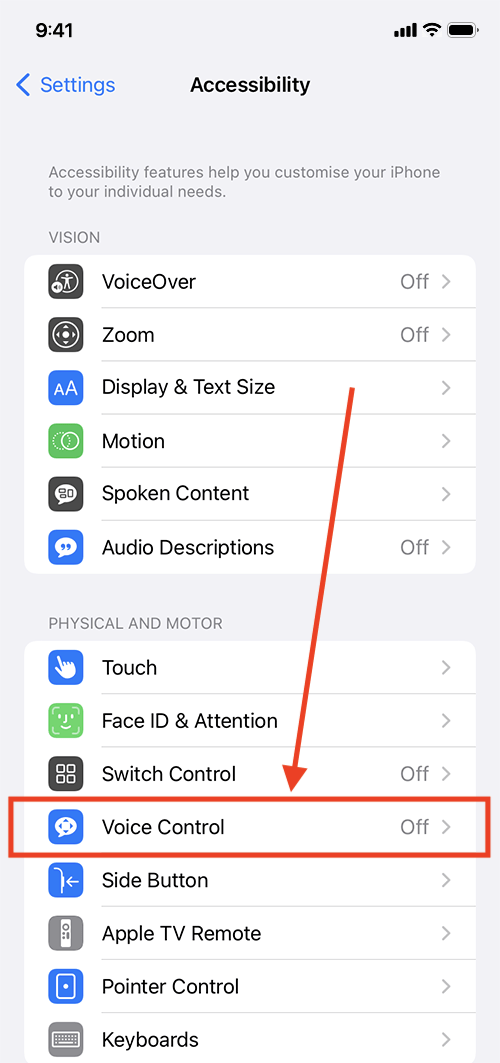

Activating Voice Control is straightforward. The feature lives in the Accessibility settings of your iPhone: go to Settings > Accessibility > Voice Control and toggle it on. Upon first activation, the system might download additional language files to enable full offline functionality and improve accuracy. Users will also be prompted with a brief tutorial explaining basic commands and gestures. A small microphone icon in the status bar indicates that Voice Control is active and listening. For quick access, it can also be assigned to the Accessibility Shortcut, allowing users to triple-click the Side or Home button to toggle it on or off as needed. This ease of activation ensures that the powerful capabilities of Voice Control are readily available to anyone who needs or desires them.

Personalizing Commands and Vocabulary

One of the most powerful features of Voice Control, highlighting its innovative flexibility, is the ability to customize and even create new commands. Within the Voice Control settings, users can access “Customize Commands.” Here, they can browse a vast list of predefined commands, learning the full extent of the system’s capabilities. More importantly, they can create “Custom Commands.” This allows users to assign specific phrases to perform a series of actions, open a particular app, or even input custom text. For instance, a user could create a command like “Start my morning routine” which, when spoken, opens a specific app, plays music, and sends a predefined message. This level of personalization is crucial for refining the user experience, allowing individuals to tailor the interface precisely to their workflow or to overcome specific interaction challenges. It transforms Voice Control from a generic tool into a highly personalized assistant.
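Conceptually, a custom command is a spoken phrase bound to an ordered list of actions. The Python sketch below models that idea only; `CommandRegistry` is a hypothetical class, and the actions are placeholders that describe what they would do rather than real device operations.

```python
class CommandRegistry:
    """Maps spoken phrases to ordered lists of actions (hypothetical model)."""

    def __init__(self):
        self._commands = {}

    @staticmethod
    def _normalize(phrase):
        # Ignore case and repeated spaces so small variations still match.
        return " ".join(phrase.lower().split())

    def register(self, phrase, actions):
        self._commands[self._normalize(phrase)] = list(actions)

    def run(self, phrase):
        """Run every action for the phrase in order; return their results."""
        actions = self._commands.get(self._normalize(phrase))
        if actions is None:
            return None
        return [action() for action in actions]


registry = CommandRegistry()
registry.register(
    "Start my morning routine",
    [
        lambda: "open app: News",           # placeholder actions that only
        lambda: "play music",               # describe what they would do
        lambda: "send message: On my way",
    ],
)
print(registry.run("start my  morning routine"))
```

Keeping the actions in a list preserves their order, which matters when a later step depends on an earlier one, such as opening an app before typing into it.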

Optimizing Performance for Diverse Environments

To ensure Voice Control performs optimally, especially in varying environments, several settings can be adjusted. Users can choose the language for Voice Control, ensuring it correctly interprets their dialect. The “Attention Aware” feature, if available on the device, can pause Voice Control when the user looks away, resuming only when they look back, enhancing privacy and reducing accidental commands. “Overlay” options allow users to choose how on-screen elements are identified, whether by Item Names, Numbers, or a Grid, offering different levels of visual guidance. For particularly challenging environments, training the system to recognize specific vocabulary, especially proper nouns or technical jargon, can significantly improve accuracy. Understanding these customization options empowers users to fine-tune Voice Control, making it more robust and reliable whether they are in a quiet room or a bustling public space, further cementing its status as a versatile piece of innovative tech.
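The grid overlay can be thought of as dividing the screen into numbered cells, so that a spoken number maps to a point on screen. The Python sketch below shows that arithmetic under stated assumptions: cells are numbered from 1, left to right and top to bottom, and `grid_cell_center` is a hypothetical helper rather than anything Apple exposes.

```python
def grid_cell_center(cell, width, height, rows, cols):
    """Return the (x, y) center of a 1-based cell in a rows x cols grid.

    Assumes cells are numbered left to right, then top to bottom.
    """
    if not 1 <= cell <= rows * cols:
        raise ValueError("cell number outside the grid")
    row, col = divmod(cell - 1, cols)
    cell_w = width / cols
    cell_h = height / rows
    return ((col + 0.5) * cell_w, (row + 0.5) * cell_h)


# A 300 x 600 point screen split into a 3 x 3 grid: cell 5 is the middle cell.
print(grid_cell_center(5, 300, 600, 3, 3))  # prints (150.0, 300.0)
```

A grid is useful precisely where names and numbers are not: it can address any point on screen, including areas that contain no labelled element.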

Practical Applications and Innovative Use Cases

Beyond its fundamental role in accessibility, iPhone Voice Control unlocks a plethora of practical applications and innovative use cases, reshaping how we interact with our digital lives. Its versatility underscores its significance as a piece of assistive and empowering technology.

Everyday Productivity and Multitasking

Voice Control can noticeably improve everyday productivity, especially for tasks that traditionally require repetitive tapping or precise finger movements. Imagine switching between applications (“Open Mail,” “Switch to Safari”), composing emails or messages hands-free (“New Mail,” “Type, I’ll be there in five minutes,” “Send”), or navigating complex menus without lifting a finger. For professionals, it can facilitate document editing, data entry, and presentation control during meetings, allowing them to focus on content rather than interface mechanics. Multitasking becomes more fluid; for example, a user cooking or working on a hands-on project can manage music playback, set timers, or answer calls without touching the screen with messy hands or interrupting their work. This ability to stay focused on the primary task while still managing the device is a significant boost to efficiency.

Creative Control and Digital Expression

For creative professionals and enthusiasts, Voice Control opens new avenues for digital expression. Artists can manipulate drawing apps with greater precision, using voice commands for brush strokes, color selection, or undo/redo actions, especially when physical interaction with the screen might disrupt their flow or require a different hand. Musicians can use voice to navigate music creation software, trigger samples, or control virtual instruments, integrating their vocals directly into the creative process. Even in photography and videography, voice commands can be used to control camera settings, trigger the shutter, or start/stop recording, allowing for unique shot compositions where physical interaction might introduce shake or disrupt a scene. This hands-free control empowers creators to experiment with new methodologies, blurring the lines between physical and digital interaction and fostering innovative artistic approaches.

Integration with Smart Home and IoT Devices

iPhone Voice Control also extends its utility to the growing smart home and Internet of Things (IoT) landscape. While Siri is often the primary interface for smart home control, Voice Control provides a granular level of interaction directly through the Home app or any app controlling smart devices. Users can open the Home app via voice (“Open Home”), then navigate through rooms and devices, adjusting lights, thermostats, or security systems with specific commands like “Tap Living Room Lights” or “Scroll down.” This offers an additional layer of control, particularly beneficial for users who rely on Voice Control for all their device interactions. Furthermore, through custom commands, users can create complex routines that integrate app actions with smart home triggers, effectively bridging the gap between their iPhone’s capabilities and their connected environment.

The Future of Voice Control and Human-Computer Interaction

The current capabilities of iPhone Voice Control, while impressive, are merely a glimpse into the future of human-computer interaction. As technology continues to advance, particularly in AI and machine learning, we can anticipate even more intuitive, predictive, and seamless voice-driven experiences. This ongoing innovation promises to redefine our relationship with technology.

Predictive Intelligence and Contextual Understanding

The next generation of Voice Control will likely feature even more advanced predictive intelligence. Rather than just understanding direct commands, systems will anticipate user needs based on learned behaviors, location data, time of day, and ongoing activities. Imagine a system that, upon hearing “Schedule a meeting,” not only opens the calendar but also suggests likely attendees based on recent communications and proposes optimal times based on everyone’s availability, all without explicit instruction. Contextual understanding will become hyper-localized and deeply personal. The system will differentiate between ambient noise and direct commands with higher accuracy, understand subtle inflections in speech to gauge user sentiment, and offer more nuanced responses. This shift towards proactive assistance, driven by increasingly sophisticated AI, will make voice interfaces feel less like tools and more like genuine collaborators.

Seamless Multimodal Interactions

While Voice Control currently focuses on an exclusively voice-driven experience, the future of human-computer interaction is multimodal. This means seamlessly integrating voice with touch, gestures, eye-tracking, and even bio-signals. Users might initiate a task with a voice command, refine it with a touch gesture, and confirm it with a glance. For instance, “Show me my emails,” followed by a voice command to “Highlight the unread ones,” and then a finger tap to open a specific email, all flowing naturally. The system will intelligently interpret and combine these different input methods to create a richer, more intuitive experience. This hybrid approach will cater to diverse user preferences and situations, ensuring that the most effective and comfortable mode of interaction is always available, further enhancing accessibility and usability across the board.

Ethical Considerations and Data Privacy

As voice control technology becomes more pervasive and intelligent, ethical considerations and data privacy will remain paramount. Speech is highly personal data, and processing it on-device rather than in the cloud offers significant privacy advantages. However, as systems become more predictive and integrate with more aspects of our lives, questions surrounding data collection, storage, and the potential for misuse will grow. Future innovations must continue to prioritize user control, transparency, and robust security measures. Striking a balance between personalized, intelligent assistance and protecting individual privacy will be a critical challenge and a key area for innovation. As Voice Control continues to evolve, its developers must proactively address these concerns, ensuring that the technology remains a force for empowerment and positive change.

In conclusion, iPhone Voice Control is more than just a feature; it’s a profound statement on the power of tech innovation to transform lives. By offering unparalleled hands-free control, it democratizes access to digital information and communication, showcasing how advanced AI and intuitive design can create truly inclusive technology. As this field continues to evolve, driven by advancements in predictive intelligence and multimodal interaction, the future promises an even more integrated and natural partnership between humans and their devices, with voice leading the charge in redefining human-computer interaction.