In the rapidly evolving landscape of drone technology, the pursuit of perfection drives relentless research and development. From fully autonomous flight and AI-driven analytics to high-accuracy remote sensing and mapping, modern drones are at the forefront of technological advancement. Yet even the most meticulously engineered systems deviate from the ideal or the expected. This article examines the concept of “what is impure” within this domain, not as a moral judgment, but as a technical descriptor for anomalies, inaccuracies, biases, or vulnerabilities that can compromise the integrity, reliability, and effectiveness of advanced drone operations and the data they generate. Understanding these “impurities” is crucial for pushing the boundaries of drone capabilities and ensuring their trustworthy deployment in critical applications.

Impurity in Data Integrity for Remote Sensing & Mapping

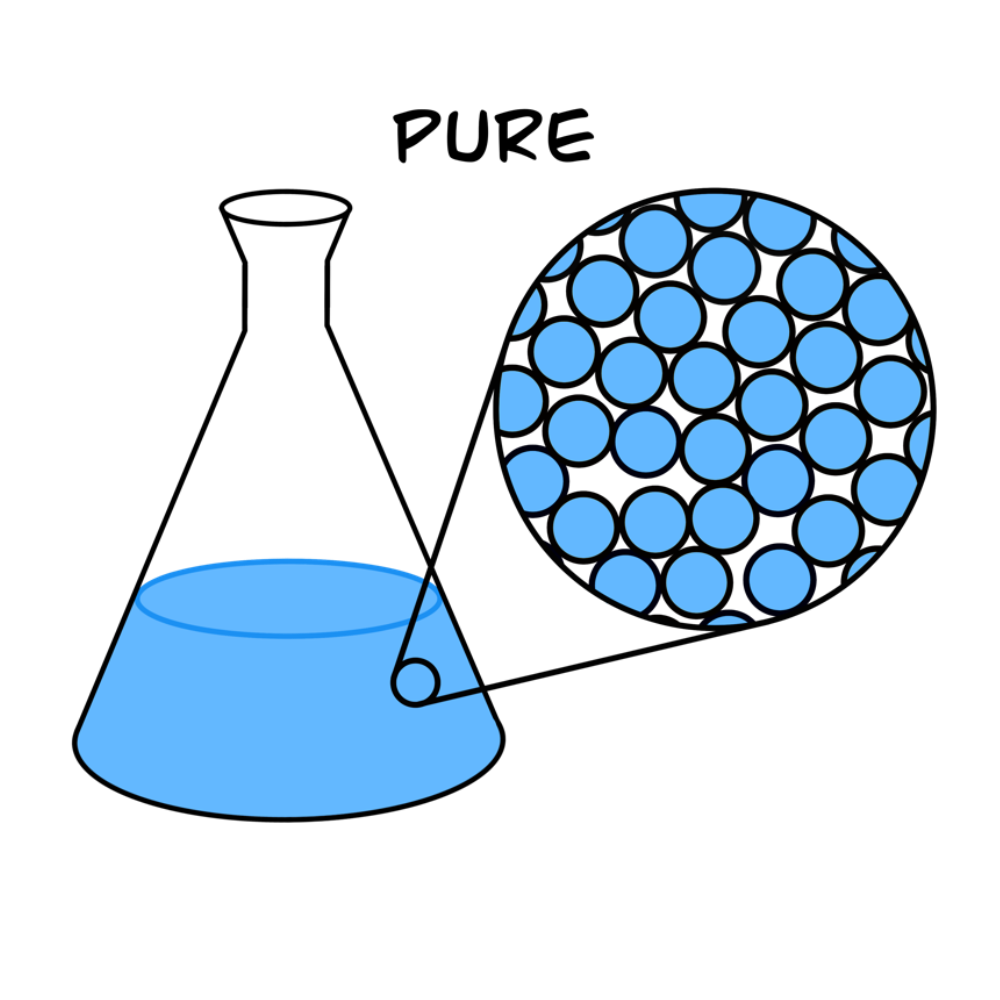

Drones equipped with advanced sensor payloads are revolutionizing fields like agriculture, construction, environmental monitoring, and urban planning through highly detailed remote sensing and mapping. The quality and trustworthiness of the insights derived from these applications are directly contingent on the purity of the collected data. Any deviation from accurate, complete, and unbiased information constitutes an “impurity” that can lead to flawed analyses and erroneous decisions.

Noise and Environmental Contamination

One of the most common sources of data impurity stems from environmental factors and inherent sensor limitations. When drones capture imagery for photogrammetry, LiDAR scans, or multispectral data, various elements can introduce noise or contamination. Atmospheric conditions such as haze, fog, or dust can scatter light, leading to blurred images or inaccurate reflectance values. Uneven lighting, shadows, or glare from reflective surfaces can create inconsistencies in photogrammetric models, making it difficult to generate precise 3D reconstructions. Furthermore, the physics of sensor operation itself, including read noise, shot noise, and dark-current noise, introduces random fluctuations into the data stream regardless of environmental conditions. For instance, a LiDAR pulse might be reflected by rain droplets or dense foliage, creating “ghost” points that don’t represent the true terrain. Addressing these environmental and intrinsic noise sources often requires sophisticated filtering algorithms and meticulous flight planning to minimize their impact.
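
As a minimal sketch of one such filter, the Python snippet below implements statistical outlier removal, a common technique for suppressing isolated “ghost” returns in point clouds: each point’s mean distance to its k nearest neighbours is compared with the distribution of that statistic across the whole cloud, and points far above the average are discarded. The function name, the default `k` and `std_ratio` values, and the brute-force neighbour search are illustrative assumptions; production pipelines use spatial indexes and tuned parameters.

```python
import math
import statistics


def remove_statistical_outliers(points, k=3, std_ratio=1.0):
    """Drop points whose mean distance to their k nearest neighbours is unusually large.

    points: list of (x, y, z) tuples in metres. Uses an O(n^2) brute-force
    neighbour search, which is fine for a sketch but not for real LiDAR clouds.
    """
    if len(points) <= k:
        return list(points)
    mean_dists = []
    for i, p in enumerate(points):
        # Distances from p to every other point; keep the k smallest.
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        mean_dists.append(sum(dists[:k]) / k)
    # Points much sparser than the cloud as a whole are treated as noise.
    mu = statistics.mean(mean_dists)
    sigma = statistics.pstdev(mean_dists)
    threshold = mu + std_ratio * sigma
    return [p for p, d in zip(points, mean_dists) if d <= threshold]


# Hypothetical example: flat ground returns plus one "ghost" point 8 m above them.
grid = [(float(x), float(y), 0.0) for x in range(4) for y in range(4)]
ghost = (1.5, 1.5, 8.0)
kept = remove_statistical_outliers(grid + [ghost])  # the 16 ground points; ghost removed
```

This filter targets sparse, isolated points; dense false returns, such as a band of foliage or a curtain of rain, need other methods such as classification or multi-return analysis.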

Data Drift and Inconsistencies

Data purity can also be compromised over time or across different collection methodologies, leading to data drift and inconsistencies. In long-term monitoring projects, for example, changes in sensor calibration, software updates, or even slight variations in flight parameters (altitude, speed, overlap) between successive data captures can introduce subtle but significant discrepancies. These inconsistencies make it challenging to compare datasets accurately, track changes over time, or build robust predictive models. For instance, if a drone mapping a construction site uses slightly different camera settings, or achieves different GPS accuracy, on two different days, the resulting 3D models might not perfectly align, introducing impurities that complicate progress tracking or volume calculations. Such inconsistencies can also arise when integrating data from multiple drone platforms or sensors that haven’t been rigorously cross-calibrated, leading to a mosaic of information that is internally contradictory or less reliable as a whole.
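
One simple way to detect such drift is to survey a few fixed ground check points independently and compare their positions in each drone-derived model. The sketch below computes the root-mean-square error (RMSE) between two capture epochs for the check points they share and compares it with a tolerance. The check-point IDs, the 5 cm tolerance, and the function names are hypothetical; a real workflow would also report horizontal and vertical errors separately.

```python
import math


def checkpoint_rmse(epoch_a, epoch_b):
    """3D root-mean-square error between check points measured in two survey epochs.

    epoch_a, epoch_b: dicts mapping a check-point ID to (x, y, z) in metres,
    assumed to be in the same coordinate reference system.
    """
    shared = sorted(epoch_a.keys() & epoch_b.keys())
    if not shared:
        raise ValueError("the two epochs share no check points")
    squared = [math.dist(epoch_a[cp], epoch_b[cp]) ** 2 for cp in shared]
    return math.sqrt(sum(squared) / len(squared))


def epochs_consistent(epoch_a, epoch_b, tolerance_m=0.05):
    """True if the epochs agree within a (hypothetical) project tolerance."""
    return checkpoint_rmse(epoch_a, epoch_b) <= tolerance_m


# Hypothetical check-point coordinates from two captures of the same site.
monday = {"CP1": (0.0, 0.0, 0.0), "CP2": (10.0, 0.0, 0.0)}
friday = {"CP1": (0.0, 0.0, 0.03), "CP2": (10.0, 0.0, 0.04)}
```

If the RMSE exceeds the tolerance, the two models should be co-registered (for example, using the check points as ground control) before volumes or changes are compared.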

The Human Element and Data Annotation Bias

Beyond environmental and technical factors, human involvement in data processing and AI model training can introduce a subtle yet pervasive form of impurity: bias. Many advanced drone applications, particularly those leveraging machine learning for object detection, classification, or autonomous decision-making, rely heavily on vast datasets that require human annotation. If the annotators have inherent biases, lack diverse perspectives, or follow inconsistent labeling guidelines, these “human impurities” are inadvertently baked into the training data. For example, if a dataset for detecting infrastructure defects predominantly features defects from a certain geographic region or lighting condition, the AI model trained on it may perform poorly or incorrectly classify defects in different environments. Similarly, if annotations for land cover classification are imprecise or subjective, the resulting maps will carry these impurities forward. Recognizing and mitigating human-induced bias is a critical step towards developing truly robust and fair AI systems for drone applications.
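
Inconsistent labeling can at least be measured. A widely used statistic is Cohen’s kappa, which compares how often two annotators agree on the same items with how often they would agree by chance given their own label frequencies. A minimal Python sketch follows; the label names are hypothetical, and kappa covers only two annotators (multi-annotator settings typically use measures such as Fleiss’ kappa or Krippendorff’s alpha).

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two annotators labelling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Fraction of items on which the annotators actually agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected if each annotator labelled at random with their own label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used the same single label for every item
    return (observed - expected) / (1.0 - expected)


# Hypothetical defect labels for the same four inspection images.
annotator_1 = ["crack", "crack", "ok", "ok"]
annotator_2 = ["crack", "ok", "ok", "ok"]
kappa = cohens_kappa(annotator_1, annotator_2)  # 0.5
```

Here the annotators agree on 3 of 4 images, but because both label most images “ok”, half of that agreement would be expected by chance, giving a kappa of 0.5. A low kappa is a signal to tighten labeling guidelines before the data is used for training.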

Algorithmic Impurities: Bias, Errors, and Vulnerabilities in AI & Autonomous Flight

The promise of autonomous drone operations and intelligent decision-making hinges on the purity of the underlying algorithms. When AI models exhibit bias, when software contains errors, or when systems are vulnerable to external manipulation, these constitute algorithmic impurities that can undermine performance, compromise safety, and erode trust.

Bias in Machine Learning Models

As touched upon with data annotation, bias can permeate AI algorithms at various stages. If the training data used to develop AI models for tasks like obstacle avoidance, target tracking, or automated inspection is unrepresentative, skewed, or incomplete, the resulting model will inherit these “impure” biases. For example, an AI system designed for autonomous navigation might struggle to recognize certain types of obstacles if its training data predominantly featured urban environments and lacked sufficient rural or highly dynamic scenarios. This can lead to uneven performance, where the drone performs well in familiar contexts but fails or makes incorrect decisions in less represented ones. Such biases can also manifest in object recognition systems that perform poorly on specific populations or objects due to insufficient representation in the training data, leading to “impure” classifications. Addressing algorithmic bias requires not only diverse and high-quality data but also careful model design, rigorous testing across varied conditions, and sometimes the implementation of fairness-aware machine learning techniques.
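
Testing across varied conditions is easier to enforce when evaluation results are broken down by condition instead of being reported as a single aggregate score. The sketch below computes accuracy per operating condition and flags any condition that trails the best one by more than a chosen margin. The condition names, labels, and the 10-point margin are hypothetical.

```python
from collections import defaultdict


def accuracy_by_condition(records):
    """records: iterable of (condition, predicted_label, true_label) tuples."""
    hits, totals = defaultdict(int), defaultdict(int)
    for condition, predicted, actual in records:
        totals[condition] += 1
        hits[condition] += int(predicted == actual)
    return {c: hits[c] / totals[c] for c in totals}


def flag_underperforming(records, max_gap=0.10):
    """Conditions whose accuracy trails the best-performing condition by more than max_gap."""
    acc = accuracy_by_condition(records)
    best = max(acc.values())
    return sorted(c for c, a in acc.items() if best - a > max_gap)


# Hypothetical evaluation results for an obstacle classifier.
results = [("urban", "pole", "pole")] * 4 + [
    ("rural", "tree", "tree"),
    ("rural", "pole", "pole"),
    ("rural", "tree", "wire"),
    ("rural", "wire", "tree"),
]
```

In this toy data the aggregate accuracy is 75%, which hides the fact that the model is right only half the time in rural scenes.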

System Instability and Edge Cases

Autonomous drone systems are complex tapestries of sensors, software, and control logic. While designed for robust operation, inherent “impurities” can lead to system instability, particularly when confronted with unforeseen edge cases. An edge case is a situation that falls outside the typical or expected operational parameters, for which the system’s programmed logic might not have a clear or optimal response. For instance, an autonomous delivery drone might perform flawlessly in clear weather but encounter an “impure” scenario when sudden, localized high winds combine with unexpected electromagnetic interference near its landing zone. If the system’s algorithms haven’t been robustly tested and trained for such a confluence of events, it could lead to erratic flight, loss of control, or an aborted mission. These instabilities highlight the challenge of achieving pure, deterministic behavior in highly dynamic, real-world environments, necessitating comprehensive simulation, extensive real-world testing, and the integration of adaptive learning and fallback mechanisms.
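
Fallback mechanisms are often expressed as an explicit, testable policy that maps the vehicle’s health state to a conservative action. The sketch below is a hypothetical policy, not the logic of any particular autopilot: a single stressor triggers a return home, but a combination of stressors, like the wind-plus-interference case above, triggers an immediate landing. All thresholds, field names, and actions are illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    RETURN_HOME = "return_home"
    LAND_NOW = "land_now"


@dataclass
class Health:
    wind_mps: float      # estimated wind speed, m/s
    gps_ok: bool         # position fix passes integrity checks
    compass_ok: bool     # magnetometer agrees with other heading sources (EMI can break this)
    battery_frac: float  # remaining charge, 0.0 to 1.0


def choose_fallback(h, max_wind_mps=12.0, min_battery=0.30, critical_battery=0.10):
    """Hypothetical failsafe policy mapping a health snapshot to an action."""
    # Without a trustworthy position, or with almost no charge, landing beats navigating home.
    if not h.gps_ok or h.battery_frac < critical_battery:
        return Action.LAND_NOW
    # Count independent stressors; their combination is the edge case to guard against.
    stressors = int(h.wind_mps > max_wind_mps) + int(not h.compass_ok)
    if stressors >= 2:
        return Action.LAND_NOW
    if stressors == 1 or h.battery_frac < min_battery:
        return Action.RETURN_HOME
    return Action.CONTINUE
```

Because the policy is a pure function, it can be exercised systematically in simulation against combinations of conditions, which is where edge cases tend to hide.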

Software Glitches and Cyber Vulnerabilities

Perhaps the most direct forms of algorithmic impurity are software glitches, coding errors, and cyber vulnerabilities. A “bug” in the flight control firmware, a logic error in an AI’s decision-making module, or an oversight in network security protocols can have profound consequences. These impurities can lead to unexpected system crashes, incorrect sensor interpretations, or erroneous command execution. Even minor glitches can accumulate, leading to degraded performance or unpredictable behavior over time. More critically, cyber vulnerabilities represent “malicious impurities” that can be exploited by external actors. A drone’s communication link might be susceptible to jamming, its GPS signal to spoofing, or its onboard software to hacking. Such attacks could lead to unauthorized control, data theft, or even the weaponization of the drone, fundamentally compromising its intended function and security. Therefore, rigorous software testing, robust encryption, secure communication protocols, and continuous vulnerability assessments are essential to maintaining the purity and integrity of advanced drone systems against both accidental and intentional corruption.
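
One standard defence against spoofed or injected commands is to authenticate every command frame with a message authentication code and to reject frames whose counter is not strictly increasing, which blocks simple replays. The Python sketch below uses HMAC-SHA256 from the standard library. The frame layout and the class and function names are hypothetical and do not follow any specific drone protocol; HMAC provides integrity and authenticity but not confidentiality, so encryption and secure key distribution are still needed.

```python
import hashlib
import hmac
import struct

TAG_LEN = 32     # bytes in an HMAC-SHA256 tag
HEADER_LEN = 8   # 64-bit big-endian message counter


def sign_command(key: bytes, counter: int, payload: bytes) -> bytes:
    """Build a frame: counter | payload | HMAC-SHA256(key, counter | payload)."""
    body = struct.pack(">Q", counter) + payload
    return body + hmac.new(key, body, hashlib.sha256).digest()


class CommandVerifier:
    """Receiver side: accepts only authentic frames with a strictly increasing counter."""

    def __init__(self, key: bytes):
        self.key = key
        self.last_counter = -1

    def verify(self, frame: bytes):
        """Return the payload if the frame is authentic and fresh, otherwise None."""
        if len(frame) < HEADER_LEN + TAG_LEN:
            return None
        body, tag = frame[:-TAG_LEN], frame[-TAG_LEN:]
        expected = hmac.new(self.key, body, hashlib.sha256).digest()
        if not hmac.compare_digest(tag, expected):
            return None  # forged or corrupted frame
        (counter,) = struct.unpack(">Q", body[:HEADER_LEN])
        if counter <= self.last_counter:
            return None  # replayed or stale frame
        self.last_counter = counter
        return body[HEADER_LEN:]
```

`hmac.compare_digest` is used instead of `==` so that the comparison time does not leak how many bytes of a forged tag were correct.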

Sensor Impurities and Performance Degradation

At the heart of every advanced drone operation are its sensors, which gather the raw data that informs navigation, stabilizes flight, and enables sophisticated mapping and inspection tasks. When these sensors provide “impure” or inaccurate readings, the entire system’s reliability is jeopardized.

Hardware Limitations and Calibration Issues

The physical components of drone sensors inherently possess certain hardware limitations, which can introduce impurities. Manufacturing tolerances mean that no two sensors are perfectly identical, leading to slight variations in accuracy and precision. Over time, wear and tear, temperature fluctuations, or physical impacts can further degrade sensor performance. More commonly, improper or infrequent calibration introduces significant impurities. An Inertial Measurement Unit (IMU) that is not correctly calibrated will provide biased acceleration and angular rate readings, leading to drift in position estimates and unstable flight. Similarly, an uncalibrated vision sensor might produce distorted images, affecting object recognition or visual odometry. Even GPS receivers can suffer from antenna degradation or poor satellite geometry, yielding impure position data that impacts autonomous navigation precision. Regular, meticulous calibration and quality control are therefore paramount to ensure sensors provide pure, reliable data.
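
A basic form of IMU calibration is estimating gyroscope bias while the vehicle is known to be stationary, when an ideal gyro would read zero, and subtracting that bias from later readings. The sketch below shows this, and also how quickly an uncorrected bias turns into heading error when integrated. The sample values are hypothetical, and full calibration also covers scale factors, axis misalignment, and temperature dependence.

```python
import math
import statistics


def estimate_gyro_bias(stationary_samples):
    """Per-axis mean angular rate (rad/s) recorded while the vehicle is known to be still."""
    return tuple(statistics.fmean(axis) for axis in zip(*stationary_samples))


def remove_bias(sample, bias):
    """Subtract the estimated bias from one (wx, wy, wz) gyro reading."""
    return tuple(s - b for s, b in zip(sample, bias))


def heading_drift_deg(bias_z_rad_s, seconds):
    """Heading error from integrating a constant, uncorrected z-axis bias."""
    return math.degrees(bias_z_rad_s * seconds)


# Hypothetical gyro readings taken on the ground before takeoff.
samples = [(0.010, -0.020, 0.005), (0.012, -0.018, 0.004), (0.008, -0.022, 0.006)]
bias = estimate_gyro_bias(samples)
drift = heading_drift_deg(0.005, 60.0)  # about 17 degrees after one minute
```

For accelerometers the effect is worse: a constant bias b integrated twice yields a position error of ½·b·t², which grows quadratically with time.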

Environmental Interference

Beyond intrinsic hardware issues, external environmental factors can actively introduce impurities into sensor data streams. Electromagnetic interference (EMI) from power lines, communication towers, or other electronic devices can disrupt the sensitive signals of GPS, radio controllers, or even onboard data buses. This can manifest as erratic position readings, command latency, or data packet loss. Similarly, acoustic noise from propellers or the environment can contaminate microphone data, while strong vibrations can degrade IMU performance. Jamming or strong interference, whether intentional or accidental, can entirely block communication or GPS signals, disabling GPS-dependent autonomous functions and forcing manual intervention or emergency landings. Even physical obstructions like dense foliage, urban canyons, or large metallic structures can block or reflect GPS signals, leading to multipath errors and impure position fixes. Developing sensors and systems that are resilient to these diverse forms of environmental interference is a continuous challenge in drone innovation.
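
A common safeguard against multipath jumps and interference-corrupted fixes is innovation gating: before fusing a GPS fix, the estimator compares it with the position it predicted and rejects the fix if the difference is statistically implausible. The sketch below computes the normalized innovation squared for a 2D horizontal position and tests it against the 99th percentile of a chi-square distribution with two degrees of freedom (about 9.21). It assumes independent, isotropic horizontal errors with known standard deviations; the function name and values are hypothetical.

```python
CHI2_2DOF_99 = 9.21  # 99th percentile of the chi-square distribution with 2 degrees of freedom


def gate_gps_fix(predicted_en, fix_en, predicted_sigma_m, fix_sigma_m, threshold=CHI2_2DOF_99):
    """Return True if a GPS fix is statistically consistent with the predicted position.

    predicted_en, fix_en: (east, north) positions in metres in a local frame.
    predicted_sigma_m, fix_sigma_m: 1-sigma per-axis uncertainties, assumed isotropic.
    """
    # Innovation: measured minus predicted position.
    de = fix_en[0] - predicted_en[0]
    dn = fix_en[1] - predicted_en[1]
    # Innovation variance per axis, assuming independent prediction and fix errors.
    s = predicted_sigma_m ** 2 + fix_sigma_m ** 2
    nis = (de * de + dn * dn) / s  # normalized innovation squared
    return nis <= threshold
```

A rejected fix is not fused; the estimator keeps propagating on inertial data and, if rejections persist, can raise a GPS fault to the failsafe logic.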

Mitigating Impurities: Strategies for Robust Drone Innovation

Recognizing and understanding the various forms of “impurity” is the first step; the subsequent, more critical step is developing robust strategies to mitigate their impact. The pursuit of purity in drone technology is an ongoing endeavor that drives advancements in data science, AI, and system engineering.

Advanced Data Pre-processing and Filtering

To combat impurities in collected data, sophisticated pre-processing and filtering techniques are essential. This includes algorithms for noise reduction (e.g., Kalman filters for sensor data, median filters for images), outlier detection and removal, and data normalization. For mapping and remote sensing, techniques like radiometric correction (to account for atmospheric effects) and geometric correction (to rectify image distortions) are crucial for generating pure, accurate datasets. Data validation checks, anomaly detection systems, and the implementation of robust data pipelines ensure that only high-quality, trustworthy information feeds into analytical models and decision-making processes.
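
To make the Kalman filter concrete, here is a minimal one-dimensional version for a slowly varying quantity, such as barometric altitude while hovering. It assumes a random-walk state model with process noise variance `q` and measurement noise variance `r`; real flight controllers use multi-state extended Kalman filters that fuse IMU, GPS, barometer, and magnetometer data, so treat this only as an illustration of the predict/update cycle.

```python
class ScalarKalman:
    """Minimal 1D Kalman filter for a slowly varying quantity (random-walk model)."""

    def __init__(self, x0, p0, q, r):
        self.x = x0  # state estimate
        self.p = p0  # variance of the estimate
        self.q = q   # process noise variance added per step
        self.r = r   # measurement noise variance

    def update(self, z):
        # Predict: the state is assumed unchanged, but its uncertainty grows by q.
        self.p += self.q
        # Update: blend prediction and measurement using the Kalman gain.
        k = self.p / (self.p + self.r)
        self.x += k * (z - self.x)
        self.p *= 1.0 - k
        return self.x


# Hypothetical noisy altitude readings (m) around 10 m, with a vague initial guess.
kf = ScalarKalman(x0=0.0, p0=1000.0, q=0.0, r=0.04)
for reading in [10.2, 9.8, 10.1, 9.9, 10.0]:
    estimate = kf.update(reading)
```

With `q = 0` and a vague initial estimate, the filter reduces to a variance-weighted average of the measurements; a nonzero `q` lets it track genuine changes at the cost of passing through more noise.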

Robust AI Model Development and Explainable AI (XAI)

Mitigating algorithmic impurities, especially bias, requires a multi-faceted approach. This involves creating more diverse and representative training datasets, employing fairness-aware machine learning techniques, and continuously testing models against a wide range of real-world and simulated scenarios. The development of Explainable AI (XAI) is also pivotal. XAI aims to make AI decisions transparent and understandable, allowing developers and operators to scrutinize the logic behind an AI’s output, identify potential biases, and debug unexpected behavior. This transparency is key to building trust and to ensuring that AI-driven drone systems make decisions that are as free of bias as possible.
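
Permutation feature importance is one simple, model-agnostic explainability technique: shuffle one input feature at a time and measure how much the model’s accuracy drops. A large drop means the model relies on that feature; no drop means it ignores it. The sketch below uses a hypothetical rule-based “obstacle” classifier and made-up features; libraries such as scikit-learn offer a more complete implementation (`sklearn.inspection.permutation_importance`).

```python
import random


def accuracy(model, X, y):
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(model, X, y, seed=0):
    """Accuracy drop when each feature column is shuffled, breaking its link to the labels."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        column = [row[j] for row in X]
        rng.shuffle(column)
        X_perm = []
        for row, value in zip(X, column):
            new_row = list(row)
            new_row[j] = value  # only feature j is scrambled
            X_perm.append(new_row)
        importances.append(baseline - accuracy(model, X_perm, y))
    return importances


# Hypothetical features: [return_strength, texture_score]; the model only uses the first.
def obstacle_model(row):
    return row[0] > 0.5


X = [[0.9, 0.1], [0.8, 0.7], [0.2, 0.9], [0.1, 0.3], [0.7, 0.5], [0.3, 0.6]]
y = [True, True, False, False, True, False]
scores = permutation_importance(obstacle_model, X, y)
```

Here the second score is exactly zero because the model never reads that feature. In a real model, a high score on a feature that should be irrelevant, such as a proxy for location or lighting, is a warning sign of learned bias.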

Redundancy, Self-Correction, and Fault Tolerance

To counteract hardware and sensor impurities, robust system design incorporates redundancy and fault-tolerant mechanisms. This means duplicating critical sensors (e.g., multiple GPS units, redundant IMUs) so that if one provides impure data, the others can take over or provide validation. Self-correction algorithms can detect discrepancies between sensor readings and dynamically adjust models or flight paths. Adaptive control systems can compensate for sensor degradation or partial system failures, allowing the drone to continue its mission or safely return home. Furthermore, comprehensive pre-flight diagnostics and automated calibration routines ensure that all components are operating within specified parameters before takeoff, reducing the likelihood of impurity-induced failures.
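
With three or more redundant sensors, a simple way to both mask and isolate a faulty unit is median voting: use the median reading and flag any sensor that deviates from it by more than a tolerance. The sketch below assumes scalar readings and a fixed tolerance; the sensor names and values are hypothetical. With only two units a disagreement can be detected but not attributed to either one, which is one reason critical sensors are often triplicated.

```python
import statistics


def vote(readings, tolerance):
    """Median-vote over redundant sensors and flag any unit that disagrees with the vote.

    readings: dict mapping sensor name -> scalar value (e.g. yaw rate in rad/s).
    Returns (voted_value, sorted list of suspect sensor names).
    """
    voted = statistics.median(list(readings.values()))
    suspects = sorted(name for name, value in readings.items() if abs(value - voted) > tolerance)
    return voted, suspects


# Hypothetical yaw-rate readings from three IMUs; imu_c is faulty.
voted, suspects = vote({"imu_a": 0.101, "imu_b": 0.099, "imu_c": 0.45}, tolerance=0.02)
```

The median ignores a single wild reading entirely, whereas a plain average would be pulled toward the faulty unit.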

Cybersecurity Measures and Ethical AI Frameworks

Protecting against external “malicious impurities” through robust cybersecurity is non-negotiable. This encompasses end-to-end encryption for communication links, secure boot processes for onboard computers, continuous vulnerability scanning, and intrusion detection systems. Regular penetration testing and adherence to best practices in secure software development are vital. Beyond technical measures, the establishment of clear ethical AI frameworks ensures that the development and deployment of autonomous drone systems are guided by principles of fairness, accountability, and transparency, thereby mitigating the risk of societal impurities arising from biased or irresponsible use of the technology.
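
A simplified illustration of the integrity check at the core of secure boot is verifying a firmware image against a trusted SHA-256 digest before executing it. Real secure boot chains verify cryptographic signatures with a public key anchored in hardware (for example, in boot ROM or fuses), because a plain hash comparison is only as trustworthy as the stored reference digest. The function name below is hypothetical.

```python
import hashlib
import hmac


def firmware_is_trusted(image: bytes, expected_sha256_hex: str) -> bool:
    """Compare the image's SHA-256 digest with a trusted reference digest (hex string)."""
    digest = hashlib.sha256(image).hexdigest()
    # Constant-time comparison; normalise case because hex digests may be stored uppercase.
    return hmac.compare_digest(digest, expected_sha256_hex.lower())
```

A bootloader following this pattern would refuse to jump to the image, and fall back to a known-good copy, whenever the check fails.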

In conclusion, “what is impure” in the context of advanced drone technology is a broad and intricate question encompassing everything from microscopic sensor noise to complex algorithmic biases and sophisticated cyber threats. It underscores the perpetual challenge of achieving reliability, accuracy, and trustworthiness in increasingly autonomous and intelligent systems. By diligently identifying, understanding, and proactively mitigating these impurities across data, algorithms, sensors, and system design, researchers and engineers continue to refine drone capabilities, unlocking their full potential for safe, efficient, and transformative applications across countless industries. The pursuit of purity is not merely an ideal; it is a fundamental requirement for the future of drone innovation.