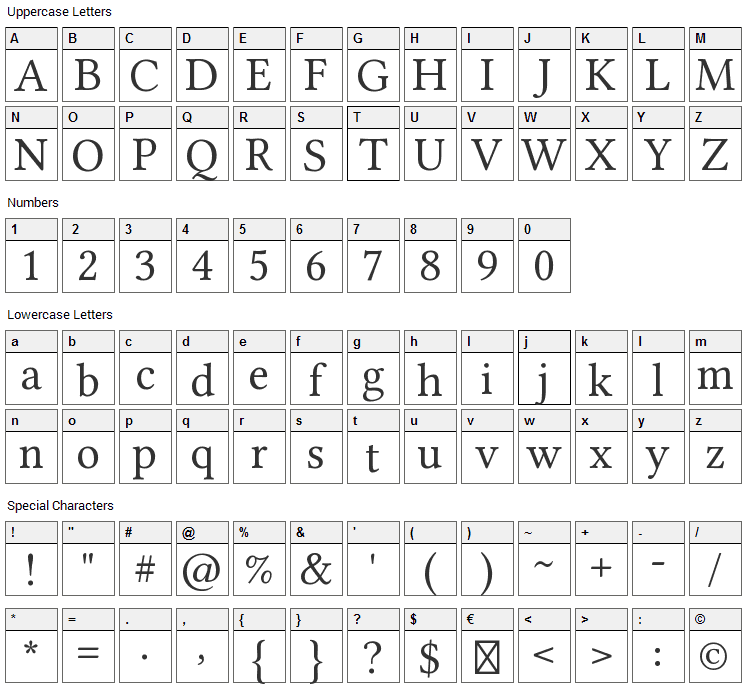

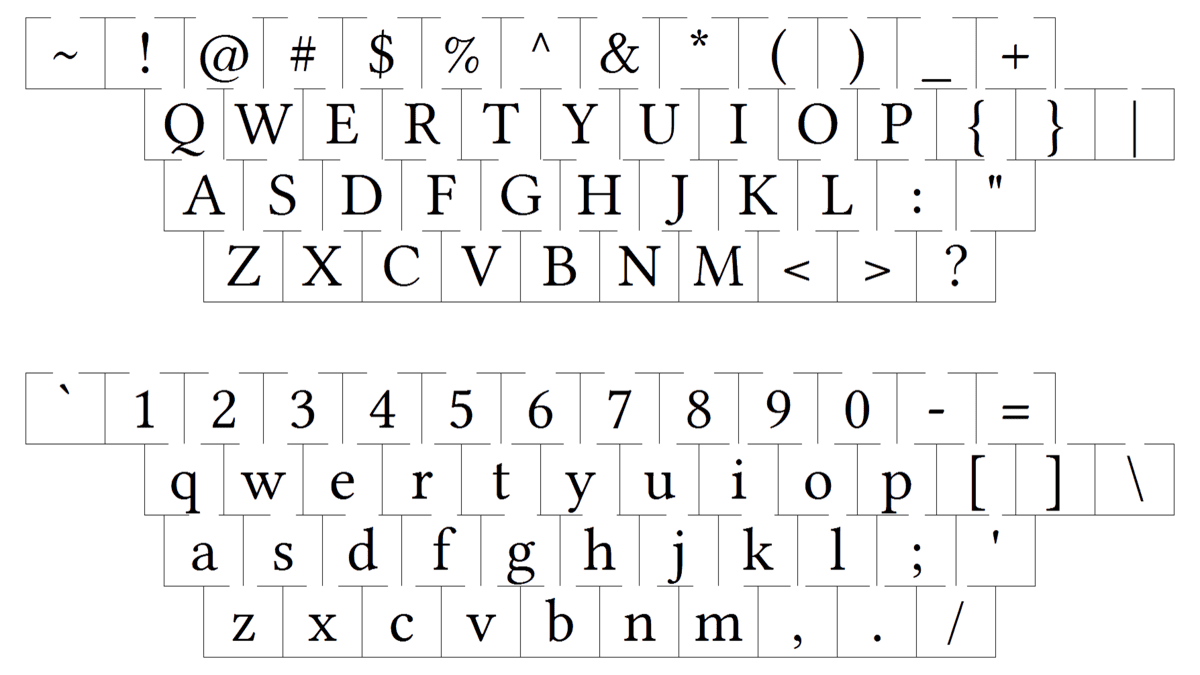

In the vast digital expanse of the internet, Wikipedia stands as a monumental repository of human knowledge. Its success rests not only on its content but also on how accessible and readable that content is. Wikipedia sets its body text primarily in sans-serif fonts: historically Helvetica Neue, and now often the reader’s system default, such as San Francisco (Apple), Segoe UI (Windows), or Roboto (Android), falling back to other widely available sans-serifs like Arial. This choice isn’t arbitrary; it’s a deliberate decision rooted in universal readability, clean aesthetics, and minimal cognitive load. It underpins an experience where information, no matter how complex, is presented with clarity.

This dedication to clear information delivery holds profound implications for other sophisticated technological domains. Consider the burgeoning field of drone technology and innovation. While the immediate association might be with hardware, sensors, and flight dynamics, the true frontier of innovation increasingly lies in how humans and intelligent systems interact with, interpret, and act upon the colossal streams of data generated by drones. From orchestrating complex autonomous missions to analyzing high-resolution mapping data or ensuring the safety of AI-driven follow modes, the “font”—or more broadly, the user interface and visual communication strategy—is as critical to success in drone operations as it is to reading an encyclopedia.

Beyond Typography: The Unseen Principles of Information Delivery

The essence of Wikipedia’s font choice transcends mere aesthetics; it embodies a commitment to effective information delivery. This commitment is a blueprint for any system dealing with complex data and demanding precise user interaction, especially in high-stakes environments like drone operations.

The Wikipedia Paradigm: Clarity in Complex Data

Wikipedia’s design philosophy prioritizes unobtrusiveness and functional clarity. The chosen fonts are universally understood, highly legible across various screen sizes and resolutions, and free from ornate distractions. This ensures that the user’s focus remains squarely on the content, not on the medium through which it’s delivered. In a world awash with information, Wikipedia provides a calm, clear window into knowledge, largely due to its meticulous attention to fundamental UI principles, including typography. It demonstrates that when information is paramount, the interface must be a facilitator, not a barrier.

Human-Machine Interface in Drone Operations: A New Frontier

The comparison to Wikipedia might seem a stretch, but the underlying challenge is identical: how to distill vast, real-time, often critical information into an easily digestible format. For drones, this isn’t just about reading; it’s about decision-making under pressure. Drone operators, whether managing a fleet of autonomous vehicles or piloting a single unit for remote sensing, are constantly bombarded with data: altitude, speed, battery life, GPS coordinates, sensor feeds, mission parameters, obstacle warnings, and more. The efficiency and intuitiveness of the human-machine interface (HMI) directly impact operational safety, mission success, and the cognitive load on the operator. Just as a poorly chosen font can make Wikipedia unreadable, an ill-conceived drone UI can turn a powerful technological marvel into a liability. Innovation in drone technology, therefore, extends beyond the drone itself to the interfaces that control and interpret its capabilities.

Elevating User Experience in Drone Tech: From Pixels to Precision

The “font” of drone technology is its entire visual communication system. For cutting-edge applications like autonomous flight and advanced mapping, this visual language has to make the system’s state and intentions clear at a glance.

Visualizing Autonomous Flight and AI Follow Modes

Autonomous flight systems and AI follow modes are among the most demanding areas of drone innovation. They rely on complex algorithms, real-time sensor fusion, and predictive analytics. For human operators to monitor, intervene, or simply trust these systems, the drone’s behavior and intentions must be clearly communicated. Consider the questions a graphical user interface (GUI) for an AI follow drone has to answer:

- Target Acquisition: How clearly is the tracked subject identified and highlighted?

- Predictive Pathing: Can the operator see the drone’s anticipated flight path and potential obstacles in an intuitive overlay?

- System Status: Is the AI engaged? What are its confidence levels? Are there any anomalies?

These questions underscore the need for visual clarity. Just as a well-designed font minimizes reading errors, a well-designed GUI for autonomous drones minimizes interpretation errors, ensuring that the operator is always informed and in control, even when the drone is acting independently. Innovation here means developing dynamic, context-aware visual cues that make complex AI decisions transparent and understandable.
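As a rough illustration of the "System Status" questions above, the sketch below turns an AI follow state into a single short cue that an interface could render. Everything here is hypothetical: the `FollowStatus` and `Cue` types, the label wording, and the 0.8 and 0.5 confidence thresholds are assumptions chosen for the example, not values taken from any real drone SDK.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Severity(Enum):
    NEUTRAL = "neutral"   # nothing to watch: follow mode is off
    OK = "ok"             # tracking with high confidence
    CAUTION = "caution"   # tracking, but the operator should stay alert
    ALERT = "alert"       # operator attention needed now


@dataclass(frozen=True)
class FollowStatus:
    """Hypothetical snapshot of an AI follow mode."""
    engaged: bool
    confidence: float                 # tracker confidence, 0.0 to 1.0
    anomalies: Tuple[str, ...] = ()   # short anomaly descriptions, if any


@dataclass(frozen=True)
class Cue:
    label: str
    severity: Severity


def status_cue(status: FollowStatus,
               ok_threshold: float = 0.8,
               caution_threshold: float = 0.5) -> Cue:
    """Collapse the follow state into one label plus a severity level.

    The thresholds are illustrative assumptions, not calibrated values.
    """
    if not status.engaged:
        return Cue("FOLLOW OFF", Severity.NEUTRAL)
    if status.anomalies:
        # Anomalies outrank confidence: show the first one reported.
        return Cue("ANOMALY: " + status.anomalies[0], Severity.ALERT)
    percent = round(status.confidence * 100)
    if status.confidence >= ok_threshold:
        return Cue(f"TRACKING {percent}%", Severity.OK)
    if status.confidence >= caution_threshold:
        return Cue(f"TRACKING {percent}%", Severity.CAUTION)
    return Cue(f"LOW CONFIDENCE {percent}%", Severity.ALERT)


if __name__ == "__main__":
    print(status_cue(FollowStatus(engaged=True, confidence=0.92)))
    print(status_cue(FollowStatus(engaged=True, confidence=0.92,
                                  anomalies=("GPS drift",))))
```

The design choice worth noting is the ordering of the checks: "is the AI engaged?" comes first, anomalies second, and confidence last, so the operator sees one unambiguous message and not three competing ones.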

Optimizing Data Presentation for Mapping and Remote Sensing

Drones equipped for mapping and remote sensing generate large volumes of geospatial data. High-resolution orthomosaics, 3D point clouds, thermal imagery, and multispectral data are all invaluable for agriculture, construction, environmental monitoring, and urban planning. The challenge lies in presenting this data in a way that is immediately actionable and interpretable by analysts and decision-makers.

- Layered Information: How are different data layers (e.g., elevation, vegetation index, temperature) presented without overwhelming the user?

- Annotation & Analysis Tools: Are the tools for measurement, annotation, and anomaly detection intuitive and easily accessible?

- Real-time Feedback: Can the operator see the quality of the data being collected in real time to adjust flight parameters?

The “font” here isn’t just on the screen; it’s the entire mapping software interface, the color palettes used for heat maps, the symbology for geographic features, and the responsiveness of interactive visualization tools. Innovating in this space means creating interfaces that transform raw data into insightful intelligence with minimal effort, making complex datasets as comprehensible as a Wikipedia article.

The Science of Readability: Applying Design Principles to Drone Displays

The principles that guide Wikipedia’s font choice – legibility, contrast, hierarchy – are critical for the design of drone interfaces, especially for on-screen displays (OSD) and ground control stations (GCS) where information density is high and rapid comprehension is essential.

Font Selection for On-Screen Displays (OSD) and Ground Control Stations (GCS)

In the demanding environment of drone operations, particularly for FPV (First Person View) flight or critical mission oversight, every pixel counts.

- Legibility under Varied Conditions: OSD text must be legible in bright sunlight, low light, and against dynamic backgrounds. Sans-serif fonts with clear differentiation between similar characters (e.g., ‘I’ and ‘l’, ‘0’ and ‘O’) are preferred.

- Information Hierarchy: Just as Wikipedia uses different heading sizes and bold text to organize information, drone interfaces must establish a clear hierarchy. Critical flight data (altitude, battery) should be immediately recognizable, while secondary data can be less prominent.

- Contrast and Color Palette: High contrast between text and background is non-negotiable. Color choices should also consider color blindness and avoid overly saturated hues that cause eye strain during prolonged use.

The selection of a “font” in drone OSDs is a functional decision, not an aesthetic one. It’s about optimizing data glanceability and reducing the cognitive burden on the operator, directly impacting safety and operational efficiency.
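The contrast requirement above can be made measurable. The sketch below computes the contrast ratio defined in the WCAG 2.x accessibility guidelines, which is one common way to quantify text/background contrast. Applying the WCAG 4.5:1 minimum for normal-size text to a drone OSD is an assumption made for illustration, and the function names and sample colors are hypothetical. A real OSD sits on top of live video, so the background color would have to be sampled from the frame.

```python
from typing import Tuple

RGB = Tuple[int, int, int]  # 8-bit sRGB channels, 0 to 255


def _linearize(channel: int) -> float:
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(color: RGB) -> float:
    """Relative luminance: 0.0 for black, 1.0 for white."""
    r, g, b = (_linearize(v) for v in color)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground: RGB, background: RGB) -> float:
    """WCAG contrast ratio, from 1.0 (identical) to 21.0 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def meets_minimum(foreground: RGB, background: RGB,
                  minimum: float = 4.5) -> bool:
    """True if the pair reaches the chosen minimum ratio.

    4.5 is the WCAG AA value for normal-size text; using it as an OSD
    threshold is an assumption of this sketch.
    """
    return contrast_ratio(foreground, background) >= minimum


if __name__ == "__main__":
    white, black = (255, 255, 255), (0, 0, 0)
    bright_sky = (135, 206, 235)  # illustrative stand-in for a sunlit sky
    print(round(contrast_ratio(white, black), 1))   # 21.0
    print(meets_minimum(white, bright_sky))         # False
    print(meets_minimum(black, bright_sky))         # True
```

The sky example shows why legibility "against dynamic backgrounds" is hard: plain white text that is perfectly readable over dark terrain falls well below the threshold over a bright sky, which is one reason OSD text is often drawn with an outline or a backing box.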

Ergonomics and Cognitive Load in Drone Interface Design

Beyond fonts, the overall ergonomic design of drone ground control stations and mobile applications significantly influences cognitive load. An intuitive layout, logical grouping of controls, and clear visual feedback minimize the mental effort required to operate the drone and process information.

- Spatial Consistency: Controls and data displays should be consistently placed across different screens or modes.

- Feedback Mechanisms: Visual, auditory, and haptic feedback should be clear and immediate to confirm actions or warn of issues.

- Minimizing Distractions: Unnecessary animations, cluttered layouts, or non-essential information can increase cognitive load and divert attention from critical tasks.

Drawing a parallel to Wikipedia, imagine if the navigation bar constantly shifted, or if articles were filled with blinking ads. The streamlined, consistent design of Wikipedia is its strength. Similarly, drone UI designers must strip away complexity, focusing on core functionalities and presenting information in the most ergonomic way possible to allow operators to concentrate on the mission at hand. This is innovation in usability, transforming complex tech into accessible tools.

Innovating for Tomorrow: The Future of Drone UI/UX

As drone technology continues its rapid growth, the demands on UI/UX will only intensify. The future will see more autonomous capabilities, more data, and more sophisticated human-drone collaboration, making the “font” of information delivery even more pivotal.

Dynamic Data Visualization and Augmented Reality in Drone Operations

The next generation of drone interfaces will move beyond static displays to dynamic, adaptive visualizations, potentially leveraging augmented reality (AR).

- Contextual Data Overlays: Imagine an operator wearing AR goggles, seeing not just the live drone feed, but also real-time overlays of mapping data, predicted flight paths, no-fly zones, or even thermal signatures directly integrated into their field of view.

- Interactive 3D Models: For complex inspections or construction monitoring, AR could project a drone’s scanned 3D model directly onto the real-world structure, highlighting discrepancies or progress in real time.

- AI-Assisted Visual Cues: AI could dynamically highlight critical information or potential hazards within the AR environment, much like an intelligent co-pilot guiding the operator’s attention.

These innovations require not just powerful hardware but also incredibly sophisticated UI/UX design to prevent information overload while delivering actionable insights. The “font” of tomorrow’s drone operations will be a dynamic, multi-layered visual narrative crafted by advanced algorithms and human-centered design principles.

Personalization and Adaptability in Drone Control Systems

No two drone missions are identical, and no two operators have the same preferences or cognitive styles. Future drone control systems will increasingly offer personalization and adaptability, learning from operator behavior and mission requirements.

- Customizable Dashboards: Operators should be able to configure their GCS or app interface, prioritizing specific data points, control layouts, and visual themes.

- Adaptive Information Density: The system could dynamically adjust the amount and detail of information displayed based on the mission phase (e.g., takeoff vs. mapping vs. landing), environmental conditions, or operator stress levels detected via biometrics.

- Multi-Modal Interaction: Beyond touchscreens and joysticks, future interfaces might incorporate voice commands, gesture control, or eye-tracking to provide more natural and efficient interaction methods.

This level of personalization and adaptability represents a significant leap in drone innovation, allowing the interface to truly serve the operator rather than demanding adaptation from them. It’s about making complex technology feel intuitive and responsive, much like a well-designed book adapts to the reader’s needs through clear typography and structure.
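To make the ideas of customizable dashboards and adaptive information density more concrete, here is a minimal sketch that picks which telemetry fields to show for a given mission phase. The phase names follow the examples above; the field names, the priority numbers, and the `visible_fields` function are all hypothetical and stand in for whatever a real ground control station would expose.

```python
from enum import Enum
from typing import Dict, List, Sequence


class Phase(Enum):
    TAKEOFF = "takeoff"
    MAPPING = "mapping"
    LANDING = "landing"


# Hypothetical priority table: a lower number means more important
# in that mission phase. The values are illustrative only.
PRIORITIES: Dict[Phase, Dict[str, int]] = {
    Phase.TAKEOFF: {"altitude": 1, "battery": 2, "gps_fix": 3,
                    "wind": 4, "image_overlap": 9},
    Phase.MAPPING: {"image_overlap": 1, "battery": 2, "altitude": 3,
                    "gps_fix": 4, "wind": 5},
    Phase.LANDING: {"altitude": 1, "battery": 2, "wind": 3,
                    "gps_fix": 4, "image_overlap": 9},
}


def visible_fields(phase: Phase, max_fields: int,
                   pinned: Sequence[str] = ("battery",)) -> List[str]:
    """Return the fields to display, most important first after pins.

    `pinned` models an operator preference (a customizable dashboard):
    pinned fields are always shown, and the remaining slots are filled
    by phase priority until `max_fields` is reached.
    """
    table = PRIORITIES[phase]
    chosen = [name for name in pinned if name in table]
    for name in sorted(table, key=table.get):
        if len(chosen) >= max_fields:
            break
        if name not in chosen:
            chosen.append(name)
    return chosen


if __name__ == "__main__":
    # A small screen (3 slots) during mapping vs. takeoff.
    print(visible_fields(Phase.MAPPING, max_fields=3))
    print(visible_fields(Phase.TAKEOFF, max_fields=3))
```

Lowering `max_fields` is one simple way such a system could reduce density under difficult conditions, while the pinned list keeps the operator's own priorities visible regardless of phase.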

Conclusion: The Unsung Hero of Drone Innovation

The question “what font does Wikipedia use” might seem trivial at first glance, but it serves as a powerful reminder of the fundamental importance of clear, accessible information design. In the high-stakes, data-intensive world of drone technology and innovation—from the nuanced guidance of AI follow modes to the precision required for autonomous flight, mapping, and remote sensing—the “font” of the human-machine interface is not a mere afterthought. It is a critical component of safety, efficiency, and ultimate mission success.

Innovation in drone tech is not solely about faster motors or smarter algorithms; it’s also about designing interfaces that make these advancements usable, understandable, and trustworthy. By meticulously applying principles of readability, visual hierarchy, ergonomic design, and forward-thinking data visualization, developers can ensure that drone operators can interpret complex information quickly and accurately, make informed decisions, and push the boundaries of what these incredible flying machines can achieve. Just as Wikipedia empowers users with knowledge through transparent design, the next wave of drone innovation will empower operators with control and insight through equally sophisticated and user-centric interfaces. The unsung hero in this technological revolution is indeed the thoughtful design of how information is presented—the “font” of drone operations.