The question “what does this hand emoji mean?” is usually about decoding nuance in digital communication. Set against drone technology, it works as a metaphor: less about a digital pictogram and more about the evolving language of human-machine interaction in drone tech and innovation. Here, the “hand emoji” stands for an emerging frontier: the intuitive gesture, the subtle human command, and the interface that aims to bridge the gap between human intent and autonomous aerial systems. This article explores how hand-based interactions, once limited to communication between people on the ground, are being integrated into drones, and how they are changing the way we control, communicate with, and collaborate with these machines.

The Evolution of Human-Drone Interaction: Beyond the Joystick

For years, controlling a drone was an art form, demanding dexterity, coordination, and a deep understanding of complex remote-control interfaces. Operators would spend countless hours mastering joysticks, toggles, and multi-functional buttons to navigate their aerial vehicles. This traditional paradigm, while effective for skilled pilots, presented a significant barrier to entry for casual users and limited the natural flow of human-drone collaboration. The “hand emoji,” in this context, becomes a beacon for a more accessible and intuitive future – one where human input is as natural as a wave or a point, echoing the directness of human-to-human communication.

From Manual Piloting to Intuitive Gestures: A Paradigm Shift

The drive for greater accessibility and more fluid interaction has spurred a paradigm shift in how we envision drone control. Developers are moving beyond the confines of physical controllers, exploring alternative input methods that leverage natural human movements and cognitive processes. This shift is not merely about simplification; it’s about enabling a deeper, more organic relationship between humans and their robotic counterparts. Imagine a scenario where a drone understands a raised hand as a command to halt, or a sweeping motion as an instruction to follow. Such intuitive control dramatically lowers the learning curve and expands the operational scope of drones, allowing individuals with varied skill sets to engage with advanced aerial technology. This journey from complex manual piloting to sophisticated, gesture-based control signifies a monumental leap in human-machine interface design.

Bridging the Gap: The Need for Natural Interfaces

The human brain is wired for intuitive interaction with the physical world, relying heavily on visual cues, body language, and immediate sensory feedback. Traditional drone controllers, while precise, often bypass these natural predispositions, forcing users to learn an entirely new “language” of buttons and levers. The push towards natural interfaces, therefore, is an effort to bridge this cognitive gap. By employing systems that recognize and interpret gestures, facial expressions, or even voice commands, drone technology can become an extension of human will, rather than a separate, complex apparatus. The “hand emoji” embodies this aspiration: a universal symbol of human communication, now being translated into actionable commands for autonomous systems, making interactions seamless, efficient, and profoundly more engaging. It represents the quest for a control mechanism so innate that it feels less like piloting a machine and more like directing a highly intelligent assistant with a mere flick of the wrist.

Gesture Control: The Hand’s New Language in Drone Tech

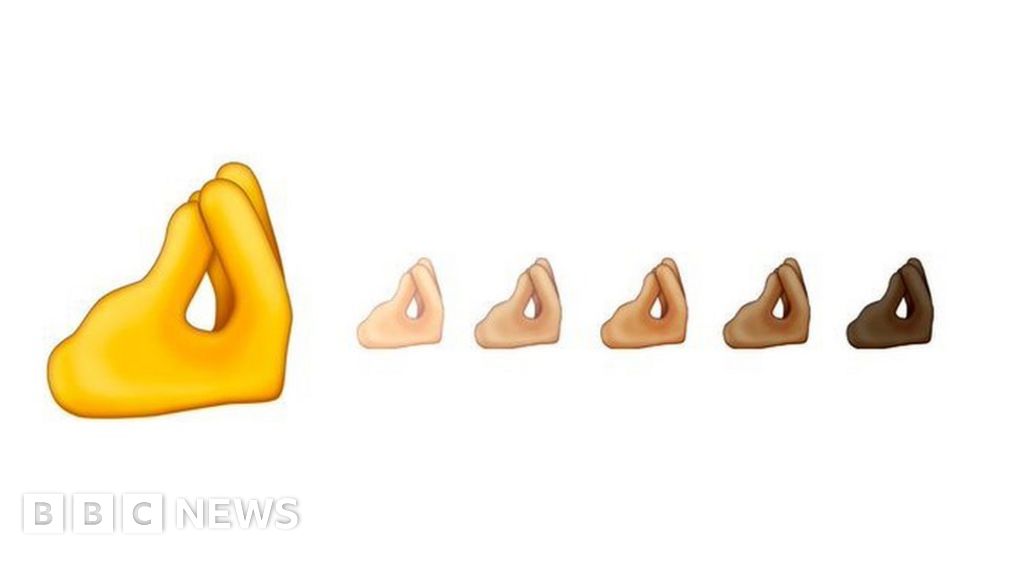

The most direct interpretation of “what does this hand emoji mean?” in the realm of drones leads us to gesture control – a revolutionary method where human hand movements serve as direct commands for drone operation. This technology harnesses sophisticated computer vision and artificial intelligence to interpret specific hand poses and movements, transforming them into instructions for flight, camera adjustments, or intelligent follow modes. It’s a literal manifestation of the hand’s newfound “language” in dictating the actions of an aerial robot.

Vision Systems and AI: How Drones “See” and Interpret Gestures

At the core of gesture control are computer vision and machine learning. A gesture-capable drone uses its camera, and on some models a depth sensor, to capture the operator’s hand movements. This visual data is fed into AI algorithms running on board or in the cloud. These algorithms, trained on large datasets of human gestures, identify specific hand poses (e.g., an open palm, a clenched fist, a V-sign) and dynamic movements (e.g., waving, pointing, rotating). Training on varied examples improves accuracy across different lighting conditions and backgrounds. The system does more than detect a hand: it maps the recognized gesture to the operator’s likely intent and translates it into flight parameters or operational commands. This interplay of optical input and intelligent processing is what lets a drone “understand” what a hand is communicating, without the operator ever touching a physical controller.
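To make the pose-recognition step concrete, here is a minimal sketch of a rule-based classifier. It assumes an upstream hand tracker has already produced 21 two-dimensional keypoints per hand, with the wrist at index 0 and four points per finger (a layout similar to the one used by libraries such as MediaPipe Hands). The function names, pose labels, and the "tip farther from the wrist than the middle joint" rule are illustrative assumptions, not how any particular drone does it; production systems typically use trained models rather than hand-written rules.

```python
from math import hypot

# Assumed 21-point hand layout: index 0 is the wrist, and each finger
# contributes four points ordered from base to tip.
WRIST = 0
FINGERS = {  # finger name -> (middle joint index, fingertip index)
    "index": (6, 8),
    "middle": (10, 12),
    "ring": (14, 16),
    "pinky": (18, 20),
}


def extended_fingers(landmarks):
    """Return the set of fingers whose tip is farther from the wrist
    than their middle joint, a rough test for 'finger is extended'."""
    wx, wy = landmarks[WRIST]
    extended = set()
    for name, (joint, tip) in FINGERS.items():
        jx, jy = landmarks[joint]
        tx, ty = landmarks[tip]
        if hypot(tx - wx, ty - wy) > hypot(jx - wx, jy - wy):
            extended.add(name)
    return extended


def classify_pose(landmarks):
    """Map the set of extended fingers to a coarse pose label."""
    up = extended_fingers(landmarks)
    if up == {"index", "middle", "ring", "pinky"}:
        return "open_palm"
    if not up:
        return "fist"
    if up == {"index", "middle"}:
        return "v_sign"
    if up == {"index"}:
        return "point"
    return "unknown"
```

The thumb is ignored here to keep the rule simple. Dynamic gestures such as waving would need a sequence of frames rather than a single set of keypoints.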

Practical Applications: Launch, Follow, Land with a Wave of the Hand

The practical implications of gesture control are vast and immediately impactful. Imagine standing in an open field, and with a simple upward sweep of your hand, your drone gracefully lifts off the ground. A horizontal palm might signal it to hover in place, while pointing in a direction could instruct it to fly that way. The “follow me” mode, a popular feature in many consumer drones, is being refined to be initiated and managed with gestures: a specific hand signal could tell the drone to begin tracking you, maintaining a safe distance and angle, freeing your hands for other activities like hiking or cycling. To land, a downward motion of the hand could guide the drone back to its home point.
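The gesture-to-action pairings described above amount to a lookup table plus a little state checking. The sketch below shows one way that could look. The gesture names, the `Command` enum, and the rule that only take-off is accepted on the ground are assumptions made for illustration, not the behaviour of any specific drone.

```python
from enum import Enum


class Command(Enum):
    TAKE_OFF = "take_off"
    HOVER = "hover"
    FLY_TOWARD = "fly_toward"
    FOLLOW = "follow"
    LAND = "land"


# Hypothetical gesture labels mapped to the actions described in the text.
GESTURE_TO_COMMAND = {
    "sweep_up": Command.TAKE_OFF,
    "flat_palm": Command.HOVER,
    "point": Command.FLY_TOWARD,
    "follow_signal": Command.FOLLOW,
    "sweep_down": Command.LAND,
}


def command_for(gesture, airborne):
    """Return the command for a gesture, or None if the gesture is
    unknown or does not make sense in the current flight state."""
    command = GESTURE_TO_COMMAND.get(gesture)
    if command is None:
        return None
    if command is Command.TAKE_OFF and airborne:
        return None  # already flying
    if command is not Command.TAKE_OFF and not airborne:
        return None  # on the ground, only take-off is accepted
    return command
```

For example, `command_for("sweep_up", airborne=False)` yields `Command.TAKE_OFF`, while the same gesture in flight is ignored.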

Beyond basic flight, gestures are also being integrated into camera control. A hand forming a frame might instruct the drone to focus its camera on that specific area, while a pinching motion could initiate a zoom. For professionals in aerial filmmaking or inspection, this provides an unprecedented level of intuitive control, allowing them to frame shots or inspect structures without diverting their attention to a complex controller. This hands-free operation enhances both convenience and safety, ensuring operators can maintain visual line of sight with their drone or focus on the immediate task at hand, making the “hand emoji” not just a symbol, but a blueprint for a more natural and efficient drone interaction.

The “Hand Emoji” as a Symbol of Autonomous Communication

Beyond mere command input, the “hand emoji” also serves as a symbol for the emerging field of autonomous communication and intuitive understanding between humans and drones. It represents a future where drones don’t just execute pre-programmed tasks or respond to explicit commands, but actively perceive human intent, anticipate needs, and even provide feedback in a more natural, less robotic manner. This section looks at the subtler aspects of human-machine collaboration, which point towards a partnership rather than a one-way command relationship.

Beyond Simple Commands: Interpreting Complex Human Intent

The ultimate goal of advanced human-drone interaction is to transcend simple, one-to-one command mapping (e.g., “wave up = ascend”). Instead, it aims for drones to interpret complex human intent, much like a human assistant would. This involves understanding context, predicting actions, and responding in a nuanced way. For instance, if an operator points vigorously at a distant object while expressing concern verbally, an advanced drone might not only fly towards the object but also automatically adjust its camera to zoom in, capture high-resolution imagery, and perhaps even initiate a thermal scan if its sensors detect a relevant anomaly.

The “hand emoji” here signifies the entire spectrum of non-verbal cues that humans use to convey meaning – a gesture combined with body posture, gaze direction, and even emotional state. AI systems are increasingly being trained to fuse these multi-modal inputs to build a richer, more holistic understanding of human directives. This capability is crucial for applications in search and rescue, surveillance, or even construction, where dynamic, rapidly changing situations demand quick, intelligent, and context-aware responses from autonomous aerial systems. The interpretation of complex human intent transforms a drone from a tool into a proactive, intelligent partner.
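One simple way to picture multi-modal fusion is "late fusion": each input channel produces its own scores for the possible intents, and a weighted sum picks the winner. The sketch below assumes that separate gesture, gaze, and speech recognizers already exist and output scores between 0 and 1. The modality names, intent labels, and weights are all hypothetical, and real systems may fuse inputs in very different ways.

```python
def fuse_intents(modality_scores, weights):
    """Combine per-modality intent scores with a weighted sum.

    modality_scores: {modality: {intent: score}}
    weights:         {modality: weight}; missing modalities count as 0.
    Returns (best_intent, totals), or (None, {}) if there is no input.
    """
    totals = {}
    for modality, scores in modality_scores.items():
        weight = weights.get(modality, 0.0)
        for intent, score in scores.items():
            totals[intent] = totals.get(intent, 0.0) + weight * score
    if not totals:
        return None, totals
    best = max(totals, key=totals.get)
    return best, totals


# Usage: the spoken words alone suggest "follow", but gesture and gaze
# together outweigh them and the fused result is "inspect".
scores = {
    "gesture": {"inspect": 0.6, "follow": 0.4},
    "gaze": {"inspect": 0.9, "follow": 0.1},
    "speech": {"inspect": 0.2, "follow": 0.8},
}
weights = {"gesture": 0.5, "gaze": 0.3, "speech": 0.2}
best, totals = fuse_intents(scores, weights)
```

The weights encode how much each channel is trusted; in practice they would need to be tuned or learned rather than set by hand.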

Feedback Loops and Adaptive AI: Drones Learning from Human Interaction

The communication pathway isn’t unidirectional; it’s a dynamic feedback loop. Just as humans provide input, drones are learning to provide more intuitive feedback. This might not be a “hand emoji” appearing on a drone’s display (though future designs could incorporate such visual cues), but rather adaptive behaviors that signal understanding or seek clarification. If a drone misinterprets a gesture, it might hover in place and flash a light pattern, or transmit an auditory query, allowing the operator to correct the command.
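The "hover and ask" behaviour can be sketched as a pair of confidence thresholds: act when the recognizer is sure, ask for clarification when it is unsure, and ignore anything that looks like noise. The threshold values and action names below are assumptions chosen for illustration, not figures from any real drone.

```python
def decide(gesture, confidence, execute_above=0.85, ignore_below=0.40):
    """Choose how to react to a recognized gesture.

    Returns a (action, gesture) pair:
      - ("execute", gesture)        when confidence is high enough to act
      - ("hover_and_ask", gesture)  when the drone should hold position
                                    and signal for confirmation
      - ("ignore", None)            when the detection is likely noise
    """
    if confidence >= execute_above:
        return ("execute", gesture)
    if confidence >= ignore_below:
        return ("hover_and_ask", gesture)
    return ("ignore", None)
```

The middle band is where the light pattern or auditory query described above would be triggered, giving the operator a chance to repeat or correct the command.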

Furthermore, autonomous AI is designed to be adaptive. Over time, through repeated interactions, the drone can learn an individual operator’s specific gestures, preferences, and even subtle idiosyncrasies in their communication style. If a particular operator consistently uses a slightly different hand movement for “start recording,” the drone’s AI can learn and adapt to this personalized input, enhancing the seamlessness of future interactions. This continuous learning from human input is pivotal. The “hand emoji” therefore symbolizes this ongoing dialogue, this mutual adaptation, where the drone’s AI constantly refines its understanding of human communication, striving for an ever-closer, more intuitive partnership. This adaptive learning is key to moving beyond rigid programming into a truly intelligent and responsive aerial system.
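Per-operator adaptation can be illustrated with a nearest-template recognizer whose templates drift towards the gestures an operator actually makes. This is a minimal sketch under strong assumptions: each gesture has already been reduced to a fixed-length feature vector, the operator confirms the intended command after each attempt, and the `GestureProfile` class and its learning rate are hypothetical. Real adaptive systems are likely to be considerably more involved.

```python
from math import dist


class GestureProfile:
    """Per-operator gesture templates that adapt with confirmed use."""

    def __init__(self, templates, learning_rate=0.2):
        # Copy the default templates so each operator gets their own set.
        self.templates = {name: list(vec) for name, vec in templates.items()}
        self.learning_rate = learning_rate

    def recognize(self, features):
        """Return the name of the template closest to the features."""
        return min(
            self.templates,
            key=lambda name: dist(self.templates[name], features),
        )

    def confirm(self, name, features):
        """Nudge a template towards a gesture the operator confirmed,
        using an exponential moving average."""
        lr = self.learning_rate
        old = self.templates[name]
        self.templates[name] = [
            (1 - lr) * t + lr * f for t, f in zip(old, features)
        ]


# Usage: this operator's "start recording" gesture sits closer to the
# default "stop recording" template until a few confirmations shift it.
profile = GestureProfile({
    "start_recording": [1.0, 0.0],
    "stop_recording": [0.0, 1.0],
})
personal_variant = [0.45, 0.55]
before = profile.recognize(personal_variant)
for _ in range(5):
    profile.confirm("start_recording", personal_variant)
after = profile.recognize(personal_variant)
```

A small learning rate keeps one odd gesture from overwriting the template, at the cost of slower adaptation.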

Implications for Future Drone Technology: Expanding Horizons

The concept encapsulated by “what does this hand emoji mean?” – specifically, the shift towards intuitive, gesture-based, and context-aware human-drone interaction – has profound implications for the future trajectory of drone technology. It points to a future where drones are not just sophisticated machines, but intelligent collaborators, seamlessly integrated into various aspects of human endeavor. This evolution will touch upon fundamental aspects of accessibility, safety, and the very definition of human-drone teamwork, opening up unprecedented opportunities across numerous sectors.

Enhancing Accessibility and Safety: Democratizing Drone Operation

One of the most significant impacts of intuitive gesture control and advanced human-drone communication is the enhancement of accessibility. By simplifying the control interface and making it more natural, the barrier to entry for operating complex drones is drastically lowered. This democratization of drone technology means that individuals without extensive piloting experience – from first responders in high-stress situations to field researchers collecting data, or even casual hobbyists – can effectively deploy and manage drones. This widespread accessibility will accelerate innovation, as more diverse minds engage with and adapt drone capabilities to new challenges.

Simultaneously, these advancements significantly bolster safety. When an operator can control a drone with a hand gesture while keeping their eyes on the drone and its surroundings, situational awareness improves dramatically. This reduces the risk of collisions or operational errors that might arise from diverting attention to a complex remote controller. In critical scenarios, such as disaster relief or industrial inspections, the ability to issue commands quickly and instinctively, without fumbling with buttons, can be the difference between success and failure, or even life and death. The “hand emoji” here represents the human touch that makes powerful technology safer and more broadly available to everyone.

Collaborative Human-Drone Teams: New Frontiers in Work and Exploration

Perhaps the most exciting implication is the potential for highly collaborative human-drone teams. Imagine a future where a surveyor walks a field, pointing out areas of interest with a simple gesture, and a drone autonomously flies to those points, capturing detailed imagery or sensor data. Or picture a construction worker directing a heavy-lift drone to position materials with precise hand signals while also managing ground operations. These scenarios are moving from science fiction towards practice, driven by the intuitive communication that gesture control and adaptive AI make possible.

These collaborative teams will unlock new frontiers in various sectors. In entertainment, directors could sculpt complex aerial shots with natural movements. In environmental monitoring, scientists could guide drones to specific flora or fauna for observation, eliminating the need for arduous manual navigation. In exploration, astronauts could deploy drones on other planets, directing them with a wave to investigate intriguing geological features. The “hand emoji” ultimately symbolizes this new paradigm of symbiotic partnership: a future where humans and drones work in concert, each leveraging their unique strengths – human intuition and decision-making combined with drone precision, reach, and data collection capabilities – to achieve goals previously deemed impossible. It’s a vision of integration where the line between human and machine interaction becomes increasingly seamless and profoundly productive.

The question “what does this hand emoji mean?” in the context of drone technology, therefore, goes beyond a literal reading of a digital symbol. It serves as a metaphor for the industry’s pursuit of intuitive, natural, and ultimately human-centric interfaces. From the early days of intricate joystick controls to the emerging era of gesture recognition, adaptive AI, and contextual understanding, the journey has been one of bridging the gap between human intention and machine execution. The “hand emoji” embodies this ongoing shift: a symbol of direct communication, seamless control, and the growing potential for humans and autonomous aerial systems to collaborate as true partners. As drone technology continues to evolve, our hands, and the natural language they convey, will become ever more integral to unlocking the full potential of these flying machines.