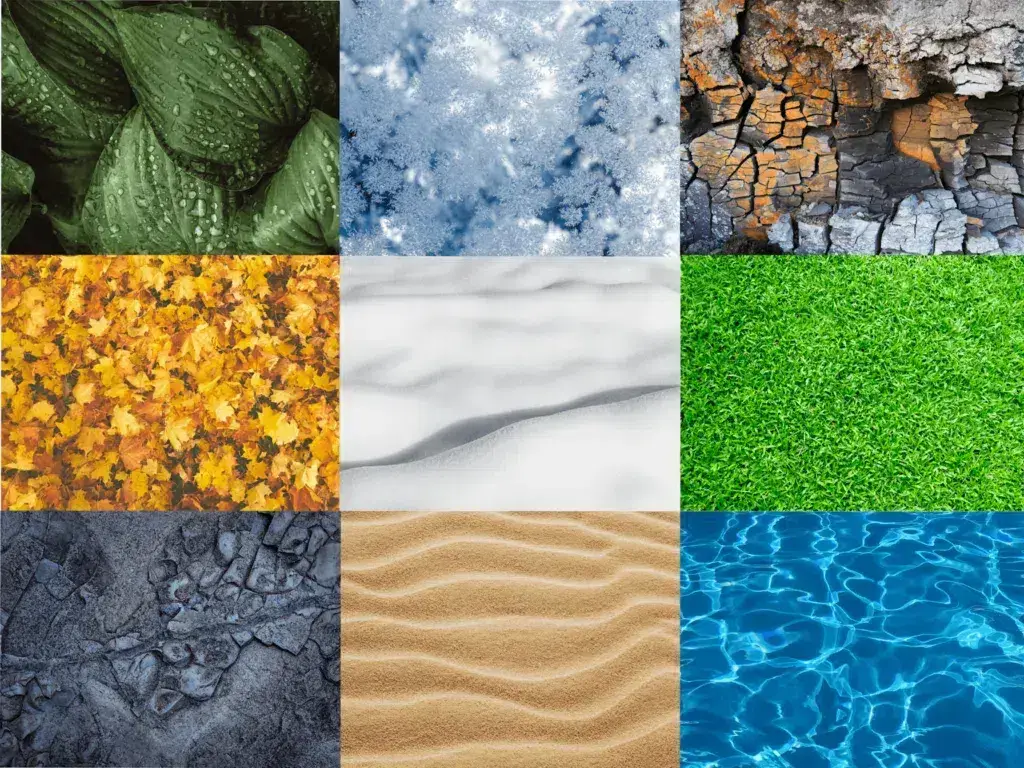

In drone applications and advanced imaging, “textures” mean something different from their common, tactile definition. In everyday life we associate textures with the feel of a surface: its smoothness, roughness, or grain. In drone technology and imaging, textures are the visual details and patterns that characterize the surfaces of objects and environments. These visual cues are not merely aesthetic; they are fundamental to how we perceive, interpret, and interact with the world captured by our aerial platforms.

Understanding textures is crucial for a wide array of drone-based applications, from sophisticated mapping and surveying to autonomous navigation and advanced object recognition. Drones, equipped with increasingly powerful cameras and sensors, are essentially gathering vast amounts of visual data. The ability to process, analyze, and leverage the textural information within this data unlocks significant potential for innovation and improved performance. This article delves into the multifaceted nature of textures in the context of drone technology, exploring their significance, how they are captured and analyzed, and their transformative impact across various sectors.

The Visual Language of Surfaces: Defining Textures in Drone Imaging

Textures, from a purely visual standpoint, are the discernible patterns and variations in luminance and color across a surface. These variations can arise from a multitude of factors, including the material composition, its physical structure, lighting conditions, and even the presence of wear or imperfections. For drone imaging systems, the ability to accurately capture and represent these textural nuances is paramount.
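To make the idea of "variations in luminance" concrete, here is a minimal sketch using only the Python standard library. The two 4x4 patches are hypothetical, and the Rec. 601 luminance weights are one common choice rather than anything prescribed by a particular drone camera. Both patches have the same average brightness, so only the spread of luminance values separates the flat surface from the patterned one.

```python
from statistics import mean, pstdev

def luminance(rgb):
    """Approximate luminance of an (R, G, B) pixel using Rec. 601 weights."""
    r, g, b = rgb
    return 0.299 * r + 0.587 * g + 0.114 * b

def patch_stats(patch):
    """Return (mean, standard deviation) of luminance over a 2D patch of RGB pixels."""
    values = [luminance(px) for row in patch for px in row]
    return mean(values), pstdev(values)

# Hypothetical 4x4 patches: a uniform gray surface and a checkerboard.
flat = [[(128, 128, 128)] * 4 for _ in range(4)]
checker = [[(200, 200, 200) if (x + y) % 2 == 0 else (56, 56, 56)
            for x in range(4)] for y in range(4)]

flat_mean, flat_std = patch_stats(flat)
checker_mean, checker_std = patch_stats(checker)

print(f"flat:    mean={flat_mean:.1f} std={flat_std:.1f}")
print(f"checker: mean={checker_mean:.1f} std={checker_std:.1f}")
```

The standard deviation is only a first-order measure: it says how much the luminance varies, not how the variation is arranged in space.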

Micro-Level Variations: The Building Blocks of Texture

At the micro-level, textures are composed of an interplay of fine details. Consider the seemingly uniform surface of a concrete road. Under close examination, it reveals a complex arrangement of aggregate particles, pores, and subtle gradients. Similarly, a forest canopy, while appearing as a solid green mass from afar, is composed of individual leaves with distinct vein patterns, surface gloss, and microscopic structures. These micro-level variations are the fundamental building blocks of what we perceive as texture. Drone cameras, especially those with high resolution, are capable of capturing these subtle details, providing a richness of information that is often lost in lower-fidelity imagery.

Macro-Level Patterns: The Broader Surface Characteristics

Beyond the fine details, textures also manifest in broader, more apparent patterns. These are the arrangements of shapes, lines, and forms that repeat or create a sense of visual rhythm across a surface. Think of the regular patterns of agricultural fields, the irregular yet recognizable shapes of rock formations, or the ordered lines of buildings in an urban landscape. These macro-level patterns provide context and allow for easier identification and classification of objects and environments. Drone-based imaging systems excel at capturing these larger-scale textural patterns, enabling tasks such as land use classification, infrastructure monitoring, and environmental assessment.

The Role of Light and Shadow in Texture Perception

It is impossible to discuss visual textures without acknowledging the critical role of illumination. The way light interacts with a surface profoundly influences how its texture is perceived. Rough surfaces, for instance, scatter light in many directions, creating a diffuse appearance and highlighting irregularities. Smooth, glossy surfaces, on the other hand, reflect light more specularly, leading to highlights and reflections that can mask or alter the underlying texture. Shadows, cast by the surface’s own irregularities or by external objects, further contribute to the perceived depth and complexity of a texture. Drone operators and imaging algorithms must account for varying lighting conditions to ensure consistent and accurate textural analysis.
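One simple way an algorithm can account for a global change in lighting is to normalize each patch to zero mean and unit standard deviation before comparing textures. The sketch below assumes the lighting change can be modeled as a gain and an offset applied to every pixel; the intensity values and the gain of 1.8 are made up for illustration. Cast shadows and specular highlights are local effects that this normalization does not remove.

```python
from statistics import mean, pstdev

def normalize(values):
    """Shift to zero mean and scale to unit standard deviation."""
    m, s = mean(values), pstdev(values)
    if s == 0:
        # A perfectly flat patch carries no texture to normalize.
        return [0.0 for _ in values]
    return [(v - m) / s for v in values]

# Hypothetical intensity profile across a rough surface.
surface = [10.0, 14.0, 9.0, 15.0, 11.0, 13.0]

# The same surface under brighter light, modeled as gain 1.8 and offset 20
# (an assumed, simplified lighting model).
brighter = [1.8 * v + 20.0 for v in surface]

# The raw spread changes with the lighting...
raw_std_ratio = pstdev(brighter) / pstdev(surface)

# ...but the normalized profiles agree up to floating-point error.
max_diff = max(abs(a - b)
               for a, b in zip(normalize(surface), normalize(brighter)))

print(f"raw std ratio: {raw_std_ratio:.2f}")
print(f"max difference after normalization: {max_diff:.2e}")
```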

Capturing and Analyzing Textures with Drone Technology

The sophisticated imaging payloads carried by modern drones are designed to capture the rich textural information present in the environment. This captured data then undergoes rigorous analysis to extract meaningful insights.

High-Resolution Imaging and Multispectral Data

The cornerstone of effective texture capture is the camera system. High-resolution sensors are essential for resolving fine textural details. However, texture analysis goes beyond just spatial resolution. Multispectral and hyperspectral cameras, increasingly integrated into drone payloads, capture information across various wavelengths of the electromagnetic spectrum. Different materials reflect and absorb light differently across these wavelengths, creating unique spectral signatures that can reveal textural properties not visible to the human eye or even standard RGB cameras. For example, subtle variations in the spectral reflectance of vegetation can indicate stress levels or help distinguish species, and variations in soil reflectance can indicate moisture content. These properties are closely tied to the surface texture of the plants and the soil.

Sensor Fusion for Enhanced Textural Understanding

While optical cameras are the primary source of visual texture, other sensors can complement and enhance our understanding. LiDAR (Light Detection and Ranging) sensors, for instance, provide precise 3D geometric data. By analyzing the density and spatial distribution of LiDAR points on a surface, in particular how their heights scatter around a locally fitted surface, we can infer its roughness and micro-topography, which are direct manifestations of texture. Combining LiDAR data with imagery from optical sensors through sensor fusion techniques allows for a more robust and comprehensive analysis of surfaces, enabling the creation of highly detailed 3D models with accurate textural mapping.
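As a rough illustration of inferring roughness from LiDAR points, the sketch below fits a plane to a small patch of (x, y, z) returns by least squares and reports the root-mean-square height residual. This is one simple roughness measure among several, not a method the article or any particular LiDAR toolkit prescribes. The point coordinates are synthetic and the function names are hypothetical.

```python
import math

def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

def fit_plane(points):
    """Least-squares fit of z = a*x + b*y + c; returns (a, b, c)."""
    n = len(points)
    sx = sum(x for x, _, _ in points)
    sy = sum(y for _, y, _ in points)
    sz = sum(z for _, _, z in points)
    sxx = sum(x * x for x, _, _ in points)
    syy = sum(y * y for _, y, _ in points)
    sxy = sum(x * y for x, y, _ in points)
    sxz = sum(x * z for x, _, z in points)
    syz = sum(y * z for _, y, z in points)
    normal = [[sxx, sxy, sx], [sxy, syy, sy], [sx, sy, n]]
    rhs = [sxz, syz, sz]
    d = det3(normal)
    if abs(d) < 1e-12:
        raise ValueError("points are degenerate (for example, collinear in x-y)")
    coeffs = []
    for col in range(3):
        # Cramer's rule: replace one column with the right-hand side.
        m = [row[:] for row in normal]
        for r in range(3):
            m[r][col] = rhs[r]
        coeffs.append(det3(m) / d)
    return tuple(coeffs)

def roughness(points):
    """RMS height residual of the points around their best-fit plane."""
    a, b, c = fit_plane(points)
    residuals = [z - (a * x + b * y + c) for x, y, z in points]
    return math.sqrt(sum(r * r for r in residuals) / len(residuals))

# Synthetic 5x5 patch of returns on a tilted plane z = 0.1x + 0.2y + 1.0.
smooth = [(x, y, 0.1 * x + 0.2 * y + 1.0) for x in range(5) for y in range(5)]
# The same patch with alternating +/-0.05 bumps standing in for a rough surface.
rough = [(x, y, z + (0.05 if (x + y) % 2 == 0 else -0.05)) for x, y, z in smooth]

smooth_rms = roughness(smooth)
rough_rms = roughness(rough)
print(f"smooth patch RMS residual: {smooth_rms:.4f}")
print(f"rough patch RMS residual:  {rough_rms:.4f}")
```

Fitting the plane first matters: without it, the overall tilt of the surface would be mistaken for roughness.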

Computational Approaches to Texture Analysis

Once textural data is captured, computational algorithms come into play. These algorithms are designed to quantify and categorize textural properties. Techniques such as statistical analysis of pixel intensity variations, co-occurrence matrices (which describe how often different pixel intensity pairs occur in specific spatial relationships), and transform-based methods (like Gabor filters or wavelets) are employed to extract features that characterize texture. Machine learning and deep learning models, particularly convolutional neural networks (CNNs), have revolutionized texture analysis by learning complex patterns directly from the image data, enabling highly accurate classification and segmentation of textured surfaces.
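The co-occurrence matrix mentioned above can be sketched in a few lines of plain Python. The version below is a simplified illustration: it builds a non-symmetric matrix for a single pixel offset, stores it as a dictionary of pair probabilities, and derives two commonly used features (contrast, and homogeneity in its inverse-difference-moment form). The tiny four-level images are made up, and the function names are hypothetical rather than taken from any library.

```python
from collections import Counter

def glcm(image, dx=1, dy=0):
    """Probability of each gray-level pair (i, j), where j lies at offset (dx, dy) from i."""
    counts = Counter()
    h, w = len(image), len(image[0])
    for y in range(h):
        for x in range(w):
            nx, ny = x + dx, y + dy
            if 0 <= nx < w and 0 <= ny < h:
                counts[(image[y][x], image[ny][nx])] += 1
    total = sum(counts.values())
    return {pair: c / total for pair, c in counts.items()}

def contrast(p):
    """Large when neighboring pixels differ strongly in gray level."""
    return sum(prob * (i - j) ** 2 for (i, j), prob in p.items())

def homogeneity(p):
    """Close to 1 when neighboring pixels have similar gray levels."""
    return sum(prob / (1 + (i - j) ** 2) for (i, j), prob in p.items())

# Made-up 4x4 image with vertical stripes (gray levels 0 and 3).
vertical_stripes = [[0, 3, 0, 3] for _ in range(4)]

# Horizontal neighbors cross the stripes; vertical neighbors stay inside one.
across = glcm(vertical_stripes, dx=1, dy=0)
along = glcm(vertical_stripes, dx=0, dy=1)

contrast_across = contrast(across)
contrast_along = contrast(along)
homogeneity_across = homogeneity(across)
homogeneity_along = homogeneity(along)

print(f"across stripes: contrast={contrast_across:.2f} homogeneity={homogeneity_across:.2f}")
print(f"along stripes:  contrast={contrast_along:.2f} homogeneity={homogeneity_along:.2f}")
```

Because the features depend on the chosen offset, texture descriptors are often computed for several directions and distances and then combined.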

The Impact of Texture Analysis Across Diverse Drone Applications

The ability to effectively analyze and utilize textural information from drone-captured data has profound implications for a wide range of technological advancements and practical applications.

Precision Agriculture and Crop Health Monitoring

In precision agriculture, understanding crop textures is vital. Variations in leaf texture, for example, can indicate hydration levels, nutrient deficiencies, or the presence of pests and diseases. Drone imagery, analyzed for textural patterns, can help farmers identify areas of their fields that require specific attention. This allows for targeted application of fertilizers, pesticides, or irrigation, optimizing resource use, reducing environmental impact, and maximizing crop yields. The texture of the soil itself also provides valuable information about its composition, moisture content, and compaction, guiding decisions on plowing and planting.

Infrastructure Inspection and Material Characterization

The inspection of infrastructure, such as bridges, pipelines, roads, and buildings, heavily relies on identifying surface defects. Textural analysis of drone imagery can pinpoint cracks, spalling concrete, corrosion, and other forms of degradation that might be subtle or difficult to detect from ground level. Advanced algorithms can distinguish between normal surface wear and potentially critical structural weaknesses based on their textural characteristics. Furthermore, by analyzing the spectral and textural properties of materials, drones can assist in identifying different types of construction materials, aiding in maintenance planning and material management.

Environmental Monitoring and Geological Surveying

In environmental science and geology, textures provide critical clues about the Earth’s surface. The texture of rock formations can reveal their geological history, erosion patterns, and material composition. Analyzing the textures of soil and sediment can help in understanding land degradation, erosion rates, and water flow patterns. For instance, the textural variations in a riverbed can indicate sediment transport dynamics, crucial for hydrological modeling and flood risk assessment. In forest management, distinguishing between different types of ground cover based on their textures is essential for habitat mapping and conservation efforts.

Autonomous Navigation and Object Recognition

For drones to operate autonomously and safely, they need to understand their surroundings. Textural cues play a significant role in navigation and object recognition. By tracking textured features on the ground from frame to frame, drones can estimate changes in their position and orientation, and by analyzing ground textures they can differentiate between various terrain types (e.g., grass, asphalt, gravel). In object recognition tasks, the distinctive textures of objects, such as a parked car, a specific type of tree, or a building facade, aid algorithms in identifying and classifying them within the environment. This capability is fundamental for applications like autonomous delivery, search and rescue, and automated inspection.
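Terrain differentiation of this kind can be illustrated with a deliberately small classifier. The sketch below describes each grayscale patch by two texture features (mean intensity and standard deviation) and assigns an unknown patch to the terrain whose average feature vector is nearest. The patch values, the labels, and the choice of a nearest-centroid rule are all assumptions for illustration; a production system would more likely use richer features or a trained neural network.

```python
import math
from statistics import mean, pstdev

def features(patch):
    """Describe a 2D grayscale patch by (mean intensity, standard deviation)."""
    values = [v for row in patch for v in row]
    return (mean(values), pstdev(values))

def train_centroids(labeled_patches):
    """Average the feature vectors of the example patches for each label."""
    centroids = {}
    for label, patches in labeled_patches.items():
        feats = [features(p) for p in patches]
        centroids[label] = tuple(mean(f[k] for f in feats) for k in range(2))
    return centroids

def classify(patch, centroids):
    """Return the label whose centroid is closest in feature space."""
    f = features(patch)
    return min(centroids, key=lambda label: math.dist(f, centroids[label]))

# Hypothetical 2x2 training patches for three terrain types.
training = {
    "asphalt": [[[50, 52], [51, 49]], [[48, 50], [52, 50]]],
    "grass": [[[90, 110], [100, 120]], [[95, 115], [105, 85]]],
    "gravel": [[[120, 200], [180, 140]], [[210, 130], [150, 190]]],
}
centroids = train_centroids(training)

label_dark_smooth = classify([[49, 51], [50, 53]], centroids)
label_bright_rough = classify([[130, 190], [205, 145]], centroids)
print(label_dark_smooth, label_bright_rough)
```

The two features are left unscaled here for brevity; with real data they would normally be standardized so that neither dominates the distance.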

Mapping and 3D Reconstruction

The creation of highly accurate maps and 3D models relies heavily on the interpretation of visual textures. Photogrammetry, a technique that uses overlapping aerial images to create 3D representations, inherently uses the textural features within the images to match points and build the geometric model. The quality and detail of the textures captured by the drone directly influence the resolution and realism of the resulting 3D models, which are invaluable for urban planning, construction, archaeology, and virtual reality applications.
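The point-matching step that photogrammetry depends on can be sketched with normalized cross-correlation (NCC), one classic way to compare image patches. This is an illustrative stand-in, not the algorithm of any specific photogrammetry package. The sketch takes a small template from one image and searches a second image for the best-scoring window. The second image is simulated here by shifting the first one column to the right and brightening it, and all pixel values are made up.

```python
import math

def ncc(a, b):
    """Normalized cross-correlation of two equal-length lists, or None if either is flat."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    da = [v - mean_a for v in a]
    db = [v - mean_b for v in b]
    denom = math.sqrt(sum(v * v for v in da) * sum(v * v for v in db))
    if denom == 0:
        return None  # a textureless patch cannot be matched reliably
    return sum(x * y for x, y in zip(da, db)) / denom

def window(image, top, left, size):
    """Flatten the size x size window whose top-left corner is (top, left)."""
    return [image[top + r][left + c] for r in range(size) for c in range(size)]

def best_match(template, image, size):
    """Return ((top, left), score) of the window that best matches the template."""
    best_pos, best_score = None, -2.0
    for top in range(len(image) - size + 1):
        for left in range(len(image[0]) - size + 1):
            score = ncc(template, window(image, top, left, size))
            if score is not None and score > best_score:
                best_pos, best_score = (top, left), score
    return best_pos, best_score

# Made-up 5x5 textured image.
image_a = [
    [12, 80, 33, 95, 41],
    [67, 20, 88, 15, 73],
    [30, 99, 45, 60, 10],
    [85, 25, 70, 38, 92],
    [50, 64, 18, 77, 29],
]
# Simulated second view: shifted one column right and 10 levels brighter.
image_b = [[55] + [v + 10 for v in row[:-1]] for row in image_a]

template = window(image_a, 1, 1, 3)
position, score = best_match(template, image_b, 3)
print(position, round(score, 3))

# A flat patch has no texture, so its correlation is undefined.
flat_score = ncc([100] * 9, template)
print(flat_score)
```

The flat-patch case shows why texture quality matters for reconstruction: uniform surfaces give the matcher nothing to lock onto.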

The Evolving Landscape of Texture in Drone Innovation

As drone technology continues its rapid evolution, so too does our understanding and utilization of textures. The ongoing advancements in sensor technology, computational power, and artificial intelligence are pushing the boundaries of what is possible in texture analysis.

Real-time Texture Analysis for Enhanced Situational Awareness

The development of onboard processing capabilities is enabling drones to perform texture analysis in real-time. This means that critical textural information can be extracted and acted upon with minimal delay, without the need for data to be transmitted to a ground station for processing. This real-time capability is crucial for applications requiring immediate responses, such as collision avoidance in complex environments, dynamic path planning for autonomous systems, and immediate threat assessment in surveillance operations.

AI-Powered Texture Interpretation for Deeper Insights

The application of advanced AI, particularly deep learning, is transforming texture interpretation from simple pattern recognition to nuanced understanding. AI models can now learn to distinguish subtle textural anomalies that might indicate a hidden defect, a change in material state, or even the presence of specific biological markers. This allows for a much deeper level of insight into the data captured by drones, moving beyond mere identification to predictive analysis and complex diagnostics.

The Future of Texture in an Immersive Digital World

Looking ahead, the rich textural data captured by drones will play an increasingly vital role in the creation of digital twins, immersive virtual environments, and augmented reality experiences. By accurately replicating the textures of real-world objects and landscapes, we can create digital replicas that are not only visually faithful to the originals but also functionally accurate. This will revolutionize fields ranging from design and simulation to training and remote collaboration, where interacting with highly realistic digital representations of the physical world will become commonplace. The humble concept of “texture” is, therefore, becoming an indispensable component of our increasingly digitized and technologically advanced future.