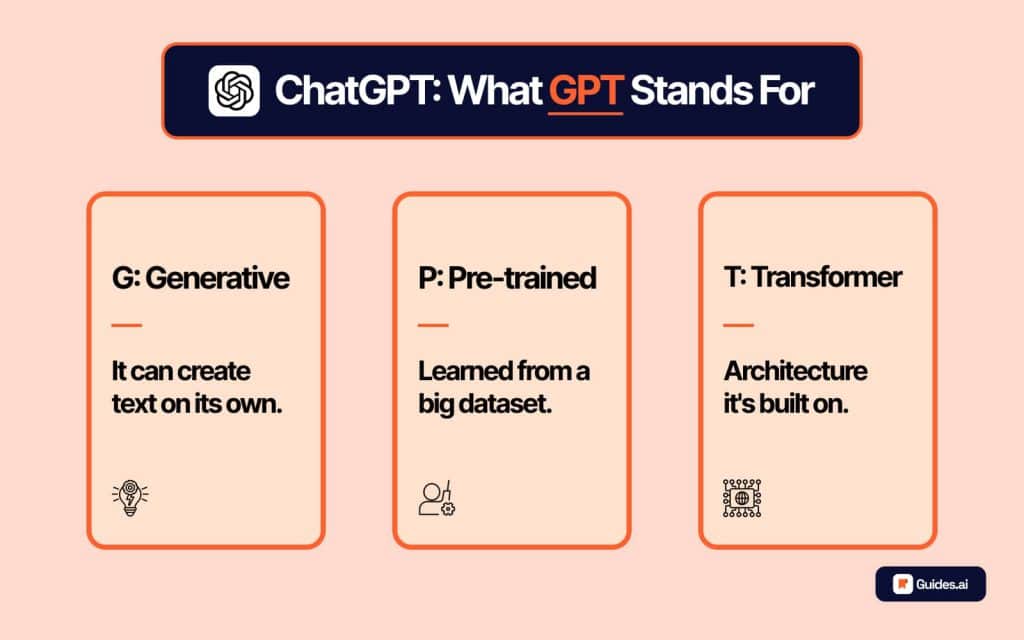

The acronym “GPT” has become a ubiquitous term in the landscape of artificial intelligence, often appearing in discussions about advanced language models and their capabilities. While its usage is widespread, a clear understanding of what GPT stands for and the foundational technology it represents is crucial for appreciating the ongoing revolution in AI. At its core, GPT is an acronym for Generative Pre-trained Transformer. This seemingly simple designation encapsulates a powerful paradigm shift in how machines process and generate human-like text, driving significant advancements across various technological domains.

The Pillars of GPT: Generative, Pre-trained, and Transformer

To truly grasp the significance of GPT, we must dissect each component of its name. These three elements are not arbitrary; they represent the key innovations and methodologies that have propelled GPT models to the forefront of natural language processing (NLP).

Generative: The Art of Creation

The “Generative” aspect of GPT highlights its primary function: the ability to create new content. Unlike earlier AI models that were primarily designed for analysis, classification, or retrieval, generative models are engineered to produce novel outputs that mimic human creativity. In the context of GPT, this means generating coherent, contextually relevant, and often remarkably insightful text. This can range from answering questions and writing essays to composing poetry, crafting code, and even simulating conversations.

The generative capability stems from the model’s underlying architecture and its extensive training. By learning the statistical relationships and patterns within vast amounts of text data, GPT models develop an internal representation of language. Given the text so far, the model estimates a probability for each possible next token (a word or word fragment), picks one, appends it, and repeats, effectively “generating” text that flows logically and semantically. This ability to create is what makes GPT so versatile, opening doors to applications that were once the exclusive domain of human intellect.
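
The predict-append-repeat loop described above can be illustrated with a deliberately tiny stand-in. The sketch below is an assumption-laden toy, not how GPT works internally: it counts which word follows which in a made-up corpus and always picks the most frequent follower, whereas GPT uses a neural network over tokens and a much longer context. The names `train_bigram` and `generate` are hypothetical helpers invented for this illustration.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count, for every word, how often each other word follows it."""
    counts = defaultdict(Counter)
    words = text.split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts

def generate(counts, start, max_words):
    """Repeatedly append the most frequent follower of the last word."""
    out = [start]
    for _ in range(max_words - 1):
        followers = counts.get(out[-1])
        if not followers:
            break  # the last word never appeared with a follower
        out.append(followers.most_common(1)[0][0])
    return " ".join(out)

# Made-up toy corpus; a real model is trained on vastly more text.
corpus = "the cat sat on the mat . the cat sat on the rug . the dog ate the fish ."
model = train_bigram(corpus)
print(generate(model, "the", 5))
```

Only the outer loop carries over to GPT: score the candidates for the next position, choose one, append it, and continue. Everything that makes GPT powerful lives in how those scores are computed.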

Beyond Simple Prediction: Understanding and Synthesis

It’s important to distinguish GPT’s generative power from simple autocomplete or pattern matching. Those simpler forms of text generation rely on immediate statistical correlations, such as which word most often follows the previous one or two. GPT models are trained on the same next-token objective, but they condition on a much longer context and, through their extensive pre-training, absorb not just words but also concepts, relationships between ideas, and even stylistic nuances. This enables them to generate text that is not only grammatically correct but also semantically rich and contextually appropriate.

For example, when asked to explain a complex scientific concept, a GPT model doesn’t just retrieve definitions. It can synthesize information from its training data to provide a clear, concise, and often simplified explanation, tailored to the user’s apparent level of understanding. Similarly, when asked to write a story, it can weave together plot points, character development, and descriptive language in a way that feels creative and engaging.

Pre-trained: The Foundation of Knowledge

The “Pre-trained” component is arguably the most critical factor in GPT’s success. It signifies that these models are not built from scratch for every new task. Instead, they undergo an initial, massive training phase on an enormous dataset of text and code. This dataset is typically drawn from large amounts of publicly available material, including books, articles, websites, and code repositories.

This pre-training phase is computationally intensive and requires vast resources. During this period, the model picks up grammar, facts about the world, common reasoning patterns, and various writing styles, all as a by-product of learning to predict the next token. This broad foundation acts as a powerful starting point: fine-tuning for a specific downstream task then needs far less data and compute than training a model from scratch.

The Advantage of Transfer Learning

The pre-training paradigm is a prime example of transfer learning in AI. Instead of training a separate model for each individual task (e.g., one for translation, one for summarization, one for question answering), a single pre-trained GPT model can be adapted to perform a wide array of tasks with relatively little additional training data. This is a significant leap forward in AI development because it drastically reduces the time, cost, and data requirements for deploying AI solutions for new applications.

Imagine needing to build a specialized chatbot for a particular industry. Without pre-training, you would need to gather a massive dataset specific to that industry and train a model from scratch, which could take months or even years. With a pre-trained GPT model, you can “fine-tune” it on a smaller, industry-specific dataset, achieving high performance in a fraction of the time and with fewer resources. This democratizes access to advanced AI capabilities and accelerates innovation.
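
The benefit of starting from pre-trained weights can be shown with a toy analogy. This is a minimal sketch under loud assumptions: a two-parameter linear model stands in for a language model, the "general" and "domain" datasets are invented, and `train` and `mse` are hypothetical helpers. It is not how GPT is fine-tuned. It only illustrates why a good starting point needs fewer training steps on a small dataset.

```python
def mse(w, b, data):
    """Mean squared error of the line y = w*x + b on (x, y) pairs."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

def train(w, b, data, steps, lr=0.01):
    """Plain gradient descent on mean squared error."""
    n = len(data)
    for _ in range(steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Invented "general" data: many examples of y = 3x + 1.
general = [(k / 10, 3 * (k / 10) + 1) for k in range(-50, 51)]
# Invented "domain" data: only five examples of a nearby rule, y = 3.2x + 1.5.
domain = [(x, 3.2 * x + 1.5) for x in (-2.0, -1.0, 0.0, 1.0, 2.0)]

# Expensive step, done once: "pre-train" on the large general dataset.
pre_w, pre_b = train(0.0, 0.0, general, steps=2000)

# Cheap step: the same small budget of 20 updates on the domain data,
# once starting from the pre-trained weights and once from zero.
fine_tuned = train(pre_w, pre_b, domain, steps=20)
from_scratch = train(0.0, 0.0, domain, steps=20)

fine_tuned_loss = mse(*fine_tuned, domain)
from_scratch_loss = mse(*from_scratch, domain)
print(fine_tuned_loss < from_scratch_loss)
```

With the same 20 updates, the run that starts from the pre-trained weights ends with a much lower error on the domain data, because it only has to learn the difference between the general rule and the domain rule.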

Transformer: The Architectural Breakthrough

The “Transformer” in GPT refers to a specific neural network architecture that has revolutionized sequence modeling, particularly in NLP. Introduced in the groundbreaking 2017 paper “Attention Is All You Need,” the Transformer dispensed with the recurrent neural networks (RNNs) and convolutional neural networks (CNNs) traditionally used for language processing and relied instead on a mechanism called self-attention. The original design paired an encoder with a decoder; GPT models use only the decoder-style stack.

Prior to the Transformer, RNNs processed sequences word by word, making it difficult to capture long-range dependencies in text. For example, understanding the relationship between a pronoun and its antecedent several sentences earlier was a significant challenge. CNNs, while good at capturing local patterns, also struggled with global context. The Transformer, through its self-attention mechanism, can weigh the importance of different words in an input sequence simultaneously, regardless of their position. This allows it to effectively understand the context and relationships between words across an entire sentence or even an entire document.

The Power of Attention Mechanisms

The self-attention mechanism is the heart of the Transformer. When the model processes one word, it computes an “attention score” for each of the other words it is allowed to see, and those scores determine how much each word contributes to the result. In this way the model builds a rich contextual representation of every word. In GPT specifically, attention is causal: each position attends only to itself and to earlier positions, so the model cannot peek at the words it is about to predict. This is loosely analogous to how humans read, where we don’t process words in isolation but constantly refer back to earlier text to understand the overall meaning.
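
The attention scores described above can be sketched in a few lines. The code below is a simplified illustration, not a production implementation: it computes scaled dot-product attention, but each input vector serves as its own query, key, and value, whereas a real Transformer first passes the inputs through learned projection matrices and runs several attention heads in parallel. The three input vectors are made up, and the `causal` flag is an illustrative parameter that hides later positions in the way GPT-style decoders do.

```python
import math

def softmax(scores):
    """Turn raw scores into non-negative weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors, causal=True):
    """Scaled dot-product self-attention over equal-length vectors.

    Simplification: every vector acts as its own query, key, and value.
    """
    dim = len(vectors[0])
    all_weights, outputs = [], []
    for i, query in enumerate(vectors):
        scores = []
        for j, key in enumerate(vectors):
            if causal and j > i:
                scores.append(float("-inf"))  # hide later positions
            else:
                dot = sum(q * k for q, k in zip(query, key))
                scores.append(dot / math.sqrt(dim))
        weights = softmax(scores)
        # Each output is a weighted mix of all visible value vectors.
        mixed = [sum(w * v[d] for w, v in zip(weights, vectors))
                 for d in range(dim)]
        all_weights.append(weights)
        outputs.append(mixed)
    return all_weights, outputs

# Three made-up 2-dimensional "word" vectors.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights, outputs = self_attention(tokens, causal=True)
full_weights, _ = self_attention(tokens, causal=False)
for row in weights:
    print([round(w, 2) for w in row])
```

Each printed row holds one position's attention weights. With `causal=True`, the entries for later positions are zero; with `causal=False`, every position receives some weight.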

The Transformer architecture also benefits from parallelization. Unlike RNNs, which must process a sequence one step at a time, self-attention can process all positions of a training sequence in parallel, making it much more efficient to train on large datasets. Generation still produces one token at a time. This scalability and the ability to capture nuanced linguistic relationships are what have made the Transformer architecture so successful and foundational for models like GPT.

The Evolution of GPT and Its Impact

The GPT series, developed by OpenAI, represents a significant lineage of advancements in generative AI. Each iteration has pushed the boundaries of what’s possible, with substantial gains in scale, performance, and capabilities.

From GPT-1 to GPT-4: A Trajectory of Growth

The journey began with GPT-1 in 2018, a model of roughly 117 million parameters that demonstrated the potential of the Transformer architecture for language understanding and generation. GPT-2, released in 2019, was more than ten times larger at 1.5 billion parameters and showed impressive zero-shot capabilities, meaning it could perform tasks it wasn’t explicitly trained for, without any task-specific examples.

GPT-3, unveiled in 2020, marked a monumental leap. With 175 billion parameters, it was more than 100 times larger than GPT-2 (about 1.5 billion parameters). It could often pick up a new task from just a few examples placed in the prompt, a behavior known as few-shot learning, and it handled a wide range of complex tasks with far greater fluency than earlier models. This version truly captured the public imagination and showcased the power of large language models.
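
To put the jump in scale into numbers, the sketch below uses a common back-of-the-envelope rule: a Transformer stack has roughly 12 × layers × width² parameters, ignoring embeddings and biases. The layer counts and widths are assumptions taken from the published GPT-2 and GPT-3 papers rather than from this article, and `approx_params` is a hypothetical helper, so treat the results as estimates.

```python
def approx_params(n_layers, d_model):
    """Rough parameter count of a Transformer stack (no embeddings)."""
    return 12 * n_layers * d_model ** 2

# Assumed configurations, as reported in the respective papers.
gpt2_largest = approx_params(n_layers=48, d_model=1600)
gpt3_largest = approx_params(n_layers=96, d_model=12288)

print(f"GPT-2: about {gpt2_largest / 1e9:.2f} billion")
print(f"GPT-3: about {gpt3_largest / 1e9:.0f} billion")
print(f"ratio: about {gpt3_largest / gpt2_largest:.0f}x")
```

The estimates land close to the headline figures of 1.5 billion and 175 billion, with the small gap explained by the embedding parameters the rule leaves out.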

Later iterations, such as GPT-4, continue the trend toward greater sophistication, although OpenAI has not published GPT-4’s parameter count. These models exhibit more advanced reasoning abilities, a greater capacity for following nuanced instructions, and a reduced (though not eliminated) tendency to generate factual inaccuracies or nonsensical outputs. The focus has also shifted towards multimodal capabilities: GPT-4, for example, accepts images as well as text as input.

Implications Across Industries

The advancements embodied by GPT have profound implications across a multitude of industries:

- Content Creation: From marketing copy and blog posts to scripts and creative writing, GPT models are transforming how content is generated, making it faster, more scalable, and often more innovative.

- Customer Service: AI-powered chatbots and virtual assistants are becoming increasingly sophisticated, capable of handling complex customer inquiries, providing personalized support, and improving overall customer satisfaction.

- Software Development: GPT models can assist developers by generating code snippets, debugging, explaining complex code, and even helping with the design of new software architectures.

- Education and Research: These models can act as powerful research assistants, summarizing complex papers, explaining difficult concepts, and even helping students brainstorm ideas.

- Healthcare: GPT’s potential in healthcare includes assisting with medical documentation, analyzing patient data, and even aiding in drug discovery by synthesizing research findings.

- Accessibility: Generative AI can help create more accessible content by summarizing lengthy texts, translating languages in real-time, and providing alternative explanations for complex information.

Understanding the Broader Context: AI and Language Models

GPT names a specific family of models, but those models belong to a broader category of AI systems known as Large Language Models (LLMs). These are sophisticated AI systems trained on massive datasets that excel at understanding and generating human language. The principles behind GPT are foundational to many other LLMs currently being developed and deployed.

The Democratization of Advanced AI Capabilities

The development and widespread availability of models like GPT have a democratizing effect on advanced AI capabilities. Previously, developing such powerful language models required immense specialized expertise and resources, often confined to a few leading research institutions and tech giants. However, through APIs and increasingly accessible platforms, these powerful tools are becoming available to a wider range of developers, businesses, and even individuals.

This accessibility fuels innovation, allowing for the creation of novel applications and services that were previously unimaginable. It empowers small startups and independent creators to leverage cutting-edge AI without needing to invest in building their own foundational models. This democratization is crucial for driving widespread adoption and ensuring that the benefits of AI are shared across society.

Ethical Considerations and Future Directions

As GPT and other LLMs become more powerful and integrated into our lives, it’s essential to consider the ethical implications. Issues such as the potential for misinformation, bias present in training data, intellectual property concerns, and the impact on employment are critical areas of ongoing research and discussion.

The future of GPT and LLMs is one of continued evolution. We can expect to see further improvements in their reasoning abilities, enhanced multimodal capabilities, greater efficiency, and a deeper integration into complex workflows. The ongoing pursuit of more robust, reliable, and ethically sound AI systems will shape the next generation of these transformative technologies. Understanding what GPT stands for is not just about memorizing an acronym; it’s about grasping the fundamental concepts that are reshaping our digital world and unlocking unprecedented possibilities.