Context-Free Grammar (CFG) is a fundamental concept in computer science, particularly in the fields of formal languages, compiler design, and natural language processing. At its core, a CFG is a set of production rules that describe how to form valid strings in a language. The “context-free” aspect refers to the fact that the application of these rules does not depend on the surrounding symbols in the string. This characteristic makes CFGs a powerful tool for defining and analyzing the structure of many types of languages, from programming languages to certain aspects of human language.

The importance of CFGs stems from their ability to precisely define the syntax of a language, ensuring that sentences or code are grammatically correct. This definition is crucial for machines to parse and understand the structure of these languages, enabling tasks like compilation of source code into executable programs or the interpretation of human commands.

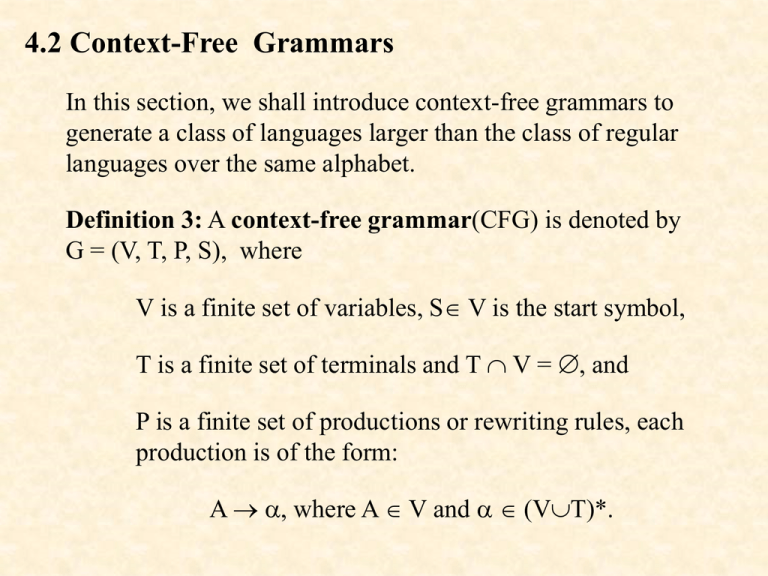

The Formal Definition and Components of Context-Free Grammars

To truly understand what a context-free grammar is, we must delve into its formal definition and the distinct components that constitute it. A CFG is formally defined as a four-tuple $(V, Sigma, R, S)$, where each element plays a specific role in defining the language.

1. Variables (Non-terminals)

The set of variables, denoted by $V$, represents abstract syntactic categories. These are placeholders for grammatical constructs that can be further expanded. Think of them as the “building blocks” of the grammatical structure. For example, in a grammar for simple arithmetic expressions, variables might include Expression, Term, and Factor. These variables themselves do not appear in the final, valid strings of the language but are used during the derivation process to construct those strings. The set of variables is typically finite.

2. Terminals

The set of terminals, denoted by $Sigma$, represents the actual symbols that can appear in the strings of the language. These are the “words” or “tokens” of the language. For a programming language, terminals would be keywords, operators, identifiers, and literal values. For example, in our arithmetic expression grammar, terminals might be +, -, *, /, (, ), and digits. The set of terminals is also finite, and it is distinct from the set of variables.

3. Production Rules

The set of production rules, denoted by $R$, is the heart of the context-free grammar. Each rule in $R$ has the form $A rightarrow beta$, where $A$ is a single variable (a non-terminal) from $V$, and $beta$ is a string of variables and terminals (a sequence of symbols from $(V cup Sigma)^*$). These rules dictate how a variable can be replaced by a sequence of other symbols. The “context-free” nature is evident here: the replacement of $A$ by $beta$ can occur regardless of the symbols that appear before or after $A$ in any given derivation.

For instance, a production rule for our arithmetic expression grammar might be:

Expression -> Expression + Term

This rule signifies that an Expression can be formed by an Expression followed by a + operator and then a Term. Other rules could define how a Term is constructed from Factors, and how Factors can be numbers or parenthesized Expressions.

4. Start Symbol

The start symbol, denoted by $S$, is a special variable from $V$ that initiates the derivation process. Every valid string in the language must be derivable starting from this single symbol. It represents the top-level syntactic category of the language. For our arithmetic expression grammar, $S$ would likely be Expression. The grammar essentially defines the set of all strings that can be generated by starting with $S$ and repeatedly applying the production rules.

How Context-Free Grammars Generate Languages

The primary function of a context-free grammar is to generate strings belonging to a specific formal language. This generation process is achieved through a mechanism called derivation. A derivation is a sequence of steps where we start with the start symbol and repeatedly apply production rules to replace variables with other sequences of symbols until only terminal symbols remain.

Derivations: The Step-by-Step Generation Process

A derivation begins with the start symbol $S$. At each step, we select a variable in the current string and replace it with the right-hand side of one of its production rules. This process continues until the string consists solely of terminal symbols.

Let’s consider a simple CFG for binary numbers:

$V = {B}$

$Sigma = {0, 1}$

$R = { B rightarrow 0, B rightarrow 1, B rightarrow 1B, B rightarrow 0B }$

$S = B$

Here, $B$ is the start symbol representing a binary number.

A possible derivation of the binary string “101” could be:

- $B$ (Start symbol)

- $1B$ (Applying rule $B rightarrow 1B$)

- $10B$ (Applying rule $B rightarrow 0B$)

- $101$ (Applying rule $B rightarrow 1$)

In this derivation, we started with the variable $B$ and, through a sequence of rule applications, arrived at the terminal string “101”. This process demonstrates how the production rules, applied in a context-free manner, construct valid strings of the language.

Parse Trees: Visualizing Syntactic Structure

While derivations show the step-by-step generation, parse trees provide a more intuitive and structured visualization of the syntactic structure of a string generated by a CFG. A parse tree for a string $w$ generated by a CFG $G$ has:

- Root Node: The start symbol $S$.

- Internal Nodes: Variables from $V$.

- Leaf Nodes: Terminals from $Sigma$.

The tree is constructed such that:

- The root is the start symbol.

- Every internal node is a variable.

- If a node labeled $A$ has children labeled $X1, X2, dots, Xk$ from left to right, then there must be a production rule $A rightarrow X1X2dots Xk$ in the grammar.

- The string formed by concatenating the labels of the leaf nodes from left to right is the original string $w$.

For our binary number example, the parse tree for “101” would visually represent the derivation process. The root would be $B$. The first application of $B rightarrow 1B$ would result in $B$ having two children, a “1” (terminal) and another $B$ (variable). This second $B$ would then be expanded by $B rightarrow 0B$, and so on, until all branches terminate in terminals. This hierarchical structure clearly illustrates how the string “101” is formed from its constituent parts according to the grammar’s rules.

The Importance and Applications of Context-Free Grammars

The power and elegance of context-free grammars lie in their ability to precisely define the structure of languages, making them indispensable in various domains of computer science and beyond.

1. Compiler Design: Parsing Programming Languages

One of the most significant applications of CFGs is in the design of compilers. Programming languages, by their very nature, are formal languages with strict syntactical rules. CFGs are used to define these rules, allowing compilers to parse source code.

- Lexical Analysis: While CFGs are not typically used for the very first stage of compilation (lexical analysis, which breaks code into tokens), the tokens generated are then fed into a parser.

- Syntactic Analysis (Parsing): The parser’s job is to check if the sequence of tokens conforms to the language’s grammar and to build an internal representation of the code’s structure, usually an Abstract Syntax Tree (AST). This AST is derived from the parse tree concept and is crucial for subsequent compilation stages like semantic analysis and code generation. A CFG provides the formal specification that parsers rely on to perform this task efficiently and accurately. If the source code violates the CFG’s rules, the parser will report a syntax error.

2. Natural Language Processing (NLP): Analyzing Human Language

Although human languages are far more complex than what a pure CFG can fully capture (due to their inherent context-sensitivity and ambiguity), CFGs are foundational in certain aspects of Natural Language Processing.

- Syntactic Parsing of Sentences: CFGs can be used to define the grammatical structure of sentences. For example, a CFG can specify that a sentence can consist of a noun phrase followed by a verb phrase. This allows NLP systems to parse sentences, identify their grammatical components (subjects, verbs, objects), and understand their relationships.

- Ambiguity Resolution: While CFGs can generate ambiguous structures (a single sentence having multiple valid parse trees), they provide a framework for analyzing and potentially resolving this ambiguity, which is critical for deep language understanding.

- Foundation for More Advanced Models: CFGs serve as a stepping stone for more sophisticated NLP models, such as probabilistic context-free grammars (PCFGs) that assign probabilities to rules, and dependency grammars, which focus on relationships between words.

3. Formal Language Theory and Computability

In theoretical computer science, CFGs are central to the Chomsky hierarchy, a classification of formal grammars and the languages they generate. Context-free languages (the languages generated by CFGs) are a significant class of languages within this hierarchy, positioned above regular languages and below context-sensitive languages. Studying CFGs helps computer scientists understand the expressive power and limitations of different formalisms, which has implications for understanding what can be computed and how.

4. Other Applications

Beyond compilers and NLP, CFGs find applications in:

- Markup Languages: Defining the structure of XML and HTML documents.

- Configuration File Parsing: Specifying formats for configuration files.

- Protocol Specification: Defining the syntax of communication protocols.

- Database Query Languages: Formally defining the syntax of SQL-like languages.

In essence, any system that needs to process structured text or commands can benefit from the precise and systematic definition provided by a context-free grammar. It offers a clear blueprint for understanding and manipulating the rules that govern the formation of valid strings within a defined language.